Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

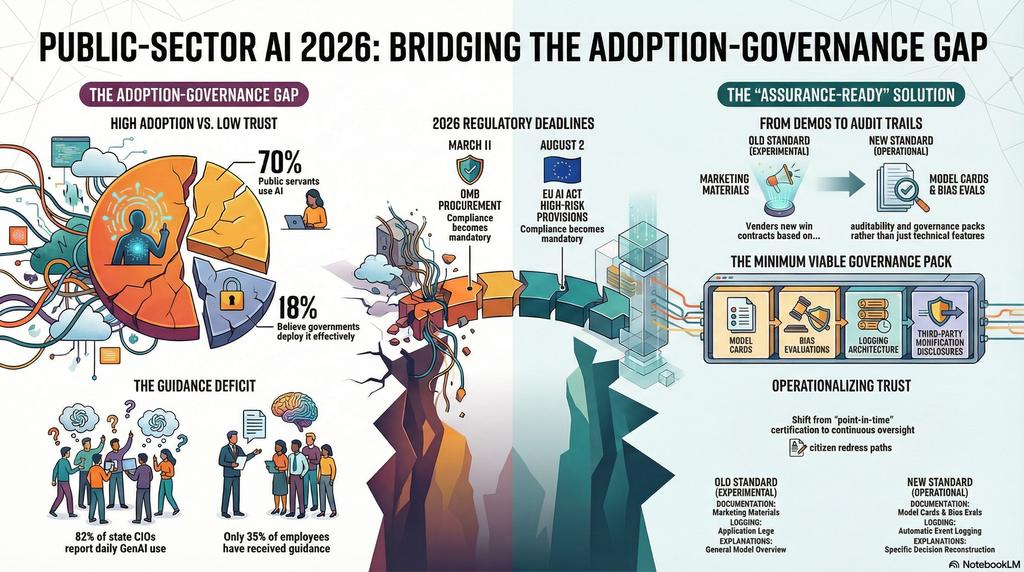

70%+ of public servants worldwide use AI. Only 18% believe governments deploy it effectively (ITIF, 3,335 public servants, 10 countries). 82% of state CIOs report employees using generative AI daily. But only 35% of government employees have received any AI guidance. The gap between adoption and governance is the defining risk of public-sector AI in 2026.

Public-sector AI will scale only where trust mechanisms are operational: procurement rigor, audit trails, explainability, citizen redress paths, and model-change governance. The regulatory architecture is now in place: OMB M-25-21 and M-25-22 (April 2025), M-26-04 (December 2025), the EU AI Act’s high-risk provisions (August 2026), and 700+ AI-related bills in the US alone. The question is no longer whether governance is required. It’s whether vendors and agencies can operationalize it before deadlines arrive.

For enterprises selling into government or regulated sectors: governance quality is now a commercial requirement, not a compliance afterthought. The vendor who arrives with an assurance-ready governance pack wins the contract. The vendor who arrives with a demo loses to the one with audit trails.

| Metric | Value |
|---|---|
| Public servants using AI worldwide (ITIF) | 70%+ |
| Believe governments deploy AI effectively | 18% |
| AI feels empowering (global average) | 80% |
| AI feels empowering (advanced adopters) | 91% confident, 82% optimistic, 79% empowered |
| Government employees received AI guidance | 35% |
| State CIOs: employees using GenAI | 82% |
| State CIOs: piloting AI projects | 90% |
| Federal employees: daily AI use | 64% |
| Government/public services AI market (2024) | $22.4 billion |
| Government/public services AI market (2033) | $98 billion (17.8% CAGR) |
| State/local IT spending 2026 | $160.2 billion (+4–6%) |
| Federal cloud computing 2026 | $19.6 billion |
| AI-related bills in US (2024) | 700+ |
| New AI proposals (early 2026) | 40+ |
| EU AI Act high-risk: fully applicable | August 2, 2026 |
| OMB M-26-04 procurement deadline | March 11, 2026 |
| Federal agencies: must designate CAIO | Within 60 days of M-25-21 |
| Agencies: annual AI use case inventory | Required, publicly published |
| Fiscal deficit reduction from AI (by 2035) | Up to 22% |
| Countries surveyed (ITIF) | 10 |
| Public servants surveyed (ITIF) | 3,335 |


1. The Adoption-Governance Gap

Public-sector AI adoption is running ahead of governance infrastructure. The result is operational risk that no amount of enthusiasm can compensate for.

Adoption Is Real

| Adoption Indicator | Value | Source |
|---|---|---|
| Public servants using AI worldwide | 70%+ | ITIF 2026 |
| Federal employees: daily AI use | 64% | ITIF 2026 |
| State CIOs: employees using GenAI | 82% | NASCIO |
| State CIOs: piloting AI projects | 90% | NASCIO |
| AI feels empowering (global) | 80% | ITIF 2026 |
| Confident using AI (advanced adopters) | 91% | ITIF 2026 |

The adoption numbers are unambiguous. AI is in government workflows at every level — federal, state, local, and across 10 surveyed countries. 82% of state CIOs report employees using generative AI. 90% are running pilot projects. This is not experimental. This is operational.

Governance Is Not

| Governance Gap | Value | Source |
|---|---|---|
| Believe government deploys AI effectively | 18% | ITIF 2026 |
| Employees received AI guidance | 35% | ITIF 2026 |
| Organizations with AI governance boards | 55% | Industry data |
| Mature orgs with dedicated AI teams | 67% | Industry data |
| AI projects failing prototype-to-production | ~50% | Industry data |
| Companies scrapping AI initiatives (2025) | 42% | S&P Global |
| Agentic AI projects expected to fail by 2027 | 40%+ | Gartner |

70%+ use AI. 18% think it’s deployed effectively. 35% received guidance. The gap is not between early and late adopters. It’s between adoption and accountability. Public servants are using AI without the governance infrastructure that makes public-sector AI trustworthy.

The ITIF data shows a consistent pattern. In countries with clear guidance and leadership support (Singapore, Saudi Arabia, India), 91% of public servants feel confident using AI and 82% are optimistic. In the “cautious adopter” countries where guidance is unclear, including Germany, France, and Japan, confidence drops substantially and AI remains limited to specialist use.

The variable is not technology. It’s governance clarity.


2. The Regulatory Architecture

The regulatory framework for public-sector AI is no longer emerging. It’s here. Three regimes define the 2026 landscape.

US Federal: OMB M-25-21, M-25-22, M-26-04

| Requirement | Detail | Deadline |
|---|---|---|
| Chief AI Officers | Designated per agency; senior advisor to agency head | Within 60 days of M-25-21 |
| AI use case inventory | Annual, publicly published | Ongoing |
| Risk determinations | Publicly reported with justification | Ongoing |
| Procurement policy update (M-26-04) | Contracts must require LLM compliance with Unbiased AI Principles | March 11, 2026 |
| New LLM procurements | Must include transparency requirements immediately | Now |
| Minimum transparency | Acceptable use policies, model cards, feedback mechanisms | All procurements |
| Enhanced transparency | Pre/post-training assessment, bias evaluation, enterprise controls, third-party modification disclosure | High-stakes systems |
| Contract termination authority | Non-compliance is material to eligibility and payment | Immediate |

M-26-04 is the procurement game-changer. Compliance is material to contract eligibility and payment, with explicit authority to terminate contracts for non-compliance. The vendor who can’t produce model cards, bias evaluations, and audit trails loses the contract — not because of technology, but because of governance readiness.

The memo establishes two transparency levels: minimum (all procurements) and enhanced (public-facing, mission-critical, or high-stakes systems). Enhanced transparency adds four requirements: pre/post-training assessment, model evaluations (including bias evaluation), enterprise-level controls, and third-party modification disclosure.

EU AI Act: High-Risk Provisions

| Requirement | Detail | Deadline |
|---|---|---|
| High-risk compliance | Articles 9–49: risk management, data governance, conformity assessment | August 2, 2026 |
| Automatic event logging | Article 12: traceability, post-market monitoring | August 2, 2026 |
| Fundamental rights impact assessment | Required before deployment in sensitive public-sector use cases | August 2, 2026 |
| Right to explanation | Article 86: individuals affected by AI decisions | August 2, 2026 |
| Human oversight mechanisms | Required for all high-risk systems | August 2, 2026 |
| Audit and compliance verification | AI Office conducts audits, investigates incidents, issues fines | Ongoing |

The EU AI Act’s August 2026 deadline applies to all high-risk AI systems — including public-sector decision-making in healthcare, finance, employment, and critical infrastructure. Article 12 requires automatic event logging for traceability. Article 86 grants individuals the right to explanation of AI-driven decisions. Article 14 mandates human oversight.

Global Regulatory Proliferation

| Regulatory Activity | Value |
|---|---|
| AI-related bills in US (2024) | 700+ |
| New AI proposals (early 2026) | 40+ |
| Countries with AI governance frameworks | Growing, uneven |
| NIST AI RMF pillars | Govern, Map, Measure, Manage |
| OMB memos on AI (2025–2026) | M-25-21, M-25-22, M-26-04 |

The regulatory density is increasing everywhere. The US has 700+ AI-related bills and 40+ new proposals. The EU AI Act creates comprehensive high-risk requirements. NIST’s AI RMF provides the governance framework. The direction is uniform even where the specifics diverge: more transparency, more auditability, more accountability.


3. The Trust Bottleneck Architecture

Public-sector AI faces five trust bottlenecks. Each must be operational — not aspirational — for AI to scale in government.

Bottleneck 1: Procurement Rigor

| Procurement Requirement | Why It Matters |
|---|---|
| Model cards and system documentation | Buyers must understand what they’re deploying |
| Bias evaluation results | Public-sector decisions must withstand scrutiny |
| Performance benchmarks | Accuracy, factuality, honesty evidence required |
| Supply chain transparency | Third-party modifications to base models must be disclosed |
| Outcome-linked terms | Payment tied to performance, not deployment activity |

Procurement is where governance becomes binding. M-26-04 makes compliance material to contract eligibility. The vendor who can’t produce documentation loses not to a better product, but to a more governable one.

Bottleneck 2: Audit Trails

| Audit Requirement | What It Demands |
|---|---|
| Automatic event logging (EU AI Act Art. 12) | Every high-risk AI action logged for traceability |
| Post-market monitoring | Continuous capture of performance drift and anomalies |
| Decision reconstruction | Ability to trace how specific outputs were generated |
| Incident documentation | Formal records of failures, escalations, and corrections |
| Compliance evidence | Audit trails serve as proof of compliance across pre- and post-training assessments |

M-26-04 requires “continuous oversight rather than point-in-time review.” The EU AI Act requires automatic event logging. The standard is not “we can explain how it works in general.” It’s “we can reconstruct how this specific decision was made for this specific citizen.”
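The difference between explaining a model and reconstructing a decision can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a format prescribed by M-26-04 or Article 12: the `DecisionRecord` fields, the `DecisionLog` class, and the hash chaining are all hypothetical choices made for the example.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class DecisionRecord:
    """One logged decision. Field names are illustrative assumptions."""
    decision_id: str
    timestamp: str       # ISO 8601 string supplied by the caller
    model_version: str
    inputs: dict
    output: str
    confidence: float
    human_reviewed: bool


@dataclass
class DecisionLog:
    """Append-only log in which each entry stores the previous entry's hash."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, record: DecisionRecord) -> str:
        payload = json.dumps(asdict(record), sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, record: DecisionRecord) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry_hash = self._digest(prev_hash, record)
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def reconstruct(self, decision_id: str) -> DecisionRecord:
        """Return the full record behind one specific decision."""
        for entry in self.entries:
            if entry["record"].decision_id == decision_id:
                return entry["record"]
        raise KeyError(decision_id)

    def verify_chain(self) -> bool:
        """Recompute every hash; any edited record breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True
```

Storing the model version and inputs next to each output is what lets `reconstruct` answer for one citizen's decision. The hash chain is one simple way to make after-the-fact edits detectable; a production system would more likely rely on write-once storage or a managed ledger.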

Bottleneck 3: Explainability

| Explainability Dimension | Public-Sector Standard |
|---|---|
| Decision logic | Why did the AI produce this output? |
| Data inputs | What data informed the decision? |
| Confidence level | How certain is the output? |
| Alternative outcomes | What would have changed with different inputs? |
| Limitation disclosure | What can’t the AI assess? |

Article 86 of the EU AI Act grants individuals the right to explanation of AI-driven decisions that adversely affect them. This is not a technical nicety. It’s a legal right that requires operational infrastructure to fulfill.

Bottleneck 4: Citizen Redress Paths

| Redress Element | What It Requires |
|---|---|
| Notification | Citizens informed when AI is used in decisions affecting them |
| Challenge mechanism | Process to contest AI-driven decisions |
| Human review | Right to have a qualified human review the AI output |
| Correction process | Mechanism to correct erroneous AI decisions |
| Response SLA | Defined timeline for redress resolution |

The redress path is where trust becomes real for citizens. An AI system that makes a decision about benefits, enforcement, or services must have an operational path for citizens to challenge, review, and correct that decision. Without it, public-sector AI creates accountability vacuums that erode democratic legitimacy.
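One way to make a redress path operational rather than aspirational is to encode it as a small state machine with a deadline check. The sketch below is an assumption-laden illustration: the state names, the `RedressCase` class, and the 30-day default SLA are hypothetical and would be set by agency policy, not by this article's sources.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum


class RedressState(Enum):
    NOTIFIED = "notified"          # citizen told that AI was used
    CHALLENGED = "challenged"      # citizen contests the decision
    HUMAN_REVIEW = "human_review"  # qualified human reviews the output
    CORRECTED = "corrected"        # decision changed
    UPHELD = "upheld"              # decision confirmed after review


# No transition reaches a final state without passing through human review.
ALLOWED = {
    RedressState.NOTIFIED: {RedressState.CHALLENGED},
    RedressState.CHALLENGED: {RedressState.HUMAN_REVIEW},
    RedressState.HUMAN_REVIEW: {RedressState.CORRECTED, RedressState.UPHELD},
    RedressState.CORRECTED: set(),
    RedressState.UPHELD: set(),
}


@dataclass
class RedressCase:
    case_id: str
    opened_on: date
    sla_days: int = 30  # hypothetical default, not a regulatory figure
    state: RedressState = RedressState.NOTIFIED

    def advance(self, new_state: RedressState) -> None:
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        self.state = new_state

    def is_overdue(self, today: date) -> bool:
        resolved = self.state in (RedressState.CORRECTED, RedressState.UPHELD)
        return not resolved and today > self.opened_on + timedelta(days=self.sla_days)
```

The design choice worth noting is the transition table: a challenged decision cannot be closed as upheld without a recorded human review step, which is the property the redress table asks for.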

Bottleneck 5: Model-Change Governance

| Model-Change Risk | Why It Matters |
|---|---|
| Vendor model updates | Performance, bias, and behavior can change without notice |
| Third-party modifications | Cloud providers, resellers, integrators may alter base models |
| Prompt/safety filter changes | Vendor adjustments affect output quality and compliance |
| Data distribution shifts | Model performance degrades as input data patterns change |
| Regulatory compliance drift | Model changes may create non-compliance with existing approvals |

M-26-04 explicitly requires understanding of how “resellers, cloud providers, or integrators modify base models and affect behavior.” Model-change governance is the bottleneck that most vendors underestimate: the AI that was compliant at deployment may not be compliant after the vendor’s next update.
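A simple way to notice that a deployed system has drifted from what was approved is to fingerprint the full deployment configuration and compare it with the approved baseline. The following is a sketch only: the configuration keys (`base_model`, `safety_filter_version`, and so on) are hypothetical examples, and real deployments would need vendors to expose this information in the first place.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable SHA-256 over a canonical JSON form of the configuration."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_fields(approved: dict, deployed: dict) -> list:
    """Names of configuration fields that differ from the approved baseline."""
    keys = sorted(set(approved) | set(deployed))
    return [key for key in keys if approved.get(key) != deployed.get(key)]


def needs_reverification(approved: dict, deployed: dict) -> bool:
    """True when anything in the deployment differs from what was approved."""
    return fingerprint(approved) != fingerprint(deployed)


# Hypothetical example values, for illustration only.
approved = {
    "base_model": "vendor-llm",
    "model_version": "2026-01",
    "safety_filter_version": "3.2",
    "integrator_patches": [],
}
deployed = dict(approved, safety_filter_version="3.3")

print(needs_reverification(approved, deployed))
print(changed_fields(approved, deployed))
```

Including prompt, filter, and integrator layers in the fingerprint matters because the base model version alone would miss the reseller and cloud-provider modifications the memo calls out.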


4. Enterprise Relevance: Governance as Commercial Requirement

If you sell into government or regulated sectors, everything above is now a commercial requirement.

The Vendor Readiness Gap

| What Procurement Demands | What Most Vendors Have |
|---|---|
| Model cards with bias evaluations | Marketing materials |
| Automatic event logging | Application logs (insufficient) |
| Decision reconstruction capability | “We can explain the model” (not the decision) |
| Third-party modification disclosure | “We use standard cloud” (no detail) |
| Citizen redress infrastructure | Customer support (not redress) |
| Incident and audit reporting templates | Ad hoc incident response |
| Lifecycle compliance evidence | Point-in-time certification |

The gap between what procurement now demands and what most vendors can deliver is the commercial opportunity for governance-ready vendors — and the commercial risk for governance-unready ones.

The Assurance-Ready Vendor Advantage

| Vendor Capability | Procurement Value |
|---|---|
| Pre-built governance pack | Reduces agency evaluation time |
| Standardized audit templates | Meets logging and compliance requirements |
| Model-change notification protocol | Addresses continuous oversight mandate |
| Bias evaluation documentation | Satisfies M-26-04 and EU AI Act |
| Citizen-facing explainability interface | Supports Article 86 redress rights |
| Outcome-linked pricing | Aligns vendor incentives with public outcomes |

The “assurance-ready” vendor — one who arrives with governance documentation, audit infrastructure, and compliance evidence already built — wins the procurement process. The vendor who arrives with a demo and a roadmap loses to the one with operational trust architecture.

5. Practical Implications and Actions

Action 1: Build Procurement-Ready Governance Packs

| Governance Pack Element | Purpose |
|---|---|
| Controls documentation | Maps AI capabilities to risk categories and oversight requirements |
| Logging architecture | Demonstrates automatic event capture for audit trails |
| Accountability map | Names who owns decisions, overrides, escalations, and incident response |
| Bias evaluation results | Pre-deployment assessment of model fairness and accuracy |
| Model card / system card | Technical documentation meeting M-26-04 minimum transparency |
| Third-party modification disclosure | Supply chain transparency for integrators and cloud providers |

This is the minimum viable governance package for public-sector procurement. Without it, the vendor is not competitive — regardless of product quality.
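A governance pack is easy to check mechanically before a bid goes out. The snippet below is a minimal sketch: the element keys mirror the six rows of the table above, while the manifest structure (a dictionary mapping element names to document paths) is an assumption made for illustration.

```python
# Element names mirror the governance pack table; the manifest shape is hypothetical.
REQUIRED_ELEMENTS = (
    "controls_documentation",
    "logging_architecture",
    "accountability_map",
    "bias_evaluation_results",
    "model_card",
    "third_party_modification_disclosure",
)


def missing_elements(pack: dict) -> list:
    """Return required elements that are absent or have an empty value."""
    return [name for name in REQUIRED_ELEMENTS if not pack.get(name)]


def is_procurement_ready(pack: dict) -> bool:
    return not missing_elements(pack)


draft_pack = {
    "controls_documentation": "docs/controls.pdf",
    "logging_architecture": "docs/logging.pdf",
    "accountability_map": "",          # placeholder that was never filled in
    "model_card": "docs/model_card.md",
}

print(missing_elements(draft_pack))
```

A check like this only confirms that each document exists; whether its content satisfies an evaluator is a separate question.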

Action 2: Standardize Incident and Audit Reporting Templates

| Template | Content |
|---|---|
| Incident report | What happened, when, impact scope, root cause, remediation |
| Audit log format | Event type, timestamp, inputs, outputs, confidence, escalation |
| Decision reconstruction | Input data, model version, parameters, output, human review status |
| Model-change notification | What changed, why, impact assessment, compliance re-verification |
| Compliance status report | Current conformity against M-26-04 / EU AI Act requirements |

Standardized templates reduce friction for both vendors and agencies. They demonstrate governance maturity to procurement evaluators and create the operational infrastructure that continuous oversight requires.
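To make the audit log template concrete, here is a sketch of a validator for one JSON log line. The six field names come from the audit log row in the table above; the field types, the JSON-lines encoding, and the 0 to 1 confidence range are assumptions for illustration, not requirements drawn from M-26-04 or the EU AI Act.

```python
import json

# Field names follow the audit log template row; the types are assumptions.
REQUIRED_FIELDS = {
    "event_type": str,
    "timestamp": str,
    "inputs": dict,
    "outputs": dict,
    "confidence": (int, float),
    "escalation": bool,
}


def validate_audit_line(line: str) -> list:
    """Return a list of problems; an empty list means the line conforms."""
    try:
        entry = json.loads(line)
    except json.JSONDecodeError:
        return ["not valid JSON"]
    if not isinstance(entry, dict):
        return ["entry is not a JSON object"]

    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in entry:
            problems.append(f"missing field: {name}")
            continue
        value = entry[name]
        # bool is a subclass of int in Python, so reject it for numeric fields.
        bool_misuse = isinstance(value, bool) and expected is not bool
        if bool_misuse or not isinstance(value, expected):
            problems.append(f"wrong type: {name}")

    confidence = entry.get("confidence")
    if isinstance(confidence, (int, float)) and not isinstance(confidence, bool):
        if not 0 <= confidence <= 1:
            problems.append("confidence outside [0, 1]")
    return problems
```

Running a validator like this at write time, rather than at audit time, is what turns a template into evidence: malformed entries are caught while the context to fix them still exists.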

Action 3: Price for Lifecycle Compliance Effort

| Pricing Component | What to Include |
|---|---|
| Initial compliance setup | Governance documentation, logging infrastructure, baseline evaluation |
| Ongoing monitoring | Continuous bias detection, drift monitoring, audit trail maintenance |
| Model-change management | Assessment and re-verification when vendor updates models |
| Incident response | Investigation, remediation, disclosure costs |
| Regulatory adaptation | Updates as requirements evolve (new OMB memos, EU AI Act amendments) |

Vendors that price only for software delivery will find that lifecycle compliance can cost 3–5x the initial deployment effort. Price it into the contract from the start, or absorb it as margin erosion later.

Action 4: Implement Human-Review Thresholds for High-Stakes Decisions

| Decision Category | Minimum Review Requirement |
|---|---|
| Benefits eligibility | Human review required before denial |
| Enforcement actions | Human approval required before action |
| Service allocation | Human oversight with audit trail |
| Risk scoring | Human review for above-threshold scores |
| Citizen-facing communications | Human review before distribution |

Article 14 of the EU AI Act mandates human oversight for high-risk systems. M-25-21 requires human checkpoints for high-impact AI. The threshold is not negotiable — it’s a legal requirement that must be operational, not theoretical.
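The review table above translates naturally into a routing function that runs before any automated outcome takes effect. This is a sketch under assumptions: the category strings, the `Review` enum, and the 0.7 risk threshold are hypothetical, and the treatment of benefit approvals (oversight rather than mandatory review) is an interpretation, since the table only addresses denials.

```python
from enum import Enum


class Review(Enum):
    OVERSIGHT = "human_oversight_with_audit_trail"
    REQUIRED = "human_review_required"


def required_review(category: str, outcome: str = "", risk_score: float = 0.0,
                    risk_threshold: float = 0.7) -> Review:
    """Map a proposed automated action to its minimum human-review level."""
    if category == "benefits_eligibility":
        # Denials always go to a human; approvals are an assumed lighter tier.
        return Review.REQUIRED if outcome == "deny" else Review.OVERSIGHT
    if category in ("enforcement_action", "citizen_communication"):
        return Review.REQUIRED
    if category == "service_allocation":
        return Review.OVERSIGHT
    if category == "risk_scoring":
        return Review.REQUIRED if risk_score > risk_threshold else Review.OVERSIGHT
    # Unknown categories fail closed: a human must look at them.
    return Review.REQUIRED


print(required_review("benefits_eligibility", outcome="deny").value)
print(required_review("risk_scoring", risk_score=0.4).value)
```

Failing closed on unrecognized categories is the key design choice: a new use case gets human review by default until someone deliberately assigns it a tier.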

Action 5: Establish Model-Change Disclosure Protocols

Every vendor contract should require:

- Pre-notification of model updates affecting performance, bias, or behavior

- Impact assessment documenting how changes affect compliance status

- Re-verification period allowing agencies to test updated models before production deployment

- Rollback capability enabling reversion to previous model version if compliance is compromised

- Audit trail documenting the change, assessment, and deployment decision
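The five contract requirements above can be expressed as a deployment gate: an update stays on hold until each condition is met, and every decision is written to an audit trail. The sketch below is illustrative only; the `ModelUpdate` class and its field names are hypothetical and do not come from M-26-04 or any contract template.

```python
from dataclasses import dataclass, field


@dataclass
class ModelUpdate:
    """Tracks one vendor model update against the disclosure protocol."""
    new_version: str
    previous_version: str
    pre_notified: bool = False
    impact_assessed: bool = False
    reverified_by_agency: bool = False
    rollback_available: bool = False
    audit_trail: list = field(default_factory=list)

    def blockers(self) -> list:
        """Protocol steps that have not been completed yet."""
        checks = {
            "pre-notification": self.pre_notified,
            "impact assessment": self.impact_assessed,
            "agency re-verification": self.reverified_by_agency,
            "rollback capability": self.rollback_available,
        }
        return [step for step, done in checks.items() if not done]

    def decide(self) -> str:
        """Record and return the deployment decision for this update."""
        blocked_by = self.blockers()
        if blocked_by:
            decision = f"hold on {self.previous_version}"
        else:
            decision = f"deploy {self.new_version}"
        self.audit_trail.append({"decision": decision, "blockers": blocked_by})
        return decision
```

Calling `decide()` on an update with outstanding steps keeps production on the previous version and logs why, so the audit trail captures held deployments as well as approved ones.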

6. What to Watch

Contract language requiring model-change disclosure. M-26-04 already requires understanding of third-party modifications. The next evolution: standardized contract clauses requiring pre-notification of model updates, impact assessments, and agency re-verification rights. Vendors who resist disclosure requirements will be excluded from government procurement — and the commercial signal will spread to regulated private sectors.

Mandatory human-review thresholds in high-stakes use cases. The EU AI Act and OMB guidance both mandate human oversight for high-risk AI. Expect specific thresholds to emerge: confidence score minimums for automated processing, mandatory human review for decisions above certain impact levels, and standardized escalation protocols. The “review everything” model will give way to risk-tiered human oversight — but the requirement for human authority in high-stakes decisions is non-negotiable.

Procurement preference for “assurance-ready” vendors. The procurement advantage for governance-ready vendors is already visible. As M-26-04 deadlines approach and EU AI Act provisions become enforceable, agencies will increasingly screen vendors for governance readiness before evaluating product capabilities. The vendor who can demonstrate audit trails, model cards, bias evaluations, and incident reporting templates will move through procurement faster — and the speed advantage compounds in government’s long procurement cycles.

The Bottom Line

70%+ of public servants use AI. 18% believe it’s deployed effectively. 35% received guidance. The adoption is real. The governance is not.

The regulatory architecture is in place: M-26-04 (March 2026 deadline), EU AI Act high-risk provisions (August 2026), 700+ US AI bills, and expanding global requirements. Public-sector AI will scale only where trust mechanisms are operational — procurement rigor, audit trails, explainability, citizen redress paths, and model-change governance.

For enterprises selling into government: the demo is no longer enough. The governance pack is the new demo. The audit trail is the new feature. And the vendor who can prove continuous compliance — not just point-in-time certification — wins the contract.

In public-sector AI, the vendor who arrives with audit trails wins the contract. The vendor who arrives with a demo wins the meeting — and loses the procurement.

Thorsten Meyer is an AI strategy advisor who has observed that in 2026, the fastest path through government procurement is not the best product demo — it’s the best governance pack. More at ThorstenMeyerAI.com.

Sources

- ITIF — Public Sector AI Adoption Index 2026: 70%+ Use, 18% Effective (3,335 Public Servants, 10 Countries)

- ITIF — Advanced Adopters: 91% Confident, 82% Optimistic; Only 35% Received Guidance

- NASCIO — 82% State CIOs: Employees Using GenAI; 90% Piloting

- NASCIO — AI Tops State CIO Priorities 2026

- OMB M-25-21 — Accelerating Federal AI: Innovation, Governance, Public Trust (April 2025)

- OMB M-25-22 — Driving Efficient AI Acquisition in Government (April 2025)

- OMB M-26-04 — Unbiased AI Principles: Procurement Compliance (December 2025)

- OMB M-26-04 — Two Transparency Levels: Minimum and Enhanced; March 11, 2026 Deadline

- EU AI Act — High-Risk Provisions Fully Applicable August 2, 2026

- EU AI Act Article 12 — Automatic Event Logging for Traceability

- EU AI Act Article 14 — Human Oversight for High-Risk Systems

- EU AI Act Article 86 — Right to Explanation of AI-Driven Decisions

- GovTech — State/Local IT Spending $160.2 Billion (2026, +4–6%)

- GovTech — 2026 GT100: Scaling AI in Government

- Federal Budget IQ — Federal Cloud $19.6B (FY 2026) to $21B (FY 2028)

- AppMaisters — Government/Public Services AI: $22.4B (2024) to $98B (2033), 17.8% CAGR

- PwC — AI Fiscal Deficit Reduction Up to 22% by 2035

- OECD — AI in Public Procurement: Governing with AI

- Fiddler AI — OMB M-26-04 Analysis: Continuous Oversight, Not Point-in-Time

- Crowell & Moring — Transparency Requirements on Federal Contractors

- S&P Global — 42% Scrapped AI Initiatives (2025)

- Gartner — 40%+ Agentic AI Projects Fail by 2027

- NIST AI RMF — Govern, Map, Measure, Manage

- Ogletree — Federal Agency AI Strategy Plans: Contractor Takeaways

- StateTech — 2026 State/Local IT Priorities: AI Growth + Fiscal Uncertainty

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com