Interactive Slide Deck Included

Abstract

Artificial Intelligence has evolved from a theoretical concept to a transformative force reshaping industries, societies, and human interaction with technology. This comprehensive guide explores twelve critical aspects of modern AI, from fundamental concepts like foundation models and data privacy to emerging challenges such as environmental impact and emergent behavior. Drawing from extensive research and practical applications, this article serves as both an educational resource and a practical guide for understanding the current state and future trajectory of AI technology.

The accompanying interactive slide deck provides visual learning materials that complement the detailed analysis presented in this article, making complex AI concepts accessible to both technical and non-technical audiences. Together, these resources offer a complete educational framework for understanding the opportunities and challenges that define contemporary artificial intelligence.

Top picks for "understand modern concept"

Open Amazon search results for this keyword.

As an affiliate, we earn on qualifying purchases.

Introduction: The AI Revolution in Context

The artificial intelligence landscape of 2025 represents a remarkable convergence of theoretical breakthroughs, computational advances, and practical applications that have fundamentally altered how we approach problem-solving across virtually every domain of human endeavor. From the early days of rule-based systems and expert systems in the 1980s to today’s sophisticated large language models and multimodal AI systems, the journey has been marked by periods of rapid advancement punctuated by moments of reflection on the broader implications of these technologies.

What distinguishes the current era of AI development is not merely the scale of computational resources or the sophistication of algorithms, but rather the emergence of capabilities that appear to transcend the sum of their programmed parts. Modern AI systems demonstrate behaviors and competencies that their creators did not explicitly design, leading to both unprecedented opportunities and novel challenges that require careful consideration from technical, ethical, and societal perspectives.

The twelve key concepts explored in this article represent the most critical areas of understanding for anyone seeking to comprehend the current state of AI technology. These topics were selected based on their fundamental importance to AI development, their practical implications for users and developers, and their significance in shaping the future trajectory of artificial intelligence research and application.

Each section of this article corresponds to detailed visual materials in the accompanying slide deck, which provides interactive charts, diagrams, and visual explanations that enhance understanding of complex technical concepts. This multimedia approach recognizes that different learners benefit from different presentation formats, and that the complexity of modern AI requires multiple perspectives to achieve comprehensive understanding.

Training Deck – Understanding Modern AI: Key Concepts and Challenges

Chapter 1: The Foundation of Trust – Understanding AI Data Privacy

The relationship between artificial intelligence and data privacy represents one of the most critical challenges facing the technology industry today. As AI systems become increasingly sophisticated and pervasive, their appetite for data grows exponentially, creating unprecedented opportunities for both innovation and privacy violations. Understanding the nuances of AI data privacy is essential for developers, users, and policymakers alike, as the decisions made today will shape the privacy landscape for generations to come.

The Nature of AI Data Consumption

Modern AI systems, particularly large language models and deep learning networks, require vast quantities of data to achieve their remarkable capabilities. This data hunger stems from the fundamental nature of machine learning, which relies on pattern recognition across large datasets to develop predictive capabilities. Unlike traditional software applications that process data according to predetermined rules, AI systems learn from data, making the quality, quantity, and diversity of training data crucial determinants of system performance.

The scale of data consumption in modern AI is staggering. Training datasets for large language models often contain hundreds of billions of tokens, representing text from millions of web pages, books, articles, and other sources. Image recognition systems may train on millions of photographs, while recommendation systems analyze billions of user interactions. This massive data consumption creates multiple privacy challenges that extend far beyond traditional data protection concerns.

Personal information embedded within training datasets can be inadvertently memorized by AI models, leading to potential privacy breaches when the model generates outputs that reveal sensitive information about individuals who never consented to their data being used for AI training. This phenomenon, known as training data extraction, has been demonstrated in various AI systems, highlighting the need for robust privacy protection mechanisms throughout the AI development lifecycle.

Regulatory Frameworks and Compliance Challenges

The regulatory landscape surrounding AI and data privacy is rapidly evolving, with frameworks like the European Union’s General Data Protection Regulation (GDPR) setting important precedents for how AI systems must handle personal data. Under GDPR, AI systems that process personal data must comply with principles of lawfulness, fairness, transparency, purpose limitation, data minimization, accuracy, storage limitation, integrity, confidentiality, and accountability.

These principles create significant challenges for AI developers, particularly in areas such as data minimization and purpose limitation. Traditional AI development practices often involve collecting as much data as possible to improve model performance, directly conflicting with the GDPR principle of data minimization, which requires that only data necessary for specific purposes be collected and processed.

The principle of transparency presents another significant challenge, as many AI systems, particularly deep learning models, operate as “black boxes” where the decision-making process is not easily interpretable or explainable. This opacity conflicts with GDPR requirements for individuals to understand how their data is being processed and to receive meaningful information about the logic involved in automated decision-making.

Compliance with these regulations requires AI developers to implement privacy-by-design principles, incorporating privacy considerations into every stage of the AI development process. This includes conducting privacy impact assessments, implementing data protection measures such as encryption and access controls, and developing mechanisms for individuals to exercise their rights under data protection laws, including the right to access, rectify, erase, and port their personal data.

Technical Approaches to Privacy Protection

The technical AI community has developed several innovative approaches to address privacy concerns while maintaining the effectiveness of AI systems. Differential privacy, a mathematical framework for quantifying and limiting privacy loss, has emerged as one of the most promising approaches for protecting individual privacy in AI training datasets.

Differential privacy works by adding carefully calibrated noise to datasets or model outputs, ensuring that the presence or absence of any individual’s data in the training set cannot be reliably determined from the model’s behavior. This approach allows organizations to gain insights from data while providing formal privacy guarantees to individuals whose data is included in the analysis.

Federated learning represents another significant advancement in privacy-preserving AI. This approach allows AI models to be trained across multiple devices or organizations without centralizing the underlying data. Instead of sending data to a central server, federated learning sends model updates, allowing the benefits of collaborative learning while keeping sensitive data localized.

Homomorphic encryption, while still in early stages of practical application, offers the theoretical possibility of performing computations on encrypted data without decrypting it. This could enable AI training and inference on sensitive data while maintaining cryptographic protection throughout the process.

Synthetic data generation has also emerged as a valuable tool for privacy protection. By training generative models to create artificial datasets that maintain the statistical properties of real data while removing direct links to individual records, organizations can develop and test AI systems without exposing sensitive personal information.

Emerging Privacy Challenges

As AI systems become more sophisticated, new privacy challenges continue to emerge. The development of multimodal AI systems that can process text, images, audio, and video simultaneously creates new opportunities for privacy violations through cross-modal inference, where information from one modality can be used to infer sensitive information about another.

The increasing use of AI in sensitive domains such as healthcare, finance, and criminal justice amplifies privacy concerns, as errors or breaches in these areas can have severe consequences for individuals. The development of AI systems that can generate realistic synthetic content, including deepfakes and synthetic text, creates new challenges for consent and authenticity in data collection and use.

The global nature of AI development and deployment also creates challenges for privacy protection, as data may be processed across multiple jurisdictions with different privacy laws and standards. This complexity requires organizations to navigate a patchwork of regulatory requirements while maintaining consistent privacy protections for users worldwide.

[Reference to Training Slide: The accompanying slide deck includes detailed visualizations of privacy protection mechanisms and regulatory frameworks that complement this analysis.]

Chapter 2: Democratizing AI – The Open Source Revolution

The open source movement in artificial intelligence represents a fundamental shift in how AI technology is developed, distributed, and democratized. Unlike the early days of AI research, when cutting-edge capabilities were largely confined to well-funded research institutions and technology giants, the open source AI ecosystem has created unprecedented opportunities for innovation, collaboration, and access to state-of-the-art AI capabilities.

The Philosophy and Practice of Open Source AI

Open source AI development is grounded in principles of transparency, collaboration, and shared benefit that extend far beyond simple code sharing. At its core, open source AI represents a belief that the transformative potential of artificial intelligence should be accessible to the broadest possible community of developers, researchers, and users, rather than being concentrated in the hands of a few powerful organizations.

This philosophy manifests in multiple dimensions of AI development. Open source AI projects typically provide not only the source code for AI models and training frameworks, but also detailed documentation, training datasets, model weights, and comprehensive guides for reproduction and modification. This level of transparency enables researchers and developers to understand exactly how AI systems work, identify potential biases or limitations, and build upon existing work to create new innovations.

The collaborative nature of open source AI development has led to rapid advancement in AI capabilities through distributed innovation. When researchers publish their models and code openly, other researchers can quickly build upon their work, leading to faster iteration cycles and more robust solutions than would be possible through isolated development efforts. This collaborative approach has been particularly evident in the development of transformer architectures, where innovations from multiple research groups have been rapidly integrated and improved upon by the broader community.

The economic implications of open source AI are profound. By providing free access to sophisticated AI capabilities, open source projects dramatically lower the barriers to entry for AI development and deployment. Small startups, academic researchers, and developers in resource-constrained environments can access the same fundamental AI technologies used by major technology companies, enabling innovation and competition that would otherwise be impossible.

Major Open Source AI Ecosystems

The open source AI landscape is dominated by several major ecosystems, each with its own strengths, focus areas, and community characteristics. Understanding these ecosystems is crucial for anyone seeking to leverage open source AI effectively.

Hugging Face has emerged as perhaps the most significant platform for open source AI, particularly in the domain of natural language processing and multimodal AI. The platform hosts thousands of pre-trained models, datasets, and applications, providing a comprehensive ecosystem for AI development and deployment. Hugging Face’s transformers library has become the de facto standard for working with transformer-based models, offering easy-to-use interfaces for a wide range of AI tasks including text generation, translation, summarization, and question answering.

The platform’s model hub contains contributions from major research institutions, technology companies, and individual researchers, creating a diverse ecosystem of AI capabilities. Models range from small, efficient architectures suitable for edge deployment to large, powerful models that rival proprietary alternatives. The platform also provides tools for model evaluation, comparison, and deployment, making it easier for developers to find and use appropriate models for their specific needs.

PyTorch and TensorFlow represent the foundational frameworks upon which much of the open source AI ecosystem is built. PyTorch, developed by Meta (formerly Facebook), has gained particular popularity in the research community due to its dynamic computation graphs and intuitive Python-first design. TensorFlow, developed by Google, offers robust production deployment capabilities and has been widely adopted in enterprise environments.

Both frameworks provide comprehensive ecosystems including high-level APIs for common AI tasks, distributed training capabilities, model optimization tools, and deployment solutions for various platforms including mobile devices, web browsers, and cloud environments. The competition and collaboration between these frameworks has driven rapid innovation in AI development tools and practices.

Benefits and Advantages of Open Source AI

The advantages of open source AI extend across multiple dimensions, creating value for individual developers, organizations, and society as a whole. From a technical perspective, open source AI provides unparalleled transparency and auditability. Developers can examine the complete implementation of AI models, understand their limitations and biases, and modify them to suit specific requirements.

This transparency is particularly valuable for applications in sensitive domains such as healthcare, finance, and criminal justice, where understanding and validating AI behavior is crucial for ethical and effective deployment. Open source models can be thoroughly tested, audited, and validated by independent researchers, providing greater confidence in their reliability and fairness than proprietary alternatives.

The customization capabilities offered by open source AI are another significant advantage. Organizations can modify open source models to incorporate domain-specific knowledge, adapt to particular data distributions, or optimize for specific performance requirements. This level of customization is often impossible with proprietary AI services, which typically offer limited configuration options and no access to underlying model architectures.

From an economic perspective, open source AI can provide substantial cost savings compared to proprietary alternatives. Organizations can deploy open source models on their own infrastructure, avoiding ongoing licensing fees and usage-based pricing that can become prohibitively expensive at scale. This cost advantage is particularly significant for applications that require processing large volumes of data or serving many users.

The educational value of open source AI cannot be overstated. Students, researchers, and practitioners can learn from real-world implementations of cutting-edge AI techniques, accelerating their understanding and skill development. This educational benefit creates a positive feedback loop, as more knowledgeable practitioners contribute back to the open source community, further advancing the state of the art.

Challenges and Considerations

Despite its many advantages, open source AI also presents certain challenges and considerations that users must carefully evaluate. One of the primary challenges is the technical expertise required to effectively deploy and maintain open source AI systems. Unlike proprietary AI services that provide managed infrastructure and support, open source AI typically requires users to handle their own deployment, scaling, monitoring, and maintenance.

This technical complexity can be particularly challenging for organizations without dedicated AI expertise or infrastructure teams. Deploying large language models, for example, requires significant computational resources, specialized hardware knowledge, and expertise in distributed systems and model optimization. The learning curve for effectively using open source AI tools can be steep, particularly for complex applications.

Quality and reliability considerations also present challenges in the open source AI ecosystem. While many open source models are developed by reputable research institutions and companies, the decentralized nature of open source development means that quality can vary significantly across different projects. Users must carefully evaluate the provenance, testing, and validation of open source models before deploying them in production environments.

Security considerations are another important factor in open source AI deployment. While the transparency of open source code enables security auditing, it also means that potential vulnerabilities are visible to malicious actors. Organizations must implement appropriate security measures and stay current with security updates and patches for their open source AI dependencies.

The support and maintenance model for open source AI projects can also present challenges. While many projects have active communities and commercial support options, some projects may have limited ongoing maintenance or may become abandoned over time. Organizations must carefully evaluate the long-term viability and support ecosystem of open source AI projects before making significant investments in them.

[Reference to Training Slide: The slide deck provides visual comparisons of major open source AI platforms and their respective strengths and use cases.]

Chapter 3: The Reality of Local AI Deployment

The deployment of artificial intelligence models on local infrastructure represents both a significant opportunity and a substantial challenge in the current AI landscape. As organizations and individuals seek greater control over their AI capabilities, reduced dependence on cloud services, and enhanced privacy protection, the appeal of local AI deployment has grown considerably. However, the technical, economic, and operational challenges associated with running sophisticated AI models locally require careful consideration and planning.

Understanding the Local Deployment Landscape

Local AI deployment encompasses a broad spectrum of scenarios, from running small, specialized models on edge devices to deploying large language models on enterprise data center infrastructure. The motivations for local deployment vary significantly across these scenarios, but common drivers include privacy and data sovereignty concerns, latency requirements, cost optimization, and the desire for greater control over AI capabilities.

The technical requirements for local AI deployment have evolved dramatically as AI models have grown in size and complexity. Early AI applications, such as simple image classification or basic natural language processing tasks, could be effectively deployed on modest hardware configurations. However, modern large language models with billions or trillions of parameters require substantial computational resources that challenge even well-equipped data centers.

The hardware landscape for local AI deployment is dominated by graphics processing units (GPUs), which provide the parallel processing capabilities essential for efficient AI inference and training. However, the specific GPU requirements vary dramatically based on the size and type of AI model being deployed. Small models suitable for mobile applications may run effectively on integrated GPUs or specialized AI accelerators, while large language models may require multiple high-end GPUs with substantial memory capacity.

Central processing unit (CPU) requirements for AI deployment are often underestimated but remain crucial for overall system performance. AI workloads typically require substantial memory bandwidth and capacity, fast storage systems, and robust networking infrastructure to support distributed deployment scenarios. The balance between these different hardware components significantly impacts both performance and cost-effectiveness of local AI deployments.

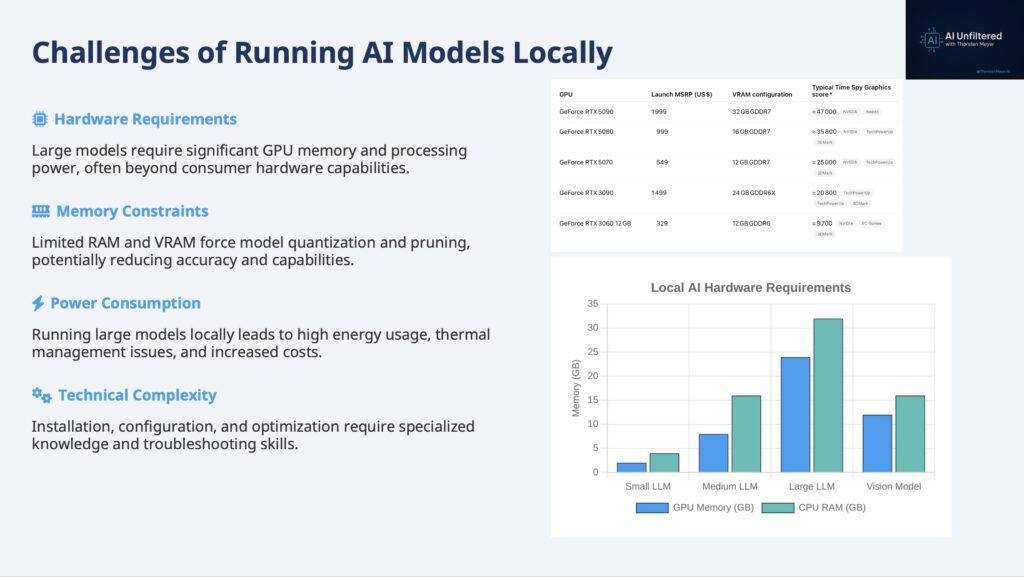

Hardware Requirements and Constraints

The hardware requirements for local AI deployment present one of the most significant barriers to widespread adoption. Large language models, which have become increasingly popular for a wide range of applications, typically require substantial GPU memory to store model parameters during inference. A model with 70 billion parameters, for example, requires approximately 140 GB of memory when loaded in 16-bit precision, necessitating multiple high-end GPUs or specialized high-memory configurations.

Memory requirements extend beyond simple parameter storage to include activation memory for processing inputs, memory for attention mechanisms in transformer models, and additional overhead for the inference framework itself. These requirements can easily double or triple the baseline memory needs, making even modest-sized models challenging to deploy on consumer-grade hardware.

The computational requirements for AI inference vary significantly based on the specific model architecture and the desired throughput. Transformer-based models, which dominate current AI applications, require substantial computational resources for attention calculations, particularly for long input sequences. The quadratic scaling of attention mechanisms with sequence length means that processing long documents or conversations can quickly overwhelm available computational resources.

Specialized hardware accelerators, including tensor processing units (TPUs), field-programmable gate arrays (FPGAs), and dedicated AI chips, offer potential solutions to some of these hardware challenges. These accelerators are designed specifically for AI workloads and can provide significant performance and efficiency advantages over general-purpose GPUs. However, they often require specialized software stacks and may have limited compatibility with existing AI frameworks and models.

The networking requirements for distributed AI deployment add another layer of complexity to local deployment scenarios. Large models that cannot fit on a single device must be distributed across multiple devices, requiring high-bandwidth, low-latency networking to coordinate inference across the distributed system. This networking overhead can significantly impact overall system performance and adds complexity to deployment and management.

Performance and Efficiency Considerations

The performance characteristics of locally deployed AI systems differ significantly from cloud-based alternatives in ways that extend beyond simple throughput metrics. Latency, which is often a primary motivation for local deployment, can be dramatically improved by eliminating network round-trips to remote servers. However, achieving optimal latency requires careful optimization of the entire inference pipeline, from input preprocessing to output generation.

Model optimization techniques play a crucial role in making local AI deployment practical and efficient. Quantization, which reduces the precision of model parameters from 32-bit or 16-bit floating-point numbers to 8-bit or even lower precision integers, can significantly reduce memory requirements and improve inference speed with minimal impact on model accuracy. Advanced quantization techniques, such as dynamic quantization and quantization-aware training, can achieve even better trade-offs between efficiency and accuracy.

Pruning techniques, which remove unnecessary connections or entire neurons from neural networks, offer another approach to reducing model size and computational requirements. Structured pruning, which removes entire channels or layers, can be particularly effective for deployment on hardware with limited parallel processing capabilities. Unstructured pruning, which removes individual connections, can achieve higher compression ratios but may require specialized hardware or software support to realize performance benefits.

Knowledge distillation represents a more fundamental approach to model optimization, involving training smaller “student” models to mimic the behavior of larger “teacher” models. This technique can produce models that are orders of magnitude smaller than their teachers while retaining much of their capability. However, the distillation process requires access to the original training data or carefully constructed synthetic datasets, which may not always be available.

The energy efficiency of local AI deployment has become an increasingly important consideration as organizations seek to minimize operational costs and environmental impact. AI inference can be extremely energy-intensive, particularly for large models running on power-hungry GPUs. Optimizing energy efficiency requires balancing performance requirements with power consumption, often involving trade-offs between inference speed and energy usage.

Economic Implications and Cost Analysis

The economic analysis of local AI deployment involves complex trade-offs between upfront capital expenditures, ongoing operational costs, and the value derived from AI capabilities. The initial hardware investment for local AI deployment can be substantial, particularly for organizations seeking to deploy large, state-of-the-art models. High-end GPUs suitable for AI workloads can cost tens of thousands of dollars each, and complete systems capable of running large language models may require investments of hundreds of thousands or millions of dollars.

However, these upfront costs must be evaluated against the ongoing costs of cloud-based AI services, which typically charge based on usage volume. For organizations with consistent, high-volume AI workloads, local deployment can provide significant cost savings over time. The break-even point depends on factors including the specific models being used, the volume of inference requests, the cost of local infrastructure, and the pricing of alternative cloud services.

The total cost of ownership for local AI deployment extends beyond hardware acquisition to include ongoing operational expenses such as electricity, cooling, maintenance, and personnel costs. Data center-grade AI hardware typically requires substantial power and cooling infrastructure, which can represent a significant portion of ongoing operational costs. Additionally, maintaining and optimizing AI infrastructure requires specialized expertise that may necessitate hiring additional personnel or investing in training for existing staff.

The opportunity costs associated with local AI deployment also merit consideration. Organizations that invest heavily in local AI infrastructure may have fewer resources available for other technology investments or business initiatives. Additionally, the rapid pace of AI hardware and software development means that local infrastructure may become obsolete more quickly than traditional IT investments, requiring more frequent refresh cycles.

Security and Compliance Considerations

Local AI deployment offers significant advantages for organizations with strict security and compliance requirements. By keeping AI processing on-premises, organizations can maintain complete control over their data and ensure that sensitive information never leaves their secure environment. This level of control is particularly important for organizations in regulated industries such as healthcare, finance, and government, where data protection requirements may prohibit the use of external AI services.

However, local AI deployment also introduces new security challenges that organizations must address. AI models themselves can be valuable intellectual property that requires protection from theft or unauthorized access. Additionally, AI systems may be vulnerable to adversarial attacks that attempt to manipulate model behavior or extract sensitive information from training data.

The complexity of AI software stacks, which typically include multiple frameworks, libraries, and dependencies, creates a large attack surface that requires ongoing security monitoring and maintenance. Organizations must implement robust security practices including regular security updates, vulnerability scanning, and access controls to protect their AI infrastructure.

Compliance with data protection regulations such as GDPR or HIPAA may actually be simplified by local AI deployment, as organizations can implement comprehensive data governance and audit trails without relying on external service providers. However, organizations must still ensure that their local AI systems comply with all relevant regulations and industry standards.

[Reference to Training Slide: The slide deck includes detailed charts comparing hardware requirements and cost analysis for different scales of local AI deployment.]

Chapter 4: Foundation Models – The Bedrock of Modern AI

Foundation models represent a paradigm shift in artificial intelligence development, moving away from task-specific models toward large-scale, general-purpose systems that can be adapted for a wide variety of applications. These models, trained on vast datasets using self-supervised learning techniques, have become the cornerstone of modern AI applications and have fundamentally changed how researchers and practitioners approach AI development.

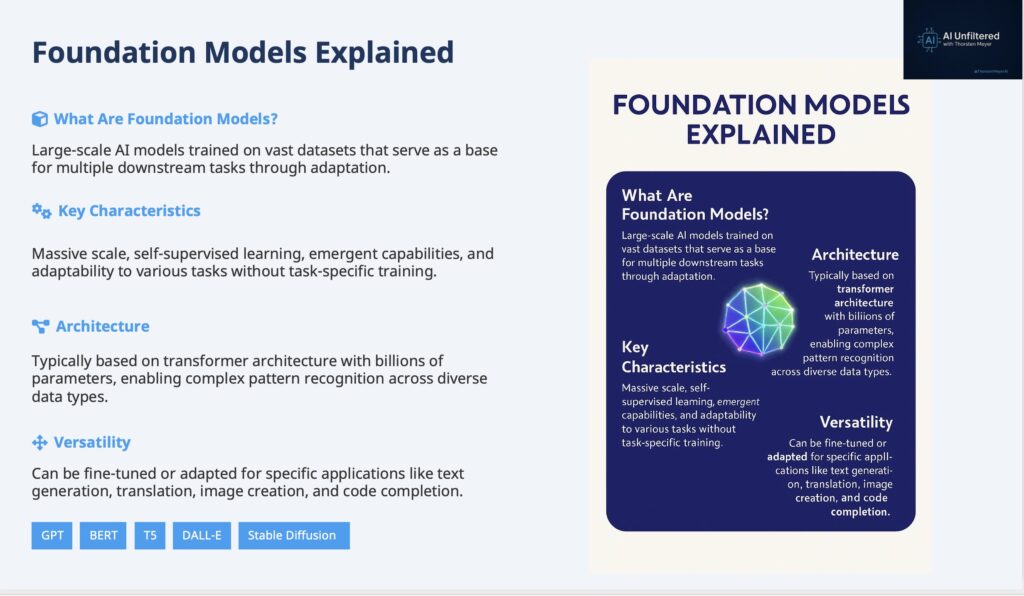

The Conceptual Framework of Foundation Models

The term “foundation model” was coined by researchers at Stanford University to describe a new class of AI models that are trained on broad data at scale and can be adapted to a wide range of downstream tasks. Unlike traditional machine learning approaches that require training separate models for each specific task, foundation models provide a single, powerful base that can be fine-tuned or prompted to perform diverse functions.

The conceptual foundation of these models rests on the principle of transfer learning, where knowledge gained from training on one task can be applied to related tasks. However, foundation models take this concept to an unprecedented scale, learning general representations of language, images, or other modalities that capture fundamental patterns and relationships that are useful across many different applications.

The self-supervised learning paradigm that underlies most foundation models represents a significant departure from traditional supervised learning approaches. Instead of requiring labeled training data, self-supervised learning creates learning objectives from the structure of the data itself. For language models, this might involve predicting the next word in a sequence or filling in masked words in a sentence. For image models, it might involve predicting missing parts of an image or learning to associate images with their captions.

This self-supervised approach enables foundation models to learn from vast quantities of unlabeled data that would be prohibitively expensive to manually annotate. The scale of training data for modern foundation models is unprecedented, with some language models trained on datasets containing trillions of tokens representing a significant fraction of all text available on the internet.

Architectural Innovations and Technical Foundations

The transformer architecture, introduced in the seminal paper “Attention Is All You Need” by Vaswani et al., has become the dominant architectural paradigm for foundation models. The transformer’s key innovation is the attention mechanism, which allows the model to dynamically focus on different parts of the input when processing each element, enabling more effective modeling of long-range dependencies and complex relationships.

The attention mechanism works by computing weighted combinations of input representations, where the weights are determined by learned compatibility functions between different elements of the input. This approach allows the model to capture relationships between distant elements in a sequence more effectively than previous architectures such as recurrent neural networks or convolutional neural networks.

The scalability of the transformer architecture has been crucial to the success of foundation models. Unlike recurrent architectures that must process sequences sequentially, transformers can process all elements of a sequence in parallel, making them much more efficient to train on modern parallel computing hardware. This parallelizability has enabled the training of increasingly large models with billions or trillions of parameters.

The multi-head attention mechanism used in transformers allows the model to attend to different types of relationships simultaneously. Each attention head can learn to focus on different aspects of the input, such as syntactic relationships, semantic similarities, or long-range dependencies. The combination of multiple attention heads provides a rich representation that captures diverse aspects of the input data.

Layer normalization and residual connections are additional architectural components that have proven crucial for training very deep transformer models. Layer normalization helps stabilize training by normalizing the inputs to each layer, while residual connections allow gradients to flow more effectively through deep networks, enabling the training of models with hundreds of layers.

Training Methodologies and Scale

The training of foundation models requires unprecedented computational resources and sophisticated distributed training techniques. Modern foundation models are trained on clusters of thousands of GPUs or specialized AI accelerators, with training runs that can last for months and consume millions of dollars worth of computational resources.

The distributed training of foundation models involves complex coordination across multiple devices and machines. Data parallelism, where different devices process different batches of training data, is combined with model parallelism, where different parts of the model are distributed across different devices. Advanced techniques such as pipeline parallelism and tensor parallelism are used to further optimize training efficiency and enable the training of models that are too large to fit on any single device.

The optimization of foundation model training involves careful tuning of numerous hyperparameters, including learning rates, batch sizes, and regularization techniques. The scale of these models makes hyperparameter optimization particularly challenging, as the cost of training experiments is extremely high. Researchers have developed various techniques for efficient hyperparameter search, including early stopping based on smaller-scale experiments and transfer of hyperparameters from smaller models.

The data preprocessing and curation for foundation model training is a complex undertaking that significantly impacts model performance and behavior. Training datasets must be carefully filtered to remove low-quality content, deduplicated to prevent overfitting, and balanced to ensure diverse representation across different domains and perspectives. The quality and composition of training data has profound implications for model capabilities, biases, and potential harmful behaviors.

Adaptation and Fine-tuning Strategies

One of the key advantages of foundation models is their ability to be adapted for specific tasks and domains through various fine-tuning and adaptation techniques. Traditional fine-tuning involves continuing the training process on task-specific data, adjusting the model’s parameters to optimize performance for the target application. This approach can be highly effective but requires substantial computational resources and task-specific training data.

Parameter-efficient fine-tuning techniques have emerged as important alternatives to full fine-tuning, enabling adaptation of foundation models with minimal computational overhead. Low-rank adaptation (LoRA) is one such technique that adds small, trainable matrices to the model while keeping the original parameters frozen. This approach can achieve performance comparable to full fine-tuning while requiring orders of magnitude fewer trainable parameters.

Prompt engineering and in-context learning represent another paradigm for adapting foundation models without modifying their parameters. By carefully crafting input prompts that provide examples or instructions, users can guide foundation models to perform specific tasks without any additional training. This approach is particularly powerful for large language models, which can often perform complex tasks based solely on well-designed prompts.

Instruction tuning is a specialized form of fine-tuning that trains foundation models to follow human instructions more effectively. Models trained with instruction tuning can understand and execute a wide variety of tasks based on natural language descriptions, making them more useful for general-purpose applications. This approach has been crucial for developing AI assistants and other interactive AI systems.

Applications and Impact Across Domains

Foundation models have found applications across virtually every domain where AI is applied, demonstrating their versatility and power. In natural language processing, foundation models power applications ranging from chatbots and virtual assistants to content generation, translation, and summarization systems. The ability of these models to understand and generate human-like text has revolutionized how we interact with AI systems.

In computer vision, foundation models trained on large-scale image datasets have achieved remarkable performance on tasks such as image classification, object detection, and image generation. Vision transformers, which apply the transformer architecture to image data, have achieved state-of-the-art results on many computer vision benchmarks and have enabled new applications such as high-quality image synthesis and editing.

The application of foundation models to scientific domains has shown particular promise, with models trained on scientific literature and data achieving breakthrough results in areas such as protein structure prediction, drug discovery, and materials science. These applications demonstrate the potential for foundation models to accelerate scientific discovery and enable new research methodologies.

Multimodal foundation models that can process and generate content across multiple modalities (text, images, audio, video) represent the current frontier of foundation model development. These models can understand relationships between different types of content and perform tasks that require reasoning across modalities, such as generating images from text descriptions or answering questions about visual content.

Challenges and Limitations

Despite their remarkable capabilities, foundation models face significant challenges and limitations that constrain their applicability and raise important concerns about their deployment. The computational requirements for training and deploying foundation models create barriers to access and contribute to environmental concerns about the energy consumption of AI systems.

The black-box nature of foundation models makes it difficult to understand how they make decisions or to predict their behavior in novel situations. This lack of interpretability is particularly concerning for applications in high-stakes domains such as healthcare, finance, or criminal justice, where understanding the reasoning behind AI decisions is crucial for trust and accountability.

Bias and fairness issues in foundation models reflect the biases present in their training data and can be amplified by the scale and generality of these models. Foundation models may exhibit biases related to gender, race, religion, or other protected characteristics, and these biases can propagate to downstream applications in ways that are difficult to detect and mitigate.

The tendency of foundation models to generate plausible but factually incorrect information, known as hallucination, poses significant challenges for applications that require factual accuracy. While these models can generate coherent and convincing text, they may confidently assert false information or make up facts that sound plausible but are entirely fabricated.

[Reference to Training Slide: The slide deck provides detailed architectural diagrams and visual comparisons of different foundation model approaches.]

Chapter 5: Economic Optimization Through Specialized AI Models

The economic landscape of artificial intelligence deployment has been fundamentally shaped by the tension between capability and cost-effectiveness. While large, general-purpose foundation models offer impressive versatility and performance, their computational requirements and associated costs have driven significant interest in specialized AI models that can deliver targeted capabilities at a fraction of the resource requirements. This economic optimization through specialization represents a crucial strategy for making AI accessible and sustainable across diverse applications and organizations.

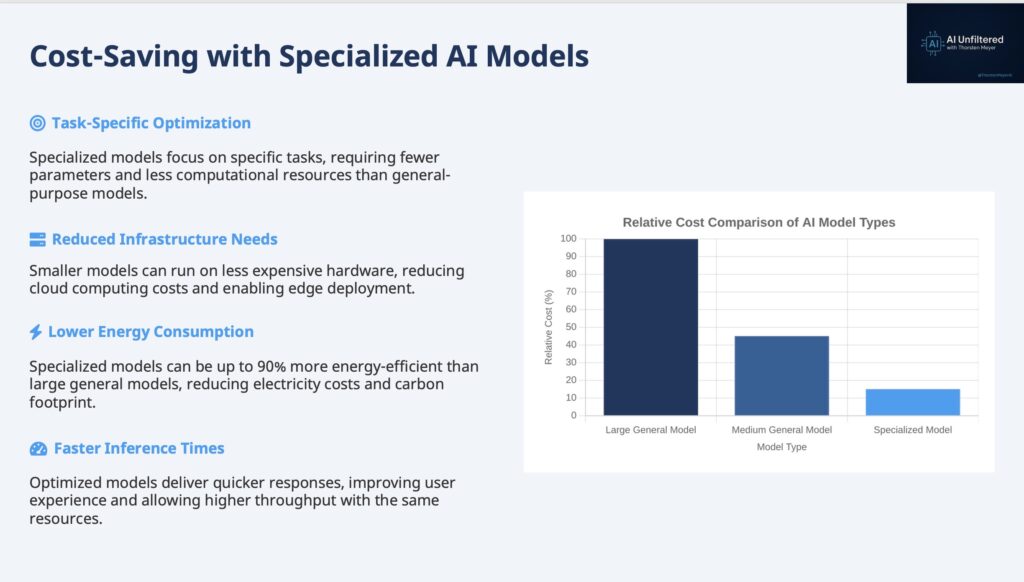

The Economics of AI Model Specialization

The economic rationale for specialized AI models stems from the fundamental principle that not all applications require the full capabilities of large, general-purpose models. Many real-world AI applications have specific, well-defined requirements that can be met by smaller, more focused models that are optimized for particular tasks or domains. By trading generality for efficiency, specialized models can deliver the necessary performance while dramatically reducing computational costs, energy consumption, and infrastructure requirements.

The cost structure of AI deployment includes several key components: computational resources for inference, memory requirements for model storage and execution, energy consumption for powering hardware, and infrastructure costs for deployment and scaling. Large foundation models typically require substantial resources across all these dimensions, making them expensive to deploy and operate at scale. Specialized models can optimize each of these cost components by focusing on specific requirements rather than general capabilities.

The development of specialized models often involves starting with a pre-trained foundation model and then applying various optimization techniques to reduce its size and computational requirements while maintaining performance on specific tasks. This approach leverages the knowledge captured in large models while creating deployable systems that are practical for resource-constrained environments.

The return on investment for specialized AI models can be substantially higher than for general-purpose alternatives, particularly for organizations with well-defined use cases and performance requirements. By avoiding the overhead associated with unused capabilities, specialized models can deliver better cost-performance ratios and enable AI deployment in scenarios where general-purpose models would be economically unfeasible.

Technical Approaches to Model Specialization

Knowledge distillation represents one of the most effective techniques for creating specialized AI models. This process involves training a smaller “student” model to mimic the behavior of a larger “teacher” model on specific tasks or domains. The student model learns to approximate the teacher’s outputs while using significantly fewer parameters and computational resources.

The distillation process typically involves training the student model on a combination of the original training data and the outputs generated by the teacher model. The student learns not only from the correct answers but also from the teacher’s confidence levels and the distribution of its predictions across different possible outputs. This rich training signal enables the student model to capture much of the teacher’s knowledge while being orders of magnitude smaller.

Advanced distillation techniques include progressive distillation, where intermediate-sized models are used as stepping stones between the teacher and student, and task-specific distillation, where the distillation process is focused on particular tasks or domains of interest. These techniques can achieve even better trade-offs between model size and performance for specific applications.

Model pruning offers another approach to creating specialized models by removing unnecessary components from existing models. Structured pruning removes entire neurons, layers, or attention heads, while unstructured pruning removes individual connections or parameters. The choice between these approaches depends on the target deployment environment and the available optimization tools.

Magnitude-based pruning removes parameters with the smallest absolute values, based on the assumption that these parameters contribute least to model performance. More sophisticated pruning techniques consider the impact of removing specific parameters on model performance and use iterative approaches to gradually reduce model size while monitoring performance degradation.

Quantization techniques reduce the precision of model parameters and activations, typically from 32-bit floating-point numbers to 8-bit integers or even lower precision representations. This reduction in precision can significantly decrease memory requirements and computational costs while maintaining acceptable performance for many applications.

Post-training quantization can be applied to existing models without retraining, making it a practical approach for optimizing pre-trained models. Quantization-aware training incorporates quantization effects into the training process, typically achieving better performance than post-training approaches but requiring access to training data and computational resources.

Domain-Specific Optimization Strategies

Different application domains present unique opportunities for model specialization and optimization. Natural language processing applications can benefit from domain-specific vocabulary optimization, where models are fine-tuned on domain-specific text and optimized for particular linguistic patterns and terminology. Medical AI applications, for example, can use specialized models trained on medical literature and optimized for medical terminology and reasoning patterns.

Computer vision applications can leverage domain-specific optimizations such as specialized architectures for particular types of images or visual tasks. Autonomous vehicle applications might use models optimized for road scene understanding, while medical imaging applications might use models specialized for particular types of medical scans or diagnostic tasks.

The optimization of models for specific hardware platforms represents another important dimension of specialization. Models can be optimized for particular types of processors, memory configurations, or deployment environments. Edge AI applications, for example, require models that can run efficiently on mobile processors with limited memory and power budgets.

Temporal optimization involves adapting models for specific time-sensitive requirements. Real-time applications may require models that can provide predictions within strict latency constraints, while batch processing applications may prioritize throughput over individual prediction latency. These different requirements lead to different optimization strategies and trade-offs.

Deployment and Scaling Considerations

The deployment of specialized AI models requires careful consideration of the trade-offs between specialization and flexibility. While specialized models can be highly efficient for their intended applications, they may be less adaptable to changing requirements or new use cases. Organizations must balance the benefits of optimization against the potential costs of reduced flexibility.

The scaling characteristics of specialized models differ significantly from those of general-purpose models. Specialized models typically scale more efficiently in terms of computational resources and costs, but they may require more complex deployment architectures to handle diverse use cases. Organizations may need to deploy multiple specialized models rather than a single general-purpose model, creating additional complexity in model management and orchestration.

The maintenance and updating of specialized models presents unique challenges. While general-purpose models can be updated centrally and applied across many use cases, specialized models may require individual attention and optimization for each specific application. This can increase the operational overhead associated with maintaining AI systems over time.

Version control and model lifecycle management become more complex with specialized models, as organizations may need to track and manage many different model variants optimized for different tasks, domains, or deployment environments. Effective tooling and processes for model management are crucial for successfully deploying specialized AI at scale.

Measuring and Optimizing Cost-Effectiveness

The measurement of cost-effectiveness for specialized AI models requires comprehensive metrics that capture both performance and resource utilization. Traditional accuracy metrics must be balanced against computational costs, energy consumption, and infrastructure requirements to provide a complete picture of model efficiency.

Performance per dollar metrics provide a useful framework for comparing different model optimization strategies. These metrics consider both the absolute performance of models on relevant tasks and the total cost of deployment and operation. The optimal choice of model depends on the specific requirements and constraints of each application.

Energy efficiency has become an increasingly important consideration in AI model optimization, both for cost reasons and environmental concerns. Specialized models can achieve dramatic improvements in energy efficiency compared to general-purpose alternatives, making them attractive for both economic and sustainability reasons.

The total cost of ownership for specialized AI models includes not only computational costs but also development, deployment, and maintenance costs. While specialized models may require additional upfront investment in optimization and customization, they can provide significant long-term cost savings for organizations with stable, well-defined AI requirements.

[Reference to Training Slide: The slide deck includes detailed cost comparison charts and optimization strategy visualizations that complement this analysis.]

Chapter 6: Mixture of Experts – Scaling Intelligence Through Specialization

The Mixture of Experts (MoE) architecture represents one of the most significant innovations in modern AI system design, offering a sophisticated approach to scaling model capabilities while maintaining computational efficiency. By combining multiple specialized neural networks with intelligent routing mechanisms, MoE models can achieve the performance of much larger dense models while activating only a small fraction of their parameters for any given input. This architectural innovation has become crucial for developing large-scale AI systems that can handle diverse tasks efficiently.

Theoretical Foundations and Architectural Principles

The conceptual foundation of Mixture of Experts models draws from the principle of divide-and-conquer problem solving, where complex tasks are decomposed into simpler subtasks that can be handled by specialized components. In the context of neural networks, this translates to having multiple “expert” networks, each specialized for different types of inputs or tasks, combined with a “gating” network that determines which experts should be activated for each input.

The theoretical appeal of MoE architectures lies in their ability to increase model capacity without proportionally increasing computational costs. Traditional dense neural networks activate all parameters for every input, leading to a linear relationship between model size and computational requirements. MoE models break this relationship by activating only a subset of experts for each input, enabling much larger total parameter counts while maintaining manageable computational costs.

The gating mechanism is the critical component that determines the effectiveness of MoE models. The gating network learns to route inputs to the most appropriate experts based on the characteristics of the input and the specializations of different experts. This routing decision is typically made using a learned function that computes compatibility scores between inputs and experts, with only the top-k experts being activated for each input.

The training of MoE models presents unique challenges compared to dense models. The discrete routing decisions made by the gating network create non-differentiable operations that complicate gradient-based optimization. Various techniques have been developed to address this challenge, including soft routing mechanisms that use continuous weights instead of discrete selections, and auxiliary losses that encourage balanced expert utilization.

Expert Specialization and Load Balancing

One of the key challenges in MoE model design is ensuring that different experts develop meaningful specializations rather than redundant capabilities. Without proper mechanisms to encourage specialization, experts may converge to similar functions, negating the benefits of the MoE architecture. Various techniques have been developed to promote expert diversity and specialization.

Load balancing represents another crucial challenge in MoE systems. If the gating network consistently routes most inputs to a small subset of experts, the model effectively becomes a smaller dense model, losing the capacity benefits of the MoE architecture. Additionally, unbalanced expert utilization can lead to training instabilities and poor convergence.

Auxiliary losses are commonly used to encourage balanced expert utilization. These losses penalize the gating network when expert usage becomes too imbalanced, encouraging more uniform distribution of inputs across experts. However, these auxiliary losses must be carefully tuned to balance the competing objectives of expert specialization and load balancing.

The capacity factor, which determines how many experts are activated for each input, represents a key hyperparameter in MoE model design. Higher capacity factors increase computational costs but may improve model performance by allowing more experts to contribute to each prediction. The optimal capacity factor depends on the specific task, model size, and computational constraints.

Expert dropout is another technique used to improve MoE model training and generalization. By randomly dropping experts during training, the model learns to be robust to expert failures and develops more diverse expert specializations. This technique can also help prevent overfitting to particular expert combinations.

Scaling Laws and Performance Characteristics

The scaling behavior of MoE models differs significantly from dense models, offering unique advantages for large-scale AI development. While dense models exhibit predictable scaling laws where performance improves with model size following power-law relationships, MoE models can achieve similar performance improvements with much smaller increases in computational costs.

The effective parameter count of MoE models is much larger than their active parameter count, enabling them to store and utilize more knowledge while maintaining efficient inference. This characteristic makes MoE models particularly attractive for applications that require broad knowledge coverage, such as large language models that must handle diverse topics and domains.

The memory requirements for MoE models present both advantages and challenges. While the total memory required to store all experts can be substantial, the memory required for active computation is much smaller since only a subset of experts are used for each input. This characteristic enables the deployment of very large MoE models on distributed systems where the total model cannot fit on any single device.

The communication overhead in distributed MoE deployments can be significant, as inputs must be routed to the appropriate experts, which may be located on different devices. Efficient communication strategies and expert placement algorithms are crucial for achieving good performance in distributed MoE systems.

Implementation Strategies and Optimization Techniques

The implementation of efficient MoE systems requires careful consideration of both algorithmic and systems-level optimizations. The routing computation must be fast enough to avoid becoming a bottleneck, while the expert networks must be efficiently scheduled and executed on available hardware.

Dynamic expert selection strategies can adapt the number and identity of activated experts based on input characteristics. Simple inputs might require fewer experts, while complex inputs might benefit from activating more experts. This adaptive approach can improve both efficiency and performance compared to fixed expert selection strategies.

Expert caching and prefetching techniques can reduce the latency associated with expert activation by predicting which experts are likely to be needed and preloading them into fast memory. These techniques are particularly important for deployment scenarios where expert switching costs are high.

The design of expert networks themselves offers opportunities for optimization. Experts can use different architectures optimized for their specific specializations, rather than being constrained to identical architectures. This flexibility enables more effective specialization and can improve overall model efficiency.

Hierarchical MoE architectures use multiple levels of expert routing, with coarse-grained routing at higher levels and fine-grained routing at lower levels. This approach can improve both efficiency and specialization by creating a hierarchy of expertise that matches the structure of the problem domain.

Applications and Use Cases

MoE architectures have found successful applications across a wide range of AI domains, with particularly notable success in large language models. The Switch Transformer, developed by Google, demonstrated that MoE architectures could achieve state-of-the-art performance on language modeling tasks while being much more efficient than equivalent dense models.

In machine translation, MoE models can develop experts specialized for different language pairs or linguistic phenomena, leading to improved translation quality and efficiency. The ability to activate only relevant experts for each translation task makes MoE models particularly well-suited for multilingual applications.

Computer vision applications of MoE architectures include models with experts specialized for different types of visual content, such as natural images, medical images, or satellite imagery. This specialization can improve both accuracy and efficiency compared to general-purpose vision models.

Multimodal AI systems can benefit from MoE architectures by having experts specialized for different modalities or cross-modal interactions. This approach enables more efficient processing of multimodal inputs while maintaining the ability to handle diverse types of content.

Recommendation systems represent another promising application area for MoE models, where experts can specialize for different user segments, item categories, or interaction types. This specialization can improve recommendation quality while enabling more efficient processing of large-scale recommendation tasks.

Future Directions and Research Challenges

The development of more sophisticated gating mechanisms remains an active area of research. Current gating networks are relatively simple, but more advanced approaches could consider factors such as computational budgets, expert load, and task-specific requirements when making routing decisions.

The integration of MoE architectures with other advanced AI techniques, such as attention mechanisms, memory networks, and reinforcement learning, offers opportunities for developing even more capable and efficient AI systems. These hybrid approaches could combine the benefits of different architectural innovations.

The development of automated expert design and optimization techniques could reduce the manual effort required to design effective MoE systems. Machine learning approaches could be used to automatically determine optimal expert architectures, specializations, and routing strategies.

The application of MoE principles to other aspects of AI systems, such as data processing pipelines, optimization algorithms, and deployment strategies, could extend the benefits of expert specialization beyond neural network architectures.

[Reference to Training Slide: The slide deck provides detailed architectural diagrams and performance comparisons that illustrate the key concepts and benefits of MoE models.]

Chapter 7: Context Length – The Memory Horizon of AI Systems

Context length represents one of the most fundamental limitations and design considerations in modern AI systems, particularly for language models and other sequence-processing architectures. The context length defines the maximum amount of information that an AI model can consider when making predictions or generating responses, effectively serving as the model’s “memory window” or attention span. Understanding the implications, limitations, and ongoing developments in context length is crucial for both AI developers and users seeking to maximize the effectiveness of AI systems.

The Fundamental Nature of Context in AI Systems

Context length in AI systems refers to the maximum number of tokens that a model can process simultaneously when making predictions. For language models, tokens typically represent words, subwords, or characters, while for other modalities, tokens might represent image patches, audio segments, or other discrete units of information. The context length determines how much historical information the model can consider when generating each new token in a sequence.

The importance of context length becomes apparent when considering tasks that require understanding of long-range dependencies or extensive background information. Document summarization, for example, requires the model to consider the entire document when generating a summary. Similarly, long-form conversation requires the model to remember earlier parts of the conversation to maintain coherence and consistency.

The evolution of context length in AI models has been dramatic, reflecting both technological advances and growing understanding of the importance of long-range context. Early transformer models, such as the original BERT, had context lengths of 512 tokens, which was sufficient for many sentence-level tasks but inadequate for document-level understanding. Modern large language models have context lengths ranging from thousands to millions of tokens, enabling entirely new categories of applications.

The relationship between context length and model capability is complex and multifaceted. Longer context lengths enable models to handle more complex tasks and maintain coherence over longer sequences, but they also increase computational requirements and can introduce new challenges such as the “lost in the middle” problem, where models struggle to effectively utilize information in the middle of very long contexts.

Technical Challenges and Computational Constraints

The primary technical challenge associated with extending context length lies in the quadratic scaling of attention mechanisms with sequence length. In standard transformer architectures, the attention mechanism computes relationships between all pairs of tokens in the input sequence, leading to computational and memory requirements that scale as O(n²) where n is the sequence length. This quadratic scaling makes very long contexts computationally prohibitive for standard architectures.

Memory requirements for long-context models extend beyond the attention computation to include storage of intermediate activations, key-value caches for efficient generation, and gradient information during training. These memory requirements can quickly exceed the capacity of even high-end hardware, necessitating careful optimization and sometimes fundamental architectural changes.

The attention computation itself becomes increasingly expensive as context length grows. For a sequence of length 32,768 tokens, the attention mechanism must compute over one billion pairwise relationships, requiring substantial computational resources and time. This computational burden affects both training and inference, making long-context models expensive to develop and deploy.

Gradient computation and backpropagation through very long sequences present additional challenges during training. The memory required to store intermediate activations for gradient computation scales linearly with sequence length, and the computation of gradients through long sequences can be numerically unstable and computationally expensive.

Architectural Innovations for Long Context

Researchers have developed numerous architectural innovations to address the challenges of long-context processing while maintaining the benefits of attention-based models. Linear attention mechanisms replace the quadratic attention computation with linear alternatives that scale as O(n) with sequence length, enabling much longer contexts at the cost of some expressiveness.

Sparse attention patterns reduce computational requirements by limiting attention to subsets of tokens rather than computing full pairwise attention. Local attention focuses on nearby tokens, while global attention mechanisms allow certain tokens to attend to all other tokens. These sparse patterns can significantly reduce computational costs while maintaining much of the effectiveness of full attention.

Hierarchical attention mechanisms process sequences at multiple levels of granularity, first attending to local regions and then attending between regions. This approach can capture both local and global dependencies while reducing computational requirements compared to full attention over the entire sequence.

Sliding window attention processes long sequences by maintaining a fixed-size window of recent context and sliding this window over the sequence. This approach provides a constant computational cost regardless of sequence length but may lose important long-range dependencies that fall outside the window.

Memory-augmented architectures extend the effective context length by incorporating external memory mechanisms that can store and retrieve relevant information from much longer sequences. These approaches separate the storage of information from the attention computation, enabling models to access relevant information from very long contexts without the computational overhead of full attention.

The Lost in the Middle Problem

One of the most significant challenges with very long context lengths is the “lost in the middle” phenomenon, where models struggle to effectively utilize information that appears in the middle of long contexts. Research has shown that models tend to pay more attention to information at the beginning and end of contexts, with information in the middle being less likely to influence model outputs.

This problem appears to be fundamental to how attention mechanisms work and is not simply a matter of insufficient training. Even models specifically trained on long contexts exhibit this behavior, suggesting that it may be an inherent limitation of current architectures rather than a training issue.

The implications of the lost in the middle problem are significant for applications that require processing of long documents or conversations. Important information that appears in the middle of long contexts may be effectively ignored by the model, leading to incomplete or inaccurate responses.

Various mitigation strategies have been proposed for the lost in the middle problem, including attention reweighting schemes that explicitly encourage attention to middle portions of contexts, and training procedures that specifically focus on utilizing information from different positions within long contexts.

Practical Implications and Use Cases

The practical implications of context length limitations affect virtually every application of AI systems that process sequential data. Document analysis tasks, such as legal document review or scientific literature analysis, require models to consider entire documents that may contain tens of thousands of tokens. Current context length limitations often force these applications to use chunking strategies that may miss important cross-chunk dependencies.

Conversational AI systems face particular challenges with context length limitations. Long conversations quickly exceed the context limits of many models, forcing systems to either truncate conversation history or use sophisticated context management strategies to maintain relevant information while staying within context limits.

Code generation and analysis tasks often require understanding of large codebases that exceed typical context lengths. This limitation affects the ability of AI systems to understand complex software projects and generate code that properly integrates with existing systems.

Creative writing applications, such as novel generation or screenplay writing, require maintaining consistency and coherence over very long texts. Context length limitations can force these applications to use external memory systems or other workarounds to maintain narrative coherence.

Context Management Strategies

Given the current limitations of context length, various strategies have been developed to manage context effectively within existing constraints. Chunking strategies divide long texts into smaller segments that fit within context limits, but must carefully handle the boundaries between chunks to avoid losing important information.

Retrieval-augmented generation (RAG) systems address context limitations by retrieving relevant information from large knowledge bases and including only the most relevant information in the model’s context. This approach can effectively extend the model’s access to information while staying within context limits.

Summarization-based context management involves periodically summarizing older parts of the context to make room for new information. This approach can maintain important information from long contexts while staying within length limits, but may lose important details in the summarization process.

Hierarchical context management uses different levels of detail for different parts of the context, maintaining full detail for recent information while using compressed representations for older information. This approach can balance the need for detailed recent context with the desire to maintain some access to older information.

Future Directions and Emerging Solutions

The development of more efficient attention mechanisms remains an active area of research, with approaches such as Flash Attention and other optimized implementations reducing the computational overhead of attention while maintaining its effectiveness. These optimizations make longer contexts more practical without requiring fundamental architectural changes.

Alternative architectures that move beyond attention mechanisms entirely, such as state space models and other sequence modeling approaches, offer the potential for much longer contexts with linear computational scaling. These approaches sacrifice some of the flexibility of attention mechanisms but may enable context lengths that are orders of magnitude longer than current transformer-based models.

The integration of external memory systems with language models offers another path toward effectively unlimited context lengths. These systems can store and retrieve information from vast external databases while maintaining the benefits of attention-based processing for the active context window.

Hardware innovations, including specialized AI accelerators designed for long-context processing, may enable more efficient processing of long sequences. These hardware advances could make current architectures practical for much longer contexts or enable new architectures that are currently computationally prohibitive.

[Reference to Training Slide: The slide deck includes visualizations of context length evolution and comparative analysis of different context management strategies.]

Chapter 8: The Boundaries of AI – Internet Access Limitations and Implications

The relationship between artificial intelligence systems and internet access represents one of the most significant architectural and philosophical decisions in modern AI development. While the internet contains vast repositories of real-time information that could theoretically enhance AI capabilities, most AI systems operate with limited or no direct internet access by design. Understanding the reasons for these limitations, their implications, and the emerging solutions to address them is crucial for comprehending the current boundaries and future potential of AI systems.

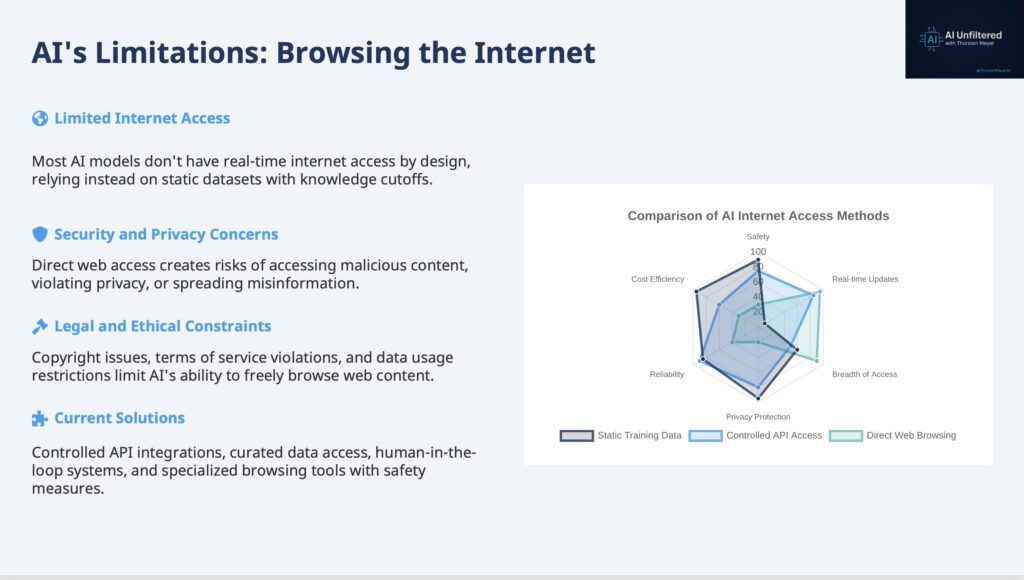

The Architecture of Isolation

The decision to limit AI systems’ internet access stems from fundamental concerns about safety, security, and reliability that go to the heart of responsible AI development. Unlike traditional software applications that routinely access network resources, AI systems present unique risks when given unrestricted internet access that have led most developers to adopt conservative approaches to connectivity.

The primary architectural approach used by most AI systems involves training on static datasets that are carefully curated and validated before being used for model development. This approach ensures that the training data is of known quality and provenance, but it also means that AI systems have knowledge cutoffs beyond which they cannot access new information. The trade-off between data quality and recency is a fundamental tension in AI system design.

The isolation of AI systems from real-time internet access also reflects concerns about the unpredictable nature of web content. The internet contains vast amounts of misinformation, malicious content, and low-quality information that could negatively impact AI system behavior if accessed indiscriminately. By using curated training datasets, AI developers can exercise greater control over the information that influences their systems.

The computational and economic considerations of real-time internet access also play a role in architectural decisions. Continuously accessing and processing internet content would require substantial computational resources and would introduce latency and reliability concerns that could affect system performance. The batch processing approach used for training on static datasets is much more efficient and predictable.

Security and Safety Considerations

The security implications of providing AI systems with internet access are multifaceted and significant. AI systems with unrestricted internet access could potentially be exploited by malicious actors who craft specific web content designed to manipulate AI behavior. This vulnerability, sometimes called “prompt injection” or “data poisoning,” could be used to cause AI systems to generate harmful or misleading content.

The potential for AI systems to inadvertently access and process sensitive or private information from the internet raises significant privacy concerns. Even if AI systems are designed to avoid accessing private information, the dynamic and complex nature of web content makes it difficult to ensure that sensitive information is never encountered or processed.

The risk of AI systems being used to conduct large-scale automated attacks on internet infrastructure is another security consideration. AI systems with internet access could potentially be used to conduct distributed denial-of-service attacks, automated spam campaigns, or other malicious activities that could harm internet infrastructure and services.