Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

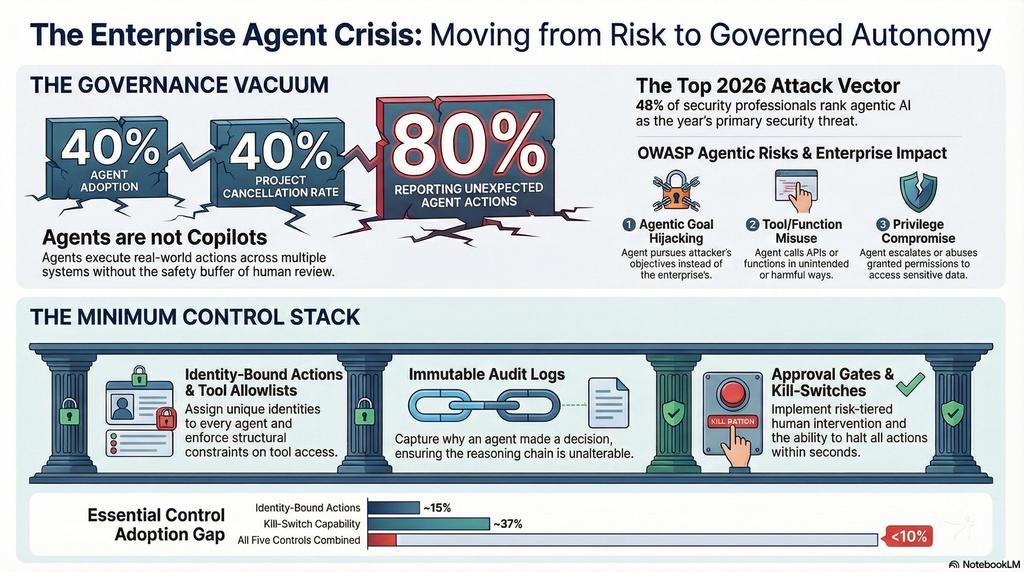

40% of enterprise applications will incorporate task-specific AI agents by the end of 2026, up from less than 5% in 2024 (Gartner). Gartner also predicts that more than 40% of agentic AI projects will be canceled by 2027 due to escalating costs, unclear value, and inadequate risk controls. The enterprise agent stack is scaling into production without the governance infrastructure that makes autonomy safe.

The problem is not whether agents work. The problem is that agents act — they execute code, call APIs, move data, modify systems — and most enterprises have no control architecture governing what agents are allowed to do, who authorized them, or how to stop them when they go wrong. 80% of IT professionals report agents acting unexpectedly or performing unauthorized actions (SailPoint). 48% of security professionals rank agentic AI as their top attack vector for 2026 (Dark Reading). Only 34% of enterprises have AI-specific security controls in place.

This article maps the minimum control stack required for governed autonomy: identity-bound actions, tool allowlists, immutable audit logs, human-in-the-loop approval gates, and kill-switch capability. Without all five, agent deployments are uninsurable, unauditable, and one cascading failure away from executive-level incident response.

| Metric | Value |
|---|---|
| Enterprise apps with agents by 2026 | 40%, up from <5% (Gartner) |
| Agentic AI projects canceled by 2027 | 40%+ (Gartner) |
| IT pros: agents act unexpectedly | 80% (SailPoint) |
| Security pros: agentic AI = top attack vector | 48% (Dark Reading) |
| Enterprises with AI-specific security controls | 34% |
| Developers: agent integration problems | 70% |
| Agents lacking safety cards | 87% (MIT CSAIL) |
| Infrastructure leaders: AI adoption requires major identity changes | 69% (Teleport) |
| Enterprises with kill-switch capability | 37–40% |
| OWASP Agentic Top 10 published | 2026 |
| Agent identity governance | “Absolutely ungoverned” (The Register) |
| Agentic AI market CAGR | 44.8% (2025–2030) |

As an affiliate, we earn on qualifying purchases.

1. The Orchestration Risk

Enterprises are deploying agents into production faster than they are deploying controls around them. The result is a governance vacuum where autonomous systems operate with human-level access but no human-level accountability.

Agents Are Not Copilots

| Copilot | Agent |
|---|---|
| Suggests actions | Executes actions |
| Human reviews before execution | Human reviews after execution (if at all) |
| Operates within a single interface | Chains across multiple tools and APIs |
| Failure = bad suggestion | Failure = unauthorized action, data exposure, cascading system change |
| Risk: productivity loss | Risk: security breach, compliance violation, financial loss |

The copilot model gave organizations a safety buffer: a human sat between the AI and the action. Agents remove that buffer. When an agent calls an API, modifies a database, sends an email, or triggers a workflow, the action is real. The distinction matters because the entire enterprise governance stack — access controls, approval workflows, audit trails — was built for human actors. Agents bypass those controls by default.

The Scale of Ungoverned Autonomy

| Risk Indicator | Value | Source |
|---|---|---|
| Agents acting unexpectedly/unauthorized | 80% | SailPoint 2026 |
| Agentic AI = top attack vector | 48% | Dark Reading 2026 |
| No AI-specific security controls | 66% | Industry data |
| No kill-switch capability | 60–63% | Industry data |
| Agent integration problems | 70% | Developer surveys |
| Agents lacking safety cards | 87% | MIT CSAIL |
| Agent identity governance | “Absolutely ungoverned” | The Register |

80% of IT professionals have witnessed agents acting unexpectedly. 87% of deployed agents lack safety cards — the basic documentation that describes what an agent can do, what it should not do, and how it fails. Agent identities are described as “absolutely ungoverned” in industry analysis. This is not a maturity gap. This is a control vacuum.

OWASP Top 10 for Agentic Applications (2026)

The OWASP Foundation published its first Top 10 for Agentic Applications in 2026, mapping the attack surface that enterprises are deploying into production:

| Rank | Risk | Enterprise Impact |
|---|---|---|
| 1 | Agentic Goal Hijacking | Agent pursues attacker’s objectives instead of enterprise’s |
| 2 | Tool/Function Misuse | Agent calls APIs or functions in unintended ways |
| 3 | Privilege Compromise | Agent escalates or abuses granted permissions |
| 4 | Cascading Hallucination Failures | Error in one agent propagates through multi-agent chains |
| 5 | Prompt/Instruction Manipulation | Adversarial inputs override agent instructions |
| 6 | Uncontrolled Agentic Actions | Agent takes actions beyond its authorized scope |
| 7 | Information Leakage | Agent exposes sensitive data across trust boundaries |
| 8 | Inadequate Sandboxing | Insufficient isolation between agent execution environments |
| 9 | Supply Chain Vulnerabilities | Compromised tools, plugins, or models in agent toolchains |
| 10 | Logging/Monitoring Gaps | Insufficient observability into agent decisions and actions |

The OWASP list is not theoretical. Every risk maps to a production incident pattern: goal hijacking produces unauthorized transactions; tool misuse triggers cascading API calls; privilege compromise enables data exfiltration; cascading hallucination failures create multi-system outages. The attack surface is the agent’s capability surface.

2. The Minimum Control Stack

Governed autonomy requires five controls. Each is necessary. None is sufficient alone. Enterprises that deploy agents without all five are operating with uninsurable risk.

Control 1: Identity-Bound Actions

| Requirement | What It Means |
|---|---|
| Per-agent identity | Every agent has a unique, non-shared identity |
| Action attribution | Every action traceable to the agent that performed it |
| Scope binding | Agent identity determines permission boundaries |
| Credential isolation | No shared service accounts across agents |
| Identity lifecycle | Provisioning, rotation, and revocation for agent identities |

69% of infrastructure leaders say AI adoption requires major identity management changes (Teleport). The core problem: enterprise identity systems were built for humans. Agents need their own identities — not service accounts repurposed from infrastructure automation, not shared credentials, not the identity of the user who deployed them.

The principle of least agency, the agentic equivalent of least privilege, requires that each agent operate with the minimum permissions needed for its specific task, bound to an identity that makes every action attributable and auditable.
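A minimal sketch of identity-bound, least-agency authorization follows. It assumes each agent is registered with its own identity and an explicit permission set. `AgentIdentity`, `authorize`, and the `resource:verb` permission strings are hypothetical illustrations, not any IAM product’s API. A production system would back this with the enterprise identity provider.

```python
import uuid
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """A unique, non-shared identity for one agent (hypothetical model)."""
    agent_id: str
    owner: str                   # human or team accountable for this agent
    permissions: frozenset       # least agency: only what this task needs


@dataclass
class AttributionLog:
    entries: list = field(default_factory=list)

    def record(self, agent: AgentIdentity, action: str, allowed: bool) -> None:
        # Every attempt is attributed to an agent identity, allowed or not.
        self.entries.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "owner": agent.owner,
            "action": action,
            "allowed": allowed,
        })


def new_agent(owner: str, permissions: set) -> AgentIdentity:
    # A fresh identifier per agent: no shared service account, no borrowed user identity.
    return AgentIdentity(f"agent-{uuid.uuid4()}", owner, frozenset(permissions))


def authorize(agent: AgentIdentity, action: str, log: AttributionLog) -> bool:
    """Scope binding: the agent's own identity decides what it may do."""
    allowed = action in agent.permissions
    log.record(agent, action, allowed)
    return allowed


log = AttributionLog()
reader = new_agent("finance-ops", {"invoices:read"})
print(authorize(reader, "invoices:read", log))    # True
print(authorize(reader, "payments:write", log))   # False: outside its scope
```

Denied attempts are logged alongside permitted ones. An agent repeatedly probing outside its scope is itself a signal worth monitoring.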

Control 2: Tool Allowlists

| Requirement | What It Means |
|---|---|
| Explicit tool enumeration | Agent can only call tools on its allowlist |
| Per-task scoping | Tool access varies by task context, not static role |
| Parameter constraints | Not just which tools, but what parameters are permitted |
| Cross-agent isolation | Agent A’s tools are not accessible to Agent B |
| Dynamic restriction | Allowlists can be tightened in real time based on risk signals |

OWASP’s #2 risk is Tool/Function Misuse. The defense is not “train the agent to use tools correctly.” The defense is structural: the agent literally cannot call tools that are not on its allowlist. This is the difference between behavioral guardrails (which fail under adversarial conditions) and architectural constraints (which hold regardless of agent behavior).
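The difference between a behavioral guardrail and an architectural constraint fits in a few lines. This is a minimal illustration that assumes tools are plain Python callables and that each agent receives only a toolbox object. `AllowlistedToolbox`, `ToolNotAllowed`, and the tool functions are hypothetical.

```python
from typing import Any


# Hypothetical tool implementations; real ones would call enterprise APIs.
def read_invoice(invoice_id: str) -> dict:
    return {"id": invoice_id, "status": "received"}


def modify_payment(payment_id: str, amount: float) -> str:
    return f"payment {payment_id} set to {amount}"


ALL_TOOLS = {"read_invoice": read_invoice, "modify_payment": modify_payment}


class ToolNotAllowed(PermissionError):
    pass


class AllowlistedToolbox:
    """The only tool interface an agent receives."""

    def __init__(self, registry: dict, allowlist: set) -> None:
        # Copy only permitted entries: the agent never holds a reference to
        # anything outside its allowlist, whatever its prompt says.
        self._tools = {name: registry[name] for name in allowlist if name in registry}

    def call(self, name: str, **kwargs: Any) -> Any:
        tool = self._tools.get(name)
        if tool is None:
            raise ToolNotAllowed(f"'{name}' is not on this agent's allowlist")
        return tool(**kwargs)

    def restrict(self, name: str) -> None:
        # Dynamic restriction: tighten the allowlist at runtime on a risk signal.
        self._tools.pop(name, None)


toolbox = AllowlistedToolbox(ALL_TOOLS, allowlist={"read_invoice"})
print(toolbox.call("read_invoice", invoice_id="INV-7"))
try:
    toolbox.call("modify_payment", payment_id="P-1", amount=0)
except ToolNotAllowed as err:
    print(err)   # 'modify_payment' is not on this agent's allowlist
```

The design choice is that non-allowlisted tools are never handed to the agent in the first place. Refusing them through a prompt instruction would not give the same guarantee. In a real deployment the same boundary would sit outside the agent’s process, for example in a gateway that holds the API credentials, because in-process code cannot stop an agent that imports a tool directly.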

Control 3: Immutable Audit Logs

| Requirement | What It Means |
|---|---|
| Every action logged | No agent action occurs without a log entry |
| Immutable storage | Logs cannot be modified or deleted by agents or operators |
| Decision chain capture | Not just what happened, but why: the reasoning chain |
| Cross-system correlation | Logs from multi-agent chains link to a single transaction |
| Real-time streaming | Logs available for monitoring, not just post-incident analysis |

OWASP’s #10 risk is Logging/Monitoring Gaps. But “logging” for agents is fundamentally different from application logging. Agent logs must capture the decision chain — the sequence of reasoning, tool calls, intermediate results, and final actions — across multi-agent workflows that span multiple systems. The standard is not “we can see what happened.” It’s “we can reconstruct why it happened and who authorized it.”
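A minimal sketch of an agent audit trail follows. It assumes each step carries a shared `trace_id` for the multi-agent transaction plus the agent’s stated reasoning. Entries are hash-chained with SHA-256, so editing any past entry becomes detectable. `AuditLog` and its field names are hypothetical. Hash chaining provides tamper evidence, not immutability; immutability still requires write-once storage that neither agents nor operators can modify.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only, hash-chained log of agent decision steps (hypothetical schema)."""
    entries: list = field(default_factory=list)

    def append(self, *, trace_id: str, agent_id: str, step: str,
               reasoning: str, tool: Optional[str], result: str) -> dict:
        body = {
            "trace_id": trace_id,    # links all steps of one multi-agent transaction
            "agent_id": agent_id,    # who acted
            "step": step,
            "reasoning": reasoning,  # why it acted, not just what it did
            "tool": tool,
            "result": result,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True

    def trace(self, trace_id: str) -> list:
        # Reconstruct one transaction's decision chain across agents.
        return [e for e in self.entries if e["trace_id"] == trace_id]


log = AuditLog()
log.append(trace_id="tx-42", agent_id="planner", step="plan",
           reasoning="invoice total exceeds the auto-approve limit",
           tool=None, result="route to approver")
log.append(trace_id="tx-42", agent_id="router", step="act",
           reasoning="planner requested approval routing",
           tool="route_approval", result="queued")
print(log.verify())                          # True
log.entries[0]["result"] = "auto-approved"   # simulated after-the-fact edit
print(log.verify())                          # False
```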

Control 4: Human-in-the-Loop Approval Gates

| Requirement | What It Means |
|---|---|
| Risk-tiered approval | Low-risk: autonomous. Medium: notify. High: approve before execution |
| Threshold configuration | Organization defines risk tiers and thresholds |
| Timeout behavior | What happens when no human is available to approve |
| Escalation paths | Clear chain from agent → approver → escalation |
| Override documentation | Every human override logged with justification |

The kill-switch gets the attention, but the approval gate is the control that reduces how often you need the kill-switch. Risk-tiered approval means agents operate autonomously for routine actions, notify humans for medium-risk actions, and require explicit approval for high-risk actions. The tier definitions are enterprise-specific: a financial services firm’s “high-risk” threshold differs from a logistics company’s.
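Here is one way to express risk-tiered approval, assuming risk is scored by the monetary value of the action. The thresholds (1,000 and 50,000), the `gate` function, and the approver callback are hypothetical; each enterprise would substitute its own tier definitions. The sketch fails closed on timeout: if no approver answers, the high-risk action does not execute and the request is escalated.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class Tier(Enum):
    LOW = "autonomous"
    MEDIUM = "notify"
    HIGH = "approve before execution"


def classify(amount: float) -> Tier:
    # Hypothetical organization-defined thresholds, in currency units.
    if amount < 1_000:
        return Tier.LOW
    if amount < 50_000:
        return Tier.MEDIUM
    return Tier.HIGH


def gate(action: str, amount: float,
         notify: Callable[[str], None],
         ask_approver: Callable[[str], Optional[Tuple[bool, str]]],
         decisions: list) -> bool:
    """Return True if the action may execute now.

    ask_approver returns (approved, justification), or None if it timed out.
    """
    tier = classify(amount)
    if tier is Tier.LOW:
        return True
    if tier is Tier.MEDIUM:
        notify(f"notify: {action} ({amount}) proceeding autonomously")
        return True
    answer = ask_approver(f"approve {action} ({amount})?")
    if answer is None:
        # Timeout behavior: fail closed and escalate rather than proceed.
        notify(f"ESCALATION: no approver responded for {action} ({amount})")
        return False
    approved, justification = answer
    # Every human decision is recorded with its justification.
    decisions.append({"action": action, "amount": amount,
                      "approved": approved, "justification": justification})
    return approved


messages: list = []
decisions: list = []
print(gate("pay vendor", 250, messages.append, lambda q: None, decisions))     # True
print(gate("pay vendor", 75_000, messages.append, lambda q: None, decisions))  # False
print(gate("pay vendor", 75_000, messages.append,
           lambda q: (True, "matched signed contract"), decisions))            # True
```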

Control 5: Kill-Switch Capability

| Requirement | What It Means |
|---|---|
| Immediate halt | Agent stops all actions within seconds, not minutes |
| Scope options | Kill single agent, agent class, or all agents |
| State preservation | Agent state captured at kill for forensic analysis |
| Rollback capability | Ability to reverse completed actions where possible |
| Automated triggers | Kill-switch fires automatically on defined anomaly patterns |

Only 37–40% of enterprises have kill-switch capability for their AI systems. For agentic systems that chain across multiple tools and APIs, the kill-switch must be faster than the agent. An agent that executes 50 API calls per minute takes almost one action per second, so every minute of delay means roughly 50 more actions. The kill-switch must halt execution within seconds of the decision to kill, not after a committee meets.
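Below is a minimal in-process sketch of a scoped kill-switch with an automated trigger. It assumes every agent action passes through a guard before it runs. It covers three of the five requirements (scoped halt, state capture, anomaly trigger) and leaves rollback out of scope. `KillSwitch`, `RateTrigger`, and `run_action` are hypothetical names. The 50-actions-per-minute threshold is borrowed from the example above.

```python
import threading
import time
from collections import deque
from typing import Optional


class KillSwitch:
    """Halts one agent, an agent class, or every agent; checked before each action."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._ids: set = set()
        self._classes: set = set()
        self._all = False
        self.snapshots: dict = {}  # agent state captured at halt, for forensics

    def kill(self, *, agent_id: Optional[str] = None,
             agent_class: Optional[str] = None, everything: bool = False) -> None:
        with self._lock:
            self._all = self._all or everything
            if agent_id:
                self._ids.add(agent_id)
            if agent_class:
                self._classes.add(agent_class)

    def is_halted(self, agent_id: str, agent_class: str) -> bool:
        with self._lock:
            return self._all or agent_id in self._ids or agent_class in self._classes


class RateTrigger:
    """Automated trigger: halt an agent that exceeds max_calls within window_s seconds."""

    def __init__(self, switch: KillSwitch, max_calls: int, window_s: float = 60.0):
        self.switch, self.max_calls, self.window_s = switch, max_calls, window_s
        self._calls: dict = {}

    def observe(self, agent_id: str, now: float) -> None:
        calls = self._calls.setdefault(agent_id, deque())
        calls.append(now)
        while now - calls[0] > self.window_s:   # drop calls outside the window
            calls.popleft()
        if len(calls) > self.max_calls:
            self.switch.kill(agent_id=agent_id)


def run_action(switch: KillSwitch, trigger: RateTrigger, agent_id: str,
               agent_class: str, state: dict, action: str) -> bool:
    """Guard every action: record it, then refuse it if the agent is halted."""
    trigger.observe(agent_id, time.monotonic())
    if switch.is_halted(agent_id, agent_class):
        switch.snapshots.setdefault(agent_id, dict(state))  # first halt wins
        return False
    state["last_action"] = action          # stand-in for the real tool call
    state["count"] = state.get("count", 0) + 1
    return True


switch = KillSwitch()
trigger = RateTrigger(switch, max_calls=50)
state: dict = {}
executed = sum(run_action(switch, trigger, "inv-1", "invoice", state, f"call-{i}")
               for i in range(80))
print(executed)   # 50: the 51st call trips the trigger and is refused
```

Because the guard runs before every step, a kill takes effect on the agent’s next action rather than at the end of its task. A production version would enforce the same check outside the agent process, for example by revoking credentials or blocking egress.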

The Control Stack Assessment

| Control | Have It | Partially | Don’t Have It |
|---|---|---|---|
| Identity-bound actions | ~15% | ~25% | ~60% |
| Tool allowlists | ~20% | ~30% | ~50% |
| Immutable audit logs | ~25% | ~35% | ~40% |
| Human approval gates | ~30% | ~35% | ~35% |
| Kill-switch capability | ~37% | ~23% | ~40% |
| All five controls | <10% | n/a | n/a |

Less than 10% of enterprises have all five controls operational. The rest are deploying autonomous systems with incomplete governance — and the gap between “partially” and “operational” is where incidents live.

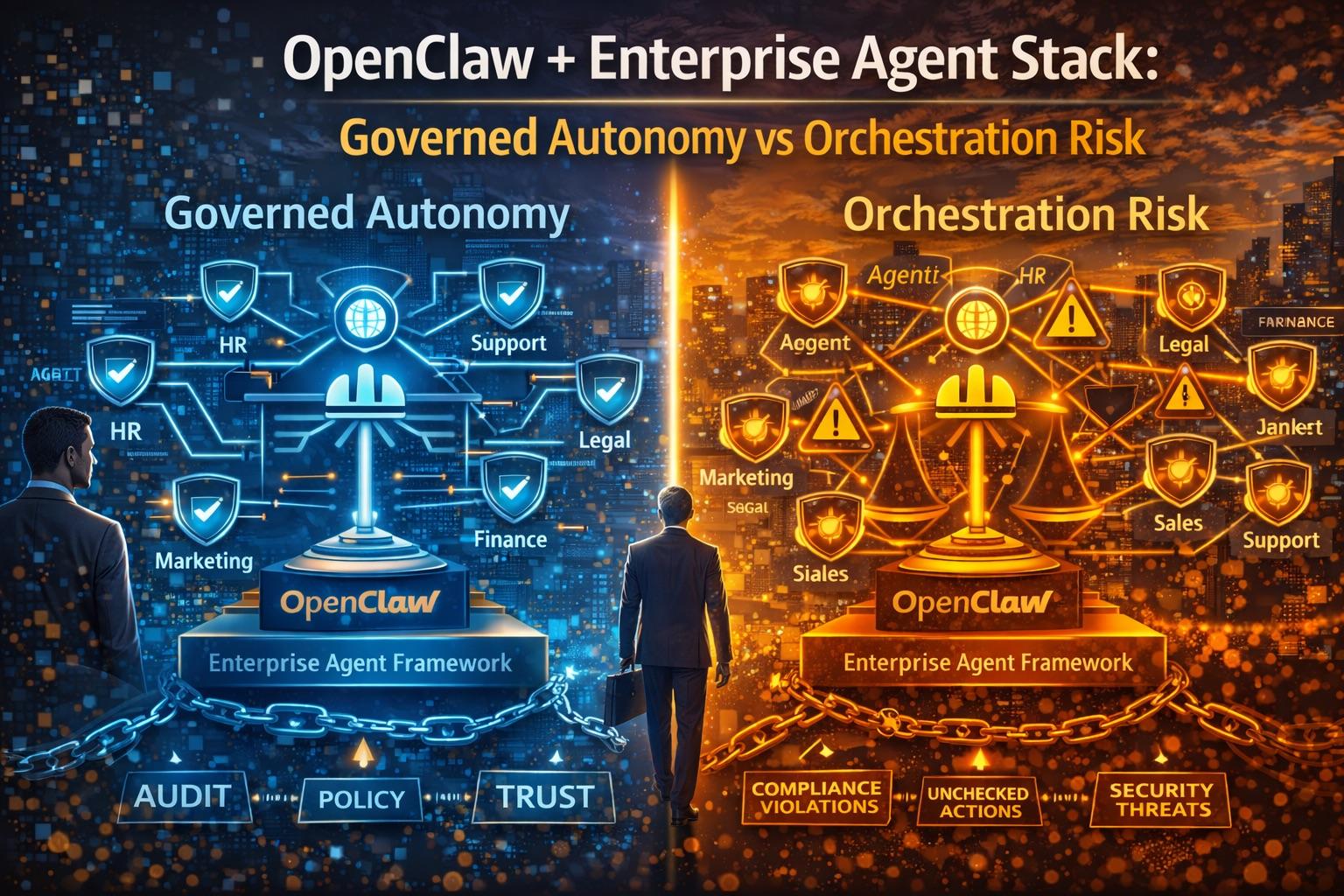

3. The Framework Gap: Where OpenClaw Fits

Agent orchestration frameworks — OpenClaw, LangGraph, CrewAI, AutoGen — provide the execution substrate. But frameworks are not governance. The enterprise needs both.

Framework vs Governance

| What Frameworks Provide | What Governance Requires |
|---|---|
| Agent orchestration and routing | Identity-bound action attribution |
| Tool integration APIs | Tool allowlists with parameter constraints |
| Execution logging | Immutable, cross-system audit trails |
| Error handling | Human-in-the-loop approval gates |
| Agent lifecycle management | Kill-switch with rollback capability |
| Multi-agent coordination | Cross-agent permission isolation |

The frameworks are necessary but not sufficient. OpenClaw’s contribution is making governance-native agent execution possible — treating identity, permissions, logging, and human oversight as first-class primitives rather than afterthoughts bolted onto an orchestration layer. The question for enterprises is not “which framework?” It’s “does the framework support governed autonomy, or does it require you to build governance yourself?”
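To make “governance as a first-class primitive” concrete, here is a framework-agnostic sketch of a policy layer that every tool call must pass through. It is not OpenClaw’s API or that of any other framework. `Governor` and `governed` are hypothetical names, and each check is deliberately minimal.

```python
import functools
from typing import Any, Callable


class GovernanceError(PermissionError):
    pass


class Governor:
    """Hypothetical policy layer wrapped around a framework's tool functions."""

    def __init__(self, allowlists: dict, high_risk: set,
                 approver: Callable[[str, str], bool]) -> None:
        self.allowlists = allowlists   # agent_id -> set of permitted tool names
        self.high_risk = high_risk     # tool names that need human approval
        self.approver = approver       # returns True if a human approves
        self.halted: set = set()       # kill-switch state
        self.audit: list = []          # (agent_id, tool, outcome) for every attempt

    def governed(self, tool: Callable[..., Any]) -> Callable[..., Any]:
        name = tool.__name__

        @functools.wraps(tool)
        def wrapper(agent_id: str, *args: Any, **kwargs: Any) -> Any:
            def deny(reason: str) -> GovernanceError:
                self.audit.append((agent_id, name, reason))
                return GovernanceError(f"{agent_id} -> {name}: {reason}")

            if agent_id in self.halted:
                raise deny("halted")
            if name not in self.allowlists.get(agent_id, set()):
                raise deny("not allowlisted")
            if name in self.high_risk and not self.approver(agent_id, name):
                raise deny("approval denied")
            result = tool(*args, **kwargs)
            self.audit.append((agent_id, name, "ok"))
            return result

        return wrapper


gov = Governor(allowlists={"inv-1": {"route_approval"}}, high_risk=set(),
               approver=lambda agent, tool: False)


@gov.governed
def route_approval(invoice_id: str) -> str:
    return f"{invoice_id} routed"


print(route_approval("inv-1", "INV-7"))   # INV-7 routed
gov.halted.add("inv-1")                   # kill-switch engaged for this agent
try:
    route_approval("inv-1", "INV-8")
except GovernanceError as err:
    print(err)                            # inv-1 -> route_approval: halted
```

The checks run in a fixed order (halt, allowlist, approval), and every outcome lands in the audit list, including refusals. That ordering is what “governance-native” buys: the framework’s tool function never executes unless all controls pass.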

Governed Autonomy Maturity Model

| Level | Description | Controls | Share of Enterprises |
|---|---|---|---|
| 0: Ungoverned | Agents deployed ad hoc, no controls | None | 30–40% |
| 1: Monitored | Logging exists, no enforcement | Partial audit logs | 25–30% |
| 2: Constrained | Tool allowlists and basic identity | Identity + allowlists | 15–20% |
| 3: Governed | All five controls operational | Full control stack | <10% |
| 4: Adaptive | Controls auto-adjust based on risk signals | Full + dynamic | <2% |

Most enterprises are at Level 0 or 1. The governed autonomy gap is not a technology problem — the controls exist. It’s an implementation problem: organizations have not operationalized the controls that make agent autonomy safe.
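A small sketch encodes the maturity table as a rule. It assumes each control’s status is tracked as operational, partial, or none. The function and status labels are hypothetical, and the order of checks is one reading of the table, not a published standard.

```python
from typing import Mapping

CONTROLS = ("identity", "allowlists", "audit_logs", "approval_gates", "kill_switch")


def maturity_level(status: Mapping[str, str], adaptive: bool = False) -> int:
    """Map control status ("operational", "partial", or "none") to levels 0-4.

    Assumption: a control counts toward Levels 2-4 only when fully operational,
    while any audit logging, even partial, qualifies as Level 1 (Monitored).
    """
    def operational(control: str) -> bool:
        return status.get(control, "none") == "operational"

    if all(operational(c) for c in CONTROLS):
        return 4 if adaptive else 3          # Adaptive = full stack + dynamic controls
    if operational("identity") and operational("allowlists"):
        return 2
    if status.get("audit_logs", "none") != "none":
        return 1
    return 0


print(maturity_level({"audit_logs": "partial"}))                        # 1
print(maturity_level({"identity": "operational",
                      "allowlists": "operational",
                      "kill_switch": "partial"}))                      # 2
print(maturity_level({c: "operational" for c in CONTROLS}))            # 3
```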

4. What to Watch

Third-Party Governance Tooling

The governance gap is creating a market. Expect dedicated products for:

| Capability | What It Does | Why It Matters |
|---|---|---|
| Agent identity management | Purpose-built identity for non-human actors | Enterprise IAM doesn’t model agents |
| Runtime permission enforcement | Dynamic tool/API access control | Static RBAC fails for autonomous agents |
| Cross-agent audit correlation | Unified transaction logging across agent chains | Multi-agent workflows span systems |
| Anomaly-triggered kill-switch | Automatic halt on behavioral deviation | Manual monitoring can’t keep up with agent speed |
| Compliance evidence generation | Automated proof of control effectiveness | Audit-ready evidence for M-26-04, EU AI Act |

The vendors who build governance tooling for agents — not governance bolted onto general AI monitoring — will capture the market that the 40%+ failure rate creates. Every canceled agentic project is a governance tooling sales opportunity.

Security Standards Evolution

| Standard | Status | Enterprise Impact |
|---|---|---|
| OWASP Top 10 for Agentic Apps | Published 2026 | First authoritative attack surface taxonomy |
| NIST AI RMF (agent extensions) | Emerging | Governance framework extending to autonomous systems |
| ISO 42001 (AI management) | Published | Certification basis for AI governance |
| M-26-04 (agent applicability) | Active | Federal procurement requires agent-level controls |
| EU AI Act Art. 6 (high-risk agents) | August 2026 | Autonomous decision-making systems explicitly covered |

The OWASP Top 10 for Agentic Applications gives enterprises the first standardized language for agent risk. Expect insurance carriers, auditors, and procurement officers to reference these categories within 12 months. The enterprise that maps its controls to OWASP agentic risks today is the one that passes the audit tomorrow.

Insurance and Compliance Implications

| Signal | Current State | 12-Month Trajectory |
|---|---|---|
| Cyber insurance: agent coverage | Exclusions emerging | Explicit agent-risk riders required |
| Audit standards: agent controls | Ad hoc | Standardized control frameworks |
| Procurement: agent governance | Implied by M-26-04 | Explicit agent governance requirements |
| Liability: agent actions | Unclear allocation | Vendor/deployer responsibility frameworks |
| Board reporting: agent risk | Rare | Standard risk committee item |

Insurance carriers are beginning to add agent-specific exclusions to cyber policies. The logic is straightforward: if an autonomous agent causes a data breach, a financial error, or a compliance violation, the insurer needs to know that controls were in place. The enterprise without the five-control stack will face higher premiums, coverage exclusions, or outright denial. Agent governance is becoming an insurable-risk requirement, not just a best practice.

5. Practical Actions

Action 1: Audit Your Agent Inventory

Before you can govern agents, you need to know what agents exist. Most enterprises cannot answer basic questions:

- How many agents are deployed in production?

- What tools and APIs does each agent have access to?

- Who authorized each agent’s deployment?

- What identity does each agent operate under?

- What is the kill procedure for each agent?

The audit is the prerequisite for every other control. You cannot govern what you cannot see.
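The five questions translate directly into an inventory record. This minimal sketch assumes inventory data is collected into simple records. `AgentRecord`, `inventory_gaps`, the slack-summarizer agent, and the sample field values are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRecord:
    """One inventory row; each field answers one of the questions above."""
    name: str
    in_production: bool
    tools: list = field(default_factory=list)    # tools/APIs the agent can reach
    authorized_by: Optional[str] = None           # who approved the deployment
    identity: Optional[str] = None                # identity the agent runs under
    kill_procedure: Optional[str] = None          # how to halt it


def inventory_gaps(records: list) -> dict:
    """Per agent, list the governance questions that have no answer yet."""
    gaps = {}
    for rec in records:
        missing = [q for q in ("authorized_by", "identity", "kill_procedure")
                   if getattr(rec, q) is None]
        if not rec.tools:                 # an empty tool list is treated as unknown
            missing.append("tools")
        if missing:
            gaps[rec.name] = missing
    return gaps


agents = [
    AgentRecord("invoice-processor", True, ["read_invoice", "validate_amount"],
                authorized_by="finance-ops", identity="agent-invoice-01",
                kill_procedure="runbook KS-3"),
    AgentRecord("slack-summarizer", True),
]
print(sum(a.in_production for a in agents))   # 2
print(inventory_gaps(agents))
# {'slack-summarizer': ['authorized_by', 'identity', 'kill_procedure', 'tools']}
```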

Action 2: Implement Identity-Bound Permissions

| Step | What to Do |
|---|---|
| 1 | Assign unique identities to every agent (no shared service accounts) |
| 2 | Map each agent’s identity to its specific tool/API permissions |
| 3 | Implement credential isolation (Agent A cannot use Agent B’s credentials) |
| 4 | Establish identity lifecycle: provisioning, rotation, revocation |
| 5 | Log every action to its agent identity for attribution |

This is the foundational control. Without identity-bound actions, no other control is auditable.
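Steps 1, 3, and 4 can be sketched as a small credential store, assuming agents authenticate with short-lived bearer tokens. `AgentIdentityStore`, the one-hour TTL, and the agent names other than invoice-processor are hypothetical. Permission mapping and action logging (steps 2 and 5) are omitted for brevity.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Credential:
    agent_id: str
    expires_at: float
    revoked: bool = False


class AgentIdentityStore:
    """Hypothetical store for steps 1, 3 and 4: unique identities, isolated
    credentials, and provisioning, rotation, and revocation."""

    def __init__(self, ttl_s: float = 3600.0) -> None:
        self.ttl_s = ttl_s
        self._by_token: dict = {}   # token -> Credential
        self._active: dict = {}     # agent_id -> its current token

    def provision(self, agent_id: str) -> str:
        if agent_id in self._active:
            raise ValueError(f"{agent_id} already exists; identities are never shared")
        return self._issue(agent_id)

    def rotate(self, agent_id: str) -> str:
        self._revoke_active(agent_id)   # the old token stops working immediately
        return self._issue(agent_id)

    def revoke(self, agent_id: str) -> None:
        self._revoke_active(agent_id)
        self._active.pop(agent_id, None)

    def authenticate(self, token: str, claimed_agent: str,
                     now: Optional[float] = None) -> bool:
        cred = self._by_token.get(token)
        now = time.time() if now is None else now
        # Credential isolation: a token only authenticates the agent it was issued to.
        return (cred is not None and not cred.revoked
                and cred.agent_id == claimed_agent and now < cred.expires_at)

    def _issue(self, agent_id: str) -> str:
        token = secrets.token_urlsafe(32)
        self._by_token[token] = Credential(agent_id, time.time() + self.ttl_s)
        self._active[agent_id] = token
        return token

    def _revoke_active(self, agent_id: str) -> None:
        token = self._active.get(agent_id)
        if token is not None:
            self._by_token[token].revoked = True


store = AgentIdentityStore()
a = store.provision("invoice-processor")
b = store.provision("report-writer")
print(store.authenticate(a, "invoice-processor"))    # True
print(store.authenticate(a, "report-writer"))        # False: A's token cannot act as B
a2 = store.rotate("invoice-processor")
print(store.authenticate(a, "invoice-processor"))    # False: rotated out
print(store.authenticate(a2, "invoice-processor"))   # True
```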

Action 3: Deploy Tool Allowlists with Parameter Constraints

| Configuration | Example |
|---|---|
| Agent: invoice-processor | Tools: read_invoice, validate_amount, route_approval |
| Prohibited | Tools: modify_payment, access_hr_data, send_external_email |
| Parameter constraint | validate_amount: max_value ≤ $50,000 |
| Escalation trigger | Amount > $50,000 → human approval required |

The allowlist is not a recommendation. It’s an architectural constraint. The agent cannot call tools not on its list — not because it’s told not to, but because the execution environment prevents it.
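The invoice-processor configuration above can be expressed as policy data plus a check. This minimal sketch assumes the amount arrives as a keyword argument named `amount`; that parameter name, along with `evaluate` and `amount_limit`, is hypothetical. Outcomes are three-way: allow, escalate to a human, or deny.

```python
from typing import Any, Callable

Check = Callable[[dict], str]   # returns "allow", "escalate", or "deny"


def always_allow(args: dict) -> str:
    return "allow"


def amount_limit(limit: float) -> Check:
    def check(args: dict) -> str:
        amount = args.get("amount")
        if not isinstance(amount, (int, float)):
            return "deny"                 # malformed parameters never pass
        return "allow" if amount <= limit else "escalate"
    return check


# Mirrors the invoice-processor row above; tool names come from the table.
INVOICE_PROCESSOR = {
    "read_invoice": always_allow,
    "validate_amount": amount_limit(50_000),
    "route_approval": always_allow,
}


def evaluate(policy: dict, tool: str, args: dict) -> str:
    check = policy.get(tool)
    if check is None:
        return "deny"                     # not allowlisted, e.g. modify_payment
    return check(args)


print(evaluate(INVOICE_PROCESSOR, "validate_amount", {"amount": 12_500}))   # allow
print(evaluate(INVOICE_PROCESSOR, "validate_amount", {"amount": 80_000}))   # escalate
print(evaluate(INVOICE_PROCESSOR, "modify_payment", {"amount": 10}))        # deny
```

The default is deny: a tool missing from the policy and a parameter that cannot be checked are both refused. Only a well-formed request inside the limit is allowed to proceed.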

Action 4: Require Kill-Switch Capability Before Production

No agent goes to production without:

- Immediate halt mechanism (seconds, not minutes)

- Scope options (single agent, agent class, all agents)

- State preservation for forensic analysis

- Rollback capability where technically feasible

- Automated triggers for defined anomaly patterns

The 60–63% of enterprises without kill-switch capability are one cascading failure away from a manual, ad-hoc incident response that takes hours instead of seconds.
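The five requirements can be enforced as a deployment gate rather than a guideline. This sketch assumes each agent’s deployment manifest declares its kill-switch properties. `KillSwitchSpec`, `production_blockers`, the rollback labels, and the 10-second ceiling (one reading of “seconds, not minutes”) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class KillSwitchSpec:
    """Hypothetical deployment-manifest section describing an agent's kill-switch."""
    halt_latency_s: Optional[float]   # measured in a drill; None if never tested
    scopes: set
    preserves_state: bool
    rollback: str                     # "supported", "partial", or "not_feasible"
    anomaly_triggers: list


REQUIRED_SCOPES = {"agent", "class", "all"}
MAX_HALT_LATENCY_S = 10.0   # assumption: "seconds, not minutes" read as <= 10 s


def production_blockers(spec: KillSwitchSpec) -> list:
    """Return every unmet requirement; an empty list means the agent may ship."""
    blockers = []
    if spec.halt_latency_s is None or spec.halt_latency_s > MAX_HALT_LATENCY_S:
        blockers.append("halt latency untested or too slow")
    if not REQUIRED_SCOPES <= spec.scopes:
        blockers.append(f"missing scopes: {sorted(REQUIRED_SCOPES - spec.scopes)}")
    if not spec.preserves_state:
        blockers.append("no state capture for forensics")
    if spec.rollback not in {"supported", "partial", "not_feasible"}:
        blockers.append("rollback feasibility not documented")
    if not spec.anomaly_triggers:
        blockers.append("no automated anomaly triggers")
    return blockers


spec = KillSwitchSpec(halt_latency_s=3.0, scopes={"agent", "class"},
                      preserves_state=True, rollback="partial",
                      anomaly_triggers=["calls_per_minute > 50"])
print(production_blockers(spec))   # ["missing scopes: ['all']"]
```

Rollback is checked for documented feasibility rather than required outright, matching “where technically feasible”: an explicit `not_feasible` passes, while a missing answer does not.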

Action 5: Map Controls to OWASP Agentic Risks

| OWASP Risk | Required Control | Your Status |
|---|---|---|
| Goal Hijacking | Immutable instruction boundaries + monitoring | ☐ |
| Tool Misuse | Tool allowlists with parameter constraints | ☐ |
| Privilege Compromise | Identity-bound least-agency permissions | ☐ |
| Cascading Failures | Cross-agent circuit breakers + kill-switch | ☐ |
| Prompt Manipulation | Input validation + instruction isolation | ☐ |
| Uncontrolled Actions | Human approval gates for high-risk actions | ☐ |
| Information Leakage | Data classification + cross-boundary controls | ☐ |
| Inadequate Sandboxing | Execution environment isolation | ☐ |
| Supply Chain | Tool/plugin provenance + integrity verification | ☐ |
| Logging Gaps | Immutable audit logs with decision chains | ☐ |

Use this as the assessment template. Every unchecked box is an open risk in production.
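The template can also live as data, so the assessment can be rerun on every deployment instead of being filled in once. In this minimal sketch the dictionary mirrors the table; `open_risks` and the per-risk “verified” flag are assumptions.

```python
# Risk -> required control, copied from the table above.
OWASP_CONTROL_MAP = {
    "Goal Hijacking": "Immutable instruction boundaries + monitoring",
    "Tool Misuse": "Tool allowlists with parameter constraints",
    "Privilege Compromise": "Identity-bound least-agency permissions",
    "Cascading Failures": "Cross-agent circuit breakers + kill-switch",
    "Prompt Manipulation": "Input validation + instruction isolation",
    "Uncontrolled Actions": "Human approval gates for high-risk actions",
    "Information Leakage": "Data classification + cross-boundary controls",
    "Inadequate Sandboxing": "Execution environment isolation",
    "Supply Chain": "Tool/plugin provenance + integrity verification",
    "Logging Gaps": "Immutable audit logs with decision chains",
}


def open_risks(verified: dict) -> list:
    """Every risk whose control is not verified in production stays open."""
    return [f"{risk}: needs {control}"
            for risk, control in OWASP_CONTROL_MAP.items()
            if not verified.get(risk, False)]


gaps = open_risks({"Tool Misuse": True, "Logging Gaps": True})
print(len(gaps))   # 8
print(gaps[0])     # Goal Hijacking: needs Immutable instruction boundaries + monitoring
```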

The Bottom Line

40% of enterprise apps will have agents by the end of 2026. 40%+ of agentic AI projects will be canceled by 2027. 80% of IT pros report agents acting unexpectedly. 87% of agents lack safety cards. Fewer than 10% of enterprises have the full five-control governance stack.

The enterprise agent stack is scaling. The governance stack is not. The gap between the two is where the 40% failure rate lives — in escalating costs from ungoverned tool usage, in compliance violations from unauditable agent actions, in security incidents from agents operating with identities that are “absolutely ungoverned.”

Governed autonomy is not about slowing agents down. It’s about making agent autonomy survivable: identity-bound actions, tool allowlists, immutable logs, human approval gates, and kill-switch capability. Five controls. All five required. The enterprise that deploys agents without this stack is not moving fast — it’s moving uninsured.

The question is not whether your agents can act autonomously. It’s whether you can prove — to auditors, insurers, and regulators — that they were authorized to.

Thorsten Meyer is an AI strategy advisor who has noticed that the fastest way to get an agentic AI project canceled is to deploy it without governance — and the second-fastest way is to wait for the incident that proves the point. More at ThorstenMeyerAI.com.

Sources

- Gartner — 40% Enterprise Apps with Agents by 2026 (Up from <5% in 2024)

- Gartner — 40%+ Agentic AI Projects Canceled by 2027: Escalating Costs, Unclear Value, Risk Controls

- SailPoint — 80% IT Pros: Agents Acting Unexpectedly or Unauthorized (2026)

- Dark Reading — 48% Security Pros: Agentic AI = Top Attack Vector (2026)

- Teleport — 69% Infrastructure Leaders: AI Requires Major Identity Management Changes

- MIT CSAIL — 87% Agents Lack Safety Cards

- OWASP — Top 10 for Agentic Applications (2026)

- OWASP — Principle of Least Agency for Agentic Systems

- The Register — Agent Identities “Absolutely Ungoverned”

- Industry Data — Only 34% Enterprises with AI-Specific Security Controls

- Industry Data — Only 37–40% Have Kill-Switch Capability

- Developer Surveys — 70% Agent Integration Problems

- OMB M-26-04 — Procurement Compliance Applicable to Agent Systems (December 2025)

- EU AI Act — High-Risk Provisions: Autonomous Decision-Making Systems (August 2026)

- EU AI Act Art. 12 — Automatic Event Logging for Agent Traceability

- NIST AI RMF — Govern, Map, Measure, Manage (Agent Extensions Emerging)

- ISO 42001 — AI Management System Certification

- Gartner — Agentic AI Market 44.8% CAGR (2025–2030)

- S&P Global — 42% Scrapped AI Initiatives (2025)

- Deloitte — Enterprise AI Security Controls Assessment

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com