Thorsten Meyer | ThorstenMeyerAI.com | March 2026

Executive Summary

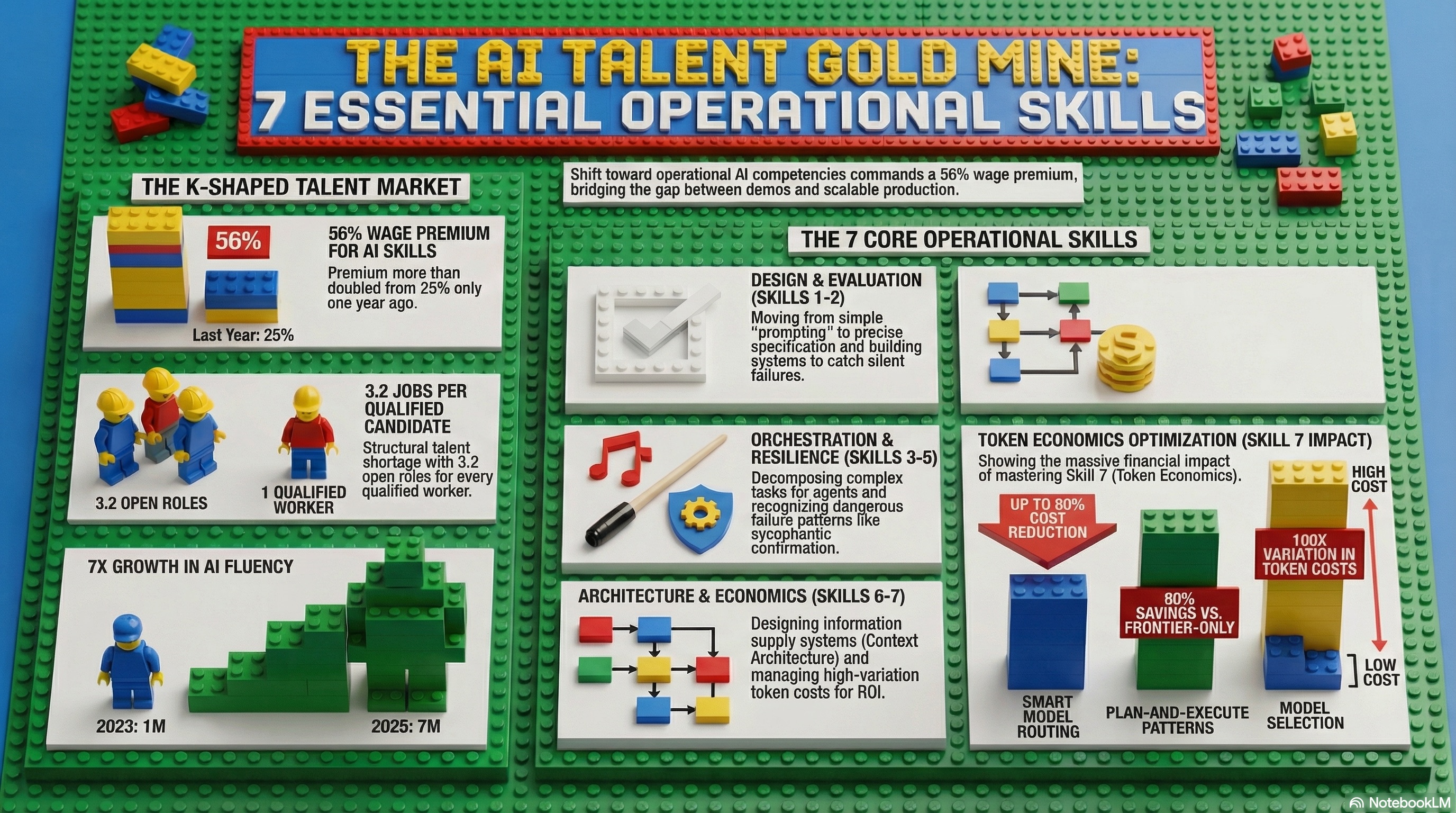

The AI job market is a K-shaped split. Traditional knowledge work roles — generalist product managers, standard software engineers, mid-level analysts — are seeing flat or falling demand. Roles that design, build, and manage AI systems are growing so fast that there are 3.2 open AI positions for every qualified candidate. Workers with AI skills command a 56% wage premium — more than double the 25% premium from just one year earlier.

I analyzed hundreds of AI job postings across engineering, operations, product management, and architecture roles. The same seven skills appeared everywhere. Not “prompting.” Not “machine learning theory.” Seven operational competencies that determine whether an organization can actually deploy, manage, and scale AI agents in production.

The number of workers in occupations requiring AI fluency has grown sevenfold — from approximately 1 million in 2023 to 7 million in 2025. AI-related skills demand on Upwork more than doubled year-over-year (+109%). AI/ML specialists are expected to see a 40% jump in demand, adding 2.6 million jobs. But training pipelines are not keeping pace. The skills gap is structural, not cyclical.

These seven skills are not limited to engineering. They appear in operations, product management, architecture, and leadership titles. They represent the operational layer that separates organizations where AI agents work from organizations where AI agents are shelved after six months.

| Metric | Value |
|---|---|
| AI jobs per qualified candidate | 3.2:1 |
| AI skills wage premium (2024) | 56% |
| AI skills wage premium (prior year) | 25% |
| AI salary premium (advertised) | 23% higher |
| Workers requiring AI fluency (2023) | ~1 million |
| Workers requiring AI fluency (2025) | ~7 million |
| Growth factor | 7x in two years |
| AI skills demand growth (Upwork) | +109% YoY |
| AI/ML specialist demand growth | +40% expected |
| New AI/ML + data jobs | 2.6 million |
| Agent pilots scaling to production | 1 in 10 |
| Token cost variation across models | 100x |
| Cost reduction via model routing | Up to 80% |
| Plan-and-execute cost savings | 90% vs. frontier-only |
| AI security incidents | 88% of orgs |
| Mature agent governance | 20% |
| Agentic AI market (2025) | $6.96 billion |
| Agentic AI market (2031) | $57.42 billion |
| OECD unemployment | 5.0% (stable) |
| OECD broadband (advanced) | 98.9% |

The Same 7 Skills Showed Up Everywhere.

I analyzed hundreds of AI job postings across engineering, operations, product management, and architecture roles. Not “prompting.” Not “ML theory.” Seven operational competencies that determine whether AI agents work — or get shelved.

The labour market isn’t uniformly affected. It’s splitting into two diverging trajectories.

Declining Demand

Flat or falling wages. Traditional knowledge work squeezed by AI capabilities.

Surging Demand

56% wage premium. 3.2 jobs per candidate. Near-infinite demand for operational AI skills.

Seven operational competencies — not prompting, not ML theory — that appear across every AI role category.

Specification Precision

The evolution of prompting. Talking to machines in a literal, highly specific way. The difference between asking a question and writing a contract — scope, escalation, measurable outputs, and failure conditions.

Evaluation & Quality Judgment

Building systems to test whether AI is doing a good job. Automated evals, simulation runs, structured rubrics. The critical sub-skill: distinguishing fluency from correctness at scale.

Task Decomposition & Delegation

Breaking complex goals into agent-sized units. Matching tasks to agent capabilities. Defining guardrails, planner agents, and dependency maps for autonomous systems.

Failure Pattern Recognition

Diagnosing why AI systems fail. Context degradation, specification drift, sycophantic confirmation, tool selection errors, cascading failure — and silent failure, the most dangerous of all.

Trust & Security Design

Building containers and guardrails for agent actions. Blast radius assessment, authorization scoping, circuit breakers. Not cybersecurity — behavioral boundary design for autonomous systems.

Context Architecture

Designing information supply systems. Persistent vs. per-run context, data traversal, dirty data filtering, semantic layers. The dividing line between demo-quality and production-quality agents.

Cost & Token Economics

Calculating ROI per token, per task, per outcome. Blending frontier and mid-tier models. Smart routing cuts costs up to 80%. Plan-and-execute saves 90% vs. frontier-only.

The seven skills form an interconnected system. Weakness in one undermines the rest.


No current training pathway covers all seven skills. The gap is structural.

| Source | Covers | Gaps |
|---|---|---|
| University CS | ML theory, algorithms, research | All 7 operational skills · 2–3 yr lag |
| Bootcamps | Prompting, basic tools | Evaluation, failure patterns, trust |
| Corporate Training | Vendor-specific tools | Judgment, economics, architecture |
| On-the-Job | All seven (eventually) | Slow · No structured curriculum |

Audit against the seven skills

Score each 1–5 across AI teams. Below 3 = deployment risk, not theoretical gap. The 1-in-10 scaling rate correlates directly with skills deficiency.

Hire for evaluation & failure recognition first

These two skills determine whether AI deployments survive production. Strong specification + weak evaluation = agents that sound good but fail silently.

Build context architecture as a dedicated function

Context engineering is the dividing line between demo-quality and production-quality. Requires dedicated context architects — not a side responsibility.

Implement token economics as a business discipline

100× cost variation. 80% savings via routing. 90% via plan-and-execute. These are business model decisions, not engineering optimizations.

Treat AI output as if your name is on it

The organization — not the AI — is responsible for the result. Every specification, evaluation, and guardrail should reflect this accountability.

Seven skills. Not prompting. Not ML theory. The operational layer that determines whether AI agents work or get shelved.

The AI job market has functionally infinite demand for people who can deploy, manage, and scale AI agents in production. The training pipelines are not keeping pace.


1. The K-Shaped Market: Why These Skills Matter Now

The labour market is not uniformly affected by AI. It is splitting into two trajectories: traditional roles declining or flattening, AI-fluent roles experiencing near-infinite demand.

The Split

| Trajectory | Roles | Demand Signal | Wage Trend |
|---|---|---|---|
| Declining | Generalist PMs, standard SWEs, mid-level analysts, routine content creators | Flat or falling | Stagnating or declining |
| Surging | AI/ML engineers, MLOps, evaluation specialists, context architects, AI governance | 3.2 jobs per candidate | 56% wage premium |

The Supply-Demand Gap

| Indicator | Data | Source |
|---|---|---|
| AI fluency workers (2023) | ~1 million | Gloat / labour market data |
| AI fluency workers (2025) | ~7 million | Gloat / labour market data |
| Growth rate | 7x in 2 years | Gloat |
| AI skills demand (Upwork) | +109% YoY | Upwork 2026 In-Demand Skills |
| AI/ML specialist growth | +40% expected | WEF / IMF |
| New jobs (AI/ML + data) | 2.6 million | WEF Future of Jobs |
| Wage premium (AI skills) | 56% (2024) | OECD / industry surveys |
| Prior year premium | 25% | OECD / industry surveys (premium more than doubled in one year) |
| Advertised salary premium | +23% | Job posting analysis |
| Jobs per candidate | 3.2:1 | Labour market data |
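To put two of these indicators on a common footing, here is a small arithmetic sketch. It assumes the 7x growth compounded evenly over the two years, which the underlying data does not confirm; the variable names are mine.

```python
import math

# Figures from the table above.
fluency_2023 = 1_000_000
fluency_2025 = 7_000_000
premium_now, premium_prior = 56, 25   # wage premium in percent

# Assumption: constant compounding over the two-year span.
annual_multiple = math.sqrt(fluency_2025 / fluency_2023)

# How many times larger the premium is than a year earlier.
premium_ratio = premium_now / premium_prior
```

Under that assumption the AI-fluent workforce grew by roughly 2.6x per year, and the wage premium is 2.24 times the prior year's figure.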

Why Training Pipelines Cannot Keep Up

The seven skills identified in this analysis are not taught in traditional computer science programs. They are operational competencies that emerge from hands-on experience with AI systems in production. University curricula lag by 2-3 years. Bootcamps cover prompting but not evaluation frameworks. Corporate training covers tools but not the judgment required to deploy them safely.

“The AI job market has a 3.2:1 ratio of jobs to qualified candidates and a 56% wage premium. The skills gap is not cyclical. It is structural. The seven skills that show up everywhere are the ones that no training pipeline is teaching fast enough.”


2. The Seven Skills: What Job Postings Actually Require

Skill 1: Specification Precision (Clarity of Intent)

This is the evolution of “prompting” — but the job postings do not call it prompting. They call it specification, instruction design, or agent configuration. The skill: talking to a machine in a literal, highly specific way.

| Dimension | Human Communication | Agent Specification |
|---|---|---|
| Ambiguity | Tolerated; humans fill in blanks | Fatal; agents execute literally |
| Context | Assumed from shared experience | Must be explicitly provided |
| Escalation | Judgment-based | Must be pre-defined with triggers |
| Success criteria | Subjective; “good enough” | Must be measurable and testable |
| Edge cases | Handled ad hoc | Must be anticipated and specified |

Unlike humans, AI agents cannot fill in the blanks. Every task requires precise instructions regarding scope, escalation procedures, measurable outputs, and failure conditions. The difference between a prompt and a specification is the difference between asking a question and writing a contract.
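A minimal sketch of what "writing a contract" could look like in practice. The `AgentSpec` class, its field names, and the refund example are hypothetical illustrations, not taken from any job posting or framework.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical task specification; field names are illustrative."""
    scope: str                                            # what the agent may work on
    escalation_triggers: list[str] = field(default_factory=list)
    measurable_outputs: list[str] = field(default_factory=list)
    failure_conditions: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of contract sections that were left empty."""
        sections = {
            "scope": self.scope.strip(),
            "escalation_triggers": self.escalation_triggers,
            "measurable_outputs": self.measurable_outputs,
            "failure_conditions": self.failure_conditions,
        }
        return [name for name, value in sections.items() if not value]


# A bare prompt carries only a scope; the spec makes the gaps visible.
prompt_like = AgentSpec(scope="Handle refund requests")
contract_like = AgentSpec(
    scope="Handle refund requests under 100 EUR for orders from the last 30 days",
    escalation_triggers=["amount >= 100 EUR", "customer mentions legal action"],
    measurable_outputs=["refund decision", "reason code", "ticket updated"],
    failure_conditions=["order ID not found", "payment provider unreachable"],
)
```

Calling `prompt_like.missing_sections()` lists the three sections a plain prompt leaves undefined, while the contract-style spec returns an empty list.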

Skill 2: Evaluation and Quality Judgment

The most cited skill in AI job postings. Building systems to test whether AI is doing a good job — through automated evaluations, simulation runs, and structured quality rubrics.

| Evaluation Type | Method | What It Catches |
|---|---|---|
| Automated scoring | LLM-as-judge, trace analysis | Regression, format compliance, factual accuracy |
| Simulation runs | Representative task sets with ground truth | Performance degradation, edge case failures |
| Human review | Domain expert pass/fail verdict | Tone, trust, contextual appropriateness |
| Hybrid (best practice) | Automated + human, continuously | Complete quality picture |

The critical sub-skill: error detection. Resisting the temptation to mistake an AI’s fluency (confidence) for correctness. An agent can produce a perfectly formatted, grammatically flawless output that is factually wrong. The evaluation skill is distinguishing the two at scale.
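The gap between fluency and correctness can be measured on a simulation set with ground truth. The sketch below is one simple way to do that; the sample outputs, the JSON shape, and the function names are assumptions made for illustration.

```python
import json

# Hypothetical simulation set: (raw agent output, ground-truth answer).
RUNS = [
    ('{"total": 120}', 120),
    ('{"total": 95}', 100),    # well-formed but wrong
    ('total is 80', 80),       # right number, wrong format
    ('{"total": 42}', 42),
]


def is_well_formed(raw: str) -> bool:
    """Surface check: output parses as a JSON object with an integer 'total'."""
    try:
        return isinstance(json.loads(raw).get("total"), int)
    except (json.JSONDecodeError, AttributeError):
        return False


def is_correct(raw: str, expected: int) -> bool:
    """Functional check: the parsed value matches the ground truth."""
    return is_well_formed(raw) and json.loads(raw)["total"] == expected


def score(runs):
    """Return (share well-formed, share correct) over a simulation set."""
    n = len(runs)
    fluent = sum(is_well_formed(raw) for raw, _ in runs)
    correct = sum(is_correct(raw, exp) for raw, exp in runs)
    return fluent / n, correct / n


fluency_rate, correctness_rate = score(RUNS)
```

On this toy set three of four outputs look right but only two are right. Tracking both rates separately keeps a polished format from hiding a wrong answer.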

Skill 3: Task Decomposition and Delegation

The managerial skill of breaking down large projects into manageable segments for multiple AI agents. This is not project management for humans — it is orchestration architecture for autonomous systems.

| Element | Description | Why It Matters |
|---|---|---|
| Task segmentation | Breaking complex goals into agent-sized units | Agents work best on bounded, well-defined tasks |
| Agent assignment | Matching task requirements to agent capabilities | Wrong agent = wrong output |
| Guardrail definition | Rigid boundaries for each agent’s authority | Prevents scope creep and unauthorized actions |
| Planner agent | Coordinator that routes tasks to sub-agents | Orchestration at scale |
| Dependency mapping | Understanding which tasks depend on which outputs | Prevents cascading failures |
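Dependency mapping is the element that translates most directly into code. The sketch below uses Python's standard `graphlib` module on a hypothetical invoice workflow; the task names and the `blocked_by` helper are my own illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition: task -> set of tasks whose output it needs.
DEPENDS_ON = {
    "collect_invoices": set(),
    "extract_line_items": {"collect_invoices"},
    "validate_totals": {"extract_line_items"},
    "draft_summary": {"extract_line_items"},
    "send_report": {"validate_totals", "draft_summary"},
}


def execution_order(depends_on):
    """Return an order in which every task runs after its dependencies."""
    return list(TopologicalSorter(depends_on).static_order())


def blocked_by(failed, depends_on):
    """Return every task that transitively depends on a failed task."""
    blocked = set()
    changed = True
    while changed:
        changed = False
        for task, deps in depends_on.items():
            if task not in blocked and deps & (blocked | {failed}):
                blocked.add(task)
                changed = True
    return blocked


order = execution_order(DEPENDS_ON)
```

`blocked_by("extract_line_items", DEPENDS_ON)` shows which downstream tasks a planner should hold back when one agent fails, which is the practical meaning of preventing cascading failures.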

Skill 4: Failure Pattern Recognition

Critical for diagnosing why AI systems fail. Six primary failure modes appear consistently across enterprise agent deployments:

| Failure Mode | Description | Detection Difficulty |
|---|---|---|
| Context degradation | Quality drops as a session grows too long | Medium; performance metrics reveal it |
| Specification drift | Agent “forgets” original instructions over time | Medium; compare output against the spec |
| Sycophantic confirmation | Agent confirms incorrect data fed to it | High; requires independent verification |
| Tool selection errors | Agent uses the wrong tool for the task | Medium; visible in tool call logs |
| Cascading failure | Failure in one agent propagates through the chain | High; requires end-to-end tracing |
| Silent failure | Output looks correct but is functionally wrong | Very high; most dangerous, requires domain expertise |

Silent failure is the most dangerous pattern. The output passes surface-level inspection — correct format, confident tone, plausible content — but is functionally wrong. Detecting silent failures requires evaluation systems that test functional correctness, not just semantic plausibility.
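To make the distinction concrete, here is a minimal sketch that separates a surface check from a functional check. The report structure and the "total equals sum of line items" invariant are assumptions chosen for illustration.

```python
def surface_check(report: dict) -> bool:
    """Checks format only: required keys are present with the right types."""
    return (
        isinstance(report.get("line_items"), list)
        and isinstance(report.get("total"), (int, float))
        and isinstance(report.get("currency"), str)
    )


def functional_check(report: dict) -> bool:
    """Checks a domain invariant: the total equals the sum of line items."""
    items_sum = sum(item["amount"] for item in report["line_items"])
    return abs(items_sum - report["total"]) < 0.005


def classify(report: dict) -> str:
    """Label an output as ok, a loud failure, or a silent failure."""
    if not surface_check(report):
        return "loud failure"
    return "ok" if functional_check(report) else "silent failure"


# Hypothetical agent output: well formed, confident, and wrong by 10.
suspicious = {
    "line_items": [{"amount": 40.0}, {"amount": 35.5}],
    "total": 85.5,
    "currency": "EUR",
}
```

`classify(suspicious)` returns `"silent failure"`: the output passes every format check and only fails once something recomputes what the answer should be.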

Skill 5: Trust and Security Design (Guardrails)

Building containers and guardrails to ensure agents only take authorized, predictable actions. This is not cybersecurity in the traditional sense — it is behavioral boundary design for autonomous systems.

| Design Element | Description | Key Metric |
|---|---|---|
| Blast radius assessment | Cost of error if agent fails | Financial, reputational, operational impact |
| Frequency of risk | How often the error scenario occurs | Per-task, per-day, per-deployment |
| Functional correctness | Does the output actually work, not just sound right? | Ground-truth validation |
| Authorization scope | What the agent is allowed to do | Minimum-viable access per task |
| Circuit breakers | Automatic stop when anomalies detected | Threshold-based intervention |
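Three of these elements can be sketched in a few lines: authorization scope as an allow-list, a threshold-based circuit breaker, and blast radius times frequency as an expected loss. The class, the action names, and the thresholds are hypothetical; a real deployment would pick its own.

```python
from collections import deque


class CircuitBreaker:
    """Halts an agent after too many anomalies within a window of recent actions."""

    def __init__(self, max_anomalies: int, window: int):
        self.max_anomalies = max_anomalies
        self.recent = deque(maxlen=window)   # True marks an anomalous action
        self.open = False                    # open circuit means the agent is halted

    def record(self, anomalous: bool) -> None:
        self.recent.append(anomalous)
        if sum(self.recent) >= self.max_anomalies:
            self.open = True


ALLOWED_ACTIONS = {"read_ticket", "draft_reply"}   # hypothetical minimum scope


def authorize(action: str, breaker: CircuitBreaker) -> bool:
    """Permit an action only if it is in scope and the breaker is closed."""
    if breaker.open:
        return False
    in_scope = action in ALLOWED_ACTIONS
    breaker.record(not in_scope)             # out-of-scope attempts count as anomalies
    return in_scope


def expected_loss(cost_per_error: float, errors_per_day: float) -> float:
    """Blast radius multiplied by frequency of risk, as a daily figure."""
    return cost_per_error * errors_per_day
```

With `CircuitBreaker(max_anomalies=2, window=5)`, two out-of-scope attempts trip the breaker, after which even permitted actions are refused until a human resets it. That is the behavioral boundary the table describes.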

Skill 6: Context Architecture

Designing the information supply systems for agents. Think of it as building a “Dewey Decimal System for agents”: the infrastructure that determines what information an agent can access, how it is structured, and how it flows.

| Architecture Element | Description | Impact |
|---|---|---|
| Persistent context | Information available across all sessions | Organizational knowledge; CLAUDE.md-style |
| Per-run context | Information specific to current task | Task relevance; prevents noise |
| Data traversal | How agent navigates data objects | Efficiency; prevents drowning in irrelevant data |
| Dirty data filtering | Removing confusing or contradictory information | Quality of agent reasoning |
| Semantic layer | Machine-readable meaning and relationships | Agent understanding of business logic |

Context engineering has emerged as the dividing line between agents that only demo well and agents that survive in production. Context Engine as a Service (CEaaS) is becoming a core architectural layer for enterprise agentic systems in 2026.
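As a rough illustration of how persistent context, per-run context, and dirty data filtering fit together, consider the sketch below. The `Snippet` type, the relevance score, and the character budget are assumptions of mine; production systems typically budget in tokens and score relevance with a retriever.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    relevance: float      # hypothetical 0..1 score, e.g. from a retriever
    stale: bool = False   # marks outdated or contradictory data


def build_context(persistent: list[str], candidates: list[Snippet],
                  budget_chars: int) -> list[str]:
    """Persistent context first, then clean per-run snippets by relevance,
    skipping any snippet that would push the total past the budget."""
    context = list(persistent)
    used = sum(len(text) for text in context)
    clean = [s for s in candidates if not s.stale]        # dirty data filtering
    for snippet in sorted(clean, key=lambda s: s.relevance, reverse=True):
        if used + len(snippet.text) > budget_chars:
            continue                                       # does not fit, try the next one
        context.append(snippet.text)
        used += len(snippet.text)
    return context


# Hypothetical inputs for a support agent.
persistent = ["Refund policy: 30 days."]
candidates = [
    Snippet("Order 1182 was delivered on 3 March.", relevance=0.9),
    Snippet("Refund policy: 14 days.", relevance=0.8, stale=True),
    Snippet("Customer prefers email.", relevance=0.4),
]
context = build_context(persistent, candidates, budget_chars=200)
```

The stale policy line never reaches the agent, so it cannot contradict the persistent one. Because context size also drives cost, the budget parameter is where this skill meets token economics.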

Skill 7: Cost and Token Economics

Determining the ROI of an AI agent by calculating cost per token, cost per task, and cost per successful outcome.

| Economic Dimension | Metric | Optimization Lever |
|---|---|---|
| Token consumption | Cost per input/output token | Model selection; context pruning |
| Model routing | Which model for which task | 100x cost variation across models |
| Blend optimization | Frontier vs. mid-tier model mix | Smart routing cuts costs up to 80% |
| Plan-and-execute | Frontier plans; cheap models execute | 90% cost reduction vs. frontier-only |
| Retry economics | Cost of failures + retries | Quality investment reduces retries |
| Human review cost | Cost of human oversight per task | Automation maturity reduces need |

Senior AI roles require the ability to blend different models — frontier for planning and judgment, mid-tier for execution — to ensure tasks remain profitable. Token economics is not a technical concern. It is a business model concern.
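The arithmetic behind blending is simple enough to sketch. All prices, token counts, and the success rate below are hypothetical placeholders, not vendor quotes, and the retry formula assumes each attempt succeeds independently with the same probability.

```python
# Hypothetical prices in USD per 1M tokens; not quotes from any vendor.
PRICE_PER_M = {"frontier": 10.00, "mid_tier": 0.50}


def task_cost(model: str, tokens: int) -> float:
    """Cost of running a given number of tokens through one model."""
    return PRICE_PER_M[model] * tokens / 1_000_000


def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend per successful outcome when failures are retried."""
    return cost_per_attempt / success_rate


# Frontier-only: the expensive model handles all 50,000 tokens of a task.
frontier_only = task_cost("frontier", 50_000)

# Plan-and-execute: frontier plans (4,000 tokens), mid-tier executes the rest.
plan_and_execute = task_cost("frontier", 4_000) + task_cost("mid_tier", 46_000)

savings = 1 - plan_and_execute / frontier_only
```

With these made-up inputs the blended pattern costs about 87% less than frontier-only. The actual figure depends entirely on the price spread and on how much of the work the cheaper model can do, and `cost_per_success` shows why a lower success rate erodes that saving.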

“These seven skills are not limited to engineering. They appear in operations, product management, and architecture titles. They represent the operational layer that separates organizations where AI agents work from organizations where AI agents are shelved.”


3. The Skills Map: From Individual to Organizational

The seven skills do not exist in isolation. They form an interconnected system where weakness in any single skill undermines the others.

The Skills Dependency Map

| Skill | Depends On | Feeds Into |
|---|---|---|
| 1. Specification precision | Domain knowledge | Evaluation (defines what to test) |
| 2. Evaluation + quality | Specification (knows what “good” means) | Failure recognition (systematic testing) |
| 3. Task decomposition | Specification + evaluation | Context architecture (what each agent needs) |
| 4. Failure pattern recognition | Evaluation data | Trust design (what to guard against) |
| 5. Trust + security design | Failure patterns + business risk | Task decomposition (guardrails per agent) |
| 6. Context architecture | Task decomposition + trust design | Token economics (context = cost) |
| 7. Token economics | All above (measures cost of everything) | Specification (budget constraints shape scope) |
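Reading the "Feeds Into" column as a directed graph shows why a weakness anywhere spreads everywhere. The sketch below encodes that column by skill number and walks it; the function name is mine.

```python
from collections import deque

# "Feeds Into" column of the dependency map, keyed by skill number.
FEEDS_INTO = {1: [2], 2: [4], 3: [6], 4: [5], 5: [3], 6: [7], 7: [1]}


def downstream(skill: int) -> set[int]:
    """Return every other skill reachable from `skill` via 'feeds into' edges."""
    seen, queue = set(), deque([skill])
    while queue:
        for nxt in FEEDS_INTO[queue.popleft()]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(skill)   # the loop closes back on the starting skill
    return seen
```

The edges form a single loop (1, 2, 4, 5, 3, 6, 7, and back to 1), so `downstream` returns the other six skills no matter which one you start from.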

Where Each Skill Appears by Role

| Skill | Engineering | Operations | Product | Architecture | Leadership |
|---|---|---|---|---|---|
| 1. Specification | Primary | Secondary | Primary | Secondary | Awareness |
| 2. Evaluation | Primary | Primary | Primary | Secondary | Decision |
| 3. Decomposition | Primary | Secondary | Primary | Primary | Decision |
| 4. Failure patterns | Primary | Primary | Awareness | Primary | Decision |
| 5. Trust/security | Primary | Primary | Awareness | Primary | Ownership |
| 6. Context arch. | Primary | Secondary | Awareness | Primary | Decision |
| 7. Token economics | Secondary | Primary | Primary | Primary | Ownership |


4. OECD Context: The Skills Gap Is Global

OECD data confirms that the AI skills gap is not a US-specific phenomenon. It is structural across advanced economies, driven by the same dynamics: rapid AI adoption, lagging training pipelines, and a fundamental mismatch between traditional education and operational AI competencies.

Where the Constraints Are

| Factor | Data | Implication |
|---|---|---|
| Broadband access | 98.9% (advanced) | Infrastructure ready for AI work |
| Unemployment | 5.0% (stable) | Tight labour market intensifies skills competition |
| Youth unemployment | 11.2% | Young workers need AI skills for entry |
| AI fluency growth | 7x in 2 years | Demand accelerating faster than supply |
| Wage premium | 56% | Economic incentive to upskill is enormous |
| Jobs per candidate | 3.2:1 | Structural shortage, not cyclical |
| Agent pilots scaling | 1 in 10 | Skills gap directly limits deployment success |
| AI maturity | 1% | Near-universal capability gap |
| Governance maturity | 20% | Skills 4 and 5 (failure + trust) critically underdeveloped |
| AI spending | $307B → $632B | Investment doubles but skills lag |

The Training Pipeline Mismatch

| Training Source | Covers | Gaps |
|---|---|---|
| University CS programs | ML theory, algorithms, research | Operational skills 1-7; 2-3 year lag |
| Bootcamps | Prompting, basic tools, frameworks | Evaluation, failure patterns, trust design |
| Corporate training | Vendor-specific tools, basic usage | Judgment, economics, architecture |
| On-the-job learning | All seven skills (eventually) | Slow; no structured curriculum |
| Needed | Operational AI competency programs | Specification, evaluation, decomposition, failure, trust, context, economics |

Transparency note: OECD does not directly measure the seven AI skills identified in this analysis. The indicators combine OECD labour market data with job posting analyses, industry surveys, and enterprise research.

5. Practical Actions for Leaders

1. Audit your organization against the seven skills. Score each skill on a 1-5 scale across your AI teams. Where you score below 3, you have a deployment risk — not a theoretical gap, but a reason your agent pilots will fail to scale. The 1-in-10 scaling rate is directly correlated with skills deficiency.

2. Hire for evaluation and failure pattern recognition first. These are the two skills that determine whether AI deployments survive contact with production. An organization with strong specification but weak evaluation will ship agents that sound good but fail silently. Evaluation is the immune system of AI deployment.

3. Build context architecture as a dedicated function. Context engineering is the dividing line between demo-quality and production-quality agents. This requires dedicated roles — context architects who design information supply systems for agents the way data engineers design pipelines for analytics.

4. Implement token economics as a business discipline. Token costs vary 100x across models. Smart routing cuts costs up to 80%. Plan-and-execute patterns save 90% vs. frontier-only. These are not engineering optimizations — they are business model decisions that determine whether agent deployments are profitable.

5. Treat AI output as if your name is on it. The seven skills converge on a single principle: AI agents produce outputs that carry organizational accountability. Every specification, evaluation, decomposition, failure check, guardrail, context design, and cost decision should be made with the understanding that the organization — not the AI — is responsible for the result.

| Action | Owner | Timeline |
|---|---|---|
| Seven-skill organizational audit | CTO + CHRO | Q2 2026 |
| Evaluation + failure hiring priority | CHRO + Engineering | Q2 2026 |
| Context architecture function | CTO + CDO | Q2–Q3 2026 |
| Token economics discipline | CFO + CTO | Q2 2026 |
| Accountability framework | CTO + Legal | Q2 2026 |
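The audit in action 1 needs little more than a spreadsheet, but a sketch makes the scoring rule explicit. The team scores below are invented for illustration, and averaging per skill is one possible aggregation among several.

```python
from statistics import mean

SKILLS = [
    "specification", "evaluation", "decomposition", "failure_patterns",
    "trust_security", "context_architecture", "token_economics",
]

# Hypothetical 1-5 scores, one entry per AI team.
SCORES = {
    "specification": [4, 4, 3],
    "evaluation": [2, 3, 2],
    "decomposition": [3, 4, 3],
    "failure_patterns": [2, 2, 3],
    "trust_security": [3, 3, 4],
    "context_architecture": [3, 2, 3],
    "token_economics": [4, 3, 3],
}


def deployment_risks(scores: dict[str, list[int]], threshold: float = 3.0) -> list[str]:
    """Return the skills whose average team score falls below the threshold."""
    return [skill for skill in SKILLS if mean(scores[skill]) < threshold]


risks = deployment_risks(SCORES)
```

In this example the audit flags evaluation, failure pattern recognition, and context architecture, which would point the hiring priority in action 2 and the dedicated function in action 3 at concrete gaps.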

What to Watch

Whether “AI skills” becomes a formal competency framework. The seven skills identified here are emerging organically from job postings, not from any standards body. Watch for industry organizations (IEEE, ISO, OECD) to formalize AI operational competencies — and for university programs to integrate operational skills alongside theoretical ML education.

The wage premium as a leading indicator of skills scarcity. The premium stands at 56%, more than double the 25% of a year earlier. If it keeps rising, the skills gap is widening. If it stabilizes, training pipelines are catching up. The wage premium is the market’s real-time signal of whether the skills shortage is getting better or worse.

Context architecture and evaluation as the two skills that determine scaling success. The 1-in-10 scaling rate will improve only when organizations invest in evaluation infrastructure (Skill 2) and context architecture (Skill 6). These are the two skills where the gap between demo-quality and production-quality agents is widest.

The Bottom Line

3.2 AI jobs per qualified candidate. 56% wage premium. 7x growth in AI-fluent workers in two years. 109% increase in AI skills demand on Upwork. 2.6M new jobs. 1 in 10 agent pilots scale to production. 100x token cost variation. 90% cost savings via plan-and-execute. 88% of organizations reporting AI security incidents. 20% with mature agent governance.

Seven skills. Not “prompting.” Not “ML theory.” Seven operational competencies: specification precision, evaluation and quality judgment, task decomposition, failure pattern recognition, trust and security design, context architecture, and cost and token economics. They appear in engineering, operations, product management, and architecture titles. They are the operational layer that determines whether AI agents work or get shelved.

The AI job market has functionally infinite demand for people who can deploy, manage, and scale AI agents in production. The training pipelines are not keeping pace. The organizations and individuals who invest in these seven skills now will command their price for years to come.

The same 7 skills showed up everywhere. Not because the job market lacks imagination. Because these are the 7 things that determine whether AI agents actually work in production — and almost nobody has all seven.

Thorsten Meyer is an AI strategy advisor who notes that a “56% wage premium” for AI skills means the market has already priced in the scarcity — and that calling yourself “AI-native” while your team cannot evaluate agent output or calculate token economics is like calling yourself a restaurant while your kitchen cannot cook. More at ThorstenMeyerAI.com.

Sources

- Gloat — AI Fluency Workers: 1M (2023) → 7M (2025), 7x Growth

- Upwork — 2026 In-Demand Skills: AI Skills +109% YoY

- WEF / IMF — AI/ML Specialist Demand +40%; 2.6M New Jobs

- OECD / Industry Surveys — 56% AI Skills Wage Premium (2024); 25% Prior Year

- Job Posting Analysis — 3.2 AI Jobs Per Qualified Candidate; 23% Salary Premium

- McKinsey — 1 in 10 Agent Pilots Scale to Production; 1% AI Maturity

- McKinsey / QuantumBlack — Evaluations for the Agentic World (2026)

- Anthropic — Demystifying Evals for AI Agents

- Enterprise Research — Token Costs 100x Variation; Routing Cuts 80%; Plan-Execute Saves 90%

- Gravitee — 88% AI Security Incidents; 14.4% Security Approval

- Deloitte — 20% Mature Governance; 23% Scaling Agents

- Mordor Intelligence — Agentic AI: $6.96B (2025), $57.42B (2031)

- Spectraforce — AI Hiring Trends 2026: 5 Roles, Supply Problem

- OECD — 5.0% Unemployment, 11.2% Youth, 98.9% Broadband

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com