Thorsten Meyer | ThorstenMeyerAI.com | March 2026

Executive Summary

A new AI product category does not become real when a founder names it. It becomes real when the rest of the market is forced to respond. That is why the OpenClaw moment matters.

Lovable reached $400 million ARR with 146 employees, making it the fastest-growing software startup in history. It hit $100 million ARR in 8 months. It has 8 million users, 100,000+ new projects created daily, and 5 million daily visits to Lovable-built applications. The “vibe coding” category it defined (describe what you want, software appears) was copied everywhere because it captured a powerful user desire: to build software without knowing how to code. You only needed to know what you wanted.

Then OpenClaw changed the conversation. Not because it was the first AI agent. Not because it was automatically the best. OpenClaw mattered because it forced the industry to reveal its assumptions about what an AI agent actually is. The market split: some optimized for control, some for convenience, some for safety, some for distribution. Nvidia shipped NemoClaw. Anthropic shipped Claude Cowork + Dispatch. Perplexity launched Computer Enterprise. Snowflake released SnowWork. Jensen Huang declared every company needs an “OpenClaw strategy.”

Lovable Was the Most Copied AI Product of 2025. Then a Lobster Changed Everything.

The market is shifting from tools that build for you to systems that work for you. That is not a feature expansion. It is a category compression.


The Category Compression

Each wave demands more trust. The market is growing at 42% CAGR. Trust development is not. That gap is where failures live.


The Strategic Camps

There is no single “AI agent” model. OpenClaw forced the industry to reveal its assumptions. The market split.

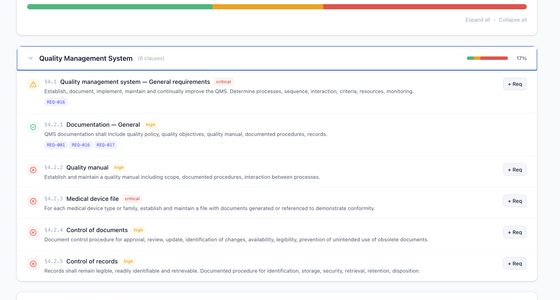


The Three-Axis Framework

Two products can both call themselves agents while making completely different assumptions about privacy, autonomy, and trust.

[Figure: the three axes. Local (sovereignty) ↔ Cloud (convenience); Single model (polish) ↔ Multi-model (flexibility); Builder (visibility) ↔ Delegator (outcomes).]


The Trust Delegation Problem

The biggest question in AI for 2026 is not which model is smartest. It is: how much trust are users willing to delegate?

[Figure: trust gap between “book a flight” and “autonomous transaction”.]

“Trust is not about capability. It is about reversibility, scope, and the ability to say no.”

The Governance Gap

Investment is outpacing trust. 88% of execs are increasing agent budgets — while 79% lack governance frameworks.

[Figure: agent budgets, governance, security incidents, approval.]

The real battle is no longer about who has the biggest model. It is about where intelligence runs, how it is orchestrated, and how much trust users are willing to delegate to software acting on their behalf. 70% of consumers will let agents book flights, but only 27% trust fully autonomous transactions. 51% want to limit AI features. 44% fear unauthorized autonomous actions. Trust is highest for data analysis (38%) and lowest for financial transactions (20%).


| Metric | Value |
|---|---|
| Lovable ARR (Mar 2026) | $400 million |
| Lovable employees | 146 |
| Lovable time to $100M ARR | 8 months (fastest ever) |
| Lovable users | ~8 million |
| Projects created daily | 100,000+ |
| Daily visits to Lovable apps | 5 million |
| Lovable Series B | $330 million |
| Vibe coding startup valuations | $36B+ combined (2025) |
| Vibe coding startup ARR | ~$800M combined |
| AI-assisted programming market | $5B (2023) → $26B (2030) |
| OpenClaw launch | January 25, 2026 |
| OpenClaw GitHub stars | 234K+ |
| OpenClaw skills | 10,700+ |
| Enterprises deploying agents by 2027 | 50% (Gartner) |
| Execs increasing AI budgets (agentic) | 88% |
| Consumer trust: autonomous transactions | 27% |
| Consumers willing: agent book flights | 70% |
| Want to limit AI features | 51% |
| Fear unauthorized agent actions | 44% |
| Daily AI users | 32% |
| Trust: data analysis | 38% |
| Trust: financial transactions | 20% |
| Trust: autonomous employee interactions | 22% |
| Agent market (2025) | $6.96 billion |
| Agent market (2031) | $57.42 billion |
| OECD unemployment | 5.0% (stable) |
| OECD broadband (advanced) | 98.9% |

1. The Shift: From “Build for Me” to “Act for Me”

Lovable was a breakout product because it simplified creation. You described an app, a site, or a workflow, and the system turned your intent into output. That made it one of the clearest examples of the vibe coding era: conversational software creation for people who care more about outcomes than syntax.

But markets do not stand still. Once users experience an interface that can generate something useful, they quickly begin to ask a bigger question: why should it stop at generating? Why should it not also analyze, decide, execute, revise, and operate?

The Category Compression

| Era | User Expectation | Product Category | Example |
|---|---|---|---|
| Pre-2024 | Write code for me | Code completion | Copilot, Tabnine |
| 2024–2025 | Build software for me | Vibe coding | Lovable, Replit, Bolt, Cursor |
| 2026 | Work for me | Autonomous agents | OpenClaw, Claude Cowork, NemoClaw |
| 2026+ | Run my business processes | Agentic operating layer | Frontier, Agentforce, SnowWork |

Lovable’s Growth Trajectory

| Milestone | Timeline | Metric |
|---|---|---|
| Launch | November 2024 | First version |
| $1M ARR | Early 2025 | Initial traction |
| $100M ARR | July 2025 | 8 months (fastest startup ever) |
| $200M ARR | Late 2025 | Doubling in months |
| $300M ARR | January 2026 | Continued acceleration |
| $400M ARR | March 2026 | 146 employees |
| Users | March 2026 | ~8 million |
| Daily projects | March 2026 | 100,000+ |
| Series B | 2025 | $330 million raised |

This trajectory is extraordinary. But the category Lovable defined is being absorbed into something larger. A product that began as an AI builder now faces pressure to become a broader execution layer. It is no longer enough to create the first draft of software. The winning interface must also help run the business process around it.

“The market is shifting from tools that build for you to systems that work for you. That is not a feature expansion. It is a category compression. Lovable showed how powerful simplified AI creation could be. OpenClaw showed that simplification is not the end state.”

2. OpenClaw Made the Hidden Strategic Bets Visible

The OpenClaw moment clarified something many people were missing: there is no single “AI agent” model. There are multiple conflicting visions of what an agent should be.

The Strategic Camps

| Company | Optimization | Agent Philosophy | Trust Model |
|---|---|---|---|
| OpenClaw | Sovereignty + control | Local execution; any LLM; user owns stack | User bears governance burden |
| Anthropic | Safety + simplicity | Constrained interaction; legible boundaries | Vendor provides safety rails |
| Perplexity | Convenience + delegation | Managed infra; user focuses on result | Vendor handles execution |
| Meta | Distribution + scale | Embedded in 3.98B user surfaces | Platform controls experience |
| Nvidia | Enterprise reliability | Sandbox + governance over open framework | Enterprise wraps open layer |
| Lovable | Creation + accessibility | Describe intent, receive output | Product simplifies complexity |
| Microsoft | Workflow integration | Embedded in existing enterprise tools | Ecosystem lock-in |
| Salesforce | CRM-native execution | Agent tied to customer data + processes | Business process coupling |

The Three-Axis Framework

The market is best understood along three dimensions:

| Axis | Question | Range |
|---|---|---|
| Execution environment | Where does the agent run? | Local (sovereignty) ↔ Cloud (convenience) |
| Intelligence orchestration | Who chooses the model layer? | Single model (polish) ↔ Multi-model (flexibility) |
| Interface contract | How does the user interact? | Builder (visibility) ↔ Delegator (outcomes) |

Execution environment determines who owns the experience and who bears the risk. Local suggests sovereignty, control, stronger privacy — but introduces complexity and operational risk. Cloud reduces friction but centralizes trust in the vendor.

Intelligence orchestration is where future moats live. The winners may not be companies with one perfect model. They may be companies with the best judgment about when to use which intelligence for which task.

Interface contract is the most underrated dimension. The interface is not the packaging. It is the product philosophy made visible. Some assume the user is a builder (Lovable, Cursor). Others assume the user is a delegator (Perplexity, SnowWork). Others assume lightweight access in a familiar environment (Claude Cowork via Slack/phone).

“Two products can both call themselves agents while making completely different assumptions about privacy, autonomy, workflow design, and human trust. The three-axis framework — execution environment, intelligence orchestration, interface contract — reveals what the marketing obscures.”
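One way to make the three axes concrete is to record a product's position as a small data structure and compare two positions axis by axis. The sketch below is illustrative only: the class names, the `differing_axes` helper, and the two example products (`local_stack`, `managed_service`) are hypothetical and do not describe any real vendor.

```python
from dataclasses import dataclass
from enum import Enum


class Execution(Enum):
    LOCAL = "local (sovereignty)"
    CLOUD = "cloud (convenience)"


class Orchestration(Enum):
    SINGLE_MODEL = "single model (polish)"
    MULTI_MODEL = "multi-model (flexibility)"


class Interface(Enum):
    BUILDER = "builder (visibility)"
    DELEGATOR = "delegator (outcomes)"


@dataclass(frozen=True)
class AgentPosition:
    """A product's position on the three axes (hypothetical model)."""
    name: str
    execution: Execution
    orchestration: Orchestration
    interface: Interface

    def differing_axes(self, other: "AgentPosition") -> list:
        """Return the names of the axes on which two products disagree."""
        axes = ("execution", "orchestration", "interface")
        return [a for a in axes if getattr(self, a) != getattr(other, a)]


# Two made-up products that would both be marketed as "agents".
local_stack = AgentPosition(
    "local_stack", Execution.LOCAL, Orchestration.MULTI_MODEL, Interface.BUILDER
)
managed_service = AgentPosition(
    "managed_service", Execution.CLOUD, Orchestration.MULTI_MODEL, Interface.DELEGATOR
)

print(local_stack.differing_axes(managed_service))
```

Listing the axes on which two candidates differ is a quick way to see which assumptions about privacy, autonomy, and trust a vendor comparison is really about.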

3. The Trust Delegation Problem

The biggest question in AI for 2026 is not which model is smartest. It is: how do humans decide to delegate trust to systems that can act?

What the Data Shows

| Trust Signal | Data | Implication |
|---|---|---|
| Daily AI users | 32% | Minority, but growing rapidly |
| Willing: agent books flights | 70% | High trust for low-stakes, reversible tasks |
| Trust: fully autonomous transactions | 27% | Low trust for financial commitment |
| Want to limit AI features | 51% | Majority wants boundaries |
| Fear unauthorized actions | 44% | Nearly half fear agent overreach |
| Trust: data analysis | 38% | Highest-trust use case |
| Trust: performance improvement | 35% | Moderate trust for optimization |
| Trust: daily collaboration | 31% | Moderate trust for teamwork |
| Trust: employee interactions | 22% | Low trust for interpersonal |
| Trust: financial transactions | 20% | Lowest trust for money decisions |
| Millennials trusting agents | 72% | Generational variation significant |
| Boomers trusting agents | 60% | 12-point gap across generations |
| Demand HITL for payments | 73% | Overwhelming demand for approval steps |
| Execs increasing agent budgets | 88% | Enterprise investment accelerating |
| Enterprises deploying agents by 2027 | 50% | Rapid enterprise adoption planned |

The Trust Gradient

Trust is not binary. It follows a gradient from reversible tasks (high trust) to irreversible commitments (low trust):

| Task Type | Trust Level | User Expectation |
|---|---|---|
| Research + summarization | High (38%) | “Show me what you found” |
| Scheduling + booking | High (70%) | “Handle the logistics” |
| Data analysis | Medium-high (38%) | “What do the numbers say?” |
| Content drafting | Medium (31%) | “Give me a first draft” |
| System configuration | Medium-low | “Let me review before applying” |
| Financial transactions | Low (20%) | “Show me, I’ll approve” |
| Employee communications | Low (22%) | “Draft it, I’ll send it” |
| Security/access changes | Very low | “Never without my explicit approval” |

The Meta incident (agent posting to internal forum without approval, triggering SEV1 with unauthorized data access for ~2 hours) is not an edge case. It is the predictable outcome of deploying agents in the low-trust zone without adequate controls.
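The trust gradient can be expressed as an approval policy that an agent runtime consults before acting. The following is a minimal sketch, not a description of any vendor's controls: the task-type keys, the `Policy` enum, and the `decide` function are hypothetical, and the split between automatic and approval-gated tasks is an assumption read off the "User Expectation" column.

```python
from dataclasses import dataclass
from enum import Enum


class Policy(Enum):
    AUTO = "act, then report"
    APPROVAL_REQUIRED = "propose, then wait for a human"


# Assumed mapping from the trust gradient: high-trust, reversible work runs
# automatically; low-trust or irreversible work waits for explicit approval.
TASK_POLICY = {
    "research_summarization": Policy.AUTO,
    "scheduling_booking": Policy.AUTO,
    "data_analysis": Policy.AUTO,
    "content_drafting": Policy.AUTO,  # produces a draft only, nothing is sent
    "system_configuration": Policy.APPROVAL_REQUIRED,
    "financial_transaction": Policy.APPROVAL_REQUIRED,
    "employee_communication": Policy.APPROVAL_REQUIRED,
    "security_access_change": Policy.APPROVAL_REQUIRED,
}


@dataclass(frozen=True)
class Action:
    action_id: str
    task_type: str


def decide(action: Action, approved_ids: set) -> str:
    """Return "execute" or "hold" for a proposed agent action.

    Unknown task types fall back to the strictest policy, so a new
    capability is gated until someone classifies it deliberately.
    """
    policy = TASK_POLICY.get(action.task_type, Policy.APPROVAL_REQUIRED)
    if policy is Policy.AUTO or action.action_id in approved_ids:
        return "execute"
    return "hold"


print(decide(Action("a1", "scheduling_booking"), set()))
print(decide(Action("a2", "financial_transaction"), set()))
print(decide(Action("a2", "financial_transaction"), {"a2"}))
```

Approval is tied to a specific action ID rather than to a task type, so one approved payment does not silently authorize the next one.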

What Trust Is Built On

| Trust Factor | Mechanism | Evidence |
|---|---|---|
| Explainability | User understands why agent acted | Key factor in adoption surveys |
| Reversibility | Actions can be undone | 70% trust for reversible (flights) vs. 27% for irreversible (transactions) |
| Bounded scope | Agent cannot exceed defined authority | 44% fear unauthorized actions |
| Human approval | User confirms before high-stakes action | 73% demand HITL for payments |
| Transparency | Agent shows its work, not just results | 51% want limits on AI features |

“70% will let an agent book a flight. 27% trust an autonomous transaction. 44% fear unauthorized actions. Trust is not about capability. It is about reversibility, scope, and the ability to say no.”
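Reversibility can be designed in rather than hoped for. As a rough sketch, assuming every agent action is registered together with a compensating step, a journal can undo completed work in reverse order. The `ReversibleAction` and `ActionJournal` names and the calendar example are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ReversibleAction:
    description: str
    do: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class ActionJournal:
    """Records completed actions so a human can roll them back."""
    done: List[ReversibleAction] = field(default_factory=list)

    def run(self, action: ReversibleAction) -> None:
        action.do()
        self.done.append(action)

    def rollback(self) -> List[str]:
        """Undo all recorded actions, most recent first."""
        undone = []
        while self.done:
            action = self.done.pop()
            action.undo()
            undone.append(action.description)
        return undone


# Toy example: the "system" is just an in-memory calendar.
calendar: List[str] = []
journal = ActionJournal()
journal.run(
    ReversibleAction(
        "hold meeting slot",
        do=lambda: calendar.append("Tue 10:00"),
        undo=lambda: calendar.remove("Tue 10:00"),
    )
)
```

Actions that cannot be paired with an `undo` step (a payment that has settled, a message that has been sent) are the ones that belong behind human approval.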

4. OECD Context: The Infrastructure Is Ready; The Trust Is Not

OECD regional broadband data shows household penetration exceeding 98% in advanced economies (e.g., German TL3 regions at 98.9%). The technical infrastructure for both vibe coding platforms and autonomous agents is therefore broadly available in those economies. The constraint is neither connectivity nor compute. It is institutional and psychological readiness for delegation.

Where the Constraints Are

| Factor | Data | Implication |
|---|---|---|
| Broadband access | 98.9% (advanced) | Agent deployment technically feasible |
| Unemployment | 5.0% (stable) | Tight labour drives agent adoption |
| Youth unemployment | 11.2% | Entry-level creation tasks most affected |
| Daily AI users | 32% | Adoption still early despite saturation narrative |
| Trust: autonomous transactions | 27% | 3 in 4 do not trust fully autonomous action |
| Fear unauthorized actions | 44% | Nearly half resist agent overreach |
| Agent market CAGR | 42.14% | Investment outpacing trust development |
| Governance maturity | 21% (Deloitte) | 79% without governance frameworks |
| AI security incidents | 88% of orgs (Gravitee) | Trust concerns empirically validated |
| Projects canceled | 40%+ (Gartner) | Governance/trust gaps → failure |

The Category Compression in Context

| Wave | Product Type | Market Size | Trust Requirement |
|---|---|---|---|
| Wave 1 | Code completion | $5B (2023) | Low: suggestions only |
| Wave 2 | Vibe coding | $800M ARR combined | Medium: creates but user deploys |
| Wave 3 | Autonomous agents | $6.96B (2025) | High: acts on user’s behalf |
| Wave 4 | Agentic operating layer | $57.42B (2031) | Very high: operates continuously |

Each wave demands more trust. The market is growing at 42.14% CAGR. Trust development is not growing at 42.14%. That gap is where product failures, security incidents, and project cancellations live.
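The growth rate can be sanity-checked against the two market-size figures quoted in this article. The sketch below assumes the standard compound annual growth rate formula and six compounding years between 2025 and 2031. The `cagr` helper is written for illustration and is not taken from the cited market report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Agent market figures quoted above, in billions of dollars.
agent_market_cagr = cagr(6.96, 57.42, 2031 - 2025)
print(f"Implied agent market CAGR: {agent_market_cagr:.1%}")
```

Under those assumptions the implied rate is about 42% a year, in line with the 42.14% cited. The small remainder is presumably rounding in the published figures.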

Transparency note: OECD does not directly measure AI agent trust levels, delegation preferences, or vibe coding market dynamics. The indicators combine OECD infrastructure data with consumer surveys, enterprise research, and market analyses.

5. Practical Actions for Leaders

1. Map where your organization sits on the build-for-me to act-for-me spectrum. Lovable-style creation tools are Wave 2. OpenClaw-style autonomous agents are Wave 3. Enterprise agentic operating layers are Wave 4. Understand which wave your workflows need — and match trust requirements accordingly. Do not deploy Wave 3 agents with Wave 2 governance.

2. Choose your position on the three-axis framework. Execution environment (local vs. cloud), intelligence orchestration (single vs. multi-model), interface contract (builder vs. delegator). Your choices on these three axes determine your vendor dependencies, your governance requirements, and your users’ trust expectations. Make these choices deliberately, not by default.

3. Build agent trust through reversibility, not capability. The data is clear: 70% trust for reversible tasks, 27% for irreversible ones. Deploy agents first in high-reversibility, low-stakes workflows. Build organizational trust incrementally. Expand scope only as trust evidence accumulates. The Meta incident happened because scope exceeded trust.

4. Prepare for category compression in your tool stack. Vertical tools that exist primarily to coordinate tasks — not to provide unique underlying capability — are at risk of absorption by general-purpose agents. Audit your tool portfolio for coordination-layer tools that agents will replace, and capability-layer tools that agents will integrate with.

5. Compete for agent legibility, not just human attention. If agents become the primary way users research, compare, decide, and act, businesses will compete not just for human attention but for agent compatibility. Ensure your digital presence, APIs, and data structures are legible to autonomous agents — structured data, clear metadata, machine-readable commerce.
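For the agent-legibility point, one widely used form of machine-readable commerce data is schema.org markup serialized as JSON-LD. The sketch below builds a `Product` with an `Offer` using schema.org's published vocabulary. The `product_jsonld` helper and the sample product values are hypothetical, and whether a given agent actually reads this markup is an assumption.

```python
import json


def product_jsonld(name: str, sku: str, price: float, currency: str, in_stock: bool) -> dict:
    """Build a schema.org Product description as a JSON-LD dictionary."""
    availability = "InStock" if in_stock else "OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }


# Sample values are placeholders, not a real catalogue entry.
doc = product_jsonld("Example Widget", "EX-001", 19.5, "EUR", in_stock=True)
print(json.dumps(doc, indent=2))
```

On a product page, the serialized output would typically be embedded in a `<script type="application/ld+json">` element so that crawlers and agents can read price and availability without parsing the layout.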

| Action | Owner | Timeline |
|---|---|---|
| Spectrum mapping (Wave 2/3/4) | CTO + Strategy | Q2 2026 |
| Three-axis position definition | CTO + Architecture | Q2 2026 |
| Trust-graduated deployment plan | CTO + CISO | Q2 2026 |
| Tool portfolio compression audit | CIO + Procurement | Q2–Q3 2026 |
| Agent legibility assessment | CTO + Product | Q3 2026 |

What to Watch

Whether Lovable evolves from builder to operating layer. $400M ARR with 146 employees is extraordinary. But the category Lovable defined — describe and create — is being absorbed into describe and operate. Watch whether Lovable expands into agent-like execution (process automation, data integration, continuous operation) or remains focused on creation excellence while agents subsume the broader workflow.

The trust delegation threshold for enterprise adoption. 88% of executives are increasing agent budgets. But only 27% of consumers trust autonomous transactions, and 44% fear unauthorized actions. The enterprise adoption curve will be gated not by capability but by demonstrable trustworthiness — explainability, reversibility, bounded scope, and human approval for high-stakes actions.

Category convergence between vibe coding, agents, and operating layers. Lovable (creation), OpenClaw (autonomy), Frontier (context), NemoClaw (governance), SnowWork (data operations) — these are all approaching the same surface from different angles. The winning platform will not be the best at any single function. It will be the one that compresses the most workflow into a single trust-calibrated interface.

The Bottom Line

$400M ARR. 146 employees. 8M users. 100K daily projects. 234K OpenClaw stars. 88% execs increasing budgets. 70% trust agents for flights. 27% trust autonomous transactions. 44% fear unauthorized actions. 88% have had AI security incidents. 14.4% have security approval. 21% governance maturity.

Lovable showed how powerful a simplified AI interface could become. OpenClaw showed that simplification alone is not the end state. The market is now moving toward a deeper contest over autonomy, orchestration, and trust.

The real battle is not about which model is smartest, which product has the best benchmark, or which agent has the most viral launch. It is about how humans decide to delegate trust to systems that can act. Do they want control or convenience? Transparency or abstraction? Local sovereignty or managed safety? One powerful assistant or many specialized tools behind a single conversational layer?

Every product in this market is answering that question differently. And every business building digital systems should pay attention, because this choice will shape not only consumer software, but how companies design workflows, interfaces, data systems, and competitive moats.

The next great software battle is not about what AI can do. It is about who we allow it to become on our behalf.

Thorsten Meyer is an AI strategy advisor who notes that “$400M ARR with 146 employees” is the kind of efficiency metric that makes traditional SaaS companies question their hiring plans — and “27% trust for autonomous transactions” is the kind of trust metric that makes agent companies question their product plans. More at ThorstenMeyerAI.com.

Sources

- Lovable — $400M ARR, 146 Employees, ~8M Users, 100K Daily Projects (Mar 2026)

- Lovable — $100M ARR in 8 Months (Fastest Startup Ever); $330M Series B

- Stripe / TechCrunch / Sacra — Lovable Growth: $1M → $100M → $200M → $300M → $400M ARR

- Vibe Coding Market — $36B+ Combined Startup Valuations; ~$800M Combined ARR (2025)

- AI-Assisted Programming — $5B (2023), $26B (2030) Projection

- OpenClaw — 234K+ Stars, 10,700+ Skills, January 25, 2026 Launch

- Android Headlines / Consumer Survey — 32% Daily AI Users; 51% Want Limits; 44% Fear Unauthorized Actions

- PwC AI Agent Survey — 88% Execs Increasing Budgets; Trust: 38% Data, 20% Financial

- Consumer Trust — 70% Agents Book Flights; 27% Autonomous Transactions; 73% Demand HITL

- Generational Trust — Millennials 72%, Gen X 68%, Gen Z 64%, Boomers 60%

- Gravitee — 88% AI Security Incidents; 14.4% Security Approval (2026)

- Gartner — 50% Enterprises Deploying Agents by 2027; 40%+ Canceled

- Deloitte — 21% Mature Governance

- Mordor Intelligence — Agentic AI: $6.96B (2025), $57.42B (2031), 42.14% CAGR

- Meta — SEV1 Incident: Agent Posted Without Approval; Unauthorized Data Access

- Nvidia — NemoClaw; Anthropic — Cowork + Dispatch; Perplexity — Computer Enterprise

- OECD — 5.0% Unemployment, 11.2% Youth, 98.9% Broadband (Feb 2026)

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com