Thorsten Meyer | ThorstenMeyerAI.com | March 2026

Executive Summary

The GPT-5.4 leak — 2 million token context window, stateful AI, full-resolution vision — is not the story. The story is what a 2 million token context window is for: replacing the human synthesis layer that connects an organization’s fragmented knowledge into coherent decision-making capability. OpenAI is pivoting from model company to stateful runtime environment — a platform that does not just answer questions but understands a company’s entire history, logic, and decision-making process.

The strategic thesis, articulated by Nate B. Jones: organizational knowledge is fragmented across filing cabinets — GitHub (code), Slack (informal reasoning), Salesforce (customers), Jira (projects). The only thing connecting these today is the human brain. When a senior engineer leaves, they take the synthesis layer with them, leaving the cabinets full but the organization functionally brain-dead. OpenAI’s goal is to replace human synthesis with an AI context platform that ingests every cabinet and reasons about it at trillion-token scale.

This creates a new form of technology capture: comprehension lock-in. Salesforce locks users in via data. An AI context platform locks users in via understanding. If a company has spent two years building a synthesized layer of knowledge — connecting code reviews, board decks, and customer feedback — switching providers means resetting the organization’s brain to zero. This is intelligence lock-in, potentially the deepest form of capture in software history.

Meanwhile, Anthropic is winning the same war from the bottom up. Claude Code captures context organically through daily developer workflows — CLAUDE.md files, session histories, project conventions. The irony: context captured organically (how people actually work) might be more valuable than context captured architecturally (data dumps).

The agentic AI market: $6.96 billion (2025), $57.42 billion by 2031. OpenAI: $14 billion ARR. Anthropic: Claude Code $2.5 billion ARR. The $600 billion infrastructure bet is not about the next chatbot. It is about who becomes the canonical source of organizational truth.

| Metric | Value |
|---|---|
| GPT-5.4 context window | 2 million tokens |
| GPT-5.2 context window (current) | 1 million tokens |
| Gemini 2.5 Pro context | 1 million tokens |
| OpenAI total ARR | $14 billion |
| Claude Code ARR | $2.5 billion |
| OpenAI Frontier launch | February 5, 2026 |
| Frontier core components | 4 (Context, Execution, Optimization, Governance) |
| GPT-5.4 ship probability (pre-April) | 55% (Manifold) |
| GPT-5.4 ship probability (pre-June) | 74% (Manifold) |
| Agentic AI market (2025) | $6.96 billion |
| Agentic AI market (2031) | $57.42 billion |
| Enterprise apps with agents (2026) | 40% (Gartner) |
| AI agents in operation (2026 est.) | 1 billion |
| Enterprises: data privacy barrier | 67% |
| Enterprises: cost unpredictability | 45% |
| Governance maturity | 21% (Deloitte) |
| Agentic projects canceled by 2027 | 40%+ (Gartner) |
| Target accuracy for long-running agents | 99.5%+ |
| OECD unemployment | 5.0% (stable) |
| OECD broadband (advanced) | 98.9% |
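The two agentic AI market figures in the table imply an annual growth rate that can be checked directly. The short Python sketch below applies the standard compound-annual-growth-rate formula to the 2025 and 2031 values only. It lands at roughly 42% a year, consistent with the 42.14% CAGR attributed to Mordor Intelligence in the sources; the small gap is likely rounding.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Agentic AI market size from the metrics table, in billions of USD.
market_2025 = 6.96
market_2031 = 57.42

rate = cagr(market_2025, market_2031, years=2031 - 2025)
print(f"Implied CAGR 2025-2031: {rate:.1%}")
```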

1. From Model Company to Context Platform

The GPT-5.4 leak is a distraction — but an instructive one. A 2 million token context window is not a chatbot feature. It is infrastructure for a platform that holds an entire organization’s reasoning in active memory.

The Pivot

| Phase | OpenAI Role | Enterprise Value |
|---|---|---|
| Phase 1 (2022-2024) | Model provider | Better answers to questions |
| Phase 2 (2024-2025) | API platform | Developer tool ecosystem |
| Phase 3 (2025-2026) | Agent execution | Codex writes code, runs tests, ships PRs |
| Phase 4 (2026+) | Context platform | Stateful runtime that understands org history |

What Frontier Is Actually Building

OpenAI Frontier, launched February 5, 2026, has four core components that together form the architecture of a context platform:

| Component | Function | Strategic Implication |
|---|---|---|
| Business Context | Semantic layer connecting enterprise data sources (CRMs, warehouses, internal tools) | The synthesis layer: agents understand how information flows and where decisions happen |
| Agent Execution | Reasoning, tool use, memory from past interactions | Stateful agents that accumulate understanding over time |
| Evaluation & Optimization | Built-in feedback loops for agent performance | The platform gets better as it learns your organization |
| Security & Governance | Identity, permissions, compliance, audit trails | Enterprise trust infrastructure for long-running autonomy |

The Fragmented Knowledge Problem

Today’s enterprise knowledge is scattered across disconnected systems. The only integration layer is human cognition — and it walks out the door every evening.

| Knowledge Cabinet | What It Holds | What Gets Lost When People Leave |
|---|---|---|
| GitHub/GitLab | Code, reviews, architectural decisions | Why the architecture was chosen; what was tried and failed |
| Slack/Teams | Informal reasoning, quick decisions, context | The rationale behind decisions never documented formally |
| Salesforce/HubSpot | Customer relationships, deal history | Relationship nuance, negotiation context, trust signals |
| Jira/Linear | Project plans, blockers, priorities | The politics, dependencies, and trade-offs behind priorities |
| Confluence/Notion | Documentation (often stale) | What’s current vs. what’s abandoned; the living context |
|  | Commitments, escalations, decisions | The chain of accountability and informal agreements |

“When a senior engineer leaves, they take the synthesis layer with them. The filing cabinets remain full. The organization is functionally brain-dead. The $600 billion bet is on replacing that synthesis layer with a platform.”

2. The Four Technical Pillars — and Why Each Can Fail

OpenAI’s context platform bet requires solving four massive technical challenges simultaneously. If even one fails, the multi-billion-dollar investment collapses.

Pillar 1: Multiplicative Intelligence

Intelligence and context are multiplicative, not additive. A mediocre model given a million tokens of history will drown in surface-level patterns and deliver confidently wrong advice. High-level reasoning is required to distinguish a relevant past decision from a superficially similar but inapplicable one.

| Context Scale | Mediocre Model | Frontier Model |
|---|---|---|
| 10K tokens | Useful for simple Q&A | Useful for simple Q&A |
| 100K tokens | Starts pattern-matching noise | Identifies relevant signals |
| 1M tokens | Overwhelmed; surface correlations | Synthesizes cross-domain insights |
| 2M tokens | Actively misleading | Institutional reasoning (theoretical) |

The risk: context window size is a vanity metric if reasoning quality does not scale with it. A 2 million token window that produces overconfident hallucinations at scale is worse than a 100K window that knows its limits.

Pillar 2: Memory That Does Not Rot

Current AI memory is shallow. A true enterprise context platform needs institutional memory that meets four requirements:

| Memory Requirement | Current State | Required State |
|---|---|---|
| Decision tracking | Stateless between sessions | Tracks why decisions were made |
| Staleness detection | No awareness of time | Recognizes when decisions are outdated |
| Contradiction resolution | Accepts latest input | Resolves conflicts between old and new documentation |
| Organizational learning | Per-session context | Cumulative understanding that improves over time |

The risk: memory that does not decay is memory that does not update. The platform must distinguish between institutional knowledge that remains valid and institutional knowledge that has become dangerous.
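To make the memory requirements concrete, here is a minimal Python sketch of a decision log that stores the rationale with each decision, supersedes contradicted decisions instead of overwriting them, and flags live entries past a review date. The `Decision` and `DecisionLog` names, the one-year default review window, and the "same topic means contradiction" rule are illustrative assumptions, not how Frontier or any other product stores memory.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Decision:
    """One entry in a hypothetical organizational decision log."""
    topic: str
    choice: str
    rationale: str  # decision tracking: the "why" is stored with the "what"
    decided_on: date
    review_after: timedelta = timedelta(days=365)  # assumed shelf life
    superseded_by: Decision | None = field(default=None, repr=False)


class DecisionLog:
    """Keeps every decision, links replacements, and flags stale entries.

    Assumes decisions are recorded in chronological order.
    """

    def __init__(self) -> None:
        self._entries: list[Decision] = []

    def record(self, new: Decision) -> None:
        # Contradiction resolution: a newer decision on the same topic
        # supersedes the live one instead of overwriting it.
        for old in self._entries:
            if old.topic == new.topic and old.superseded_by is None:
                old.superseded_by = new
        self._entries.append(new)

    def current(self, topic: str) -> Decision | None:
        """The live (non-superseded) decision for a topic, if any."""
        for entry in self._entries:
            if entry.topic == topic and entry.superseded_by is None:
                return entry
        return None

    def history(self, topic: str) -> list[Decision]:
        """Every decision recorded for a topic, oldest first."""
        return [e for e in self._entries if e.topic == topic]

    def stale(self, as_of: date) -> list[Decision]:
        """Staleness detection: live decisions older than their review window."""
        return [
            e for e in self._entries
            if e.superseded_by is None and as_of - e.decided_on > e.review_after
        ]


log = DecisionLog()
log.record(Decision("primary-db", "PostgreSQL", "team expertise", date(2024, 3, 1)))
log.record(Decision("primary-db", "PostgreSQL + read replicas", "read load grew", date(2025, 6, 1)))
log.record(Decision("ci", "self-hosted runners", "lower cost", date(2024, 1, 15)))

print(log.current("primary-db").choice)  # PostgreSQL + read replicas
print(len(log.history("primary-db")))  # 2
print([d.topic for d in log.stale(as_of=date(2026, 3, 1))])  # ['ci']
```

Keeping superseded entries rather than deleting them is the design choice that matters here: the log can answer both "what do we do now" and "why did we used to do something else".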

Pillar 3: The Retrieval Bottleneck

Traditional RAG (Retrieval-Augmented Generation) breaks at enterprise scale. It cannot handle relational queries over time — for example, tracing the chain of events across eight months that led to a security vulnerability.

| Retrieval Challenge | RAG Capability | Required Capability |
|---|---|---|
| Point-in-time lookup | Works well | Works well |
| Relational query | Struggles | Causal chain tracking |
| Temporal sequence | Cannot handle | Event timeline reconstruction |
| Cross-system synthesis | Limited | Full integration across cabinets |
| Contradiction detection | Not supported | Identify conflicting information across sources |

The risk: the retrieval architecture determines whether the platform surfaces the right context or buries it. At 2 million tokens, the failure mode is not missing information — it is drowning in it.
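A relational, temporal query like the eight-month security example reduces to walking links between events and ordering them by time. The Python sketch below assumes each event already carries explicit `caused_by` links to upstream events; that `Event` schema and the sample events are hypothetical, and in practice inferring those links across systems is the hard part that plain similarity search does not do. It illustrates graph traversal as an alternative retrieval pattern, not any vendor's implementation.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Event:
    """An event pulled from one of the knowledge cabinets (hypothetical schema)."""
    event_id: str
    system: str  # e.g. "jira", "github", "slack"
    happened_on: date
    summary: str
    caused_by: tuple[str, ...] = ()  # ids of upstream events, if known


def causal_chain(events: list[Event], target_id: str) -> list[Event]:
    """Walk caused_by links backwards from target_id; return the chain oldest-first."""
    by_id = {e.event_id: e for e in events}
    seen: set[str] = set()
    stack = [target_id]
    while stack:
        current = stack.pop()
        if current in seen or current not in by_id:
            continue  # skip cycles and links to events that were never ingested
        seen.add(current)
        stack.extend(by_id[current].caused_by)
    return sorted((by_id[i] for i in seen), key=lambda e: e.happened_on)


events = [
    Event("J-1", "jira", date(2025, 4, 2), "Ticket: add legacy auth fallback"),
    Event("S-7", "slack", date(2025, 4, 3), "Agreed to skip token expiry for fallback", ("J-1",)),
    Event("G-42", "github", date(2025, 5, 10), "PR merged: fallback without expiry check", ("S-7",)),
    Event("G-50", "github", date(2025, 9, 1), "Unrelated refactor"),
    Event("SEC-9", "jira", date(2025, 12, 4), "Security finding: non-expiring tokens", ("G-42",)),
]

for e in causal_chain(events, "SEC-9"):
    print(e.happened_on, e.system, e.summary)
```

The unrelated refactor never appears in the chain even though it sits in the same repository and time window, which is exactly the distinction that similarity-based retrieval tends to blur.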

Pillar 4: Execution at the Speed of Trust

For agents to run autonomously for days or weeks, failure rates must approach zero. A 5% failure rate per task compounds into systemic risk across multi-step workflows.

| Failure Rate | 10-Step Workflow | 50-Step Workflow | 100-Step Workflow |
|---|---|---|---|
| 5% per step | 40% total failure | 92% total failure | 99.4% total failure |
| 1% per step | 10% total failure | 39% total failure | 63% total failure |
| 0.5% per step | 5% total failure | 22% total failure | 39% total failure |
| 0.1% per step | 1% total failure | 5% total failure | 10% total failure |

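The target: 99.5%+ accuracy per step for production-grade autonomous workflows. Even that is a floor, not a finish line: at 0.5% failure per step, the table still shows a 22% failure rate over 50 steps, so longer workflows also need verification or recovery between steps. Current systems are nowhere near this level for complex, multi-domain tasks.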
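The compound-failure table follows from a single formula: if each step fails independently with probability p, an n-step workflow fails with probability 1 - (1 - p)^n. The Python sketch below reproduces the table and inverts the formula to ask what per-step accuracy a target success rate requires. Independent steps with no retries or rollback are simplifying assumptions; real workflows with verification behave differently.

```python
def workflow_failure_rate(per_step_failure: float, steps: int) -> float:
    """Probability that at least one of `steps` independent steps fails."""
    return 1 - (1 - per_step_failure) ** steps


def required_step_accuracy(target_success: float, steps: int) -> float:
    """Per-step accuracy needed for all `steps` to succeed with probability target_success."""
    return target_success ** (1 / steps)


# Reproduce the table: failure rate for 10-, 50- and 100-step workflows.
for p in (0.05, 0.01, 0.005, 0.001):
    cells = ", ".join(f"{workflow_failure_rate(p, n):.1%}" for n in (10, 50, 100))
    print(f"{p:.1%} per step -> {cells}")

# Invert the formula: per-step accuracy for a 50-step run that succeeds 95% of the time.
print(f"{required_step_accuracy(0.95, 50):.2%}")
```

Under these assumptions, a 50-step workflow that must succeed 95% of the time needs about 99.9% accuracy per step, stricter than the 99.5% target.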

“The four pillars are multiplicative intelligence, memory that doesn’t rot, retrieval that handles causation, and execution at the speed of trust. If even one fails, the $600 billion bet collapses.”

3. Comprehension Lock-In: The Deepest Capture in Software History

The context platform strategy creates a new form of technology capture that makes traditional vendor lock-in look trivial.

The Lock-In Spectrum

| Lock-In Type | What’s Captured | Switching Cost | Recovery Time |
|---|---|---|---|
| Data lock-in | Records, schemas, formats | High | Weeks to months |
| API lock-in | Code dependencies, integrations | Medium-high | Months |
| Workflow lock-in | Processes, automations, rules | High | Months |
| Prompt/tuning lock-in | Optimized prompts, fine-tuning | Medium | Weeks |
| Embedding lock-in | Vector databases, retrieval | Very high | Months (re-embed) |
| Comprehension lock-in | Organizational understanding | Extreme | Years (if ever) |

What Comprehension Lock-In Means

If an organization spends two years building a synthesized layer of knowledge — where the platform connects code reviews to board decisions to customer feedback to architectural choices — switching to a different AI provider means:

| Asset at Risk | What Happens on Switch |
|---|---|
| Accumulated context | Reset to zero |
| Cross-system reasoning | Rebuilt from scratch |
| Decision history | Fragmented across old logs |
| Organizational learning | Lost (stored in platform state) |
| Stale knowledge detection | Starts over; no temporal awareness |
| Institutional memory | Gone; the “brain” is wiped |

This is not switching a tool. It is resetting an organization’s cognitive infrastructure. The switching cost is measured not in engineering months but in organizational intelligence lost.

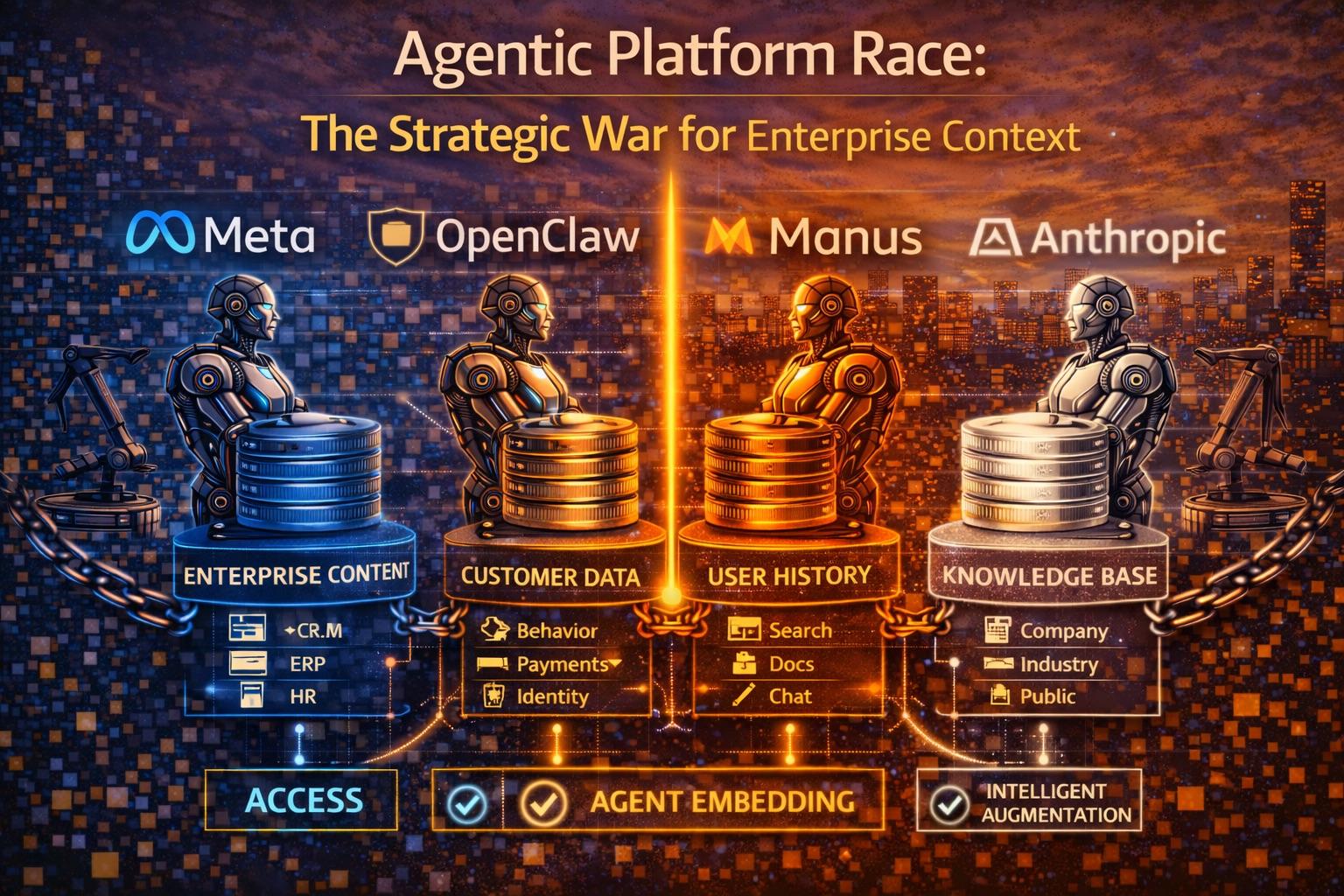

The Anthropic Counter-Strategy

While OpenAI builds top-down with massive infrastructure, Anthropic is accumulating context bottom-up through developer workflows:

| Strategy | OpenAI (Top-Down) | Anthropic (Bottom-Up) |
|---|---|---|
| Context capture | Architectural (data dump) | Organic (daily workflow) |
| Entry point | Enterprise platform (Frontier) | Developer terminal (Claude Code) |
| Context artifacts | Business Context semantic layer | CLAUDE.md files, session histories |
| Learning mechanism | Platform-level optimization | Project-level conventions, memory |
| User relationship | Organization-wide deployment | Individual developer adoption |
| Lock-in vector | Institutional understanding | Developer workflow dependency |

The strategic irony: context captured organically — through how people actually work, what they ask, what they correct, what they revisit — might be more valuable than context captured architecturally through data ingestion. The developer who uses Claude Code daily is teaching the platform what matters in their codebase through every session, every correction, every CLAUDE.md instruction.

“Salesforce locks you in via data. The context platform locks you in via understanding. Comprehension lock-in is the deepest form of capture in software history — you cannot export an organization’s synthesized intelligence.”

4. OECD Context: The Constraint Is Institutional, Not Technical

OECD regional broadband data shows household penetration exceeding 98% in advanced economies (e.g., German TL3 regions at 98.9%). The connectivity that enterprise context platforms depend on is therefore near-universal in these economies. The binding constraints are institutional: governance frameworks, awareness of switching costs, and organizational capacity to manage AI-dependent knowledge systems.

Where the Constraints Are

| Factor | Data | Implication |
|---|---|---|
| Broadband access | 98.9% (advanced) | Technical adoption broadly feasible |
| Unemployment | 5.0% (stable) | Tight labour → context platforms augment scarce institutional knowledge |
| Youth unemployment | 11.2% | Entry-level knowledge work most affected by synthesis automation |
| Enterprise AI adoption | 40% of enterprise apps with agents by 2026 (Gartner) | Rapid adoption; governance lagging |
| Governance maturity | 21% (Deloitte) | 79% lack mature frameworks for managing AI-held knowledge |
| Data privacy barrier | 67% | Context platforms require deep data access; privacy is the gate |
| Cost unpredictability | 45% | Long-running context accumulation costs are opaque |
| Project cancellation | 40%+ by 2027 (Gartner) | Governance gaps → failure regardless of platform quality |

The Institutional Knowledge Risk

| Risk | Description | OECD Relevance |
|---|---|---|
| Knowledge concentration | Organizational understanding held by single AI platform | No portability standards exist |
| Brain-dead on switch | Switching provider resets institutional memory | Competition policy not designed for this |
| Subsidy dependency | Platform-subsidized context accumulation creates reliance | Market contestability at risk |
| Regulatory gap | AI Act addresses risk classification, not knowledge portability | DMA review (May 2026) may address |

Transparency note: OECD does not directly measure enterprise AI context platform adoption, comprehension lock-in, or knowledge portability. The indicators above are infrastructure, labour market, and governance proxies. The institutional risks described are structural extrapolations, not measured outcomes.

5. Practical Actions for Leaders

1. Start building your own context layers now — even at smaller scale. Do not wait for a magic product. Structure your documentation, decision logs, and shared understanding to be AI-ready. Ensure organizational knowledge is captured in formats that any platform can ingest, not locked into a single vendor’s semantic layer.

2. Treat context accumulation as a strategic asset with portability requirements. Every month your organization spends building synthesized understanding on a single platform increases switching costs. Require contractual rights to export: context graphs, reasoning histories, organizational learning data, and workflow definitions. If you cannot export your context, you cannot leave.

3. Evaluate the top-down vs. bottom-up context capture trade-off. OpenAI’s Frontier captures context architecturally (enterprise data ingestion). Claude Code captures context organically (developer workflow). The right approach depends on your organization — but understand that bottom-up context (how people actually work) may be more durable than top-down context (how data is structured).

4. Demand 99.5%+ accuracy evidence before granting long-running autonomy. The compound failure math is unforgiving: 5% error per step becomes 92% failure over 50 steps. Before allowing agents to run autonomously for extended periods, require documented accuracy rates for production-representative tasks, not benchmark scores.

5. Map your synthesis layer dependencies today. Identify where organizational knowledge currently lives, who holds the synthesis layer (which people connect which systems), and what would be lost if those people — or that platform — disappeared. This is your context risk register.
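The risk register in action 5 can start as a spreadsheet. As a minimal sketch, assuming you have already gathered which people routinely combine context from which systems (the `bridges` input and the names in it are hypothetical, e.g. collected from interviews), this Python function lists every pair of systems that only one person connects. Those pairs are where a single departure removes the synthesis layer.

```python
from collections import defaultdict
from itertools import combinations


def single_bridge_pairs(bridges: dict[str, set[str]]) -> dict[tuple[str, str], str]:
    """Return system pairs that exactly one person connects, mapped to that person.

    `bridges` maps each person to the systems whose context they routinely combine.
    """
    connectors: dict[tuple[str, str], list[str]] = defaultdict(list)
    for person, systems in bridges.items():
        # Every pair of systems this person works across counts as one bridge.
        for pair in combinations(sorted(systems), 2):
            connectors[pair].append(person)
    return {pair: people[0] for pair, people in connectors.items() if len(people) == 1}


bridges = {
    "alice": {"github", "jira", "slack"},
    "bob": {"salesforce", "slack"},
    "carol": {"github", "slack"},
}

for (a, b), person in sorted(single_bridge_pairs(bridges).items()):
    print(f"{a} <-> {b}: only {person}")
```

Pairs with two or more connectors (here GitHub and Slack) drop out, so the output is a short list of concentration risks rather than a full map of who touches what.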

| Action | Owner | Timeline |
|---|---|---|
| AI-ready knowledge structuring | CTO + Knowledge Mgmt | Q2 2026 |
| Context portability requirements | Legal + CTO | Q2 2026 |
| Top-down vs. bottom-up evaluation | CTO + Engineering | Q2–Q3 2026 |
| Autonomous accuracy benchmarking | CTO + CISO | Q3 2026 |
| Synthesis layer risk mapping | CTO + CHRO | Q2 2026 |

What to Watch

Whether GPT-5.4’s 2 million token context window delivers synthesis-quality reasoning or just longer pattern matching. The context window size is meaningless if the model cannot distinguish a relevant decision from 18 months ago from a superficially similar but inapplicable one. The test: can a 2 million token context platform outperform a well-organized 100K context with superior retrieval? If not, the trillion-token thesis collapses.

The convergence of top-down and bottom-up context strategies. OpenAI building from enterprise data ingestion, Anthropic building from developer workflow capture. The platform that merges both — institutional data context with organic workflow context — may create the most defensible position. Watch for Frontier integrating developer-level session context, or Claude Code expanding into enterprise-wide knowledge synthesis.

Context portability as the next regulatory frontier. The DMA review (May 2026) and AI Act (August 2026) address data portability and risk classification. Neither yet addresses knowledge portability — the right to export an AI platform’s accumulated understanding of your organization. If comprehension lock-in is real, this regulatory gap will become the most consequential omission in technology regulation.

The Bottom Line

$14B OpenAI ARR. $2.5B Claude Code ARR. 2M token context window (leaked). $600B infrastructure bet. 4 technical pillars, each capable of collapsing the thesis. 40% enterprise apps with agents. 21% governance maturity. 40%+ projects canceled. 99.5%+ accuracy target for autonomous workflows.

The race is not about which model hits a higher benchmark. It is about who becomes the canonical source of organizational truth — the platform that holds an enterprise’s accumulated understanding, reasoning history, and decision context. OpenAI is building this top-down through Frontier’s business context layer. Anthropic is building it bottom-up through Claude Code’s organic developer workflow capture.

Comprehension lock-in, the inability to export an organization’s synthesized intelligence, may be the deepest form of technology capture yet seen. The organizations that recognize this now and demand context portability, build vendor-neutral knowledge structures, and map their synthesis layer dependencies will retain strategic optionality. The organizations that do not will discover that switching AI providers means resetting their institutional brain to zero.

The agentic platform race is not about models, benchmarks, or context windows. It is about who owns the synthesis layer — the intelligence that connects an organization’s fragmented knowledge into coherent action. That is the $600 billion bet. Everything else is a distraction.

Thorsten Meyer is an AI strategy advisor who notes that “comprehension lock-in” sounds abstract until you realize it means your organization’s institutional memory is stored in a platform you do not control, cannot export, and cannot replicate — which is roughly the plot of every technology acquisition regret story ever told. More at ThorstenMeyerAI.com.

Sources

- GPT-5.4 Leak — 2M Token Context Window, Stateful AI, Full-Resolution Vision (Mar 2026)

- Nate B. Jones — “Beyond GPT-5: The Strategic War for Enterprise Context” (YouTube, Mar 2026)

- OpenAI — Frontier Platform: Business Context, Agent Execution, Governance (Feb 5, 2026)

- OpenAI — $14B Total ARR; Codex/Frontier Enterprise Platform Architecture

- Anthropic — Claude Code: $2.5B ARR, CLAUDE.md, Session History, Organic Context Capture

- Manifold Markets — GPT-5.4 Ship Probability: 55% Pre-April, 74% Pre-June

- Mordor Intelligence — Agentic AI: $6.96B (2025), $57.42B (2031), 42.14% CAGR

- IBM/Salesforce — 1 Billion AI Agents by End 2026

- Intelligence Lock-In Research — Knowledge Capture, Process Gravity, Switching Costs

- LangWatch — 6 Context Engineering Challenges at Enterprise Scale

- Amnic — Context Graphs as $1T Enterprise AI Backbone

- Gartner — 40% Enterprise Apps with Agents; 40%+ Canceled by 2027

- Deloitte — 21% Mature Governance

- Enterprise Surveys — 67% Data Privacy Barrier, 45% Cost Unpredictability

- EU — DMA Review May 2026; AI Act High-Risk August 2026

- OECD — 5.0% Unemployment, 11.2% Youth, 98.9% Broadband (Feb 2026)

- Compound Failure Math — 5% Error/Step = 92% Failure Over 50 Steps

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com