Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

Enterprise AI demand is real: worldwide AI spending will reach $2.52 trillion in 2026, up 44% year over year (Gartner). But the money is no longer flowing freely. Only 26.7% of CFOs plan to raise GenAI budgets, down from 53.3% a year ago, and only 14% report measurable ROI today (Gartner). A separate survey finds 54% of organizations reporting positive returns (Kyndryl). The rest are funding experiments that their finance teams can no longer justify.

The era of broad AI experimentation is giving way to measurable ROI, predictable operating costs, and clear accountability for outcomes. 61% of CEOs report increasing pressure to demonstrate AI returns compared to a year ago. 65% say they lack alignment with their CFO on AI value. The organizations that win in 2026 are not the ones spending the most — they’re the ones that can show cost-per-completed-transaction, exception rates, and human rework load for every workflow they automate.

This article is about the shift from pilot enthusiasm to unit-economics discipline — and the operating framework that survives CFO-level proof requirements.

| Metric | Value |
|---|---|
| Worldwide AI spending 2026 (Gartner) | $2.52 trillion (+44% YoY) |
| AI application software spending 2026 (Gartner) | $270 billion (3x prior) |
| CFOs planning GenAI budget increase | 26.7% (down from 53.3%) |
| CFOs reporting measurable AI ROI | 14% |
| CFOs expecting significant impact within 2 years | 66% |
| CFOs confident in driving AI impact | 36% |
| Organizations reporting positive AI ROI | 54% |
| CEOs: increasing ROI pressure | 61% |
| CEOs: not aligned with CFO on AI value | 65% |
| CEOs: near-term ROI undermines long-term | ~75% |
| GenAI pilots failing ROI (MIT) | 95% |
| AI projects: pilot-to-production failure | ~50% |
| Transformation success rate (McKinsey) | 30% |
| EBIT attributable to AI (most respondents) | <5% |
| Orgs reporting productivity gains (Deloitte) | 66% |
| Aspiring to AI revenue growth vs. achieving | 74% vs. 20% |
| Enterprise SaaS with outcome-based elements by 2026 | 40% (Gartner) |
| AI TCO underestimate for agents | 40–60% |
| TCO inflation from hidden costs | 200–400% |
| Budget overruns in first year | 30–40% |
| Unexpected charges from consumption pricing | 65% of IT leaders |
| Average monthly AI spending (2025) | $85,521 (+36% YoY) |
| AI costs eroding gross margins >6% | 84% |
| POC to production cost increase | 250–400% |
| Orgs spending $100K+/month on AI | 45% (up from 20%) |
| Enterprise AI spend per employee annually | $590–$1,400 |

1. The Demand-Budget Paradox

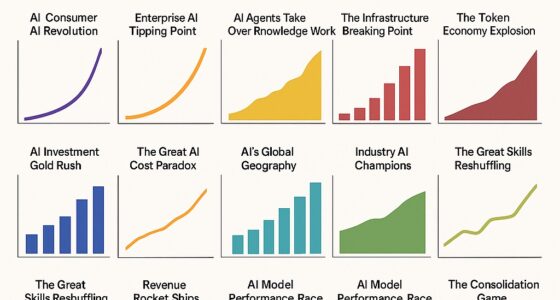

Enterprise AI demand is accelerating. Budgets are decelerating. This is not a contradiction — it’s a maturation signal.

Demand Is Real

| Demand Indicator | Value | Source |
|---|---|---|
| Worldwide AI spending 2026 | $2.52 trillion (+44% YoY) | Gartner |
| AI application software 2026 | $270 billion (3x prior year) | Gartner |
| Orgs using AI in 1+ function | 78% | McKinsey |
| Orgs planning AI agent deployment | 50%+ within 1 year | Protiviti |
| Average monthly AI spend (2025) | $85,521 (+36% YoY) | Industry data |
| Orgs spending $100K+/month | 45% (up from 20%) | Industry data |

The demand numbers are unambiguous. Enterprises are spending more on AI than ever. The question is not whether organizations are buying — it’s whether they’re buying the right things, measuring the right outcomes, and stopping the right experiments.

Budgets Are Tightening

| Budget Signal | Value | Source |
|---|---|---|
| CFOs raising GenAI budgets | 26.7% (↓ from 53.3%) | Gartner |
| CFOs reporting measurable ROI | 14% | Gartner |
| CFOs confident in driving AI impact | 36% | Gartner |
| CFOs confident in AI for finance | 44% | Gartner |
| CEOs: increasing ROI pressure | 61% | Fortune/AIQ |
| CEOs: not aligned with CFO on value | 65% | Fortune/AIQ |
| CEOs: near-term demands undermine bets | ~75% | Fortune/AIQ |

The CFO data tells the real story. A year ago, over half of CFOs planned to increase GenAI budgets. Now it’s barely a quarter. Only 14% report measurable ROI. Only 36% are confident they can drive enterprise AI impact. The checkbook is closing — not because demand disappeared, but because evidence didn’t arrive.

The Consequence: Vendor Consolidation

Enterprises will spend more through fewer vendors in 2026. The experimentation budget is being cut. Overlapping tools are being rationalized. Savings are redeployed into AI technologies that have delivered. CIOs are trading sprawling AI toolchains for platform SKUs, coterminous agreements, and committed-use discounts.

The vendor with weak differentiation or unclear ROI gets cut. The vendor with proven unit economics gets more budget. This is the sorting mechanism that pilot enthusiasm couldn’t provide.

2. Why Pilots Pass But Budgets Fail

The gap between “the pilot worked” and “the CFO approved scale” is where most enterprise AI initiatives die. The failure is not technical. It’s economic.

The Unit-Economics Gap

| Economic Reality | What Pilots Hide |
|---|---|
| Visible costs = 15–20% of TCO | Integration, data engineering, ops management hidden |
| POC-to-production cost increase | 250–400% over proof-of-concept |
| First-year budget overruns | 30–40% of organizations |
| TCO underestimate for AI agents | 40–60% |
| Unexpected consumption charges | 65% of IT leaders report |
| AI costs eroding gross margins >6% | 84% of respondents |

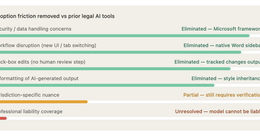

Pilots succeed in controlled environments with dedicated attention, limited data, and forgiven costs. Production succeeds only when the unit economics work at scale: cost per completed transaction must drop, quality must hold or improve, and exception handling must be predictable.

The most common pilot-to-budget failure mode: the pilot demonstrated capability but never measured cost-per-unit-of-work. The CFO doesn’t care that the AI “worked.” The CFO cares what it costs per completed workflow transaction, what the exception rate is, and whether the human rework load is trending down.
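The "visible costs = 15–20% of TCO" figure above implies a simple back-of-envelope check. The sketch below is only an illustration under that assumption: the function name and the $100,000 pilot figure are hypothetical, and the share range is taken from the table rather than from any measured workload.

```python
def estimate_tco_range(visible_cost: float,
                       visible_share_low: float = 0.15,
                       visible_share_high: float = 0.20) -> tuple[float, float]:
    """Return (low, high) total-cost estimates implied by a visible cost.

    Assumes the visible cost is between 15% and 20% of total cost of
    ownership, as quoted in the table above. Illustrative only.
    """
    if not 0 < visible_share_low <= visible_share_high <= 1:
        raise ValueError("shares must satisfy 0 < low <= high <= 1")
    # A larger visible share implies a smaller total, so the high share
    # produces the low estimate and the low share produces the high one.
    return visible_cost / visible_share_high, visible_cost / visible_share_low


# Hypothetical pilot with $100,000 of visible (invoice-level) cost.
low, high = estimate_tco_range(100_000)
print(f"Implied TCO: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions, a pilot that looks like a $100,000 line item would imply roughly five to seven times that amount once integration, data engineering, and operations are counted.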

The ROI Timeline Mismatch

| Stakeholder | Expected ROI Timeline | Actual AI ROI Timeline |
|---|---|---|
| Board | 6–12 months | 2–4 years (Deloitte) |
| CFO | Quarterly visibility | Often not measurable for 12+ months |
| Business unit | Immediate productivity | 30–60 days for initial gains |
| IT/Operations | After integration stabilizes | 3–6 months post-deployment |

Deloitte data shows typical AI ROI takes two to four years — far longer than the seven to twelve months expected for standard technology investments. The mismatch creates organizational friction: boards want returns that AI can’t yet deliver, while CFOs are asked to fund initiatives that won’t appear on quarterly statements.

The EBIT Reality

McKinsey’s global survey reveals the scale of the gap: only 39% of respondents attribute any EBIT impact to AI, and most of those say less than 5% of their organization’s EBIT is attributable to AI use. Only 6% — the high performers — report more than 5% of EBIT from AI.

Nearly two-thirds of respondents say their organizations have not yet begun scaling AI across the enterprise. The demand is real. The P&L impact, for most organizations, is not yet.

3. The Unit-Economics Framework

Unit economics is the discipline that converts pilot enthusiasm into CFO-grade evidence. Three metrics matter.

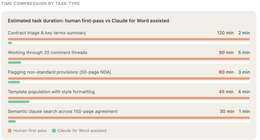

Metric 1: Cost Per Completed Workflow Transaction

Not cost per API call. Not cost per token. Cost per completed, end-to-end workflow transaction that produces a business outcome.

| Cost Component | What to Include |
|---|---|
| Compute/API costs | Token usage, model inference, API calls |
| Data pipeline costs | Ingestion, processing, validation |
| Integration costs | System connections, middleware, data transforms |
| Human oversight costs | Review time, escalation handling, corrections |
| Exception handling costs | Rework, fallback processing, error resolution |
| Maintenance costs | Model updates, prompt tuning, monitoring |

The target: declining cost per completed transaction over 60–90 days as the system learns, exceptions decrease, and human oversight shifts from approval-required to post-action review.
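As a minimal sketch of the metric, the six cost components in the table can be summed for a reporting period and divided by the number of completed end-to-end transactions. The class name, field names, and dollar figures below are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass, fields


@dataclass
class WorkflowCosts:
    """Costs for one reporting period, all in the same currency.

    Field names mirror the cost-component table; they are illustrative.
    """
    compute_api: float
    data_pipeline: float
    integration: float
    human_oversight: float
    exception_handling: float
    maintenance: float

    def total(self) -> float:
        # Sum every component so nothing is silently left out.
        return sum(getattr(self, f.name) for f in fields(self))


def cost_per_completed_transaction(costs: WorkflowCosts, completed: int) -> float:
    """Fully loaded cost divided by completed end-to-end transactions."""
    if completed <= 0:
        raise ValueError("no completed transactions in this period")
    return costs.total() / completed


# Hypothetical month: $18,000 fully loaded cost, 12,000 completed transactions.
period = WorkflowCosts(4_000, 1_500, 2_000, 6_000, 3_000, 1_500)
unit_cost = cost_per_completed_transaction(period, completed=12_000)
print(f"Cost per completed transaction: ${unit_cost:.2f}")  # $1.50
```

Dividing by completed transactions rather than attempted ones is deliberate: failed or abandoned runs still incur cost, so they raise the unit cost instead of disappearing from it.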

Metric 2: Exception Rate and Human Rework Load

| Exception Metric | What It Reveals |
|---|---|
| Exception rate | % of transactions requiring human intervention |
| Rework rate | % of AI outputs that need correction before use |
| Escalation frequency | How often AI hits policy/confidence boundaries |
| False-positive rate | Unnecessary human interventions |
| Resolution time per exception | Cost of each human touchpoint |

If the exception rate isn’t declining, the AI isn’t learning. If the human rework load is stable or growing, the automation is creating work, not eliminating it. These metrics expose the “fragile manual workaround” that many pilots conceal: the AI handles the easy cases while humans handle everything else.
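Several of these metrics can be derived from per-transaction logs. The sketch below assumes a hypothetical `Transaction` record with four fields; real logging schemas will differ. It also assumes the false-positive rate is the share of human interventions that turned out to be unnecessary, which is one reading of the table. Escalation frequency is omitted because it needs policy and confidence data not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    """One workflow transaction (hypothetical log schema)."""
    needed_human: bool                      # any human intervention occurred
    needed_correction: bool                 # AI output was corrected before use
    intervention_was_necessary: bool = True # False marks a false positive
    resolution_minutes: float = 0.0         # human time spent on the exception


def exception_metrics(txns: list[Transaction]) -> dict[str, float]:
    """Compute exception, rework, and false-positive rates for a batch."""
    if not txns:
        raise ValueError("no transactions to measure")
    n = len(txns)
    exceptions = [t for t in txns if t.needed_human]
    unnecessary = [t for t in exceptions if not t.intervention_was_necessary]
    n_exc = len(exceptions)
    return {
        "exception_rate": n_exc / n,
        "rework_rate": sum(t.needed_correction for t in txns) / n,
        "false_positive_rate": len(unnecessary) / n_exc if n_exc else 0.0,
        "avg_resolution_minutes": (
            sum(t.resolution_minutes for t in exceptions) / n_exc if n_exc else 0.0
        ),
    }


# Hypothetical batch: 7 clean runs, 2 corrected exceptions, 1 unnecessary escalation.
sample = (
    [Transaction(False, False)] * 7
    + [Transaction(True, True, True, 12.0), Transaction(True, True, True, 18.0)]
    + [Transaction(True, False, False, 6.0)]
)
metrics = exception_metrics(sample)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Computing the same dictionary for each week gives the trend the paragraph above asks for: a falling `exception_rate` suggests learning, while a flat or rising one suggests the easy cases are automated and everything else still lands on people.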

Metric 3: Fully Loaded Cost vs. Baseline

| Comparison Element | Baseline (Pre-AI) | AI-Enabled |
|---|---|---|
| Cost per transaction | Known, measurable | Must include full TCO |
| Processing time | Known cycle time | End-to-end including exceptions |
| Error rate | Historical error data | AI errors + human correction errors |
| Throughput | Current volume capacity | Volume at sustained quality |
| Staffing requirement | Current FTE allocation | FTE after redeployment/reduction |

The comparison must be fully loaded: not just API costs vs. labor costs, but total cost of ownership including integration, maintenance, monitoring, exception handling, and the human oversight architecture. Enterprises that compare only API costs to FTE costs will consistently overestimate ROI.
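To see how much an API-only comparison can overstate savings, consider a small worked example. All figures are hypothetical per-transaction costs chosen for illustration, and the component names are assumptions rather than a standard breakdown.

```python
def fully_loaded_cost(components: dict[str, float]) -> float:
    """Sum every per-transaction cost component."""
    return sum(components.values())


def savings_vs_baseline(baseline_cost: float, ai_cost: float) -> float:
    """Fractional savings relative to the pre-AI baseline cost."""
    if baseline_cost <= 0:
        raise ValueError("baseline cost must be positive")
    return (baseline_cost - ai_cost) / baseline_cost


# Hypothetical per-transaction figures, in dollars.
baseline = 4.00  # fully loaded pre-AI cost
ai_components = {
    "api": 0.40,
    "integration": 0.30,
    "human_oversight": 0.90,
    "exception_handling": 0.60,
    "maintenance_monitoring": 0.40,
}

naive = savings_vs_baseline(baseline, ai_components["api"])
loaded = savings_vs_baseline(baseline, fully_loaded_cost(ai_components))
print(f"API-only view:     {naive:.0%} savings")
print(f"Fully loaded view: {loaded:.0%} savings")
```

With these made-up numbers the API-only view shows about 90% savings while the fully loaded view shows about 35%. The gap between the two is the overestimate the paragraph above warns about.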

Trak-4 GPS Tracker for Vehicles, Assets, Equipment. Long Battery Life, Waterproof, Global Tracking. Low-Cost Subscription Required.

The Trak4 USB Rechargeable Tracker is built to be waterproof and durable. Its robust internal LIPO battery provides…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

4. The Decision Framework

Three gates. No ambiguity.

Go: Scale the Workflow

| Go Condition | Evidence Required |
|---|---|
| Unit cost drops | Cost per completed transaction declining over 60–90 days |
| Quality holds or improves | Error rate at or below baseline; customer impact stable |
| Exception rate declining | Human rework load trending down, not up |
| TCO bounded | Hidden costs identified and included in projections |
| Governance overhead manageable | Compliance and oversight costs proportionate to value |

The Go decision requires all five conditions. Partial evidence, such as “cost is down but exceptions are flat,” is not a Go. It’s a Pause.

Pause: Iterate with Adjustments

| Pause Condition | What to Fix |
|---|---|
| Gains depend on manual workaround | Automate the workaround or reclassify as human task |
| Exception rate stable, not declining | Retrain model, adjust triggers, refine data pipeline |
| Hidden costs exceeding projections | Renegotiate vendor terms, simplify integration |
| Quality inconsistent | Add validation layers, tighten confidence thresholds |
| Timeline exceeded without trend improvement | Set 30-day bounded iteration with specific targets |

The Pause is a structured 2–4 week iteration with specific targets, not an open-ended continuation. “We need more time” without defined conditions for resuming is not a Pause — it’s denial.

Stop: Reallocate Budget

| Stop Condition | Evidence |
|---|---|
| Governance overhead exceeds value | Compliance and oversight costs larger than productivity gain |
| Unit cost not declining after 90 days | No learning curve; static or rising cost per transaction |
| Exception rate rising | AI creating more work than it eliminates |
| Quality degradation | Error rate above baseline despite intervention |
| Vendor unable to meet outcome terms | Pricing doesn’t align with delivered value |

A Stop decision is most valuable when it is made early, on clear evidence. The 42% of enterprises that scrapped AI initiatives in 2025 (S&P Global) often ran for months before stopping. A structured Stop in 60–90 days saves more than an unstructured continuation that ends in 9 months.
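The three gates can be expressed as a small decision function. This is a sketch under stated assumptions: the field names are hypothetical, Go requires all five conditions as described above, and any single Stop condition is treated as sufficient to stop, which the article does not state explicitly. Everything else falls through to Pause.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    GO = "go"
    PAUSE = "pause"
    STOP = "stop"


@dataclass
class GateEvidence:
    """Evidence collected over one decision window (hypothetical fields)."""
    # Go conditions
    unit_cost_declining: bool
    quality_at_or_above_baseline: bool
    exception_rate_declining: bool
    tco_bounded: bool
    governance_proportionate: bool
    # Stop signals
    days_elapsed: int
    exception_rate_rising: bool = False
    governance_exceeds_value: bool = False
    quality_degraded_despite_fixes: bool = False
    vendor_misses_outcome_terms: bool = False


def decide(e: GateEvidence) -> Decision:
    stop_signals = [
        e.governance_exceeds_value,
        e.days_elapsed >= 90 and not e.unit_cost_declining,
        e.exception_rate_rising,
        e.quality_degraded_despite_fixes,
        e.vendor_misses_outcome_terms,
    ]
    # Assumption: one Stop condition is enough to stop.
    if any(stop_signals):
        return Decision.STOP
    go_conditions = [
        e.unit_cost_declining,
        e.quality_at_or_above_baseline,
        e.exception_rate_declining,
        e.tco_bounded,
        e.governance_proportionate,
    ]
    # Go requires all five conditions; partial evidence is a Pause.
    return Decision.GO if all(go_conditions) else Decision.PAUSE


# Day 60: cost is falling but the exception rate is flat, so this is a Pause.
partial = GateEvidence(True, True, False, True, True, days_elapsed=60)
print(decide(partial))  # Decision.PAUSE
```

Agreeing on the inputs to a function like this before launch is the point of the exercise: the debate then happens over the evidence, not over what the evidence should mean.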

5. What Enterprise Leaders Should Do Now

Action 1: Prioritize 3–5 Workflows with Measurable P&L Impact

Not the most exciting AI use case. The most measurable one with direct P&L visibility. The workflow must have: quantified baseline performance, attributable cost structure, measurable output quality, and clear error rates.

| Good First Workflows | Why |
|---|---|
| Support triage | High volume, measurable resolution time, clear cost per ticket |
| Invoice processing | Structured data, quantifiable error rate, known cycle time |
| Compliance review | Audit-driven, time-intensive, penalty-linked outcomes |
| Proposal generation | Template-heavy, revision-countable, deadline-driven |
| Claims adjudication | Rule-based, high volume, measurable accuracy |

Action 2: Require Baseline vs. Target Metrics Before Launch

| Metric | Baseline (Pre-Launch) | Target (Post-Launch) |
|---|---|---|
| Cost per transaction | Current fully loaded cost | Target with AI fully loaded cost |
| Processing time | Current cycle time | Target end-to-end time |
| Error rate | Current error frequency | Target error frequency |
| Exception rate | N/A (new metric) | Acceptable human intervention % |
| Human rework load | Current manual processing hours | Target after automation |

No baseline, no launch. This is the single most important discipline. The 95% pilot failure rate (MIT) and the 14% of CFOs reporting measurable ROI both trace to the same root cause: no quantified starting point against which to measure.

Action 3: Tie Vendor Payment to Operational Outcomes

| Old Contract Model | New Contract Model |
|---|---|
| Seat-based licensing | Cost per resolved transaction |
| Implementation fees + maintenance | Milestone payments tied to KPI achievement |
| Annual subscription | Outcome-linked pricing with performance guarantees |
| Unlimited use rights | Consumption-based with cost caps and audit rights |

Gartner forecasts 40% of enterprise SaaS will include outcome-based elements by 2026, up from 15%. The vendor confident in their product accepts outcome risk. The vendor who isn’t reveals the gap between their demo and their production economics.
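A capped, outcome-based invoice is simple to model. The sketch below is illustrative: the function names, the $10,000 monthly cap, and the seat figures are hypothetical, and the $0.99 price per resolved conversation is borrowed from the Intercom example in the sources purely as a sample rate.

```python
def outcome_invoice(resolved: int, price_per_resolution: float,
                    monthly_cap: float) -> float:
    """Pay per resolved transaction, never more than the agreed cap."""
    if resolved < 0:
        raise ValueError("resolved count cannot be negative")
    return min(resolved * price_per_resolution, monthly_cap)


def seat_invoice(seats: int, price_per_seat: float) -> float:
    """Traditional seat-based bill: independent of outcomes delivered."""
    return seats * price_per_seat


PRICE = 0.99     # sample rate per resolved conversation
CAP = 10_000.0   # hypothetical monthly cost cap

normal_month = outcome_invoice(8_000, PRICE, CAP)
busy_month = outcome_invoice(12_000, PRICE, CAP)   # would exceed the cap
flat_fee = seat_invoice(50, 150.0)                 # hypothetical seat deal

print(f"Outcome-based, 8,000 resolved:  ${normal_month:,.2f}")
print(f"Outcome-based, 12,000 resolved: ${busy_month:,.2f} (capped)")
print(f"Seat-based, any volume:         ${flat_fee:,.2f}")
```

The cap addresses the unexpected consumption charges noted earlier, while the per-resolution rate keeps the bill tied to delivered work: a month with few resolved transactions costs less, which a seat-based contract never does.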

Action 4: Instrument Every Workflow for Unit Economics

Deploy monitoring from day one that tracks:

| Instrument | Purpose |
|---|---|
| Cost per completed transaction | Core unit economics metric |
| Exception rate + trend | Learning curve visibility |
| Human rework hours | True automation displacement |
| Quality delta vs. baseline | Value creation evidence |
| Governance overhead | Compliance cost proportionality |
| Vendor cost vs. projected | Budget accuracy tracking |

Action 5: Run 60–90 Day Decision Cycles

Not 6-month reviews. Not annual budget cycles. 60–90 day windows with pre-defined Go/Pause/Stop criteria agreed before launch. The organizations that scale fastest are the ones that decide fastest — including deciding to stop.
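Whether a metric is "declining" over a 60–90 day window can be checked with a least-squares slope across weekly readings, which is less sensitive to one noisy week than comparing the first and last values. The function names, the tolerance parameter, and the weekly figures below are assumptions for illustration.

```python
def weekly_slope(values: list[float]) -> float:
    """Least-squares slope of a metric per week (week index 0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two weekly readings")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def is_declining(values: list[float], min_drop_per_week: float = 0.0) -> bool:
    """True if the fitted trend falls by more than the tolerance each week."""
    return weekly_slope(values) < -min_drop_per_week


# Hypothetical weekly cost per completed transaction, in dollars.
cost_by_week = [5.0, 4.5, 4.0, 3.5]
flat_by_week = [5.0, 5.1, 4.9, 5.0]

print(weekly_slope(cost_by_week))    # -0.5
print(is_declining(cost_by_week))    # True
print(is_declining(flat_by_week, min_drop_per_week=0.05))  # False
```

Setting `min_drop_per_week` before launch turns "trending down" into a criterion that can be agreed in advance, in keeping with pre-defined Go/Pause/Stop conditions.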

6. What to Watch

Cost per completed workflow transaction as the standard AI metric. Token costs, API calls, and seat counts are proxy metrics. The enterprise metric that CFOs will standardize around is the fully loaded cost per completed workflow transaction — including compute, integration, exception handling, and human oversight. Vendors who can demonstrate declining cost-per-transaction will win. Vendors who can’t will be consolidated out.

Exception rates and human rework load as automation truth tests. The pilot that “automates” 80% of cases but creates 40% more work for the remaining 20% is not automation. Exception rate trends and human rework hours are the metrics that reveal whether AI is reducing work or redistributing it. Expect these to become standard vendor accountability metrics.

Vendor pricing shift from seat-based to outcome-based. 40% of enterprise SaaS will include outcome-based elements by 2026 (Gartner). The structural shift: enterprises pay for resolved tickets, processed claims, completed transactions — not for access to tools. This aligns vendor incentives with buyer outcomes and creates the pricing transparency that CFOs require.

The Bottom Line

Enterprise AI spending will reach $2.52 trillion in 2026. But only 26.7% of CFOs plan to increase GenAI budgets. Only 14% report measurable ROI. 54% of organizations see positive returns — meaning 46% are still funding experiments without evidence.

The shift is from pilot enthusiasm to unit-economics discipline: cost per completed transaction, exception rates trending down, human rework load declining, and fully loaded TCO that survives CFO scrutiny. The organizations that scale AI in 2026 are not the ones with the biggest budgets. They’re the ones that can prove, workflow by workflow, that the economics work.

The CFO doesn’t care that the AI “worked.” The CFO cares what it costs per completed transaction, what the exception rate is, and whether it’s getting cheaper.

Thorsten Meyer is an AI strategy advisor who has noticed that the enterprise leaders asking “How much does it cost per transaction?” are scaling, while the ones asking “How many people are using it?” are still in pilot purgatory. More at ThorstenMeyerAI.com.

Sources

- Gartner — $2.52 Trillion AI Spending 2026 (+44% YoY)

- Gartner — $270 Billion AI Application Software (3x Prior Year)

- Gartner — 26.7% CFOs Raising GenAI Budgets (Down from 53.3%)

- Gartner — 14% CFOs Report Measurable ROI; 36% Confident in AI Impact

- Gartner — 40% Enterprise SaaS Outcome-Based by 2026

- Fortune/AIQ — 61% CEOs: Increasing ROI Pressure; 65% Not Aligned with CFO

- Fortune/AIQ — 95% Pilots Zero ROI (MIT); ~75% CEOs: Short-Term Undermines Long-Term

- McKinsey — 39% Attribute EBIT to AI; 6% High Performers >5% EBIT

- McKinsey — Two-Thirds Not Yet Scaling AI Across Enterprise

- Deloitte — 66% Productivity Gains; 74% Aspire Revenue vs. 20% Achieving

- Deloitte — AI ROI Timeline: 2–4 Years vs. 7–12 Month Tech Standard

- Deloitte — 3,235 Leaders Surveyed (2025); 34% Transformative Use

- Kyndryl — 54% Positive ROI; 33% YoY Investment Increase

- Kyndryl — 30% Transformation Success Rate (McKinsey Reference)

- S&P Global — 42% Scrapped AI Initiatives (2025)

- Industry Data — $85,521 Average Monthly AI Spend; 45% Over $100K/Month

- Industry Data — 15–20% Visible Costs; 200–400% TCO Inflation

- Industry Data — 250–400% POC-to-Production Cost Increase

- Industry Data — 65% Unexpected Consumption Charges; 30–40% Budget Overruns

- Industry Data — 84% AI Costs Eroding Gross Margins >6%

- TechCrunch — Enterprises: More Spend, Fewer Vendors (2026)

- Protiviti — 50%+ Planning AI Agent Deployment Within 1 Year

- MIT — 95% GenAI Pilots Fail ROI

- Pilot.com — AI Pricing Economics: $0.99 Per Resolved Conversation (Intercom)

- WEF — CFO AI Investment: Cost and Productivity Gains

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com