Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

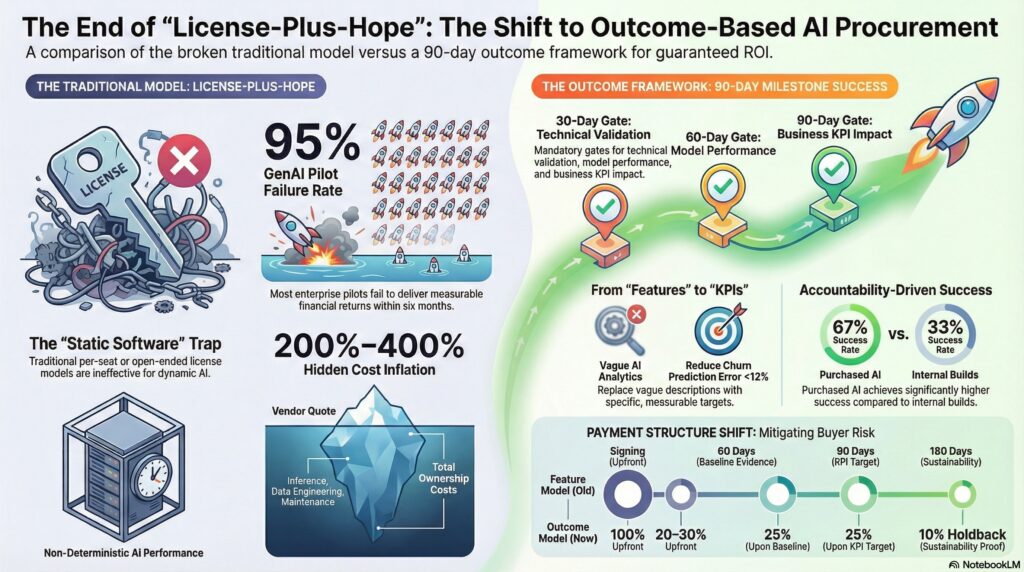

95% of enterprise generative AI pilots fail to deliver measurable financial returns within six months (MIT, 2025). 42% of companies scrapped most of their AI initiatives in 2025, up from 17% the year before (S&P Global). Hidden costs inflate total AI ownership by 200–400% compared to initial vendor quotes (Xenoss).

The procurement model that created these outcomes — license-plus-hope, seat-based pricing, open-ended “transformation” mandates — was designed for static software, not for systems that degrade, hallucinate, and require continuous recalibration. Every failed AI project that passed through a traditional procurement gate is evidence that the contract, not just the technology, needs to change.

Outcome-based AI procurement ties payment to verified business impact, replaces open-ended licenses with 30/60/90-day performance gates, and shifts risk from buyer to vendor at the point where it belongs: the claim of capability.

| Metric | Value |
|---|---|
| GenAI pilots failing (MIT) | 95% |
| Companies scrapping AI initiatives (S&P, 2025 vs. 2024) | 42% (up from 17%) |
| AI projects showing zero ROI (Constellation) | 42% |
| Executives measuring ROI confidently | 29% |
| Positive profitability impact (Forrester) | 15% of AI decision-makers |
| Hidden cost inflation vs. vendor quote | 200–400% |
| Visible costs as share of true TCO | 15–20% |
| Cost misestimation rate (>10% error) | 85% of organizations |
| Budget overruns in Year 1 | 30–40% |
| Unexpected charges from consumption models | 65% of IT leaders |
| Agentic AI projects expected to fail by 2027 (Gartner) | 40%+ |
| Federal AI spending (2022–2024) | $5.6 billion |
| UK government AI contracts (through Aug 2025) | £573 million |
| Credit-model SaaS vendors (YoY growth) | 79 (up 126% from 35) |
| Outcome-based SaaS components (Gartner, 2025) | 30%+ of enterprise SaaS |
| Public servants experimenting with AI | 60%+ |
| Public servants who received guidance | 35% |
| EY: CPOs planning GenAI deployment (3 years) | 80% |
| Purchased AI: success rate (MIT) | 67% |
| Internal AI builds: success rate (MIT) | 33% |
| Inference as % of AI compute cost | 70–90% |

As an affiliate, we earn on qualifying purchases.

1. Why the Old Contract Model Is Breaking

Traditional enterprise software procurement assumes three things: the product ships complete, performance is deterministic, and maintenance is marginal. AI violates all three.

The Static-Software Assumption

Enterprise procurement templates — RFPs, scoring matrices, license agreements — evolved for software that behaves the same way on day 300 as on day 1. ERP, CRM, office suites: install, configure, maintain. Total cost of ownership was calculable because the variables were bounded.

AI systems are different. Models degrade. Data distributions drift. Performance is probabilistic, not deterministic. A system that achieves 92% accuracy in pilot can drop to 74% in production as input conditions change. The contract that authorized the purchase didn’t anticipate this, because the procurement template it was built on was designed for a different class of technology.

The Failure Evidence

The evidence is not ambiguous:

| Finding | Source |
|---|---|
| 95% of GenAI pilots fail to deliver measurable ROI within 6 months | MIT GenAI Divide (2025) |
| 42% of companies scrapped most AI initiatives in 2025 | S&P Global (up from 17% in 2024) |
| 42% of enterprises deployed AI with zero ROI | Constellation Research |
| Only 15% of AI decision-makers report positive profitability impact | Forrester |
| Only 29% of executives can measure AI ROI confidently | Survey data (2025) |
| 88% of AI pilots never reach production | Industry analysis |
| 40%+ of agentic AI projects expected to fail by 2027 | Gartner |

The pattern is consistent: organizations are buying AI through procurement processes that don’t measure what matters, don’t trigger accountability at the right milestones, and don’t provide exit mechanisms when performance diverges from claims.

The Hidden Cost Problem

The vendor quote is a down payment on a much larger obligation.

Hidden costs inflate total AI ownership by 200–400% compared to initial vendor quotes. Visible costs — the line item on the PO — represent only 15–20% of true expenditure. 85% of organizations misestimate AI project costs by more than 10%, and 65% of IT leaders report unexpected charges from consumption-based pricing.

The cost structure:

| Cost Layer | What It Includes | Typical Share or Impact |
|---|---|---|
| Vendor license/subscription | Listed price | 15–20% of true TCO |
| Inference compute | Token costs, API calls, GPU hours | 70–90% of lifetime compute cost |
| Data engineering | Cleaning, labeling, pipeline maintenance | Major hidden cost |
| Integration | Connecting to existing systems | Underestimated by 2–3x |
| Maintenance | Retraining, drift monitoring, patching | 15–30% of dev cost annually |
| Workforce | Training, hiring, skill gaps | 40–60% wage premium |

Consumption-based AI pricing is the main source of surprise: actual costs frequently exceed initial estimates by 30–50% due to token overages, API rate limits, and unpredictable user adoption.

The procurement template that ignores this cost structure isn’t just incomplete. It’s a mechanism for transferring risk from vendor to buyer at the precise moment the vendor’s claims are least testable.
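
To make the gap concrete, here is a minimal sketch that turns a vendor quote into a rough TCO range using the two figures above. It assumes "inflated by 200–400%" means the quote plus two to four times the quote, and that the quoted price is the entire visible cost; the function names and the $500,000 quote are hypothetical. Under these readings the two figures imply different multipliers (3–5x versus roughly 5–6.7x), which is plausible given that they come from different sources, so treat the output as a planning range rather than a forecast.

```python
def tco_from_inflation(vendor_quote, low=2.0, high=4.0):
    """Range implied by 'hidden costs inflate ownership by 200-400%'.

    Assumption: inflation is added on top of the quote (quote * (1 + x)).
    """
    return vendor_quote * (1 + low), vendor_quote * (1 + high)


def tco_from_visible_share(vendor_quote, low_share=0.15, high_share=0.20):
    """Range implied by 'visible costs are 15-20% of true expenditure'.

    Assumption: the vendor quote is the whole visible cost.
    """
    return vendor_quote / high_share, vendor_quote / low_share


quote = 500_000  # hypothetical vendor quote in dollars

for label, (low, high) in [
    ("inflation reading", tco_from_inflation(quote)),
    ("visible-share reading", tco_from_visible_share(quote)),
]:
    print(f"{label}: ${low:,.0f} to ${high:,.0f}")
```

Either reading supports the same procurement point: the number on the PO is a poor basis for budget approval unless it is accompanied by a range like this.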

2. From Feature Procurement to Outcome Procurement

The shift is structural, not semantic. Feature procurement asks: “What does this system do?” Outcome procurement asks: “What business result does this system produce, by when, measured how, and what happens if it doesn’t?”

Five Components of an Outcome-Based AI Contract

| Component | Feature Model | Outcome Model |
|---|---|---|
| Scope | “AI-powered analytics platform” | “Reduce customer churn prediction error to <12% within 90 days” |
| Success metric | Uptime SLA (99.9%) | Business KPI delta (e.g., 15% reduction in false positives) |
| Evidence cadence | Quarterly business review | 30/60/90-day automated dashboards with agreed baselines |
| Risk condition | Vendor indemnifies for IP/data breach | Vendor shares performance risk: payment tied to verified impact |
| Commercial trigger | Renewal at contract end | Scale/stop gate at 60 and 90 days; payment adjusts to measured outcome |

This is not theoretical. It’s already happening in pockets:

Zendesk charges $1.50 per AI-resolved ticket — payment directly linked to a measurable outcome. HubSpot uses metric-linked tiers, reporting 31% higher customer retention and 21% higher satisfaction from outcome-aligned pricing. ServiceNow offers an efficiency guarantee. Gartner projected that by 2025, over 30% of enterprise SaaS solutions would incorporate outcome-based components, up from ~15% in 2022.

The credit-model trend reinforces this: 79 of 500 companies in the PricingSaaS Index now offer credit-based pricing, up 126% year-over-year from 35 at end of 2024. Credits give buyers predictability while giving vendors a usage component tied to actual consumption.

The Procurement Process Shift

| Traditional Process | Outcome-Based Process |
|---|---|
| RFP lists features and compliance checkboxes | RFP defines use-case boundary, success metric, evidence requirements |
| Vendor scores on capability claims | Vendor scores on outcome commitment and assumption disclosure |
| Fixed annual license or per-seat pricing | Hybrid: base + outcome-linked variable |
| Renewal at term end | 30/60/90-day performance gates with scale/stop decisions |
| Exit = contract expiration | Exit = failure to meet agreed outcome at any gate |
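
The "base + outcome-linked variable" row can be sketched as a simple invoice calculation. The $1.50 per resolved ticket is the Zendesk figure cited above; the base fee, ticket volume, and optional cap are hypothetical values chosen for illustration, and `hybrid_invoice` is not any vendor's actual billing formula.

```python
def hybrid_invoice(base_fee, outcome_units, price_per_outcome, variable_cap=None):
    """Monthly charge = fixed base + per-outcome variable, optionally capped.

    The cap is an assumption: one way a buyer might keep the variable part
    predictable while still paying only for verified outcomes.
    """
    variable = outcome_units * price_per_outcome
    if variable_cap is not None:
        variable = min(variable, variable_cap)
    return base_fee + variable


# Hypothetical month: $2,000 base, 4,000 AI-resolved tickets at $1.50 each.
print(hybrid_invoice(2_000, 4_000, 1.50))                      # 8000.0
print(hybrid_invoice(2_000, 4_000, 1.50, variable_cap=5_000))  # 7000.0
```

The design point is that the variable term goes to zero when the system resolves nothing, which is the risk-sharing a per-seat license never provides.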

3. What Buyers Should Require

The contract is the governance instrument. If the contract doesn’t enforce accountability, nothing will.

Baseline Agreement

Before any AI engagement begins, buyers and vendors must agree on the current-state baseline. You cannot measure improvement without a documented starting point.

| Baseline Element | Why It Matters |
|---|---|
| Current process performance (error rate, cycle time, cost per unit) | Without this, any vendor can claim improvement |
| Data quality assessment | Model performance is bounded by input quality |
| Integration environment documentation | Prevents “works in lab, fails in production” |
| Existing automation inventory | Identifies overlap and dependency |

30/60/90-Day Performance Gates

| Gate | What’s Measured | Decision |
|---|---|---|
| 30 days | Technical integration + data pipeline validation | Go/adjust/stop |
| 60 days | Model performance against agreed baseline | Scale/stop: first payment trigger |
| 90 days | Business KPI impact vs. stated outcome | Full payment / renegotiate / exit |

Each gate requires:

- Automated evidence generation (not vendor-curated slide decks)

- Pre-agreed success thresholds (not “directionally positive”)

- Named decision owner on buyer side

- Pre-defined exit terms if thresholds are not met
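
The four requirements above can be captured in a small data structure, so that a gate decision becomes a lookup rather than a negotiation. This is a minimal sketch under stated assumptions: the `Gate` class, the metric names, and the 0.99 pipeline threshold are hypothetical; only the "<12%" churn-error target comes from the example contract scope earlier in this article.

```python
import operator
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Gate:
    day: int
    metric: str                               # produced by automated evidence
    threshold: float                          # pre-agreed success threshold
    passes: Callable[[float, float], bool]    # e.g. operator.lt or operator.ge
    decision_owner: str                       # named owner on the buyer side
    on_fail: str                              # pre-defined exit term


def evaluate(gate, measured):
    """Return 'proceed' if the measured value clears the threshold, else the exit term."""
    return "proceed" if gate.passes(measured, gate.threshold) else gate.on_fail


gates = [
    Gate(30, "pipeline_validation_pass_rate", 0.99, operator.ge,
         "Integration architect", "adjust"),
    Gate(90, "churn_prediction_error_pct", 12.0, operator.lt,
         "VP with P&L authority", "exit"),
]

print(evaluate(gates[1], 11.4))  # proceed
print(evaluate(gates[1], 12.0))  # exit
```

Because the comparison operator is part of the gate definition, "directionally positive" has nowhere to hide: a measured 12.0 against a "<12%" target fails.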

Failure Conditions

Contracts must specify what constitutes failure — and what happens when it occurs.

| Failure Condition | Trigger | Consequence |
|---|---|---|
| Model accuracy below threshold for >14 consecutive days | Automated monitoring | Vendor remediation within 7 days or payment suspension |
| Data drift exceeding agreed tolerance | Statistical monitoring | Mandatory retraining at vendor cost |
| Hidden costs exceeding 120% of disclosed TCO | Financial audit | Contract renegotiation right |
| Integration failures causing downstream system issues | Incident tracking | SLA credits + remediation timeline |
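
The first failure condition is easy to automate. The sketch below checks whether accuracy stayed below a threshold for more than 14 consecutive days; the 0.85 threshold and the daily series are hypothetical, with the 92% and 74% values borrowed from the pilot-versus-production example earlier in this article.

```python
def longest_breach_streak(daily_accuracy, threshold):
    """Length of the longest run of consecutive days below the threshold."""
    longest = current = 0
    for value in daily_accuracy:
        current = current + 1 if value < threshold else 0
        longest = max(longest, current)
    return longest


def remediation_triggered(daily_accuracy, threshold, max_days=14):
    """True when accuracy was below threshold for MORE than max_days in a row."""
    return longest_breach_streak(daily_accuracy, threshold) > max_days


# Hypothetical 30-day window: 10 healthy days, then a sustained drop.
window = [0.92] * 10 + [0.74] * 15 + [0.92] * 5

print(longest_breach_streak(window, threshold=0.85))  # 15
print(remediation_triggered(window, threshold=0.85))  # True
```

A single good day resets the streak, so the contract should say whether that is intended or whether a rolling-window rule fits the use case better.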

Named Owners and Accountability

| Role | Buyer Side | Vendor Side |
|---|---|---|
| Outcome owner | VP/Director with P&L authority | Named account executive with escalation authority |
| Technical owner | Integration architect | Solutions architect with deployment access |
| Data owner | Data governance lead | Data engineering lead |
| Decision authority at gates | C-level sponsor | Regional/practice lead |

MIT’s data reinforces this structure: purchased AI solutions from specialized vendors achieve a 67% success rate, versus 33% for internal builds. The difference isn’t just technology — it’s accountability structure. When a vendor has a named owner, a defined deliverable, and a payment gate, performance improves.

Exit Terms

The exit clause is the buyer’s most important instrument. It must be explicit:

- Data portability: buyer owns all training data, fine-tuning outputs, and model artifacts

- Transition period: minimum 90 days with vendor support at no incremental cost

- No lock-in: buyer retains right to deploy competing solution during transition

- Knowledge transfer: documented processes, not tribal knowledge

4. The Public Sector Procurement Problem

Government AI procurement faces the same structural failure, amplified by slower procurement cycles and weaker accountability mechanisms.

US federal agencies committed $5.6 billion to AI between 2022 and 2024. UK government AI contracts reached £573 million by August 2025, exceeding all of 2024. But 60%+ of public servants have experimented with AI while only 35% received any guidance. GenAI use cases inside US agencies jumped nine-fold in twelve months (GAO).

The OMB issued twin memoranda, M-25-21 and M-25-22, in April 2025, binding every civilian department to new AI procurement frameworks. The reforms push for Other Transaction Authority (OTA), Commercial Solutions Openings (CSOs), faster award cycles, and commercially available technology. The EU’s Public Buyers Community published model contractual clauses (MCC-AI) in March 2025, with two risk tiers aligned with the AI Act and a 12-step risk management framework.

But the gap between framework and execution is wide:

| Framework Element | Status |
|---|---|
| OMB AI procurement memos (M-25-21, M-25-22) | Issued April 2025 |
| Common clause libraries | Expected 2026 |
| Minimum vendor evidence standards | In development |
| Outcome-based acquisition language | Pilot phase |
| EU model contractual clauses (MCC-AI) | Published March 2025 |
| State-level AI capacity building | Weakest link (Code for America) |

The public sector is the largest single buyer of enterprise technology. When it shifts to outcome-based procurement, the entire vendor market adjusts.

5. Five Practical Actions

Action 1: Rewrite RFPs Around Outcomes, Not Features

Stop listing feature requirements. Start defining:

- Use-case boundary: What specific process this AI addresses

- Success metric: Quantified business outcome with baseline and target

- Evidence requirement: How success will be measured and by whom

- Assumption disclosure: What the vendor assumes about data, infrastructure, and user behavior

The RFP is the contract’s DNA. If the RFP asks for features, the contract will measure features. If the RFP asks for outcomes, the contract will enforce accountability.

Action 2: Require Vendor Assumption Disclosure

Every AI vendor makes assumptions about the buyer’s data quality, infrastructure readiness, user adoption, and process maturity. These assumptions are rarely documented. When the project fails, the vendor points to “implementation challenges” — which is another way of saying their undisclosed assumptions were wrong.

Require vendors to submit a formal Assumption Register with their proposal:

- Data quality assumptions (completeness, labeling, bias)

- Infrastructure assumptions (compute, latency, integration points)

- User behavior assumptions (adoption rate, workflow changes)

- Timeline assumptions (what must be true at each milestone)

If an assumption proves wrong and the vendor didn’t disclose it, the risk stays with the vendor.
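
An Assumption Register can be as simple as a typed list that both parties sign. The sketch below is one possible shape, not a standard: the class names, keys, and example statements are hypothetical. It encodes the rule above (undisclosed and wrong means vendor risk) and, as an added assumption, defers disclosed assumptions that fail to whatever the contract says.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    DATA_QUALITY = "data quality"
    INFRASTRUCTURE = "infrastructure"
    USER_BEHAVIOR = "user behavior"
    TIMELINE = "timeline"


@dataclass(frozen=True)
class Assumption:
    key: str            # short identifier both parties can cite
    category: Category
    statement: str      # what the vendor assumes to be true


def risk_owner(failed_key, register):
    """Undisclosed assumption that fails: vendor carries the risk.

    Disclosed assumption that fails: handled per contract terms (our assumption;
    the article only specifies the undisclosed case).
    """
    disclosed = {a.key for a in register}
    return "per contract terms" if failed_key in disclosed else "vendor"


register = [
    Assumption("crm-completeness", Category.DATA_QUALITY,
               "CRM records are at least 95% complete"),
    Assumption("adoption-60d", Category.USER_BEHAVIOR,
               "Half of support agents use the tool by day 60"),
]

print(risk_owner("crm-completeness", register))  # per contract terms
print(risk_owner("gpu-latency", register))       # vendor
```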

Action 3: Tie Payment to Verified Impact

| Payment Structure | Feature Model | Outcome Model |
|---|---|---|
| Upfront | 100% at contract signing | 20–30% at contract signing |
| Milestone 1 (30 days) | N/A | 20% upon technical validation |
| Milestone 2 (60 days) | N/A | 25% upon baseline improvement evidence |
| Milestone 3 (90 days) | N/A | 25% upon business KPI target |
| Holdback | None | 10% retained for 180-day sustainability proof |

This is how the 80% of CPOs planning GenAI deployment (EY) should structure their investments. The vendor who is confident in their product will accept outcome-linked terms. The vendor who isn’t will walk away — and that’s the filter working.
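
The outcome-model column translates directly into a payment calculation. One caveat: the listed shares add up to 100% only at the low end of the upfront range, so this sketch assumes 20% at signing; a 30% upfront would require trimming another tranche. The tranche names and the $1,000,000 contract value are hypothetical.

```python
# Shares in percent; assumes 20% upfront so the schedule sums to 100.
SCHEDULE = {
    "signing": 20,
    "day_30_technical_validation": 20,
    "day_60_baseline_improvement": 25,
    "day_90_business_kpi": 25,
    "day_180_sustainability_holdback": 10,
}
assert sum(SCHEDULE.values()) == 100


def payable(contract_value, gates_passed):
    """Amount due so far: signing is always due, other tranches only if their gate passed."""
    unknown = set(gates_passed) - set(SCHEDULE)
    if unknown:
        raise ValueError(f"unknown tranche(s): {sorted(unknown)}")
    due = {"signing"} | set(gates_passed)
    return sum(contract_value * pct / 100 for name, pct in SCHEDULE.items() if name in due)


contract = 1_000_000  # hypothetical contract value in dollars

# Vendor cleared the 30-day gate but missed the 60-day gate.
print(payable(contract, ["day_30_technical_validation"]))  # 400000.0
```

In this scenario the buyer has paid 40% of the contract when the project stops, instead of 100% under the feature model.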

Action 4: Implement 60/90-Day Stop/Scale Gates

The biggest waste in enterprise AI procurement isn’t failed projects. It’s projects that should have been stopped at day 60 but ran for 18 months on momentum, politics, and sunk-cost fallacy.

Gates must be:

- Automatic: triggered by pre-agreed data, not by human judgment calls

- Decisive: the only outcomes are scale, adjust, or stop, with no “let’s give it another quarter”

- Public: results visible to the executive sponsor, not buried in a project team

- Consequential: gate failure triggers contractual remedies, not just a status meeting

Action 5: Track Hidden Costs from Day Zero

Create a Total Cost of Ownership tracker that captures:

- Vendor-disclosed costs (license, support, implementation)

- Consumption costs (API calls, token usage, compute)

- Integration costs (engineering hours, middleware, testing)

- Maintenance costs (retraining, drift monitoring, updates)

- Workforce costs (training, hiring, productivity loss during transition)

- Opportunity costs (what else those resources could have built)

The 85% of organizations that misestimate costs do so because they measure what the vendor charges, not what the project costs. Instrument from day zero.
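
A tracker does not need to be sophisticated to be useful; it needs to exist on day zero. The sketch below records spend against the six categories above and flags when the running total exceeds 120% of the vendor's disclosed TCO, the renegotiation trigger from the failure-conditions table. It reads that trigger as total actual cost versus disclosed TCO, which is an interpretation; the class name and all amounts are hypothetical.

```python
from collections import defaultdict

CATEGORIES = (
    "vendor", "consumption", "integration",
    "maintenance", "workforce", "opportunity",
)


class TcoTracker:
    def __init__(self, disclosed_tco):
        self.disclosed_tco = disclosed_tco
        self.spend = defaultdict(float)

    def record(self, category, amount):
        """Add a cost entry; reject categories outside the agreed list."""
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        self.spend[category] += amount

    def total(self):
        return sum(self.spend.values())

    def overrun_ratio(self):
        """Actual spend as a multiple of the vendor-disclosed TCO."""
        return self.total() / self.disclosed_tco

    def renegotiation_triggered(self, limit=1.20):
        return self.overrun_ratio() > limit


tracker = TcoTracker(disclosed_tco=1_000_000)
tracker.record("vendor", 400_000)
tracker.record("consumption", 500_000)
print(tracker.renegotiation_triggered())  # False
tracker.record("integration", 350_000)
print(tracker.overrun_ratio())            # 1.25
print(tracker.renegotiation_triggered())  # True
```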

6. What to Watch

Standardized outcome clauses. The EU’s MCC-AI is the first formal attempt. OMB’s common clause libraries for AI are expected in 2026. When procurement authorities publish standard outcome-based language, vendor resistance drops — because the clause is no longer negotiable, it’s institutional.

Hybrid pricing consolidation. Expect pricing models to stabilize around hybrid approaches in 2026: base subscription + outcome-linked variable. The 79-company credit model trend (up 126% YoY) is the transitional form.

Boards requiring outcome proof. With 42% of companies scrapping AI initiatives and 95% of pilots failing to show ROI, board-level scrutiny is inevitable. The CFO who approved a $2M AI investment will want evidence, not a vendor presentation. Procurement becomes the enforcement mechanism.

Public sector as market signal. The US, UK, and EU are simultaneously reforming AI procurement frameworks. When the largest buyers demand outcome-based contracts, the vendor market rebuilds around that expectation.

The Bottom Line

The AI procurement model that got enterprises to 2026 — license-plus-hope, seat-based pricing, feature-checked RFPs — produced a 95% pilot failure rate, 42% initiative abandonment, and hidden costs that inflated budgets by 200–400%.

Outcome-based procurement doesn’t guarantee AI success. But it does something more important: it makes failure visible, measurable, and contractually consequential before the organization has spent 18 months and seven figures learning what a better contract would have revealed in 90 days.

The AI vendor who won’t tie payment to outcomes is telling you something about their product. The procurement team that doesn’t require it is telling you something about their process.

Thorsten Meyer is an AI strategy advisor who believes the most important clause in any AI contract isn’t the one about intellectual property — it’s the one that says what happens when the model stops performing at day 91. More at ThorstenMeyerAI.com.

Sources

- MIT — GenAI Divide Study: 95% Pilot Failure Rate (2025)

- S&P Global — 42% AI Initiative Abandonment (2025)

- Constellation Research — 42% Zero ROI on AI Deployments

- Forrester — 15% Positive Profitability Impact

- Xenoss — Total Cost of Ownership for Enterprise AI: 200–400% Inflation

- Zylo — AI Pricing: True Cost for Businesses (2026)

- Lex Data Labs — AI TCO: Hidden Cost of Inference

- EY — 80% CPOs Planning GenAI (Global CPO Survey 2025)

- Gartner — 30%+ SaaS with Outcome-Based Components

- Gartner — 40%+ Agentic AI Projects Fail by 2027

- PricingSaaS — 79 Credit-Model Companies (126% YoY Growth)

- Zendesk — $1.50 Per AI-Resolved Ticket

- HubSpot — 31% Retention + 21% Satisfaction from Outcome Pricing

- OMB — Memoranda M-25-21, M-25-22 (April 2025)

- EU Public Buyers Community — MCC-AI Model Contractual Clauses

- Open Contracting Partnership — Public Sector AI Procurement Shifts

- GAO — Federal GenAI Use Cases (Nine-Fold Increase)

- UK Government — £573M AI Contracts (August 2025)

- Code for America — Government AI Landscape Assessment

- IAPP — EU Model Clauses Practical Guide

- Fortune — MIT Report: 95% GenAI Pilots Failing

- CIO — 2026: The Year AI ROI Gets Real

- Art of Procurement — State of AI in Procurement 2026

- Bessemer Venture Partners — AI Pricing Playbook

- PYMNTS — AI Moves SaaS to Consumption Pricing

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com