By Thorsten Meyer — April 2026

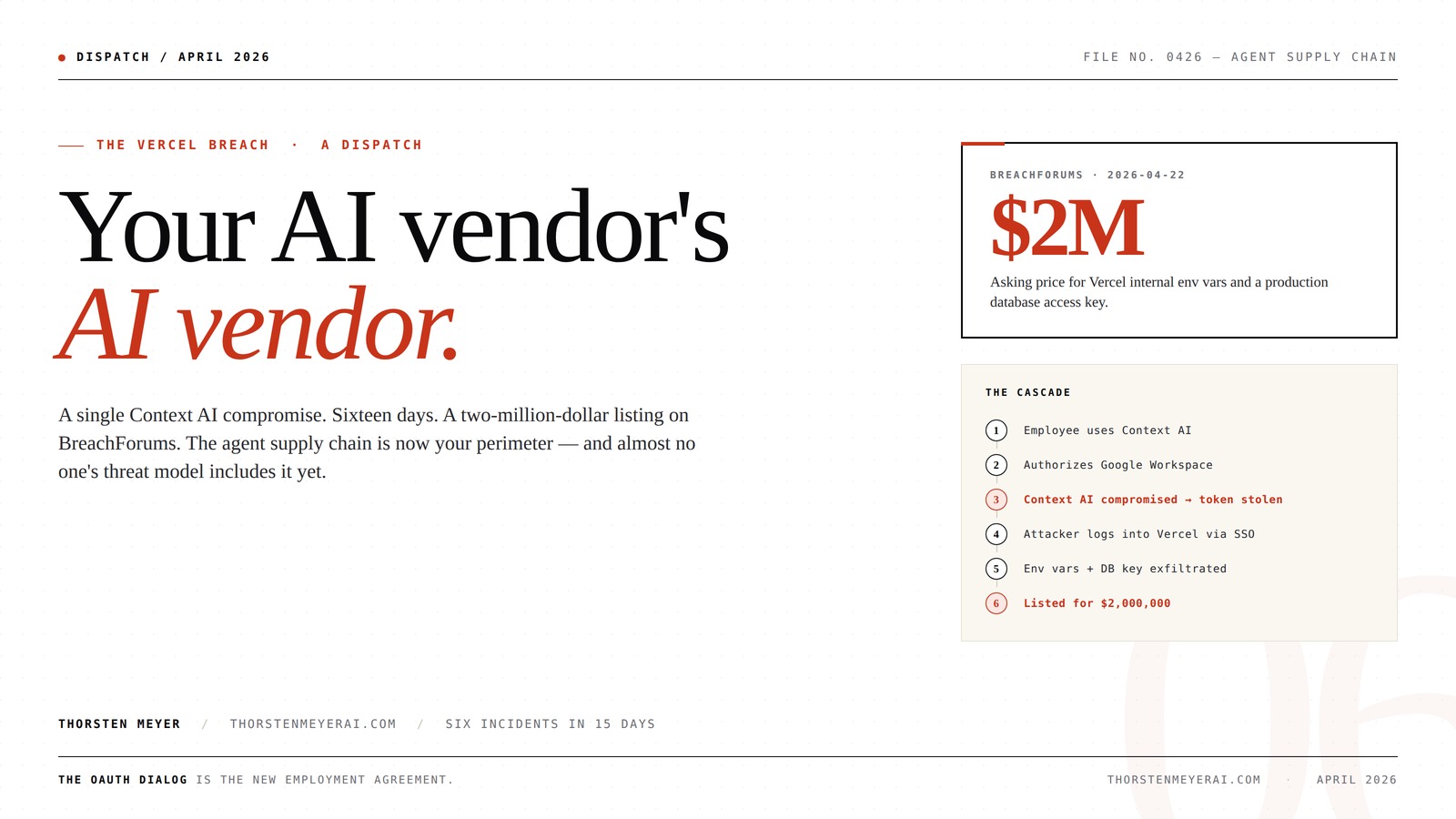


Your AI vendor’s AI vendor.

A single Context AI employee was compromised. Sixteen days later, a Vercel database access key was selling on BreachForums for two million dollars. The agent supply chain is now your perimeter — and almost no one’s threat model includes it yet.

How a Context AI login owned Vercel.

The Vercel employee did not click a phishing link. They did not reuse a password. They authorized a productivity tool — and forgot.

1. A Vercel employee uses Context AI as a productivity tool in their browser.

2. The employee authorizes Context AI against Google Workspace, with Gmail, Drive, and Calendar scopes.

3. Context AI is breached. The attacker now holds the employee’s Workspace token.

4. The attacker logs into the employee’s Vercel account using Google SSO. No password needed.

5. Internal environment variables and a production database access key are taken.

6. The credentials surface on BreachForums for $2,000,000.


Fifteen days. Same shape, different colors.

Every incident has the same structure: an AI tool, library, or model — usually free, usually trusted, usually invisible to the security team — sits between the attacker and the target.

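The six incidents: Mercor via the LiteLLM library, an AI-enabled phishing campaign, leaked HR data from an internal Meta agent, a Delve customer hit by the same supply chain pattern, Vercel via a third-party tool, and exploit code from an open-weight model.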


It isn’t the network. It isn’t the identity. It’s the agent.

Tokens, not sessions.

Employees log in and out. Agents hold OAuth tokens that live for months. Compromise the agent and the employee doesn’t need to be online.

Lateral by design.

Context AI is useful precisely because it can read Gmail, Drive, Calendar, Vercel, GitHub, Linear. The breach radius equals the integration list.

Not in your CMDB.

Most agents enter through one employee approving an OAuth dialog. They appear in no procurement record, no vendor risk register, no SOC 2.

The new question is not did we get breached — it is which of our employees authorized which AI tool to read which production system, and when.


Three questions you can answer in 30 days — or you can’t.

OAuth inventory.

Pull the list of every external OAuth application authorized against Workspace, Microsoft 365, GitHub, and Vercel. How many are AI tools? How many were approved by an employee, not procurement?

AI vendor depth.

For every AI vendor in your stack, identify which AI vendors they use. LiteLLM, OpenRouter, Pinecone, Anthropic, OpenAI. The breach radius is two hops, not one.

Agent kill switches.

If a third-party AI tool is compromised tomorrow, can you revoke every OAuth token your employees granted it within thirty minutes? If the answer is “we’d email everyone,” that is the gap.

In the same fifteen-day window, Mercor — a $10B AI training-data startup — was breached through LiteLLM, an open-source library most of the AI industry uses. An internal Meta AI agent leaked sensitive employee data without an external attacker involved. Microsoft Security flagged a coordinated AI-enabled device-code phishing campaign. Six distinct AI-related security incidents in the fifteen days following April 7.

These are not unrelated. They are the same incident, repeated, in different colors.

The agent supply chain is now your perimeter. And almost no one’s threat model includes it yet.


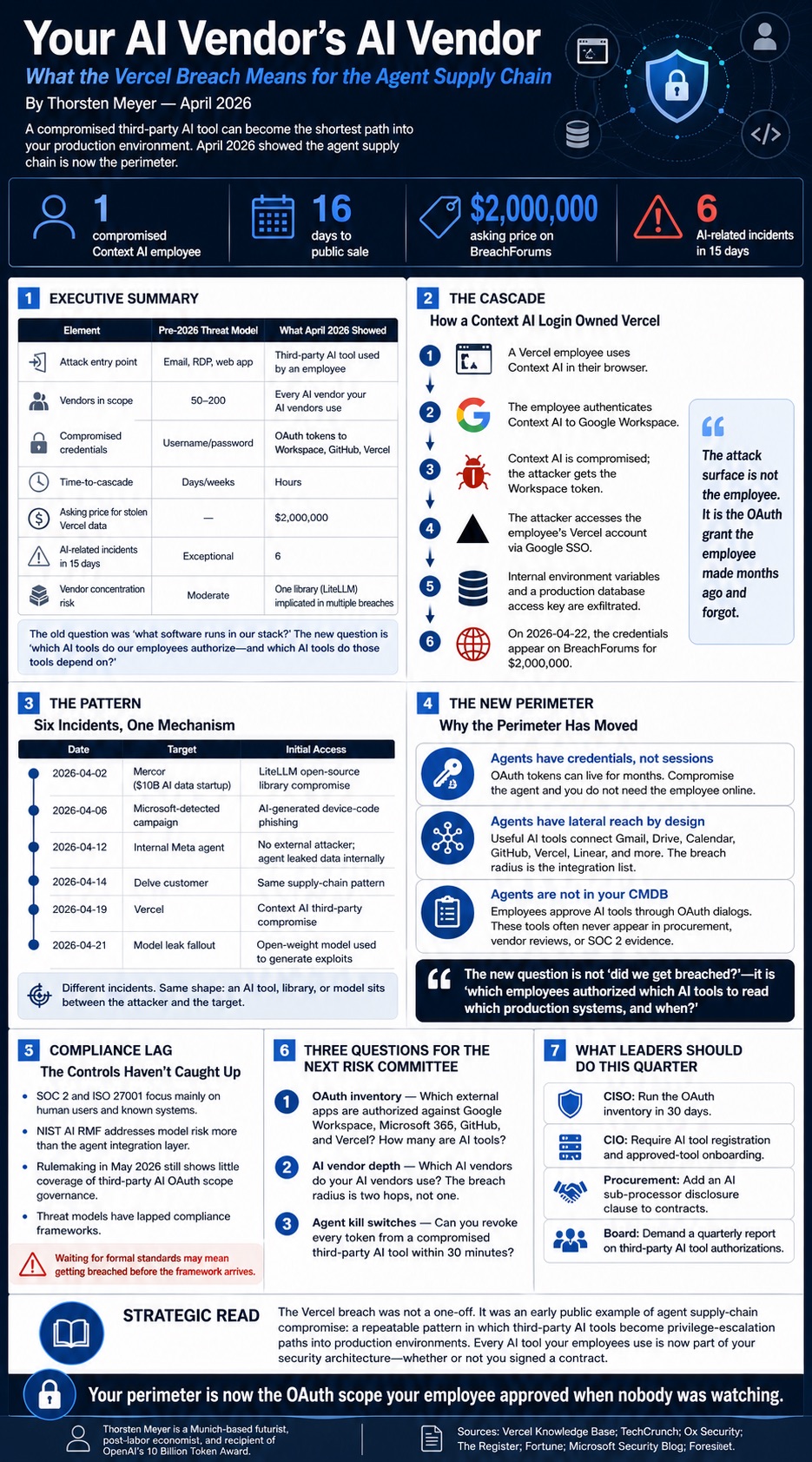

Executive Summary

| Element | Pre-2026 Threat Model | What April 2026 Showed |
|---|---|---|
| Attack entry point | Email, RDP, web app | Third-party AI tool used by an employee |
| Number of vendors in scope | 50–200 | Every AI vendor your AI vendors use |
| Compromised credentials | Username/password | OAuth tokens to Workspace, GitHub, Vercel |
| Time-to-cascade | Days/weeks | Hours |
| Asking price for stolen Vercel data | n/a | $2,000,000 (BreachForums) |
| AI-related incidents in 15 days | Exceptional | 6 |
| Industry vendor concentration risk | Moderate | One library (LiteLLM) implicated in multiple breaches |

The supply chain question used to be: “what software runs in our stack?” That question is now insufficient. The new question is: “which AI tools do my employees plug into their browsers — and which AI tools do those tools plug into?”
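Answering the second half of that question is a graph walk once vendor disclosures are collected. The following is a minimal sketch, assuming you keep a mapping from each AI tool to the AI sub-processors it has disclosed. The vendor names and the `SUBPROCESSORS` data are hypothetical placeholders, not real disclosures.

```python
from collections import deque

# Hypothetical disclosure data: each AI tool mapped to the AI sub-processors
# it says it uses. Names are placeholders, not real vendors.
SUBPROCESSORS: dict[str, list[str]] = {
    "notes-assistant": ["llm-gateway", "vector-db"],
    "meeting-summarizer": ["llm-gateway", "model-api-a"],
    "llm-gateway": ["model-api-a", "model-api-b"],
    "vector-db": [],
}


def exposure_by_hop(direct_tools: list[str],
                    graph: dict[str, list[str]],
                    max_hops: int = 2) -> dict[str, int]:
    """Breadth-first walk returning each reachable vendor and its hop count.

    Hop 1 = tools employees authorized directly; hop 2 = their sub-processors.
    BFS guarantees each vendor is recorded at its shortest hop distance.
    """
    hops: dict[str, int] = {}
    queue = deque((tool, 1) for tool in direct_tools)
    while queue:
        vendor, depth = queue.popleft()
        if vendor in hops or depth > max_hops:
            continue
        hops[vendor] = depth
        for sub in graph.get(vendor, []):
            queue.append((sub, depth + 1))
    return hops
```

With `max_hops=2`, the result covers the tools employees authorized plus every vendor those tools depend on directly. Raising `max_hops` shows how far the chain actually runs.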

1. The Cascade — How a Context AI Login Owned Vercel

The mechanics, as Vercel disclosed on 2026-04-19:

- A Vercel employee uses Context AI, a third-party AI productivity tool, in their browser.

- The employee authenticates Context AI to their Google Workspace.

- Context AI suffers a compromise. The attacker now holds the employee’s Workspace token.

- The attacker logs into the employee’s Vercel account using Google SSO.

- The attacker exfiltrates internal environment variables and a production database access key.

- On 2026-04-22, the credentials appear on BreachForums for $2,000,000.

The Vercel employee did nothing wrong. They did not click a phishing link. They did not reuse a password. They authorized a productivity tool — the kind every developer at every company uses, often without telling IT.

The attack surface is not the employee. It is the OAuth grant the employee made six months ago and forgot.
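A forgotten grant is also the easiest thing to look for. The following is a minimal sketch of a stale-grant audit. The `Grant` record, its field names, and the 90-day idle threshold are assumptions for illustration. Identity providers expose grant metadata differently, and some do not report last-use time at all.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class Grant:
    # Hypothetical record for one employee-to-app OAuth grant.
    user: str
    app: str
    granted_at: datetime
    last_used_at: Optional[datetime] = None  # None if the provider does not report use


def stale_grants(grants: list[Grant],
                 now: Optional[datetime] = None,
                 max_idle: timedelta = timedelta(days=90)) -> list[Grant]:
    """Return grants idle for longer than max_idle, oldest grant first.

    A grant with no recorded use is judged by the date it was granted.
    The 90-day default is an assumed policy, not a standard.
    """
    now = now or datetime.now(timezone.utc)
    flagged = [g for g in grants
               if now - (g.last_used_at or g.granted_at) > max_idle]
    return sorted(flagged, key=lambda g: g.granted_at)
```

Grants that this check flags are candidates for revocation or re-approval, not proof of compromise.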

2. The Pattern — Six Incidents, One Mechanism

The Vercel incident did not occur in isolation. The April 2026 incident catalog logs:

| Date | Target | Initial Access |
|---|---|---|
| 2026-04-02 | Mercor ($10B AI data startup) | LiteLLM open-source library compromise |
| 2026-04-06 | (Microsoft-detected) | AI-generated device-code phishing campaign |
| 2026-04-12 | Internal Meta agent | No external attacker; agent leaked data to the wrong employees |
| 2026-04-14 | Delve customer | Same supply chain pattern |
| 2026-04-19 | Vercel | Context AI third-party compromise |
| 2026-04-21 | (model leak fallout) | Open-weight model used to generate exploits |

Every incident has the same shape: an AI tool, library, or model — usually free, usually trusted, usually invisible to the security team — sits between the attacker and the target.

The teacher-student attack from Rent-and-Distill (February 2026) is a special case. The general case is here: any AI component that processes your data is now a privilege escalation path.
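For the library half of the pattern (the Mercor row in the table above), a low-effort starting point is to find out which AI-stack packages each Python environment actually contains. The following is a minimal sketch using the standard library's `importlib.metadata`. The `WATCHLIST` is a hypothetical example to adapt. It is not a list of packages known to be compromised.

```python
from importlib.metadata import distributions
from typing import Iterable, Optional

# Hypothetical watchlist of AI-stack packages to track; adapt to your own stack.
WATCHLIST = {"litellm", "openai", "anthropic", "pinecone", "weaviate-client"}


def normalize(name: str) -> str:
    """Compare package names case-insensitively, treating -, _ and . alike."""
    return name.lower().replace("_", "-").replace(".", "-")


def ai_components(installed: Optional[Iterable[tuple[str, str]]] = None) -> dict[str, str]:
    """Return {normalized name: version} for installed packages on the watchlist.

    By default this inspects the running interpreter; pass (name, version)
    pairs instead to audit a lockfile or another environment.
    """
    if installed is None:
        installed = ((d.metadata["Name"], d.version) for d in distributions())
    watched = {normalize(w) for w in WATCHLIST}
    return {normalize(name): version
            for name, version in installed
            if name and normalize(name) in watched}
```

This approach only sees direct installs in one environment. Vendored copies and services you call over an API still have to come from vendor disclosures.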

3. The New Perimeter

For two decades, the security perimeter was the network. Then it became the identity. After April 2026, it is the agent.

Three concrete reasons:

Agents have credentials, not sessions. A traditional employee logs in, logs out, locks the screen. An agent holds an OAuth token that lives for months. Compromise the agent and you do not need the employee to be online.

Agents have lateral reach by design. A Context AI tool is useful precisely because it can read your Gmail, Drive, Calendar, Vercel, GitHub, and Linear. The breach radius is the integration list.

Agents are not in your CMDB. Most agents enter the company through a single employee approving a Google OAuth dialog. They do not appear in procurement records. They do not appear in vendor risk assessments. They do not appear in SOC 2.
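The lateral-reach point can be made concrete. A tool's breach radius is the set of systems its granted scopes expose. It also includes every SSO-federated app, if compromising the tool yields a usable identity, as in the Vercel cascade. The following is a minimal sketch. The scope names, the `SCOPE_TO_SYSTEM` mapping, the `carries_identity` flag, and the tool names are all hypothetical inputs that you would fill in from your own identity provider and app inventory.

```python
from dataclasses import dataclass

# Hypothetical mapping from granted scopes to the systems they expose.
SCOPE_TO_SYSTEM = {
    "gmail.readonly": "Gmail",
    "drive.readonly": "Drive",
    "calendar.readonly": "Calendar",
}


@dataclass
class Integration:
    tool: str
    scopes: set[str]
    # Assumption: True if compromising this tool also yields an identity
    # the attacker can use to sign in to SSO-federated apps.
    carries_identity: bool


def breach_radius(integration: Integration, sso_apps: set[str]) -> set[str]:
    """Systems reachable if this one integration is compromised."""
    radius = {SCOPE_TO_SYSTEM.get(s, s) for s in integration.scopes}
    if integration.carries_identity:
        radius |= sso_apps
    return radius


def rank_by_radius(integrations: list[Integration],
                   sso_apps: set[str]) -> list[tuple[str, int]]:
    """Tools ordered by how many systems their compromise would expose."""
    sizes = [(i.tool, len(breach_radius(i, sso_apps))) for i in integrations]
    return sorted(sizes, key=lambda pair: (-pair[1], pair[0]))
```

Ranking by radius size is one simple way to decide which authorizations to review first.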

The new question is not “did we get breached?” — it is “which of our employees authorized which AI tool to read which production system, and when?”
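Finding agents that never reached the CMDB comes down to a set difference: authorized OAuth clients minus the clients that procurement knows about. The following is a minimal sketch, assuming you have already exported grants from each identity provider into (user, client ID, app name) rows. For Google Workspace, the Admin SDK Directory API's tokens resource lists per-user grants with fields such as `clientId` and `displayText`. The `GrantRow` export format and the approved-client register used here are hypothetical.

```python
from collections import defaultdict
from typing import NamedTuple


class GrantRow(NamedTuple):
    # Hypothetical export row: one user's grant to one OAuth client.
    user: str
    client_id: str
    app_name: str


def unmanaged_apps(rows: list[GrantRow],
                   approved_client_ids: set[str]) -> dict[str, list[str]]:
    """Map each app missing from the approved register to the users who granted it."""
    users_by_app: dict[str, set[str]] = defaultdict(set)
    for row in rows:
        if row.client_id not in approved_client_ids:
            users_by_app[f"{row.app_name} ({row.client_id})"].add(row.user)
    # Stable, reviewable output: most widely granted apps first, then by name.
    ordered = sorted(((app, sorted(users)) for app, users in users_by_app.items()),
                     key=lambda item: (-len(item[1]), item[0]))
    return dict(ordered)
```

The output answers "which employees authorized which tool". Deciding which of those tools are AI tools, and what they can read, remains a manual review step.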

4. The Compliance Lag

Regulators are still writing rules for the previous category. SOC 2 Type II audits ask about access management for human users. ISO 27001 maps controls to identifiable systems. NIST AI RMF (January 2023) covers model risk, not the agent integration layer.

The May 2026 rulemaking calendars at NIST and the EU AI Office contain nothing on third-party AI tool authorization scopes. The first proposed standard — anyone’s standard — for AI OAuth scope governance is at least eighteen months out.

The threat model has lapped the compliance regime. Companies waiting for a regulatory framework will be breached before the framework arrives.

5. Three Questions for Your Next Risk Committee

- OAuth inventory. Pull the list of every external OAuth application authorized against Google Workspace, Microsoft 365, GitHub, and Vercel. How many are AI tools? How many were approved by an employee, not procurement?

- AI vendor depth. For every AI vendor in your stack, identify which AI vendors they use. LiteLLM, OpenRouter, Pinecone, Weaviate, Anthropic, OpenAI. The breach radius is two hops, not one.

- Agent kill switches. If a third-party AI tool is compromised tomorrow, can you revoke every OAuth token your employees granted it within thirty minutes? If the answer is “we’d email everyone,” that is the gap.
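The kill-switch question comes down to one loop: for a given OAuth client ID, revoke the grant for every user who holds it, and record what failed. The following is a minimal sketch. The `list_users`, `list_grants`, and `revoke` callables are hypothetical hooks that you would wire to your identity provider. Google Workspace, for example, exposes per-user `tokens.list` and `tokens.delete` in the Admin SDK Directory API, but check your provider's documentation rather than relying on this sketch.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class RevocationReport:
    revoked: list[str] = field(default_factory=list)      # users whose grant was removed
    failed: dict[str, str] = field(default_factory=dict)  # user -> error message
    seconds: float = 0.0                                   # wall-clock duration of the sweep


def kill_switch(client_id: str,
                list_users: Callable[[], Iterable[str]],
                list_grants: Callable[[str], Iterable[str]],
                revoke: Callable[[str, str], None]) -> RevocationReport:
    """Revoke one OAuth client for every user who granted it.

    list_users, list_grants and revoke are hypothetical hooks into your
    identity provider; errors are recorded per user so one failure does
    not stop the sweep.
    """
    report = RevocationReport()
    start = time.monotonic()
    for user in list_users():
        try:
            if client_id in set(list_grants(user)):
                revoke(user, client_id)
                report.revoked.append(user)
        except Exception as exc:
            report.failed[user] = str(exc)
    report.seconds = time.monotonic() - start
    return report
```

Timing a dry run of this kind against a test tenant is one way to check the thirty-minute target before you need it.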

What Leaders Should Do This Quarter

1. CISO: Run the OAuth inventory in 30 days. Anything an employee authorized without procurement approval is unmanaged risk.

2. CIO: Make AI tool registration a condition of employment. New hires get a list of approved tools and a help desk for additions, not blanket discretion.

3. Procurement: Add an “AI sub-processor” clause to every vendor contract. If a vendor uses an AI tool that handles your data, it must be disclosed.

4. Board: Demand a quarterly report on third-party AI tool authorizations. If the report cannot be produced in 30 days, that is the answer.

The Strategic Read

The Vercel breach is not a one-off. It is the first widely publicized case of an attack pattern the security community has been quietly aware of since late 2025: agent supply chain compromise.

The pattern is now public. The exploit is now repeatable. The asking price is set: $2M for one company’s environment variables.

Every AI tool your employees use is now part of your security architecture, whether or not you wrote a contract. The OAuth dialog is the new employment agreement. And no one has read the fine print on either side.

Your perimeter is now the OAuth scope your employee approved when nobody was watching.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Sources

- Vercel Knowledge Base, Vercel April 2026 Security Incident (2026-04-20)

- TechCrunch, App host Vercel says it was hacked and customer data stolen (2026-04-20)

- Ox Security, Vercel Breached via Context AI Supply Chain Attack (2026-04-21)

- The Register, Next.js developer Vercel warns customer creds compromised (2026-04-20)

- Fortune, Mercor, a $10 billion AI startup, confirms major security incident (2026-04-02)

- Microsoft Security Blog, Inside an AI-enabled device code phishing campaign (2026-04-06)

- Foresiet, 6 AI Security Incidents: Full Attack Path Analysis (April 2026) (2026-04-22)