Weights Are the Model.

Everything Else Is Just Infrastructure.

Why the distinction between a model’s parameters and its training harness is one of the most clarifying ideas in AI — and why most people who deploy AI never think about it.

There is a peculiar asymmetry at the heart of modern AI. The system that produces a model — the data pipelines, the loss functions, the distributed compute cluster, the optimiser — is massive, expensive, and temporary. It runs for weeks, costs millions of dollars in cloud spend, and then it stops. What it leaves behind is a file. A large file, sometimes hundreds of gigabytes, full of floating-point numbers. Those numbers are the model. Everything else was the scaffolding.

That file is the weights.

Understanding the difference between weights — the permanent artefact, the thing that actually gets deployed — and the harness — the temporary system that shapes them — is not a technical detail reserved for ML engineers. It is a conceptual clarification that changes how you think about what AI systems are, where the value lives, and why fine-tuning a model looks nothing like training one from scratch.

What a weight actually is

Strip away the metaphors about “neurons” and “brains” and a neural network reduces to a graph of arithmetic operations. You have nodes, arranged in layers, connected by edges. Every edge has a number attached to it. That number is a weight.

When you pass an input through a network — a sentence, an image, a row in a spreadsheet — the signal travels along those edges. At each step, it gets multiplied by the weight on that edge. Then it gets added to every other signal arriving at the same node. Then a non-linear function squashes the result. Then it moves to the next layer. And again, and again, until you reach the output.
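The multiply, sum, squash sequence described above can be sketched in a few lines of plain Python. This is a toy illustration only: the layer sizes, the weight values, and the choice of tanh as the non-linear function are assumptions made for the example, and bias terms are left out because the description above does not mention them.

```python
import math

def forward(x, layers):
    """Run one input vector through a stack of dense layers.

    `layers` is a list of weight matrices; weights[j][i] is the number
    on the edge from input i to node j of that layer.
    """
    activation = x
    for weights in layers:
        next_activation = []
        for node_weights in weights:
            # Multiply each incoming signal by its edge weight, then sum.
            total = sum(w * a for w, a in zip(node_weights, activation))
            # Squash the sum with a non-linear function (tanh, by assumption).
            next_activation.append(math.tanh(total))
        activation = next_activation
    return activation

# Two inputs -> two hidden nodes -> one output node (made-up weights).
toy_layers = [
    [[2.0, 0.0], [-1.0, 0.01]],
    [[1.0, 1.0]],
]
output = forward([0.5, 0.5], toy_layers)
```

Swapping in a different `toy_layers` with the same shape changes the output without changing a single line of `forward`.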

The weights determine how the signal travels. A large positive weight amplifies. A weight near zero suppresses. A negative weight inverts. The pattern of all weights across millions or billions of connections is what encodes everything the model has learned: the grammar of a language, the edge patterns in images, the statistical regularities of code.

This is worth sitting with. GPT-4 and a freshly initialised model with identical architecture are structurally the same. Same layers, same dimensions, same operations. The only difference is the numbers on the edges. Those numbers are everything.

The harness: what builds the weights

The term harness is borrowed from engineering — it refers to a system of constraints and control surfaces that keeps a powerful process pointed in the right direction. A training harness is exactly that: the complete infrastructure that takes a randomly initialised network and systematically adjusts its weights until they encode something useful.

It has several components, each with a distinct job.

The data pipeline

Before a single weight moves, training data has to arrive in a form the network can consume. The pipeline handles shuffling (so the model doesn’t learn the order of examples instead of their content), batching (so gradients are computed over many examples at once, providing stable signal), normalisation, and augmentation. A poor pipeline produces weight values that reflect data artifacts instead of genuine patterns. Garbage in, garbage in the weights.
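A minimal sketch of the shuffling and batching steps, using only the standard library. The function name `iterate_batches`, the toy dataset, and the seed are all hypothetical; a real pipeline would add normalisation, augmentation, and streaming from disk.

```python
import random

def iterate_batches(examples, batch_size, seed):
    """Yield shuffled mini-batches from a list of examples."""
    order = list(range(len(examples)))
    # Shuffle indices so the model never sees a fixed example order.
    random.Random(seed).shuffle(order)
    for start in range(0, len(order), batch_size):
        # The last batch may be smaller than batch_size.
        yield [examples[i] for i in order[start:start + batch_size]]

# Toy dataset of (input, target) pairs, invented for illustration.
dataset = [(float(i), 2.0 * i) for i in range(10)]
batches = list(iterate_batches(dataset, batch_size=4, seed=0))
```

Passing a different seed each epoch gives a fresh order while keeping the run reproducible.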

The loss function

This is the formal definition of “wrong.” The loss function takes the model’s output and the correct answer and produces a single number — the loss — that quantifies their difference. High loss means the current weights produce bad predictions. Low loss means they produce good ones.

The choice of loss function determines what the weights will optimise for. Cross-entropy loss shapes weights toward confident classification. Mean squared error shapes them toward accurate regression. The RLHF reward model used in the post-training of large language models shapes weights toward human-preferred outputs. The weights will become whatever the loss function rewards — which means designing the loss function is, in a real sense, the most consequential design decision in the entire process.
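Two of the losses named above fit in a few lines each. The sketch below assumes the model's output has already been turned into class probabilities for cross-entropy; the probability values and regression numbers are invented for illustration.

```python
import math

def cross_entropy(probabilities, target_index):
    """Negative log-probability the model assigned to the correct class."""
    return -math.log(probabilities[target_index])

def mean_squared_error(predictions, targets):
    """Average squared difference between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

# Same correct class (index 1), two different sets of predictions.
confident_right = cross_entropy([0.05, 0.90, 0.05], target_index=1)
confident_wrong = cross_entropy([0.90, 0.05, 0.05], target_index=1)

regression_loss = mean_squared_error([2.5, 0.0], [3.0, 1.0])
```

Cross-entropy punishes a confident wrong answer far more heavily than it rewards a confident right one, which is part of what pushes weights toward well-placed confidence.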

Alignment researchers spend enormous effort on loss function design precisely because the weights will faithfully optimise for whatever objective they are given. “Reward hacking” — where a model finds unexpected ways to maximise a reward signal — is really just a weight optimisation problem. The weights did exactly what the loss function asked. The loss function asked for the wrong thing.

The optimiser

The optimiser is the mechanism that actually changes the weights. It reads the gradients (partial derivatives that indicate, for each weight, how the loss would change if that weight were nudged), moves each weight in the direction that lowers the loss, and decides how large the update should be.
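For a single weight, the whole idea fits in a few lines. The sketch below estimates the gradient numerically instead of using backpropagation, purely to keep it self-contained; the quadratic loss, the target value of 3.0, and the step size of 0.1 are assumptions chosen for the example.

```python
def loss(weight):
    """Toy loss with its minimum at weight = 3.0."""
    return (weight - 3.0) ** 2

def numerical_gradient(f, weight, h=1e-6):
    """Central-difference estimate of df/dweight."""
    return (f(weight + h) - f(weight - h)) / (2 * h)

weight = 0.0
grad = numerical_gradient(loss, weight)  # negative: raising the weight lowers the loss
updated = weight - 0.1 * grad            # step against the gradient
```

Real harnesses compute exact gradients for every weight at once with automatic differentiation, but the update rule has the same shape.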

Adam, the dominant optimiser in large-scale training, maintains running estimates of two quantities for each weight: the mean of its gradient and the mean of its squared gradient (an uncentred variance). It uses that history to scale each weight's step: weights whose gradients have been noisy get smaller updates; weights with a consistent gradient signal get larger ones. This helps training converge: the optimiser is not moving blindly; it accumulates a statistical picture of how reliable each weight's gradient is and sizes its steps accordingly.
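Here is a sketch of the Adam update for one scalar weight, following the commonly published form of the algorithm. The hyperparameter values are typical defaults used as assumptions, and the two gradient sequences are artificial: one keeps the same sign, the other flips sign every step.

```python
import math

def adam_step(weight, grad, state, lr=0.01, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update to a single scalar weight."""
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad       # running mean of gradients
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad ** 2  # running mean of squared gradients
    m_hat = state["m"] / (1 - beta1 ** state["t"])             # bias correction for early steps
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return weight - lr * m_hat / (math.sqrt(v_hat) + eps)

def run(gradients):
    """Start at weight 0.0 and apply one Adam step per gradient."""
    weight, state = 0.0, {"t": 0, "m": 0.0, "v": 0.0}
    for g in gradients:
        weight = adam_step(weight, g, state)
    return weight

steady = run([1.0] * 20)       # consistent signal
noisy = run([1.0, -1.0] * 10)  # same magnitude, sign flips each step
```

Both sequences have gradients of identical magnitude, yet the weight fed the consistent signal travels much further than the one fed the flip-flopping signal.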

The scheduler

The learning rate — how aggressively the optimiser moves the weights on any given step — is not constant. A scheduler modulates it over the course of training. Warmup phases start with tiny updates, letting the model settle from random initialisation before making large committed moves. Decay phases reduce the learning rate late in training, allowing the weights to converge precisely rather than oscillating around a good solution.

Without a scheduler, training a large model is like navigating a maze by sprinting at full speed: you overshoot every corridor. The scheduler is what allows the weights to find narrow, precise minima in the loss landscape.
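One common shape for such a schedule is linear warmup followed by cosine decay. The sketch below is an assumption about what a scheduler might compute, not a description of any particular library; the peak rate, warmup length, and total step count are made-up values.

```python
import math

def learning_rate(step, peak_lr=3e-4, warmup_steps=100, total_steps=1000):
    """Linear warmup to peak_lr, then cosine decay towards zero."""
    if step < warmup_steps:
        # Tiny updates at first, growing linearly to the peak.
        return peak_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    # Cosine curve from peak_lr down towards zero.
    return 0.5 * peak_lr * (1.0 + math.cos(math.pi * min(1.0, progress)))

schedule = [learning_rate(s) for s in range(1000)]
```

Plotting `schedule` gives a short ramp followed by a long, smooth descent.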

The beautiful asymmetry

Here is the thing that makes this framework genuinely useful for thinking about AI deployment, not just AI training: the harness disappears at inference time.

When you call a model API, there is no loss function running. There is no optimiser. There is no data pipeline. There is only the forward pass — input comes in, it flows through the weights, output comes out. The entire training apparatus is gone. What remains is the weight file and the arithmetic.
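A toy version of that hand-off: the numbers are written to a file, read back, and used for a forward pass, with no loss function or optimiser anywhere in sight. The three weight values, the JSON file format, and the single-node sigmoid model are all assumptions made to keep the example small; real weight files use binary tensor formats.

```python
import json
import math
import os
import tempfile

def predict(weights, x):
    """Forward pass only: weighted sum followed by a sigmoid."""
    total = sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-total))

# Pretend these numbers came out of a finished training run.
trained_weights = [1.5, -2.0, 0.25]

# "Ship" the model: write nothing but the numbers to disk.
path = os.path.join(tempfile.mkdtemp(), "weights.json")
with open(path, "w") as f:
    json.dump(trained_weights, f)

# "Serve" the model: load the numbers and run the arithmetic.
with open(path) as f:
    served_weights = json.load(f)
prediction = predict(served_weights, [1.0, 0.5, 2.0])
```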

This asymmetry explains why fine-tuning is so much cheaper than pre-training. Pre-training must shape weights from random noise into something that encodes language understanding — that requires trillions of tokens and months of compute. Fine-tuning starts with weights that already encode language understanding and makes targeted adjustments to specific behaviours. The starting point is almost all the way there. The harness only has to travel the last mile.
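The last-mile intuition can be seen in a deliberately tiny toy: plain gradient descent on one weight, started once from far away and once from close to the target. This is a one-weight caricature with invented numbers, not a claim about real training curves, where the gap is far larger and has many more causes.

```python
def steps_to_fit(initial_weight, target_weight=3.0, lr=0.1, tolerance=1e-3):
    """Count gradient-descent steps until the weight is within tolerance.

    The loss is (w - target)^2, so the gradient is 2 * (w - target).
    """
    weight, steps = initial_weight, 0
    while abs(weight - target_weight) > tolerance:
        weight -= lr * 2.0 * (weight - target_weight)
        steps += 1
    return steps

from_scratch = steps_to_fit(initial_weight=-50.0)  # stands in for random init
from_pretrained = steps_to_fit(initial_weight=2.9)  # stands in for a good starting point
```

The same harness does less work when the starting weights are already near a good solution.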

It also explains why prompt engineering works. Prompt engineering is not modifying the weights. It is selecting a path through the existing weight structure by choosing inputs that activate the right patterns. The model has already learned everything it knows. You are just navigating it.

What this means for enterprise AI strategy

Most organisations deploying AI in 2026 are not training models from scratch. They are consumers of pre-trained weights — via API, via open-weight download, via model-as-a-service — and their strategic question is not “how do we train a good model” but “how do we use existing weights effectively.”

The weights/harness distinction clarifies this strategy considerably.

If you are building on foundation models via API, you have no access to the weights and no ability to run a harness. Your lever is prompting — navigating the existing weight structure. The ceiling is defined by what those weights already know. The floor is defined by how well your prompts find it.

If you are fine-tuning, you are running a harness against a copy of the weights. The pre-trained weight values represent enormous accumulated value — the knowledge distilled from training on the internet. Your fine-tuning harness is steering that value toward your specific objective without destroying the base capability. This is a delicate operation: a badly designed fine-tuning harness can wash out pre-trained capability. The technical term is catastrophic forgetting. The strategic implication is that your fine-tuning data and loss function design matter enormously.

If you are evaluating open-weight models — LLaMA, Mistral, Qwen — you are acquiring the weight file itself. This gives you something qualitatively different from API access: reproducibility, portability, and the ability to run your own harness against those weights indefinitely. The moat, if any, is not in the weights — which are public — but in the quality of harness you build on top of them and the proprietary data you use to run it.

The underappreciated craft of harness design

The ML research community publishes extensively about architectures and datasets. It publishes less about harness design, perhaps because harness design is messier — more craft than science, more engineering than mathematics. But the practitioners who build the most capable models treat harness design as a primary variable.

What does a sophisticated harness look like in practice?

    # A minimal but complete training harness (PyTorch-style).
    # Assumes model, dataloader, criterion, optimizer, scheduler, num_epochs,
    # log_metrics and checkpoint_if_needed are defined elsewhere.
    from torch.nn.utils import clip_grad_norm_

    step = 0
    for epoch in range(num_epochs):
        for batch in dataloader:                      # pipeline
            optimizer.zero_grad()                     # clear old gradients
            outputs = model(batch.inputs)             # forward pass
            loss = criterion(outputs, batch.targets)  # loss function
            loss.backward()                           # backward pass
            clip_grad_norm_(model.parameters(), 1.0)  # stability
            optimizer.step()                          # weight update
            scheduler.step()                          # lr schedule
            step += 1
            log_metrics(loss, step)                   # observability
            checkpoint_if_needed(step)                # recovery

This looks simple. The sophistication is invisible. The dataloader hides a data pipeline that may involve terabytes of preprocessing and deduplication. The criterion hides a carefully designed loss function, perhaps a composite of cross-entropy, a KL divergence penalty, and a contrastive term. The clip_grad_norm_ call caps the overall gradient norm, a safeguard against exploding gradients that would otherwise produce destabilising weight updates. The checkpoint is what makes recovery possible if a node fails seventeen hours into a training run.
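To show what the clipping step does, here is a plain-Python sketch of clipping by global norm. It mirrors the idea behind clip_grad_norm_ but is not PyTorch's implementation; the function name and the gradient values are hypothetical.

```python
import math

def clip_by_global_norm(gradients, max_norm):
    """Rescale gradients so their combined L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    total_norm = math.sqrt(sum(g * g for g in gradients))
    if total_norm <= max_norm:
        return list(gradients), total_norm
    scale = max_norm / total_norm
    # Every gradient shrinks by the same factor, so direction is preserved.
    return [g * scale for g in gradients], total_norm

clipped, norm_before = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
untouched, _ = clip_by_global_norm([0.3, 0.4], max_norm=1.0)
```

Because all gradients are scaled together, clipping limits the size of the step without changing where it points.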

And none of it ships with the model. The model that gets deployed is the weight file that the checkpoint writes. The harness is scaffolding. It comes down when the building is done.

Where this goes: harnesses for everything

The harness/weights distinction is becoming more, not less, relevant as AI systems mature. Several trends are converging on it.

Post-training is eating pre-training’s lunch. The capability gains in the top frontier models over the last year have come disproportionately from post-training — RLHF, direct preference optimisation, constitutional AI, synthetic data generation pipelines. These are all harness improvements applied to existing weights. The architecture has barely changed. The harness has changed enormously.
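To make one of these concrete: direct preference optimisation computes its loss directly from log-probabilities under the model being trained and under a frozen reference copy. The sketch below follows the commonly stated per-example form of that loss; the beta value and every log-probability are invented for illustration and come from no real model.

```python
import math

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Per-example DPO loss from sequence log-probabilities.

    The margin measures how much more the policy favours the chosen
    response over the rejected one, relative to the reference model.
    """
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # log(1 + exp(-z)) is the same as -log(sigmoid(z)).
    return math.log(1.0 + math.exp(-beta * margin))

# Policy identical to the reference: no preference expressed yet.
indifferent = dpo_loss(-12.0, -12.0, -12.0, -12.0)
# Policy has shifted probability toward the chosen response.
prefers_chosen = dpo_loss(-10.0, -14.0, -12.0, -12.0)
```

The loss falls as the weights shift probability toward the preferred response, which is the whole harness change: same architecture, different objective.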

Fine-tuning is commoditising. Managed fine-tuning services, LoRA and QLoRA making parameter-efficient updates accessible on consumer hardware, the availability of high-quality open weights — all of these lower the barrier to running your own harness. The bottleneck is shifting from “can you access weights” to “can you design a harness that shapes them usefully.”
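The arithmetic behind parameter-efficient updates is easy to check. LoRA freezes a weight matrix and trains two thin matrices whose product forms the update; the sketch below only counts parameters, and the 4096 by 4096 layer size and rank of 8 are assumed values chosen for illustration.

```python
def lora_parameter_counts(d_out, d_in, rank):
    """Compare trainable parameters: full update vs. low-rank update.

    Full fine-tuning trains every entry of a d_out x d_in matrix.
    LoRA trains B (d_out x rank) and A (rank x d_in) instead.
    """
    full = d_out * d_in
    low_rank = d_out * rank + rank * d_in
    return full, low_rank

full, low_rank = lora_parameter_counts(d_out=4096, d_in=4096, rank=8)
fraction = low_rank / full  # share of the layer's parameters that are trained
```

For this one layer the trainable share is well under one percent, which is why the optimiser state and gradients fit on much smaller hardware.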

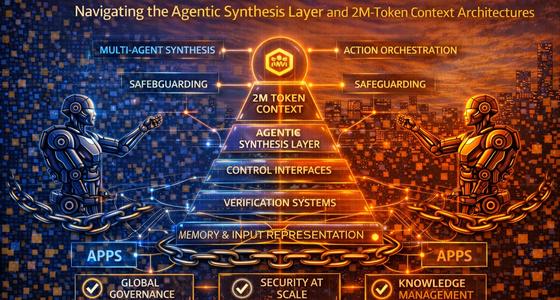

Agentic workflows are building harness-like systems at inference time. Reinforcement learning from interaction, tool-use training, multi-agent feedback loops — these are training-adjacent processes that update weight-adjacent representations in real time. The line between inference and fine-tuning is blurring. What does not blur is the distinction between the thing that learns (the weights, or their functional equivalent) and the system that directs the learning (the harness, or its functional equivalent).

If you are building AI systems, the question to ask is not “which model should I use” but “what is my harness strategy.” The model is the weights. The weights are largely determined by whoever trained them. Your differentiation — your moat, your capability edge, your alignment guarantee — lives in the harness you run against those weights, whether that harness is a fine-tuning pipeline, a sophisticated evaluation loop, or a well-designed RLHF reward model.

The scaffolding is temporary. But scaffolding is what builds cathedrals.