OpenAI feels like the inevitable winner. The products are everywhere, the brand is strong, and the pace of releases is dizzying. But if you zoom out and look at the unit economics, the story gets uncomfortable: when model quality converges and switching costs collapse toward zero, pricing power evaporates. Pair that with infrastructure that looks more like heavy industry than software, and you risk building a utility business while the market prices you like SaaS.

This article breaks down the “no moat” reality, the utility trap, and—most importantly—where value actually accrues as AI becomes ubiquitous. There’s also one shift underway that flips OpenAI from “winner” to “component.” Once you see it, a lot of the current hype cycle starts to look very different.

1) The No‑Moat Reality: When Models Reach Parity and Switching Costs Go to Zero

Here’s the core issue: when there’s no moat, premium pricing and durable margins collapse. If the core product can be swapped like a light bulb, the profit belongs to whoever owns the socket—not the bulb maker. That’s the strategic problem.

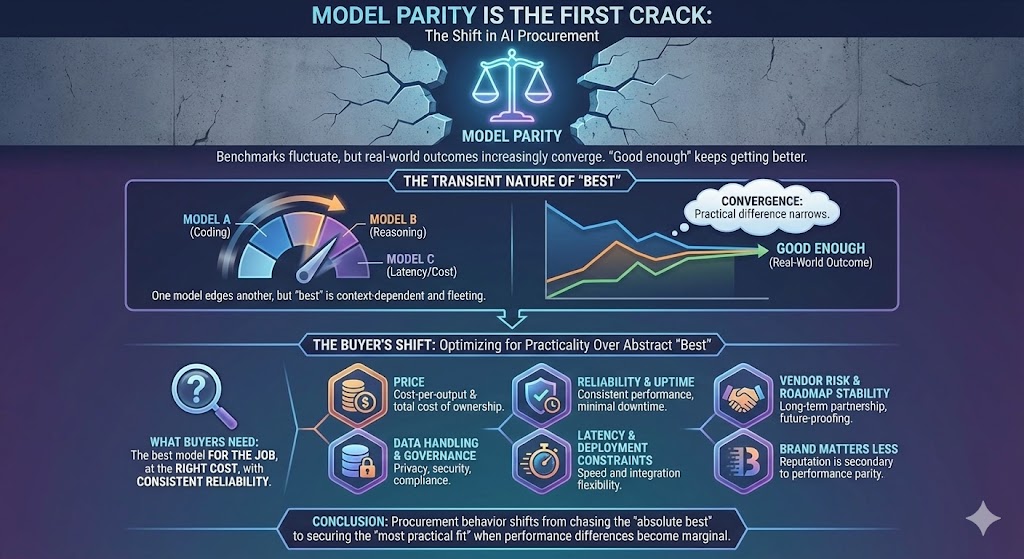

Model parity is the first crack

Benchmarks fluctuate, but real-world outcomes increasingly converge. One model edges out another on coding. Another does better on certain reasoning tasks. A third wins on latency, context handling, or cost-per-output. Meanwhile, “good enough” keeps getting better.

The practical takeaway for buyers is simple: they don’t need the absolute best model in the abstract—they need the best model for the job, at the right cost, with consistent reliability. When “best” is transient and context-dependent, procurement behavior shifts. People optimize for:

- Price

- Reliability and uptime

- Data handling and governance

- Latency and deployment constraints

- Vendor risk and roadmap stability

Brand still matters, but it matters less when performance differences feel situational instead of structural.

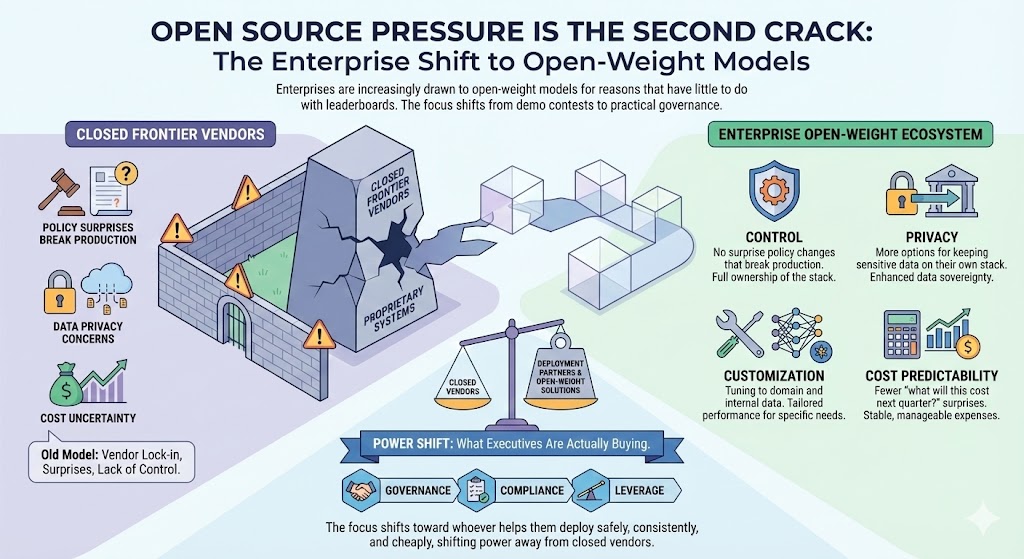

Open source pressure is the second crack

Enterprises are increasingly drawn to open-weight models for reasons that have little to do with leaderboards:

- Control: no surprise policy changes that break production

- Privacy: more options for keeping sensitive data on their own stack

- Customization: tuning to domain and internal data

- Cost predictability: fewer “what will this cost next quarter?” surprises

The thing many people miss is what executives are actually buying. They’re not trying to win a demo contest. They’re buying governance, compliance, and leverage. That shifts power away from closed frontier vendors and toward whoever helps them deploy safely, consistently, and cheaply.

The lock‑in illusion: APIs don’t create switching costs anymore

A common assumption is “if they integrate via API, they’re locked in.” But the opposite is happening.

Model routers, abstraction layers, and standardized interfaces make providers increasingly interchangeable. For a growing number of teams, switching is no longer a rewrite—it’s a configuration change. Route traffic from one provider to another. Run A/B tests. Push low-stakes calls to cheap models and reserve premium calls for high-impact outputs.
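
As a rough illustration of "switching is a configuration change," here is a minimal sketch of an abstraction layer. The provider functions, the `RouterConfig` class, and the names `provider_a` and `provider_b` are hypothetical stand-ins; a real setup would wrap each vendor's SDK behind the same signature.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A provider is modeled as a plain function: prompt in, text out.
# Real SDK calls would live inside these functions; the stubs are hypothetical.
Provider = Callable[[str], str]


def provider_a(prompt: str) -> str:
    return f"[provider_a] {prompt}"


def provider_b(prompt: str) -> str:
    return f"[provider_b] {prompt}"


PROVIDERS: Dict[str, Provider] = {
    "provider_a": provider_a,
    "provider_b": provider_b,
}


@dataclass
class RouterConfig:
    active: str  # name of the provider that currently receives traffic


def complete(prompt: str, config: RouterConfig) -> str:
    """Send the prompt to whichever provider the config names."""
    return PROVIDERS[config.active](prompt)


config = RouterConfig(active="provider_a")
before = complete("Summarize this ticket", config)

# Switching vendors is a one-line config change; the call site is untouched.
config.active = "provider_b"
after = complete("Summarize this ticket", config)

print(before)
print(after)
```

The application only ever calls `complete`, so nothing downstream knows or cares which vendor answered. That indifference is the whole point of the abstraction.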

If you remember nothing else from this section, remember this:

Zero switching costs turn models into commodities.

Distribution owners have the upper hand

Another compounding disadvantage: the biggest players own distribution.

- OS vendors own the interface

- Productivity suites own daily workflows

- Browsers, search, and identity providers own default entry points

- Device makers own the hardware and on-device acceleration roadmap

They can swap underlying models with minimal user-visible disruption because they control the surface area. If you own the customer relationship, you can arbitrage the model layer: use the best tool today, switch tomorrow, and keep the brand experience intact.

OpenAI, in contrast, mostly sells tokens. That’s a tough position when the interface owners can treat models as replaceable suppliers.

A simple mental model: models as interpreters

A useful framing is that foundation models are increasingly becoming standardized components—like interpreters, runtimes, or widely adopted infrastructure layers. Once developers learn the mental model and tooling, they want stability. They will absolutely swap providers to lower cost or risk, but they won’t rebuild their applications every quarter.

That’s why orchestration wins: not because it’s glamorous, but because it’s rational.

A real-world pattern you’ll see everywhere: route “draft” generations to a cheaper model and keep premium calls for final outputs. Same user experience, lower bill, and almost no engineering disruption—because the system was designed to treat models like utilities.
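
To make the draft-versus-final pattern concrete, here is a small sketch of the arithmetic. The tier names, the prices in `PRICE_PER_1K`, and the four-drafts-per-final workload are all assumptions chosen for illustration, not real vendor pricing.

```python
# Hypothetical prices in dollars per 1,000 output tokens; not real vendor pricing.
PRICE_PER_1K = {"cheap": 0.5, "premium": 5.0}


def pick_tier(stage: str) -> str:
    """Drafts go to the cheap tier; only final outputs get the premium tier."""
    return "premium" if stage == "final" else "cheap"


def bill(calls: list[tuple[str, int]], routed: bool) -> float:
    """Total cost for (stage, output_tokens) calls, with or without routing."""
    total = 0.0
    for stage, tokens in calls:
        tier = pick_tier(stage) if routed else "premium"
        total += tokens / 1000 * PRICE_PER_1K[tier]
    return total


# Assumed workload: four drafts for every final output, 1,000 tokens each.
calls = [("draft", 1000)] * 4 + [("final", 1000)]

all_premium = bill(calls, routed=False)  # 5 calls x $5.00 = $25.00
tiered = bill(calls, routed=True)        # 4 x $0.50 + 1 x $5.00 = $7.00
print(f"all premium: ${all_premium:.2f}, tiered: ${tiered:.2f}")
```

Under these made-up numbers the bill drops from $25 to $7 while the final output still comes from the premium tier. The actual savings depend entirely on the real price gap and the draft-to-final ratio.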

The mistake to avoid

Don’t confuse brand loyalty with technical lock-in.

In many software categories, lock-in exists because of file formats, ecosystem dependencies, or contracts embedded in distribution. In model APIs, it’s often JSON in and JSON out. Once you accept that, you realize the moat probably isn’t the model.

So maybe the moat is the business model?

That’s where it gets worse.

2) The Utility Trap: High Capex, Low Margins, Fragile Financing

If the moat isn’t the model, it’s tempting to believe the moat is scale: “We’ll spend more, build bigger, and therefore win.” But that’s the power-plant metaphor—and it comes with power-plant economics.

Think of data centers as plants and tokens as electricity. Utilities spend first and profit later—if ever. Costs arrive upfront, revenue ramps gradually, and margins get competed away as the category matures.

Fixed-cost gravity changes everything

Training runs, GPU clusters, specialized networking, energy, cooling, bandwidth, redundancy—the bills don’t wait for product-market fit. And frontier R&D doesn’t behave like a toggle you can switch off without consequences.

Even if you want to cut burn, some of the cost structure is sticky:

- You’ve already made multi-year commitments

- You’ve built organizations around constant scaling

- You’re in a race where slowing down can be strategically fatal

- Your customers expect a fast cadence of capability improvements

In software, you can often slow spend and preserve margins. In heavy infrastructure, you can’t “pause a plant.” If you stop feeding it, it decays—technically, organizationally, and competitively.

The “too cheap to meter” paradox

Every competitor is incentivized to drive marginal inference cost down. But if your revenue model is metering tokens, falling marginal cost tends to pull price down with it, because rivals pass the savings through to win traffic that can be rerouted at will.

In a routing world, the moment a credible competitor undercuts you, traffic moves. Buyers don’t need to “break up” with your brand—they just shift the workloads that aren’t worth premium pricing.

Over time, that dynamic pushes the category toward something closer to utility pricing:

- Lower margins than software

- More price competition

- Less durability of premium tiers

- A constant need for capex just to stay in the game

Software wants 70–80% gross margins. Utilities are happy with a fraction of that.

The trap: locked-in commitments, floating price per unit

This is the structural bind:

- You commit to compute, chips, and data center scaling based on aggressive growth assumptions.

- Meanwhile, buyers adopt routing, open models improve, and workloads shift to whichever option is cheaper and “good enough.”

- So revenue per unit trends down while amortization clocks keep ticking.

That spread—sticky costs vs. drifting prices—is the utility trap.
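
The bind can be worked through with toy numbers. Every figure below is hypothetical and picked only to make the mechanics visible: a flat fixed-cost commitment, a price per token that falls 30% a year, and volume that grows 30% a year. Variable serving cost is ignored for simplicity.

```python
# All figures are hypothetical, chosen only to make the arithmetic visible.
FIXED_COST_PER_YEAR = 150.0  # committed compute and amortization, in $M
START_PRICE = 10.0           # $ per million tokens in year 0
PRICE_DECLINE = 0.30         # price falls 30% per year
START_VOLUME = 20.0          # trillions of tokens served in year 0
VOLUME_GROWTH = 0.30         # volume grows 30% per year


def operating_result(year: int) -> float:
    """Revenue minus the fixed commitment for a given year, in $M."""
    price = START_PRICE * (1 - PRICE_DECLINE) ** year
    volume = START_VOLUME * (1 + VOLUME_GROWTH) ** year
    # ($ per million tokens) x (trillions of tokens) comes out in $M.
    revenue = price * volume
    return revenue - FIXED_COST_PER_YEAR


results = [operating_result(year) for year in range(5)]
for year, result in enumerate(results):
    print(f"year {year}: {result:+.1f} $M")
```

Even with volume compounding at 30% a year, revenue shrinks by a factor of 0.7 x 1.3 = 0.91 annually, so the result slides from positive to negative while the fixed commitment stays put. Different assumptions would move the crossover point, but the shape of the problem is the same whenever price falls faster than volume grows.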

The financing loop that reinforces the trap

There’s also a narrative-financing pattern that can emerge in frontier categories:

- Promise a large step change to raise at premium valuations.

- Spend heavily on compute and talent to chase the frontier.

- Accumulate fixed obligations that require rapid growth to justify them.

- When growth slows or pricing compresses, promise an even bigger leap to re-ignite capital.

This can work for a while because narratives can outpace near-term unit economics. But it breaks when the cash flows don’t support the commitments.

To be clear: this isn’t an accusation about any specific balance sheet. It’s the category pattern you should watch for when a company sells metered access to compute-intensive capability in a market with collapsing switching costs.

“Partnerships will save them” is not a free pass

Partnerships can absolutely smooth this out—distribution, bundling, subsidized compute, guaranteed demand. But dependence cuts both ways.

If your largest partners are also:

- your infrastructure landlords,

- your largest distribution channels,

- your most powerful buyers,

…then you may not be negotiating from strength when demand softens. When growth stalls, the side with distribution and balance sheet flexibility often gains leverage rather than losing it.

That’s what fragile financing looks like: needing someone else’s throughput, capital, and distribution just to keep the flywheel turning.

Utility endgames are rarely “software win” outcomes

When businesses fall into utility-style economics, endgames tend to rhyme:

- You become an R&D supplier to partners who control distribution and capture most of the value.

- Creditors and contract structures force asset sales or restructuring.

- The business rushes liquidity events while excitement is highest, before compression fully shows up.

None of these are inherently “bad” outcomes. But they are not the monopoly SaaS outcomes people implicitly price in when they talk about an “unstoppable” AI platform.

The investor mistake

The mistake is using an “AGI lottery ticket” to justify commodity economics today.

Even if you believe a breakthrough is possible, you still need a business model that survives a world where models are interchangeable and buyers route around premium pricing. Narratives don’t pay capex.

A practical heuristic: when you see high fixed costs + falling marginal prices + customers with routing power, don’t model it like SaaS. Model it like a grid.

And grids rarely mint monopoly profits.

So if the plant struggles, where does the real value go?

Follow the grid.

3) The Solar Age: Where Value Actually Accrues (Platforms, Hardware, Verticals)

If the model layer commoditizes, value doesn’t disappear. It migrates. Specifically, it moves to the grid and the appliances—the infrastructure owners, distribution owners, and outcome owners.

The shift to the edge is real

NPUs, TPUs, and other custom AI ASICs are pushing capable models onto phones, cars, laptops, and local servers. The enabling mechanism is a pipeline that repeats with each frontier generation:

- Frontier models improve

- They’re distilled into smaller models

- Smaller models run locally with low latency and better privacy

- Those models get fine-tuned on domain or user context

Most tasks don’t require maximum IQ. They require proximity, context, speed, and trust. Once you understand that, it becomes obvious why the edge keeps getting more important.

Who gets paid first: hardware and manufacturing

Hardware providers monetize the entire category because they collect a toll on every run:

- Training

- Inference

- On-device acceleration

- Data center build-out

That’s “toll booth” economics, not “single exit ramp” economics. They don’t need to guess which model vendor wins. They just need the workload to exist.

The landlords: cloud platforms and orchestration

Right behind hardware sits the “real estate” layer:

- managed hosting,

- orchestration,

- reliability,

- identity and access,

- compliance and audit tooling,

- enterprise procurement channels.

Cloud platforms don’t care who wins the model race. They care that workloads run on their land, with their tooling, at their margin.

As models become interchangeable, this layer gets stronger: buyers prefer standardized infrastructure with predictable procurement and governance.

The electricians: integrators and platform teams

Then come the teams wiring AI into real workflows—consultancies, system integrators, and internal AI platform groups.

Their advantage is simple: they turn cheap tokens into expensive outcomes.

- Less risk

- Faster throughput

- Better compliance

- Fewer incidents

- Higher customer satisfaction

- Lower operational cost

They also create compounding leverage: once AI is embedded deeply, each iteration improves the workflow rather than just the model call.

The appliances: vertical applications with measurable outcomes

This is where durable margins tend to live when the “electricity” gets cheap.

Vertical apps can price on outcomes because they own:

- domain expertise,

- proprietary data and feedback loops,

- workflow integration,

- trust and auditability,

- clear ROI metrics.

“Reduce contract review time by 60% with audited trails” is a business.

“Our chatbot writes nicer emails” is a feature.

What OpenAI becomes in this world: a component

In this future, OpenAI can still be extremely important—just not in the way people assume.

A plausible role is “invisible backend component and R&D engine.” Think something like “Intel Inside” economics: essential supplier, but disciplined on price by competition and abstracted by platforms that control the customer relationship.

That’s not doom. It’s just not monopoly software economics.

The OS integration arc makes the model layer swappable

As models stabilize, AI gets pulled into the operating system:

- voice,

- search,

- autocomplete,

- background agents,

- memory,

- safety scaffolding.

The interface keeps the brand. The underlying model becomes replaceable. That’s great for users and for platform owners. It’s challenging for any single model vendor trying to preserve premium pricing as the default.

A builder’s playbook: where to focus if you want a real moat

If you’re building products or investing in the space, here are the moves that compound.

1) Build context

- Own proprietary data

- Integrate deeply into workflows

- Create feedback loops so each interaction improves the next

- Make the system safer and more auditable over time

2) Orchestrate models

- Route by cost-quality tradeoff

- Keep providers interchangeable

- Monitor drift with automated evals

- Treat models as inputs, not the product
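
A minimal sketch of what "route by cost-quality tradeoff" and "monitor drift with automated evals" could look like in practice. The `Candidate` class, the model names, the prices, the eval scores, and the 0.03 tolerance are all assumptions for illustration; the eval score stands in for whatever pass rate a team measures on its own test cases.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    cost_per_1k: float  # hypothetical $ per 1,000 tokens
    eval_score: float   # share of the team's own eval cases passed, 0..1


def cheapest_passing(candidates: list[Candidate], min_score: float) -> Candidate:
    """Pick the lowest-cost model whose eval score clears the quality bar."""
    passing = [c for c in candidates if c.eval_score >= min_score]
    if not passing:
        raise ValueError("no candidate meets the quality bar")
    return min(passing, key=lambda c: c.cost_per_1k)


def has_drifted(baseline: float, latest: float, tolerance: float = 0.03) -> bool:
    """Flag a model whose latest score fell more than `tolerance` below baseline."""
    return baseline - latest > tolerance


candidates = [
    Candidate("model_small", 0.5, 0.82),
    Candidate("model_mid", 2.0, 0.91),
    Candidate("model_large", 8.0, 0.95),
]

choice = cheapest_passing(candidates, min_score=0.90)
print(choice.name)

# After a provider update, rerun the evals and compare with the stored baseline.
drifted = has_drifted(baseline=0.91, latest=0.85)
print(drifted)
```

The design choice worth noting is that the quality bar is defined by the buyer's own evals, not by a public leaderboard. That is what keeps providers interchangeable: any model that clears the bar is eligible, and the cheapest one wins until its scores slip.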

3) Ship vertical value

- Pick a domain

- Define the metric that matters

- Tie pricing to outcomes, not tokens

- Win by trust, measurable ROI, and operational integration

If you remember nothing else, remember this:

Results beat model access.

The mistake to avoid

Don’t build a strategy that depends on “premium frontier access” being your moat.

That’s a rental, not ownership. The moat is data, distribution, process change, and trust. Once you focus on those, the model becomes a detail—not the castle.

Conclusion

OpenAI may be a product leader, but the category dynamics increasingly point toward utility economics at the model layer, while value shifts to hardware, orchestration, and vertical applications that deliver measurable ROI. If you want the context-and-orchestration playbook in more depth, the next step is learning how to build an AI moat without training a model. Subscribe for weekly, no‑hype strategy.