A deliberately pessimistic scenario for Thorsten Meyer AI

Premise: This essay intentionally assumes a more destructive pathway for AI than most forecasts do, not because that pathway is inevitable, but to surface what could break and which guardrails would be needed to prevent it.

1) The Conditions of a Destructive Path

A damaging AI trajectory emerges when four forces align:

- Adoption outruns governance. Organizations deploy generative AI faster than they build quality, liability, and safety systems.

- Cost over craft. Leadership frames AI primarily as headcount reduction; training and quality budgets are cut.

- Technical asymmetry expands. A few players control data, models, and compute; smaller firms and clinics become dependent on them.

- Black‑box comfort. Convenience beats skepticism. Models run with minimal oversight; error metrics stay opaque.

With that lens, here’s what could happen in law and medicine.

As an affiliate, we earn on qualifying purchases.


2) Law: From Pyramid to Diamond—With Cracks

2.1 The devaluation of apprenticeship

As research, drafting, citation checks, and contract review are automated, the traditional on‑ramp for junior lawyers erodes. The firm pyramid morphs into a diamond: a narrow base (fewer entry roles), a bulging middle (AI workflow managers and specialist reviewers), and a thin apex of equity partners. It looks efficient at first—but five to ten years later, the shrunken pipeline produces experience gaps just as today’s juniors should be stepping into complex matters.

2.2 Error cascades and faux authority

Gen‑AI produces fluent, formally polished text—even when the substance is shaky. Under time and price pressure, hallucinations leak into production documents. The smooth surface creates false authority: clients and courts overweight the output. Single errors compound into systemic misjudgments, especially in high‑volume matters (consumer claims, mass debt, templated filings).

2.3 Market concentration and unequal justice

Large firms assemble exclusive data lakes (matters, playbooks, outcomes) and train bespoke models. Regional practices rely on off‑the‑shelf tools and fall behind on price and quality. For the public, AI self‑help grows: cheap and fast, but with murky liability and variable quality. Access to justice becomes polarized: premium human+AI service for those who can pay, “bot justice” for those who cannot.

2.4 Regulatory erosion

If courts require only a generic “AI used” checkbox—without substantive verification pathways (prompt lineage, sources, checks)—responsibility slips into a gray zone. Vendors sidestep unauthorized practice rules by branding products as “education tools.” The result is shadow practice with minimal oversight.

Hypothetical 2030 indicators (destructive scenario):

- 20–30% fewer entry‑level associate positions in large firms; traditional apprenticeship ladders shrink.

- 10–15% higher rates of sanctions/rejections for AI‑related filing defects in early‑adopting courts.

- 2–3 dominant platforms control research and drafting pipelines across the market.


3) Medicine: Productivity at a Price—Deskilling, Alarm Fatigue, Eroding Trust

3.1 Automation complacency

If triage, preliminary reads, and documentation default to AI, trainees unlearn rare‑pattern recognition. Attention shifts from clinical reasoning to system monitoring. Error types change—from obvious misses to subtle AI misinterpretations that are harder to detect and explain.

3.2 Alert floods and over‑diagnosis

Triage models throw false positives. Hospitals respond with more testing, not necessarily better care. Costs climb, patients endure diagnostic odysseys, and teams develop alert fatigue, tuning out warnings—sometimes the critical ones.
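The link between false positives and alert floods is partly base-rate arithmetic. The sketch below applies Bayes' rule to a triage alert; the sensitivity, specificity, and prevalence values are hypothetical numbers chosen only for illustration, not figures from any real model or hospital.

```python
def positive_predictive_value(sensitivity: float, specificity: float,
                              prevalence: float) -> float:
    """Share of raised alerts that are true positives (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    if true_pos + false_pos == 0:
        raise ValueError("no alerts would be raised with these inputs")
    return true_pos / (true_pos + false_pos)


# Hypothetical inputs: a model that looks strong on paper (90% / 90%)
# applied to a condition present in 1% of triaged patients.
ppv = positive_predictive_value(sensitivity=0.90, specificity=0.90,
                                prevalence=0.01)
print(f"Share of alerts that are real: {ppv:.1%}")
```

Under these assumed inputs only about 8% of alerts point to a real case, so roughly eleven of every twelve warnings are false. That is the arithmetic behind teams learning to tune alerts out.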

3.3 Data risk and misuse

More sensors, notes, and images mean more attack surface. Prompt injection, supply‑chain compromise, and model updates without robust change‑control create accountability gaps. Multimodal correlation increases the risk of re‑identifying patients, weakening trust in digital care.

3.4 Shifts in the care landscape

Low‑acuity clinics and rural practices struggle if payers incentivize AI‑first pathways. Tertiary centers gain; the rural gap widens.

Hypothetical 2030 indicators (destructive scenario):

- 15–25% fewer training slots in certain diagnostic specialties; a thinning pipeline.

- A measurable rise in preventable incidents at hospitals that scaled AI aggressively without adequate controls.

- 70–80% of facilities use AI for documentation, yet records remain legally vulnerable (opaque provenance, version chaos).


4) Societal Effects: Faster Systems, Thinner Justice and Care

- Quality polarization. The affluent receive “human‑in‑the‑loop‑plus”; others get bot‑only services.

- Dividends accrue to centers. Data‑ and compute‑rich institutions capture most gains; the periphery loses ground.

- Knowledge decay. With junior work automated away, expertise erodes over years, imposing long‑run costs.

- Systemic fragility. Outages, attacks, or faulty model updates can paralyze legal and care pathways.

- Norm shifts. Insurers and purchasers hard‑code an “AI standard of care” that pushes staffing and budgets down.


5) Early Warning Signs You’re on the Destructive Path

- Job posts replace “Associate/Resident” with “AI Workflow Supervisor”—with no real training plan.

- Zero‑lawyer / doctor‑light offers promise half the cost—while liability remains vague.

- Courts or payers require AI use (“no reimbursement otherwise”).

- Waves of templated documents share identical AI artifacts (repeated phrasing, metadata fingerprints).

- Incident reports cite model drift or prompt injection as root cause—without public corrective protocols.

- Training timelines shrink without compensating simulation or supervision hours.

6) Counter‑Strategy: Ten Hard Guardrails

- Human rights at the point of care/justice. Codify a right to a human second opinion and a right to explanation.

- Auditability by design. Mandatory process logs (prompts, sources, model/version, verification steps) for professional use.

- Clear liability. Presume negligence when required oversight is missing; define accountable chains (vendor ↔ institution ↔ professional).

- Change‑control with an emergency brake. Every model update passes a safety harness (test suites, regressions) and has a rollback path.

- Protect quality budgets. A required “training dividend”: a share of AI savings must fund apprenticeship, supervision, and QA.

- Apprenticeship 2.0. Mandatory simulation cases, graduated autonomy, peer review, and tracked learning curves.

- Open benchmarks & red‑team pools. Shared stress tests on realistic data, with public results.

- Interoperability first. Open interfaces to prevent lock‑in; data portability as default.

- Procurement & insurance leverage. Payers and insurers require provable oversight—or they don’t pay.

- Equitable access. Public base models/compute for courts, public defense, community clinics, and rural care.
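To make the "auditability by design" guardrail concrete, here is a minimal sketch of a process-log entry carrying the fields named above (prompt, sources, model/version, verification steps). The class name `AuditRecord`, the `reviewer` and `timestamp_utc` fields, and all sample values are assumptions made for illustration; the essay does not prescribe a schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import List


@dataclass(frozen=True)
class AuditRecord:
    """One hypothetical log entry for a professional use of an AI tool."""
    prompt: str
    sources: List[str]
    model: str
    model_version: str
    verification_steps: List[str]
    reviewer: str          # assumed field: who signed off
    timestamp_utc: str     # assumed field: ISO 8601 string set by the caller

    def is_reviewable(self) -> bool:
        # An entry without sources or verification steps cannot be audited.
        return bool(self.sources) and bool(self.verification_steps)

    def digest(self) -> str:
        # Stable SHA-256 over the sorted JSON form; any later change to the
        # entry yields a different digest.
        payload = json.dumps(asdict(self), sort_keys=True, ensure_ascii=False)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Illustrative values only.
record = AuditRecord(
    prompt="Summarize the limitation period for claim X.",
    sources=["statute-excerpt.pdf"],
    model="example-model",
    model_version="2025-08-01",
    verification_steps=["citations checked against source by reviewer"],
    reviewer="reviewer-01",
    timestamp_utc="2025-08-15T09:30:00+00:00",
)
altered = replace(record, model_version="2025-09-01")
print(record.is_reviewable(), record.digest() != altered.digest())
```

Storing the digest alongside the filed document is one possible way to show later that the logged model version and checks are the ones actually used. A real deployment would also need retention rules and access control, which this sketch leaves out.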

7) What Different Audiences Should Do Now

Students & early‑career professionals

- Choose AI‑complementary edges: quality assurance, evidence appraisal, ethics & liability, crisis communication, socio‑technical system design.

- Build proof portfolios (simulated cases, audits, incident playbooks), not just certificates.

Practitioners in firms & hospitals

- Define stop rules: when AI must not decide.

- Introduce two‑person checks with documented verification.

- Measure drift, alert precision/recall, and error types; report internally monthly, externally annually.
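For the measurement step, a small sketch of alert precision and recall computed from paired records may help. It assumes each case has a boolean "alert raised" flag and a boolean confirmed outcome; the function name `alert_metrics` and the sample data are hypothetical, and drift tracking is out of scope here.

```python
import math
from typing import Dict, List


def alert_metrics(alerts: List[bool], outcomes: List[bool]) -> Dict[str, float]:
    """Precision and recall of alerts against confirmed outcomes."""
    if len(alerts) != len(outcomes):
        raise ValueError("alerts and outcomes must have the same length")
    tp = sum(1 for a, o in zip(alerts, outcomes) if a and o)
    fp = sum(1 for a, o in zip(alerts, outcomes) if a and not o)
    fn = sum(1 for a, o in zip(alerts, outcomes) if not a and o)
    # NaN marks an undefined ratio (no alerts raised, or no true cases).
    precision = tp / (tp + fp) if (tp + fp) else math.nan
    recall = tp / (tp + fn) if (tp + fn) else math.nan
    return {"precision": precision, "recall": recall,
            "true_pos": tp, "false_pos": fp, "false_neg": fn}


# Made-up month of six cases: four alerts, three real events.
alerts = [True, True, True, False, False, True]
outcomes = [True, False, False, True, False, True]
metrics = alert_metrics(alerts, outcomes)
print(metrics)
```

In this toy sample half of the alerts are correct (precision 0.5) and one of three real events is missed (recall about 0.67). Reporting both numbers together matters because either can be improved at the expense of the other.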

Founders & decision‑makers

- Your moat is a validation moat: deliver test harnesses, monitoring, and governance tooling, not only smarter models.

- Tie pricing to quality guarantees (e.g., refunds for validated errors), or the cheapest wins and quality loses.

- Invest in resilient architecture (fallback modes, offline continuity, cold redundancy).
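One way to read "test harnesses" together with the earlier change-control guardrail is a release gate: a candidate model is promoted only if it passes a regression suite, and otherwise the current model stays in service. The sketch below is a toy under stated assumptions. Models are plain functions from prompt to answer, answers are compared by exact match, and `gated_update` and the sample cases are hypothetical names, not part of any real tool.

```python
from typing import Callable, Sequence, Tuple

Model = Callable[[str], str]


def gated_update(current: Model, candidate: Model,
                 regression_cases: Sequence[Tuple[str, str]],
                 min_pass_rate: float = 1.0) -> Tuple[Model, float]:
    """Return the model to serve and the candidate's pass rate."""
    if not regression_cases:
        raise ValueError("refusing to promote without regression cases")
    passed = sum(1 for prompt, expected in regression_cases
                 if candidate(prompt) == expected)
    pass_rate = passed / len(regression_cases)
    # Keeping `current` is the rollback path: a failed gate changes nothing.
    chosen = candidate if pass_rate >= min_pass_rate else current
    return chosen, pass_rate


def current_model(prompt: str) -> str:
    return prompt.upper()


def broken_candidate(prompt: str) -> str:
    return prompt  # regression: no longer upper-cases


def good_candidate(prompt: str) -> str:
    return prompt.upper()


cases = [("a", "A"), ("b", "B")]
kept, kept_rate = gated_update(current_model, broken_candidate, cases)
promoted, promoted_rate = gated_update(current_model, good_candidate, cases)
print(kept is current_model, promoted is good_candidate)
```

Exact-match comparison is rarely adequate for generative output; in practice the comparison step would be a domain-specific check, and the threshold would be set by whoever carries liability for the result.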

8) Conclusion: Destruction Is Possible—Not Predetermined

AI could make law and medicine faster—and more brittle: through deskilling, quality erosion, inequality, and new systemic risks. That path is avoidable, but only with explicit guardrails, real oversight, and investment in people.

The choice isn’t “human or machine.” It’s architecture:

- With auditability, liability, training, and interoperability, AI becomes an amplifier.

- Without those pillars, it becomes an accelerant of erosion.

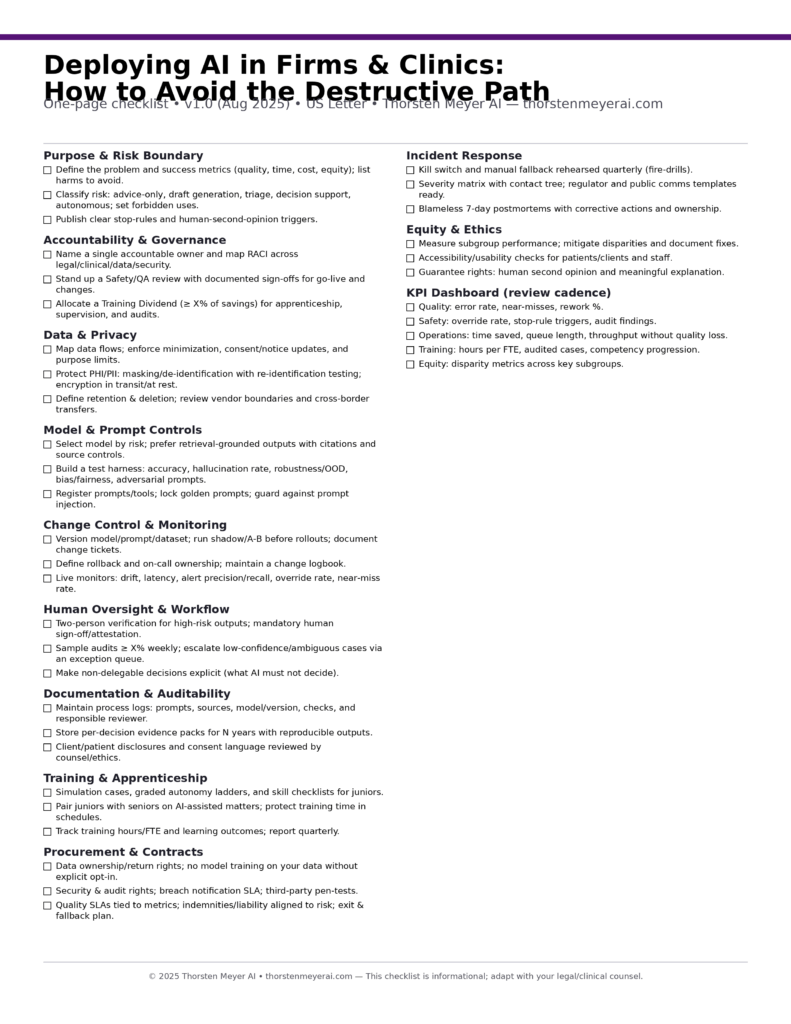

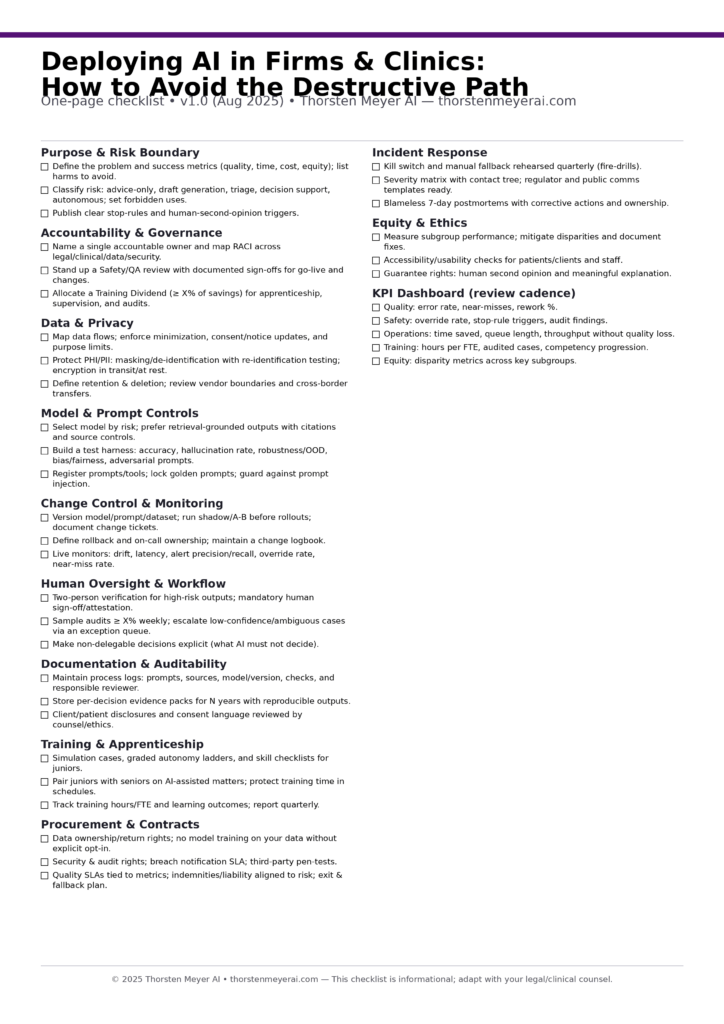

Deploying AI in Firms & Clinics: How to Avoid the Destructive Path

One‑page checklist • v1.0 (Aug 2025)
