By Thorsten Meyer — May 2026

On May 6, 2026 (yesterday), Anthropic announced an agreement with SpaceX to use the entire compute capacity of Colossus 1, the Memphis data center built and operated as flagship infrastructure for Elon Musk’s xAI. More than 300 megawatts. Over 220,000 NVIDIA GPUs. Online within the month. Effective immediately, Claude Code’s five-hour rate limits double for Pro, Max, Team, and seat-based Enterprise plans. Peak-hour limit reductions on Pro and Max accounts are removed entirely. API rate limits for Claude Opus models increase substantially: Tier 1 sees a 1,500 percent increase in maximum input tokens per minute and a 900 percent increase in maximum output tokens per minute. The deal joins existing Anthropic compute commitments: up to 5 GW with Amazon, 5 GW with Google and Broadcom, $30 billion in Microsoft Azure capacity alongside a strategic partnership with NVIDIA, and $50 billion in American AI infrastructure with Fluidstack. The announcement also signals “interest in partnering with SpaceX to develop multiple gigawatts of orbital AI compute capacity.”

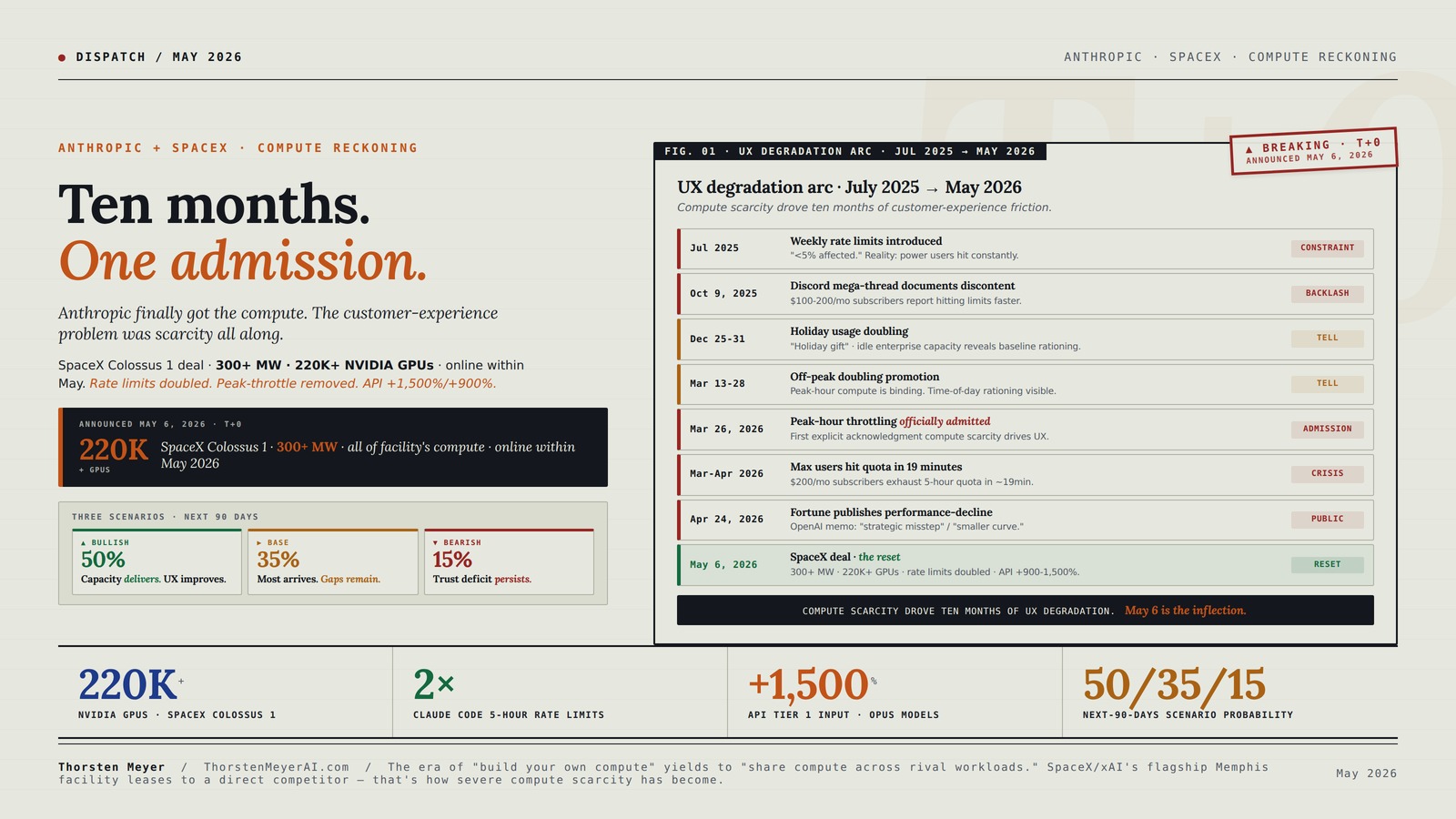

The headline frames this as good news for Claude users. The structural read is more uncomfortable: Anthropic is implicitly admitting that ten months of progressive customer-experience degradation — weekly rate limits introduced July 2025, peak-hour throttling rolled out March 2026, $200/month Max subscribers hitting quota in 19 minutes, the “Claude is dumber on Tuesdays” discourse, repeated outages, the controlled rollout of Mythos to “select large firms” only — was compute-driven. Not strategic product decisions. Not safety positioning. Compute scarcity. Anthropic’s own statement to Fortune in April acknowledged it: “Demand for Claude has grown at an unprecedented rate, and our infrastructure has been stretched to meet it, particularly at peak hours.” OpenAI’s internal memo, leaked to CNBC, characterized it more bluntly — Anthropic had made a “strategic misstep” by failing to secure sufficient compute and was “operating on a meaningfully smaller curve” than competitors.

The May 6 announcement closes that gap aggressively. The SpaceX deal alone (300+ MW, 220K+ GPUs within a month) is approximately equal to the entire H100-equivalent inference fleet a tier-2 hyperscaler ran in 2024. Combined with the broader compute portfolio (Amazon’s nearly 1 GW of new capacity by end of 2026, Google’s 5 GW beginning to come online in 2027, Microsoft’s $30B Azure commitment, Fluidstack’s $50B American AI infrastructure investment), Anthropic moves from “compute-constrained challenger” to “well-resourced frontier lab” in a single quarter. The strategic positioning matters enormously for the Q3-Q4 2026 IPO window covered in the Anthropic IPO disclosure dispatch — the compute risk factor that was prominent in the disclosure framework just got materially de-risked.

This dispatch is the structural read on what the May 6 announcement actually means. It covers the compute scarcity narrative that was visible to attentive users for ten months; the customer-experience consequences and what the rate-limit changes actually deliver; the strategic significance of the SpaceX-xAI/Anthropic rival cooperation; the orbital ambition and what it signals about terrestrial compute constraints; the connections to broader threads from this dispatch series; and the forward implications for Claude product strategy, the Anthropic IPO, and the AI lab competitive dynamic through 2026-2027.

Ten months. One admission.

Anthropic finally got the compute. The customer-experience problem was scarcity all along.

May 6, 2026 — Anthropic announced SpaceX Colossus 1 deal · 300+ MW · 220,000+ NVIDIA GPUs · online within May. Effective immediately: Claude Code 5-hour rate limits doubled. Peak-hour throttling removed. API limits up 1,500% input / 900% output for Opus on Tier 1. Closes ten-month UX degradation arc. Compute risk in IPO disclosure framework materially de-risked.

Nine moments. One constraint.

For ten months, Claude users experienced compute scarcity as broken product. Anthropic experienced it as the binding constraint on growth. May 6 closes the gap — at the announcement level. Verification follows.

Five partnerships. One arms race.

Anthropic now operates the second-largest publicly disclosed compute portfolio of any frontier lab — behind only Microsoft-OpenAI. Multi-vendor by design: Trainium + TPU + NVIDIA + custom · five major partners · multi-jurisdictional.

Three scenarios. Verification follows.

50/35/15 probability allocation. The May 6 announcement either delivers on customer experience improvements or doesn’t. Setup factors favor bullish: SpaceX execution capability, IPO incentive alignment.

Bullish scenario · 50% probability

- Online May 2026: SpaceX capacity as announced.
- UX improvements stick: doubled limits, no peak throttle.
- Trust rebuilds Q3: ARR growth continues.
- IPO Q4 2026 catalyzes: positive market response.
- Outcome: Compute reckoning is start of positive arc.

Base scenario · 35% probability

- Some delay: capacity partial through May.
- Mostly delivers: some peak-period gaps.
- Trust rebuild slower: through Q3-Q4.
- IPO early 2027: pushed if needed.
- Outcome: Continuation trajectory with friction.

Bearish scenario · 15% probability

- Capacity late: or arrives in pieces.
- Partial improvements: issues recur in different form.
- Competitive erosion: OpenAI / Google gain share.
- IPO substantially delayed: or repriced.
- Outcome: Trust deficit compounds. Multi-quarter rebuild.

The era of “build your own compute” yields to “share compute across rival workloads when economics support it.” SpaceX/xAI’s flagship Memphis facility leases to a direct competitor — that’s how severe compute scarcity has become across the AI lab category.

Four assignments. By role.

Claude users · Verify actual delivery vs announced.

Test the doubled rate limits in your workflow. Monitor performance through May-June. Consider whether to retain, upgrade, or cancel based on demonstrated improvement rather than announced improvement. The trust deficit from ten months of degradation requires sustained performance to repair. Anthropic has incentive to deliver — IPO timing depends on it.

API developers · Re-architect for new headroom.

The 1,500% input / 900% output Tier 1 increase is substantial. Scale rate-limit-bottlenecked applications. The structural implication: Anthropic is now competitive with OpenAI on API capacity, narrowing what had been a meaningful OpenAI advantage. Document delivered vs announced capacity in your monitoring.

IPO investors · Update models: compute risk de-risked.

The compute risk factor in the Anthropic IPO disclosure framework is materially de-risked. Q3-Q4 2026 IPO window becomes more credible. Valuation case strengthens — $30B ARR, $400-500B precedent from frontier-lab benchmarks, credible compute portfolio. Position based on demonstrated delivery through Q2-Q3 2026.

NVIDIA / hyperscalers · Direct demand validation for Q1 FY27 print.

220K+ GPUs from SpaceX deal alone. Aggregate NVIDIA-attributable demand from Anthropic’s compute portfolio plausibly $20-40B over 2026-2028. NVIDIA Q1 FY27 dispatch bull case gets concrete numbers. Hyperscaler capex thesis demand-pull validation gets specific evidence. Watch May 20 print for confirmation.

Executive Summary · The Compute Picture in One Table

| Compute commitment | Scale | Timing | Status |
|---|---|---|---|
| SpaceX Colossus 1 (Memphis) | 300+ MW · 220,000+ GPUs | Within May 2026 | Announced May 6, 2026 |
| Amazon (AWS Trainium) | Up to 5 GW; ~1 GW new in 2026 | 2026-2030 | Previously announced |
| Google + Broadcom (TPU) | 5 GW agreement | Begins 2027 | Previously announced |
| Microsoft + NVIDIA (Azure) | $30B Azure capacity | 2026-2028 | Strategic partnership |
| Fluidstack (US AI infra) | $50B investment | 2026-2030 | American AI infra commitment |
| Orbital compute (SpaceX exploration) | Multi-gigawatt aspiration | 2028+ speculative | “Expressed interest” |
| Claude Code 5-hour limits | Doubled Pro/Max/Team/Enterprise | Effective May 6 | Direct user benefit |
| Peak-hour throttling | Removed for Pro/Max | Effective May 6 | Direct user benefit |
| API Tier 1 input tokens/min | +1,500% for Opus models | Effective May 6 | Developer-facing scale |
| API Tier 1 output tokens/min | +900% for Opus models | Effective May 6 | Developer-facing scale |
| Anthropic ARR (Fortune Apr 2026) | $30 billion annualized | Q1 2026 disclosed | 3× growth in 12 months |

The cumulative picture: Anthropic has assembled the second-largest publicly-disclosed compute portfolio of any frontier lab, behind only Microsoft-OpenAI’s combined commitment. The compute deficit that drove ten months of customer experience degradation is structurally addressed. The May 6 announcement is the inflection point where Anthropic transitions from “compute-constrained” to “compute-resourced.” The user-facing rate-limit changes are the immediate proof.
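Two of the table’s figures are easier to reason about after a small conversion. The Python sketch below reads the Tier 1 numbers literally as percentage increases (an interpretation, since the text does not spell out the resulting multiples) and divides the stated 300 MW by 220,000 GPUs to get a rough all-in power budget per GPU. Both facility inputs are stated as lower bounds (“300+”, “220,000+”), so the per-GPU figure is only indicative, and the function names are illustrative.

```python
def multiplier_from_percent_increase(percent_increase: float) -> float:
    """A +P% increase means the new value is (1 + P/100) times the old one."""
    return 1 + percent_increase / 100


def watts_per_gpu(facility_megawatts: float, gpu_count: int) -> float:
    """Facility power divided evenly across GPUs (includes cooling, networking, etc.)."""
    return facility_megawatts * 1_000_000 / gpu_count


input_multiplier = multiplier_from_percent_increase(1500)   # 16.0
output_multiplier = multiplier_from_percent_increase(900)   # 10.0
per_gpu_watts = watts_per_gpu(300, 220_000)                 # about 1,364 W

print(f"Tier 1 input limit: {input_multiplier:.0f}x the prior limit")
print(f"Tier 1 output limit: {output_multiplier:.0f}x the prior limit")
print(f"Implied facility power per GPU: {per_gpu_watts:.0f} W")
```

Read this way, a +1,500% change is a 16-fold limit and a +900% change is a 10-fold limit, which is the scale that matters when resizing rate-limit-bound workloads.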

1. The ten-month customer-experience arc

To understand why the May 6 announcement matters, start with the customer-experience timeline.

July 2025 · Weekly rate limits introduced. Anthropic rolls out new weekly rate limits for Claude Pro and Max subscribers, specifically targeting users running Claude Code continuously in background processes. Company framing: “less than 5 percent of subscribers will be affected based on current usage patterns.” Reality: users running production Claude Code workflows hit the limits constantly. The first signal that compute was binding.

October 9, 2025 · Discord mega-thread documents discontent. A Discord channel thread becomes the running log of user frustration. Subscribers paying $100-200/month for Max plans report hitting limits faster than expected. Anthropic’s response: largely silent through Q4 2025, except for general reassurance that limits were operating as designed.

December 25-31, 2025 · Holiday usage doubling. Anthropic doubles customer usage limits during the Christmas-to-New-Year window. The framing: “holiday gift to customers.” The structural reading: idle compute capacity was available because enterprise customers were on vacation, and Anthropic could afford to release it temporarily. The doubling proved that the baseline limits were demand-rationing, not technical-architecture constraints.

January 4, 2026 · Bug report after holiday revert. Bug report filed claiming heightened token consumption after holiday limits expire. Anthropic dismisses claims of usage reductions as “unfounded” and attributes complaints to “resumption of normal limits.” The Discord thread amplifies user frustration — paying customers are functionally getting a worse product in January than December for the same price.

March 13-28, 2026 · Off-peak doubling promotion. Anthropic temporarily doubles usage limits during off-peak hours through March 28 for Free, Pro, Max, and Team subscribers. The structural admission: peak-hour compute was the binding constraint. Off-peak had spare capacity. The promotion implicitly priced in time-of-day rationing as the compute-management tool.

March 26, 2026 · Anthropic officially admits peak-hour session-limit reductions. Thariq Shihipar (Anthropic technical staff) on X: “To manage growing demand for Claude we’re adjusting our 5 hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. During weekdays between 5am–11am PT / 1pm–7pm GMT, you’ll move through your 5-hour session limits faster than before. We’ve landed a lot of efficiency wins to offset this, but ~7% of users will hit session limits they wouldn’t have before, particularly for pro tier.” The first explicit official acknowledgment that compute scarcity was driving user-experience changes.

Late March-April 2026 · Max subscribers report 19-minute quota exhaustion. Multiple reports surface that $200/month Max subscribers are hitting usage limits in approximately 19 minutes versus the expected 5 hours. Anthropic acknowledges: “We’re aware people are hitting usage limits in Claude Code way faster than expected. We’re actively investigating, and will share more when we have an update.” The suspected cause: a combination of bugs in token tracking and the underlying capacity rationing.

April 24, 2026 · Fortune publishes performance-decline analysis. The full pattern becomes visible: a weeks-long performance decline, outages as usage surged, peak-hour usage caps, and a controlled Mythos rollout limited to “select large firms.” Anthropic statement to Fortune: “Demand for Claude has grown at an unprecedented rate, and our infrastructure has been stretched to meet it, particularly at peak hours… compute is a constraint across the entire industry.”

OpenAI’s parallel narrative. An internal OpenAI memo leaked to CNBC characterized Anthropic as having made a “strategic misstep” by failing to secure sufficient compute and as “operating on a meaningfully smaller curve” than competitors. The framing was self-serving but factually correct: Anthropic had announced fewer multibillion-dollar compute deals than OpenAI through 2024-2025. The catch-up began in Q4 2025 with the Amazon and Google partnerships; the May 6 SpaceX deal completes the pivot.

May 6, 2026 · The reset. SpaceX deal announced. Claude Code rate limits doubled. Peak-hour throttling removed for Pro/Max. API rate limits raised dramatically. The ten-month compute-rationing era ends — at least at the announcement level. Whether the actual user experience matches the announcement remains to be verified through the next 30-60 days as the SpaceX capacity comes online.

The arc is structurally important because it reveals what compute scarcity actually looks like at the customer-experience level, not just at the abstract infrastructure level. Users were experiencing it as broken product. Anthropic was experiencing it as the binding constraint on growth. The May 6 announcement closes the gap.

2. The strategic significance of SpaceX-xAI / Anthropic rival cooperation

The SpaceX deal is structurally important beyond the raw compute numbers. The Memphis Colossus 1 facility was built and operated as xAI’s flagship training infrastructure. Elon Musk’s xAI is one of Anthropic’s direct competitors in the frontier-lab space. The cooperation is unusual.

The mechanics of the deal. Reports indicate Anthropic gets “all of the computing capacity at SpaceX’s Colossus 1 data center.” The framing positions SpaceX (not xAI) as the counterparty, which suggests one of three structures: (1) SpaceX has carved out the data center as a separate compute-leasing entity; (2) the facility was built as SpaceX-owned with xAI as the original anchor tenant, and Anthropic takes over the workload; or (3) some operational separation between the data center infrastructure (SpaceX) and the AI model-training tenant (xAI) allows compute capacity to be sold to a competitor. The exact structure isn’t publicly disclosed. What’s clear: xAI’s exclusive use of Colossus 1 for Grok training has ended or been materially reduced to enable Anthropic’s tenancy.

The Musk-Amodei rapprochement question. Elon Musk’s public commentary on AI safety has been mixed. He’s been a vocal critic of OpenAI (where he was a co-founder), has alleged that OpenAI breached its non-profit mission, and has positioned xAI explicitly against Sam Altman’s leadership. Musk’s relationship with Dario Amodei has been less public but historically less adversarial. The May 6 deal suggests either: (1) the relationship is functional enough to enable major commercial cooperation, (2) the financial terms are attractive enough to override personal/competitive dynamics, or (3) there are non-financial considerations (joint regulatory positioning, joint defense of certain AI policy positions, shared interests in compute scarcity narrative).

The competitive read for xAI. xAI losing exclusive access to its flagship Memphis facility is a strategic concession. xAI gains cash flow from compute leasing (relevant for SpaceX IPO preparation, and arguably for xAI’s own funding), validation of the facility’s scale (“if Anthropic wants all of it, it must be capable enough”), and continued access to SpaceX’s other infrastructure (Colossus 2, additional xAI buildouts). xAI loses training capacity that would have accelerated Grok development. The trade only makes sense if xAI is shifting training to other facilities (Colossus 2 in Memphis, projected at 1.2 GW, plus additional buildouts), or if xAI has determined that the unit economics of compute leasing exceed the strategic value of additional Grok training.

The competitive read for Anthropic. Anthropic gains immediate-term capacity (300+ MW within May), expanded Claude Pro/Max capacity, marketing optionality around the SpaceX brand, and the orbital-compute narrative angle. Anthropic also gains structural validation of its position: a competitor’s flagship facility is leased to it. The cost: financial terms are not disclosed, but with 220K+ GPUs at typical hyperscaler economics, the deal is plausibly $1-3 billion annually (the figure depends heavily on whether Anthropic is buying capacity, leasing it, or running on cost-plus terms).
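To put the $1-3 billion range in per-unit terms, a minimal Python sketch divides the annual figure by the GPU-hours available in a year. It assumes exactly 220,000 GPUs, a 365-day year, and full utilization by default; the `utilization` parameter and the function name are illustrative assumptions, and the actual deal terms are undisclosed.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def implied_cost_per_gpu_hour(
    annual_cost_usd: float, gpu_count: int, utilization: float = 1.0
) -> float:
    """Annual spend spread over the GPU-hours actually used in a year."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return annual_cost_usd / (gpu_count * HOURS_PER_YEAR * utilization)


low_estimate = implied_cost_per_gpu_hour(1e9, 220_000)   # lower end of the range
high_estimate = implied_cost_per_gpu_hour(3e9, 220_000)  # upper end of the range

print(f"Implied cost: ${low_estimate:.2f} to ${high_estimate:.2f} per GPU-hour")
```

Under these assumptions the range works out to roughly $0.52 to $1.56 per GPU-hour; lower utilization raises the effective per-hour cost proportionally.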

The OpenAI counter-positioning question. OpenAI’s internal “strategic misstep” memo just got blown apart. Anthropic now has a credible compute portfolio. The “operating on a meaningfully smaller curve” narrative no longer holds. OpenAI’s response will likely be additional compute commitments through 2026; the already announced $10B DeployCo joint venture with TPG / Bain Capital is one expression. The AI lab compute arms race intensifies, which validates the demand-pull side of the hyperscaler capex thesis.

The cooperation is strategic-rational for both parties despite the rival framing. The deeper read: compute scarcity is so severe across the AI lab category that even direct competitors find shared interest in efficient compute allocation. The era of “build your own compute” is yielding to “share compute across rival workloads when economics support it.”

3. The orbital compute angle

The May 6 announcement included one element that is qualitatively different from the rest: “We have also expressed interest in partnering with SpaceX to develop multiple gigawatts of orbital AI compute capacity.” This is the most strategically interesting paragraph in the announcement.

What “orbital AI compute” actually means. Data centers in space — running on solar power without atmospheric attenuation, dissipating heat through radiation rather than mechanical cooling, connected to terrestrial users via Starlink-class satellite networks. The technical concept has been discussed academically and in early-stage commercial proposals for years. Multiple gigawatts of orbital capacity would be a substantial fraction of total terrestrial AI compute today. The timeline for this is unclear; “expressed interest” is exploratory language, not a committed deployment.

Why orbital compute matters now. The power bottleneck dispatch covered the structural constraint that terrestrial AI deployment faces: power grid availability for new data centers is becoming binding through 2027-2028. Hyperscalers are scrambling for power-rich locations (UAE, Norway, Iceland, Texas with new capacity). Orbital compute bypasses the terrestrial grid entirely. If the technology can be made economical, it provides an end-run around the power constraint that compounds capex-deployment timing.

The SpaceX strategic alignment. SpaceX has unique capability for orbital deployment — Falcon 9 / Starship launch infrastructure, Starlink network connectivity, demonstrated experience with large constellation operations. No other entity globally has the integrated capability stack that orbital data centers require. SpaceX positioning toward orbital compute is consistent with its broader infrastructure ambitions; the Anthropic partnership signals AI demand for the capability.

The realistic timeline. Multi-gigawatt orbital compute is plausibly a 2028-2032 prospect at the earliest. Technical challenges include heat dissipation (currently the fundamental ceiling on space-based compute scale), radiation hardening (chips need protection from cosmic rays without compromising performance), launch economics (even 300+ MW of compute requires substantial mass to orbit), and ground-segment connectivity (latency and bandwidth to terrestrial users). Each challenge is solvable in principle; the integration timeline is unclear. The “expressed interest” framing is appropriately speculative.

The signal value. Even if orbital compute is years away, the announcement signals that Anthropic is thinking strategically about long-horizon compute architecture. The Q3-Q4 2026 IPO window benefits from this positioning — investors evaluating Anthropic want to see strategic foresight on compute scarcity, which is the binding constraint for the entire AI lab category through 2030. The orbital narrative provides forward-looking optionality that competitors haven’t matched.

The orbital element is the most editorially distinctive piece of the May 6 announcement. The terrestrial commitments are large but conventional; the orbital interest is qualitatively different.

4. The connections to other dispatches

The compute reckoning connects to multiple structural threads from this dispatch series.

Connection 1 · The Anthropic IPO disclosure. The dispatch flagged compute risk as a forward-risk factor in the IPO framework. May 6 materially de-risks this. The Q3-Q4 2026 IPO window is now more credible because the compute deficit that would have appeared in S-1 risk factors is structurally addressed. Anthropic’s ARR of $30B (per Fortune, April 2026), combined with a credible compute portfolio, supports a valuation in the $300-500B range that early IPO disclosures suggested.

Connection 2 · The hyperscaler capex thesis. The capex dispatch covered the $725B 2026 commitment and the demand-pull thesis. Anthropic’s compute commitments are direct expressions of demand-pull — the combined $80B+ in compute partnerships (Amazon up to 5 GW, Google 5 GW, Microsoft $30B Azure, Fluidstack $50B, SpaceX undisclosed) represent multi-year contracted demand for hyperscaler infrastructure. The capex demand-pull validation gets material support.

Connection 3 · The bubble question disentanglement. The dispatch flagged frontier-lab valuations as one of three contested middle categories. Anthropic transitioning from “compute-constrained challenger” to “well-resourced frontier lab” supports the durable-value reading for AI lab valuations broadly. Customer experience improvements directly translate to revenue retention and growth, which improves unit economics through 2026-2027.

Connection 4 · The NVIDIA Q1 FY27 earnings preview. The dispatch covered the May 20 print. Anthropic’s commitment to “more than 220,000 NVIDIA GPUs” through SpaceX is a direct revenue signal for NVIDIA. The 5 GW Amazon agreement and 5 GW Google/Broadcom partnership also contain substantial NVIDIA hardware components. The aggregate NVIDIA-attributable demand from Anthropic’s compute portfolio is plausibly $20-40B over 2026-2028, which materially supports NVIDIA’s revenue trajectory.

Connection 5 · The power bottleneck. The dispatch covered the grid-cliff 2027-2028 constraint. The orbital compute interest signals Anthropic’s recognition that terrestrial power constraints are real and binding. The hyperscaler power-positioning thesis (Microsoft UAE+nuclear, Alphabet TPU+SMR, Amazon Trainium for power efficiency, Meta most exposed) gains additional confirmation through Anthropic’s diversification across power-different facilities.

Connection 6 · The agentic loop failure modes. The dispatch covered the technical limitations of current agent deployment. The compute scarcity that drove rate limits also constrained agent deployment economics. The May 6 capacity expansion enables more aggressive agentic deployment — Claude Code 5-hour limits doubled, peak-hour throttling removed, API rate limits up 900-1,500 percent. The economic constraints on agent deployment loosen materially.

Connection 7 · The labor displacement data. The dispatch covered Q1-Q2 2026 displacement patterns. The customer experience improvements for AI tools (Claude Code, Cursor with Claude integration, broader development tooling) accelerate the productivity translation that drives the displacement. Better AI tools at lower friction → faster productivity gains → faster cohort displacement.

Connection 8 · EU AI Act enforcement. The dispatch covered Q3-Q4 2026 enforcement activation. International expansion mentioned in the May 6 announcement (“our recently announced collaboration with Amazon includes additional inference in Asia and Europe”) reflects Anthropic’s positioning for EU regulatory framework. The structural read: Anthropic is preparing for EU compliance with regional infrastructure rather than relying on data export.

The cumulative picture: the May 6 compute reckoning is not an isolated business announcement. It compounds with multiple structural threads to shape the AI lab competitive dynamic, the IPO market for AI labs, the hyperscaler capex demand validation, and the AI deployment economics through 2026-2028.

5. The forward implications

Three structural patterns emerge from the May 6 announcement that affect the AI cycle through 2026-2027.

Pattern 1 · The Anthropic IPO is now more credible. The Anthropic IPO disclosure dispatch flagged compute risk in the forward-risk framework. May 6 closes that gap materially. With $30B ARR and a credible compute portfolio, an Anthropic IPO at a $300-500B valuation in Q3-Q4 2026 is plausible. The “operating on a smaller curve” framing from the OpenAI memo no longer applies. Anthropic’s strategic narrative for IPO investors now centers on a superior product (Claude Code adoption, Mythos cybersecurity capabilities, enterprise penetration), Constitutional AI / RSP framework alignment with the regulatory environment, a multi-vendor compute portfolio (no single-provider dependency), and disciplined capital allocation. The valuation case is materially strengthened.

Pattern 2 · The AI lab compute arms race intensifies. Anthropic’s compute portfolio is now in the same league as OpenAI’s (Microsoft Azure + Stargate + DeployCo) and Google’s (in-house TPU + diversified hardware). Smaller AI labs (Mistral, Aleph Alpha, Cohere, others) face a widening compute gap. The structural implication: the AI lab category bifurcates more sharply into frontier labs with multi-vendor compute portfolios and specialized labs with focused infrastructure access. Mid-tier AI labs face existential pressure unless they identify a defensible specialization (sovereign positioning for European players, vertical-specific models, open-source community building).

Pattern 3 · Customer experience becomes a competitive variable. The ten months of Claude UX degradation cost Anthropic real customer goodwill. Cursor / Replit / Windsurf gained Claude-Code-adjacent share specifically because their platforms managed compute differently. The May 6 reset addresses the immediate friction, but the trust deficit takes longer to rebuild. AI labs that maintain better customer experience through compute scarcity periods gain a durable advantage. The implication: customer experience metrics (rate limit satisfaction, peak-hour reliability, predictable pricing) become first-class competitive variables alongside model capability.

The forward implication for users: the immediate experience improves dramatically (rate limits doubled, peak throttling removed, API limits up 900-1,500 percent). The medium-term experience depends on whether the SpaceX capacity actually comes online as announced, whether efficiency gains continue to absorb usage growth, and whether the broader compute portfolio scales as committed. The May 6 announcement is the inflection point but not the resolution.

6. Three scenarios for the next 90 days

The May 6 announcement either delivers on customer experience improvements or doesn’t. The next 90 days reveal which scenario materializes.

Bullish scenario · 50% probability · “Capacity comes online; UX dramatically improves.” SpaceX Colossus 1 capacity comes online within May 2026 as announced. Claude Code 5-hour limits effectively double. Peak-hour throttling stays removed. API limits actually scale as announced. User community rebuilds trust through Q3 2026. ARR continues growing through Q3-Q4. Anthropic IPO Q4 2026 announcement catalyzes positive market response. Compute reckoning is the start of a positive narrative arc.

Base scenario · 35% probability · “Most capacity arrives; gaps remain.” SpaceX capacity arrives with some delay and is only partially online through May. Most rate-limit improvements deliver as announced. Some users still hit limits during extreme peak periods. Anthropic continues iterating on capacity management. Trust rebuild takes longer, through Q3-Q4 2026. ARR growth continues, but customer churn from the prior period takes time to reverse. IPO timing is pushed to early 2027 if needed.

Bearish scenario · 15% probability · “Implementation gap; trust deficit persists.” SpaceX capacity arrives late or in pieces. Rate limit improvements only partial. Peak-hour issues recur in different form. Customer trust deficit persists. Competitive pressure from OpenAI (DeployCo + Pentagon contracts), Google (Gemini Enterprise momentum), Microsoft (Copilot enterprise expansion) erodes Anthropic’s positioning. ARR growth slows. IPO timing pushed substantially.

The 50/35/15 probability allocation reflects the specifics of the May 6 announcement. Setup factors favoring the bullish scenario: SpaceX has demonstrated execution capability; the rate-limit changes are explicitly tied to capacity delivery; Anthropic has incentive to deliver before IPO. Setup factors favoring the bearish scenario: capacity-buildout always faces execution risk; large-scale data center transitions are operationally complex; the user trust deficit is real and hard to quickly repair.
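As a quick consistency check on the allocation, the following Python sketch encodes the three scenarios, confirms the probabilities sum to one, and adds up the weight on outcomes where the rate-limit improvements mostly or fully deliver (bullish plus base). The `Scenario` class and its field names are illustrative, and the IPO timing strings simply restate the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    probability: float
    ipo_timing: str  # restated from the scenario descriptions, not a forecast


SCENARIOS = [
    Scenario("bullish", 0.50, "Q4 2026"),
    Scenario("base", 0.35, "early 2027 if needed"),
    Scenario("bearish", 0.15, "pushed substantially"),
]

# The three scenarios are meant to be exhaustive, so the weights must sum to 1.
total_probability = sum(s.probability for s in SCENARIOS)
assert abs(total_probability - 1.0) < 1e-9, "allocation must cover all outcomes"

# Bullish and base both describe improvements that mostly or fully deliver.
p_improvements_mostly_deliver = sum(
    s.probability for s in SCENARIOS if s.name in {"bullish", "base"}
)

print(f"P(improvements mostly deliver) = {p_improvements_mostly_deliver:.0%}")
```

On this framing, 85% of the probability mass sits on outcomes where users see most of the announced improvement, while only the bullish 50% keeps the IPO inside the 2026 window.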

7. The strategic implications by stakeholder

The May 6 announcement has direct consequences for five stakeholder groups.

For Claude Pro/Max users. Immediate improvements: doubled Claude Code 5-hour limits, removed peak-hour throttling. Verify the actual delivery over the next 30 days — announcement vs delivery has had gaps in prior Anthropic capacity claims. Consider whether to upgrade or cancel based on demonstrated improvement rather than announced improvement. The trust deficit from ten months of degradation requires sustained performance to repair.

For API developers. Massive increase in Tier 1 API rate limits (1,500 percent input, 900 percent output for Opus). Check the official rate limits documentation for specifics. Re-architect rate-limit-constrained applications to take advantage of new headroom. The structural implication: Anthropic is now more competitive with OpenAI on API capacity, narrowing what had been a meaningful OpenAI advantage.

For Anthropic IPO investors. The compute risk factor is materially de-risked. The Q3-Q4 2026 IPO window becomes more credible. Valuation case strengthens — $30B ARR, $400-500B valuation precedent from prior frontier-lab benchmarks, credible compute portfolio, disciplined capital allocation. Position based on demonstrated delivery of May 6 commitments through Q2-Q3 2026.

For competitive AI labs. Anthropic moves out of the “compute-constrained challenger” frame. OpenAI’s “smaller curve” narrative no longer holds. Smaller labs (Mistral, Aleph Alpha, Cohere) face widening structural disadvantage. Specialization becomes more important — vertical-specific positioning, sovereign positioning, open-source community building, regional regulatory advantages.

For NVIDIA / hyperscalers. Direct demand validation. 220K+ GPUs from the SpaceX deal alone. Aggregate NVIDIA-attributable demand from Anthropic’s compute portfolio is plausibly $20-40B over 2026-2028. The revenue-trajectory bull case in the NVIDIA Q1 FY27 dispatch gets specific evidence. The demand-pull argument in the hyperscaler capex thesis gets concrete numbers.

What to Do This Quarter (Through Q2-Q3 2026)

1. Claude users. Test the improvements. Verify the doubled rate limits actually deliver in your workflow. Monitor performance through May-June. Consider whether to retain, upgrade, or cancel based on demonstrated improvement rather than announced improvement. The trust rebuild requires sustained performance.

2. API developers. Scale your applications to take advantage of new headroom. The 1,500% input / 900% output Tier 1 increase is substantial. Re-architect rate-limit-bottlenecked workflows. Record delivered capacity against announced capacity in your application monitoring.

3. AI lab observers. The compute arms race just intensified. Track OpenAI’s response (additional capacity announcements likely Q2-Q3 2026), Google’s Gemini Enterprise scaling, and smaller-lab specialization moves. The frontier-lab category is bifurcating, and the pattern of that bifurcation matters for the frontier-lab valuation analysis in the bubble-question dispatch.

4. Investors. Update Anthropic IPO models: compute risk is materially de-risked and the valuation case strengthens. Update NVIDIA forward demand models: Anthropic alone adds meaningful demand to the revenue trajectory. Watch for OpenAI counter-announcements through Q2-Q3 2026 as a competitive response.
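For item 2 above, one way to document delivered versus announced capacity is to log token throughput in a sliding one-minute window and compare the observed peak against the limit you expect. The following is a minimal sketch under stated assumptions: `ThroughputLog` and its fields are hypothetical names, `announced_tpm` is whatever figure you take from the rate limits documentation, and the caller is assumed to pass a timestamp and token count for each request. It makes no API calls of its own.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ThroughputLog:
    """Tracks tokens consumed in a sliding window (hypothetical helper)."""

    announced_tpm: int             # assumption: the per-minute limit you expect
    window_seconds: float = 60.0
    peak_tpm: int = 0              # highest window total observed so far
    rate_limited: int = 0          # count of rejected (rate-limited) requests
    _events: deque = field(default_factory=deque)  # (timestamp, tokens) pairs
    _window_tokens: int = 0

    def record(self, timestamp: float, tokens: int, rejected: bool = False) -> None:
        """Record one request; rejected requests only bump the counter."""
        if rejected:
            self.rate_limited += 1
            return
        self._events.append((timestamp, tokens))
        self._window_tokens += tokens
        # Drop events that have aged out of the window.
        cutoff = timestamp - self.window_seconds
        while self._events and self._events[0][0] <= cutoff:
            _, old_tokens = self._events.popleft()
            self._window_tokens -= old_tokens
        self.peak_tpm = max(self.peak_tpm, self._window_tokens)

    def delivered_fraction(self) -> float:
        """Observed peak throughput as a fraction of the announced limit."""
        return self.peak_tpm / self.announced_tpm


if __name__ == "__main__":
    log = ThroughputLog(announced_tpm=1000)
    log.record(0.0, 300)
    log.record(10.0, 400)
    log.record(65.0, 200)                # the t=0 event has aged out by now
    log.record(66.0, 0, rejected=True)   # a rate-limited request
    print(log.peak_tpm, log.rate_limited, log.delivered_fraction())
```

In production the timestamps would typically come from `time.monotonic()`. The observed peak is only a lower bound on delivered capacity: it says something about the real limit only when the application is actually pushing against it, which is why the rejection count is tracked alongside it.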

The Strategic Read

May 6, 2026 — Anthropic announced the SpaceX Colossus 1 partnership: 300+ MW, 220,000+ NVIDIA GPUs online within the month. Combined with Amazon (up to 5 GW), Google + Broadcom (5 GW from 2027), Microsoft + NVIDIA ($30B Azure capacity), Fluidstack ($50B American AI infrastructure), Anthropic now operates the second-largest publicly disclosed compute portfolio of any frontier lab. Effective immediately: Claude Code 5-hour rate limits doubled for Pro/Max/Team/Enterprise. Peak-hour throttling removed for Pro/Max. API rate limits raised 1,500 percent input / 900 percent output for Opus models on Tier 1. Anthropic also “expressed interest” in multi-gigawatt orbital AI compute capacity with SpaceX.

The structural reckoning matters more than the headline. For ten months, from July 2025 through April 2026, Anthropic’s customer experience progressively degraded. Weekly rate limits introduced July 2025. Holiday usage doubling in December 2025 (revealing baseline rationing). Peak-hour throttling officially announced March 26, 2026. $200/month Max subscribers reporting 19-minute quota exhaustion. Outages, performance decline, controlled Mythos rollout to “select large firms” only. Anthropic’s statement to Fortune in April: “Demand for Claude has grown at an unprecedented rate, and our infrastructure has been stretched to meet it.” OpenAI’s leaked internal memo: Anthropic made a “strategic misstep” by failing to secure compute, “operating on a meaningfully smaller curve.” Users were experiencing compute scarcity as a broken product. Anthropic was experiencing it as the binding constraint on growth. May 6 closes the gap.

The SpaceX rival cooperation is structurally significant beyond the compute numbers. Colossus 1 was xAI’s flagship Memphis training facility. Anthropic gets access to all of it. The framing positions SpaceX (not xAI) as counterparty, suggesting operational separation between data center infrastructure and AI training tenant. The Musk-Amodei rapprochement is functional enough to enable major commercial cooperation despite the rival positioning. The deeper read: compute scarcity is so severe that even direct competitors find shared interest in efficient compute allocation. The era of “build your own compute” yields to “share compute across rival workloads when economics support it.”

The orbital compute interest is the most editorially distinctive element. “Multiple gigawatts of orbital AI compute capacity” with SpaceX. The realistic timeline is 2028-2032 at the earliest, given heat dissipation, radiation hardening, launch economics, and ground-segment connectivity challenges. The signal value matters even if deployment is years out: Anthropic is thinking strategically about long-horizon compute architecture, which speaks to the expectation, ahead of the Q3-Q4 2026 IPO window, that frontier labs demonstrate strategic foresight on compute scarcity.

The connections to broader threads run deep. The Anthropic IPO disclosure dispatch flagged compute risk; May 6 materially de-risks it. The demand-pull argument in the hyperscaler capex thesis gets specific evidence: Anthropic’s combined $80B+ compute commitments are direct expressions of contracted hyperscaler demand. The durable-value reading of AI labs in the bubble-question dispatch gains support. The bull case in the NVIDIA Q1 FY27 earnings preview gets concrete numbers: Anthropic alone plausibly contributes $20-40B in NVIDIA-attributable demand over 2026-2028. The power bottleneck thesis gains support from the orbital compute interest, which signals that Anthropic recognizes terrestrial power constraints.

Three scenarios resolve through the next 90 days. Bullish (50%): SpaceX capacity comes online as announced; UX dramatically improves; trust rebuilds; ARR growth continues; IPO Q4 2026 catalyzes positive response. Base (35%): most capacity arrives with some delay; rate-limit improvements mostly deliver; trust rebuild takes longer; IPO possibly pushed to early 2027. Bearish (15%): capacity arrives late; partial improvements; trust deficit persists; competitive pressure erodes positioning; IPO substantially delayed. The probability allocation reflects SpaceX’s demonstrated execution capability against the operational complexity of large-scale data center transitions.

The strategic implications run by stakeholder. Claude users should verify actual delivery against announced delivery before drawing conclusions. API developers should re-architect to take advantage of new headroom. Anthropic IPO investors should update models for a materially de-risked compute factor. Competitive AI labs should expect an intensified compute arms race; smaller labs face a widening structural disadvantage. NVIDIA / hyperscalers get direct demand validation.

The deeper signal: the May 6 announcement is an inflection point in the AI lab competitive dynamic. Anthropic transitions from compute-constrained challenger to well-resourced frontier lab. The customer experience problems that have characterized the past ten months either resolve through Q2-Q3 2026 or persist. The IPO window opens or doesn’t. The orbital compute exploration matures or remains conceptual. Which scenario materializes determines whether the May 6 reset is the start of a sustained positive arc or a temporary catch-up before the structural compute constraints reassert themselves.

The honest assessment: the most likely scenario is the bullish case, in which capacity delivers, UX improves, the IPO proceeds, and the compute arms race intensifies but Anthropic holds its position. The probability of the bearish tail is meaningful but lower. The structural insight is that the customer experience degradation of the past ten months was the visible expression of compute scarcity as the binding constraint. The May 6 announcement is the visible expression of the constraint loosening. Whether the loosening sustains through 2027-2028 depends on whether Anthropic can keep ahead of demand growth, which has tripled ARR in twelve months and shows no signs of slowing.

May 6, 2026: Anthropic announces the SpaceX Colossus 1 deal, with 300+ MW and 220,000+ GPUs online within May. The combined compute portfolio (Amazon up to 5 GW + Google 5 GW + Microsoft $30B Azure + Fluidstack $50B + SpaceX) gives Anthropic the second-largest publicly disclosed compute portfolio in the frontier-lab category. Claude Code rate limits doubled. Peak-hour throttling removed. API limits up 900-1,500%. Closes the ten-month customer-experience degradation arc. The compute risk factor in the Anthropic IPO disclosure framework is materially de-risked. Three scenarios with a 50/35/15 probability allocation. Most likely (bullish) case: capacity delivers, UX improves, IPO Q4 2026 proceeds.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Related Dispatches

- The Anthropic IPO Disclosure Document

- The $725B Hyperscaler Capex Question

- The Bubble Question, Disentangled

- The NVIDIA Q1 FY27 Earnings Preview

- The Power Bottleneck — Grid Cliff 2027-2028

- The Agentic Loop Failure Modes

Sources

- Anthropic · Higher usage limits for Claude and a compute deal with SpaceX · May 6, 2026 — primary announcement

- Yahoo Finance · Anthropic signs SpaceX compute deal, raises Claude usage limits · May 6, 2026

- CNBC · Anthropic, SpaceX announce compute deal that includes space development · May 6, 2026

- The Decoder · Anthropic taps SpaceX’s Colossus-1 data center for 220,000 GPUs · May 6, 2026

- 9to5Google · Claude Code is getting higher usage limits, doubled for most users · May 6, 2026

- DataCenter Dynamics · Anthropic to use all of SpaceX-xAI’s Colossus 1 data center compute · May 6, 2026

- Fortune · Anthropic explains Claude Code’s recent performance decline after weeks of user backlash · April 24, 2026

- The Register · Anthropic admits Claude Code quotas running out too fast · March 31, 2026

- The Register · Claude devs complain about surprise usage limits · January 5, 2026

- TechRadar · Claude is limiting usage more aggressively during peak hours · March 27, 2026

- MacRumors · Claude Code Users Report Rapid Rate Limit Drain · March 26, 2026

- DevOps.com · Developers Using Anthropic Claude Code Hit by Token Drain Crisis · April 1, 2026

- PYMNTS · Claude Users Hit a New Reality of AI Rationing · March 25, 2026

- J.D. Hodges · Claude AI Usage Limits: What Changed in 2026 · March 2026

- XDA Developers · I almost ditched Claude over its brutal rate limits · April 3, 2026

- Anthropic · Up to 5 GW agreement with Amazon

- Anthropic · 5 GW agreement with Google and Broadcom

- Anthropic · Strategic partnership with Microsoft and NVIDIA · $30B Azure capacity

- Anthropic · $50 billion investment with Fluidstack in American AI infrastructure

- Anthropic · Commitment to cover consumer electricity price increases caused by US data centers

- OpenAI internal memo (CNBC) · “strategic misstep” / “meaningfully smaller curve” framing

- Anthropic ARR · $30 billion annualized run rate per Fortune Apr 2026 · 3× growth in 12 months