By Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

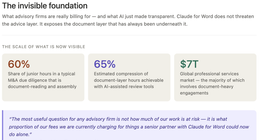

While the AI discourse fixates on model benchmarks and chatbot features, the most consequential shift is happening in the operational basement. On February 10, 2026, IBM launched a new FlashSystem portfolio (the 5600, 7600, and 9600) positioned as “autonomous storage” with agentic AI as co-administrator. FlashSystem.ai, trained on tens of billions of telemetry data points, makes thousands of operational decisions per day, and IBM claims it reduces manual storage management by up to 90%. Whether that number holds in heterogeneous production environments is an open question. What is not in question is the framing: IBM is pitching autonomous control, not a chatbot, a dashboard, or a copilot.

This is the infrastructure layer telling the market that AI is moving from the interface to the control plane: from assisting humans in producing outputs to autonomously maintaining service continuity, security posture, and cost optimization. Gartner predicts 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025. By 2029, 70% of enterprises will deploy agentic AI in IT infrastructure operations.

The productivity context reinforces why this matters, and why execution, not access, is the differentiator. OECD data shows labour productivity growth across advanced economies averaged just 0.4% in 2024. The euro area recorded –0.9% in 2023, the steepest drop since 2009. The United States managed 1.6% in 2023. This is not the profile of an AI-driven productivity boom. The macro signal says that digitization is not automatically translating into output gains. The opportunity is therefore execution-specific: enterprises that institutionalize autonomous workflows with governance discipline may capture a real productivity differential. Those that remain at pilot stage likely will not, and Dynatrace’s global survey says ~50% of agentic AI projects are still in POC or pilot.

| Metric | Value |
|---|---|
| IBM FlashSystem.ai operational decisions | Thousands per day |
| Manual storage management reduction claimed | Up to 90% |
| Enterprise apps with AI agents by end 2026 (Gartner) | 40% (from <5% in 2025) |
| Enterprises deploying agentic AI in IT ops by 2029 | 70% (from <5% in 2025) |
| OECD average labour productivity growth (2024) | 0.4% |
| Euro area productivity (2023) | –0.9% (worst since 2009) |
| Agentic AI projects still in POC/pilot (Dynatrace) | ~50% |
| Organizations with 2–10 agentic AI initiatives | 72% |
| Top barrier to production: security/privacy | 52% of leaders |
| AIOps market (2024) | $14.6B, projected $36B by 2030 |

This article examines what IBM’s announcement signals about the structural shift from copilots to control planes, why the OECD productivity baseline should discipline strategy, where autonomous operations have strong evidence of return, and what enterprise and public leaders should do now.

1. The Overnight Signal: Infrastructure Is Becoming Agentic

IBM FlashSystem: The Concrete Case

The strongest short-cycle signal came from enterprise infrastructure, not frontier labs. IBM’s new FlashSystem portfolio inserts AI agents into three operational layers:

| Operational Layer | What FlashSystem.ai Does | Strategic Significance |
|---|---|---|
| Runtime control | Autonomous storage tiering, performance optimization, capacity planning | AI makes operational decisions without human initiation |
| Cyber resilience | Threat detection, anomaly identification, recovery orchestration | AI tied to security posture, not just efficiency |
| Cost optimization | Continuous workload placement, data efficiency (40% improvement claimed) | AI optimizes economics continuously, not episodically |

The numbers: 40% greater data efficiency versus the previous generation, a 30–75% storage footprint reduction depending on model, and up to 90% less manual management. General availability is set for March 6, 2026.

Uncertainty note: These claims are vendor-reported from controlled environments. Independent validation in heterogeneous production — mixed workloads, multi-vendor integrations, legacy data — should be expected to yield lower numbers. The directional signal, not the exact percentage, is what matters for strategy.

Why This Isn’t Just a Product Launch

IBM is not unique. It’s representative. The pattern repeats across infrastructure vendors: agentic AI embedded not in user interfaces but in operational control paths. The CNCF’s 2026 forecast identifies four pillars of platform control for autonomous enterprise operations: golden paths, guardrails, safety nets, and manual review workflows. These aren’t chatbot features. They’re control-plane architecture.

The industry signal: observability is becoming the system of record for AI operations. Dynatrace, Datadog, and New Relic are all positioning their platforms as the governance layer for autonomous agents — the mechanism through which enterprises see, audit, and constrain what agents do in production.

“IBM didn’t announce a storage product. It announced a thesis: that the most valuable AI deployment isn’t a chatbot answering questions. It’s an agent making thousands of infrastructure decisions per day that no human would have time to make — and most wouldn’t know to make.”

2. From Tools to Control Planes: The Governance Consequence

What Changes When AI Enters the Operational Core

Most governance programs were designed for generative use cases: content risk, hallucination, IP exposure, privacy leakage. Those are real risks. They are also interface-layer risks — the AI assists a human, the human reviews the output, the human acts. The accountability chain is intact.

Autonomous infrastructure introduces a fundamentally different class of risk because it breaks the human-in-the-loop assumption that underpins most governance frameworks.

| Risk Class | Description | Why Traditional Controls Fail |
|---|---|---|
| Control-plane risk | AI agents with privileged operational rights; errors propagate faster than human escalation | ITIL/ITSM assume human approval gates |
| Recovery-path opacity | AI “recommended remediation” becomes black box during incidents | Incident response playbooks assume deterministic logic |
| Single-vendor dependence | Agentic functions embedded in proprietary stacks; lock-in deepens | Procurement assumed modular, swappable components |
| Audit mismatch | Agent decisions can only be audited with event-level observability | SOX/ITGC assume deterministic workflows and human approvals |
| Cascading autonomy | One agent’s output triggers another agent’s action across systems | No monitoring framework covers agent-to-agent chains |
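One way to make agent-to-agent chains observable is to have every agent event carry the identifier of the event that triggered it, so the chain can be reconstructed after the fact. The sketch below is a minimal illustration of that idea, not a description of any vendor's implementation. `AgentEvent`, `parent_id`, `causal_chain`, and the agent names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class AgentEvent:
    """One recorded agent action (hypothetical schema, for illustration only)."""
    event_id: str
    agent: str
    action: str
    parent_id: Optional[str] = None  # event that triggered this one, if any


def causal_chain(events: Dict[str, AgentEvent], event_id: str) -> List[AgentEvent]:
    """Follow parent links from an event back to its root; return root-first."""
    chain: List[AgentEvent] = []
    seen = set()  # guards against accidental cycles in the log
    current = events.get(event_id)
    while current is not None and current.event_id not in seen:
        seen.add(current.event_id)
        chain.append(current)
        current = events.get(current.parent_id) if current.parent_id else None
    chain.reverse()
    return chain


# Illustrative log: a storage agent's action triggers a security agent,
# which in turn triggers a cost agent.
log = {
    e.event_id: e
    for e in [
        AgentEvent("e1", "storage-agent", "migrate volume to cold tier"),
        AgentEvent("e2", "security-agent", "flag unusual access pattern", parent_id="e1"),
        AgentEvent("e3", "cost-agent", "pause workload placement", parent_id="e2"),
    ]
}

for step in causal_chain(log, "e3"):
    print(f"{step.agent}: {step.action}")
```

The design choice here is that causality is recorded at write time rather than inferred later from timestamps, which is what makes a cross-system chain auditable.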

The Governance Gap in Numbers

Dynatrace’s 2026 global survey of 919 senior leaders at enterprises with $100M+ revenue reveals the gap:

- 52% cite security, privacy, or compliance as the top barrier to production agentic AI

- 51% cite technical challenges in managing and monitoring agents at scale

- 44% cite shortage of skilled staff

- Only 13% run fully autonomous agents; 64% use supervised-plus-autonomous hybrid models

- Only 23% have reached mature, enterprise-wide integration

The pattern: enterprises are deploying agentic AI widely but governing it narrowly. While 72% run 2–10 agentic AI initiatives, the governance architecture for autonomous operations (event-level observability, policy attestation, rollback mechanisms) lags deployment by 12–18 months in most organizations.

Callout: The governance gap for autonomous operations is not a policy gap — most enterprises have AI policies. It’s an architecture gap. Policy says “AI should be governed.” Architecture determines whether governance is operationally enforceable in real time.

3. The OECD Productivity Baseline: A Sobriety Check

Why the Macro Numbers Should Discipline Strategy

If AI were already delivering broad productivity gains, the OECD numbers would show it. They don’t.

| Economy | GDP per Hour Worked | Period | Trend |
|---|---|---|---|
| United States | ~$97 USD PPP | 2023 | +1.6% YoY |
| Germany | ~$98 USD PPP | 2024 provisional | Stagnant |
| Euro area average | n/a | 2023 | –0.9% (worst since 2009) |
| OECD average | n/a | 2024 | +0.4% |
| OECD Asian countries | n/a | 2024 | +1.8% (best performer) |

Labour productivity growth of 0.4% across OECD countries in 2024 is not a productivity revolution. The euro area’s –0.9% in 2023 — the steepest decline since 2009 — occurred precisely during the period when generative AI was being deployed at scale across European enterprises. Labour hoarding (firms retaining employees despite weak demand) explains part of the gap, but not the persistence of flat-to-negative productivity in economies investing heavily in AI.

The Complementary Factors That Explain the Gap

This is consistent with every prior general-purpose technology wave. Performance gains appear first in reorganized systems, not automatically in aggregate statistics. The constraints:

| Constraint | Description | Impact on Productivity |
|---|---|---|
| Process redesign lag | AI layered on unreformed processes | Automates existing inefficiency faster |
| Data architecture debt | Fragmented, siloed, low-quality enterprise data | Agents can’t act on data they can’t access or trust |
| Governance friction | Compliance reviews, approval chains, audit requirements | Slows deployment from pilot to production |
| Managerial capability gaps | Leaders who understand AI technically but not operationally | AI adopted but not integrated into decision rights |
| Diffusion gap | Frontier firms capture gains; median firms don’t | Aggregate stats reflect the median, not the frontier |

Strategic implication: Competitive advantage will come less from buying access to models and more from redesigning decision rights, escalation logic, and process ownership around autonomous execution. The OECD data suggests that model access alone — which is nearly universal among enterprises — is not the binding constraint on productivity.

“The OECD data is useful precisely because it’s unspectacular. If we were in an AI productivity boom, we’d see it in the numbers. We don’t. The productivity gain is available — but only to organizations that do the harder work of process redesign, not just tool deployment.”

4. Investment Logic: Where Returns Are Likely, Where They’re Weakly Evidenced

High-Return Domains (If Well-Governed)

Dynatrace’s survey identifies the strongest ROI signals: ITOps/system monitoring (44%), cybersecurity (27%), and data processing/reporting (25%). These share a common profile:

| Domain | Why Autonomous AI Adds Value | Evidence Strength |
|---|---|---|
| Infrastructure optimization | High-frequency decisions; speed > human capacity; quantifiable cost savings | Strong (vendor + independent signals) |
| Incident detection/triage/containment | Time-critical; consistent patterns; reduces MTTR | Strong (measured in minutes, not quarters) |
| Multi-system workflow routing | Complex interdependencies; rules-based with probabilistic edge cases | Moderate (case studies emerging) |
| Continuous compliance checking | Deterministic requirements against shifting data; scale advantage | Moderate (regulated sector adoption) |

The common thread: high-frequency, time-critical, pattern-recognizable tasks where autonomous speed creates measurable value and where errors are bounded and reversible.

Weakly Evidenced Domains (Label Uncertainty Explicitly)

Not all autonomous AI investments have strong evidence. Leaders should be explicit about where they’re placing bets vs. making informed deployments:

| Domain | Why Evidence Is Weak | Risk |
|---|---|---|
| Fully autonomous cross-functional planning | Requires integration across unconnected systems; decisions aren’t easily reversible | High: errors compound across functions |
| End-to-end transformation in low-data environments | AI needs quality data; “garbage in, autonomous garbage out” | Medium-High: cost without productivity gain |
| Rapid “lift-and-shift” autonomy on legacy estates | Legacy systems weren’t designed for agent interaction; no clean APIs | Medium: integration cost exceeds ROI |
| Autonomous procurement and vendor negotiation | Requires judgment, relationship context, and strategic priorities AI can’t fully model | Medium: narrow wins, broad risks |

Uncertainty label: Evidence of durable enterprise-scale performance gains from autonomous operations remains limited and skewed toward case studies, pilots, and vendor disclosures. The AIOps market is projected to reach $36 billion by 2030 (from $14.6 billion in 2024), which reflects expectation — not yet validated outcomes at scale.
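To put the market projection in perspective, it helps to convert the two endpoints into an implied annual growth rate. The short calculation below uses only the two figures cited above and the standard compound annual growth rate formula. The function name `implied_cagr` is introduced here for illustration, and treating 2024 to 2030 as six compounding years is an assumption about how the projection is framed.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures cited above: $14.6B in 2024, projected $36B by 2030.
# Assumption: six compounding years between the two endpoints.
rate = implied_cagr(14.6, 36.0, 2030 - 2024)
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption the projection implies roughly 16% growth per year, which describes what the forecast expects rather than outcomes that have been validated.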

Callout: The honest investment framework: deploy autonomous AI where errors are bounded and speed is valuable. Label as bets — not strategies — the domains where errors compound, data is poor, or processes haven’t been redesigned. The difference between a well-governed deployment and an expensive pilot is whether the organization names its uncertainties.

5. The Layered Autonomy Architecture

A Practical Framework for Production Deployment

The Dynatrace finding that 64% of enterprises use supervised-plus-autonomous hybrid models points toward the operational architecture that actually works in production: layered autonomy with explicit governance at each tier.

| Level | Autonomy Scope | Human Role | Governance Requirement |
|---|---|---|---|
| Level 0: Manual | No AI involvement | Full human control | Standard ITGC/SOX controls |
| Level 1: Recommendation | AI suggests; human decides and acts | Review and approve | Recommendation logging; accuracy metrics |
| Level 2: Bounded autonomy | AI acts within predefined parameters; human monitors | Exception handling | Full event logging; policy boundary enforcement |
| Level 3: Supervised autonomy | AI acts autonomously; mandatory post-event attestation | Audit and override | Real-time observability; automated compliance checks |
| Level 4: Full autonomy | AI acts without human review for defined domains | Strategic oversight only | Continuous assurance; regulatory attestation |

Most enterprises should be operating at Levels 1–2 for broad deployments and Level 3 for high-frequency, bounded domains (like IBM’s storage optimization). Level 4 should be reserved for narrow, well-understood tasks with proven rollback mechanisms and mature observability.

The governance test at each level: Can you explain what the agent did, why it did it, and how to reverse it — within the timeline that matters for your stakeholders? If the answer is no, you’re at a higher autonomy level than your governance supports.
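The governance test can be written down as an explicit check. The sketch below is one possible encoding in Python, offered as an illustration rather than a standard. The level names mirror the table above, while `GovernanceEvidence`, `governance_gaps`, `supported_level`, and the "step down one level on any failure" rule are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import List


class AutonomyLevel(IntEnum):
    MANUAL = 0
    RECOMMENDATION = 1
    BOUNDED = 2
    SUPERVISED = 3
    FULL = 4


@dataclass(frozen=True)
class GovernanceEvidence:
    """Hypothetical answers to the three-part governance test."""
    can_explain_what: bool    # the action taken is recorded
    can_explain_why: bool     # the triggering inputs or policy are recorded
    can_reverse: bool         # a tested rollback path exists
    minutes_to_answer: float  # time needed to produce those answers


def governance_gaps(evidence: GovernanceEvidence, deadline_minutes: float) -> List[str]:
    """List which parts of the test fail for the given stakeholder deadline."""
    gaps = []
    if not evidence.can_explain_what:
        gaps.append("what the agent did")
    if not evidence.can_explain_why:
        gaps.append("why it did it")
    if not evidence.can_reverse:
        gaps.append("how to reverse it")
    if evidence.minutes_to_answer > deadline_minutes:
        gaps.append("answer not available within the stakeholder timeline")
    return gaps


def supported_level(current: AutonomyLevel, evidence: GovernanceEvidence,
                    deadline_minutes: float) -> AutonomyLevel:
    """Step down one level if any part of the test fails (illustrative rule)."""
    if governance_gaps(evidence, deadline_minutes) and current > AutonomyLevel.MANUAL:
        return AutonomyLevel(current - 1)
    return current


# Example: explanations exist, but no tested rollback path.
evidence = GovernanceEvidence(True, True, False, minutes_to_answer=10.0)
print(governance_gaps(evidence, deadline_minutes=30.0))
print(supported_level(AutonomyLevel.SUPERVISED, evidence, 30.0).name)
```

In practice the evidence fields would be populated from drills and audits rather than self-assessment; the point of the sketch is only that the test can be made explicit and repeatable.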

Procuring for Reversibility

The deeper the agentic functions embed in proprietary stacks, the higher the lock-in. Practical procurement requirements:

- Telemetry export rights: Full access to all agent decision logs and telemetry in open formats

- Model-switch clauses: Contractual right to replace the AI layer without replacing the infrastructure

- API continuity guarantees: Commitment that operational APIs remain stable across vendor AI updates

- Rollback SLAs: Defined time-to-revert if autonomous decisions create incidents

- Agent audit interfaces: External tools can query agent state, recent decisions, and policy compliance
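To make requirements like telemetry export and agent audit interfaces concrete, the sketch below shows what a vendor-neutral decision record and a simple query over it might look like. It is a hypothetical illustration, not any vendor's schema: `AgentDecision`, its field names, `to_jsonl`, `recent_decisions`, and the sample values are all assumptions. JSON Lines is used as an example of an open, tool-friendly export format.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class AgentDecision:
    """Hypothetical decision record; every field name here is an assumption."""
    decision_id: str
    timestamp: str        # ISO 8601 in UTC, so string order matches time order
    agent: str
    action: str
    reason: str           # inputs or signal that led to the action
    policy_id: str        # policy the action was checked against
    rollback_action: str  # how to revert, in support of a rollback SLA


def to_jsonl(decisions: List[AgentDecision]) -> str:
    """Serialize records as JSON Lines, one JSON object per line."""
    return "\n".join(json.dumps(asdict(d), sort_keys=True) for d in decisions)


def recent_decisions(decisions: List[AgentDecision], agent: str,
                     limit: int = 10) -> List[AgentDecision]:
    """Return the newest `limit` decisions for one agent, newest first."""
    matching = [d for d in decisions if d.agent == agent]
    return sorted(matching, key=lambda d: d.timestamp, reverse=True)[:limit]


# Illustrative records only; identifiers and values are made up.
decisions = [
    AgentDecision("d1", "2026-03-10T02:14:00Z", "storage-agent",
                  "move volume vol-17 to cold tier", "no reads in 30 days",
                  "tiering-policy-v3", "move volume vol-17 to hot tier"),
    AgentDecision("d2", "2026-03-10T02:20:00Z", "storage-agent",
                  "expand pool pool-2 by 10%", "projected capacity shortfall",
                  "capacity-policy-v1", "shrink pool pool-2 to prior size"),
]

print(to_jsonl(decisions))
```

If records like these can be exported and queried without the vendor's own dashboard, the explain, reverse, and audit questions can be answered independently of the vendor.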

“The question isn’t whether to deploy autonomous infrastructure. The question is whether you can explain, reverse, and audit what it does. If your answer depends entirely on the vendor’s dashboard, you don’t have governance — you have a subscription.”

6. Implications for Cyber Insurance and Regulatory Posture

The Insurance Signal

Cyber insurers are already conditioning coverage on AI-specific controls. The emerging pattern: AI Security Riders that require documented evidence of adversarial red-teaming, model-level risk assessments, and specialized safeguards as prerequisites for underwriting.

| Insurance Requirement | Description | Enterprise Impact |
|---|---|---|
| AI risk registers | Documented inventory of all AI/agent systems with risk classifications | Mandatory for coverage |
| Model governance protocols | Evidence of testing, monitoring, and human oversight mechanisms | Premium differentiator |
| Agent audit trails | Full event logging for autonomous agent decisions | Condition for claims |
| Red-team evidence | Documented adversarial testing of AI systems | Increasingly required |
| Absence-of-governance penalty | No AI governance → coverage denials or premium surcharges | Direct financial consequence |

Companies with AI risk registers, model inventories, and governance protocols are viewed as better risks. Companies without governance face coverage denials, premium increases, or inability to procure AI coverage at all.

The Regulatory Direction

NAIC pilot programs for AI Systems Evaluation Tools are expected in early 2026. State-level AI bills are expanding liability exposure for autonomous systems. The regulatory signal: autonomous agent actions will increasingly be treated as organizational decisions — with commensurate accountability, audit requirements, and liability exposure.

For C-level leaders, this changes the cost structure of ungoverned autonomy. It’s no longer just an operational risk. It’s an insurability risk and a regulatory exposure.

7. Strategic Implications and Actions

For Enterprise Leaders

1. Reclassify agentic infrastructure as a critical control function. Move oversight from “innovation steering” to formal risk committees. If AI agents make thousands of operational decisions per day, those decisions belong in the same governance framework as financial controls and security operations.

2. Mandate layered autonomy architecture. Deploy Levels 1–2 broadly, Level 3 for bounded high-frequency domains, Level 4 only for narrow tasks with proven rollback. No production autonomy without event logging, policy traceability, and rollback mechanisms.

3. Require control-plane observability before scale approval. If you can’t see what agents are doing in real time, you can’t govern them. Observability is not a nice-to-have — it’s a prerequisite.

4. Run resilience drills with and without agentic pathways. Test recovery and failback scenarios where AI agents are available and where they aren’t. Identify dependency cliffs before they surface in production incidents.

5. Anchor business cases to productivity pathways, not demo metrics. Tie ROI to cycle-time reduction, error-rate reduction, and avoided downtime — not token volume, pilot completion counts, or model benchmark scores.

For Investors

6. Look beyond model companies to infrastructure autonomy. The AIOps market is projected to grow from $14.6 billion to $36 billion by 2030. The companies enabling autonomous operations — observability, orchestration, security — may capture more durable value than the model providers.

7. Evaluate governance maturity as investment signal. Companies that can demonstrate layered autonomy, observability infrastructure, and regulatory readiness are better positioned than companies that just deploy agents widely.

For Policymakers

8. Update audit frameworks for non-deterministic operations. SOX, ITGC, and sector-specific regulations assume deterministic workflows with human approvals. Autonomous agents require event-level observability and policy attestation — frameworks that don’t yet exist in most regulatory regimes.

9. Develop agent-accountability standards. If an AI agent makes an operational decision that causes a security breach, service outage, or compliance violation — who is accountable? Current regulatory frameworks don’t answer this question for autonomous systems.

10. Watch the insurance market as a leading indicator. When cyber insurers require AI governance as a condition of coverage, enterprise behavior changes faster than regulation can enforce. The insurance signal may be more impactful than any AI executive order.

What to Watch Next

- Independent third-party audits of autonomous infrastructure claims — especially incident-response outcomes and MTTR comparisons, not just vendor benchmarks

- Gartner’s 40% AI agent prediction vs. actual deployment data — by Q3 2026 we’ll know if the trajectory is real or aspirational

- Shift from AI policy documents to measurable control evidence in board reporting and regulatory filings

- Cyber insurance AI Security Rider adoption rates — the canary for when governance moves from optional to existential

- OECD productivity data through H1 2026 — the first period where autonomous operations should register in macro statistics if the deployment wave is real

- IBM FlashSystem.ai production benchmarks — independent reviews after March 6 GA will test whether 90% management reduction holds in the wild

The Bottom Line

The AI conversation has been fixated on model capabilities: who has the best benchmark, the largest context window, the most impressive demo. Meanwhile, the operational layer is making a structural move that matters more for enterprise value: AI is being embedded not in the interface but in the control plane — making thousands of autonomous decisions per day about infrastructure, security, cost, and resilience that no human would have time or context to make.

The OECD productivity data provides the sobriety check. Aggregate productivity across advanced economies grew 0.4% in 2024. That’s not a boom. It’s a signal that access to AI isn’t the differentiator; the differentiator is whether organizations do the harder work of redesigning process, decision rights, and governance architecture around autonomous execution. That 72% of enterprises run multiple agentic initiatives while only 23% have reached mature integration tells you exactly where the gap is: not in ambition or technology, but in execution discipline.

IBM’s FlashSystem is one announcement. But it signals the pattern: the next phase of enterprise AI isn’t about making humans more productive. It’s about autonomous systems maintaining operations that humans can’t keep up with. The organizations that capture that value will be the ones that build the governance, observability, and reversibility architecture to deploy it safely. The ones that don’t will have the same AI tools — and the same flat productivity curve.

The model benchmark that matters isn’t who scores highest on a test. It’s whether your autonomous operations can explain what they did, why they did it, and how to undo it — before your auditor asks.

The infrastructure layer just told you where AI value actually accrues. The question is whether your governance can keep up.

Thorsten Meyer is an AI strategy advisor who believes the most underrated AI metric is “time-to-rollback” — because if you can’t undo what the agent did, you don’t have autonomous operations, you have autonomous prayer. More at ThorstenMeyerAI.com.

Sources:

- IBM Newsroom: IBM Introduces Autonomous Storage with New FlashSystem Portfolio Powered by Agentic AI — February 10, 2026

- PR Newswire: IBM FlashSystem Autonomous Storage Launch — February 2026

- Techzine: IBM FlashSystem Autonomous AI Takes Over 90% of Storage Management — February 2026

- InsideHPC: IBM Introduces Autonomous Flash Storage with Agentic AI — February 2026

- SDxCentral: IBM’s FlashSystem Autonomous Storage Update — February 2026

- OECD: Insights on Productivity Developments in 2024 — Compendium of Productivity Indicators 2025 — 2025

- OECD Statistics Blog: Tracking Productivity Trends Amid Economic Headwinds — September 2025

- Gartner: 40% of Enterprise Apps Will Feature AI Agents by 2026 — August 2025

- Gartner Predicts 2026: AI Agents Will Reshape Infrastructure & Operations — 2026

- Dynatrace: The Pulse of Agentic AI in 2026 — Global Report — 2026

- Dynatrace: Autonomous Operations Hits an Inflection Point — 2026

- CNCF: The Autonomous Enterprise and the Four Pillars of Platform Control — January 2026

- Deloitte Insights: Agentic AI Strategy — Tech Trends 2026 — 2026

- Grand View Research: AIOps Platform Market — $36B by 2030 — 2025

- Grab The Axe: Cyber Insurance Underwriting — New Technical Requirements 2026 — 2026

- Intelligent Insurer: What Insurers Must Understand Before Underwriting AI-Driven Catastrophe — 2026

- Lexology: When Insurance Won’t Cover AI — Governance Now Essential — 2026

- Gartner: Strategic Predictions for 2026 — 15% of Work Decisions Made by AI Agents by 2028 — 2025