By Thorsten Meyer, April 2026 – Thorsten Meyer AI

For most of 2024, the debate over Chinese AI capability hinged on a single question: how far behind are they, really? By early 2026, that framing looks naive. The answer isn’t a number of months — it’s a sentence. They are exactly as far behind as the latency of a residential proxy and the upload time of a fine-tuning dataset.

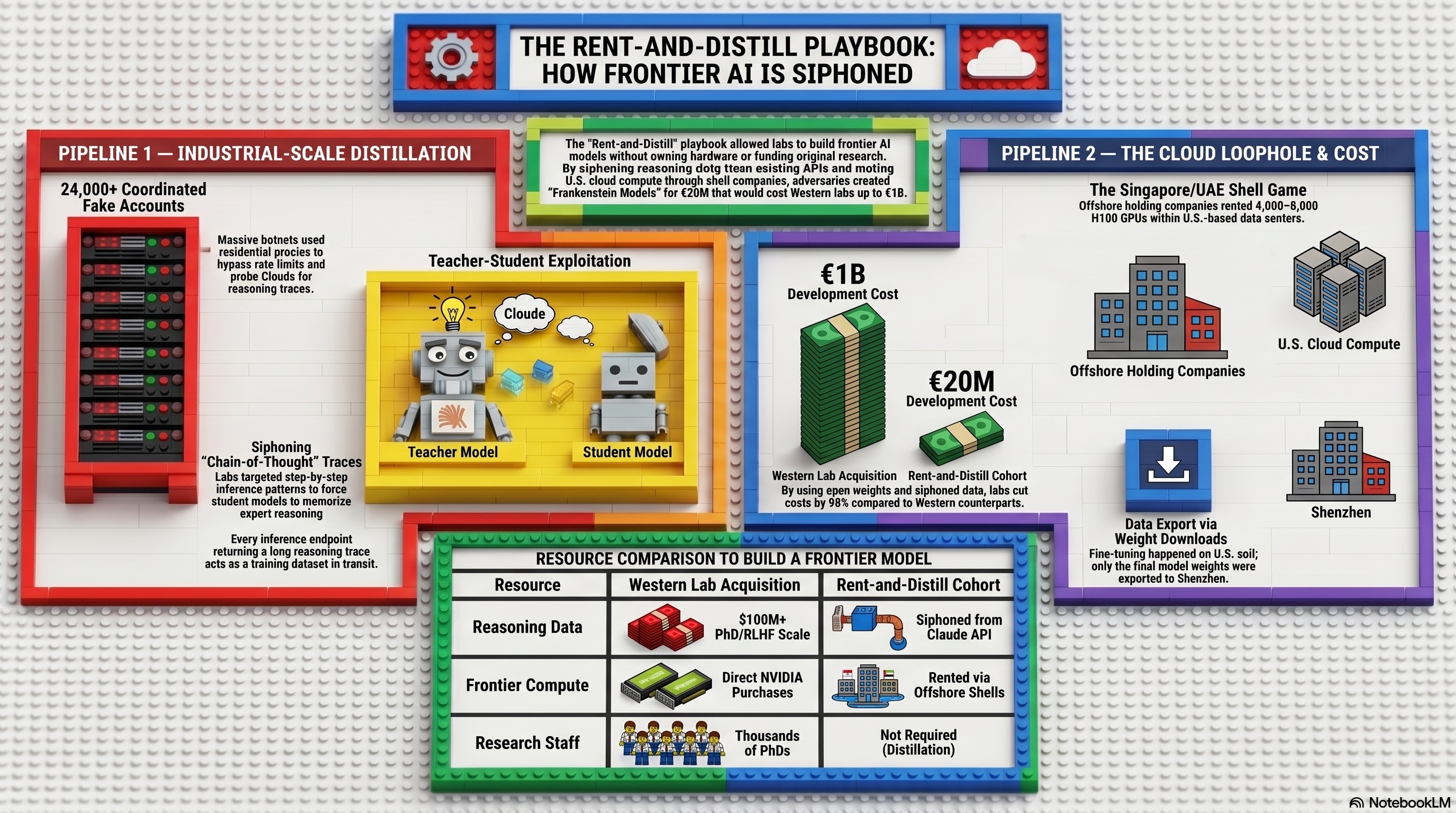

The strategy that got them there has a name now. Call it Rent-and-Distill: two parallel, unauthorized pipelines that solve the two bottlenecks U.S. policy was supposed to weld shut. One pipeline acquires the intelligence — the actual reasoning patterns of frontier Western models, extracted prompt by prompt. The other acquires the compute — the forbidden Nvidia silicon, accessed not by smuggling chips into Shenzhen but by renting them over the public internet from data centers sitting on U.S. soil.

Each pipeline has been documented in granular detail in recent weeks. Together, they describe a playbook that essentially treats the American frontier lab stack as a semi-public utility — one that can be drained from the top (model outputs) and the bottom (GPU capacity) while the legal regime built to prevent exactly this struggles to catch up.

Rent-and-Distill.

How China is building world-class AI without owning the hardware, hiring the researchers, or writing the reasoning. Two unauthorized pipelines, running in parallel, against the American frontier stack.

The playbook, at a glance.

Industrial-scale distillation of Claude’s reasoning

24,000+ fraudulent accounts. 16 million+ engineered prompts. Residential proxies. Claude’s chain-of-thought captured, refined into supervised fine-tuning data.

Export-controlled GPUs, rented through the cloud

Offshore shell entities rent Nvidia H100 / Blackwell clusters from U.S. and allied hyperscalers. Training happens on U.S. or allied soil. Only the weights file crosses the border.

Output: a “Frankenstein” open model

Open-weights base (Llama / Qwen) + distilled Western reasoning + rented Western compute → a Chinese flagship model with American cognition, served at Chinese cost.


Pipeline 01, Intelligence: The Siphon.

Rather than hire thousands of PhDs to write reasoning traces, attackers let Claude write them — then harvest the output as training data for a weaker open model. The capture surface is the API itself.

Residential proxies

Traffic routed through the home Wi-Fi routers of unwitting users worldwide. A request from Shenzhen arrives wearing the IP of a Comcast subscriber in Ohio. Classical geoblocking becomes theatre.

- S/01 Account provisioning: Create tens of thousands of accounts across API tiers, obscuring their origin via commercial proxy services. One cluster was observed managing 20,000+ accounts simultaneously.

- S/02 Chain-of-thought elicitation: Engineer prompts (code, competitive math, multi-hop logic, rubric grading) that force the model to narrate its reasoning step by step.

- S/03 Capture & refine: Collect millions of reasoning traces and format them as supervised fine-tuning data: input, gold chain-of-thought, final answer.

- S/04 Behavioral cloning: Fine-tune an open base (Llama, Qwen) on the captured traces. The student model memorizes the shape of Claude’s cognition until it becomes its own.


Pipeline 02, Compute: The Shell Game.

Export controls prohibit shipping advanced Nvidia silicon to China. They did not — until recently — prohibit Chinese entities from remotely renting the same chips from U.S. and allied hyperscalers. The chips never move. The customer does, virtually.

- C/01 Offshore incorporation: Register a subsidiary in Singapore, the UAE, Indonesia, or a similar jurisdiction, or contract through a third-party intermediary that resells cloud capacity.

- C/02 Remote training: Upload the distilled “golden dataset” plus an open-weights base, then train on rented H100 / Blackwell clusters inside U.S. or allied data centers.

- C/03 Weight extraction: Only the trained weights file leaves, roughly 800 GB of tensors over HTTPS. There is no customs officer for a .safetensors container.


The output stack, from top to bottom:

- Models: DeepSeek-Rx · MiniMax-Mx · Moonshot Kimi-x

- Data: “Golden dataset” distilled from Claude

- Compute: Rented H100 / Blackwell in U.S. or allied data centers

- Base: Open weights (Llama 3 / Qwen 2.5 / DeepSeek-V base)

The barrier, closing.

From physical shipment to remote access.

The Remote Access Security Act (RASA) would amend the Export Control Reform Act of 2018 to authorize BIS to regulate not only the export of controlled items but also remote access to them. Translation: cloud compute would become subject to export control law for the first time. Hyperscalers would face KYC obligations designed to catch the shell-company arrangements described above.

Legislative path: House Foreign Affairs Committee → full House vote → Senate Banking Committee → presidential signature.

Pipeline 1: The Intelligence — Industrial-Scale Distillation

On February 23, 2026, Anthropic published the most detailed public attribution of model theft the industry has ever seen. In a single disclosure, it named three Chinese AI labs — DeepSeek, Moonshot AI, and MiniMax — and accused them of running coordinated, industrial-scale campaigns to extract Claude’s capabilities. (See our article: 24,000 Fake Accounts and a Cloud Login: The Rent-and-Distill Playbook Behind China’s Frontier Models)

The numbers are the part worth sitting with:

- ~24,000 fraudulent accounts created across the three campaigns

- 16+ million exchanges generated with Claude

- ~13 million of those exchanges attributed to MiniMax alone

- ~150,000 prompts from DeepSeek specifically designed to force Claude to reveal step-by-step chain-of-thought reasoning

- One proxy network managing more than 20,000 fake accounts simultaneously, laundered alongside unrelated customer traffic to evade detection
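A quick back-of-the-envelope pass over these disclosed figures shows how the volume was distributed. The sketch below uses only the approximate numbers listed above and treats “16+ million” as exactly 16 million, so the results are rough floors rather than precise statistics:

```python
# Approximate figures from Anthropic's disclosure, as listed above.
FAKE_ACCOUNTS = 24_000
TOTAL_EXCHANGES = 16_000_000    # "16+ million", treated here as a floor
MINIMAX_EXCHANGES = 13_000_000  # "~13 million"


def percent(part: float, whole: float) -> float:
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


# Average load per fraudulent account across all three campaigns.
exchanges_per_account = TOTAL_EXCHANGES / FAKE_ACCOUNTS

# MiniMax's share of the total exchange volume.
minimax_share = percent(MINIMAX_EXCHANGES, TOTAL_EXCHANGES)

if __name__ == "__main__":
    print(f"Average exchanges per account: {exchanges_per_account:,.0f}")
    print(f"MiniMax share of exchanges: {minimax_share:.1f}%")
```

That works out to roughly 667 exchanges per fake account on average, with MiniMax alone responsible for about 81% of the volume. An average hides the skew, of course: the disclosure as summarized here does not say how evenly traffic was spread across accounts.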

This isn’t casual scraping. It is a mature operational discipline, and it maps almost perfectly onto the playbook that U.S. regulators and frontier labs have been sketching out for months.

The “Siphon” — Turning Claude into an Automated PhD Factory

The classical way to train a reasoning model is expensive: you hire thousands of specialists (mathematicians, programmers, lawyers, doctors) to write out worked solutions to hard problems, then use their labor to teach the model how to think. It is one of the most expensive line items in modern AI, and a major reason frontier training runs cost what they do.

The Siphon attack bypasses all of it. Instead of paying humans to produce reasoning traces, you pay API rates and let Claude produce them. The prompts are not random; they are engineered to force the model to “show its work” on problems where the intermediate chain of thought is more valuable than the final answer. Coding. Competitive math. Multi-hop logic. Rubric-based grading. Tool-use orchestration. Every token Claude spends narrating why it reached an answer is a token the attacker captures.

Anthropic’s threat intelligence team was blunt about what it saw: the volume, structure, and focus of the prompts looked nothing like normal traffic. The attackers weren’t asking Claude questions — they were harvesting cognition.

The Golden Dataset

Once captured, those reasoning traces are reformatted into supervised fine-tuning data. A smaller, weaker base model — a stock Llama, a base Qwen — is then trained to mimic those traces token-for-token. The result is sometimes called “behavioral cloning” in the literature, but at this scale it is closer to identity theft. The student model doesn’t independently rediscover how to solve an integral; it memorizes the shape of Claude’s thought process until the shape becomes its own.
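In standard supervised fine-tuning terms, “trained to mimic those traces token-for-token” means minimizing the negative log-likelihood of the teacher’s tokens. As a sketch (the notation is ours, not Anthropic’s), let $\theta$ be the student’s parameters and let each record in the harvested dataset $\mathcal{D}$ pair a prompt $x$ with the captured chain of thought and final answer, written as one token sequence $y = (y_1, \dots, y_T)$:

```latex
\mathcal{L}(\theta) = -\,\mathbb{E}_{(x,\,y) \sim \mathcal{D}}
  \left[ \sum_{t=1}^{T} \log p_\theta\!\left(y_t \mid x,\, y_{<t}\right) \right]
```

Because the sum runs over every position $t$, including the intermediate reasoning steps, the student is pushed toward the teacher’s text at each step of the argument, not just toward the final answer. That is why a trace that narrates its reasoning is worth far more as training data than a bare answer.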

This is why the economics are so devastating. Berkeley researchers famously recreated a reasoning model for roughly $450 in a 2025 demonstration. Stanford and UW researchers did a similar stunt for under $50. Those were toy examples. What Anthropic documented in February is the same technique run at production scale against a live commercial API, with enough harvested data to upgrade multiple competing foundation models simultaneously.

The Evasion Layer: Residential Proxies

The reason Anthropic didn’t catch this immediately — and the reason similar campaigns against OpenAI and Google Gemini have persisted — is the proxy layer.

Modern residential proxy services route traffic through the home Wi-Fi routers of consenting (or, often, unwitting) users worldwide. A request from Shenzhen arrives at Claude’s API wearing the IP address of a Comcast subscriber in Ohio, a Deutsche Telekom customer in Bavaria, or a Jio user in Mumbai. Classical IP-based rate limiting and geoblocking become almost useless. The defense has to shift to behavioral fingerprinting — detecting the shape of coordinated distillation traffic against the shape of genuine human use — which is expensive, imperfect, and inherently reactive.
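Behavioral fingerprinting can take many forms. As one illustration, the sketch below groups traffic by a hypothetical `cluster_key` (for example a shared payment instrument or device fingerprint, deliberately not the IP address, which residential proxies rotate) and flags clusters that span many accounts and consist almost entirely of prompts asking for step-by-step reasoning. The marker phrases, thresholds, and field names are assumptions for illustration only; Anthropic has not published how its classifiers work, and a production system would rely on learned models rather than a keyword list.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical phrases that ask a model to narrate its reasoning.
COT_MARKERS = ("step by step", "show your work", "explain your reasoning", "think through")


@dataclass
class Request:
    account_id: str
    cluster_key: str  # hypothetical: shared payment or device fingerprint, NOT the IP
    prompt: str


def is_cot_elicitation(prompt: str) -> bool:
    """Crude keyword check for prompts that request step-by-step reasoning."""
    text = prompt.lower()
    return any(marker in text for marker in COT_MARKERS)


def flag_clusters(requests, min_accounts=3, min_cot_rate=0.8):
    """Return cluster keys whose traffic looks like coordinated CoT harvesting.

    A cluster is flagged when it spans at least `min_accounts` distinct accounts
    and at least `min_cot_rate` of its prompts elicit step-by-step reasoning.
    """
    accounts = defaultdict(set)
    totals = defaultdict(int)
    cot_hits = defaultdict(int)
    for req in requests:
        accounts[req.cluster_key].add(req.account_id)
        totals[req.cluster_key] += 1
        cot_hits[req.cluster_key] += is_cot_elicitation(req.prompt)  # bool counts as 0/1
    return sorted(
        key
        for key in totals
        if len(accounts[key]) >= min_accounts
        and cot_hits[key] / totals[key] >= min_cot_rate
    )


if __name__ == "__main__":
    traffic = [Request(f"acct-{i}", "card-A", "Solve this integral step by step.") for i in range(5)]
    traffic.append(Request("alice", "card-B", "Suggest a pasta recipe."))
    print(flag_clusters(traffic))  # ['card-A']
```

The design choice worth noting is the grouping key: once every request arrives from a different household IP, the only way to see the coordination is to join accounts on something the attacker cannot rotate as cheaply.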

Anthropic says it now has classifiers specifically tuned to chain-of-thought elicitation patterns, plus tighter verification on high-risk account categories (educational, research, startup). Google’s Threat Intelligence Group disclosed a similar defensive posture for Gemini earlier in 2026, after disrupting campaigns spanning 100,000+ prompts. These are real countermeasures, but they arrive after the fact. The 16 million exchanges are already in the training sets.

Pipeline 2: The Compute — The Cloud Loophole

The distillation pipeline produces a dataset. Turning that dataset into a competitive model still requires the one resource export controls were explicitly designed to deny: large clusters of Nvidia H100, H200, and Blackwell-class GPUs.

The Biden-era and Trump-era export control regimes both focused on physical shipment: you can’t put a pallet of H100s on a plane to Shanghai. What neither regime adequately covered until very recently was remote access — a foreign entity renting those exact same chips over the internet from a data center inside the United States or an allied jurisdiction.

The Shell Game

Reporting over the last fifteen months has exposed the basic structure of the workaround. A Chinese AI lab does not attempt to sign up for AWS, Azure, or Google Cloud from a Beijing IP with a PRC corporate entity. Instead, it uses an offshore subsidiary — typically registered in Singapore, the UAE, or another jurisdiction with flexible corporate formation rules — or a third-party intermediary that resells cloud capacity.

Concrete examples have surfaced repeatedly:

- INF Tech, a Shanghai startup, reportedly secured access to ~2,300 banned Nvidia Blackwell GPUs by renting 32 racks of GB200 servers from an Indonesian telecommunications provider, in a deal valued at around $100 million.

- Tencent reportedly locked up roughly $1.2 billion in contracts for ~15,000 Blackwell processors through the Japanese provider Datasection.

- ByteDance was reported in 2024 to have rented advanced Nvidia chips via Oracle, an arrangement that became one of the politically catalyzing case studies for subsequent legislation.
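The reported deal sizes can be normalized per GPU with simple division. Treat this as a rough comparison only: contract terms, durations, and any bundled services were not disclosed in the reporting summarized here, so the per-GPU figures are not rental rates. The sketch also assumes the INF Tech hardware used Nvidia’s GB200 NVL72 rack configuration (72 Blackwell GPUs per rack) and checks that 32 such racks would come to roughly 2,300 GPUs.

```python
# Reported deal figures (approximate): (contract value in USD, number of GPUs).
DEALS = {
    "INF Tech (Indonesian provider)": (100_000_000, 2_300),
    "Tencent via Datasection": (1_200_000_000, 15_000),
}

# Assumption: GB200 NVL72 racks, each holding 72 Blackwell GPUs.
GPUS_PER_NVL72_RACK = 72


def dollars_per_gpu(contract_value: float, gpus: int) -> float:
    """Contract value divided evenly across GPUs (ignores term length and services)."""
    return contract_value / gpus


if __name__ == "__main__":
    for name, (value, gpus) in DEALS.items():
        print(f"{name}: ~${dollars_per_gpu(value, gpus):,.0f} per GPU")
    print("32 NVL72 racks =", 32 * GPUS_PER_NVL72_RACK, "GPUs")
```

Under these assumptions the Tencent arrangement comes to about $80,000 per processor and the INF Tech deal to roughly $43,000, while 32 NVL72 racks hold 2,304 GPUs, consistent with the reported ~2,300.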

In each case, the chips never moved. The customer did — virtually. A model trained this way is, in a literal sense, a model trained on U.S. soil or in U.S.-aligned jurisdictions, using U.S. silicon, for a Chinese beneficiary. Only the weights file crosses the border at the end, and a weights file is just a bunch of floating-point numbers in a .safetensors container. There is no customs officer for 800GB of tensors moving over HTTPS.
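The 800GB figure is easy to sanity-check: a checkpoint’s size is roughly its parameter count times the bytes stored per parameter. The sketch below assumes weights saved in BF16 (2 bytes each) with negligible metadata and no optimizer state; under that assumption 800GB corresponds to a model of about 400 billion parameters, and even a 1 Gbit/s link moves it in under two hours.

```python
# Bytes per parameter for common storage dtypes.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}


def checkpoint_size_gb(num_params: float, dtype: str = "bf16") -> float:
    """Approximate checkpoint size in GB (10**9 bytes), ignoring metadata."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def params_for_size(size_gb: float, dtype: str = "bf16") -> float:
    """Invert the above: how many parameters fit in size_gb at this dtype."""
    return size_gb * 1e9 / BYTES_PER_PARAM[dtype]


def transfer_hours(size_gb: float, link_gbps: float) -> float:
    """Hours to move size_gb over a link_gbps (gigabits/s) link at full utilization."""
    return size_gb * 8 / link_gbps / 3600


if __name__ == "__main__":
    print(f"800 GB in bf16 ~ {params_for_size(800) / 1e9:.0f}B parameters")
    print(f"Transfer at 1 Gbit/s: {transfer_hours(800, 1):.1f} h")
    print(f"Transfer at 10 Gbit/s: {transfer_hours(800, 10) * 60:.0f} min")
```

Storing in FP8 instead would double the parameter count implied by the same 800GB, so the file size alone does not pin down the model size; it only shows how small the export is relative to the compute that produced it.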

Why This Worked For So Long

The legal fiction that made all of this possible is, roughly, that “providing cloud computing services” has never been treated as an “export” under the Export Administration Regulations. BIS — the Commerce Department’s Bureau of Industry and Security — had authority over things and software crossing borders. Compute-as-a-service fell in the gap between them.

For about two decades this was a reasonable gap, because nobody was using rented cloud capacity to build strategic weapons. In the AI era, the gap became the primary attack surface.

The Result: The Frankenstein Model

Stitch the two pipelines together and you get something genuinely new in the history of technology transfer.

Base layer: a strong open-weight foundation model — Llama 3, Qwen 2.5, or a derivative.

Upgrade layer: a fine-tuning dataset distilled from Claude (or ChatGPT, or Gemini), containing hundreds of thousands to millions of high-quality reasoning traces that no one paid humans to write.

Compute layer: a rented cluster of export-controlled Nvidia GPUs in a U.S. or allied data center, accessed through an offshore shell entity.

Output: a specialized model — a flagship DeepSeek release, a MiniMax reasoning variant, a Moonshot long-context tier — that combines the speed and serving cost of a smaller open model with reasoning nuances licensed, essentially, from Anthropic’s training run. It can be downloaded back into China, run on domestic hardware (even modest hardware), and deployed into the consumer and enterprise markets at prices that Western labs cannot match, because the Western labs paid for the research.

This is why Dario Amodei’s framing of export controls as a national security issue has shifted over the last year from “tighten the chip controls” to something closer to “we are being asymmetrically copied.” If the moat is the frontier lab’s cost of training — billions of dollars of compute, hundreds of millions in human expert labor — and that cost can be ingested by a competitor for the price of a few million API calls plus a cloud rental contract, then the moat is not a moat. It is a tariff that only American companies pay.

The Regulatory Response: RASA and Its Limits

The specific compute loophole is, finally, closing. On January 12, 2026, the U.S. House of Representatives passed the Remote Access Security Act (H.R. 2683) by a vote of 369-22 — a genuinely overwhelming bipartisan margin that tells you how little daylight exists in Washington between the parties on the China-AI question.

RASA would amend the Export Control Reform Act of 2018 to authorize BIS to regulate remote access to export-controlled items, not just physical shipment. Cloud providers, from AWS, Azure, Google Cloud, and Oracle to operators in increasingly relevant jurisdictions such as Datasection in Japan or INF Tech’s Indonesian counterparty, would be subject to Know-Your-Customer obligations explicitly designed to catch shell-company arrangements that route Chinese AI training onto U.S. silicon.

Two caveats matter before anyone declares the loophole shut:

First, it is not yet law. The bill is in the Senate Banking, Housing, and Urban Affairs Committee, with a companion bill (S. 3519) introduced in December 2025 by Senators McCormick, Wyden, Cotton, and Coons. Strong bipartisan support makes eventual passage likely, possibly via unanimous consent, but until it clears the Senate and is signed, the regulatory gap is still legally open.

Second, RASA authorizes BIS to regulate remote access — it does not itself specify the controls. Once enacted, BIS will have to write rules defining which items are subject to which remote-access restrictions, for which end-users, at what enforcement threshold. That rulemaking process is where the actual fight will happen. The hyperscalers have obvious commercial incentives to prefer narrow definitions; the national security community will push for broad ones. Neither side has fully tipped its hand.

What This Means

A few implications worth stating directly.

The distillation threat is not going away with RASA. RASA addresses Pipeline 2, the compute side. Pipeline 1 — the actual extraction of reasoning from frontier APIs — is a Terms-of-Service problem and a cat-and-mouse detection problem, not an export-control problem. Anthropic, OpenAI, and Google are now spending real engineering against it, but the underlying asymmetry is permanent: a frontier model is, by nature, an artifact that produces its most valuable training signal every time it answers a question. The larger and more capable the model, the more valuable each output becomes to a potential cloner.

The open-weights debate just got more complicated. Every one of the “Frankenstein” models relies on a strong open-weights base. This does not mean open weights should be banned — the strategic case for open ecosystems is serious and not made less serious by bad actors — but the argument that open weights are a pure public good requires updating. Open weights plus illicit distillation plus cloud arbitrage is a specific, measurable capability transfer vector, and the debate needs to account for it.

The hyperscalers are now squarely in the national security stack. AWS, Azure, and Google Cloud have historically treated compliance as a box-check on a services contract. RASA, if enacted, makes them front-line enforcers of export controls, with real liability for failing to identify when a Singapore-registered shell company is actually a proxy for a PRC entity. Compliance infrastructure that currently resembles an enterprise sales KYC check will need to start resembling a sanctions regime.
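At its core, the check described here is a beneficial-ownership lookup: follow a customer’s controlling-shareholder links up to the ultimate known owner, then screen every entity along the way. The sketch below is a deliberately simplified, hypothetical version; the `Entity` record, the sample entity names, and the single-country `RESTRICTED` set are illustrative assumptions, and the real screening criteria would be defined by BIS rulemaking, not by code like this.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entity:
    name: str
    jurisdiction: str              # ISO 3166 alpha-2 country code
    parent: Optional[str] = None   # name of the controlling shareholder, if known


# Hypothetical screening list; the real scope would come from BIS rules.
RESTRICTED = {"CN"}


def ownership_chain(name: str, registry: dict[str, Entity]) -> list[Entity]:
    """Follow parent links from a customer up to its ultimate known owner."""
    chain, seen = [], set()
    current = name
    while current is not None and current not in seen:  # `seen` guards against cycles
        seen.add(current)
        entity = registry.get(current)
        if entity is None:
            break  # ownership data missing; a real system would escalate this case
        chain.append(entity)
        current = entity.parent
    return chain


def needs_review(customer: str, registry: dict[str, Entity]) -> bool:
    """True if any entity in the customer's ownership chain is in a restricted jurisdiction."""
    return any(e.jurisdiction in RESTRICTED for e in ownership_chain(customer, registry))


if __name__ == "__main__":
    registry = {
        "SG Compute Pte": Entity("SG Compute Pte", "SG", parent="HoldCo Ltd"),
        "HoldCo Ltd": Entity("HoldCo Ltd", "AE", parent="Parent Lab"),
        "Parent Lab": Entity("Parent Lab", "CN"),
        "Local Startup": Entity("Local Startup", "DE"),
    }
    print(needs_review("SG Compute Pte", registry))  # True
    print(needs_review("Local Startup", registry))   # False
```

A sanctions-grade program would also need partial-ownership thresholds, multiple parents, and a policy for missing data (where this sketch simply stops), but the chain walk is the piece that sees through one or two layers of Singapore or UAE incorporation.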

For European operators, this is not a spectator sport. The compute pipeline routinely touches European data centers (Bavaria, Dublin, Stockholm, Frankfurt). The residential proxy pipeline routinely routes through European IPs. And the eventual regulatory framework, whatever BIS writes, will have extraterritorial implications for any EU-based cloud provider or AI lab that uses U.S. silicon, which is essentially all of them. The EU’s own AI Act does not directly address distillation attacks; it is largely orthogonal to this threat vector.

The version of AI competition that most commentators were describing a year ago (the U.S. has the chips, China has the data and the cheap labor, may the best innovation win) was always a bit of a fairy tale. The version documented in the Anthropic disclosure and the RASA vote is more honest and much less comforting. China has the data, the cheap labor, functional access to the chips, and a growing library of distilled Western reasoning. What the U.S. has is the original research, the enforcement authority to make that research expensive to steal, and, so far, the political will to finally use it.

Whether that will survive the transition from House vote to Senate floor to BIS rulemaking to actual hyperscaler enforcement is the question that decides whether the next frontier model is competed for or just copied.

Sources: Anthropic’s February 23, 2026 threat disclosure; CNBC, Fortune, VentureBeat, and Reuters reporting on the DeepSeek/Moonshot/MiniMax distillation campaigns; Congress.gov and GovTrack records for H.R. 2683 and S. 3519; Tom’s Hardware, The Register, DCD, and WSJ reporting on the INF Tech, Tencent, and ByteDance cloud-rental arrangements; Latham & Watkins and Baker McKenzie legal analyses of RASA’s expected scope.