Thorsten Meyer AI Foundations · 05 / 08

Why models make things up — and how to build trust around that fact, not away from it

In 2023, a US attorney filed a legal brief citing six federal cases that had never existed. The cases had names. They had docket numbers. They had plausible-sounding holdings that supported his argument. They had everything — except the small matter of being real. The attorney hadn’t invented them. ChatGPT had.

This story gets told as a cautionary tale about AI. It’s actually a cautionary tale about verification. The model wasn’t lying — lying requires knowing the truth and saying something else. The model was doing exactly what it was trained to do: producing text that was statistically likely given the context. In that context, plausible-sounding legal citations were statistically likely. That they didn’t correspond to reality was not the model’s concern. It had no way to know, and no way to check.

If you understand only one thing about hallucination, make it this: hallucination is not a bug that will be fixed. It is the same mechanism that makes LLMs useful, pointed in a direction you didn’t want. Waiting for hallucination to disappear is a strategy for staying on the sidelines.

The real AI skill is not eliminating hallucination. It’s designing around it.

As an affiliate, we earn on qualifying purchases.

Why models make things up

LLMs are next-token predictors. They look at a context and produce what’s statistically likely to come next. When the context is “the capital of France is” they produce “Paris.” When the context is “citations supporting the argument that the defendant acted in good faith include” they produce citations — plausible-looking strings of case name + year + reporter + page number. The mechanism is identical. The only difference is whether what’s likely also happens to be true.

For well-represented facts with stable references in the training data, likely and true usually coincide. For novel combinations, obscure facts, recent events, or requests that invite fabrication (the cases, the citations, the “give me three recent papers about X”), likely and true diverge, sometimes dramatically. The model has no flag that fires when it has crossed that line. From the inside, producing “Paris” and producing a made-up case citation feel like the same operation, because they are.
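
To make the mechanism concrete, here is a minimal sketch of a toy predictor in Python. It is a word-count model, far simpler than a real LLM, and the corpus, the function names `train` and `predict_next`, and the two-word context window are all assumptions made for illustration. What it tries to show is that the code path answering a well-represented question is the same one answering a question about something it has never seen.

```python
from collections import Counter, defaultdict

# Hypothetical miniature "training data"; illustrative only.
CORPUS = [
    "the capital of france is paris",
    "the capital of france is paris",
    "the capital of italy is rome",
    "the capital of spain is madrid",
]

def train(sentences, max_context=2):
    """Count which word follows each 1-word and 2-word context."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(1, len(words)):
            for n in range(1, max_context + 1):
                if i - n >= 0:
                    counts[tuple(words[i - n:i])][words[i]] += 1
    return counts

def predict_next(counts, prompt, max_context=2):
    """Return the most frequent continuation, backing off to a shorter context.

    Note what is missing: there is no check anywhere for whether the
    continuation is true, only for whether it was frequent.
    """
    words = prompt.split()
    for n in range(max_context, 0, -1):
        context = tuple(words[-n:])
        if len(context) == n and context in counts:
            return counts[context].most_common(1)[0][0]
    return None

model = train(CORPUS)
print(predict_next(model, "the capital of france is"))   # paris
print(predict_next(model, "the capital of wakanda is"))  # paris
```

Wakanda is fictional and appears nowhere in the toy corpus, yet the predictor returns a fluent answer by falling back on what usually follows “is”. Nothing in the return value distinguishes the two cases.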

This has two practical consequences.

First: confidence is not correctness. A model can produce a wrong answer with the same fluent, confident tone it produces right answers. There’s no tell in the output itself. People trained on human communication read fluency as a signal of expertise. That heuristic is broken for LLMs. The fluency is constant. The accuracy is variable.

Second: next year’s model will hallucinate less, but not never. Bigger models with better training do drift toward fewer fabrications. Grounding techniques (retrieval, tool use, citations) reduce hallucination by giving the model something real to lean on. But neither scale nor grounding eliminates it. The mechanism that produces the occasional bad output is the same mechanism that produces all the good ones. You don’t get the second without some risk of the first.

The verification ladder

The useful move is to stop asking “is this true?” and start asking “what level of verification does this task need?”

Different tasks tolerate different levels of AI error. Drafting a joke for a birthday card: the cost of a hallucinated joke is effectively zero. Drafting a contract clause: the cost of a hallucinated legal standard is enormous. These are not the same problem. Treating them the same — either by distrusting the model for everything, or trusting it for everything — produces bad outcomes in both directions.

The framing that works is a verification ladder. Four rungs, from lowest stakes to highest. Each rung has a matching verification posture. The skill is matching rung to task.

Rung 1 — Zero stakes. First-draft marketing copy. Brainstorming session. Exploring an idea. If the output is wrong, the cost is the time to notice and redo. Verification posture: trust freely. Catch errors when you notice them. Don’t build a verification process for the cost-free.

Rung 2 — Low stakes, recoverable. Summaries for personal use. Internal notes. Rough analysis that will inform a decision but not drive it. Cost of a wrong answer: modest rework. Verification posture: trust with sanity check. Skim for obvious errors. Ground anything that will be repeated or referenced.

Rung 3 — Meaningful stakes, reputational or financial. Content that will be published. Analysis someone will act on. Recommendations to clients. Cost of a wrong answer: embarrassment, eroded trust, possibly money. Verification posture: trust after human review. Every claim checked. Citations followed. Facts spot-tested. The human is the last line before the output becomes real.

Rung 4 — Regulatory, legal, medical, safety. Court filings. Medical information a patient will act on. Financial advice. Compliance-sensitive content. Cost of a wrong answer: legal exposure, physical harm, regulatory penalty. Verification posture: trust only with tools of record. The AI drafts; authoritative systems (case databases, clinical guidelines, certified calculators) verify every factual claim before it goes anywhere.

Most AI use sits on rungs 1 and 2. Most AI mistakes — the ones that become news — happen because work at rung 3 or rung 4 was treated as rung 1 or 2. The attorney who filed the fake citations wasn’t wrong to use ChatGPT. He was wrong to use it at rung 4 with a rung 1 verification posture.
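
One way to keep the ladder from staying abstract is to write it down as data. The following is a minimal sketch, not a prescribed implementation: the names `Rung`, `POSTURE`, and `is_rung_mismatch` are hypothetical, and the posture strings are taken from the four rungs described above.

```python
from enum import IntEnum

class Rung(IntEnum):
    """The four rungs, ordered from lowest stakes to highest."""
    ZERO_STAKES = 1
    LOW_STAKES = 2
    MEANINGFUL_STAKES = 3
    REGULATED = 4  # regulatory, legal, medical, safety

# Verification posture for each rung, as described in the text.
POSTURE = {
    Rung.ZERO_STAKES: "trust freely",
    Rung.LOW_STAKES: "trust with sanity check",
    Rung.MEANINGFUL_STAKES: "trust after human review",
    Rung.REGULATED: "trust only with tools of record",
}

def is_rung_mismatch(task_rung: Rung, applied_rung: Rung) -> bool:
    """True when the verification actually applied is weaker than the task needs."""
    return applied_rung < task_rung

# The court filing: a rung 4 task handled with a rung 1 posture.
print(POSTURE[Rung.REGULATED])                                  # trust only with tools of record
print(is_rung_mismatch(Rung.REGULATED, Rung.ZERO_STAKES))       # True
print(is_rung_mismatch(Rung.LOW_STAKES, Rung.MEANINGFUL_STAKES))  # False
```

Over-verifying (the last line) is not flagged as a mismatch in this sketch. It costs time, not trust, though treating every task as rung 4 has its own price.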

How to climb the ladder

Placing a task on the ladder is usually obvious once you ask the question. Two tests make it easier.

The consequence test. If the output is wrong and it goes out, what happens? If the answer is “I’ll redo it,” it’s rung 1 or 2. If the answer is “someone will act on this,” rung 3. If the answer is “legal exposure, regulatory problem, or physical harm,” rung 4.

The audience test. Who sees this output? If only you, the bar is lower. If a client, higher. If a court, regulator, or patient, higher still. The audience implicitly sets the verification posture whether you design for it or not. Design for it.
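
The two tests can be sketched as a small lookup. This is an illustration under assumptions: the category labels, the rung numbers attached to each audience, and the rule that the stricter test wins are my reading of the prose, not something the tests specify. The function returns a floor, so choosing between rung 1 and rung 2 for low-stakes work still takes judgement.

```python
# Hypothetical labels for the answers to each test.
CONSEQUENCE_FLOOR = {
    "redo": 1,           # "I'll redo it" (rung 1 or 2)
    "acted_on": 3,       # "someone will act on this"
    "legal_or_harm": 4,  # legal exposure, regulatory problem, physical harm
}
AUDIENCE_FLOOR = {
    "self": 1,                     # only you see it
    "client": 3,                   # assumption: clients imply rung 3
    "court_regulator_patient": 4,  # assumption: these imply rung 4
}

def minimum_rung(consequence: str, audience: str) -> int:
    """Return the lowest acceptable rung; the stricter of the two tests wins."""
    try:
        return max(CONSEQUENCE_FLOOR[consequence], AUDIENCE_FLOOR[audience])
    except KeyError as err:
        raise ValueError(f"unknown category: {err}") from None

print(minimum_rung("redo", "self"))      # 1
print(minimum_rung("redo", "client"))    # 3
print(minimum_rung("acted_on", "court_regulator_patient"))  # 4
```

The second call is the interesting one: work you would happily redo still lands on rung 3 once a client is the audience.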

A good habit: for any new AI workflow, place it on the ladder before you build it. Rung decided up front means verification can be designed in, not bolted on. Rung decided after a near-miss means you already learned the expensive way.

Grounding reduces, never eliminates

Retrieval-augmented generation (RAG), tool use, and citations are all grounding techniques. They give the model something real to reason over (a document, a database, a calculation) instead of asking it to produce facts from its weights alone. They work: in well-designed systems they reduce hallucination substantially.

They do not eliminate it.

A RAG system can still hallucinate by misquoting a source it correctly retrieved. A model using a calculator tool can still hallucinate by typing the wrong number into the calculator. A model asked to cite its sources can hallucinate the existence of the citation and then confabulate a plausible quote from the thing it invented. The grounding reduces the probability of error; it does not remove it.

This matters for system design. If your architecture is “add RAG, therefore no more hallucinations, therefore no verification needed,” you have re-created the attorney-with-ChatGPT problem one layer deeper. Grounding is a probability improvement, not a correctness guarantee. Plan verification accordingly.
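
As one example of planning verification on top of grounding, the sketch below checks whether passages a model presents as quotes actually appear in the documents that were retrieved. The function names and sample text are hypothetical, and the check is deliberately narrow: it can catch a misquote, but not a correct quote used to support a wrong conclusion.

```python
import re

def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()

def quote_is_in_source(quote: str, source_text: str) -> bool:
    """True only if the quote appears verbatim (after normalization) in the source."""
    needle = normalize(quote)
    return bool(needle) and needle in normalize(source_text)

def unsupported_quotes(answer_quotes, retrieved_docs):
    """Return the quotes that match none of the retrieved documents."""
    return [
        quote for quote in answer_quotes
        if not any(quote_is_in_source(quote, doc) for doc in retrieved_docs)
    ]

# Hypothetical retrieved document and two quotes attributed to it.
docs = ["The lease may be terminated with 60 days written notice."]
quotes = [
    "terminated with 60 days written notice",
    "terminated with 30 days written notice",  # misquote of a correctly retrieved source
]
print(unsupported_quotes(quotes, docs))
# ['terminated with 30 days written notice']
```

A check like this is cheap enough to run on every output. It narrows one failure path and leaves the others open, which is the general shape of grounding.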

Designing verification in

A verification workflow does not have to be heavy. For rung 2 it might be a single human skim. For rung 3 it might be a checklist: facts cross-referenced, citations followed, numbers recomputed. For rung 4 it might be an automated tool-of-record check on every factual claim before the output goes anywhere.

The pattern is always the same: the AI does the generation, humans or tools do the verification, and the split is explicit. The failure mode everyone fears — the model confidently asserting something false — is managed not by trying to make the model stop being confident but by making sure confidence alone never converts to action.
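
A minimal sketch of making that split explicit: each rung lists the checks that must be recorded as done before an output may leave the building. The check names come from the examples above; `REQUIRED_CHECKS`, `missing_checks`, and `may_release` are hypothetical names, and a real rung 4 workflow would likely require more than the single check shown.

```python
# Checks required per rung, following the examples in the text.
REQUIRED_CHECKS = {
    1: set(),                     # trust freely
    2: {"human_skim"},
    3: {"facts_cross_referenced", "citations_followed", "numbers_recomputed"},
    4: {"tool_of_record_check"},  # assumption: shown alone for brevity
}

def missing_checks(rung: int, completed) -> list:
    """Return the required checks that have not been recorded as completed."""
    return sorted(REQUIRED_CHECKS[rung] - set(completed))

def may_release(rung: int, completed) -> bool:
    """An output is releasable only when no required check is missing."""
    return not missing_checks(rung, completed)

print(may_release(1, []))                        # True
print(missing_checks(3, ["citations_followed"]))
# ['facts_cross_referenced', 'numbers_recomputed']
print(may_release(4, ["human_skim"]))            # False
```

Notice that the model’s confidence is not an input anywhere. The only thing that converts a draft into an action is a recorded check.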

A useful diagnostic: look at your AI workflows and ask, for each one, what would have to go wrong for a false claim to reach the audience. If the answer is just “the model would have to make an error,” the verification posture is simply trusting the model. For anything above rung 2, that’s a rung mismatch. If the answer is “the model would have to err, and the reviewer would have to miss it, and the tool of record would have to fail,” you have layered defense proportional to the stakes.
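
The arithmetic behind the layered answer can be sketched in a few lines. The failure rates below are invented for illustration, and the calculation assumes the layers fail independently. In practice failures may be correlated (a fluent error that fools the model’s user may also fool the reviewer), which would make the real figure higher.

```python
from math import prod

def p_false_claim_reaches_audience(layer_failure_probs):
    """Multiply per-layer failure probabilities, assuming independent layers."""
    probs = list(layer_failure_probs)
    for p in probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must be between 0 and 1")
    return prod(probs)

# Hypothetical rates: model errs 5%, reviewer misses 10%, tool of record fails 2%.
model_only = p_false_claim_reaches_audience([0.05])
layered = p_false_claim_reaches_audience([0.05, 0.10, 0.02])

print(f"model only: {model_only:.4%}")  # model only: 5.0000%
print(f"layered:    {layered:.4%}")     # layered:    0.0100%
```

Under these assumed numbers, two extra layers cut the chance of a false claim getting through by a factor of 500. The exact figures matter less than the shape: each layer multiplies, so even imperfect checks compound.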

What this changes

Two things shift once you accept the verification ladder.

Trust gets granular. Instead of “do I trust AI?” the useful question is “do I trust this output, for this task, at this rung, with this verification?” The answer is different for every combination. That’s not hedging. It’s operating with the right resolution.

Adoption gets unblocked. A lot of “we can’t use AI because it hallucinates” is really “we haven’t specified what rung the task is on, so we’re treating every use as rung 4 and finding AI inadequate.” Specify the rung. Apply the matching verification. You’ll unlock a lot of rung 1 and rung 2 uses that were blocked by rung 4 thinking, and you’ll avoid the rung 3 and rung 4 mistakes that come from treating them as rung 1.

The failure mode isn’t hallucination. It’s rung confusion.

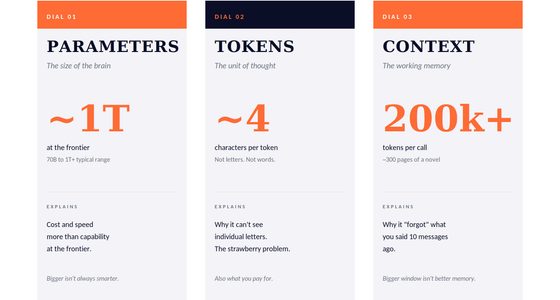

Next in Thorsten Meyer AI Foundations: which model you pick changes all of this. Models, providers, and the frontier — the market map.