By Thorsten Meyer — May 2026

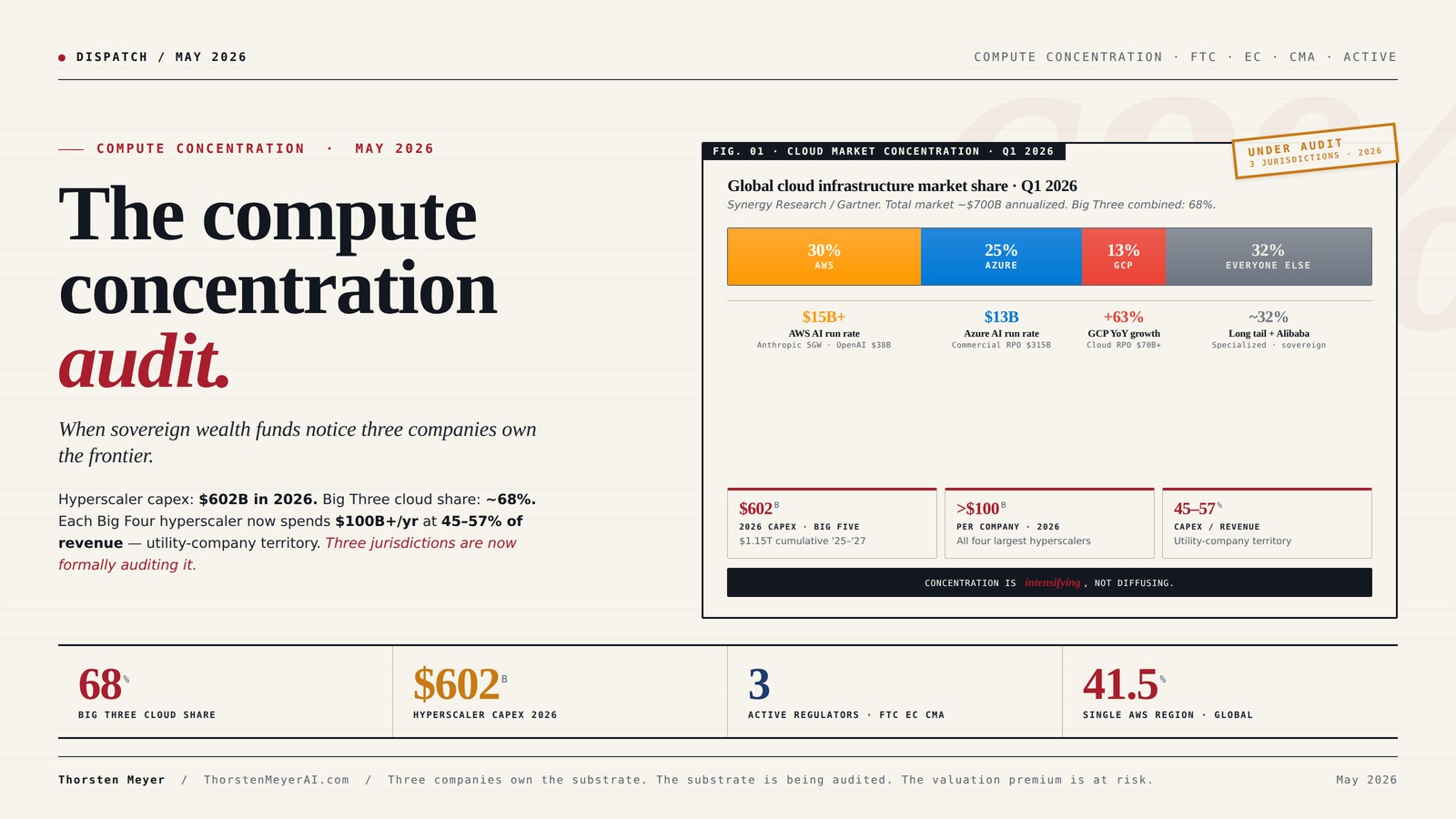

Hyperscaler capex is on track for $602 billion in 2026. Each of the four largest hyperscalers — AWS, Microsoft Azure, Google Cloud, Meta — will spend more than $100 billion individually. Capex now runs 45 to 57 percent of revenue at these companies, ratios that historically describe utilities and pipeline operators, not technology platforms. The Big Three cloud providers control roughly 68 percent of the global cloud infrastructure market. The Big Three plus Meta will pour over $400 billion into AI infrastructure this year alone.

Frontier AI runs on this substrate. Anthropic has committed to up to five gigawatts of AWS Trainium capacity. OpenAI has committed two gigawatts of Trainium starting in 2027 plus a $38 billion AWS deal that closed in March, on top of a separate $50 billion Amazon chips-for-equity arrangement and continued Azure obligations. The model labs do not own most of their own compute. They rent it. The renting relationships are the most consequential industrial dependency in the technology sector.

This dispatch is about the moment when that dependency became visible to the people whose job is to watch industrial concentration. The FTC has moved from the 2024 6(b) inquiry to active investigation. A formal compulsory demand against Microsoft, signed off under then-Chair Khan, was expanded under Chair Andrew Ferguson in 2025. The European Commission has set in motion the process of designating AWS and Azure as gatekeepers under the Digital Markets Act. The UK Competition and Markets Authority published preliminary findings on the cloud market in late 2025 and is now examining the partnership structures specifically.

The polite description of what is happening is “regulatory scrutiny.” The accurate description is that three jurisdictions are simultaneously running a structural audit on the most concentrated capital allocation in modern technology history, and the findings are starting to land.

This is not a piece about whether the audit produces enforcement action. That is uncertain and will play out over 18 to 36 months. This is a piece about why the audit is happening, what the findings already establish, and which strategic positions become more or less viable as the audit progresses. Three companies own the substrate that frontier AI runs on. That fact has consequences whether or not regulators act, because sovereign wealth funds and large institutional allocators are now visibly pricing it.

The dispatch on the EU sovereignty bet covered why Mistral’s open-weight Apache 2.0 strategy aligns with EU regulatory architecture. The dispatch on the 2028 model lab endgame treated compute access as a binding constraint on which labs reach frontier capability. This piece sits beneath both. The compute substrate is the layer below the labs, and its concentration is the structural fact that everything above it inherits.

The compute concentration audit.

When sovereign wealth funds notice three companies own the frontier.

Hyperscaler capex: $602B in 2026. Big Three cloud share: ~68%. Each Big Four hyperscaler now spends $100B+ per year at 45–57% of revenue — utility-company territory. Frontier AI runs on this substrate. Three jurisdictions are now formally auditing it.

Three companies. 68 percent. Of a $700B market.

Cloud is more concentrated than past technology cycles, and the AI workload growth is intensifying the concentration rather than diffusing it. The model labs above this substrate run on it. They cannot move freely.

The dollars that never leave the closed system.

The FTC’s most consequential analytic move was naming the pattern: cloud providers invest billions in AI labs; AI labs commit billions back through compute. Both companies’ financial statements show large numbers. The underlying cash flow between them is substantially smaller than either set of numbers suggests.

Three jurisdictions. Same direction. Compounding pressure.

Each track is on its own timeline and produces a different kind of constraint. The cloud providers can litigate each one in isolation. They cannot litigate three convergent investigations producing similar conclusions over 12–24 months.

FTC

Examining input access, switching costs, exclusivity rights, governance and consultation. Amazon-OpenAI deal characterized as quasi-merger designed to circumvent traditional review.

EC · DMA

Operational obligations: interoperability requirements, transparency, self-preferencing prohibitions. Constrains partnership behaviors without forcing structural separation.

CMA

Anti-competitive concerns identified: egress fees, technical lock-in, committed-spend agreements. Behavioral or structural remedies within powers. Likely template for EU and US.

Behavioral. Operational. Structural.

Probability that any jurisdiction issues a true structural remedy is low. Probability of meaningful behavioral and operational change is high. Across all three scenarios, the AI-infrastructure-platform valuation premium compresses.

Scenario A · Consent decrees · premium compresses 15–25%

Behavioral consent constrains partnership exclusivity, requires interoperability, prohibits self-preferencing. Big Three remain dominant. Sovereign wealth fund rebalancing real but modest. 18–36 mo.

Scenario B · Functional separation · premium compresses 25–40%

One+ jurisdiction requires functional separation of AI investment from cloud commercial. Specialized infrastructure + sovereign-cloud capture meaningful share. Model lab landscape diversifies materially.

Scenario C · Divestiture order · structural reorganization

Most likely EU. Forced divestiture of cloud-AI investment stakes or operational separation of cloud and AI. Historically least common antitrust outcome. Most consequential. 36–60 month reshape.

Three companies own the substrate. The substrate is being audited. The valuation premium is at risk. Sovereign wealth funds have started to rebalance.

Four assignments. By role.

Public-equity investors · Re-screen hyperscaler exposure for concentration risk.

AWS, Microsoft, Google still produce strong cash flows; AI-platform-of-record valuation premiums at risk over 18–36 months. Rebalance toward specialized AI infrastructure (CoreWeave, Lambda) and chip suppliers (Broadcom, TSMC, SK Hynix). Reallocate at the margin, don’t divest aggressively.

Sovereign wealth and pension fund allocators · The analog is Big Tobacco 2010–2014.

Pattern suggests 25–40% valuation-premium compression over 4–6 years if Scenarios A or B materialize. Begin incremental rebalancing now, not after the consent decrees publish. Sovereign-cloud, regional cloud, specialized AI infrastructure are the absorbing categories.

Enterprise CIOs and CTOs · Update vendor-assurance for compute-concentration risk.

Multi-cloud architectures that cost 20–40% more to operate now look meaningfully better as regulatory environment compresses single-vendor pricing power. Sovereign-cloud option is real procurement criterion for EU, UK, US public-sector and regulated-industry workloads.

Model lab strategists · Anthropic IPO disclosure October 2026 sets the template.

OpenAI’s PBC structure is the response template. Reflection AI and the spinout cohort have structural advantage of not yet being locked in. Optimal posture for any new model lab: multi-cloud minimum, ideally with material specialized-infrastructure exposure.

Executive Summary · The Concentration in One Table

| Metric | Number | Source |
|---|---|---|
| Big Three share of global cloud market | ~68% | Synergy Research, Q1 2026 |
| Q1 2026 share by provider | AWS 30% · Azure 25% · GCP 13% | Synergy / Gartner |
| Total 2026 hyperscaler capex (Big 5) | $602B | Goldman Sachs aggregate |
| Per-company capex (Big 4) | >$100B each | Q1 2026 disclosures |
| Capex as % of revenue | 45–57% | Across the Big 4 |
| 2025–2027 cumulative hyperscaler capex | $1.15 trillion | Goldman Sachs projection |
| AWS disclosed AI run rate | >$15B, triple-digit growth | Amazon Q1 2026 call |
| Azure disclosed AI run rate | $13B | Microsoft Q1 2026 call |
| Google Cloud RPO (backlog) | $70B+ | Alphabet Q1 2026 |
| Microsoft total commercial RPO | $315B | Microsoft Q1 2026 |
| Anthropic AWS commitment | 5 GW Trainium capacity | Public disclosures |
| OpenAI AWS commitment | $38B + 2 GW Trainium from 2027 | March 2026 deals |
| OpenAI–Amazon chips-for-equity | $50B | March 2026 |
| Active regulatory investigations | FTC (US) · EC (EU DMA) · CMA (UK) | All ongoing as of May 2026 |
| Single AWS region share of global AWS traffic | 41.5% (us-east-1) | Cloudflare Radar Q1 2026 |

The pattern: a small number of providers command an outsized share of compute, with revenue concentration intensifying rather than diffusing as AI workloads scale. The frontier AI labs run on this substrate. The regulators are now formally examining the structure of the dependency. Sovereign wealth funds are rebalancing exposure as the dependency becomes visible.
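One way to put a single number on the share figures in the table is the Herfindahl-Hirschman Index (HHI), the sum of squared percentage shares that competition agencies commonly use as a concentration screen. The sketch below is illustrative only. The table names three providers, so the remaining 32 percent has to be bounded rather than computed, and the `tail_cap` assumption (no unnamed provider is larger than GCP's 13 percent) is mine, not a sourced figure.

```python
# Shares from the summary table (percent of the global cloud infrastructure market).
top_three = {"AWS": 30.0, "Azure": 25.0, "GCP": 13.0}


def hhi(shares):
    """Herfindahl-Hirschman Index: sum of squared percentage shares."""
    return sum(s ** 2 for s in shares)


def hhi_bounds(named_shares, tail_cap):
    """Return (lower, upper) HHI bounds when only some shares are known.

    Lower bound: the unnamed remainder is fragmented across very many tiny
    firms, so its contribution is approximately zero.
    Upper bound: the remainder is packed into firms as large as tail_cap allows.
    """
    named = list(named_shares)
    remainder = 100.0 - sum(named)
    lower = hhi(named)
    full_firms, leftover = divmod(remainder, tail_cap)
    upper = lower + full_firms * tail_cap ** 2 + leftover ** 2
    return lower, upper


combined = sum(top_three.values())
# ASSUMPTION (not from the source): no unnamed provider exceeds 13%.
lower, upper = hhi_bounds(top_three.values(), tail_cap=13.0)

print(f"Top-three combined share: {combined:.0f}%")
print(f"HHI between {lower:.0f} and {upper:.0f}")
```

Under those assumptions the index lands between 1,694 and 2,068. For reference, the 2023 US Merger Guidelines treat markets above 1,800 as highly concentrated, so which side of that line the cloud market falls on depends on how the unnamed 32 percent is distributed.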

1. The substrate beneath the substrate

Most discussion of AI competitive dynamics treats the model labs as the unit of analysis. Anthropic versus OpenAI versus Google DeepMind. Frontier capability versus open weight. US versus China. Each of those framings is correct at its layer. None of them captures what is actually happening at the layer below.

The layer below is the compute substrate, and it is structured very differently from the layer above. The model labs are competitive — six or more credible Western players, plus four major Chinese labs, plus the European specialists. The compute substrate underneath them is not competitive in the same sense. Three providers — AWS, Microsoft Azure, Google Cloud — hold roughly two-thirds of global cloud infrastructure spend and are extending that share as AI workloads concentrate. Add Meta, and four companies account for the bulk of frontier-tier AI training and inference capacity in the Western world.

This is structurally different from past technology cycles. The internet built out across hundreds of competing infrastructure providers in the 1990s. Cloud computing in the 2010s was concentrated but with meaningful share in the 30 percent range across each of the top three. AI compute in the 2020s is concentrating into the same three companies with a fourth (Meta) operating at similar scale internally, while every credible frontier model lab is contractually committed to rent compute from one or two of the three for the foreseeable future.

The dependency is not abstract. Anthropic’s 5 gigawatts of AWS Trainium commitment is a hard contractual obligation that scales the lab’s capability ceiling in direct proportion to AWS’s chip and power delivery. OpenAI’s combined commitments to AWS, Azure, and now Oracle for Stargate represent a multi-cloud strategy that nonetheless leaves OpenAI structurally dependent on the same three to four providers. Google DeepMind operates on TPU capacity that Alphabet controls; the dependency is intra-corporate but no less structural.

This is the substrate that the FTC, the European Commission, and the CMA are now formally auditing. The 2024 FTC 6(b) study produced a staff report in early 2025. The compulsory demand on Microsoft expanded under Chair Ferguson in 2025. The Amazon-OpenAI deal of March 2026 was characterized in the FTC’s framing as a “quasi-merger designed to circumvent traditional antitrust review.” The EU’s DMA gatekeeper designation process is in motion. The CMA’s findings on cloud competition were preliminary in late 2025; they will become operational over 2026.

The audit is happening. The strategic question is what the audit produces, and what changes in capital allocation while it produces.

2. The “circular spending” framing

The FTC’s most consequential analytic move in the 2025 staff report was naming a specific structural pattern: cloud providers invest billions in AI labs, the AI labs commit to spend billions back on the same cloud providers’ infrastructure. The dollars cycle through both companies’ financial statements but never leave the closed system. The FTC term for this is “circular spending.”

The pattern is documented in detail. Microsoft invested at least $13 billion in OpenAI starting in 2019, with most of the investment delivered as Azure compute credits rather than cash. Amazon invested up to $8 billion in Anthropic, structured similarly. Google invested approximately $2 billion in Anthropic, on top of an earlier $300 million stake. Each of these investments came paired with multi-year compute commitments by the AI lab back to the investing cloud provider. The AI lab’s financial statements show large compute expenses; the cloud provider’s show large enterprise contracts; the underlying cash flow between them is substantially smaller than either set of numbers suggests.
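The gap between headline figures and cash can be made concrete with a toy model. This is a sketch under stated assumptions, not a reconstruction of any actual deal: the $13 billion investment figure comes from the paragraph above, but the 75 percent credit fraction and the $20 billion compute commitment are hypothetical inputs chosen only to illustrate the mechanism, and `CircularDeal` is an illustrative name.

```python
from dataclasses import dataclass


@dataclass
class CircularDeal:
    investment: float          # headline investment by the cloud provider, $B
    credit_fraction: float     # share of the investment paid as compute credits
    compute_commitment: float  # lab's headline compute commitment, $B

    def cash_to_lab(self) -> float:
        """Cash the provider actually wires to the lab."""
        return self.investment * (1 - self.credit_fraction)

    def cash_to_provider(self) -> float:
        """Cash the lab pays for compute once credits are used up."""
        credits = self.investment * self.credit_fraction
        return max(self.compute_commitment - credits, 0.0)

    def headline_total(self) -> float:
        """Sum of the two numbers that appear in announcements."""
        return self.investment + self.compute_commitment

    def cash_total(self) -> float:
        """Gross cash that changes hands in either direction."""
        return self.cash_to_lab() + self.cash_to_provider()


# HYPOTHETICAL inputs: only the 13.0 is taken from the text.
deal = CircularDeal(investment=13.0, credit_fraction=0.75, compute_commitment=20.0)

print(f"Headline total: ${deal.headline_total():.2f}B")
print(f"Cash to lab: ${deal.cash_to_lab():.2f}B")
print(f"Cash to provider: ${deal.cash_to_provider():.2f}B")
print(f"Gross cash moved: ${deal.cash_total():.2f}B")
```

With these made-up inputs, $33 billion of headline numbers corresponds to $13.5 billion of cash changing hands. Raising `credit_fraction` widens the gap, which is the pattern the circular-spending framing describes.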

There are three reasons regulators find this framing concerning.

Reason 1 · Market foreclosure. If a cloud provider’s investment in an AI lab gives it preferential access to the lab’s models — exclusive distribution, first-look rights, priority for new capabilities — then competing cloud providers cannot match the integration. Smaller AI labs cannot match the cloud provider’s investment terms. The structure forecloses competition at both layers simultaneously.

Reason 2 · Capital allocation distortion. The AI lab’s revenue is artificially inflated by cloud-credit investments that the lab uses to purchase the cloud provider’s product. The cloud provider’s enterprise revenue is artificially inflated by the lab’s compute spend, which is funded by the cloud provider’s investment in the lab. Neither company’s financial statements cleanly reflect the underlying economic activity, and other capital allocators (sovereign wealth funds, pension funds, public-equity investors) are pricing the wrong cash flows.

Reason 3 · Switching costs. An AI lab that has signed a multi-year compute commitment in exchange for an investment cannot easily migrate to a different cloud provider, even if the alternative provider would offer better terms or capability. The lab’s strategic flexibility is constrained by the structure of the original deal. From a regulatory perspective, structural switching cost is a competitive harm.

OpenAI’s March 2026 restructuring as a Public Benefit Corporation, followed by the AWS and Amazon deals, is the clearest test of the circular spending pattern’s stability. OpenAI used the PBC structure to argue that it was no longer Azure-exclusive, and signed deals with both Amazon and Oracle to demonstrate multi-cloud strategy. The FTC’s response, made visible through the “quasi-merger” framing in March, was that diversification across multiple cloud providers does not address the underlying concentration concern. If the AI lab is dependent on the same three to four providers regardless of how it splits the dependency, the structural problem persists.

This is the point at which the audit moves from theoretical concern to operational consequence.

3. The single-region concentration nobody talks about

The market-share numbers describe concentration at the company level. They understate the concentration at the geographic and operational level.

A single AWS region — us-east-1 in Northern Virginia — handles 41.5 percent of all AWS requests globally according to Cloudflare Radar Q1 2026 data. The top three US AWS regions (Virginia, Ohio, Oregon) account for 66.8 percent of global AWS traffic combined. Europe’s Frankfurt and Ireland regions together contribute 13.7 percent. Asia-Pacific hubs in Singapore, Tokyo, and Mumbai combine for 9.4 percent.
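A few derived figures fall straight out of those percentages. The arithmetic below uses only the numbers quoted in the paragraph above; the variable names are mine, and the "rest of world" line is a residual, not a published statistic.

```python
# Shares of global AWS requests quoted above (percent, Cloudflare Radar Q1 2026).
US_EAST_1 = 41.5      # Northern Virginia alone
TOP_THREE_US = 66.8   # Virginia + Ohio + Oregon
EUROPE_TWO = 13.7     # Frankfurt + Ireland
APAC_THREE = 9.4      # Singapore + Tokyo + Mumbai

# Residuals and ratios implied by the quoted figures.
ohio_plus_oregon = TOP_THREE_US - US_EAST_1
named_regions_total = TOP_THREE_US + EUROPE_TWO + APAC_THREE
rest_of_world = 100.0 - named_regions_total
virginia_within_us_top3 = US_EAST_1 / TOP_THREE_US

print(f"Ohio + Oregon combined: {ohio_plus_oregon:.1f}%")
print(f"Eight named regions: {named_regions_total:.1f}%")
print(f"All other AWS regions: {rest_of_world:.1f}%")
print(f"Virginia share of US top three: {virginia_within_us_top3:.1%}")
```

Read this way, Northern Virginia alone carries more traffic than Ohio and Oregon combined, and the eight named regions account for roughly nine-tenths of global AWS requests.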

The corresponding pattern at Azure is less granularly disclosed, but the structural picture is similar. Microsoft’s commercial RPO of $315 billion as of Q1 2026 is dominated by a small number of regions where the largest enterprise customers concentrate. Google Cloud’s $70 billion RPO backlog is similarly concentrated geographically.

The implication: a meaningful portion of global AI inference and a non-trivial portion of frontier training is running through a small number of physical sites in a small number of jurisdictions. A regulatory action affecting Northern Virginia, a power-grid issue in Northern Virginia, a network outage at a single hyperscaler region, or a geopolitical event affecting a major cloud hub cascades through the entire AI economy in ways that the headline market-share numbers do not communicate.

This is not a hypothetical. Major AWS outages in 2017, 2020, 2023, and 2024 each propagated through a meaningful fraction of the consumer internet and an even larger fraction of enterprise services. The same outage in 2026 propagates through frontier AI inference. The concentration risk has migrated from “websites go down” to “the substrate of the AI economy goes down” without the underlying physical infrastructure becoming meaningfully more distributed.

Sovereign wealth funds are pricing this. Norway’s Government Pension Fund Global and Singapore’s GIC have both signaled increased interest in geographically diverse infrastructure exposure as part of their AI thematic allocation through 2025-2026. The signal is small but visible: the largest institutional allocators are starting to treat geographic concentration as a risk variable, not a neutral fact.

4. The three regulatory tracks and their timelines

Three jurisdictions are running concurrent investigations. Each is on its own timeline and produces a different kind of pressure.

Track 1 · United States · FTC. The 6(b) study produced a January 2025 staff report identifying potential competition implications: input access, switching costs, exclusivity rights, governance and consultation rights. The compulsory demand on Microsoft was crafted by FTC staff and signed off by then-Chair Khan, then expanded under Ferguson. The Amazon-OpenAI quasi-merger framing in March 2026 indicated active enforcement consideration rather than passive monitoring. The realistic timeline for any consent decree, divestiture order, or structural remedy is 18 to 36 months from full investigation initiation, putting the earliest action in the late 2026 to early 2028 window. The DOJ has separately signaled interest in the cloud market specifically; coordination between the agencies is ongoing.

Track 2 · European Union · Digital Markets Act. The DMA’s gatekeeper designation framework was always going to apply to the largest cloud providers; the question is how aggressively the European Commission applies the obligations. The entry of AWS and Azure into the gatekeeper designation pipeline is a real but procedural development. The operational consequence is interoperability requirements, transparency obligations, and self-preferencing prohibitions that constrain the most concentrated business practices without forcing structural separation. The timeline is shorter than the FTC’s: gatekeeper obligations typically take effect within 6 to 12 months of designation. By mid-2027, AWS and Azure operating in the EU are likely to face binding constraints on the partnership behaviors that the FTC is also examining.

Track 3 · United Kingdom · CMA. The CMA’s cloud market investigation produced preliminary findings in late 2025 that identified anti-competitive concerns in egress fees, technical lock-in, and committed-spend agreements. The CMA’s powers to require behavioral or structural remedies are real. The timeline is intermediate — likely 12 to 24 months from preliminary findings to final orders. The CMA has the practical advantage that the UK market is large enough that compliance matters but small enough that the cloud providers can absorb the changes without major architectural disruption. Whatever the CMA orders is likely to become a template that propagates to the EU and possibly the US.

The three tracks together produce a regulatory pressure that compounds. The cloud providers can litigate, delay, and contest individual findings, but the convergence of three jurisdictions on similar conclusions over 12 to 24 months makes structural change more likely than not. This is the sovereign wealth fund insight: not whether any single track produces enforcement, but that three tracks are simultaneously moving in the same direction.

5. What sovereign wealth funds actually do when this happens

Sovereign wealth funds are not first responders to regulatory action. They do not buy or sell on press releases. They are slow, large, and consensus-driven. When they move, they move in size and they move durably. The pattern is recognizable from prior cycles.

The 2010-2014 Big Tobacco regulatory cycle is the closest historical analog. Tobacco companies retained significant earning power but progressively lost premium valuation multiples as regulatory pressure stacked across multiple jurisdictions. Sovereign wealth funds and large pension funds did not divest dramatically; they reallocated incrementally. Over four to six years, the structural valuation premium that tobacco had relative to consumer staples compressed by 25 to 40 percent. The companies remained profitable. They were no longer priced as growth assets.

The 2014-2018 European bank regulatory cycle produced a similar pattern at faster pace. European banks faced concurrent capital adequacy, anti-money-laundering, and structural-separation regulatory pressure from the ECB, the EBA, and national regulators. Sovereign wealth funds reallocated from European banks to North American banks and to non-bank financial alternatives. The valuation multiples compressed; the cash flows persisted; the long-term return on capital declined to a new equilibrium that was visibly lower than the pre-regulatory baseline.

The cloud-AI concentration cycle of 2025-2028 looks structurally similar. The hyperscalers retain enormous earning power, will continue to grow revenue at meaningful rates, and will continue to dominate the underlying market. The regulatory pressure does not destroy the businesses. It compresses the valuation premium that “AI infrastructure platform” currently commands. The capex-to-revenue ratios already at 45-57 percent constrain how much further growth can be funded internally; any constraint on the partnership-and-circular-spending structures further constrains how AI revenue scales relative to capex.

The strategic implication for sovereign wealth fund positioning: rebalance from concentrated hyperscaler exposure toward distributed alternatives. Three categories absorb the rebalancing flow.

Category 1 · Specialized AI infrastructure players. Companies whose entire business is AI compute, with structurally different cost economics: CoreWeave, Lambda, Together AI, Crusoe, Voltage Park. Each has secured material capital in 2024-2026. The thesis is that as hyperscalers face concentration pressure, specialized players capture marginal AI workload share at attractive economics.

Category 2 · Sovereign-cloud and regional alternatives. EU-jurisdiction infrastructure (Mistral Compute, Scaleway, OVH, T-Systems), Middle Eastern sovereign clouds (G42, Khazna), Indian alternatives, Japanese (KDDI, NTT, SoftBank). These were structural curiosities five years ago; they are now AI-procurement options with regulatory tailwinds.

Category 3 · Non-hyperscaler chip and infrastructure suppliers. Nvidia obviously, but also Broadcom, TSMC, SK Hynix, Samsung, Micron, and the long tail of data center infrastructure providers that supply all of the hyperscalers but are not concentrated themselves. The economic exposure to AI growth is preserved while the company-specific concentration risk is diluted.

These flows are visible in 2026 portfolio data from the larger sovereign wealth funds. They are not yet large enough to move the hyperscaler market caps materially. They will be by 2028 if the regulatory cycle plays out as the analog suggests.

6. The model lab consequences

The compute concentration audit has direct consequences for which model labs reach frontier-2028 capability and which do not. The 2028 endgame piece treated compute access as a binding constraint without disambiguating the layer at which the constraint operates. This piece updates that.

Anthropic is structurally tied to AWS. The 5 GW Trainium commitment is large, the partnership is deep, and AWS-specific custom silicon means the migration cost away from AWS is meaningful. If the FTC orders structural remedies on the Amazon-Anthropic partnership specifically — divestiture of the AWS investment stake, prohibition of exclusivity terms, compelled multi-cloud distribution — Anthropic’s compute capacity is not threatened, but the cost structure changes. The IPO disclosure documents in October 2026 will need to address this risk explicitly.

OpenAI is now the most multi-cloud of the major labs. Microsoft, Amazon, Oracle (Stargate), and reportedly Google Cloud and CoreWeave on the margins. The diversification reduces single-cloud dependency but does not address total compute concentration. From a regulatory standpoint, OpenAI’s structure is most consistent with a “circular spending across multiple counterparties” pattern rather than a “single cloud lock-in” pattern. The PBC restructuring was strategically designed to enable this.

Google DeepMind is internal. The compute concentration question for Google is whether Alphabet’s vertical integration — TPUs, Vertex AI, Gemini, Google Cloud — survives the DMA’s gatekeeper obligations on self-preferencing. The most likely outcome is that the DMA forces unbundling of TPU access from Google Cloud subscription requirements, which marginally reduces Google’s cost advantage but does not threaten the underlying capability. Probability the DMA produces structural separation of DeepMind from Alphabet: very low. Probability of operational unbundling: high.

Meta Superintelligence Labs runs primarily on its own infrastructure and does not face the cloud-partnership scrutiny of the others. Meta’s strategic constraint is internal — capex discipline, capability roadmap clarity, the “very technical question” disclosure issue — rather than regulatory.

xAI runs on Colossus, its own data center, with material additional capacity from Oracle. The lab faces less cloud-partnership scrutiny but has its own concentration problem at the chip layer (Nvidia GB300, with limited diversification).

The Chinese sphere operates outside the Western regulatory cycle entirely. DeepSeek, Qwen, Moonshot, and Zhipu run primarily on domestic Chinese cloud infrastructure (Alibaba Cloud, Tencent Cloud, Huawei Cloud) plus the increasingly Nvidia-independent Huawei Ascend stack that Zhipu’s GLM-5.1 demonstrated. The Chinese audit equivalent — the 2024 antitrust action on Alibaba Cloud, ongoing scrutiny of Tencent — operates on a different timeline and produces different remedies.

Reflection AI and the 12 founders cohort spinouts sit closer to the structural beneficiaries side of this. A regulatory environment that constrains hyperscaler-AI lab partnerships creates room for independent labs to negotiate without having to choose a single hyperscaler patron. The capital that flows to these cohorts in 2026-2027 is partly funded by sovereign wealth fund rebalancing away from concentrated hyperscaler exposure.

7. The audit’s actual outcome — three scenarios

I want to be honest about what the audit produces. The probability that any of the three jurisdictions issues a true structural remedy — forced divestiture, compelled separation, breakup — is low. The probability that the audit produces meaningful behavioral and operational changes is high. Three scenarios, with my probability estimates.

Scenario A · Behavioral consent (probability 60%). The FTC, EC, and CMA each negotiate consent decrees that constrain partnership exclusivity, require interoperability, prohibit self-preferencing, and impose transparency obligations on cloud-AI lab agreements. No company is broken up. The Big Three remain dominant. The valuation premium that “deeply integrated AI infrastructure stack” commands compresses by 15 to 25 percent over 18 to 36 months. Sovereign wealth fund rebalancing is real but modest.

Scenario B · Operational separation (probability 30%). One or more jurisdictions requires functional separation of AI investment activities from cloud commercial relationships. The companies are not divested, but the partnership-and-circular-spending structures are constrained to a degree that makes them substantially less effective as competitive moats. Specialized AI infrastructure providers and sovereign-cloud alternatives capture meaningful share. Hyperscaler valuation premium compresses by 25 to 40 percent. The model lab landscape diversifies cloud relationships materially.

Scenario C · Structural remedy (probability 10%). A jurisdiction — most likely the EC — orders structural divestiture of cloud-AI investment stakes or compels operational separation of cloud and AI businesses at one or more of the Big Three. This is the historically least common antitrust outcome and would be the most consequential. Hyperscaler structures become meaningfully different over 36 to 60 months. The AI economy reorganizes around different patron relationships.

Across all three scenarios, the substrate concentration that the audit identified is real and is being actively re-examined by capital allocators. Even Scenario A produces meaningful flow changes. Scenarios B and C produce structural changes. None of them produces business destruction at the Big Three; all of them compress the AI-infrastructure-platform valuation premium.
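The scenario probabilities and compression ranges can be combined into a rough probability-weighted figure. This is a back-of-envelope sketch, not a forecast: the text gives no compression range for Scenario C, so rather than invent one the calculation conditions on Scenarios A and B only and renormalizes their weights. The function name `expected_compression` is illustrative.

```python
import math

# (name, probability, (low %, high %) premium compression, or None if not stated)
scenarios = [
    ("A: behavioral consent", 0.60, (15.0, 25.0)),
    ("B: operational separation", 0.30, (25.0, 40.0)),
    ("C: structural remedy", 0.10, None),  # no range given in the text
]


def expected_compression(items):
    """Probability-weighted low/high compression over scenarios with a range.

    Scenarios without a stated range are excluded and the remaining
    probabilities are renormalized, so the result is conditional on them.
    """
    quantified = [(p, rng) for _, p, rng in items if rng is not None]
    weight = sum(p for p, _ in quantified)
    low = sum(p * rng[0] for p, rng in quantified) / weight
    high = sum(p * rng[1] for p, rng in quantified) / weight
    return low, high, weight


# Sanity check: the three stated probabilities should cover all outcomes.
assert math.isclose(sum(p for _, p, _ in scenarios), 1.0)

low, high, covered = expected_compression(scenarios)
print(f"Conditional on the {covered:.0%} of outcomes with a stated range:")
print(f"expected premium compression of {low:.1f}% to {high:.1f}%")
```

Conditional on A or B, the weighted range works out to roughly 18 to 30 percent. Any assumption about Scenario C that is at least as severe as B would push the unconditional figure higher.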

8. What changes in 2026-2027 because of this

Three structural shifts are visible already.

Shift 1 · Cloud-AI partnership disclosure becomes mandatory in IPO documents. Anthropic’s October 2026 S-1 will have to address the AWS partnership in detail because the regulatory environment is now sufficiently active that omission would be a disclosure failure. OpenAI’s eventual S-1 will face the same requirement at much greater complexity given the multi-cloud structure. The disclosure standards established in these IPO documents will propagate to other labs and other partnerships. This is the structural development that compounds over time.

Shift 2 · Specialized AI infrastructure capital flows accelerate. CoreWeave is public; Lambda has secured material 2026 capital; Together AI, Crusoe, and Voltage Park have all expanded. Sovereign wealth fund rebalancing toward this category is visible in the deal flow. By end of 2026, the specialized cohort collectively will represent a material fraction of frontier-tier AI training and inference capacity. Not 50 percent. Probably 8 to 15 percent. Enough that the hyperscalers can no longer assume monopoly access to AI workloads.

Shift 3 · Sovereign-cloud procurement preference becomes a real procurement criterion. The EU AI Act enforcement starting August 2 builds on this. Mistral Compute, Scaleway, and the EU sovereign-cloud cohort capture procurement that would have flowed to AWS or Azure absent the regulatory environment. The capture is meaningful in absolute terms (multi-billion euro by 2027) but small relative to total hyperscaler revenue (1 to 3 percent). The structural significance is that the substrate concentration becomes geographically diversified in a way the headline market-share numbers cannot show.

These three shifts together describe how the compute substrate evolves over 2026-2027. None of them produces a binary break in concentration. All of them produce the slow, durable rebalancing that prior regulatory cycles have produced.

What to Do This Quarter

1. Public-equity investors. Re-screen hyperscaler exposure for concentration risk. AWS, Microsoft, and Google still produce strong cash flows; their valuation premiums for “AI-platform-of-record” are at risk over 18-36 months. Rebalance toward specialized AI infrastructure (CoreWeave, Lambda) and toward chip and infrastructure suppliers (Broadcom, TSMC, SK Hynix). Do not divest aggressively; reallocate at the margin.

2. Sovereign wealth fund and pension fund allocators. The analogs are Big Tobacco 2010-2014 and European banks 2014-2018. The pattern suggests 25-40% valuation-premium compression over four to six years if Scenarios A or B materialize. Begin incremental rebalancing now, not after the consent decrees are published.

3. Enterprise CIOs and CTOs. Update vendor-assurance frameworks to include compute-concentration risk. Multi-cloud architectures that cost 20-40% more to operate now look meaningfully better when the regulatory environment compresses single-vendor pricing power. The sovereign-cloud option is a real procurement criterion for EU, UK, and increasingly US public-sector and regulated-industry workloads.

4. Model lab strategists. Anthropic’s IPO disclosure in October 2026 sets the template. OpenAI’s PBC structure is the response template. Reflection AI and the spinout cohort have the structural advantage of not yet being locked into deep partnership relationships. The optimal strategic posture for any new model lab is multi-cloud at minimum, ideally with material specialized-infrastructure exposure.
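To put the 25-40% compression range from item 2 on an annual footing, here is a minimal sketch. It assumes the compression compounds at a constant rate each year, which is a simplification introduced here, not a claim from the historical analogs. The function name is hypothetical.

```python
def annualized_compression(total: float, years: float) -> float:
    """Constant yearly compression rate r such that (1 - r) ** years == 1 - total."""
    return 1.0 - (1.0 - total) ** (1.0 / years)


# Mildest case in the stated range: 25% compression spread over six years.
slowest = annualized_compression(0.25, 6)

# Sharpest case in the stated range: 40% compression over four years.
fastest = annualized_compression(0.40, 4)

print(f"Implied annual compression: {slowest:.1%} to {fastest:.1%}")
```

Under that assumption the range implies roughly 5 to 12 percent of the premium eroding per year, which is the pace an incremental rebalancing schedule would need to keep ahead of.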

The Strategic Read

The compute substrate beneath the AI economy is more concentrated than the headline market-share numbers suggest, and the concentration is now being formally audited by three jurisdictions simultaneously. The audit will not produce structural breakup. It will produce behavioral, operational, and disclosure constraints that compound over 18 to 36 months.

The valuation premium that “AI infrastructure platform” currently commands at AWS, Microsoft, and Google is at meaningful risk over that horizon. Not because the businesses fail; they will not. Because the partnership-and-circular-spending structures that made the AI-infrastructure-platform thesis especially attractive are visibly constrained by the regulatory cycle. Capital allocators who watch for this kind of inflection have begun rebalancing.

The strategic question for everyone downstream — model labs, enterprise AI buyers, AI startups, AI investors — is whether they are positioned for the rebalancing or whether they will pay the cost of being on the wrong side of it. Three companies own the substrate. That fact is real and is being priced. The question is who is pricing it accurately and who is still pricing the pre-2024 narrative.

The answer determines a meaningful fraction of what the AI economy looks like by 2028. The audit is the inflection. Sovereign wealth funds are noticing.

Three companies own the substrate. The substrate is being audited. The valuation premium for owning the substrate is at risk. Sovereign wealth funds have started to rebalance. The audit’s findings cascade through every layer of the AI economy that runs on top of it.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Sources

- Synergy Research Group, Q1 2026 Cloud Infrastructure Market Share (AWS 30% / Azure 25% / GCP 13%)

- Goldman Sachs, Hyperscaler Capex Projections 2025-2027 ($1.15 trillion cumulative)

- Introl Blog, Hyperscaler CapEx Hits $602B in 2026 (per-company >$100B, capex 45-57% of revenue)

- Tom Tunguz, The $112 Billion Quarter — Hyperscalers Bet The Farm On AI (April 29, 2026)

- Cloudflare Radar, Cloud Provider Traffic Share Q1 2026 (us-east-1 41.5%, top three US regions 66.8%)

- Federal Trade Commission, FTC Issues Staff Report on AI Partnerships & Investments Study (January 2025)

- Federal Trade Commission, FTC Launches Inquiry into Generative AI Investments and Partnerships (Section 6(b) orders, January 2024)

- Fortune, Trump’s FTC moves ahead with broad Microsoft antitrust probe (March 2025)

- FinancialContent / MarketMinute, The Great Cloud Divorce: Microsoft and OpenAI Clash as Amazon Charges into the AI Supremacy Vacuum (March 18, 2026)

- BusinessTats, Cloud Market Share Statistics (Big Three 68% of $395B market 2025, $268B Big Three revenue)

- HeyGotrade, AWS vs Google Cloud vs Azure: Hyperscaler Stocks 2026 (AWS AI run rate >$15B, GCP RPO $70B+)

- Tech-Insider, Microsoft AI Spending 2026: $150B Capex Analysis (Azure AI run rate $13B)

- European Commission DMA gatekeeper designation process (ongoing)

- UK Competition and Markets Authority cloud market investigation (preliminary findings late 2025)