Reading Clark’s closing — the bivalent 60%/40% credence, the 30% by 2027 alternative, and what it means when a frontier-lab co-founder publicly says “I’m persuaded.”

By Thorsten Meyer — May 2026 · The Coda

This is the coda to the Clark essay franchise. Eight pieces in — five Clark Series pieces and three Outside Read pieces. This piece engages with the closing section of Jack Clark’s Import AI #455, titled “Staring into the black hole,” where Clark states his actual credence and writes the personal-conclusion paragraph that gives the essay its emotional weight.

The closing matters in a way distinct from the rest of the essay. The argument is in the prior sections. The closing is what Clark concludes from the argument. Two paragraphs. The first contains the explicit probability numbers. The second contains the personal-credence statement that crosses a specific discourse threshold.

What follows: the bivalent forecast walk-through, the 30% by 2027 alternative, the creativity hinge that returns to Outside Read 02, the discourse-crossing significance of Clark’s “ghost story” reframe, what it means when the builders are publicly persuaded, the “profound change” claim taken as a serious assessment rather than rhetorical flourish, and the franchise close.

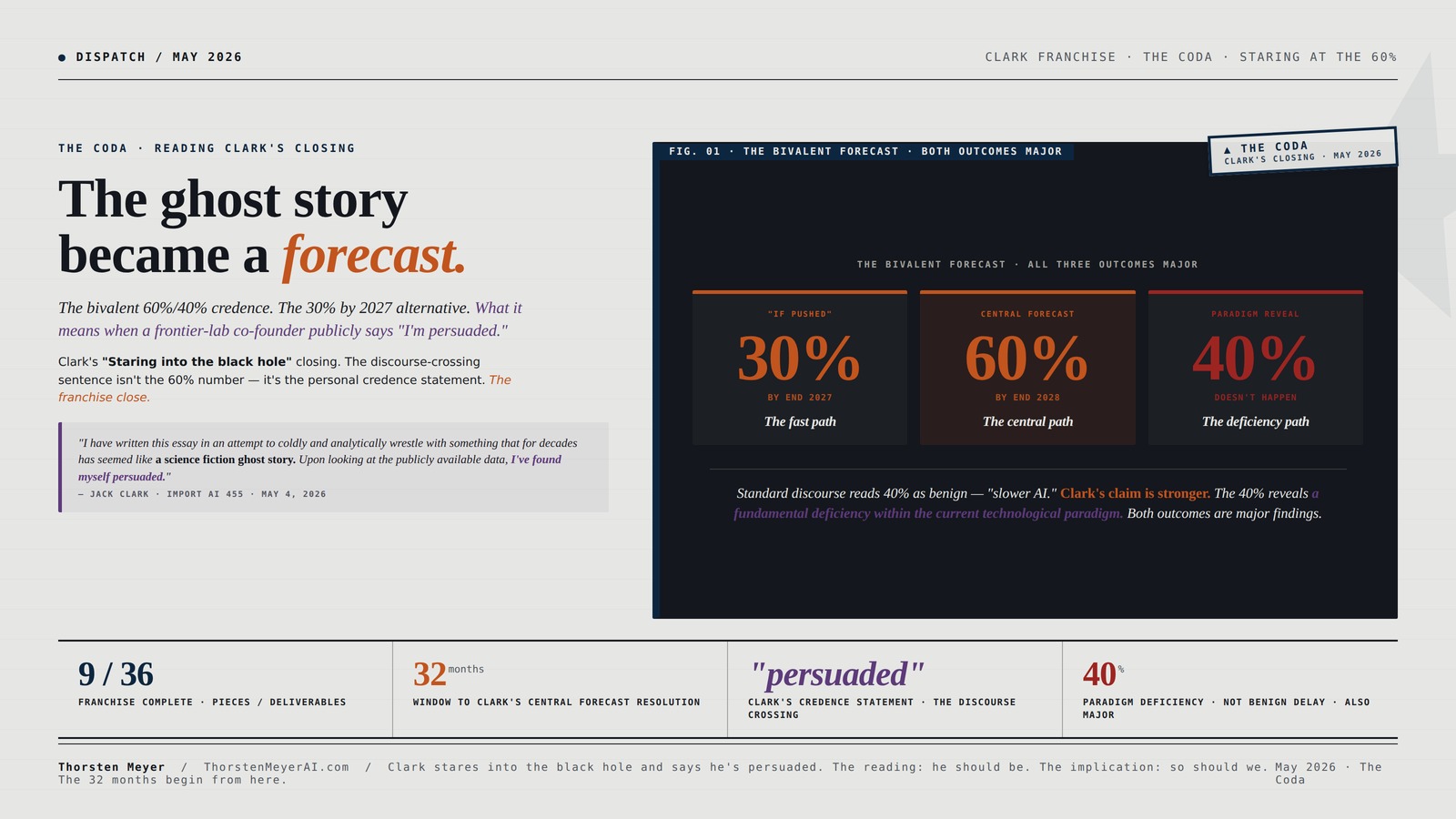

The headline finding for the coda: the bivalent forecast is the most important technical claim in Clark’s closing. 60% probability of automated AI R&D by end of 2028. 40% probability of something else. And the 40% is not “things will be slower than expected.” Clark explicitly says the 40% means “we will have revealed some fundamental deficiency within the current technological paradigm and it’ll require human invention to move things forward.” Both outcomes are major findings. The franchise has been about reading the 60% side. The coda reads the 40% side and the structural implication of the bivalence itself.


I · The bivalent forecast

Clark’s actual numbers from the closing:

- 30% probability of automated AI R&D by end of 2027 (the alternative he gives “if pushed”)

- 60% by end of 2028 (his central forecast)

- 40% of not seeing it by end of 2028. In that case, Clark writes, “we will have revealed some fundamental deficiency within the current technological paradigm and it’ll require human invention to move things forward.”

The structural significance is in how the 40% should be read. Standard discourse around frontier-AI forecasts treats lower probabilities of takeoff as good news — slower trajectory, more institutional response time, less disruption. That reading is incomplete. Clark is saying: if it doesn’t happen by 2028, the correct conclusion isn’t “AI is slower than expected.” The correct conclusion is “the current technological paradigm has a fundamental limitation that we hadn’t recognized.”

Two readings of the 40%:

The benign reading. Capability progress hits some natural bottleneck — compute supply, data scarcity, architectural limits, human bandwidth for supervision. Progress slows. The timeline extends. Automated AI R&D arrives in 2029-2032 rather than 2028. The institutional response gets more time. The transition unfolds at a more manageable pace. Many readers of Clark’s essay default to this reading. It is the comforting reading.

Clark’s reading. Capability progress hits a fundamental ceiling implicit in the paradigm. The engineering trajectory extrapolates up to some point and then stops extrapolating. We discover that the assumption “more compute plus more data plus better algorithms produces continued capability gains” was true within a regime and false outside it. The paradigm needs replacement. Back to the drawing board, possibly for years before the next architectural breakthrough enables a different trajectory to begin.

These are very different conclusions. The benign reading is “delayed AI.” Clark’s reading is “we don’t understand what we were doing.” The benign reading buys institutional time. Clark’s reading produces an entirely different research and policy landscape. Either way, the outcome is a major structural finding.

The point I want to make explicit: the 40% is not a non-event. Whatever happens, something significant happens. Either automated AI R&D arrives by end of 2028 with all the consequences the franchise documents, or it doesn’t arrive and the field learns it has been operating on incomplete foundations. The bivalence is the structural finding. Both possibilities deserve institutional planning.


II · The 30% by 2027 alternative

Clark provides the 30% by 2027 alternative “if pushed.” This deserves attention separately from the central 60%/2028 number.

The 30%/2027 timeframe ends roughly 20 months after Clark’s May 2026 publication. Within this window:

- OpenAI’s “automated AI research intern by September 2026” target (announced in October 2025; roughly four to five months after Clark’s publication)

- Anthropic’s Q4 2026 IPO timing (within window)

- The corporate calendar markers Outside Read 03 catalogs

Clark is essentially assigning 30% probability to the corporate calendar being met as published, with the resulting capability cascade producing automated AI R&D by end of 2027. The 30% reflects compound uncertainty: will the labs hit September 2026 as advertised, and will the resulting capability be sufficient to produce automated AI R&D within an additional 15 months.

This matters because it tells us how Clark internally weights the corporate-commitment evidence. If he thought the September 2026 target was certain to be met and certain to produce automated AI R&D by end of 2027, he’d assign higher than 30%. He doesn’t — the 30% reflects real uncertainty on both legs.
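The two-leg structure can be made concrete with a little arithmetic. The sketch below is my own illustration and not Clark’s model. It assumes the 30% is simply the product of a hypothetical P(the September 2026 target is met) and a hypothetical P(the resulting capability yields automated AI R&D by end of 2027). The leg values are invented. The sketch also ignores paths where the calendar slips and automation still arrives in time.

```python
def joint_probability(p_target_met: float, p_sufficient_given_met: float) -> float:
    """Chance that both legs happen, treating the 30% as a simple two-leg product."""
    for p in (p_target_met, p_sufficient_given_met):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")
    return p_target_met * p_sufficient_given_met


def implied_second_leg(joint: float, p_target_met: float) -> float:
    """Given a joint credence and a first-leg value, solve for the second leg."""
    if p_target_met <= 0.0 or joint > p_target_met:
        raise ValueError("joint credence cannot exceed the first leg")
    return joint / p_target_met


# Hypothetical leg values (not Clark's): quite different splits all land at 30%.
for first, second in [(0.6, 0.5), (0.75, 0.4), (0.9, 1 / 3)]:
    print(f"{first:.2f} x {second:.2f} = {joint_probability(first, second):.2f}")

# Even with 90% confidence in the calendar, the second leg need only be about 33%.
print(f"{implied_second_leg(0.30, 0.9):.2f}")
```

The arithmetic shows that a 30% joint credence is compatible with moderate confidence in each leg. That is why it reads as real but bounded trust in the corporate calendar.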

But 30% is not a small number for a horizon of roughly 20 months. Outside the AI field, a domain expert assigning 30% probability to a specific named outcome inside a specific window of that length is making a strong claim. In my reading, most forecasts of specific outcomes within short windows sit in the 5-15% range, so 30% is at least twice that baseline.

The discourse around Clark’s essay has focused on the 60%/2028 number. The 30%/2027 deserves equal attention. A 30% probability of automated AI R&D arriving inside a window of roughly 20 months, a window that includes corporate-calendar targets the labs are actively executing toward, is a near-term forecast with meaningful policy implications. If the 30% scenario plays out, the institutional response window narrows from 32 months to roughly 20 months. That changes what’s feasible to build.
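The window lengths in this section can be recomputed from dates. This is a minimal sketch under four assumptions:

- Clark’s publication date is May 4, 2026, taken from the Sources list.
- “End of year” means December 31.
- The OpenAI target means the end of September 2026. This is my own reading of “September 2026.”
- A month is approximated as 365.25 / 12 days.

```python
from datetime import date

AVG_DAYS_PER_MONTH = 365.25 / 12  # calendar-average month, about 30.44 days


def months_between(start: date, end: date) -> float:
    """Approximate number of months from start to end."""
    return (end - start).days / AVG_DAYS_PER_MONTH


publication = date(2026, 5, 4)  # Import AI 455 publication date
milestones = {
    "OpenAI research-intern target (assumed end of Sep 2026)": date(2026, 9, 30),
    "30% scenario deadline (end of 2027)": date(2027, 12, 31),
    "60% scenario deadline (end of 2028)": date(2028, 12, 31),
}

for label, deadline in milestones.items():
    print(f"{label}: {months_between(publication, deadline):.1f} months")
```

Under these assumptions the script prints about 4.9, 19.9 and 31.9 months, consistent with the “roughly 20” and “roughly 32” month windows.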

III · The creativity hinge · returning to Outside Read 02

Clark’s stated reason for the 60% not being higher: “I think AI research contains some requirement for creativity and heterodox insights to move forward.”

This is exactly the residual question Outside Read 02 engaged with. Engineering is automated. Research is the residual. The residual question is whether creativity is a permanent moat or compressed perspiration.

Clark’s 60% reflects a specific position on this question: creativity is partly binding but not fully. The 40% non-occurrence reflects scenarios where creativity is more binding than the engineering trajectory anticipates. The 60% occurrence reflects scenarios where engineering at sufficient scale produces creativity-equivalent outputs.

The Outside Read 02 reading argued that creativity may be compressed perspiration — discovered through volume of well-executed engineering work, not through a separate spark. If that reading is correct, Clark’s 60% is conservative. The actual probability is higher because the residual binds less than Clark’s framing assumes. If Clark’s reading is correct, the 60% is well-calibrated; creativity is somewhat-but-not-fully binding. The two readings diverge by an amount that matters for institutional planning.

Clark himself describes the math results as “suggestive” of AI creativity: the Erdős-1051 discovery, centaur math discovery papers, the gradual accumulation of AI-assisted research outputs. The suggestion is in the right direction. Clark’s 60% is consistent with treating those signals as real but incomplete evidence. As more data accumulates over the next 12-24 months, the creativity question resolves empirically. If the Erdős-discovery rate scales with attempt volume, the optimistic reading wins. If it doesn’t, the pessimistic reading wins.

Clark’s 60% sits between these two readings. That’s a reasonable epistemic position given current data. It’s also a position whose institutional implications depend on which way the empirical resolution falls, and that resolution is the empirical question of the next 18 months.

IV · The ghost story reframe

Clark’s closing paragraph contains the sentence I think is most important in the entire essay for the public discourse — and it is not the 60%/2028 number.

“I have written this essay in an attempt to coldly and analytically wrestle with something that for decades has seemed like a science fiction ghost story. Upon looking at the publicly available data, I’ve found myself persuaded that what can seem to many like a fanciful story may instead be a real trend.”

This is the discourse-threshold crossing.

For decades, AGI / automated AI R&D / human-level AI has had the cultural status of science fiction. Speculative in a specific way — distant, uncertain, more interesting as a concept than as a near-term prediction. Movies. Books. Philosophy seminars. Singularity University talks. Not policy briefings. Not corporate strategy documents. Not central-bank stress tests. The science-fiction framing was load-bearing for how institutions related to the topic. If something is science fiction, it’s not your department.

Clark’s closing is the public acknowledgment that this status has changed. A frontier-lab co-founder — who would know — is publicly saying the science-fiction framing no longer applies. Not because the future is now, not because AGI is imminent in the singularity sense, but because the trend lines are real, the corporate commitments are real, the capital is real, and the institutional response is structurally inadequate to a trend the labs themselves are pursuing.

The structural significance: when the people building the systems publicly say “this is no longer science fiction,” the discourse changes. Not immediately — cultural inertia is substantial. The institutions that organize their thinking around “AI as speculative future” don’t restructure on the timescale of a single essay. But over 12-36 months, the framing shifts. From speculative future to operational near-term. From “what if” to “when.”

Clark’s essay is part of producing that shift. It is the kind of essay that gets cited in policy briefings, central-bank speeches, corporate-strategy documents, IPO disclosure language. It legitimizes treating automated AI R&D as a near-term scenario worthy of institutional planning. That legitimization is itself an event in the discourse.

For external observers, this matters operationally. If you’ve been reading AI discourse as “speculative future stuff” — interesting but not in your professional remit — Clark’s closing is the explicit invitation to update. The signal is from the people who would know. Their persuasion is the information.

V · What it means when the builders are publicly persuaded

Three related observations:

(a) The builders are internally persuaded enough to commit calendar targets and capital scale. OpenAI’s September 2026 target. Anthropic’s Q4 2026 IPO. Recursive Superintelligence’s $500M Series A. Mirendil’s named mission. These are not the actions of organizations hedging against a speculative scenario. They are the actions of organizations executing a plan they believe will succeed. The corporate evidence base Outside Read 03 catalogs is the operational form of “we believe this.”

(b) Clark’s essay is the public articulation of an internal consensus. Specifically Anthropic-internal. The Anthropic view is that automated AI R&D is achievable and is being pursued. The essay externalizes this view in a form suitable for public discourse. It is what the internal corporate-strategy documents say, translated for public consumption with appropriate hedging. The 60% probability is the externalized form of the internal credence. The “ghost story” closing is the externalized form of “we no longer treat this as speculative.”

(c) The persuasion crossing has implications for the alignment and safety community. If the builders are publicly saying “we are building this and we are persuaded by the data that we will succeed,” the safety community has roughly 20 to 32 months to be ready. The alignment work that doesn’t ship by mid-2027 is not in the production stack when the calendar targets are hit. The technical capacity to verify alignment claims about systems being built on those timelines doesn’t currently exist at scale. The institutional capacity to enforce safety constraints across competing labs doesn’t currently exist at all. Both are races against the corporate calendar.

VI · The “profound change” claim taken seriously

Clark’s actual closing words: “If this trend continues, we may be about to witness a profound change in how the world works.”

This is not rhetorical flourish. The franchise has documented why it’s not.

Eight pieces. The capability data is real. The deployment data is operational. The labor market consequences are empirical. The corporate commitments are public. The capital cascade is observable. The forecast is structurally a corporate plan. The verification infrastructure is inadequate. All of these are individually documented; the aggregation is the case for taking “profound change” seriously.

When a frontier-lab co-founder writes “profound change in how the world works” in the closing of an essay published in the IPO disclosure preparation window, it should be read as the executive’s actual assessment, not as marketing copy. The marketing language would be softer. The corporate-strategy language would be more hedged. The “profound change” language is what people use when they’re trying to communicate something they actually believe is happening.

The right response is to take the statement seriously. Not as alarm. Not as triumph. As data. A senior executive at a frontier lab publicly says “the world is about to change.” That assessment has informational content beyond the data the assessment is calibrated against. The franchise has read that content from outside the frontier lab. The reading: it should be taken seriously.

Taking it seriously doesn’t mean accepting it without analysis. The franchise has been the analysis. It means treating “the world is about to change” as a serious near-term scenario for institutional planning, not as a speculative future scenario for cultural commentary. The shift in framing is the response.

VII · The franchise close

This is the coda. Beyond the structured eight-piece Clark essay franchise. Reading the closing from outside the frontier lab.

The aggregate franchise:

- Clark Series 1 · Clark says it out loud — 60%/2028 is the institutional fact.

- Clark Series 2 · The Benchmark Saturation Cascade — six benchmarks saturating on overlapping timelines.

- Clark Series 3 · The Compounding Error Problem — recursive self-improvement compounds errors. 0.999^500 = 0.606.

- Clark Series 4 · The Machine Economy — capital-heavy, human-light economy emerges. 5,000× labor cost ratio.

- Clark Series 5 · The Co-Founder’s Black Hole — synthesis · four threads converge on the 32-month window.

- Outside Read 01 · The Coding Singularity — coding is the wedge into recursive self-improvement.

- Outside Read 02 · Engineering Automated, Research Residual — 99% perspiration automated; 1% inspiration may be compressed perspiration.

- Outside Read 03 · The Forecast Is the Plan — five labs commit to one stated goal. The forecast is endogenous.

- The Coda (this piece) · The Ghost Story Became a Forecast — bivalent forecast, 30%/2027, persuasion crossing.
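The Clark Series 3 arithmetic is easy to check. The sketch assumes each of n steps in a self-improvement chain succeeds independently with the same probability p. That is my simplification, not a model of any real training pipeline. Under it, the chance the whole chain stays error-free is p to the power n. Inverting that formula gives the per-step reliability needed for a target chain reliability.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that n independent steps, each succeeding with p_step, all succeed."""
    return p_step ** n_steps


def required_step_reliability(target: float, n_steps: int) -> float:
    """Per-step success rate needed for the whole chain to succeed with probability target."""
    return target ** (1 / n_steps)


# The headline figure: 500 steps at 99.9% per-step reliability.
print(f"{chain_success(0.999, 500):.3f}")

# Per-step reliability needed to keep a 500-step chain at 90% end-to-end.
print(f"{required_step_reliability(0.9, 500):.5f}")
```

Under these assumptions, holding a 500-step chain at 90% end-to-end reliability needs roughly 0.99979 per step. Real errors are neither independent nor uniformly fatal, so this shows the shape of the argument rather than a measurement.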

Aggregate structural finding across all nine pieces: the Clark essay catalogs the public evidence base for automated AI R&D by 2028 from a co-founder of one of the labs publicly committed to building it. The capability data supports the trajectory. The deployment is operational. The labor market is empirically responding. The corporate commitments are public. The capital cascade is observable. The competitive dynamics force continued execution. The verification infrastructure is inadequate. And the builders are publicly persuaded.

These different forms of evidence aggregate to one structural finding. The franchise is the read on the aggregation from outside the frontier lab.

VIII · Staring at the 60%

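The franchise documents what we currently know. The next 32 months produce the empirical resolution. Three possibilities follow. Clark’s 60% is cumulative and already includes the 30% by end of 2027, so the three paths carry roughly 30%, 30%, and 40% of the probability: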

30%/2027 path. The corporate calendar gets met. OpenAI’s September 2026 target ships. The capability cascade proceeds. Automated AI R&D arrives by end of 2027. The institutional response has roughly 20 months from now. Most institutional capacity does not get built in time.

60%/2028 path (the central forecast). The corporate calendar slips somewhat but the trajectory holds. Automated AI R&D arrives sometime in 2028. The institutional response has roughly 32 months from now. The window the synthesis piece describes. Some institutional capacity gets built; most doesn’t.

40% paradigm-deficiency path. The trajectory hits a fundamental limitation. The trend doesn’t extend. The field discovers it has been operating on incomplete foundations. The response is “back to the drawing board.” The institutional response window is functionally indefinite — until the next paradigm produces a similar trajectory, which could be years.

All three outcomes are major. The institutional response should be built to be useful in any of them. The asymmetric cost-of-being-wrong analysis from Outside Read 02 applies: capacity built for the 30%/60% paths is useful; capacity built for the 40% path is also useful (for the field-correction work). There is no scenario where building the response capacity now is wasted. There are scenarios where not building it is catastrophic.
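The asymmetry can be sketched as a toy expected-loss comparison. This is my own illustration, not the analysis in Outside Read 02. It converts Clark’s cumulative credences (30% by end of 2027, 60% by end of 2028) into weights for three mutually exclusive paths. It then compares building response capacity now against waiting. The loss figures are hypothetical units. They encode only the essay’s claim that building costs about the same on every path, while not building is costly mainly when automation arrives.

```python
def path_weights(by_2027: float, by_2028: float) -> dict[str, float]:
    """Turn cumulative credences into weights for three mutually exclusive paths."""
    if not 0.0 <= by_2027 <= by_2028 <= 1.0:
        raise ValueError("cumulative credences must be non-decreasing and within [0, 1]")
    return {
        "arrives_by_2027": by_2027,
        "arrives_in_2028": by_2028 - by_2027,
        "paradigm_deficiency": 1.0 - by_2028,
    }


def expected_loss(weights: dict[str, float], losses: dict[str, float]) -> float:
    """Probability-weighted loss across the paths."""
    return sum(weight * losses[path] for path, weight in weights.items())


weights = path_weights(0.30, 0.60)

# Hypothetical losses in arbitrary units, for illustration only.
build_now = {"arrives_by_2027": 1.0, "arrives_in_2028": 1.0, "paradigm_deficiency": 1.0}
wait = {"arrives_by_2027": 20.0, "arrives_in_2028": 10.0, "paradigm_deficiency": 0.0}

print({path: round(w, 2) for path, w in weights.items()})
print(f"build now: {expected_loss(weights, build_now):.1f}")
print(f"wait:      {expected_loss(weights, wait):.1f}")
```

The specific numbers do not drive the conclusion; the shape of the losses does. Building wins in expectation as long as waiting is expensive enough on the arrival paths relative to the cost of building.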

IX · Closing

The franchise opened with Jack Clark Says It Out Loud — the observation that a co-founder of a frontier lab had publicly said 60%+/2028 for automated AI R&D and that this changed what could be said in the discourse. The franchise closes here with the observation that the same essay contains the discourse-crossing the public is least equipped to interpret.

The “ghost story became a forecast” sentence is not the headline of any news story. The 60% number is. But the sentence is doing more structural work than the number. The number is a technical claim calibrated to specific data. The sentence is the executive saying “this is no longer in the science-fiction department” — and meaning it.

For the next 32 months, what gets built institutionally — alignment research, coordination mechanisms, transition support, compute allocation frameworks, verification infrastructure, political-economy reform, public-discourse capacity — depends substantially on how seriously the discourse takes the persuasion crossing. If the crossing is taken seriously, capacity gets built. If it’s read as “interesting essay from an AI guy,” capacity does not get built.

The franchise has been an attempt to make the case that the crossing should be taken seriously. Eight pieces and the coda are the case. Whether the case lands is determined by what readers do with it — what they build, who they call, what they fund, what they vote for, what they prepare for, what they invest in, what they teach, what they write next.

Clark stares into the black hole and says he’s persuaded. The franchise has been about reading that statement seriously. The reading: he should be. The implication: so should we.

That is the franchise close. The 32 months begin from here.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

The Franchise · Nine Pieces

Clark Series:

- Jack Clark Says It Out Loud · Clark Series 1

- The Benchmark Saturation Cascade · Clark Series 2

- The Compounding Error Problem · Clark Series 3

- The Machine Economy · Clark Series 4

- The Co-Founder’s Black Hole · Clark Series 5 Synthesis

Outside Read Series:

- The Coding Singularity · Piece 1

- Engineering Is Automated. Research Is the Residual. · Piece 2

- The Forecast Is the Plan. · Piece 3

The Coda · this piece · The Ghost Story Became a Forecast.


Sources

- Jack Clark · Import AI 455: Automating AI Research · “Staring into the black hole” closing section · May 4, 2026 · jack-clark.net

- Clark’s 30%/2027 and 60%/2028 explicit probabilities · from the closing paragraph

- Clark’s “fundamental deficiency within the current technological paradigm” framing · from the closing paragraph

- Clark’s “science fiction ghost story” reframe · from the closing personal statement

- Anthropic IPO target $900B · Q4 2026 · public reporting

- OpenAI’s September 2026 “automated AI research intern” calendar target · Sam Altman · October 28, 2025

- Erdős-1051 result · Aletheia/Gemini · math team paper · 2025-2026

- Centaur math discovery · UBC/UNSW/Stanford/DeepMind · 2026

- The eight prior pieces of the Clark essay franchise · ThorstenMeyerAI.com · May 2026