Why Jack Dorsey’s “World Model” Company Looks Like Success for a Year — Then Compounds the Wrong Way

By Thorsten Meyer — ThorstenmeyerAI.com

In February 2026, Block cut more than 4,000 roles — approximately 40% of its workforce — and Jack Dorsey told shareholders the reason was “intelligence tools.” He said most companies were late, and would reach the same conclusion within a year.

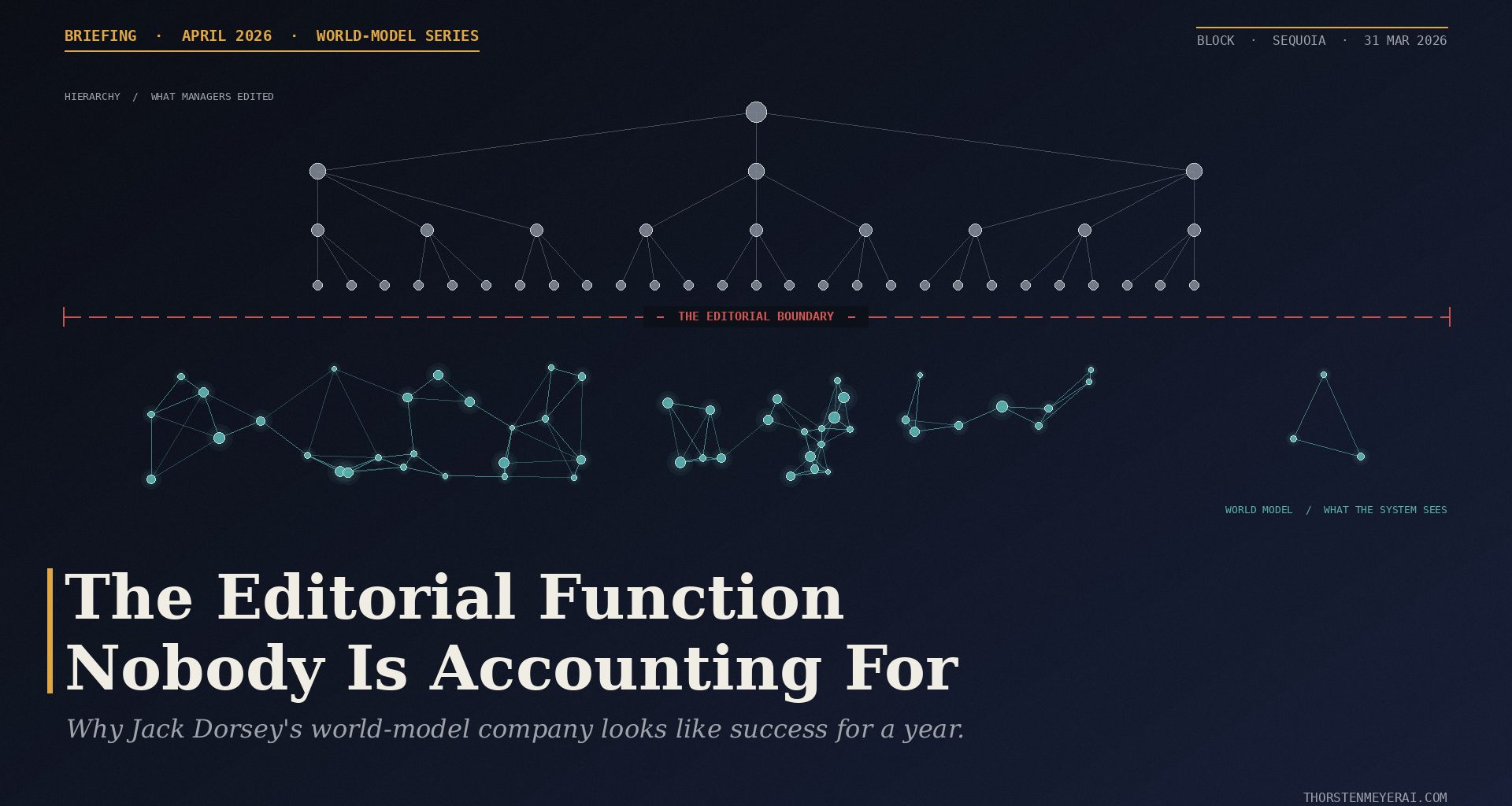

On March 31, Dorsey and Sequoia’s Roelof Botha published the blueprint behind that decision. The essay is called From Hierarchy to Intelligence. It argues that the management layer itself is what AI is about to replace, not through smarter copilots or flatter teams, but by building the company as a “mini-AGI” in which a pair of continuously updated world models absorbs what managers used to do: aggregate context, relay decisions, maintain alignment. In place of that layer, Block keeps three roles (individual contributors, directly responsible individuals, and player-coaches) and pushes everyone to “the edge” where the system can’t yet reach.

Briefing · April 2026 · World-Model Series

The Editorial Function Nobody Is Accounting For

Jack Dorsey’s “From Hierarchy to Intelligence” is the blueprint every mid-market CEO will see this quarter. Here is why badly-implemented world models compound the wrong way — and how to tell which side of the line yours is on.

4,000+ Block roles cut (February 2026)

~40% of Block’s total workforce

3 roles remaining: IC · DRI · Player-Coach

31 Mar: essay co-published by Block & Sequoia

— The Thesis —

Every management revolution has a failure mode. This one’s failure is that it looks like success for a year. Dashboards stay clean. Reports keep flowing. Underneath, decision quality degrades — one small editorial choice at a time.

01 What managers actually did

The editorial function that gets replaced — and misread as judgment.

Managers did not just route information. They edited it. They decided which five signals of thirty mattered, which twenty-five to sit on, and what didn’t belong in the decision at all. World models replace routing cleanly. They replace editing with something that feels like judgment and isn’t.

Three properties make this function hard to automate — and all three are underweighted in most implementations: it is selective under ambiguity, it is accountable (the loop closes when a human gets it wrong), and it is political (much of what managers edit is not information; it is conflict).

02 Three architectures, three failure modes

The same label. Three different bets on what “understanding” means.

Architecture 01: Vector Database

Understanding emerges from embedding everything and retrieving by semantic similarity.

✕ Failure mode

Overconfident retrieval. The system cannot tell you what it doesn’t know, because it doesn’t know that. The editorial line is drawn inside the retrieval step — the wrong place.

◆ Where the boundary sits

Inside the retrieval weights. Opaque. Per-query.

Architecture 02: Structured Ontology

Understanding emerges from explicit structure — objects, relationships, and formal rules.

✕ Failure mode

Premature formalization. The schema fossilizes the present. Edge cases and hybrid states — where judgment matters most — are exactly what the ontology cannot represent.

◆ Where the boundary sits

Inside the schema. Rigid. Set once, painful to change.

Architecture 03: Signal-Driven

Understanding emerges from honest signal. “Money is the most honest signal in the world.” (Block’s bet.)

✕ Failure mode

Signal monoculture. The unmeasured parts of the business — culture, trust, internal argument — become structurally invisible to the system that now “sees everything.”

◆ Where the boundary sits

Inside the signal definition. Baked in at config time.

Which you pick matters less than whether you draw an explicit boundary layer before anything else. Almost nobody does.

03 Five principles — compound or rot

The difference between a world model that gets smarter each month and one that quietly decays.

Signal fidelity over signal volume

30% of your data, cleanly captured, beats 100% of your data if half of it is gamed. Volume is not fidelity.

Earned structure, not imposed structure

An ontology extended through use compounds. One imposed by a six-month consulting engagement fossilizes.

Outcome encoding is not optional

Every output must close the loop to a measurable downstream outcome. Unwired loops degrade silently.

Organizational resistance is a feature

The people pushing back hardest are usually the ones doing the editorial function well. Listen before you reorganize them out.

Accumulated reality compounds. Accumulated reports don’t.

Transactions, shipped code, customer outcomes — these are contact with reality. Dashboards and status updates are summaries of it. Systems that eat their own summaries develop organizational dementia.

04 Which architecture fits your company

A mapping from company type to paradigm.

Signal-Driven Architecture

Transactional businesses with direct customer signal — fintech, marketplaces, payments, usage-heavy consumer SaaS. Your edge is the data. Block’s thesis applies. The risk is monoculture — deliberately ingest at least one structurally different signal class alongside the primary one.

Structured Ontology

Regulated enterprises with complex objects and relationships — financial services, healthcare, logistics, defense, large operational businesses. The domain pays back formal structure, and regulation requires the explainability pure retrieval cannot give you. Keep the schema small, let it earn its scope.

Knowledge-Work (The Hard Case)

Consulting, agencies, law, research, editorial, mid-market B2B. The data is mostly conversations and documents — exactly what vector databases were built for, and exactly where the failure mode lives. Start vector-first, but layer lightweight ontology (client, engagement, decision, risk) on top and wire one or two clean signals for outcome grading. All three architectures, boundary explicit from day one.

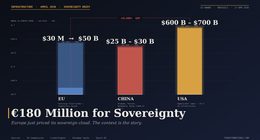

Within a week, agency founders were posting their implementations. Enterprise vendors started rebranding existing knowledge-graph and RAG products as “world models.” A Medium post from Lagos arguing for a different architectural bet was making the rounds. Palantir’s ontology team published a response in spirit, if not in name.

The idea is sound. A large share of what fills managers’ calendars is work software can now do faster and cheaper, and the companies that automate it will be structurally faster than the ones that don’t. I’m not here to argue with the thesis. I’m here to argue with the implementation that’s about to be sold to every mid-market CEO in Europe and North America over the next six quarters.

Every management revolution since the first org chart has produced a specific failure mode. This one’s failure is that it looks like success for a year.

Badly implemented, a world model produces a simulation of organizational intelligence. Dashboards stay clean. Reports keep flowing. Status gets synthesized. Underneath, the quality of decisions degrades — structurally, one small editorial choice at a time — because the system is making judgment calls it isn’t equipped to make, and the humans who used to catch those calls are no longer in the room.

By the time it’s visible in results, several quarters are gone, and the damage reads as “execution was off” or “the market shifted” rather than what it actually was: a system drew the line between information and judgment in the wrong place. Or didn’t draw it at all.

Three different architectures are being sold as “world models” right now — vector databases, structured ontologies, and signal-driven systems. Each one gets the information-versus-judgment boundary wrong in its own specific way. Which one you pick matters less than whether you build an explicit boundary layer before anything else. Most implementations I’m seeing skip that step entirely.

This briefing covers what the next six quarters of world-model implementations will actually look like, where they’ll fail, and how to tell if yours is compounding or rotting.

I. The Editorial Function Nobody Accounts For

The Dorsey–Botha essay is unambiguous about what middle management was for: routing information, precomputing decisions, maintaining alignment. That’s the Roman-army-to-railroads-to-Frederick-Taylor lineage they trace, and it’s accurate as history.

It’s incomplete as a present-tense description of what good managers actually do.

Good managers did not just route information. They edited it. They decided what mattered and what didn’t. They chose which five signals of thirty to surface upward and which twenty-five to sit on until next quarter. They knew the difference between a customer complaint that meant “we have a bug” and one that meant “the deal is gone in two weeks.” They synthesized, yes, but synthesis is a form of judgment, not a form of routing.

This editorial function has three properties that make it genuinely hard to automate, and all three are underweighted in most world-model implementations I’ve seen:

It’s selective under ambiguity. A good manager ignores 80% of the signal on their desk every day. What they choose to ignore is as important as what they choose to act on. A vector database doesn’t ignore anything; it weights. An ontology doesn’t ignore anything; it classifies. Neither of these is the same thing as judgment about what doesn’t belong in the decision at all.

It’s accountable. When a manager makes an editorial call and it’s wrong, they own it. The loop closes. Next time they read that kind of signal, they read it differently. A world model that gets an editorial call wrong doesn’t own anything. If you don’t build explicit outcome encoding — which I’ll come back to — the loop never closes and the same error compounds.

It’s political. A lot of what managers actually edit is not information; it’s conflict. Two functions disagree. A VP wants something the team knows is wrong. A promising project is being protected beyond its useful life because the executive sponsor can’t admit it’s dead. An honest world model would surface all of this. An implemented world model does not, because the people configuring it don’t want it to.

A world model replaces this function with something that feels like judgment but isn’t. The failure mechanism is specific: the system generates plausible-sounding outputs at the interface layer (summaries, priorities, recommendations) that look like editorial choices. A human downstream treats them as editorial choices. They aren’t. They’re weighted retrievals or ontology traversals or signal aggregations, dressed up in the grammar of judgment.

This is the part that’s invisible until it’s structural. You don’t get a bug report when a world model surfaces the wrong five items out of thirty. You get a trendline, two quarters later, that reads as execution failure.

II. Three Architectures, Three Failure Modes

“World model” is being used as a branding term for at least three genuinely different technical bets. The Dorsey–Botha essay gestures at all three without distinguishing them, which is part of why implementations are about to go in wildly different directions under the same label.

Architecture One: The Vector Database Approach

The bet here is that understanding emerges from embedding everything. Decisions, conversations, documents, commits, support tickets — all of it gets vectorized, stored, and retrieved by semantic similarity. When the system needs “context,” it retrieves the nearest neighbors and lets a language model synthesize.

This is the fastest path to a working demo. It’s also the fastest path to a world model that’s confidently wrong.

The failure mode is overconfident retrieval. A vector database has no model of why two things are similar, only that they are. When you ask it for “everything relevant to our churn problem in the SMB segment,” it returns a plausible-looking list. What it does not return is the thing that didn’t get written down — the conversation in a hallway, the signal a manager sat on, the context that never made it into the corpus. The system cannot tell you what it doesn’t know, because it doesn’t know that.

The information-versus-judgment line in a pure vector architecture is drawn inside the retrieval step, which is exactly the wrong place. Every retrieval is a tiny editorial act, and the editorial criteria are opaque.
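To make the overconfident-retrieval failure concrete, here is a minimal sketch in plain Python. The three-dimensional "embeddings", the document names, and the 0.8 similarity floor are all invented for illustration; no real vector database or embedding model is involved. The point is only that a top-k lookup always returns k items, even for a query that nothing in the corpus is about.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query, corpus, k=2):
    """Return the k most similar documents, however weak the match is."""
    scored = [(cosine(query, vec), name) for name, vec in corpus.items()]
    return sorted(scored, reverse=True)[:k]

def top_k_with_floor(query, corpus, k=2, floor=0.8):
    """Same retrieval, but allowed to come back empty (floor is an assumption)."""
    return [(score, name) for score, name in top_k(query, corpus, k) if score >= floor]

# Toy vectors standing in for embeddings; all names are hypothetical.
corpus = {
    "pricing_memo": [0.9, 0.1, 0.0],
    "support_ticket": [0.8, 0.3, 0.1],
    "offsite_notes": [0.1, 0.9, 0.2],
}

on_topic = [1.0, 0.2, 0.0]
off_topic = [0.0, 0.1, 1.0]  # nothing in the corpus is about this

plain_hits = top_k(off_topic, corpus)               # still two "relevant" results
floored_hits = top_k_with_floor(off_topic, corpus)  # empty list
```

A floor like this only lets the system say "nothing here is close." It does nothing about the hallway conversation that never entered the corpus, which is why the editorial call still needs a human owner.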

Architecture Two: The Structured Ontology Approach

The bet here is that understanding emerges from explicit structure. You build a formal model of the business — objects, relationships, rules — and you ground the AI’s reasoning in that structure. Palantir’s Ontology is the canonical example. Atlan and TopQuadrant ship variants. Every ontology-first vendor you’re about to hear from is betting on this paradigm.

This is the slowest path to a working demo. It’s also, when it works, the most defensible.

The failure mode is premature formalization. An ontology forces you to decide what things are before the system has seen enough to know. The shape of your business two years from now is not the shape of your business today, and a heavily ontologized world model tends to fossilize the present. Worse, the places where the ontology is wrong are the places where judgment matters most — the edge cases, the novel situations, the conflicts between two legitimate ways of classifying the same thing.

The information-versus-judgment line in an ontology architecture is drawn inside the schema. If your schema says a customer is a customer and a partner is a partner, the system cannot tell you that a particular account is currently behaving like both and needs to be handled as a hybrid. A human can. Your ontology cannot.
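The customer-versus-partner example can be sketched in a few lines. This is an illustration under my own assumptions, not how Palantir or any other vendor models accounts: the class names, the `needs_human_review` flag, and the account name are hypothetical. The rigid schema forces exactly one type per account; the looser one lets an account carry several roles and flags the cases the schema did not anticipate.

```python
from dataclasses import dataclass
from enum import Enum

class AccountType(Enum):
    CUSTOMER = "customer"
    PARTNER = "partner"

@dataclass(frozen=True)
class RigidAccount:
    name: str
    type: AccountType  # exactly one class, decided at schema time

@dataclass(frozen=True)
class EarnedAccount:
    name: str
    roles: frozenset  # may hold several roles at once
    needs_human_review: bool = False

def classify(name, buys_from_us, resells_for_us):
    """Assign roles from observed behavior and flag anything that is not one clean role."""
    roles = set()
    if buys_from_us:
        roles.add(AccountType.CUSTOMER)
    if resells_for_us:
        roles.add(AccountType.PARTNER)
    # Zero roles or more than one role is a case for a person, not the schema.
    return EarnedAccount(name, frozenset(roles), needs_human_review=len(roles) != 1)

hybrid = classify("Acme GmbH", buys_from_us=True, resells_for_us=True)
plain = classify("Beta Ltd", buys_from_us=True, resells_for_us=False)
```

`RigidAccount` has no way to express the hybrid at all. `EarnedAccount` does not resolve it either; it just refuses to hide it.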

Architecture Three: The Signal-Driven Approach

The bet here is that understanding emerges from honest signal. Money is the example Dorsey and Botha use — every transaction is a fact about someone’s life — and Block’s advantage is that it sees both sides of millions of them daily. Usage telemetry, product analytics, support volume, revenue cohorts: the richer and more direct the signal, the better the model.

This is the cleanest path in theory and the most deceptive in practice.

The failure mode is signal monoculture. Honest signal is real. So is the fact that any single signal, no matter how honest, is a lossy projection of reality. Transactions tell you what people bought, not what they nearly bought and why they didn’t. Usage tells you what the product does, not what it should have done. A signal-driven world model converges hard on whatever is measured, which means the unmeasured parts of the business — culture, trust, the quality of internal argument — become structurally invisible.

The information-versus-judgment line in a signal-driven architecture is drawn inside the signal definition. Which transactions count. How events are attributed. What counts as a conversion. Every one of those is an editorial decision made once, at configuration time, and then baked in. If it’s wrong, the whole model tilts.
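A small sketch shows how much weight the phrase "what counts as a conversion" carries. The event log and the three definitions below are invented for illustration. The same eight events produce three different conversion rates depending on a choice that is usually made once in a config file.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    user: str
    kind: str  # "signup", "trial_start", or "purchase"
    amount: float = 0.0

# Hypothetical event log for four users.
EVENTS = [
    Event("a", "signup"), Event("a", "trial_start"), Event("a", "purchase", 20.0),
    Event("b", "signup"), Event("b", "trial_start"),
    Event("c", "signup"), Event("c", "purchase", 0.0),  # fully discounted order
    Event("d", "signup"),
]

def conversion_rate(events, counts_as_conversion):
    """Share of users with at least one event matching the chosen definition."""
    users = {e.user for e in events}
    converted = {e.user for e in events if counts_as_conversion(e)}
    return len(converted) / len(users)

def any_purchase(e):
    return e.kind == "purchase"

def paid_purchase(e):
    return e.kind == "purchase" and e.amount > 0

def trial_or_purchase(e):
    return e.kind in ("trial_start", "purchase")

rates = {
    "any_purchase": conversion_rate(EVENTS, any_purchase),            # 0.5
    "paid_purchase": conversion_rate(EVENTS, paid_purchase),          # 0.25
    "trial_or_purchase": conversion_rate(EVENTS, trial_or_purchase),  # 0.75
}
```

None of the three definitions is wrong. Each is an editorial decision, and a model trained on one of them will never see the other two.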

III. Draw the Boundary Explicitly, or Don’t Build It

Here’s the part most implementations skip.

Before you pick an architecture, you draw an explicit boundary layer between what the system is allowed to report and what a human has to decide. Not a UI disclaimer. Not a “recommendation confidence score.” A structurally enforced boundary that says: these classes of output are information, these classes of output are judgment, and the system is not permitted to render judgment as information.

Almost nobody is doing this. Every vendor demo I’ve watched over the last month slides over the boundary because the demo is more impressive when the system “just tells you what to do.” That’s the whole sales pitch. The moment the demo says “and here’s where you, the human, have to make the actual call,” it stops being a world model and starts being a dashboard, which is a much worse sales motion.

The reason you build the boundary first is that every architecture decision downstream — which signals to ingest, which ontology to adopt, which retrieval strategy to use — is constrained by where the boundary sits. Try to draw the boundary after the system is running and you will lose the political argument. Nobody wants to hear that the expensive thing they just deployed is only allowed to surface information, not decisions.

If you take one thing from this piece, take this: the boundary layer is the product. The architecture is an implementation detail.
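One way to read "structurally enforced" is that the boundary lives in the type system rather than in the UI. The sketch below is a minimal illustration under my own assumptions: the class names, the `JUDGMENT_CLASSES` policy set, and the example strings are all hypothetical and do not describe Block's system. Outputs in a judgment class can only leave the system once a named human has decided them.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Information:
    """Something the system may report on its own authority."""
    text: str
    source: str

@dataclass(frozen=True)
class JudgmentRequest:
    """A call the system may frame but never make."""
    question: str
    evidence: tuple
    decided_by: Optional[str] = None
    decision: Optional[str] = None

class BoundaryViolation(Exception):
    pass

# Hypothetical policy: output classes that always require a named human.
JUDGMENT_CLASSES = {"prioritization", "staffing", "account_risk"}

def emit(output_class, text, source, evidence=()):
    """Route an output to the right side of the boundary by its class."""
    if output_class in JUDGMENT_CLASSES:
        return JudgmentRequest(question=text, evidence=tuple(evidence))
    return Information(text=text, source=source)

def publish(item):
    """Refuse to release a judgment that no human has owned."""
    if isinstance(item, JudgmentRequest) and item.decided_by is None:
        raise BoundaryViolation(f"No human owner recorded for: {item.question}")
    return item

def decide(request, human, decision):
    """Record who made the call; returns a new, publishable request."""
    return JudgmentRequest(request.question, request.evidence, human, decision)

fact = publish(emit("metric", "Ticket volume rose week over week", "support_queue"))
pending = emit("account_risk", "Is this renewal at risk?", "crm", evidence=[fact])
resolved = publish(decide(pending, "j.doe", "escalate to account lead"))
```

Calling `publish(pending)` directly raises `BoundaryViolation`. The design choice worth noticing is that the set of judgment classes is policy, owned by a person, and sits outside whichever retrieval, ontology, or signal layer produces the text.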

IV. Five Principles That Determine Whether It Compounds or Rots

These hold regardless of which of the three architectures you pick. They’re the difference between a world model that gets smarter every month and one that becomes a liability faster than you can notice.

1. Signal fidelity over signal volume. Most companies are configuring world models on whatever data they already have. This is backwards. The cheapest intervention in any world model is cutting the signals that are distorted, delayed, or incentive-corrupted. A system built on 30% of your data, cleanly captured, outperforms one built on 100% of your data, half of which is gamed. Dorsey and Botha are right about one thing unambiguously: the model is only as good as the signal. Volume is not fidelity.

2. Earned structure, not imposed structure. If you’re going ontology-first, resist the urge to model everything upfront. Let the structure emerge from the decisions the system is actually being asked to support. An ontology earned through use compounds. An ontology imposed by a consultant in a six-month engagement fossilizes. The test is simple: when a genuinely novel customer situation shows up, does your schema extend gracefully, or does it require a schema migration and a steering-committee meeting?

3. Outcome encoding is not optional. Every output the world model produces has to be connected — mechanically, not aspirationally — to a measurable downstream outcome. Did the thing the model recommended actually help? Did the signal it surfaced actually matter? If you can’t close this loop, the world model is writing with no one grading the paper. This is the single most common gap in real implementations. Teams ship the retrieval layer, ship the synthesis layer, ship the interface, and then never wire outcomes back. Without outcome encoding, the system degrades silently.

4. Organizational resistance is a feature, not a bug. The managers who will push back hardest on the world model are, disproportionately, the ones doing the editorial function well. Their objection is not “you’re taking my job.” Their objection is “the system is about to make a call it doesn’t know it’s making.” When you hear that objection, slow down. It is, specifically, the signal you most need to attend to — because the system cannot produce it itself, and the people who can are about to be reorganized out of the room. A world model implementation that encounters no resistance is almost certainly one that’s going to fail quietly.

5. Accumulated reality compounds; accumulated reports don’t. The difference between a world model that gets smarter every month and one that plateaus is whether it’s accumulating contact with reality or accumulating summaries of reality. Transactions are contact. Code that ships and gets used is contact. Customer outcomes are contact. Dashboards, status updates, and executive briefings are summaries. A system that mostly eats its own summaries develops a kind of organizational dementia: confident, fluent, increasingly detached from the thing it’s supposed to be modeling.
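Principle 3 is the easiest of the five to make mechanical. The sketch below assumes a hypothetical in-memory ledger; the class names, the `renewal_outreach` decision class, and the sample recommendations are invented for illustration. Every recommendation is logged when it is issued, graded when someone checks the result, and the report shows how much of the loop is actually closed.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Recommendation:
    rec_id: str
    decision_class: str
    text: str
    outcome: Optional[bool] = None  # None means nobody ever checked

@dataclass
class OutcomeLedger:
    records: dict = field(default_factory=dict)

    def log(self, rec):
        """Record a recommendation at the moment it is issued."""
        self.records[rec.rec_id] = rec

    def grade(self, rec_id, helped):
        """Wire the downstream outcome back to the recommendation."""
        self.records[rec_id].outcome = helped

    def report(self, decision_class):
        """Summarize how many loops are closed and how the graded ones fared."""
        recs = [r for r in self.records.values() if r.decision_class == decision_class]
        graded = [r for r in recs if r.outcome is not None]
        return {
            "issued": len(recs),
            "loop_closed": len(graded) / len(recs) if recs else 0.0,
            "helped": sum(r.outcome for r in graded) / len(graded) if graded else None,
        }

ledger = OutcomeLedger()
for i in range(4):
    ledger.log(Recommendation(f"r{i}", "renewal_outreach", f"Call account {i}"))
ledger.grade("r0", True)
ledger.grade("r1", False)

summary = ledger.report("renewal_outreach")
# summary == {"issued": 4, "loop_closed": 0.5, "helped": 0.5}
```

The number to watch is `loop_closed`, not `helped`. A system with a high hit rate on the 10% of outputs anyone graded is still writing with no one grading most of the paper.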

V. Which Architecture Fits Your Company

The Dorsey–Botha essay makes a specific argument about Block: the economic graph of millions of merchants and consumers, both sides of every transaction, is the kind of proprietary signal that justifies a signal-driven world model. Fair. Block has something most companies do not.

Most companies do not look like Block. Here’s the mapping I’d use:

If you’re a transactional business with direct customer signal — fintech, marketplaces, payments, consumer SaaS with heavy usage telemetry — signal-driven is the right bet. Your edge is the data. Block’s thesis applies. The risk is signal monoculture, which you manage by deliberately ingesting at least one structurally different signal class alongside the primary one.

If you’re a regulated enterprise with complex objects and relationships — financial services, healthcare, logistics, defense, any large operational business — structured ontology is the right bet. Your edge is that the domain is complex enough that formal structure pays for itself, and regulation requires explainability that pure retrieval cannot give you. Palantir built a business on this. The risk is premature formalization, which you manage by keeping the schema small and letting it earn its scope.

If you’re a knowledge-work company — consulting, agencies, law firms, research, editorial, most mid-market B2B — this is the hardest case and the most common one. Your “data” is mostly conversations and documents, which is exactly what vector databases were built for. So the default implementation is vector-first. And the default implementation is also where the failure mode lives.

For knowledge-work companies specifically, the sequence I’d recommend is: start with a vector layer for retrieval, but do not let it make any decisions. Layer a lightweight ontology on top — just enough structure to name the handful of entity types your business actually turns on (client, engagement, deliverable, decision, risk). Then pick one or two signals that you can capture cleanly and use them to grade the system’s outputs. That gives you all three architectures working together, which is what most production implementations will end up looking like anyway, and it keeps the boundary layer explicit from day one.

The temptation with knowledge-work companies is to skip the ontology and the signal wiring because “we don’t have the data.” You do. You just haven’t captured it yet. Outcomes on projects. Whether the deliverable landed. Whether the client renewed. These are your signals. If you won’t capture them, you are not ready for a world model, and no vendor demo is going to change that.

VI. A 20-Minute Readiness Assessment

Before you pick a vendor or approve a budget, run this. It’s not a scorecard; it’s a set of questions whose answers reveal whether you’re about to build something that compounds or something that rots.

1. What’s the editorial function in your company today, and who performs it? Name the three to five people whose judgment calls are disproportionately load-bearing. If you can’t name them, you don’t know what the world model is replacing. Go find them before you do anything else.

2. Where is your signal honest, and where is it corrupted? Sales pipeline data is almost always corrupted. Support ticket volume is usually honest. Engineering velocity metrics are almost always corrupted. Customer retention is usually honest. Name the three cleanest signals you have. Those are the ones the world model should be fed first.

3. Do you have a closed outcome loop on at least one class of decision? If a recommendation goes out and nobody ever checks whether it worked, the system has no feedback. Name one decision class where you can close the loop end-to-end. If you can’t name one, build that first, before any world model work.

4. What does your business understand that is genuinely hard to understand? This is the Dorsey–Botha question, and it’s the right one. If the answer is “nothing specific,” AI is going to be a cost optimization story for you — useful, but not transformative. If the answer is specific and getting deeper every month, you have the raw material for a real world model.

5. Which of the three architectures does your answer to #4 point to? If the answer is a signal only you have, signal-driven. If the answer is a structural view of a complex domain, ontology. If the answer is accumulated documents and conversations, vector — with explicit structural and signal layers on top, per the knowledge-work sequence above.

6. Who is going to own the boundary layer? Not the architecture. The boundary. Someone senior, with enough authority to say “no, the system is not permitted to render this as a decision.” If you don’t have that person named before you start, you will not have them named when you need them, and the boundary will be drawn by whoever ships fastest. That’s the failure mode.

If you can answer all six in under twenty minutes and the answers feel load-bearing, you’re ready to pick an architecture. If you can’t, the vendor pitch you’re about to take is going to be very impressive and very premature.

VII. What Comes Next

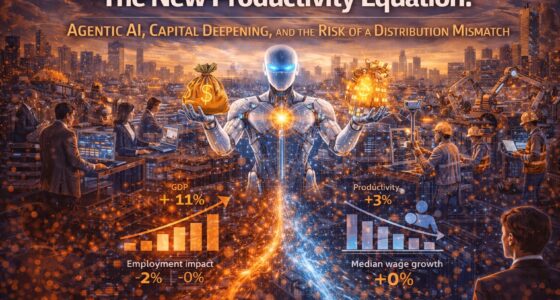

Dorsey is right about the direction. The middle-management layer as we’ve known it for two thousand years is, in most companies, about to absorb a hit it doesn’t recover from. The arithmetic is clear, and the first-order economics are obvious enough that Block’s stock moved the way it did.

He’s also right that Block is early, and that “parts of it will likely break before they work.” Worth taking that line seriously. In a company with proprietary transaction signal, a remote-first machine-readable artifact culture, a founder willing to absorb the political cost of a 40% restructuring, and the capital to survive several quarters of visible mess — yes, the world-model approach has a shot. Block may be one of the few companies where it works cleanly.

The question is what happens when the essay — a 3,000-word manifesto published jointly on Block’s and Sequoia’s sites, with the deliberate intent of being read as a blueprint — gets turned into a six-figure implementation at a 400-person professional services firm in Düsseldorf that has none of Block’s advantages and all of its enthusiasm.

That firm’s world model will produce a simulation of organizational intelligence. For about a year. Then the decisions it’s been quietly making in the editorial layer will show up in results, and nobody in the room will know to look at the system, because the system’s dashboards will still be clean.

The companies that get this right will compound. The ones that get it wrong won’t know for a year.

The difference between the two is not the architecture. It’s whether the boundary layer was built first, and whether someone senior enough was willing to slow the implementation down long enough to build it.

That’s the decision, and it’s in front of you this quarter, whether you’re ready for it or not.

Thorsten Meyer is the founder of ThorstenmeyerAI.com, covering AI infrastructure, agentic systems, and the organizational patterns reshaping how companies actually work in the era of production AI.