A Field Guide to the Terms Every Exec Is Hearing Right Now — IDE, Harness, Agent, CLI, Copilot, Claude Code, Codex, Cursor, and the Rest

By Thorsten Meyer — ThorstenmeyerAI.com

There are two kinds of vocabulary problems in enterprise AI right now.

The first is the obvious kind: genuinely new words for genuinely new things. “MCP.” “World model.” “Context window.” These are fair. They name something that didn’t exist before and needed a name.

The second is the nastier kind: old words pointing at new things, and new words pointing at old things, overlapping at the edges, and used inconsistently by the vendors selling them. “Claude Code” is the name of a product and a marketing category. “Codex” refers to at least three different OpenAI artifacts across a five-year timeline. “Agent” means something different depending on whether the person saying it sells AI, builds with it, or buys it. “IDE” used to mean one thing. Now it means four.

This is the field that every mid-market CEO is walking into when their head of engineering says the team wants to try Cursor, or when an agency pitches them Claude Code, or when they read that OpenAI Codex just added a new CLI.

The terms aren’t hard. The layering is hard. Most of them name different layers of the same stack, and if you understand the stack, the terms resolve on their own. That’s what this piece is for.

I’ll do three things:

- Lay out the stack. Six layers, from the raw model up to the end-user surface. Every term below lives in one of these layers.

- Walk each layer. The terms that belong there, what they actually mean, and where the vendors are bending the words.

- Give you a reference table at the end. One page, thirty-odd terms, sorted by layer.

If you only remember one sentence: the model is the engine, the harness is the car, the IDE is the road, and you are the driver. Everything else is a variation on where those four pieces meet.

Field Guide · April 2026 · Vocabulary Series

The AI Coding Stack, Decoded

IDE, harness, agent, CLI, Claude Code, Codex, Cursor, Claude Design — thirty words, six layers, one mental model that resolves them all.

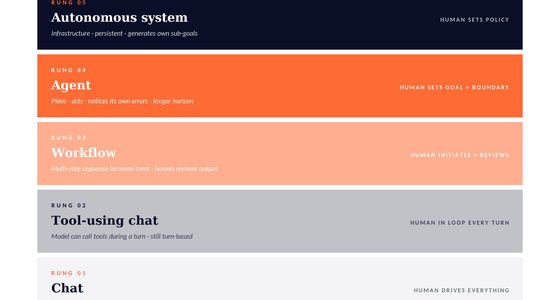

01 The Stack — Six Layers, Bottom to Top


Every term you’re hearing lives in one of these six layers.

- Layer 6 (top): Workflow Surface. Where the work starts and ends.

- Layer 5: IDE / Editor. The code-editing environment around the interface.

- Layer 4: Interface / Surface. Where the human meets the agent.

- Layer 3: Agent. Harness + model + goal, running a loop.

- Layer 2: Harness. The code wrapping the model — tools, loop, memory, context.

- Layer 1 (bottom): Model. The raw neural network. Stateless. No tools.

02 Three rules that fall out of the stack


Read these once. They resolve most category confusion.

01 Layer confusion is the main source of category confusion.

Cursor is an IDE. Claude Code is a harness. GitHub Copilot is an interface that rides any editor. Not the same kind of thing.

02 The model doesn’t care which harness it lives in.

Same Claude Opus 4.7 behind Claude Code, Cursor, Zed, or a script you wrote. Model choice and harness choice are separate decisions.

03 “Agent” is an emergent property, not a product.

Vendors sell harnesses and call them agents. The agent is what you get when the harness is pointed at a goal. Harness quality decides the outcome.

03 The comparison that’s actually a comparison


Claude Code vs. Codex — harness vs. harness, fair fight.

ANTHROPIC: Claude Code

- Model: Claude Opus 4.7 family

- Philosophy: Plan-first. Human-in-the-loop. Approval before file writes. Checkpoint & rewind.

- Surfaces: CLI · desktop · web · VS Code · JetBrains · Slack

- Strongest at: Deep multi-file reasoning, long-context refactors, high-stakes changes.

- Pricing anchor: Claude subscription · $20 Pro → $200 Max

OPENAI: Codex (CLI + Cloud)

- Model: GPT-5.4-Codex family

- Philosophy: Fire-and-forget. Autonomous execution. Async delegation, parallel tasks.

- Surfaces: CLI (Rust, open-source) · Cloud Agent (sandboxed, via ChatGPT)

- Strongest at: Volume work. Parallel routine tasks. Cloud-sandboxed experimentation.

- Pricing anchor: Included with ChatGPT Plus / Pro · $20 / $200

Many teams run both. Common pattern: Codex for keystrokes, Claude Code for commits — Codex handles volume, Claude Code handles the high-stakes changes.

04 The comparisons that aren’t actually comparisons


Layer mismatches, resolved.

Question asked

“Claude Code or Cursor?”

Layer mismatch. Claude Code is a harness (Layer 2). Cursor is an IDE (Layer 5). You can run Claude Code’s VS Code extension inside Cursor, because Cursor is a VS Code fork. Many senior devs pair them: Cursor for day-to-day editing, Claude Code for deep refactors.

Question asked

“Copilot or Cursor?”

Different eras of the same category. Copilot (2021) was the first AI coding assistant — still safest for teams on GitHub Enterprise (indemnity, SSO, audit). Cursor (2023) is the generation after: AI-native, not AI-as-extension. Better completion, better multi-file editing.

Question asked

“Is ‘Codex’ one thing or three?”

Three products on two layers. The Codex model family (Layer 1: GPT-5.4-Codex weights), the Codex CLI (Layer 2: local Rust harness), and the Codex Cloud Agent (Layer 2: cloud-sandboxed harness inside ChatGPT). Same brand, two layers, three products.

I. The Stack: Six Layers

Before the vocabulary, the mental model. Here is the stack, bottom to top:

| # | Layer | What it is | Example |
|---|---|---|---|
| 1 | Model | The raw neural network. Weights. No tools, no memory, no identity. | Claude Opus 4.7, GPT-5.4, Gemini 3 |
| 2 | Harness | The code wrapping the model — tool calls, context management, memory, orchestration loop. | Claude Code’s core, Codex CLI’s Rust runtime |
| 3 | Agent | What emerges when a harness + a model + a goal is left to run a loop. | “The Claude Code agent refactoring your auth module” |
| 4 | Interface / Surface | Where the human meets the agent. CLI, IDE extension, web app, desktop app. | claude in your terminal, Cursor’s chat panel, claude.ai |
| 5 | IDE / Editor | The code-editing environment around the interface. | VS Code, Cursor, Windsurf, Zed, JetBrains |
| 6 | Workflow surface | Where the work starts and ends. Ticket systems, PRs, Slack, email. | GitHub PR reviews, Linear issues, Slack handoffs |

Every product name I’m about to define lives at one or more of these layers. When you understand which layer a product sits on, you understand what it competes with, what it complements, and what it actually does.

Three rules that fall out of the stack:

Rule 1: Layer confusion is the main source of category confusion. Cursor is an IDE. Claude Code is (primarily) a harness with multiple interfaces. GitHub Copilot is an interface layer that rides on top of whichever IDE you already use. These are not the same kind of thing, even though they’re all “AI coding tools.” When someone asks “Claude Code or Cursor?”, they are really asking “harness or IDE?” — which is not a question, it’s a false choice. Many teams run both.

Rule 2: The model doesn’t care which harness it lives in. The same Claude Opus 4.7 can sit behind Claude Code, Cursor, Zed, a browser, and a custom agent you wrote in a weekend. It behaves differently in each because each harness gives it different tools, context, and system prompts — but it’s the same engine. Exec implication: the choice of model provider and the choice of harness/IDE are separate decisions, and should be priced as such.

Rule 3: “Agent” is an emergent property, not a product. Vendors use “agent” as a noun (“our AI agent”), but what they’re selling is almost always a harness. The agent is what you get when you run the harness against a goal. This matters because harness quality — not model quality — is usually what determines whether an “agent” actually finishes a task.

With that in hand, let’s walk the layers.

II. Layer 1 — The Model

The raw engine. A trained neural network that takes tokens in, produces tokens out. No persistence, no tools, no opinions about your codebase. Each API call is stateless — the model remembers nothing about the previous call unless you pass the conversation back in.

When you hear an exec say “we switched from Claude to GPT,” they usually mean the model layer. They have not switched IDE, harness, or workflow. They have swapped out the engine and left the car the same.

Key terms at this layer:

- Frontier model. The top-capability tier from each lab. As of April 2026: Claude Opus 4.7 (Anthropic), GPT-5.4 (OpenAI), Gemini 3 (Google). These cost more per token and are slower. You use them when the task is hard.

- Reasoning model. A variant trained to “think” before answering — internal deliberation steps that burn tokens in exchange for better answers on complex tasks. The distinction is fading; most frontier models now do this by default and expose a “thinking budget” setting.

- Coding-tuned model. A model variant specifically fine-tuned or reinforcement-learned on code. OpenAI’s `gpt-5.4-codex` and `gpt-5.4-mini` are the current examples. These often beat the general-purpose frontier model on SWE-bench while costing less. The name “Codex” also refers to OpenAI’s agent product built on these models — which we’ll get to in Layer 2. Two different “Codex” meanings, same brand, adjacent layers. This is one of the places the vocabulary breaks.

- Context window. How many tokens the model can hold at once. Claude Opus 4.7 handles 1M tokens. GPT-5.4 is similar. This matters because it determines how much of your codebase a harness can stuff into a single prompt before it has to get clever about retrieval.

- Open-weight / open-source model. Meta’s Llama, DeepSeek-V3, Qwen 3, GLM-5. You can run these yourself on your own hardware — which matters when data sovereignty, cost, or offline use are constraints. In 2026, the best open-weight models are roughly six months behind the frontier closed models; close enough to be a serious option for many coding tasks.
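The context-window point in the list above can be sketched as a budgeting problem. This is an illustration under loud assumptions: the 4-characters-per-token estimate is a rough rule of thumb rather than a real tokenizer, and `pack_context` is a hypothetical helper, not how any named harness actually selects files.

```python
from typing import Dict, List, Tuple


def estimate_tokens(text: str) -> int:
    """Rough estimate: about 4 characters per token (an assumption)."""
    return max(1, len(text) // 4)


def pack_context(files: Dict[str, str], budget_tokens: int) -> Tuple[List[str], List[str]]:
    """Greedily include files, smallest first, until the budget is spent.

    Returns (included_paths, left_out_paths). Left-out files are what a
    harness would need retrieval or summarization to handle.
    """
    included: List[str] = []
    left_out: List[str] = []
    used = 0
    for path, text in sorted(files.items(), key=lambda kv: len(kv[1])):
        cost = estimate_tokens(text)
        if used + cost <= budget_tokens:
            included.append(path)
            used += cost
        else:
            left_out.append(path)
    return included, left_out


repo = {
    "auth.py": "x" * 400,      # about 100 tokens
    "models.py": "x" * 2000,   # about 500 tokens
    "vendor.js": "x" * 40000,  # about 10,000 tokens
}
included, left_out = pack_context(repo, budget_tokens=1000)
```

With a larger budget everything fits and the harness can stay simple; with a smaller one, the choice of what to leave out becomes part of the product.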

What execs actually need to decide at this layer: which provider, for which tasks, at what price per million tokens. Everything above this layer is negotiable. This layer is contractual.

III. Layer 2 — The Harness

This is the term most execs haven’t heard yet and the one that explains the most.

A harness is the code that wraps a model and turns it into something that can do work. Tool calls (file read, file write, shell command, web search, git operations), context management (what goes into the prompt, what gets trimmed, what gets retrieved), the orchestration loop (model produces a plan → calls a tool → reads the result → produces the next step), memory (what persists across turns), safety (sandboxing, permission prompts, rollback), and the system prompt that sets the agent’s identity and behavior.
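As a rough illustration of the orchestration loop described above (model proposes a step, harness runs a tool, the result goes back into the prompt), here is a toy harness. Everything in it is hypothetical: `scripted_model` stands in for a real model, the two tools work on an in-memory dictionary instead of a file system, and real harnesses add permissions, sandboxing, and context trimming on top.

```python
from typing import Callable, Dict, List

# In-memory "file system" so the sketch has no side effects.
FILES: Dict[str, str] = {"README.md": "hello"}


def read_file(path: str) -> str:
    return FILES.get(path, "<missing>")


def write_file(path: str, content: str) -> str:
    FILES[path] = content
    return "ok"


# The tool registry is part of the harness, not the model.
TOOLS: Dict[str, Callable[..., str]] = {
    "read_file": read_file,
    "write_file": write_file,
}


def scripted_model(transcript: List[dict]) -> dict:
    """Hypothetical model: picks the next step from what it has seen so far."""
    tool_results = [t for t in transcript if t["role"] == "tool"]
    if len(tool_results) == 0:
        return {"tool": "read_file", "args": {"path": "README.md"}}
    if len(tool_results) == 1:
        new_text = tool_results[0]["content"].upper()
        return {"tool": "write_file", "args": {"path": "README.md", "content": new_text}}
    return {"done": "README.md uppercased"}


def run_harness(goal: str, model: Callable[[List[dict]], dict], max_steps: int = 10) -> str:
    transcript = [
        {"role": "system", "content": "You are a coding assistant."},
        {"role": "user", "content": goal},
    ]
    for _ in range(max_steps):
        step = model(transcript)                        # model proposes a step
        if "done" in step:
            return step["done"]
        result = TOOLS[step["tool"]](**step["args"])    # harness executes the tool
        transcript.append({"role": "tool", "content": result})  # result is fed back
    return "stopped: step budget exhausted"


summary = run_harness("Uppercase the README", scripted_model)
```

Note that the model function never touches `FILES` directly. It only names a tool and arguments; the harness decides whether and how to run it.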

The canonical definition, which has crystallized across the field over the last six months: the agent equals the model plus the harness. The harness is every piece of code, configuration, and execution logic that is not the model itself. Or in LangChain’s tighter phrasing: “if you’re not the model, you’re the harness.”

This matters to execs for one specific reason: harness quality, not model quality, is usually what decides whether an agent finishes a task. Two harnesses running the same Claude Opus 4.7 can behave dramatically differently. Anthropic’s own Claude Code is a reference-grade harness. A weekend-built script calling the same model over HTTP will fail at tasks Claude Code handles in its sleep. The model is identical. The harness is the product.

Key harness products in 2026:

- Claude Code. Anthropic’s coding harness, released with Claude 4 in May 2025 and now shipping across CLI, desktop, web, VS Code extension, JetBrains extension, and a Slack integration. It is the reference implementation for plan-first, human-in-the-loop coding. Approval before file writes. Checkpoint system that lets you rewind. Subagent spawning for parallel work. The CLI is what people usually mean when they say “Claude Code” in conversation, even though the name technically covers all the surfaces.

- Codex CLI. OpenAI’s terminal harness, rewritten in Rust in late 2025, open source, 67,000+ GitHub stars. Installs via `npm install -g @openai/codex`. Runs locally, reads your files, executes commands. Its defining philosophy is “fire-and-forget” — submit a task, let it run, come back later. The opposite of Claude Code’s step-by-step approval model.

- Codex Cloud Agent. OpenAI’s cloud-based coding agent, accessed through ChatGPT. Not to be confused with Codex CLI. The cloud agent spins up an isolated sandbox, clones your repo, runs in a microVM, delivers back a diff or PR. Great for async delegation (“build this feature, tell me when it’s done”), not great for interactive work.

- Aider. Open-source terminal harness, bring-your-own-API-key, model-agnostic. Strong git integration. Popular with developers who want to run harness code they can read and don’t want to pay a subscription on top of their API usage.

- Cline. Open-source VS Code extension that acts as a harness inside the editor. Same spirit as Aider but lives inside VS Code rather than the terminal. 5M+ installs.

- Continue.dev. Open-source, highly customizable, VS Code and JetBrains extension. For teams that want granular control over model routing and CI/CD integration.

- Devin. Cognition Labs’ autonomous coding agent. Cloud-hosted, long-running, closer to “delegate and walk away for three hours” than anything else on the list. Cognition acquired Windsurf in July 2025, which is why Windsurf and Devin increasingly share underlying technology.

Where the vocabulary bends: OpenAI’s own product page calls Codex a “coding agent.” Anthropic’s own docs call the Claude Code SDK “the agent harness that powers Claude Code.” These are the same kind of thing. The word choice reflects branding preference more than any technical distinction.

What execs actually need to decide at this layer: which harness (or harnesses) for which workflow — interactive coding vs. async delegation vs. production automation. And who pays the harness subscription vs. the model API costs, because they are separate line items.
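A back-of-the-envelope sketch of the "separate line items" point. All numbers are illustrative assumptions: the $20 seat price echoes the entry-level tiers mentioned in this piece, while the token volume and the per-million-token price are made up for the arithmetic and are not any vendor's actual rate.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    harness_subscription: float  # per-seat tool subscription, in dollars
    model_api: float             # metered model usage, in dollars

    @property
    def total(self) -> float:
        return self.harness_subscription + self.model_api


def monthly_cost(seats: int, price_per_seat: float,
                 million_tokens: float, price_per_million_tokens: float) -> MonthlyCost:
    """Keep the two line items separate so each can be negotiated on its own."""
    return MonthlyCost(
        harness_subscription=seats * price_per_seat,
        model_api=million_tokens * price_per_million_tokens,
    )


# Hypothetical team: 10 seats at $20, 50M tokens at $3 per million.
cost = monthly_cost(seats=10, price_per_seat=20.0,
                    million_tokens=50.0, price_per_million_tokens=3.0)
```

Here the subscription line comes to $200 and the API line to $150. Doubling usage changes only the second number, which is the reason to track them separately.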

IV. Layer 3 — The Agent

Agents are what you get when a harness + a model + a goal is left to run.

The term “agent” is the single most abused word in the 2026 AI vocabulary. Vendors apply it to everything from a Slack bot that answers FAQ to a fully autonomous coding system that ships PRs overnight. For an exec, the practical definition is simpler:

An agent is a system that, given a goal, chooses its own actions in a loop until the goal is achieved or abandoned.

The key property is autonomy over the loop. An agent decides what to do next without asking for each step. A copilot suggests; you decide. An agent acts; you review.

The loop matters because it’s where harness design shows up. A badly designed agent loop runs in circles, burns tokens, or confidently does the wrong thing ten steps in a row. A well-designed agent loop checks its own work, recognizes when it’s off track, and either course-corrects or stops and asks.
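One way to picture the difference between a loop that runs in circles and one that stops and asks is a pair of simple guards: a step budget and a repeated-action check. This is a sketch of the idea only; `run_guarded_loop` and its thresholds are hypothetical, and production harnesses use richer signals than "same action three times in a row".

```python
from typing import Callable, List, Tuple


def run_guarded_loop(
    next_action: Callable[[List[str]], str],
    is_done: Callable[[List[str]], bool],
    max_steps: int = 20,
    max_repeats: int = 3,
) -> Tuple[str, List[str]]:
    """Run an agent loop with two guards; returns (status, action history)."""
    history: List[str] = []
    for _ in range(max_steps):
        if is_done(history):
            return "done", history
        action = next_action(history)
        history.append(action)
        # Guard 1: the same action several times in a row means the agent is stuck.
        if len(history) >= max_repeats and len(set(history[-max_repeats:])) == 1:
            return "stopped: repeating itself, ask a human", history
    # Guard 2: the step budget caps how many tokens a confused agent can burn.
    return "stopped: step budget exhausted", history


# A stuck agent keeps proposing the same action.
stuck_status, stuck_history = run_guarded_loop(
    next_action=lambda h: "run tests",
    is_done=lambda h: False,
)

# A healthy agent works through a three-step plan and then finishes.
plan = ["edit auth.py", "run tests", "commit"]
ok_status, ok_history = run_guarded_loop(
    next_action=lambda h: plan[len(h)],
    is_done=lambda h: len(h) == len(plan),
)
```

The stuck agent is halted after three identical steps instead of twenty, which is the cheap version of "recognizes when it's off track".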

Key agent-related terms:

- Autonomous agent. Runs without per-step approval. Codex Cloud Agent in full-auto mode. Devin. Claude Code’s “auto-accept” mode.

- Human-in-the-loop agent. Pauses for approval at defined checkpoints. Claude Code’s default mode. Cursor’s Agent mode with confirmation on.

- Multi-agent / subagents. One agent spawns others to handle parallel subtasks. Claude Code supports this via the subagent feature. Codex CLI has a “multi-agent v2” workflow. The pattern is real and useful for genuinely parallelizable work; it is also frequently overkill for everything else. Anthropic and OpenAI both recommend maximizing a single agent first before adding coordination overhead.

- Background agent. An agent that runs asynchronously while you do something else. Cursor shipped background agents in 2025. Codex Cloud Agent is essentially a background agent. The defining feature is that you don’t have to sit and watch it work.

- Copilot. Not an agent. A copilot suggests completions or edits that you accept or reject in real time. GitHub Copilot (the original) and Cursor’s Tab feature are the canonical examples. The distinction is getting muddier because most copilot products now include an “agent mode” — but if you’re choosing a product for copilot-style work vs. agent-style work, the split is real.
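The split between autonomous and human-in-the-loop operation in the list above comes down to where an approval gate sits. The sketch below is hypothetical throughout: the mode names `"ask"` and `"auto"`, the action kinds, and the `approve` callback are illustrative and do not correspond to any product's actual settings.

```python
from typing import Callable, Dict, List

# Action kinds that change state and so deserve a checkpoint (an assumption).
RISKY = {"write_file", "run_shell"}

Action = Dict[str, str]


def execute(actions: List[Action], mode: str,
            approve: Callable[[Action], bool]) -> List[str]:
    """Run a plan under an approval policy.

    mode "ask":  human-in-the-loop, risky actions wait for approval.
    mode "auto": autonomous, nothing waits.
    """
    log: List[str] = []
    for action in actions:
        needs_approval = mode == "ask" and action["kind"] in RISKY
        if needs_approval and not approve(action):
            log.append(f"skipped {action['kind']} {action['target']}")
            continue
        log.append(f"ran {action['kind']} {action['target']}")
    return log


plan = [
    {"kind": "read_file", "target": "auth.py"},
    {"kind": "write_file", "target": "auth.py"},
]


def deny_all(action: Action) -> bool:
    # Stand-in for a human who rejects every request.
    return False


asked = execute(plan, "ask", deny_all)
auto = execute(plan, "auto", deny_all)
```

Same plan, same model output, different outcome: in `"ask"` mode the write is held back, in `"auto"` mode the reviewer is never consulted.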

Where the vocabulary bends hardest: marketing. “Agentic” is applied to systems that are mostly copilot. “Copilot” is applied to systems that are mostly agent. Ignore the word on the landing page. Ask: does it run without per-step approval, and for how long? That tells you where on the autonomy spectrum it actually sits.

V. Layer 4 — Interfaces and Surfaces

This is where the human meets the agent. The same harness can ship with multiple surfaces, which is exactly what’s happened with Claude Code.

The five surfaces that matter in 2026:

- CLI (command-line interface). The agent lives in your terminal. You type, it responds. File access is direct, shell is native, and the interaction loop is the fastest possible. Claude Code CLI and Codex CLI are the two biggest. Senior developers usually prefer this.

- Desktop app. A GUI wrapper around the same harness. Claude Code ships a desktop app; Codex ships one. The appeal is to developers who want the terminal’s power without the terminal.

- Web app / browser-based. The agent runs in the cloud and you talk to it through a browser. Codex Cloud Agent is the clearest example. Anthropic also ships Claude Code on the web for remote sessions.

- IDE extension. The harness is plugged into your existing editor. Claude Code extension for VS Code and JetBrains. GitHub Copilot across dozens of editors. Cline. Continue.dev. The extension model lets the harness share context with the editor (open files, selected text, diagnostics) that a standalone CLI wouldn’t see.

- Chat app (consumer / claude.ai). claude.ai, chatgpt.com, gemini.google.com. Not coding-first. Not harness-grade for coding work. But frequently where non-developers first meet these models, and where “Claude” or “ChatGPT” get confused with the coding products that share a brand.

Why this layer matters for exec decisions: access control, audit logging, and compliance don’t live at the model or harness layer — they live at the surface. A team using Claude Code via the CLI vs. via the VS Code extension vs. via claude.ai is having three different security conversations. The underlying model and harness can be identical.

VI. Layer 5 — The IDE / Editor

This is the word with the most shifted meaning in the last three years.

IDE — Integrated Development Environment — used to mean: a program that edits code, compiles it, runs it, debugs it, and shows you errors inline. VS Code, JetBrains, Vim, Emacs. The “integrated” part was about bundling the edit/compile/run/debug tools together.

In 2026, “AI IDE” means something new: an IDE where AI is not an extension but a first-class architectural component. These editors don’t bolt AI onto a code editor. They’re rebuilt around it.

The four editors that matter:

- Cursor. A VS Code fork from Anysphere. Now the dominant commercial AI IDE with $2B annualized revenue, 2M+ users, adoption in roughly half the Fortune 500. Its signature features are Tab completion (the best autocomplete in the market in most benchmarks), Composer for multi-file editing, @-codebase indexing, and Agent mode for longer-running tasks. Cursor is a VS Code fork, so every VS Code extension works; migration takes minutes. Pricing ladder: free tier, Pro at $20/mo, Pro+ at $60, Ultra at $200.

- Windsurf. Also a VS Code fork, from Codeium, acquired by Cognition (makers of Devin) in July 2025 for $250M. Its defining feature is Cascade — an autonomous agent inside the editor that proactively indexes your project, runs commands, and makes multi-file changes without waiting for you to point at files. More autonomous than Cursor; some developers prefer that, some find it too aggressive.

- Zed. Built from scratch in Rust, not a VS Code fork. The fastest editor in the category by a wide margin — sub-500ms startup, ~2ms input latency, 1/4 the memory of Electron-based editors. AI integration is deliberately lighter-touch: Claude or GitHub Copilot for assistance, but nothing as aggressive as Cascade or Cursor’s Agent. The editor for developers who care about performance first and want AI that enhances rather than replaces their workflow. Real-time collaborative editing is native.

- VS Code. The default. Microsoft’s free editor. Not an “AI IDE” by the 2026 definition, but still the broadest extension ecosystem (50,000+) and the baseline that Cursor and Windsurf forked from. GitHub Copilot and half a dozen agent extensions (Cline, Continue.dev, Claude Code, Codex IDE extension) run inside it. If you need AI assistance but don’t want to switch editors, this is the pragmatic choice.

JetBrains (IntelliJ, PyCharm, WebStorm, etc.) still anchors a lot of enterprise Java and Python work and supports most major agent extensions through plugins, but it hasn’t produced an “AI IDE” of its own.

Where the vocabulary bends: “Cursor vs. Claude Code” is the comparison I hear most, and it’s a layer mismatch. Cursor is an IDE. Claude Code is a harness that ships as a CLI, a desktop app, and also an IDE extension (for VS Code and JetBrains). You can run Claude Code inside Cursor, because Cursor is a VS Code fork and the Claude Code VS Code extension works there. The two are often complementary: many senior developers pair them — Cursor for day-to-day editing, Claude Code for deep refactors and plan-first work.

VII. Layer 6 — Workflow Surfaces

This is the newest and least-defined layer. It’s where AI coding leaves the IDE and shows up in the systems where work actually gets tracked.

- GitHub / GitLab PR reviews. AI-authored PRs are now routine. Both Codex and Claude Code can produce them. Code review itself is getting AI-assisted.

- Issue trackers. Linear, Jira, GitHub Issues. Codex’s “Automations” feature now picks up triage work automatically.

- Slack / Teams handoffs. Claude Code ships a Slack integration. Codex is increasingly reachable from chat platforms.

- Claude Design. Anthropic’s newest product, launched April 17, 2026 — three days before this piece. Claude Design is a design tool that lets you create designs, prototypes, slides, and one-pagers by chatting with Claude. It sits mostly outside the coding stack but has one explicit integration into it: when a design is ready to build, Claude Design packages it into a handoff bundle that can be passed to Claude Code with a single instruction. This is the first serious example of a cross-product handoff inside Anthropic’s own ecosystem. Figma’s stock dropped when it launched, which tells you where the market thinks the competitive threat sits.

- Claude Cowork. Anthropic’s agentic assistant for knowledge work, separate from Claude Code. Launched January 2026. More for general business tasks than for coding, but shares the harness lineage.

The reason this layer matters to an exec: the long-term competitive question isn’t which IDE your developers use. It’s which workflow surface your company standardizes on. If your PR-review, issue-triage, and design-handoff systems all converge on one vendor’s ecosystem, the switching cost isn’t the IDE — it’s the workflow.

VIII. Three Comparisons That Resolve the Most Common Confusions

With the stack in place, the comparisons that usually get asked answer themselves. Three that come up constantly:

“Claude Code or Cursor?”

It’s a layer mismatch. Claude Code is a harness with multiple surfaces. Cursor is an IDE. The actual question is usually one of these two:

| Real question | Answer |
|---|---|
| Which harness? (Claude Code vs. Codex CLI vs. Aider vs. Cline) | Depends on autonomy preference and whether you want step-by-step approval or fire-and-forget |
| Which IDE? (Cursor vs. Windsurf vs. Zed vs. VS Code+extensions) | Depends on whether you want Tab autocomplete quality (Cursor), autonomous in-editor agent (Windsurf), speed (Zed), or max extension ecosystem (VS Code) |

You can run Claude Code’s VS Code extension inside Cursor. You can use the Claude Code CLI alongside Cursor. These are not mutually exclusive.

“Codex or Claude Code?”

This one is a harness-vs-harness comparison, and it’s fair.

| Dimension | Claude Code | Codex (CLI + Cloud) |
|---|---|---|
| Model | Claude Opus 4.7 family | GPT-5.4-Codex family |
| Core philosophy | Plan-first, human-in-the-loop, approval before file writes | Fire-and-forget, autonomous execution, async delegation |
| Primary surface | CLI, desktop, VS Code / JetBrains extension, web | CLI (local, open-source Rust) + Cloud Agent (sandboxed, via ChatGPT) |
| Open source? | Closed source (SDK is open) | CLI is open source, model is not |
| Strongest at | Complex multi-file reasoning, deep refactors, long-running context | Parallel tasks, cloud sandboxing, lots of routine work at once |
| Pricing anchor | Claude subscription ($20 Pro, up to $200 Max) | Included with ChatGPT Plus/Pro ($20/$200) |

Many pragmatic teams run both. One popular pattern: Codex for keystrokes, Claude Code for commits — meaning Codex handles the volume of daily coding while Claude Code handles the high-stakes changes.

“Copilot or Cursor?”

Different eras of the same product category.

GitHub Copilot (launched 2021) was the first AI coding assistant to reach broad adoption. It still ships, it still works, and it’s still the safest choice for teams already deep in the GitHub ecosystem — legal indemnity on generated code, SSO, audit logs, procurement via GitHub Enterprise. It runs as an extension inside VS Code, JetBrains, Neovim, and most other editors.

Cursor (launched 2023, exploded in 2025) represents the generation after Copilot: AI-native rather than AI-as-extension. Better completion model, better multi-file editing, better codebase understanding.

If your company is already standardized on GitHub Enterprise, Copilot is friction-free. If your engineers are individually choosing, most of them pick Cursor.

IX. The One-Page Reference

Pin this somewhere.

| Term | Layer | What it is in one line |
|---|---|---|
| Claude Opus 4.7 | Model | Anthropic’s frontier model. Coding-strong. 1M context. |
| GPT-5.4 / GPT-5.4-Codex | Model | OpenAI’s frontier model. Coding-tuned variant is the Codex engine. |
| Gemini 3 | Model | Google’s frontier model. Strong on long context. |
| Frontier model | Model | The top-tier capability from any lab. Slower, pricier, smarter. |
| Open-weight model | Model | Llama, DeepSeek, Qwen, GLM. Runnable on your own hardware. |
| Context window | Model attribute | How many tokens the model can hold at once. 1M is now the frontier default. |
| Harness | Layer 2 | The code around the model — tools, loop, memory, context. “If you’re not the model, you’re the harness.” |
| Claude Code | Harness (multi-surface) | Anthropic’s coding harness. Plan-first, human-in-the-loop. CLI + desktop + web + VS Code / JetBrains extensions. |
| Codex CLI | Harness (terminal) | OpenAI’s open-source Rust-based terminal coding agent. Runs locally. Fire-and-forget. |
| Codex Cloud Agent | Harness (cloud) | OpenAI’s cloud-sandboxed coding agent inside ChatGPT. Async delegation. |
| Aider | Harness (open-source) | Model-agnostic terminal harness. Strong git integration. |
| Cline | Harness (in-editor) | Open-source VS Code agent extension. 5M+ installs. |
| Continue.dev | Harness (in-editor) | Open-source, highly customizable VS Code and JetBrains agent. |
| Devin | Harness (autonomous cloud) | Cognition Labs’ long-running autonomous coding agent. |
| Agent | Layer 3 | Harness + model + goal, running a loop. Autonomy over the loop is the defining trait. |
| Copilot | Layer 3 (distinct from agent) | Suggests completions. You accept or reject. Not autonomous. |
| Subagent / multi-agent | Layer 3 pattern | One agent spawning others for parallel work. |
| Background agent | Layer 3 pattern | Runs async while you do something else. |
| CLI | Interface | The agent lives in your terminal. |
| IDE extension | Interface | The harness runs inside your existing editor. |
| Desktop app | Interface | GUI wrapper around a harness. |
| VS Code | IDE | Microsoft’s free editor. The baseline. 50,000+ extensions. |
| Cursor | AI IDE | VS Code fork, AI-native. $2B ARR, 2M+ users. Best Tab completion. |
| Windsurf | AI IDE | VS Code fork, agentic. Cascade agent runs aggressively. Owned by Cognition. |
| Zed | AI IDE | Rust-native, fastest editor in the category. Light AI integration. |
| JetBrains | IDE | IntelliJ, PyCharm, WebStorm. Enterprise-anchored. Plugin-based AI. |
| GitHub Copilot | Copilot product | Original AI coding assistant. Extension for any editor. Best enterprise procurement story. |
| MCP | Protocol | Model Context Protocol. The standard for connecting models to external tools/data. |
| Claude Design | Workflow surface | Anthropic’s new design tool (April 17, 2026). Chat → prototype → hand off to Claude Code. |
| Claude Cowork | Workflow surface | Anthropic’s agentic assistant for general knowledge work. |

X. What This Means for Decisions

Three take-aways an exec can act on:

1. The model decision and the harness decision are separate. You can pay Anthropic for Claude Opus 4.7 and use it through Cursor, through Claude Code, through Cline, or through a custom harness you wrote. You can pay OpenAI for GPT-5.4 and use it through Codex CLI, Codex Cloud Agent, through the API behind your own harness, or through Cursor (which supports both). Don’t let one vendor sell you both decisions bundled together unless you actively want the bundle.

2. Harness quality is where the hidden variation lives. Two teams running the same model on the same tasks will get noticeably different results depending on which harness they use. When you’re evaluating a coding tool and the demo is impressive, the impressive part is usually the harness — the way it manages context, which tools it exposes, how it recovers from errors. The model underneath is the same one anyone can access.

3. “Agent” on a landing page is a marketing word until proven otherwise. Ask two questions: does it run without per-step approval, and for how long before it needs human input? The answers tell you where on the autonomy spectrum the product actually sits, regardless of how the marketing describes it. A tool that needs approval every 30 seconds is a copilot dressed as an agent. A tool that runs three hours before checking in is an agent even if it’s sold as “AI assistance.”

The vocabulary will keep moving for another two to three years. The layers won’t. If you understand the stack, the next new term that shows up will slot into one of these six layers on its own — and so will the decision you need to make about it.

Thorsten Meyer is the founder of ThorstenmeyerAI.com, covering AI infrastructure, coding tooling, and the organizational patterns reshaping how software gets built in the era of production AI.