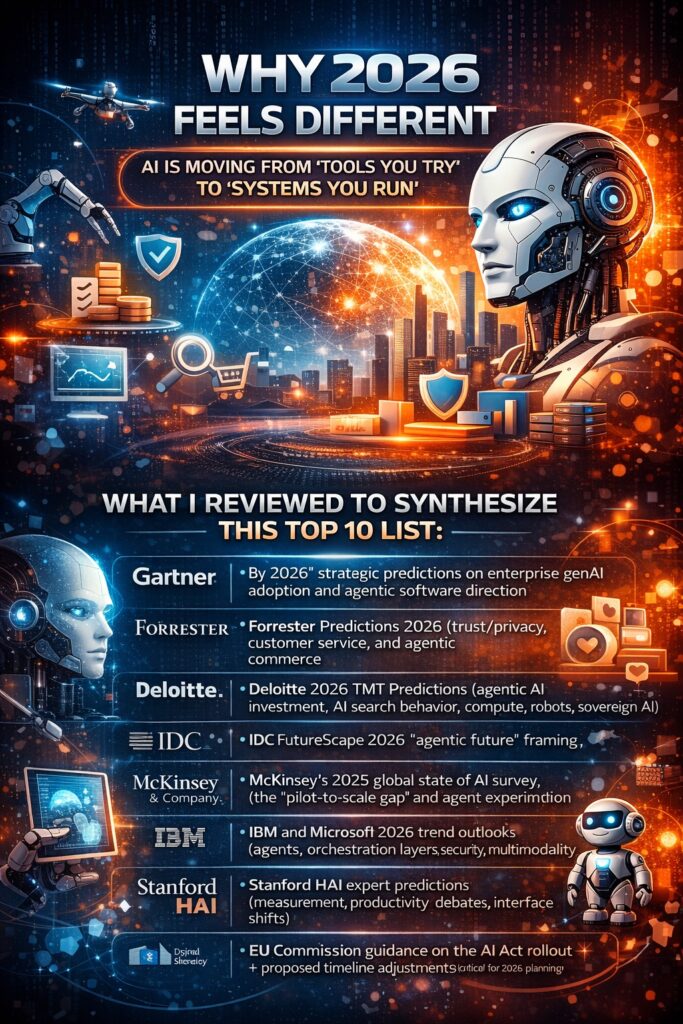

Why 2026 feels different

Across analyst houses and industry forecasts, one theme repeats: AI is moving from “tools you try” to “systems you run.” 2026 is framed as the year agentic AI becomes operational, budgets consolidate around measurable value, and regulation and security demands force more disciplined deployment, while consumer behavior shifts toward AI-mediated search, shopping, and even relationships.

What I reviewed to synthesize this Top 10 list (a representative sample of the most-cited 2026 outlooks):

- Gartner’s “by 2026” strategic predictions on enterprise genAI adoption and agentic software direction Gartner

- Forrester Predictions 2026 (trust/privacy, customer service, and agentic commerce) Forrester

- Deloitte 2026 TMT Predictions (agentic AI investment, AI search behavior, compute, robots, sovereign AI) Deloitte

- IDC FutureScape 2026 “agentic future” framing IDC

- McKinsey’s 2025 global state of AI survey (the “pilot-to-scale gap” and agent experimentation) McKinsey & Company

- IBM and Microsoft 2026 trend outlooks (agents, orchestration layers, security, multimodality, physical AI) IBM

- Stanford HAI expert predictions (measurement, productivity debates, interface shifts) Stanford HAI

- EU Commission guidance on the AI Act rollout + proposed timeline adjustments (critical for 2026 planning) Digital Strategy

Designing Multi-Agent Systems: Principles, Patterns, and Implementation for AI Agents

As an affiliate, we earn on qualifying purchases.

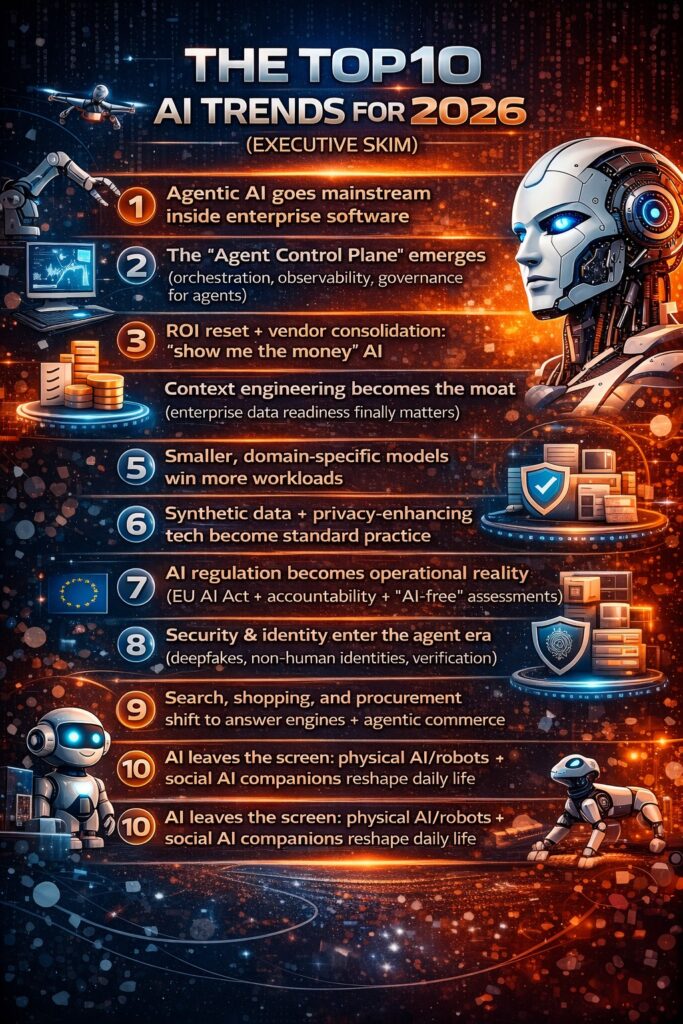

The Top 10 AI Trends for 2026 (executive skim)

- Agentic AI goes mainstream inside enterprise software

- The “Agent Control Plane” emerges (orchestration, observability, governance for agents)

- ROI reset + vendor consolidation: “show me the money” AI

- Context engineering becomes the moat (enterprise data readiness finally matters)

- Smaller, domain-specific models win more workloads

- Synthetic data + privacy-enhancing tech become standard practice

- AI regulation becomes operational reality (EU AI Act + accountability + “AI-free” assessments)

- Security & identity enter the agent era (deepfakes, non-human identities, verification)

- Search, shopping, and procurement shift to answer engines + agentic commerce

- AI leaves the screen: physical AI/robots + social AI companions reshape daily life

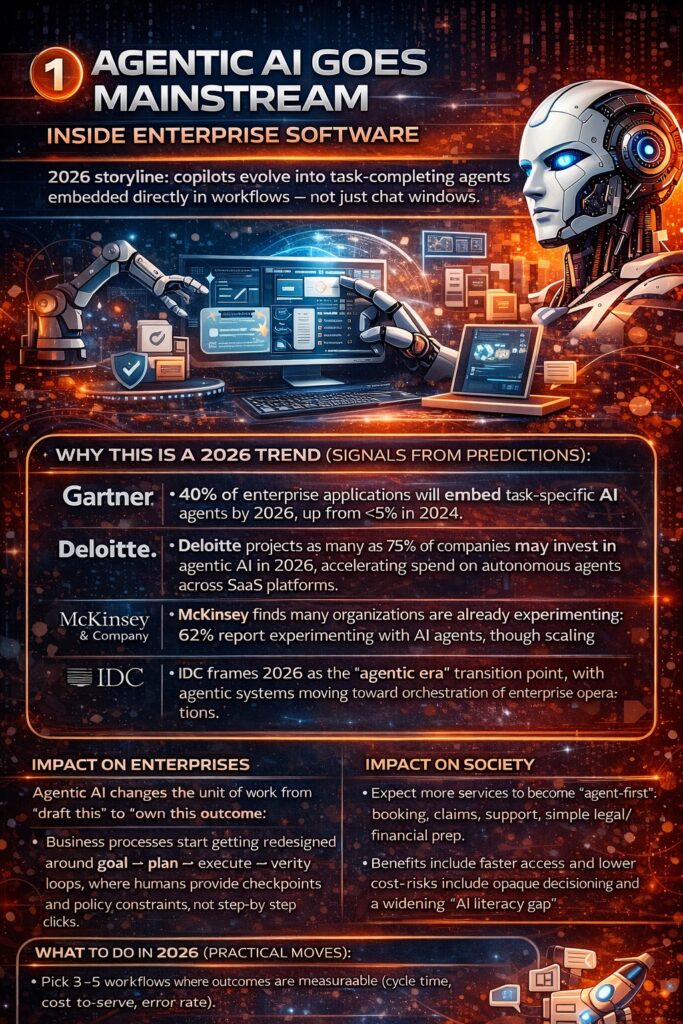

1) Agentic AI goes mainstream inside enterprise software

2026 storyline: copilots evolve into task-completing agents embedded directly in workflows — not just chat windows.

Why this is a 2026 trend (signals from predictions):

- Gartner predicts 40% of enterprise applications will embed task-specific AI agents by 2026, up from less than 5% in 2025. Gartner

- Deloitte projects that as many as 75% of companies may invest in agentic AI in 2026, accelerating spend on autonomous agents across SaaS platforms. Deloitte

- McKinsey finds many organizations are already experimenting: 62% report experimenting with AI agents, though scaling remains uneven. McKinsey & Company

- IDC frames 2026 as the “agentic era” transition point, with agentic systems moving toward orchestration of enterprise operations. IDC

Impact on enterprises

Agentic AI changes the unit of work from “draft this” to “own this outcome.” That means:

- Business processes start getting redesigned around goal → plan → execute → verify loops, where humans provide checkpoints and policy constraints, not step-by-step clicks.

- Integration becomes a competitive battleground: the value isn’t just the model; it’s whether the agent can safely operate across ERP/CRM/ticketing/email/data systems with clear permissions and audit trails. IBM

- The winners in 2026 won’t be the firms with the most pilots; they’ll be the firms with repeatable agent deployment patterns (templates, governance, monitoring, fallback). IDC

Impact on society

Expect more services to become “agent-first”: booking, claims, support, simple legal/financial prep. Benefits include faster access and lower cost; risks include opaque decisioning and a widening “AI literacy gap” between those who can steer agents and those who can’t. Digital Strategy

Impact on jobs

Jobs won’t simply disappear; they will decompose:

- Routine coordination work (status chasing, basic triage, repetitive documentation) shrinks.

- New roles expand: agent owners, workflow designers, QA/evaluation specialists, compliance reviewers, and domain experts who “teach” agents how work is done.

- Expect more performance measures to include “human+agent throughput” instead of pure headcount productivity. Forrester

Impact on how we interact socially

Agents start acting on our behalf — which changes etiquette:

- “I’ll have my agent coordinate” becomes normal inside companies.

- Externally, customers increasingly interact with bots/agents first — and frustration rises when emotions meet automation (complaints, cancellations, healthcare worries). Forrester explicitly warns that over-automation of complex/emotional inquiries can backfire. Forrester

What to do in 2026 (practical moves):

- Pick 3–5 workflows where outcomes are measurable (cycle time, cost-to-serve, error rate).

- Build “human checkpoints” into the agent loop for high-risk steps (payments, approvals, medical/financial guidance).

- Treat agent rollout like product launch: onboarding, telemetry, guardrails, iteration.
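The “human checkpoint” idea can be sketched in a few lines. This is a minimal illustration, not a reference design: the `Step`, `RunLog`, and `run_plan` names and the risk categories are hypothetical, and a real deployment would route approvals to a review queue rather than a callback.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Assumption: these category names are illustrative, not a standard taxonomy.
HIGH_RISK_CATEGORIES = {"payment", "approval", "medical_guidance", "financial_guidance"}


@dataclass
class Step:
    name: str
    category: str
    action: Callable[[], str]  # the work the agent would perform


@dataclass
class RunLog:
    executed: List[Tuple[str, str]] = field(default_factory=list)
    blocked: List[str] = field(default_factory=list)


def run_plan(steps: List[Step], approve: Callable[[Step], bool]) -> RunLog:
    """Execute steps in order, pausing for human approval on high-risk ones."""
    log = RunLog()
    for step in steps:
        if step.category in HIGH_RISK_CATEGORIES and not approve(step):
            log.blocked.append(step.name)
            break  # stop the run; later steps may depend on the blocked one
        log.executed.append((step.name, step.action()))
    return log


plan = [
    Step("draft_invoice", "documentation", lambda: "drafted"),
    Step("send_payment", "payment", lambda: "paid"),
    Step("notify_customer", "documentation", lambda: "notified"),
]

# A reviewer who rejects everything: only the low-risk first step runs.
denied = run_plan(plan, approve=lambda step: False)
# A reviewer who approves everything: the whole plan runs.
approved = run_plan(plan, approve=lambda step: True)
```

Stopping at the first rejected step is a deliberate, conservative choice here; a workflow with independent steps might skip the blocked step and continue instead.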

2) The “Agent Control Plane” emerges

2026 storyline: As agents multiply, enterprises need an operational layer to discover, govern, and monitor them — the equivalent of what observability did for cloud.

Why this is a 2026 trend:

- IBM forecasts multi-agent systems moving “into real life,” highlighting agent control planes and multi-agent dashboards as a 2026 expectation. IBM

- Deloitte underscores that agentic AI value depends on “proper orchestration,” implying that orchestration platforms (not just models) become essential. Deloitte

- Forrester predicts enterprises will build parallel AI functions — managers who onboard/coach agents, ops teams to optimize them, specialists to unblock them. That’s basically the human side of the “control plane.” Forrester

Impact on enterprises

In 2026, scaling agents without a control plane produces the same failure mode cloud had a decade ago: sprawl, cost surprises, security gaps, and inconsistent reliability.

Expect rapid adoption of:

- Agent registries (“what agents exist, who owns them, what systems they touch”)

- Policy enforcement (“this agent can read X but cannot send money”)

- Observability (agent traces, tool calls, retrieval sources, error handling)

- Evaluation pipelines (continuous testing against regressions and hallucinations) McKinsey & Company
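To make the registry and policy-enforcement items concrete, here is a minimal sketch under stated assumptions: `AgentRecord`, `AgentRegistry`, and the `"invoices:read"` style action strings are hypothetical names chosen for illustration, not any vendor's API. The one design choice worth noting is deny-by-default.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str                       # the accountable team or person
    purpose: str
    allowed_actions: FrozenSet[str]  # hypothetical "system:verb" strings


class AgentRegistry:
    def __init__(self) -> None:
        self._agents: Dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if not record.owner:
            raise ValueError("every agent needs an accountable owner")
        self._agents[record.agent_id] = record

    def is_allowed(self, agent_id: str, action: str) -> bool:
        record = self._agents.get(agent_id)
        # Unknown agents and unlisted actions are denied by default.
        return record is not None and action in record.allowed_actions


registry = AgentRegistry()
registry.register(
    AgentRecord(
        agent_id="billing-helper",
        owner="finance-ops",
        purpose="answer invoice questions",
        allowed_actions=frozenset({"invoices:read"}),
    )
)

can_read = registry.is_allowed("billing-helper", "invoices:read")
can_pay = registry.is_allowed("billing-helper", "payments:send")
```

In this sketch the agent can read invoices but cannot send money, which mirrors the policy example in the list above.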

Impact on society

This is where “trustworthy AI” stops being a slogan. If organizations can’t show provenance, policy, and accountability, public trust keeps eroding and regulation tightens. Forrester

Impact on jobs

A big shift: operations roles come back in a new form.

- “Agent Ops” becomes real: monitoring, prompt/tool updates, knowledge base curation, failure investigations.

- Managers learn to run hybrid teams (humans + agents), including performance coaching for “digital coworkers.” Forrester

Impact on how we interact socially

Inside enterprises, collaboration changes:

- People delegate to agents in meetings (“summarize decisions, file tickets, draft follow-ups”).

- Social trust becomes more explicit: teams will ask “what did the agent use as sources?” or “did a human approve this?” — a new form of workplace accountability.

What to do in 2026:

- Create an “Agent Inventory” and require every agent to have an owner, purpose, and access profile.

- Standardize logging: every tool call + data retrieval needs to be traceable.

- Build escalation paths: what happens when an agent fails?
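One way to picture traceable tool calls plus an escalation path is a thin wrapper around every call. This is a sketch only: `traced_call`, the `TRACE` list, and the field names are hypothetical, and the in-memory list stands in for whatever log sink an organization actually uses.

```python
import time
import uuid
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # stand-in for a real log sink


def traced_call(agent_id: str, tool_name: str, tool: Callable[..., Any],
                escalate: Callable[[Dict[str, Any]], None], **kwargs: Any) -> Any:
    """Run a tool call, record one trace entry, and escalate on failure."""
    entry: Dict[str, Any] = {
        "trace_id": str(uuid.uuid4()),
        "agent_id": agent_id,
        "tool": tool_name,
        "args": kwargs,
        "started_at": time.time(),
    }
    try:
        result = tool(**kwargs)
        entry["status"] = "ok"
        return result
    except Exception as exc:
        entry["status"] = "error"
        entry["error"] = repr(exc)
        escalate(entry)  # hand the failure to a human queue
        return None
    finally:
        TRACE.append(entry)  # every call is logged, success or failure


def lookup_order(order_id: str) -> str:
    # Toy tool: only one order exists.
    if order_id != "A-1":
        raise KeyError(order_id)
    return "shipped"


escalations: List[Dict[str, Any]] = []
ok = traced_call("support-agent", "lookup_order", lookup_order,
                 escalations.append, order_id="A-1")
missing = traced_call("support-agent", "lookup_order", lookup_order,
                      escalations.append, order_id="Z-9")
```

Returning `None` on failure keeps the sketch short; a production wrapper would more likely raise a typed error so callers cannot mistake a failure for an empty result.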

3) The ROI reset + vendor consolidation

2026 storyline: The era of “cool demos” fades. 2026 becomes the year of concentrated spend, fewer vendors, and hard ROI proof.

Why this is a 2026 trend:

- TechCrunch reports VCs broadly expect enterprises to increase AI budgets in 2026, but concentrate spend into fewer contracts/vendors as experimentation ends and winners are chosen. TechCrunch

- McKinsey’s 2025 survey highlights the core issue: most orgs still aren’t scaled, and enterprise-level EBIT impact is limited for many — which naturally triggers a “prove it” year. McKinsey & Company

- Stanford HAI predicts measurement will become more serious (e.g., AI economic dashboards), reframing debates about productivity and impact. Stanford HAI

Impact on enterprises

Expect 2026 decision-making to shift from “Which model is best?” to:

- “Which use cases measurably change cycle time, quality, revenue, or risk?”

- “Which vendors integrate cleanly into our architecture and governance?”

- “Which projects reduce the need for headcount growth without breaking customer trust?”

This also means:

- more platform buying (one stack)

- less point-solution sprawl

- tighter procurement requirements (security, data handling, auditability) TechCrunch

Impact on society

A good outcome: less hype, more useful deployments.

A bad outcome: small providers get squeezed, innovation narrows, and AI capability concentrates into a few large platforms — which has competition and power implications.

Impact on jobs

The people impact in 2026 is less “mass layoffs overnight” and more:

- work redesign (roles shift as agents take over fragments of tasks)

- skill bifurcation (those who can orchestrate AI gain leverage; those who can’t may stagnate)

- hiring growth in AI-adjacent roles (data engineering, security, evaluation) McKinsey & Company

Impact on how we interact socially

Workplace norms change:

- Expect more “AI usage transparency” — e.g., disclosing AI assistance in reports, presentations, customer interactions (especially in regulated domains). Digital Strategy

- Peer perception evolves: using AI becomes normal, but using it without verification becomes reputational risk.

What to do in 2026:

- Replace “pilot count” with a portfolio scorecard: value delivered, risk profile, adoption, unit economics.

- Kill zombie pilots. Fund the top 10% that show measurable impact.

- Build evaluation as a production system, not a one-time test.
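A portfolio scorecard can start as simple arithmetic. The sketch below assumes a hypothetical `Pilot` record and a deliberately crude score (measured value weighted by adoption, minus cost); the weighting is an illustration, not a recommended formula.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Pilot:
    name: str
    annual_value: float  # measured benefit, in currency units
    annual_cost: float   # run cost, including model and integration spend
    adoption: float      # share of target users active, 0.0 to 1.0

    @property
    def net_value(self) -> float:
        # Weight measured value by adoption, then subtract cost.
        return self.annual_value * self.adoption - self.annual_cost


def top_decile(pilots: List[Pilot]) -> List[Pilot]:
    """Return the best 10% of pilots by net value, dropping any that lose money."""
    ranked = sorted(pilots, key=lambda p: p.net_value, reverse=True)
    keep = max(1, math.ceil(len(ranked) / 10))
    return [p for p in ranked[:keep] if p.net_value > 0]


# Ten made-up pilots with rising value and identical cost and adoption.
pilots = [
    Pilot(f"pilot-{i}", annual_value=100.0 * i, annual_cost=250.0, adoption=0.5)
    for i in range(1, 11)
]

funded = top_decile(pilots)
zombies = [p.name for p in pilots if p.net_value <= 0]
```

With these invented numbers, only `pilot-10` is funded and the five lowest-value pilots show up as zombies. A real scorecard would add the risk profile and unit economics mentioned above.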

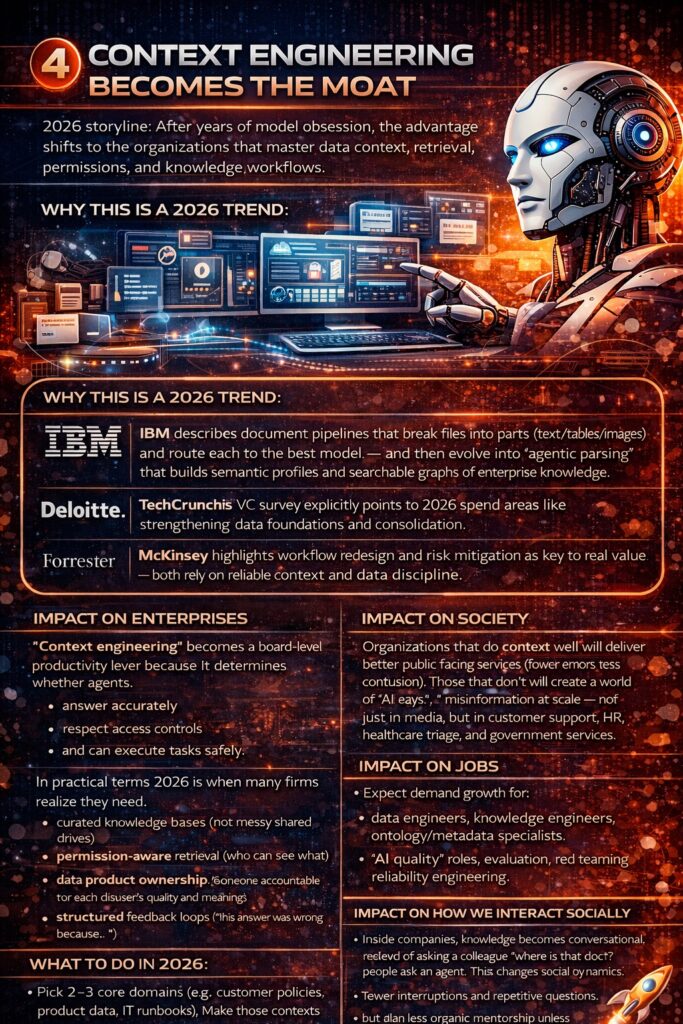

4) Context engineering becomes the moat

2026 storyline: After years of model obsession, the advantage shifts to the organizations that master data context, retrieval, permissions, and knowledge workflows.

Why this is a 2026 trend:

- IBM describes document pipelines that break files into parts (text/tables/images) and route each to the best model, and that then evolve into “agentic parsing” that builds semantic profiles and searchable graphs of enterprise knowledge. IBM

- TechCrunch’s VC survey explicitly points to 2026 spend areas like strengthening data foundations and consolidation. TechCrunch

- McKinsey highlights workflow redesign and risk mitigation as key to real value — both rely on reliable context and data discipline. McKinsey & Company

Impact on enterprises

“Context engineering” becomes a board-level productivity lever because it determines whether agents:

- answer accurately,

- respect access controls,

- and can execute tasks safely.

In practical terms, 2026 is when many firms realize they need:

- curated knowledge bases (not messy shared drives)

- permission-aware retrieval (who can see what)

- data product ownership (someone accountable for each dataset’s quality and meaning)

- structured feedback loops (“this answer was wrong because…”)
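Permission-aware retrieval comes down to one ordering decision: filter by access before ranking, so restricted text never reaches the model. The sketch below uses hypothetical `Document` and `retrieve` names and a naive keyword-overlap score in place of a real search index.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]


def retrieve(query: str, user_groups: Set[str], corpus: List[Document],
             k: int = 3) -> List[Document]:
    """Filter by access first, then rank by naive keyword overlap."""
    terms = set(query.lower().split())
    # Access check happens before any scoring.
    visible = [d for d in corpus if d.allowed_groups & user_groups]
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:k]]


corpus = [
    Document("hr-1", "parental leave policy for employees", frozenset({"all-staff"})),
    Document("hr-2", "salary bands and leave payout policy", frozenset({"hr"})),
    Document("it-1", "vpn reset runbook", frozenset({"all-staff"})),
]

staff_view = [d.doc_id for d in retrieve("leave policy", {"all-staff"}, corpus)]
hr_view = [d.doc_id for d in retrieve("leave policy", {"all-staff", "hr"}, corpus)]
```

The same query returns different documents depending on who asks: a regular employee never sees the HR-only document, even though it matches the query equally well.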

Impact on society

Organizations that do context well will deliver better public-facing services (fewer errors, less confusion). Those that don’t will create a world of “AI says…” misinformation at scale — not just in media, but in customer support, HR, healthcare triage, and government services.

Impact on jobs

Expect demand growth for:

- data engineers, knowledge engineers, ontology/metadata specialists

- “AI quality” roles: evaluation, red teaming, reliability engineering

- domain experts who can codify policies and edge cases into agent workflows Forrester

Impact on how we interact socially

Inside companies, knowledge becomes conversational: instead of asking a colleague “where is that doc?”, people ask an agent. That changes social dynamics:

- fewer interruptions and repetitive questions

- but also less organic mentorship unless leaders design for it

What to do in 2026:

- Pick 2–3 core domains (e.g., customer policies, product data, IT runbooks). Make those contexts pristine.

- Implement permission-aware retrieval and logging.

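- Treat internal knowledge like product UX: give each knowledge base an owner, measure whether people find correct answers, and fix what they actually search for.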

5) Smaller, domain-specific models win more workloads

2026 storyline: Many tasks won’t need giant frontier models. Enterprises increasingly choose right-sized models tuned for specific domains and functions.

Why this is a 2026 trend:

- Gartner forecasts that by 2027, more than 50% of GenAI models used by enterprises will be industry- or function-specific, up from ~1% in 2023 — pointing to a 2026–2027 shift toward specialization. Gartner

- IBM anticipates that “systems, not models” matter most, with model routing and smaller models delegating to bigger ones when needed, and highlights a shift toward domain-specific reasoning systems. IBM

Impact on enterprises

Enterprises will increasingly build portfolios:

- a strong general-purpose model for broad reasoning

- specialized models for regulated work (legal, finance, healthcare), customer service tone, code review, etc.

- local/edge models for latency and privacy needs

This is both a cost and a governance win: smaller models can be easier to control, audit, and deploy in constrained environments.
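The routing pattern (small model first, larger model only when needed) can be sketched as follows. Everything here is an assumption for illustration: the `route` function, the 0.8 threshold, and the two stub models, which return hard-coded confidence values. Real systems need a calibrated confidence signal, which is the hard part.

```python
from typing import Callable, Tuple

# A model here is any callable returning (answer, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]


def route(prompt: str, small: Model, large: Model,
          threshold: float = 0.8) -> Tuple[str, str]:
    """Try the small model first; escalate when its confidence is below threshold."""
    answer, confidence = small(prompt)
    if confidence >= threshold:
        return answer, "small"
    answer, _ = large(prompt)
    return answer, "large"


# Stub models for illustration only.
def small_model(prompt: str) -> Tuple[str, float]:
    if "refund" in prompt:
        return ("refund policy: 30 days", 0.95)
    return ("unsure", 0.2)


def large_model(prompt: str) -> Tuple[str, float]:
    return (f"detailed answer to: {prompt}", 0.9)


easy = route("what is the refund window?", small_model, large_model)
hard = route("compare two contract clauses", small_model, large_model)
```

Returning which model answered alongside the answer makes cost and quality tracking per route straightforward.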

Impact on society

More specialized models can improve equity and accessibility when tuned for:

- local languages

- specific cultural contexts

- domain-specific safety constraints

But specialization can also fragment truth: different “expert AIs” can disagree and confuse users unless sources and reasoning are made transparent.

Impact on jobs

Two pressures emerge:

- More demand for “AI translators” — people who can convert domain expertise into evaluation sets, rules, and tuning data.

- A premium on domain professionals who can validate AI outputs with deep context (not just generic knowledge).

Impact on how we interact socially

Expect more “brand-personality AI” and “profession-specific AI” in daily interactions — the AI you talk to at your bank will feel different from the one you talk to at your healthcare provider. This shifts trust: people start trusting specific AIs for specific tasks rather than “AI” as a whole. Forrester

What to do in 2026:

- Define a “model portfolio strategy” (don’t bet everything on one model).

- Build evaluation that reflects your real edge cases.

- Don’t tune without governance: track datasets, consent, and bias risk.

6) Synthetic data + privacy-enhancing tech become standard practice

2026 storyline: Data privacy constraints and data hunger collide — synthetic data and privacy-enhancing technologies (PETs) move from niche to default.

Why this is a 2026 trend:

- Gartner predicts that by 2026, 75% of businesses will use generative AI to create synthetic customer data, up from <5% in 2023. Gartner

- Forrester forecasts increased adoption and consolidation around privacy-preserving technologies, naming approaches like secure multiparty computation, runtime encryption, and synthetic data. Forrester

Impact on enterprises

Synthetic data becomes “infrastructure,” not experimentation:

- safer model training and testing in regulated industries

- faster product development and QA (especially when real data access is slow)

- easier cross-org collaboration (synthetic datasets for partners)

But the trap is false confidence: synthetic data can still encode bias, and governance still matters. Forrester explicitly frames synthetic data as useful but still requiring compliance discipline. Forrester

Impact on society

The upside: more innovation without exposing real people’s data — especially in healthcare, finance, and public-sector services.

The risk: synthetic data used to simulate reality can be weaponized for misinformation or to “launder” biased assumptions into systems.

Impact on jobs

- Growth in privacy engineering, data governance, and applied cryptography expertise.

- More cross-functional work between legal, security, analytics, and product teams because “data readiness” becomes strategic.

Impact on how we interact socially

As privacy-preserving tech becomes more common, people may experience:

- more personalization with less overt data collection

- more demand for transparency (“what did you use my data for?”)

That can improve trust — if organizations explain it clearly, and don’t hide behind technical jargon.

What to do in 2026:

- Identify where synthetic data can replace production data in dev/test/analytics now.

- Create a synthetic data policy: acceptable use, bias checks, traceability.

- Invest in PETs where cross-party data sharing creates competitive advantage.
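For dev/test fixtures, even a naive generator shows both the idea and the need for a bias check. The sketch below samples each column independently from observed values, which breaks row-level links but also destroys correlations, and it is not a privacy guarantee (rare values can still identify people). The `synthesize` and `max_share_gap` names are hypothetical, and the records are made up.

```python
import random
from collections import Counter
from typing import Dict, List

Row = Dict[str, object]


def synthesize(rows: List[Row], n: int, seed: int = 0) -> List[Row]:
    """Sample each column independently from its observed values."""
    rng = random.Random(seed)
    columns = list(rows[0].keys())
    pools = {col: [row[col] for row in rows] for col in columns}
    return [{col: rng.choice(pools[col]) for col in columns} for _ in range(n)]


def max_share_gap(real: List[Row], synthetic: List[Row], column: str) -> float:
    """Largest difference in a value's share between real and synthetic data."""
    real_counts = Counter(r[column] for r in real)
    synth_counts = Counter(r[column] for r in synthetic)
    values = set(real_counts) | set(synth_counts)
    return max(
        abs(real_counts[v] / len(real) - synth_counts[v] / len(synthetic))
        for v in values
    )


# Made-up records for illustration.
real_rows: List[Row] = [
    {"region": "north", "plan": "basic", "age_band": "18-30"},
    {"region": "south", "plan": "premium", "age_band": "31-50"},
    {"region": "north", "plan": "basic", "age_band": "51+"},
    {"region": "east", "plan": "basic", "age_band": "31-50"},
]

synthetic_rows = synthesize(real_rows, n=200, seed=42)
gap = max_share_gap(real_rows, synthetic_rows, "region")
```

A policy could set a threshold on a check like `max_share_gap` per sensitive column; production-grade synthetic data would use a purpose-built generator with formal privacy testing rather than this approach.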

7) AI regulation becomes operational reality

2026 storyline: Regulation shifts from “coming soon” to “in force,” and enterprises build compliance into product and operations — not PowerPoints.

Why this is a 2026 trend:

- The European Commission states the AI Act entered into force on 1 August 2024 and follows a staggered rollout, with major parts set to apply on 2 August 2026 and 2 August 2027. Digital Strategy

- The Commission also describes proposed amendments to adjust timing for high-risk rules (linked to standards availability) with capped delays, plus transition periods for detectability requirements. Digital Strategy

- Gartner predicts “AI-free” skills assessments become more common due to critical-thinking atrophy concerns, with organizations distinguishing independent thinking from AI dependence. Gartner

Impact on enterprises

Compliance becomes an engineering problem:

- documentation, traceability, risk management, and human oversight features get built into systems

- procurement requires AI disclosures, test results, and risk controls

- “AI literacy” isn’t optional: it becomes part of operational readiness and workforce training (and in some cases, policy obligations) Digital Strategy

Impact on society

The near-term societal effect is mixed:

- better transparency and safety in high-risk systems

- more friction (disclosures, consent, verification, audit processes)

- potential regional fragmentation (how AI behaves depends on jurisdiction)

Impact on jobs

Expect two simultaneous shifts:

- hiring growth: compliance specialists, AI auditors, risk officers, model governance leads

- hiring skepticism: more “AI-free” assessments or proctored evaluation methods in recruiting to gauge real skills Gartner

Impact on how we interact socially

Disclosure norms expand:

- “This chat may be recorded” becomes “This interaction may use AI.”

- People become more cautious about what they believe (and what they sign), especially when AI content is involved.

What to do in 2026:

- Map where your AI systems touch regulated/high-risk domains.

- Create an AI compliance playbook: documentation templates, monitoring, incident response, and training.

- Audit your customer-facing AI for disclosures and escalation to humans.

8) Security & identity enter the agent era

2026 storyline: Deepfakes, synthetic identities, and non-human “users” force a redesign of security, IAM, and trust.

Why this is a 2026 trend:

- Forrester predicts deepfakes become mainstream and that spending on deepfake detection technology will grow by 40% in 2026. Forrester

- IBM’s 2026 outlook argues agent adoption forces a rethink of identity and access management, as non-human identities proliferate and visibility/accountability becomes essential. IBM

- Forrester also expects consumer-built agents to create operational “bot floods,” including extreme spikes in call volumes — turning provenance and intent into urgent needs. Forrester

Impact on enterprises

Security practices expand from “protect employees” to “protect employees and agents.”

Key shifts include:

- Treating agents as identities: least privilege, credentials, rotation, monitoring

- New attack surfaces: prompt injection, tool misuse, data exfiltration via agent actions

- Deepfake defense in HR, finance approvals, and executive communications
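Treating an agent as an identity usually means scoped, short-lived credentials. The sketch below is a toy illustration using Python's standard `hmac` module; the token layout, the `issue_token` and `check_token` names, and the hard-coded secret are assumptions for the example. A real system would rely on an established identity provider and token format.

```python
import base64
import hashlib
import hmac
import json
from typing import Iterable

SECRET = b"demo-only-secret"  # assumption: in practice this comes from a secrets manager


def issue_token(agent_id: str, scopes: Iterable[str], now: float,
                ttl_seconds: int = 300) -> str:
    """Create a signed token listing the agent's scopes and an expiry time."""
    payload = json.dumps(
        {"sub": agent_id, "scopes": sorted(scopes), "exp": now + ttl_seconds},
        sort_keys=True,
    ).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return base64.urlsafe_b64encode(payload).decode() + "." + signature


def check_token(token: str, required_scope: str, now: float) -> bool:
    """Accept only untampered, unexpired tokens that carry the required scope."""
    try:
        encoded, signature = token.split(".")
        payload = base64.urlsafe_b64decode(encoded.encode())
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(payload)
    return now < claims["exp"] and required_scope in claims["scopes"]


token = issue_token("billing-helper", ["invoices:read"], now=1000.0)
read_ok = check_token(token, "invoices:read", now=1100.0)
pay_ok = check_token(token, "payments:send", now=1100.0)
expired_ok = check_token(token, "invoices:read", now=1301.0)
```

The short lifetime forces regular re-issuance, which approximates credential rotation, and the scope list keeps the agent to least privilege. Passing `now` explicitly keeps the example deterministic.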

2026 is the year many organizations add:

- voice/video verification steps for sensitive approvals

- internal “authenticity infrastructure” (signing, watermarking, provenance metadata) Forrester

Impact on society

We move into a “permanent skepticism” phase — trust is earned by verification, not assumed. That can reduce fraud long-term, but it also increases friction and anxiety. Forrester

Impact on jobs

- High demand for cybersecurity, identity specialists, fraud analysts, and incident responders.

- New training expectations for all employees: deepfake awareness, secure usage of AI tools, and verification etiquette.

Impact on how we interact socially

This may be the biggest “social” shift of 2026:

- People will increasingly ask: “Is this real?”

- Verification becomes part of relationships — from workplace instructions to family video calls.

- Social platforms and media will face increased authenticity pressure, especially as generative video quality rises. Deloitte

What to do in 2026:

- Build “human + agent identity” into IAM strategy.

- Add deepfake response playbooks to incident response.

- Train teams on verification protocols (especially finance, HR, exec assistants).

9) Search, shopping, and procurement shift to answer engines + agentic commerce

2026 storyline: discovery becomes summarized, conversational, and increasingly agent-driven — and commerce begins moving toward agent-to-agent negotiation.

Why this is a 2026 trend:

- Deloitte predicts daily usage of AI within search will be three times greater than any standalone AI tool in 2026, with many consumers seeing AI summaries as a normal part of search. Deloitte

- Forrester predicts major brands will unify “agentic commerce” experiences, and that 20% of B2B sellers will be forced to engage in agent-led quote negotiations. Forrester

- Gartner predicts a future where B2B buying becomes heavily intermediated by AI agents (their longer-term view reinforces 2026 preparation). Gartner

Impact on enterprises

Marketing and commerce leaders face a structural change:

- Traditional SEO becomes less dominant when answers are summarized by AI.

- Product data quality becomes mission-critical: if answer engines can’t understand your catalog, you disappear.

- Procurement and sales workflows will adapt to “bot buyers” and “bot sellers.”

Expect investment in:

- Answer engine optimization (AEO)

- structured product feeds + content provenance

- negotiation policies for agent-to-agent interactions Forrester

Impact on society

Information access becomes easier — but also more mediated. Whoever controls answer engines can shape what people believe and buy. That intensifies debates about platform power, manipulation, and transparency.

Impact on jobs

- Growth in hybrid roles: content strategists + data modelers; product ops + AI feed optimization.

- New frontline realities: call centers and support teams handle escalations from AI-mediated misunderstandings, and even deal with automated agent traffic. Forrester

Impact on how we interact socially

As people rely on AI summaries, we’ll see:

- less “link sharing” and more “AI said…” in conversation

- increased social conflict about reality (“my AI says X, yours says Y”)

- new norms: asking friends for sources, demanding receipts, and choosing trusted advisors (human or AI)

What to do in 2026:

- Treat product/customer data as a growth channel.

- Instrument how AI-driven search changes your funnel.

- Prepare for agent traffic (rate limiting, bot detection, intent classification).
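Rate limiting for agent traffic is often implemented as a token bucket. The version below is a minimal sketch with a hypothetical `TokenBucket` class; the clock is passed in as `now` so the behavior is easy to verify, and a real service would keep one bucket per client or agent identity.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    capacity: float           # maximum burst size
    refill_per_second: float  # sustained request rate
    tokens: float = 0.0       # current balance
    last_seen: float = 0.0    # timestamp of the previous request

    def allow(self, now: float) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        elapsed = max(0.0, now - self.last_seen)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_seen = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Burst of three requests at t=0, then one more a second later.
bucket = TokenBucket(capacity=2, refill_per_second=1, tokens=2)
results = [bucket.allow(now=t) for t in (0.0, 0.0, 0.0, 1.0)]
```

The bucket allows the first two requests, rejects the third in the burst, and accepts the fourth once a second of refill has accumulated.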

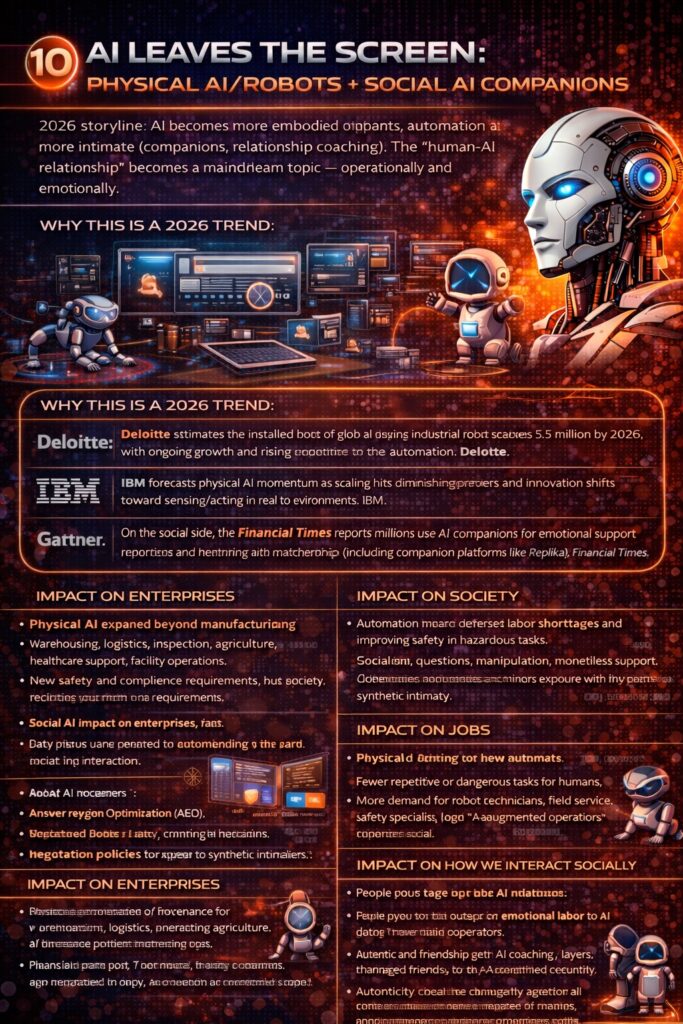

10) AI leaves the screen: physical AI/robots + social AI companions

2026 storyline: AI becomes more embodied (robots, automation) and more intimate (companions, relationship coaching). The “human-AI relationship” becomes a mainstream topic — operationally and emotionally.

Why this is a 2026 trend:

- Deloitte estimates the installed base of global industrial robots reaches 5.5 million by 2026, with ongoing growth and rising attention to automation. Deloitte

- IBM forecasts physical AI momentum as scaling hits diminishing returns and innovation shifts toward sensing/acting in real environments. IBM

- On the social side, the Financial Times reports millions use AI companions for emotional support and intimacy, highlighting AI’s growing role in relationships and matchmaking (including companion platforms like Replika). Financial Times

Impact on enterprises

Physical AI expands beyond manufacturing:

- warehousing, logistics, inspection, agriculture, healthcare support, and facility operations

- new safety and compliance requirements for systems that interact with the physical world

Social AI impacts enterprises too:

- HR policies must address AI companionship risks and workplace boundaries

- customer-facing brands must anticipate emotional dependency and safety concerns (especially in mental health-adjacent experiences)

Impact on society

Two big societal outcomes collide:

- Automation helps address labor shortages and improves safety in hazardous tasks.

- Social AI raises mental health and ethical questions: dependency, manipulation, monetization of loneliness, and minors’ exposure to “synthetic intimacy.” Financial Times

Impact on jobs

Physical AI changes labor demand:

- fewer repetitive or dangerous tasks for humans

- more demand for robot technicians, field service, safety specialists, and “AI-augmented operators”

Social AI changes “people jobs” too:

- therapists, educators, and counselors will increasingly encounter clients influenced by AI companions and AI advice.

Impact on how we interact socially

This is where 2026 can feel most alien:

- Some people will outsource emotional labor to AI (“talk it out with my bot first”).

- Dating and friendship get “AI coaching” layers (message drafting, advice, compatibility analysis). Financial Times

- Authenticity becomes central: people will want to know if they’re talking to a person, a bot, or a bot-assisted person — and what that means.

What to do in 2026:

- If you deploy robots: create safety + human factors programs (training, procedures, incident response).

- If you build social AI: implement strong guardrails, age-appropriate design, disclosures, and escalation paths.

- For everyone: build norms for “AI-mediated communication” (when it’s fine vs when it’s deceptive).

The 2026 Meta-Trend: Trust Becomes Infrastructure

Across all ten trends, one underlying shift defines 2026 more than any single technology:

Trust stops being a narrative — and becomes a system.

For the last decade, trust in technology was communicated through branding, policy statements, and intent. In 2026, that era ends. As AI systems move from advisory tools to autonomous actors — agents that decide, negotiate, execute, and speak on our behalf — trust must be designed, enforced, and continuously verified.

This is the real inflection point.

In 2026, trust is no longer a soft value. It is hard infrastructure.

Trust in outputs

AI systems must prove how an answer was generated, which context was used, and why a result can be relied upon. Evaluation, context engineering, provenance, and feedback loops become first-class engineering concerns — not afterthoughts.

Trust in operations

As agents multiply, enterprises must demonstrate control: clear ownership, auditable decision paths, enforceable policies, and real-time observability. Agent control planes, logging, escalation paths, and human override are no longer optional; they are operational prerequisites.

Trust in society

Deepfakes, synthetic identities, and AI-mediated information flows force a shift from assumed authenticity to verified reality. Regulation, detectability, disclosure, and accountability move from policy documents into runtime systems.

Skepticism becomes the default. Trust must be earned continuously.

Trust in relationships

As AI enters emotional, social, and relational spaces — companions, coaching, negotiation, and communication — the question is no longer “Is this helpful?” but “Is this honest, appropriate, and transparent?”

In a world of AI-mediated interaction, authenticity becomes a design choice.

The strategic reality of 2026

The defining competitive advantage of the next AI era will not be intelligence alone.

It will be trust at scale.

Organizations that treat trust as infrastructure — measurable, testable, and enforceable — will move faster, scale safer, and earn lasting legitimacy. Those that treat trust as a slogan will struggle under regulation, public skepticism, and operational risk.

2026 marks the transition from experimental AI to accountable AI.

And accountability, ultimately, is the foundation on which all sustainable intelligence is built.
