By Thorsten Meyer — February 2026 – Thorsten Meyer AI

Anthropic flagged more than 24,000 coordinated fake accounts in 2025, all probing Claude for the same thing: its reasoning traces. That was not spam. That was a training pipeline.

By early 2026, U.S. regulators and Anthropic’s own threat intelligence have converged on a single conclusion. A cohort of Chinese labs has been building frontier-class open models without owning frontier hardware and without funding frontier research. They rent the chips. They distill the reasoning. And until January 2026, the entire operation was legal on paper.

This article names the playbook, maps the two unauthorized pipelines, and tells you what the Remote Access Security Act actually closes — and what it does not.

Executive Summary

| Resource Required to Build a Frontier Model | How Western Labs Acquire It | How the Rent-and-Distill Cohort Acquires It |
|---|---|---|
| High-quality reasoning data | Thousands of PhDs, RLHF at scale, $100M+ annual | Siphoned from Claude via 24,000+ fake accounts |
| Frontier compute (H100/A100 clusters) | Direct NVIDIA purchases, captive data centers | Rented from AWS, Azure, GCP via offshore shells |
| Base model weights | Proprietary pre-training at $100M–$1B | Downloaded free from Hugging Face (Llama, Qwen) |
| Legal exposure | Not applicable | Zero, until January 2026 |

The arithmetic is embarrassing for the West. The two most expensive inputs to a frontier model — reasoning data and compute — were both available to any adversary with a credit card and a Singapore shell company.

Pipeline 1 — Industrial-Scale Distillation

Distillation is not new. Academic labs have used teacher-student training for over a decade. What is new is the scale and the target: using a production frontier model as the unwitting teacher, at industrial volume, through a consumer API.

The mechanics, as described in Anthropic’s threat reporting:

The Siphon. Thousands of coordinated accounts send engineered prompts. Not “what is 2+2,” but “solve this competition-math problem step by step, showing every inference.” The goal is the chain-of-thought trace — the exact reasoning pattern that makes Claude capable on hard problems.

The Dataset. Millions of prompt-plus-trace pairs are cleaned, deduplicated, and formatted into supervised fine-tuning data. A student model does not need to invent reasoning. It needs to memorize Claude’s.

The Evasion. Residential proxy networks route API calls through the Wi-Fi routers of ordinary households in the U.S. and Europe. Rate limits designed for a single retail user do nothing against 24,000 distinct residential IPs.
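A back-of-the-envelope calculation shows why per-account limits fail against a fleet. The sketch below simply multiplies a per-account cap by the number of accounts. The 24,000 figure comes from the text; the daily cap and the campaign length are illustrative assumptions, not Anthropic's actual limits or reported numbers.

```python
# Illustrative arithmetic only: the per-account cap and campaign length are
# assumptions, not figures from Anthropic's reporting.

def aggregate_requests(accounts: int, per_account_daily_cap: int, days: int) -> int:
    """Total requests a fleet can send while every account stays under its cap."""
    return accounts * per_account_daily_cap * days

ACCOUNTS = 24_000            # from the article
ASSUMED_DAILY_CAP = 100      # hypothetical per-account limit
ASSUMED_CAMPAIGN_DAYS = 30   # hypothetical campaign length

total = aggregate_requests(ACCOUNTS, ASSUMED_DAILY_CAP, ASSUMED_CAMPAIGN_DAYS)
print(f"{total:,} requests without any single account exceeding its cap")
```

Under these assumed values the fleet reaches tens of millions of requests while each account looks like an ordinary retail user, which is why the limit has to be enforced on behavior across accounts rather than on any one of them.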

The uncomfortable truth: every inference endpoint that returns a long reasoning trace is a training dataset in transit. The better your model is at showing its work, the more extractable it is.

Pipeline 2 — The Cloud Loophole

Export controls on H100s and A100s blocked the boxes. They did not block the logins.

The shell game, as U.S. Commerce has now documented:

1. Register a cloud account under a Singapore or UAE holding company.

2. Rent a cluster of 4,000–8,000 H100s in a U.S. or allied data center.

3. Upload the distilled golden dataset plus an open base model (Qwen 2.5, Llama 3, DeepSeek).

4. Run the fine-tuning job on U.S. soil, billed in U.S. dollars, on U.S. chips.

5. Download the final weights as a single file back to Shenzhen.

Until January 2026, the Bureau of Industry and Security had no jurisdiction over step 4. The chips never left the country. Only their output did. And a set of model weights was not, under the pre-2026 export framework, a commodity.

The Frankenstein Model

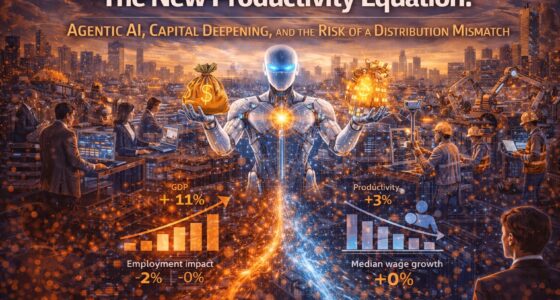

Combine the two pipelines and the output is exactly what the open benchmarks have been showing since late 2025.

| Component | Source | Cost to the Chinese Lab |
|---|---|---|
| Base architecture | Llama 3 / Qwen 2.5 open weights | €0 |
| Advanced reasoning behavior | Claude chain-of-thought traces | €2M–5M in API fees |
| Training compute | Hyperscaler rental, 4,000 H100s, two weeks | €8M–15M |
| PhD research staff | Not required | €0 |
| Total | | €10M–20M |

Western labs spend €100M to €1B to ship a comparable model.
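The gap can be checked directly from the table. The sketch below sums the per-component ranges and compares them with the Western spend quoted in the text. All figures are the article's own; only the function and variable names are introduced here for illustration.

```python
# Cost ranges in millions of euros, copied from the table above.
COMPONENTS = {
    "base architecture": (0, 0),
    "reasoning traces (API fees)": (2, 5),
    "training compute": (8, 15),
    "PhD research staff": (0, 0),
}
WESTERN_SPEND = (100, 1_000)  # EUR 100M to 1B, from the text


def total_range(components):
    """Sum the low ends and the high ends of every component range."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


def cost_advantage(western, cohort):
    """Smallest gap: cheapest Western build vs. priciest cohort build.
    Largest gap: priciest Western build vs. cheapest cohort build."""
    return western[0] / cohort[1], western[1] / cohort[0]


low, high = total_range(COMPONENTS)
min_ratio, max_ratio = cost_advantage(WESTERN_SPEND, (low, high))
print(f"Cohort total: EUR {low}M to {high}M")
print(f"Cost advantage: {min_ratio:.0f}x to {max_ratio:.0f}x")
```

On the article's numbers, the cohort builds for somewhere between one fifth and one hundredth of the Western cost.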

The point is not that distillation works. The point is that the two most expensive inputs in AI — reasoning data and frontier compute — were quietly outsourced to the very country the export regime was designed to exclude.

What the Remote Access Security Act Actually Changes

In January 2026, the U.S. House passed the Remote Access Security Act (RASA). It extends the export framework from the chips themselves to cloud access to those chips. The operative mechanisms:

- KYC at the hyperscaler. AWS, Azure, GCP, Oracle, and CoreWeave must verify the ultimate beneficial owner of any account consuming above a threshold of regulated compute.

- Workload attestation. Training jobs above a FLOP threshold (reported around 10^25) must be logged with customer identity and general workload description.

- Offshore-shell backstop. Singapore and UAE intermediary structures are specifically named. Passthrough subsidiaries no longer provide a safe harbor.
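To get a feel for the workload-attestation threshold, it helps to estimate how many FLOPs a rented cluster actually delivers. The sketch below is a rough estimate, not a compliance calculation. The per-GPU peak (roughly the dense BF16 figure for an H100 SXM) and the 40% utilization are assumptions, and the threshold is the "around 10^25" value reported in the text.

```python
# Rough training-compute estimate. All hardware numbers are assumptions.
ASSUMED_PEAK_FLOPS_PER_GPU = 989e12  # approx. dense BF16 peak for one H100 SXM
ASSUMED_UTILIZATION = 0.40           # fraction of peak sustained in practice
REPORTED_THRESHOLD = 1e25            # "around 10^25" per the text


def training_flops(gpus: int, days: float,
                   peak: float = ASSUMED_PEAK_FLOPS_PER_GPU,
                   utilization: float = ASSUMED_UTILIZATION) -> float:
    """Total floating-point operations delivered over the whole run."""
    seconds = days * 24 * 60 * 60
    return gpus * peak * utilization * seconds


def crosses_threshold(flops: float, threshold: float = REPORTED_THRESHOLD) -> bool:
    return flops >= threshold


for gpus in (4_000, 8_000):
    flops = training_flops(gpus, days=14)
    print(f"{gpus} GPUs x 14 days: {flops:.2e} FLOPs, "
          f"crosses threshold: {crosses_threshold(flops)}")
```

Under these particular assumptions, a two-week run on 4,000 to 8,000 H100s comes out at a few times 10^24, so where the final threshold is set, and how utilization is measured, matters a great deal for which jobs get logged.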

What RASA does not fix:

- Pipeline 1 is still legal. API terms-of-service violations are civil, not criminal. Anthropic can ban accounts; it cannot stop a state actor from creating new ones faster than detection scales.

- The stock of Frankenstein models is already distributed. Models trained this way before RASA are already on Hugging Face, and weights do not unpublish.

- Non-U.S. hyperscalers are not bound. European, Gulf, and East Asian clouds are now receiving migration inquiries. Expect the rental market to re-route within 12 months.

What Leaders Should Do This Quarter

1. If you run an API-accessible frontier model: treat every long reasoning trace as an export-controlled artifact. Rate limiting and IP reputation are table stakes. Behavioral detection on coordinated prompt patterns is the new minimum.

2. If you train models on a hyperscaler: build the KYC documentation before your provider asks for it. RASA compliance will arrive as a contract amendment, not a negotiation.

3. If you consume open-weight models in regulated industries (finance, healthcare, defense): add a provenance clause to procurement. You cannot afford to inherit liability for a model whose training data was siphoned from a competitor’s API.
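As a concrete illustration of behavioral detection on coordinated prompt patterns, the sketch below groups prompts by a coarse template and flags templates shared by several distinct accounts. It is a minimal sketch under stated assumptions: the function names, the `min_accounts` cutoff, and the sample events are all hypothetical, and a production system would use far richer signals than number masking.

```python
import re
from collections import defaultdict


def prompt_template(prompt: str) -> str:
    """Reduce a prompt to a coarse template: lowercase, numbers masked, spaces collapsed."""
    text = prompt.lower()
    text = re.sub(r"\d+(\.\d+)?", "<num>", text)
    return re.sub(r"\s+", " ", text).strip()


def flag_coordinated_templates(events, min_accounts=3):
    """events: iterable of (account_id, prompt) pairs.

    Returns {template: set_of_account_ids} for every template used by at
    least `min_accounts` distinct accounts (a hypothetical cutoff).
    """
    accounts_by_template = defaultdict(set)
    for account_id, prompt in events:
        accounts_by_template[prompt_template(prompt)].add(account_id)
    return {t: a for t, a in accounts_by_template.items() if len(a) >= min_accounts}


# Hypothetical sample traffic.
events = [
    ("acct-1", "Solve step by step, showing every inference: 12 * 7 + 3"),
    ("acct-2", "Solve step by step, showing every inference: 45 * 9 + 8"),
    ("acct-3", "solve step by step,  showing every inference: 5 * 11 + 2"),
    ("acct-4", "What is a good name for a bakery?"),
]

flagged = flag_coordinated_templates(events)
for template, accounts in flagged.items():
    print(sorted(accounts), "->", template)
```

The design choice is to key on the shape of the prompt rather than on the account or IP address, since both of those are cheap for a coordinated operator to rotate.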

The Strategic Read

Export controls assumed that frontier AI required physical possession of frontier hardware. That assumption died in 2023. The Rent-and-Distill playbook exposed the gap. RASA closes part of it. The remainder — ongoing distillation, non-U.S. clouds, already-released open weights — will require a different policy architecture entirely.

The deeper lesson for Western AI labs is strategic, not regulatory. A model that reasons well in public is a model that teaches well in private. The moat is not the weights. The moat is whatever you refuse to show.

The next frontier model China ships was trained on your API bill and your data center floor. The only question is whether you noticed in time to bill them for it.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Sources

- Anthropic, Threat Intelligence Report: Coordinated Distillation Attempts at Scale (2025 Q4)

- U.S. Bureau of Industry and Security, Advisory on Cloud-Based Access to Controlled Compute (2025-11)

- H.R. 4076, Remote Access Security Act of 2026 (passed House 2026-01)

- Reuters, How Chinese labs are training on U.S. cloud infrastructure (2025-12)

- Investing.com, Commerce weighs cloud access in new export framework (2025-10)

- Financial Times, The distillation problem: when your API becomes the training set (2026-01)