Introduction

On 23 October 2025, Anthropic announced that it would dramatically expand its use of Google Cloud Tensor Processing Units (TPUs). The company plans to deploy up to one million TPUs for training and running its Claude models by 2026. Analysts estimate that this expansion is worth tens of billions of dollars and will bring well over a gigawatt of new computing capacity online (eenewseurope.com), roughly the capacity of a large nuclear power plant. According to Reuters, one gigawatt of AI compute can cost around US$50 billion (reuters.com). This is one of the largest single commitments to specialized AI hardware and signals a new phase in the infrastructure arms race among foundation‑model providers.

Anthropic already uses Amazon’s Trainium chips and NVIDIA GPUs; the new deal deepens the company’s relationship with Google while maintaining a multi‑platform compute strategy (eenewseurope.com). Anthropic’s CFO, Krishna Rao, said that the expansion is needed to meet exponentially growing demand from customers ranging from Fortune 500 companies to AI‑native startups (eenewseurope.com). Thomas Kurian, CEO of Google Cloud, noted that Anthropic chose TPUs because of their strong price‑performance and efficiency (eenewseurope.com). As the number of enterprise customers using Claude models exceeds 300,000, with large accounts growing nearly seven‑fold year‑over‑year (eenewseurope.com), acquiring massive computing capacity is essential for Anthropic to remain competitive.

The graphic below illustrates how central AI compute resources enable a wide range of industries:

Market Impact and Competitive Dynamics

Acceleration of the AI Infrastructure Arms Race

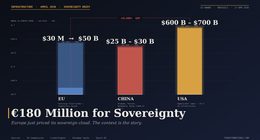

- Enormous scale and capital intensity. The commitment to deploy up to a million TPUs underscores how capital‑intensive AI has become. Reuters reports that a gigawatt of AI compute can cost roughly US$50 billion (reuters.com). A deal worth “tens of billions of dollars” shows that foundation‑model developers are now competing not only through algorithms but through massive infrastructure build‑outs.

- Price‑performance and efficiency advantages. Google executives emphasised that TPUs provide favourable price‑performance and energy efficiency compared with general‑purpose GPUs (eenewseurope.com). This expands the competitive landscape: cloud providers that can offer proprietary accelerator chips (e.g., Google’s TPUs, Amazon’s Trainium) may challenge NVIDIA’s dominance in AI hardware while giving customers alternatives amid GPU shortages and supply‑chain constraints.

- Diversification and bargaining power. By spreading compute across TPUs, Trainium and GPUs, Anthropic reduces risk of vendor lock‑in. AI Business notes that multi‑cloud strategies help negotiate better pricing, avoid single‑supplier bottlenecks, and ensure availability (aibusiness.com). This move pressures other model providers to pursue similar diversification strategies.

- Pressure on rivals. Anthropic’s gigawatt‑scale commitment increases competitive pressure on OpenAI, Meta and other foundation‑model developers. The Reuters report mentions that OpenAI is pursuing deals to secure 26 gigawatts of computing capacity (reuters.com). The race to secure compute will shape which players can train the largest models and offer the best services.

- Energy consumption and sustainability. Bringing “well over a gigawatt” of AI compute online raises concerns about power consumption and environmental impact. Cloud providers must invest in efficient data centres and renewable‑energy offsets to mitigate the carbon footprint. Google claims its TPUs are energy‑efficient and that it has developed the Ironwood TPU offering 42.5 exaflops per pod with improved efficiency (eenewseurope.com). Nevertheless, the scale of this deployment intensifies the conversation around AI’s energy dilemma.
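To give a sense of how the scale figures quoted above relate to one another, here is a rough back‑of‑envelope sketch. It uses only the numbers cited in this article (roughly US$50 billion per gigawatt, up to one million TPUs, and capacity of at least one gigawatt). The function names and the capacity scenarios above 1 GW are illustrative assumptions. The per‑chip figure is a facility‑level average that would include cooling, networking and other overhead, not a chip specification, and none of this reflects the actual terms of the deal.

```python
# Back-of-envelope arithmetic using the rough public estimates quoted in the article.
# These are illustrative orders of magnitude, not the actual terms of the deal.

COST_PER_GW_USD = 50e9   # Reuters estimate: ~US$50 billion per gigawatt of AI compute
MAX_TPUS = 1_000_000     # "up to one million TPUs"


def implied_cost_usd(capacity_gw: float, cost_per_gw: float = COST_PER_GW_USD) -> float:
    """Total build-out cost implied by a given capacity at a flat cost per gigawatt."""
    return capacity_gw * cost_per_gw


def implied_watts_per_chip(capacity_gw: float, chips: int = MAX_TPUS) -> float:
    """Average facility-level power budget per chip (includes all overhead)."""
    return capacity_gw * 1e9 / chips


if __name__ == "__main__":
    # 1.0 GW is the lower bound from the article ("well over a gigawatt");
    # 1.5 and 2.0 GW are hypothetical scenarios chosen only for illustration.
    for gw in (1.0, 1.5, 2.0):
        cost_bn = implied_cost_usd(gw) / 1e9
        watts = implied_watts_per_chip(gw)
        print(f"{gw:.1f} GW -> ~US${cost_bn:.0f}B, ~{watts:.0f} W per chip (facility-level)")
```

At the 1 GW lower bound, the Reuters per‑gigawatt estimate implies a figure on the order of US$50 billion, which is consistent with the “tens of billions of dollars” characterisation, and an average facility‑level budget of about 1 kW per chip if all one million TPUs were deployed.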

Implications for Cloud and Chip Providers

- Google Cloud gains a flagship customer that validates its TPU technology. TechRepublic notes that Anthropic’s choice demonstrates the strong price‑performance of TPUs (eenewseurope.com). This may encourage other AI developers to adopt TPUs, eroding NVIDIA’s near‑monopoly in AI accelerators.

- Amazon Web Services (AWS) remains Anthropic’s primary training partner, and the two companies are collaborating on Project Rainier, a supercomputer built with hundreds of thousands of chips (eenewseurope.com). This highlights the competitive balancing act: by staying with AWS while expanding with Google, Anthropic keeps both vendors invested in meeting its needs.

- NVIDIA, the dominant GPU supplier, faces pressure as companies explore alternative chips. Analysts cited in AI Business warn that if TPUs deliver better price‑performance, more enterprises could migrate away from GPUs (aibusiness.com). However, GPUs remain essential for a wide range of workloads, and NVIDIA continues to innovate.

- Other AI startups and model providers may be forced to secure similar compute commitments or partner with multiple clouds to remain competitive. Smaller providers without access to such scale may focus on niche models or differentiate through domain specialisation.

How Vertical Industries Could Benefit

Anthropic’s expanded compute capacity can unlock more powerful versions of the Claude models and enable bespoke fine‑tuning for industry‑specific use cases. Below, we examine how major verticals could leverage these advancements and how competitive dynamics might shift within each sector.

Retail & E‑Commerce

- Enhanced AI shopping assistants and recommendation engines. Generative AI models already help retailers analyse sales data and optimise inventory (hatchworks.com), and conversational agents assist shoppers. With increased compute, Claude models can handle more complex queries, integrate multimodal data (text, images, voice) and provide real‑time, personalised assistance for millions of customers simultaneously.

- Improved product search and visual recommendations. AI can generate descriptive text and classify images to improve product discoverability. A large model backed by a gigawatt of compute can deliver more accurate search results and cross‑sell suggestions.

- Competitive landscape. Retailers currently rely heavily on Amazon’s AI services (e.g., AWS and Alexa). Anthropic’s partnership with Google may allow retailers to access high‑performing models on Google Cloud or through multi‑cloud deployments, increasing competition with Amazon in retail AI. Smaller e‑commerce platforms could differentiate through better AI‑powered personalisation.

Healthcare & Life Sciences

- AI‑driven diagnostics and medical imaging. AI models can analyse medical records and radiology images to detect anomalies and support diagnoses (hatchworks.com). Larger models trained on TPUs can improve accuracy, reduce false positives and support early detection of diseases.

- Drug discovery and genomics. Generative models simulate molecular interactions and predict drug‑target binding (hatchworks.com). Bigger models with more compute can explore larger chemical spaces, accelerating discovery and enabling personalised medicine based on genetic profiles.

- Precision treatment plans. AI can analyse patient histories and genetics to recommend personalised treatment (hatchworks.com). More compute enables models to incorporate diverse data types (genomics, wearables) and maintain high‑throughput inference in clinical settings.

- Competition and regulation. Healthcare AI is heavily regulated; reliability and bias mitigation are critical. Anthropic emphasises safety research, and new capacity will support extensive alignment testing (eenewseurope.com). Competitors like Google’s Med‑PaLM and specialized startups (e.g., DeepMind’s medical spin‑offs) will vie for partnerships with healthcare providers. Access to multi‑cloud infrastructure could reduce dependence on a single vendor and encourage competitive pricing.

Financial Services

- Risk assessment and fraud detection. AI models analyse transactional data to flag suspicious activity and assess credit risk (hatchworks.com). Larger, more sophisticated models can better detect complex fraud patterns and incorporate macro‑economic signals.

- Algorithmic trading and market analysis. Generative models that summarise news and predict market sentiment can give traders an edge (hatchworks.com). High‑capacity compute allows for faster model updates and more accurate predictions.

- Customer service and personalised banking. AI chatbots provide 24/7 support and can offer tailored financial advice (hatchworks.com). With bigger models, these assistants will handle nuanced queries, cross‑sell financial products, and maintain compliance.

- Competition. Established banks are competing with fintech startups that leverage AI. Anthropic’s expanded compute could allow fintechs to access high‑quality models through API services on Google Cloud, challenging banks that build models in‑house. However, banks may prefer multi‑cloud deployments for redundancy and regulatory reasons.

Marketing & Advertising

- Automated content generation and localisation. Marketers use AI to draft ads, product descriptions and campaigns (hatchworks.com). Larger models can generate more creative and context‑aware content, reducing the time to market and enabling global localisation across languages.

- Targeted advertising and customer insights. AI tools derive insights from consumer data to optimise ads (hatchworks.com). More compute allows models to integrate real‑time signals (clickstream, social media) and test numerous campaign variants rapidly.

- Competitive dynamics. Google and Meta both offer advertising platforms; Meta’s generative AI for advertisers enables rapid creation of ad variants (hatchworks.com). Anthropic’s expanded capacity could allow it to offer AI copywriting and campaign‑optimisation tools integrated with Google Cloud or third‑party marketing platforms, increasing competition with Meta and startups like Jasper.

Manufacturing & Industrial IoT

- Predictive maintenance. AI models analyse sensor data from machines to predict failures; Deloitte estimates that predictive maintenance boosts productivity by 25% and reduces breakdowns by 70% (hatchworks.com). Larger models can fuse sensor data, maintenance records and environmental conditions to deliver more accurate predictions in real time.

- Product design and rapid prototyping. Generative models can create and refine product designs based on requirements (hatchworks.com). Greater compute enables exploring more complex design spaces and performing simulations faster.

- Supply‑chain optimisation. AI can analyse data from suppliers and logistics to optimise inventory and delivery (hatchworks.com). Higher capacity allows for dynamic re‑optimisation across global operations.

- Competition. Manufacturers typically work with industrial IoT vendors like Siemens and PTC. Anthropic’s improved models could be embedded in such platforms via API calls, challenging existing predictive maintenance tools. The price‑performance of TPUs may lower operational costs and encourage adoption by mid‑size manufacturers.

Education & E‑Learning

- Personalised learning and tutoring. AI systems can tailor curricula based on student performance and learning styles (hatchworks.com). With more compute, models can handle multi‑modal inputs (text, voice, images) and generate adaptive learning paths for large cohorts.

- Automated grading and feedback. AI can grade assignments and provide instant feedback, freeing educators to focus on mentorship (hatchworks.com). More powerful models can evaluate open‑ended responses, reasoning explanations and creative work.

- Language learning and accessibility. Tools like Duolingo use generative AI to allow students to practise conversation with AI “characters” (hatchworks.com). Larger models can support more languages, accent recognition and cultural nuances, making education more inclusive.

- Competitive landscape. Ed‑tech providers (Coursera, Chegg, Udemy) are incorporating generative AI. Anthropic’s multi‑cloud delivery may allow schools to choose infrastructure based on data residency and cost. Competition will revolve around content quality, data privacy and integration with learning management systems.

Entertainment & Media

- Content creation. AI systems can generate text, music, art and video (hatchworks.com). Larger models can produce higher‑fidelity and more coherent media, enabling new forms of storytelling and interactive experiences.

- Video editing and localisation. AI tools can analyse footage, automatically cut scenes and suggest engaging sequences (hatchworks.com). With more compute, these systems can edit longer content, adapt to different cultures and languages, and generate subtitled or dubbed versions in real time.

- Game development and interactive media. Generative AI can create game environments, characters and narratives, and enable adaptive gameplay (hatchworks.com). Larger models might support open‑world games with dynamic storylines and natural language interactions with non‑player characters.

- Competition. Entertainment giants (Netflix, Disney, video‑game studios) are all investing in generative AI. Anthropic’s compute expansion enables it to offer high‑end generative media APIs that could compete with OpenAI’s creative tools and startups like Runway. Multi‑cloud delivery ensures content creators can choose infrastructure to meet latency requirements.

Energy & Utilities

- Smart grid optimisation. AI models predict demand, integrate renewable energy and reroute electricity (hatchworks.com). Larger models trained on more data can improve forecasting accuracy, reducing waste and enhancing grid stability.

- Renewable integration and forecasting. Companies like Gridmatic build models of the U.S. electricity grid at a nodal level (hatchworks.com). Enhanced compute capacity could allow AI systems to simulate complex interactions across thousands of nodes and weather patterns, supporting better integration of solar and wind power.

- Environmental monitoring. AI analyses satellite and sensor data to monitor air quality and emissions (hatchworks.com). Larger models can detect subtler patterns and anomalies, aiding regulators and utilities.

- Competition and sustainability. Energy firms adopt AI from vendors like Palantir and C3 AI. Anthropic’s improved models could enter this market through partnerships with utilities and governments. However, the energy consumption of AI data centres (potentially >1 GW) raises sustainability concerns; utilities may favour providers that offset energy use with renewables.

Agriculture & Food Production

- Precision agriculture. AI processes data from sensors, drones and satellites to create maps for optimising irrigation, fertilizer and planting (hatchworks.com). Larger models can integrate more data sources (soil sensors, climate forecasts) and provide granular recommendations to farmers.

- Disease detection and crop management. Computer vision models identify crop diseases early by analysing images (hatchworks.com). More compute allows training on larger datasets, improving detection accuracy across diverse plant species.

- Yield prediction and logistics. AI predicts yields using weather, soil and historical data (hatchworks.com), helping farmers plan storage and distribution. High‑capacity models can update predictions in real time as conditions change.

- Competition. Agriculture technology providers (John Deere, Bayer’s digital division) incorporate AI into farm equipment. Access to Anthropic’s models via multi‑cloud infrastructure could reduce entry barriers for smaller ag‑tech startups. The ability to run AI models on the edge (e.g., on farm machinery) will influence which providers gain market share.

Cross‑Vertical Considerations

- Safety and alignment testing. Anthropic emphasises that increased compute will enable more safety testing and research (eenewseurope.com). For regulated industries like healthcare and finance, rigorous safety and bias evaluation is essential.

- Data privacy and sovereignty. Different verticals operate under varying data‑governance regimes (e.g., HIPAA in healthcare, GDPR in Europe). Multi‑cloud strategies allow organisations to choose where data resides, but they also complicate compliance.

- Skill requirements and workforce impact. Deploying large models requires specialised expertise. Industries must invest in training and reskilling workers to leverage these tools while mitigating potential job displacement.

- Energy use and sustainability. The expansion’s gigawatt‑scale energy requirement may draw scrutiny from governments and environmental groups. Vertical industries seeking to reduce carbon footprints must consider whether the benefits of AI justify the energy costs.

Conclusion

Anthropic’s partnership with Google Cloud to deploy up to one million TPUs represents a landmark escalation in the AI compute arms race. By securing gigawatt‑scale capacity at tens of billions of dollars, Anthropic aims to maintain a competitive edge, improve model performance and meet exploding customer demand. The deal validates Google’s TPU technology, challenges NVIDIA’s GPU dominance and exemplifies the benefits of a multi‑cloud, multi‑chip strategy. However, the massive energy footprint raises sustainability questions.

For vertical industries—from retail and healthcare to energy and agriculture—the expanded compute capacity promises more powerful, tailored AI capabilities, enabling deeper insights, automation and customer engagement. Businesses must assess how to integrate these models responsibly, weigh vendor choices and invest in data governance and workforce preparation. Ultimately, Anthropic’s expansion is not just about more chips; it signals a shift toward computational scale as a key differentiator in the AI market, reshaping competition and opportunity across the economy.