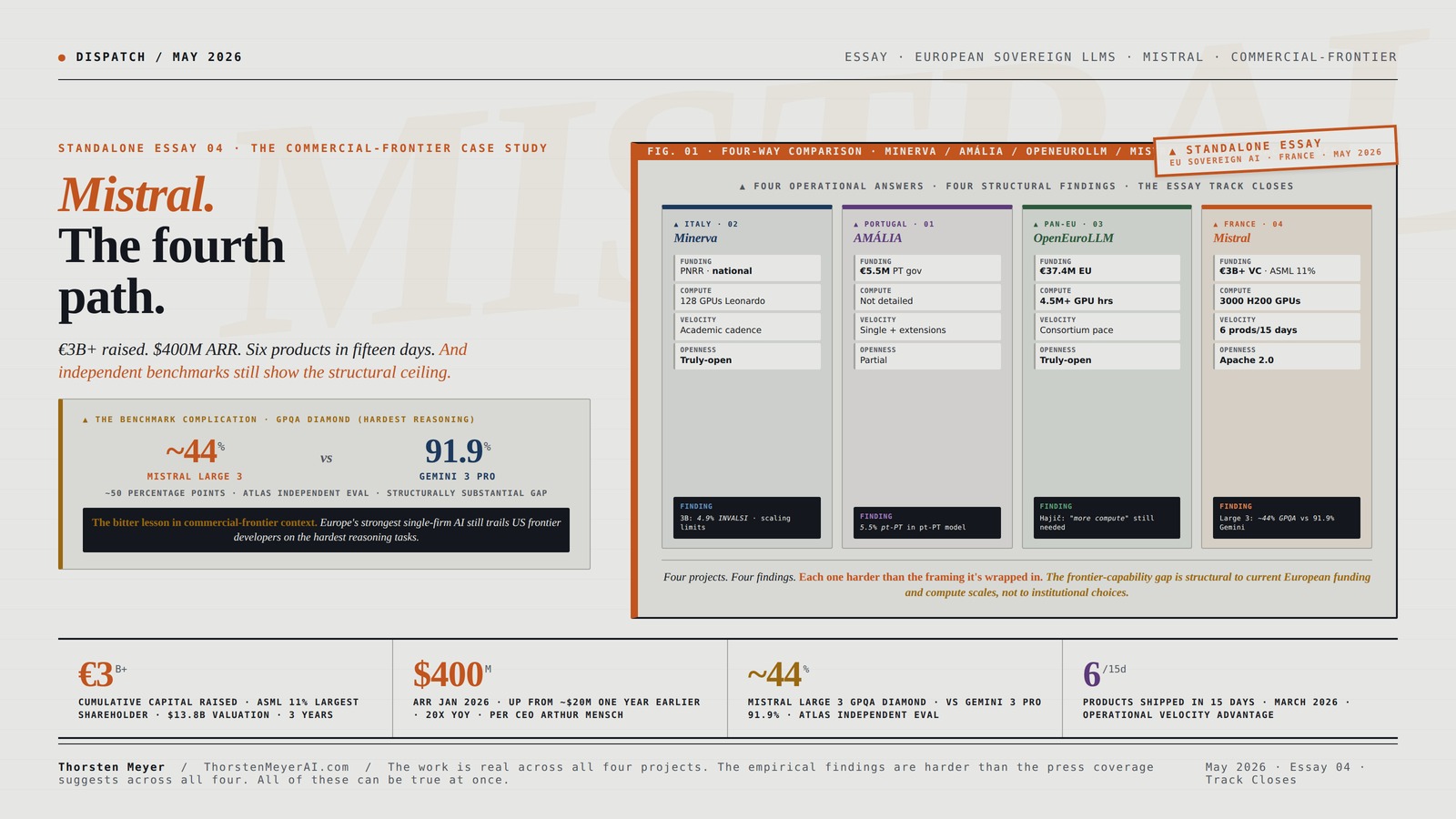

$830M raised in March 2026 for new data centers near Paris and in Sweden. ARR up from ~$20M to $400M in twelve months. Six products shipped in fifteen days. Mistral Large 3 trained from scratch on 3,000 NVIDIA H200 GPUs. And independent benchmarks put it at ~40% on AIME 2025, still well behind Gemini 3 Pro, GPT-5.4, and Claude Opus 4.6 on the hardest reasoning tasks. This is what the commercial-frontier answer to the European sovereign-LLM question actually looks like.

By Thorsten Meyer — May 2026

This is the fourth standalone essay in the European sovereign-LLM track. Essays 01-03 walked the three institutional answers: AMÁLIA (Portugal · national continuation), Minerva (Italy · national from-scratch), and OpenEuroLLM (pan-European consortium). Each of those answers operates within academic-and-state institutional architecture. This piece is the structural counter-case. Mistral is the commercial-frontier answer — venture-funded, Paris-headquartered, French, deliberately not in the OpenEuroLLM consortium. Where the prior three answers all operate at academic-budget scale, Mistral operates at venture-capital scale. Where the prior three answers all share a methodological commitment to open data and consortium collaboration, Mistral commits to open weights under Apache 2.0 but treats training data and methodology as commercial trade secrets.

The structural editorial argument I’ve been building across the track: each European sovereign-LLM project represents a different bet about what institutional structure, funding model, and architectural commitment produces results that justify the investment. Italy bet national. Portugal bet continuation. The EU bet consortium. Mistral bet venture-funded commercial-frontier. And the empirical results are now visible across all four answers at the same time.

The headline finding of this piece: Mistral is by every operational measure Europe’s strongest single-firm AI play. $400M ARR (20x growth in 12 months). $13.8B valuation. ASML as largest shareholder at 11%. Six products in March 2026 alone. Mistral Large 3 trained on 3,000 H200 GPUs. Apache 2.0 licensing on nearly the entire product line. Le Chat free tier reaching market scale. Enterprise customers including ASML, ESA, and CMA CGM. And independent benchmarks still place Mistral Large 3 behind Gemini 3 Pro, GPT-5.4, and Claude Opus 4.6 on the hardest reasoning evaluations. The commercial-frontier path produces real results, real revenue, and real European AI sovereignty value. It is also structurally constrained — just by different things than the academic-and-state answers are.

The bitter lesson surfacing in commercial-frontier context: Mistral has substantially more capital, compute, and execution velocity than any other European AI actor. The structural gap with US frontier developers is smaller than the gap any consortium or national project faces. But it is still a gap. This is structurally important because it implies the European sovereign-AI strategic question is not just “which institutional model produces results” but “is any European institutional model — including the commercial-frontier model with venture-capital backing — sufficient to close the gap with US frontier developers on capability at the highest end?” Mistral’s empirical results suggest the answer may be no within current funding and compute scales, even at the commercial-frontier funding tier.

This piece walks the Mistral project forensically, situates it as the structural counter-case to the consortium answer, and closes with what the four-way comparison demonstrates about the European sovereign-LLM strategic landscape as of mid-May 2026. The standard caveat applies: Mistral is operating at high velocity. The strategic assessment may shift materially when the next model generation ships, or when Mistral’s data center buildout completes, or when the company’s commercial trajectory either accelerates or hits a structural ceiling.

Mistral. The fourth path.

€3B+ raised, $400M ARR, six products in fifteen days. And independent benchmarks still put Mistral Large 3 well behind Gemini 3 Pro, GPT-5.4, and Claude Opus 4.6 on the hardest reasoning tasks.

Italy bet national. Portugal bet continuation. The EU bet consortium. Mistral bet venture-funded commercial-frontier. By every operational measure, Mistral is Europe’s strongest single-firm AI play: $400M ARR, ASML as largest shareholder at 11%, Apache 2.0 across nearly the entire catalog, $830M raised in March 2026 for new data centers near Paris and in Sweden. And the empirical results still show the commercial-frontier path operating at the same structural ceiling all other European projects encounter. Four projects. Four findings. Each one harder than the framing it’s wrapped in.

Three years. €3B+ raised.

Mistral’s funding trajectory is operationally important because it demonstrates the commercial-frontier path at scale. This is not consortium-budget scale. European venture capital, augmented by strategic-investor capital from European industrial actors and US venture funds, can sustain frontier-AI development.


44% vs 91.9%. The bitter lesson in commercial-frontier context.

Mistral Large 3 was trained from scratch on 3,000 NVIDIA H200 GPUs. It is Mistral’s most ambitious training run to date and Europe’s strongest single-firm frontier-class model. Independent benchmarks from LayerLens/Atlas show the structural gap with US frontier developers on the hardest reasoning tasks.

[Chart: Mistral Large 3 vs Gemini 3 Pro vs frontier class]


Six products. Fifteen days.

Between March 16 and March 31, 2026, Mistral shipped six products. This product cadence is structurally distinct from how the academic-and-state answers operate. OpenEuroLLM shipped two deliverables in the entirety of 2025. The commercial-frontier model’s strategic advantage is velocity.

[Stat cards: 675B total parameters · from-scratch training · LMArena ranking]


Four answers. Four structural findings.

The Minerva national from-scratch path. The AMÁLIA national continuation path. The OpenEuroLLM pan-European consortium path. The Mistral commercial-frontier path. Together they map the European sovereign-LLM strategic option space comprehensively. Each surfaces an empirical complication the marketing materials downplay.

Four projects. Four findings. Each one harder than the framing it’s wrapped in. The frontier-capability gap appears to be structural to current European funding and compute scales, not to institutional choices. Even the strongest commercial-frontier model with substantially more capital than the others combined trails US frontier developers on the hardest benchmarks.


Five observations. The track closes.

The four-way essay track produces strategic recommendations grounded in operational realities. This is not a counsel of despair. It is a counsel of strategic clarity for European sovereign-AI development.

The work is real across all four projects. The institutional achievement is substantial across all four. The empirical findings are harder than the press coverage suggests across all four. All of these can be true at once. The strategic discourse benefits from holding all of them simultaneously rather than collapsing into single-answer triumphalism or single-failure pessimism. The European sovereign-AI agenda has reached its empirical ground-truth moment. The discourse should be ready for whatever the data actually shows.

I · What Mistral actually is · the institutional and technical foundation

The factual baseline before the structural argument. From Mistral’s official announcements, the Wikipedia historical timeline, Built In’s profile coverage, Serenities AI’s verified analysis, and the broader public record.

The founding architecture

Mistral AI SAS is a French artificial intelligence company headquartered in Paris, founded April 2023 by three French researchers who met at École Polytechnique:

- Arthur Mensch (CEO) — former Google DeepMind, expert in advanced AI systems

- Guillaume Lample — former Meta Platforms, large-scale AI models specialist

- Timothée Lacroix — former Meta Platforms, large-scale AI models specialist

The company is named after the mistral, a powerful cold wind in southern France. The naming choice is structurally significant — it explicitly positions the company as European-rooted rather than as a generic global AI startup. Mensch’s DeepMind background and Lample/Lacroix’s Meta background represent direct talent extraction from the two most significant US AI labs of the prior generation. This is part of the structural argument: Mistral demonstrated that European AI talent could be retained in Europe given sufficient venture-capital backing and clear strategic positioning.

The funding trajectory

The Mistral funding history is operationally important because it demonstrates the commercial-frontier path at scale. From Wikipedia and Built In’s coverage:

- June 2023 · €105M ($117M) seed round · Lightspeed Venture Partners, Eric Schmidt, Xavier Niel

- December 2023 · €385M ($428M) Series A · Andreessen Horowitz, BNP Paribas, Salesforce · €2B valuation

- February 2024 · $16M Microsoft strategic investment

- June 2024 · €600M ($645M) round led by General Catalyst · €5.8B ($6.2B) valuation

- April 2025 · €100M partnership with CMA CGM (shipping)

- August 2025 · Financial Times reports talks for $1B raise at $10B valuation

- September 2025 · €2B investment at €12B ($14B) valuation · ASML investment of $1.5B for 11% · ASML becomes largest shareholder · ASML CFO Roger Dassen joins Mistral’s strategic committee

- March 2026 · $830M raised for new data centers near Paris and in Sweden

Cumulative capital raised: well over €3B / $3.5B+ in approximately three years. This is not consortium-budget scale. For comparison, OpenEuroLLM’s total budget for model-building is €37.4M — approximately 1% of what Mistral has raised. The commercial-frontier path operates at scale that academic-and-state answers structurally cannot access within current European public funding frameworks.
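Summing the rounds listed above is a quick way to check the "well over €3B" and "approximately 1%" figures. This is a minimal sketch with two assumptions. First, EUR and USD rounds are kept in separate buckets, because the essay gives no exchange rate. Second, the €100M CMA CGM deal is treated as a commercial partnership rather than an equity round, so it is left out. The dictionary labels are illustrative.

```python
# Funding rounds from the list above, in millions of the original currency.
# Assumption: EUR and USD rounds are summed separately (no exchange rate given),
# and the €100M CMA CGM partnership is treated as commercial, not equity.
EUR_ROUNDS_M = {
    "2023-06 seed": 105,
    "2023-12 Series A": 385,
    "2024-06 General Catalyst round": 600,
    "2025-09 ASML-anchored round": 2_000,
}
USD_ROUNDS_M = {
    "2024-02 Microsoft strategic investment": 16,
    "2026-03 data-center raise": 830,
}
OPENEUROLLM_MODEL_BUDGET_EUR_M = 37.4


def total_m(rounds: dict[str, float]) -> float:
    """Sum a dictionary of round sizes expressed in millions."""
    return sum(rounds.values())


eur_total = total_m(EUR_ROUNDS_M)
usd_total = total_m(USD_ROUNDS_M)
# OpenEuroLLM's model-building budget relative to the EUR-denominated rounds alone.
openeurollm_share = OPENEUROLLM_MODEL_BUDGET_EUR_M / eur_total

print(f"EUR rounds: €{eur_total:,.0f}M, plus USD rounds: ${usd_total:,.0f}M")
print(f"OpenEuroLLM budget vs EUR rounds alone: {openeurollm_share:.1%}")
```

The EUR-denominated rounds alone come to €3.09B. Against that total, the OpenEuroLLM budget is about 1.2%, so the "approximately 1%" framing holds before any USD-denominated money is counted.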

The commercial trajectory

Per Arthur Mensch’s CEO statements reported by Serenities AI, Mistral’s annual recurring revenue (ARR) hit $400 million in January 2026, up from approximately $20 million one year earlier. This is 20x year-over-year revenue growth at material scale. Named enterprise customers include:

- ASML (Dutch semiconductor lithography giant · also largest shareholder)

- ESA (European Space Agency)

- CMA CGM (French shipping multinational · €100M partnership announced April 2025)

- Le Chat consumer product · Pro subscription tier at $14.99/month · iOS and Android apps released February 2025 · “1 million app downloads in its first 14 days” per Built In

The combination of enterprise contracts, consumer subscription revenue, and API/Platform revenue produces a multi-pillar commercial trajectory that’s structurally different from the single-pillar institutional approaches the consortium and national projects take.
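The 20x figure can be restated as an equivalent compounded monthly growth rate, which makes it easier to compare with other companies' run-rate disclosures. This is a minimal sketch that treats the ~$20M starting point as exact. The source gives it only as an approximation, so the monthly rate is indicative.

```python
# ARR figures as reported (USD millions); the starting value is approximate.
ARR_START_USD_M = 20.0   # roughly January 2025
ARR_END_USD_M = 400.0    # January 2026
MONTHS = 12

multiple = ARR_END_USD_M / ARR_START_USD_M
yoy_growth_pct = (multiple - 1) * 100
# Constant monthly rate r such that (1 + r) ** MONTHS == multiple.
monthly_rate = multiple ** (1 / MONTHS) - 1

print(f"{multiple:.0f}x year over year ({yoy_growth_pct:,.0f}% growth)")
print(f"Equivalent compounded monthly growth: {monthly_rate:.1%}")
```

Twenty-fold annual growth works out to roughly 28% compounded month over month.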

The technical scope · Mistral Large 3 and Ministral 3

The December 2, 2025 model release is structurally important because it represents Mistral’s first frontier-class training run after the major 2025 capital raises. From the official Mistral 3 announcement:

Mistral Large 3 (released December 2, 2025):

- Mixture-of-experts architecture · Mistral’s first MoE since the seminal Mixtral series

- 41 billion active parameters / 675 billion total parameters

- Trained from scratch on 3,000 NVIDIA H200 GPUs · the largest training run in Mistral’s history

- 256K context window

- #2 in the OSS non-reasoning category on the LMArena leaderboard (#6 among OSS models overall) at release

- Apache 2.0 license · permissive open weights

- Native multimodal (text + image understanding)

- Multilingual coverage of approximately 40 languages in total, including French, German, Spanish, Italian, Dutch, Portuguese, Hindi, and Arabic

- Available on Mistral AI Studio, Amazon Bedrock, Azure Foundry, Hugging Face, IBM WatsonX, OpenRouter, Fireworks, Together AI, NVIDIA NIM
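The 41B-active / 675B-total split explains why a mixture-of-experts model is cheap to run per token but heavy to host. Each token passes through only a subset of experts, yet every expert's weights must stay loaded.

The back-of-the-envelope sketch below rests on two assumptions. It assumes BF16 weights (2 bytes per parameter), which the announcement does not state. It also uses the common approximation of ~2 FLOPs per active parameter per token, which ignores attention cost over long contexts.

```python
TOTAL_PARAMS = 675e9   # all experts plus shared layers
ACTIVE_PARAMS = 41e9   # parameters touched per token

# Fraction of the network that does work on any single token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Rule of thumb: one multiply and one add per active parameter per token.
# Ignores attention over the context window, so it understates long-context cost.
flops_per_token = 2 * ACTIVE_PARAMS

# Assumption: BF16 weights. Memory scales with TOTAL params, because
# every expert must be resident even though few are used per token.
BYTES_PER_PARAM = 2
weight_memory_gb = TOTAL_PARAMS * BYTES_PER_PARAM / 1e9

print(f"Active fraction per token: {active_fraction:.1%}")
print(f"Approx. forward-pass compute: {flops_per_token / 1e9:.0f} GFLOPs per token")
print(f"Weight memory at BF16: ~{weight_memory_gb:,.0f} GB")
```

Roughly 6% of the parameters are active per token. Under the BF16 assumption, the weights alone still come to about 1.35 TB, which bears directly on the self-hosting argument in the licensing section.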

Ministral 3 family (released December 2, 2025 alongside Large 3):

- Three dense model sizes: 3B, 8B, 14B parameters

- Three variants per size: base, instruct, reasoning · 9 models total

- All Apache 2.0 license

- All multimodal (image understanding native)

- 14B reasoning variant: 85% on AIME 2025 · beats Qwen-14B’s 73.7% · “one of the best small reasoning models available at any price”

- Optimized for edge deployment on NVIDIA DGX Spark, RTX PCs, Jetson devices
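The Ministral 3 family is a three-by-three grid of sizes and variants. The minimal sketch below enumerates it. The name strings are hypothetical labels for illustration, not official model or repository IDs.

```python
from itertools import product

SIZES_B = (3, 8, 14)                          # dense parameter counts, in billions
VARIANTS = ("base", "instruct", "reasoning")

# Hypothetical naming scheme, for illustration only.
catalog = [f"ministral-3-{size}b-{variant}" for size, variant in product(SIZES_B, VARIANTS)]

print(len(catalog))  # 9
for name in catalog:
    print(name)
```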

The March 2026 product cadence

Between March 16 and March 31, 2026, Mistral shipped six products in 15 days per Serenities AI’s verified analysis:

- Mistral Small 4 · unified reasoning model · $0.15/M input tokens (5x cost advantage vs GPT-5.4 Mini at $0.75/M)

- Voxtral TTS · open-weight text-to-speech · built on Ministral 3B · 9 languages · zero-shot voice cloning with 3 seconds of reference audio · 70ms model latency on H200 · 73% cheaper than ElevenLabs

- Leanstral · formal proof agent · mathematical correctness for formal verification

- Forge · enterprise training platform · launched with ASML and ESA as customers

- Spaces CLI · developer command-line interface for production deployment

- NVIDIA Nemotron Coalition · founding partnership role announced

This product cadence is structurally distinct from how the academic-and-state answers operate. OpenEuroLLM shipped two deliverables in the entirety of 2025. Mistral shipped three times that number of products in fifteen days. The commercial-frontier model’s strategic advantage is velocity, and Mistral’s March 2026 cadence is the operational demonstration.
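The two windows differ in length, so the comparison reads more easily once both are normalized to a common period. This rough sketch uses the essay's own counts. It treats a Mistral "product" and an OpenEuroLLM "deliverable" as equivalent units, which they are not, so the ratio is an order-of-magnitude signal rather than a measurement.

```python
def per_30_days(count: int, window_days: int) -> float:
    """Normalize a release count to a 30-day rate."""
    return count / window_days * 30


# Counts and windows as stated in the essay.
mistral_rate = per_30_days(count=6, window_days=15)        # March 16-31, 2026
openeurollm_rate = per_30_days(count=2, window_days=365)   # calendar year 2025

print(f"Mistral: {mistral_rate:.1f} releases per 30 days")
print(f"OpenEuroLLM: {openeurollm_rate:.2f} deliverables per 30 days")
print(f"Ratio: ~{mistral_rate / openeurollm_rate:.0f}x")
```

On these counts the cadence gap is roughly 73x.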

The licensing posture · Apache 2.0 as commercial strategy

Mistral releases nearly its entire product line under Apache 2.0, one of the most permissive widely used open-source licenses. Mistral Large 3, the entire Ministral 3 family (9 models), Mistral Small 4, Leanstral, Devstral 2 / Devstral Small 2 (released December 10, 2025), and most prior generations are all Apache 2.0. Voxtral TTS is the notable exception, with separate licensing terms.

This is structurally important because it inverts the typical commercial AI lab posture. OpenAI, Anthropic, and Google Gemini keep their weights proprietary. Meta’s Llama uses a custom restrictive license. Mistral’s Apache 2.0 commitment means enterprises can self-host without vendor lock-in, regulators can verify compliance, fine-tuners can adapt models freely, and EU sovereignty requirements (GDPR, AI Act) can be satisfied through on-premise deployment. This is the commercial expression of the same operational-openness commitment that Minerva established at the academic-research level.

The Apache 2.0 commitment is also Mistral’s competitive moat against OpenAI/Anthropic/Google. US frontier developers cannot match this licensing posture without abandoning their proprietary-API revenue model. For European enterprises with data-sovereignty requirements, Apache 2.0 Mistral models are structurally superior to proprietary US alternatives regardless of raw capability rankings — because the deployment model is fundamentally different.

The pricing posture · price-performance as positioning

From PricePerToken’s pricing analysis (May 10, 2026):

- Mistral Nemo · $0.02 input / $0.04 output per 1M tokens · cheapest in catalog

- Ministral 3 3B · $0.10 input/output

- Mistral Small 3.1 24B · $0.10 / $0.30

- Mistral Small 4 · $0.15 input

- Mistral Medium 3 · $0.40 / $2.00

- Mistral Large 3 · $0.50 / $1.50 · “among the most cost-competitive frontier models”

For comparison, GPT-5.4 Mini is $0.75/M input, so Mistral Small 4 is approximately 5x cheaper on input tokens at a comparable capability tier. The price-performance positioning is operationally significant for the European sovereign-AI agenda specifically: cost-effective frontier-class AI accessible to European SMEs and public-sector institutions is exactly what the broader strategic discourse calls for. Mistral is delivering on price-performance positioning that the consortium and national projects do not yet match operationally.
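Per-token prices only become meaningful against a workload. This minimal sketch assumes a hypothetical 2 billion input tokens per month and uses only the input-side list prices quoted above. Output-token pricing for Mistral Small 4 is not given here, so a real comparison would need both sides of the bill.

```python
# Input-side list prices in USD per 1M tokens, as quoted above (May 10, 2026).
INPUT_PRICE_PER_M = {
    "Mistral Small 4": 0.15,
    "GPT-5.4 Mini": 0.75,
}


def monthly_input_cost(price_per_m: float, tokens_per_month: int) -> float:
    """USD cost of a month's input tokens at a per-1M-token list price."""
    return price_per_m * tokens_per_month / 1_000_000


TOKENS_PER_MONTH = 2_000_000_000  # hypothetical workload

costs = {
    model: monthly_input_cost(price, TOKENS_PER_MONTH)
    for model, price in INPUT_PRICE_PER_M.items()
}
for model, cost in costs.items():
    print(f"{model}: ${cost:,.0f} per month (input only)")
print(f"Cost ratio: {costs['GPT-5.4 Mini'] / costs['Mistral Small 4']:.1f}x")
```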

II · The commercial-frontier strategic bet · what Mistral actually commits to

The Mistral architectural choice deserves explicit articulation as a strategic bet, because it organizes everything else about the company.

The four paths · structurally articulated

European sovereign-LLM development has, with Mistral, four primary architectural and institutional approaches. From the prior essays:

- The continuation approach (AMÁLIA) · continue pre-training of multilingual foundation with native-language data · national institutional · €5.5M scale

- The from-scratch approach (Minerva) · train new model from random initialization on native-language-heavy data · national institutional · ~€100M+ scale

- The consortium approach (OpenEuroLLM) · pool resources across 20+ institutions and supranational EU funding · €37.4M scale · academic-and-state institutional

- The commercial-frontier approach (Mistral) · venture-funded private company building frontier-class models for commercial deployment · €3B+ raised · commercially institutional

The bet: frontier-class European AI requires commercial-scale capital, commercial-execution velocity, and commercial-license terms that academic-and-state institutions structurally cannot provide.

Each approach has real trade-offs. The commercial-frontier approach risks three things:

- (a) eventual acquisition by, or deep strategic dependence on, US or Chinese strategic actors, following the Silo AI / AMD acquisition or the Anthropic / Amazon and OpenAI / Microsoft strategic-investment pattern
- (b) commercial pressure to compromise on openness or sovereignty commitments under capital-allocation stress
- (c) a frontier-capability gap with better-funded US competitors that may remain structurally insurmountable at current funding scales

The commercial-frontier approach benefits: (a) access to capital scales that academic-and-state answers cannot match (€3B+ vs €37.4M for OpenEuroLLM), (b) execution velocity that academic-and-state answers cannot match (6 products in 15 days vs 2 deliverables in a year), (c) commercial product-market discipline that academic-and-state answers structurally don’t optimize for, (d) revenue trajectory ($400M ARR) that demonstrates commercial sustainability independent of public funding.

Mistral and OpenEuroLLM together bracket the strategic option space for European AI development. Mistral represents the commercial-frontier extreme. OpenEuroLLM represents the academic-and-state-consortium extreme. The deeper structural question is whether either extreme — or some combination — produces results that justify the European sovereign-AI agenda’s ambitions.

What Mistral committed to operationally

Mistral’s commitment to the commercial-frontier path shows up in every operational choice:

Capital strategy: Aggressive Series-after-Series fundraising to maintain frontier-compute access. €105M → €385M → €600M → €2B → $830M across approximately three years. This trajectory is sustainable only as long as the venture capital and strategic investor capital flows continue. The ASML 11% stake at $1.5B-$1.9B is the structurally most important signal — it represents European industrial capital recognizing Mistral as the European AI champion worth strategic concentration.
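The stake size and the investment amount together imply a post-money valuation. That gives a quick consistency check against the €12B ($14B) figure in the funding timeline. This sketch assumes the full 11% was bought in the round at the round price; secondary purchases or other deal terms would change the arithmetic.

```python
ASML_STAKE = 0.11  # share of Mistral reported as held by ASML

# Both ends of the investment range cited in this essay, in USD billions.
INVESTMENT_RANGE_BUSD = (1.5, 1.9)

# If the whole stake was bought at the round price, valuation = investment / stake.
implied_post_money_busd = {
    investment: investment / ASML_STAKE for investment in INVESTMENT_RANGE_BUSD
}

for investment, valuation in implied_post_money_busd.items():
    print(f"${investment}B for 11% implies ~${valuation:.1f}B post-money")
```

The $1.5B end implies roughly $13.6B, in line with the ~$14B valuation. The $1.9B end would imply about $17.3B, so under this assumption the lower figure is the one consistent with the reported round.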

Compute strategy: Direct hardware partnerships with NVIDIA (Mistral 3 family trained on Hopper / H200 / Blackwell GPUs). The March 2026 $830M raise specifically targets new data centers near Paris and in Sweden. That is vertical integration into compute infrastructure rather than dependence on cloud providers or EuroHPC allocations. This is the commercial-frontier path’s structural advantage over consortium and national approaches: Mistral can commit to its own compute infrastructure rather than competing with other projects for shared HPC allocations.

Product strategy: Multi-pillar revenue (enterprise contracts, consumer subscription, API/Platform) with explicit price-performance positioning against US frontier developers. The Apache 2.0 licensing commitment is itself a product strategy — it differentiates Mistral from OpenAI/Anthropic/Google in the European enterprise market and creates a structural moat that US frontier developers cannot easily neutralize without abandoning their proprietary revenue models.

Talent strategy: European AI researchers retained in Europe through founder-employee equity, Paris headquartering, and explicit European-rooted brand positioning. Mistral demonstrates that European AI talent does not require US relocation if European venture capital exists at sufficient scale to retain it commercially. This is the talent-retention argument that no other European AI actor can currently make at Mistral’s scale.

Velocity strategy: Six products in 15 days during March 2026 is not normal AI lab cadence. It is operationally exceptional. Mistral has structured its engineering organization for high-velocity multi-product release, which produces compounding strategic advantages: more products → more enterprise contracts → more revenue → more capital → more products. The velocity flywheel is part of the commercial-frontier path’s structural advantage.

The funding and institutional choice

Mistral’s funding architecture is structurally distinct from every other European sovereign-LLM project. It is not institutional in the European public-sector sense. It is venture-funded, strategic-investor-anchored (ASML), and commercially disciplined. The institutional model is an American-style AI lab structure operated in European geography under the European regulatory framework. This is structurally important because it answers the question of whether European institutional structures can produce frontier-class AI labs. The answer is yes, but only if the imported institutional structure is the American venture-capital-funded private company, operated within European geographic and regulatory boundaries.

The deeper structural question: does Mistral’s commercial-frontier model count as a European sovereign-AI answer, or is it structurally a US-style AI company that happens to be headquartered in Paris? This is contested. Mistral’s defenders argue that European headquartering, French founder team, ASML strategic investment, and explicit European-rooted brand positioning make it operationally European. Mistral’s skeptics argue that the institutional model, capital sources (significant US VC participation), and commercial trajectory make it structurally identical to a US frontier developer that happens to be in France geographically.

Both arguments are partially correct. Mistral is the most operationally European of the commercial-frontier AI labs and the most commercially-structured of the European AI projects. It bridges the categories rather than fitting cleanly into either.

III · The benchmark reality · the structural complication

Now the part of the Mistral story that the price-performance and Apache-2.0 framing tends to elide. This is not a critique of Mistral. It is a critique of the public discourse around what Mistral’s empirical results actually demonstrate at the frontier capability level.

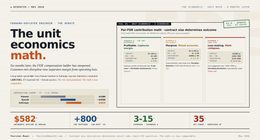

The independent benchmark results

From Serenities AI’s verified analysis of Mistral Large 3 using independent evaluations from LayerLens/Atlas:

- MMLU-Pro: 73.11% (LayerLens/Atlas)

- MATH-500: 93.60% (LayerLens/Atlas)

- AIME 2025 (per Atlas independent eval): ~40% (non-reasoning model)

- GPQA Diamond (per Atlas independent eval): ~44% (non-reasoning model)

Compare to publicly reported frontier-developer scores on the same benchmarks:

- Gemini 3 Pro · GPQA Diamond: 91.9% (per Serenities AI analysis)

- GPT-5.4 · GPQA Diamond: market-leading category

- Claude Opus 4.6: market-leading reasoning category

This is not a marginal gap. Mistral Large 3 at ~44% on GPQA Diamond vs Gemini 3 Pro at 91.9% is a difference of roughly 48 percentage points on the hardest reasoning benchmark in standard use. Part of that gap may reflect comparing a non-reasoning model against a reasoning model, which is why the expected Large 3 reasoning variant matters. For frontier-capability tasks, the gap between Mistral Large 3 and the US/Chinese frontier developers is structurally substantial.
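A percentage-point gap and an error-rate gap tell different stories about the same two scores. The small sketch below uses the approximate figures above. The ~44% is itself an approximation, and the 25% chance baseline assumes GPQA Diamond's four-option multiple-choice format.

```python
MISTRAL_LARGE_3 = 44.0   # GPQA Diamond, approximate (non-reasoning model)
GEMINI_3_PRO = 91.9      # GPQA Diamond, as cited
CHANCE = 25.0            # assumption: four answer options per question

gap_pp = GEMINI_3_PRO - MISTRAL_LARGE_3

# Error rates: share of questions answered incorrectly.
mistral_errors = 100.0 - MISTRAL_LARGE_3
gemini_errors = 100.0 - GEMINI_3_PRO
error_ratio = mistral_errors / gemini_errors

# Margin above random guessing.
mistral_above_chance = MISTRAL_LARGE_3 - CHANCE
gemini_above_chance = GEMINI_3_PRO - CHANCE

print(f"Gap: {gap_pp:.1f} percentage points")
print(f"Errors: {mistral_errors:.1f}% vs {gemini_errors:.1f}% ({error_ratio:.1f}x)")
print(f"Above chance: {mistral_above_chance:.1f} vs {gemini_above_chance:.1f} points")
```

In error-rate terms, Large 3 gets roughly 6.9 times as many questions wrong. Measured above chance, Gemini 3 Pro's margin is roughly 3.5 times Large 3's.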

The Ministral 14B reasoning variant is the exception. It scores 85% on AIME 2025 — competitive with much larger reasoning models and ahead of Qwen-14B at 73.7%. This is genuinely state-of-the-art for small reasoning models at any price. But the 14B reasoning variant is small — it is not Mistral’s frontier-class model. Mistral’s frontier capability (Large 3) lags US frontier developers; Mistral’s small-model capability (Ministral 14B reasoning) leads its class.

The output speed reality

Per Artificial Analysis, Mistral Large 3 produces approximately 38 tokens per second — “notably slow for its class — the tradeoff for 675B total parameters.” Frontier developers’ equivalent-class models produce substantially higher throughput. This is operationally meaningful for production deployment — slower output speed compounds with API cost considerations in a way that price-performance positioning at the per-token level doesn’t fully capture.
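What 38 tokens per second means in practice depends on response length. This minimal sketch computes pure generation time only. It assumes a steady decode rate and ignores time-to-first-token and network overhead, both of which add to real latency. The response lengths are hypothetical.

```python
TOKENS_PER_SECOND = 38  # Artificial Analysis output-speed figure cited above

# Hypothetical response lengths, from a short answer to a long report.
RESPONSE_LENGTHS = (250, 1_000, 4_000)

generation_seconds = {
    tokens: tokens / TOKENS_PER_SECOND for tokens in RESPONSE_LENGTHS
}
for tokens, seconds in generation_seconds.items():
    print(f"{tokens:>5} tokens -> {seconds:5.1f} s of generation")
```

A 1,000-token answer takes roughly 26 seconds of generation alone.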

What this empirical reality implies

Three implications worth surfacing:

Implication 1 · The commercial-frontier path is operating at the same structural ceiling. Each of the prior three European sovereign-LLM essays in this track surfaced an empirical complication: Minerva’s INVALSI 4.9%, AMÁLIA’s 5.5% pt-PT share, OpenEuroLLM’s Hajič compute statement. Mistral’s complication is the GPQA Diamond / AIME 2025 gap with US frontier developers. Each of the four European answers, examined honestly, surfaces a finding the marketing materials downplay. Mistral is operationally Europe’s strongest single-firm AI play AND still operating below US frontier capability at the highest end. Both can be true at once.

Implication 2 · Apache 2.0 + price-performance + commercial velocity may be sufficient for the European market even if it isn’t sufficient for the frontier benchmark. This is the structural argument for the commercial-frontier path. Mistral doesn’t need to beat Gemini 3 Pro on GPQA Diamond to be the right answer for European enterprises that need GDPR-compliant, EU-hosted, self-hostable, Apache 2.0 frontier-class AI. The European market structurally values openness, sovereignty, and price-performance in ways the global frontier benchmark race does not capture. Mistral may be winning the right race, which is not the same race US frontier developers are running.

Implication 3 · Position 1 (frontier-match on overall capability) may be unachievable at any current European institutional scale. This is the structural finding that the four-way essay track has built toward. The consortium answer (OpenEuroLLM) is compute-constrained per Hajič’s March 2026 statement. The national from-scratch answer (Minerva) demonstrates scaling limits at 3B parameters per the INVALSI finding. The national continuation answer (AMÁLIA) is structurally below threshold at 5.5% pt-PT in 107B tokens. The commercial-frontier answer (Mistral) has substantially more capital than the others combined and still trails US frontier developers on the hardest benchmarks. The implication: frontier-match positioning may simply not be available to European AI development at current funding and compute scales, regardless of institutional model.

What the press coverage gets right · and what it understates

Mainstream Mistral coverage tends to emphasize the institutional achievement (Series rounds, valuations, ASML investment, European AI champion status) and the operational achievement (March 2026 product cadence, Apache 2.0 licensing, $400M ARR). These are real and important. Some independent analyses note the frontier-benchmark gap honestly. Most coverage tends to elide it.

This is the same discourse pattern I documented in the AMÁLIA, Minerva, and OpenEuroLLM essays. Press coverage of European AI projects emphasizes the institutional achievements (real and important) without surfacing the technical findings that complicate the simple narrative. Mistral’s GPQA Diamond / AIME 2025 gap is to Mistral what the INVALSI 4.9% finding is to Minerva, the 5.5% pt-PT share is to AMÁLIA, and the Hajič compute statement is to OpenEuroLLM.

Four projects. Four findings. Each one harder than the framing it’s wrapped in.

IV · The four-way structural comparison · the European sovereign-LLM landscape

With Mistral now documented at the same level of detail as the prior three essays, the four-way structural comparison becomes possible.

The institutional and resource comparison

| Dimension | Minerva (Italy) | AMÁLIA (Portugal) | OpenEuroLLM (Pan-EU) | Mistral (France) |
|---|---|---|---|---|
| Strategic answer | National from-scratch | National continuation | Pan-European consortium | Commercial-frontier |
| Institutional model | Academic (Sapienza NLP + FAIR) | Academic consortium | Academic-and-state consortium | Venture-funded private company |
| Lead | Roberto Navigli | (consortium-led) | Hajič + Sarlin | Mensch + Lample + Lacroix |
| Funding | PNRR via MUR · large national | €5.5M Portuguese gov | €37.4M EU (€20.6M DEP) | €3B+ venture capital |
| Largest investor | Italian state | Portuguese state | EU + member states | ASML at $1.5-1.9B / 11% |
| Revenue | None (academic) | None (academic) | None (research consortium) | $400M ARR (Jan 2026) |
| Flagship model | Minerva-7B (Nov 2024) | AMÁLIA base (Sep 2025) | First models (Jul 2026 target) | Mistral Large 3 (Dec 2025) |
| Parameters (flagship) | 7.4B dense | (EuroLLM derivative) | TBD · 8B planned summer 2026 | 41B active / 675B total MoE |
| Architecture | Mistral arch · custom IT tokenizer | EuroLLM continuation | From scratch · methodology developing | MoE · trained from scratch |
| Compute (flagship) | 128 GPUs Leonardo · weeks | Not publicly detailed | 4.5M+ GPU hours across 4 EuroHPC | 3,000 NVIDIA H200 GPUs |
| Languages | Italian + English (2.5T tokens) | Portuguese (5.8B clearly pt-PT) | 35 target languages | 40+ languages |
| Licensing | Truly-open (weights + data + code) | Partially open | Truly-open with EU-copyright caveats | Apache 2.0 (weights · methodology proprietary) |
| Product cadence | Iterative academic releases | Single model + extensions | Slow consortium cadence | 6 products in 15 days March 2026 |
| Structural finding | Minerva-3B 4.9% on INVALSI | 5.5% pt-PT in pt-PT-priority model | Hajič: “more compute still remain” | ~40% AIME 2025 · ~44% GPQA Diamond |

Each project surfaces an empirical complication the press coverage downplays. Each operates at a different scale of capital, compute, and institutional ambition. None of the four answers is the answer. Each of them is an answer for a specific positioning and resource context.
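The figures this essay uses are enough to check the claim that Mistral has substantially more capital than the other three combined. One caveat applies to the sketch below: both Minerva's ~€100M+ and Mistral's €3B+ are lower bounds, so the resulting multiple is indicative rather than precise.

```python
# Capital figures in EUR millions, as cited in this essay track.
# Minerva's "~€100M+" and Mistral's "€3B+" are lower bounds.
OTHERS_EUR_M = {
    "AMÁLIA": 5.5,
    "OpenEuroLLM": 37.4,
    "Minerva": 100.0,
}
MISTRAL_EUR_M = 3_000.0

others_combined = sum(OTHERS_EUR_M.values())
multiple = MISTRAL_EUR_M / others_combined

print(f"Minerva + AMÁLIA + OpenEuroLLM: ~€{others_combined:.1f}M")
print(f"Mistral relative to all three combined: ~{multiple:.0f}x")
```

On these figures, Mistral has raised roughly 21 times what the other three projects have combined.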

The strategic-positioning comparison

In the four-position framework from the AMÁLIA essay, with Mistral now added:

| Project | Position 1 (frontier-match) | Position 2 (sovereignty/openness) | Position 3 (country-knowledge depth) | Position 4 (vertical specialization) |
|---|---|---|---|---|
| Minerva | Not targeted | ✓ Operational (truly-open) | ✓ Strong commitment, scaling-limited at 7B | Not primary path |
| AMÁLIA | Not targeted | Partial | Partial (5.5% share insufficient) | Not primary path |
| OpenEuroLLM | Stated goal · compute-constrained | ✓ Strong commitment (EU AI Act compliance) | Targeted across 35 languages | Not primary path |
| Mistral | Strongest attempt · still trails US frontier developers | ✓ Apache 2.0 + EU-hosted + GDPR | Partial (40+ languages · not country-knowledge-depth focused) | ✓ Multiple verticals via Forge, Devstral, Voxtral, Leanstral |

Mistral is the only European AI actor genuinely pursuing Position 1 (frontier-match on overall capability) at the institutional scale required to compete with US frontier developers. It is also the only European AI actor covering all four positions at once, even if its Position 3 coverage is only partial. This is the structural argument for the commercial-frontier path: it is the only European institutional model that scales to multi-position competitive coverage.

It is also the structural argument for why the commercial-frontier path may not be sufficient. Mistral’s Position 1 attempt produces ~44% GPQA Diamond vs Gemini 3 Pro’s 91.9%. Even the strongest European institutional model, operating with substantially more capital than any consortium or national project, does not currently produce frontier-match capability at the highest end. The European sovereign-AI strategic discourse needs to internalize this empirical reality rather than continuing to frame the question as “which institutional model produces frontier-class results.”
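To make the size of that gap concrete, the short sketch below computes it from the two GPQA Diamond figures quoted in this essay. It treats Mistral's "~44%" as exactly 44.0, an assumption made only for illustration, and the helper name `benchmark_gap` is hypothetical.

```python
def benchmark_gap(challenger: float, leader: float) -> tuple[float, float]:
    """Return (absolute gap in percentage points, challenger score as a fraction of leader)."""
    if leader <= 0:
        raise ValueError("leader score must be positive")
    return leader - challenger, challenger / leader


# GPQA Diamond scores as quoted in this essay; 44.0 stands in for the approximate "~44%".
MISTRAL_LARGE_3 = 44.0
GEMINI_3_PRO = 91.9

gap_points, relative = benchmark_gap(MISTRAL_LARGE_3, GEMINI_3_PRO)
print(f"Gap: {gap_points:.1f} points; Mistral reaches {relative:.0%} of Gemini 3 Pro's score")
```

On these inputs the gap is roughly 48 percentage points, with Mistral Large 3 scoring less than half of Gemini 3 Pro's result.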

The temporal comparison

All four projects converge on mature operational artifacts in summer 2026:

- Already operational · Mistral Large 3 (December 2025), Minerva-7B (November 2024 + ongoing iteration), AMÁLIA base (September 2025)

- June 2026 · AMÁLIA final version target

- July 31, 2026 · OpenEuroLLM first models deliverable

- Summer 2026 · OpenEuroLLM 8B model target, Mistral Large 3 reasoning variant expected

- Ongoing · Mistral product cadence continues at March 2026 velocity

This is the strategic moment for the European sovereign-LLM movement. For the first time, all four answers have operational artifacts that can be empirically compared on the same benchmarks at the same time. The four-way structural comparison this essay track has built becomes empirically tractable.

V · What the Mistral case demonstrates about the European AI landscape

Five structural observations emerge from the Mistral case that close the four-way essay track.

Observation 1 · European venture capital exists at sufficient scale to support frontier AI

Three years ago, the structural argument against European frontier-AI capability included the claim that European venture capital simply did not exist at the required scale. Mistral’s funding trajectory (€105M to €3B+ in three years, with investors including ASML, Microsoft, Andreessen Horowitz, General Catalyst, Lightspeed, BNP Paribas, and Salesforce) disproves that claim operationally. European venture capital, augmented by strategic capital from European industrial actors (ASML) and by US venture funds, can sustain frontier-AI development at scale. The bottleneck is not capital availability; it is capital allocation to AI specifically.
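The funding trajectory can be expressed as a simple growth multiple. The sketch below uses the essay's figures (€105M at the start, €3B+ cumulative after about three years), treats €3B as a lower bound and assumes an even three-year span; `growth_multiple` is a hypothetical helper, not anything Mistral or its investors publish.

```python
def growth_multiple(start: float, end: float, years: float) -> tuple[float, float]:
    """Return (total multiple, equivalent constant annual multiple) for growth from start to end."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    total = end / start
    annual = total ** (1 / years)  # geometric mean of the yearly multiples
    return total, annual


# Figures in millions of euros, taken from this essay; 3000 is a floor ("€3B+").
total, annual = growth_multiple(start=105, end=3000, years=3)
print(f"Cumulative funding grew about {total:.1f}x, roughly {annual:.2f}x per year")
```

Because €3B is a floor, both the total multiple (about 28.6x) and the annualized multiple (about 3x per year) are lower bounds.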

Observation 2 · European talent retention is achievable at commercial scale

Mensch’s Google DeepMind background and Lample and Lacroix’s Meta background represent talent drawn directly from the two most significant US AI labs of the prior generation. Mistral demonstrates that European AI talent does not need to relocate to the US if European institutions provide sufficient compensation, equity, and strategic ambition. This matters for every subsequent European AI initiative: it answers the talent-retention question that European AI strategy has been preoccupied with for the past decade.

Observation 3 · Apache 2.0 is the European competitive moat against US proprietary alternatives

OpenAI, Anthropic, and Google cannot match Mistral’s Apache 2.0 licensing posture without abandoning their proprietary-API revenue models. For European enterprises with data-sovereignty requirements, Apache 2.0 Mistral models are structurally superior to proprietary US alternatives regardless of raw capability rankings. This competitive moat scales with EU enforcement of the AI Act, GDPR, and data-sovereignty requirements. Europe’s regulatory framework structurally favors Mistral’s licensing model over the proprietary models of US frontier developers: Mistral has positioned itself to win on the dimensions Europe’s regulators are optimizing for.

Observation 4 · The frontier-capability gap is structural, not institutional

The deepest finding from the four-way essay track is that the frontier-capability gap between European AI development and US frontier developers does not appear to be solvable through institutional reorganization. The consortium model is compute-constrained. The national from-scratch model is parameter-scale-constrained. The national continuation model is data-share-constrained. The commercial-frontier model — with substantially more capital, compute, and velocity than the others — is still constrained on frontier benchmarks. This implies the gap is structural to the funding and compute scales currently accessible in Europe, not to the institutional choices made by individual projects. Closing the gap requires either substantially larger European investment in AI specifically, or different strategic positioning that doesn’t require frontier-match capability to deliver value.

Observation 5 · Position 2 + Position 4 may be the strategically correct European positioning

If Position 1 (frontier-match) is structurally unachievable at current European funding scales, the strategic-positioning recommendation that emerges is Position 2 + Position 4:

- Position 2 (sovereignty/openness/compliance) — Europe’s regulatory framework structurally favors this positioning. Apache 2.0 + EU-hosted + GDPR-compliant + AI-Act-aligned models are the European competitive advantage. Mistral is operationally delivering on this positioning.

- Position 4 (vertical specialization) — Europe’s industrial base (semiconductor manufacturing, automotive, pharmaceutical, aerospace, defense, shipping) creates vertical-specialization opportunities that US frontier developers cannot easily address from generic frontier-capability positioning. Mistral is operationally delivering on this through Forge, Devstral, Voxtral, Leanstral, and ASML / ESA / CMA CGM enterprise contracts.

The combined recommendation: stop trying to match US frontier developers on raw capability, and focus on the dimensions where Europe’s regulatory framework and industrial base actually create competitive advantage. This is what Mistral is operationally doing, and the empirical results ($400M ARR, 20x year-over-year growth, named enterprise customers, multi-product velocity) suggest it is working at commercial-frontier scale.
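The "20x year-over-year" figure follows directly from the ARR trajectory cited in this essay (~$20M in January 2025 to $400M in January 2026). The sketch below checks that multiple and, assuming purely for illustration that growth was smooth, converts it into an equivalent monthly rate; real revenue growth is lumpier than this, and `implied_monthly_growth` is a hypothetical helper.

```python
def implied_monthly_growth(start_arr: float, end_arr: float, months: int) -> float:
    """Constant month-over-month growth rate that turns start_arr into end_arr."""
    if start_arr <= 0 or months <= 0:
        raise ValueError("start_arr and months must be positive")
    return (end_arr / start_arr) ** (1 / months) - 1


# ARR in millions of US dollars, per the figures cited in this essay.
START_ARR, END_ARR, MONTHS = 20, 400, 12

multiple = END_ARR / START_ARR
monthly = implied_monthly_growth(START_ARR, END_ARR, MONTHS)
print(f"{multiple:.0f}x year over year, about {monthly:.1%} per month if growth were smooth")
```

A 20x year corresponds to roughly 28% compounded growth every month, which gives a sense of how unusual the reported trajectory is.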

VI · The closing argument · what the four-way essay track demonstrates

Across four standalone essays, this track has documented the operational state of European sovereign-LLM development from four institutional perspectives:

- Essay 01 · AMÁLIA · the national continuation answer · €5.5M Portuguese state investment · 5.5% pt-PT share finding

- Essay 02 · Minerva · the national from-scratch answer · large Italian PNRR investment · Minerva-3B 4.9% INVALSI finding

- Essay 03 · OpenEuroLLM · the pan-European consortium answer · €37.4M EU investment · Hajič compute bottleneck finding

- Essay 04 (this piece) · Mistral · the commercial-frontier answer · €3B+ venture-funded investment · GPQA Diamond gap finding

Each answer is valid for its specific positioning and resource context. Each surfaces an empirical complication the marketing materials downplay. None of the four is the answer in the abstract. Together they map the European sovereign-LLM strategic option space comprehensively.

The deepest structural finding the track produces: the frontier-capability gap between European AI development and US frontier developers appears to be structural to current European funding and compute scales, not to the institutional choices made by individual projects. Mistral has substantially more capital than the other three answers combined. Mistral still trails US frontier developers on the hardest benchmarks. This is the empirical reality the European strategic discourse should internalize.
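The capital comparison can be checked against the figures quoted in this track. The sketch below sums the two public budgets the essays quantify (€5.5M for AMÁLIA, €37.4M for OpenEuroLLM) and treats Mistral's €3B+ as a floor. Because Minerva's PNRR funding is not quantified here, it computes how large that budget would have to be to overturn the comparison rather than guessing a value. Variable names are illustrative.

```python
# Public funding figures in millions of euros, as cited across this essay track.
# Minerva's PNRR funding is not quantified in these essays, so it is left out.
KNOWN_PUBLIC_FUNDING = {"AMÁLIA": 5.5, "OpenEuroLLM": 37.4}
MISTRAL_CAPITAL = 3000.0  # lower bound ("€3B+")

known_total = sum(KNOWN_PUBLIC_FUNDING.values())
ratio = MISTRAL_CAPITAL / known_total

# Mistral out-raises all three only if Minerva's budget stays below this threshold.
minerva_threshold = MISTRAL_CAPITAL - known_total

print(f"Known public funding: €{known_total:.1f}M; Mistral has at least {ratio:.0f}x that")
print(f"The comparison flips only if Minerva received at least €{minerva_threshold:,.1f}M")
```

The threshold, close to €3B, is the number any published Minerva budget figure can be checked against.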

This is not a counsel of despair. It is a counsel of strategic clarity. The implications:

- The European sovereign-AI agenda should explicitly deprioritize Position 1 (frontier-match) as a primary success metric. The empirical evidence across all four institutional models suggests this position is not achievable at current European investment scales. Continuing to frame the strategic discourse around “Europe needs its own ChatGPT” misallocates strategic attention.

- Position 2 (sovereignty/openness/compliance) is Europe’s structural competitive advantage. Apache 2.0 + EU-hosted + GDPR-compliant + AI-Act-aligned models are dimensions US frontier developers cannot easily neutralize. Mistral is operationally winning this position. The strategic discourse should recognize this as a victory rather than as a consolation prize.

- Position 4 (vertical specialization) is where Europe’s industrial base creates competitive moats. Semiconductor manufacturing (ASML), space (ESA), shipping (CMA CGM), automotive, pharmaceutical, aerospace, defense: these are industries where European industrial expertise combined with European AI capability produces results US frontier developers cannot easily match. Mistral’s enterprise customer base is the operational demonstration.

- Position 3 (country-knowledge depth) requires either substantially larger investment than current projects can sustain, or pan-European pooling at scales beyond OpenEuroLLM’s current €37.4M. The Minerva and AMÁLIA findings together imply this position is structurally underinvested at current funding scales.

- The consortium and commercial-frontier models are complementary, not competing. OpenEuroLLM provides public-goods research infrastructure (MixtureVitae, HPLT reference models, training data catalogue) that benefits every subsequent European AI initiative including Mistral’s. Mistral provides commercial product velocity and revenue trajectory that demonstrates European AI is commercially viable. The European sovereign-AI agenda benefits from both — and the strategic discourse should stop framing them as alternatives.

For Mistral specifically, the trajectory through 2026 and 2027 will determine whether the commercial-frontier path can sustain its current velocity and revenue growth, whether the GPQA Diamond / AIME 2025 gap with US frontier developers narrows or widens with subsequent model generations, and whether the ASML strategic investment translates into deeper European industrial integration that strengthens Mistral’s competitive moat. The next model generation after Mistral Large 3 is the next data point that matters.

For the European sovereign-LLM movement broadly, the four-way comparison this essay track has built is what the strategic discourse needs: a structurally honest framework for evaluating European AI development across multiple institutional models simultaneously, surfacing the empirical complications each project’s marketing materials downplay, and producing strategic recommendations grounded in the operational realities of what actually works at current European investment scales.

More analysis like this is needed. Not less. The questions are real. The answers are still being determined. The Sapienza NLP team’s work on Minerva, the AMÁLIA consortium’s work on Portuguese continuation pre-training, the OpenEuroLLM consortium’s work on pan-European pooled-resources research infrastructure, and Mistral’s work on commercial-frontier multi-product velocity are all substantial contributions to the European sovereign-AI agenda. Each deserves the careful, rigorous, structurally honest discourse this essay track has attempted to model. All four together are what European sovereign-AI development actually looks like in operational form as of mid-May 2026.

That’s the read on Mistral, and that’s the read on the four-way European sovereign-LLM landscape that closes the essay track. The work is real across all four projects. The institutional achievement is substantial across all four. The empirical findings are harder than the press coverage suggests across all four. All of these can be true at once. The strategic discourse benefits from holding all of them simultaneously rather than collapsing the analysis into single-answer triumphalism or single-failure pessimism.

The European sovereign-AI agenda has reached the point where empirical data replaces years of speculation. Summer 2026 is when that moment becomes operational. The discourse should be ready for whatever the data actually shows.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Related Reading · the European sovereign-LLM essay track (now complete)

- AMÁLIA · The Three Hard Questions — Standalone Essay 01 · the Portuguese case study (national continuation answer)

- Minerva · The Opposite Path — Standalone Essay 02 · the Italian case study (national from-scratch answer)

- OpenEuroLLM · The Third Path — Standalone Essay 03 · the pan-European consortium case study (consortium answer)

- This piece — Standalone Essay 04 · the Mistral case study (commercial-frontier answer · closes the four-way essay track)

Sources

- Mistral AI · Introducing Mistral 3 · December 2, 2025 · Large 3 + Ministral 3 family announcement

- Wikipedia · Mistral AI · funding history, founding, model timeline

- Built In · Mistral AI: Models, Capabilities and Latest Developments · March 2026 · ASML investment, valuation, market positioning

- Serenities AI · Mistral AI Models 2026: Small 4, Large 3, Voxtral TTS, Forge — Complete Guide · April 2026 · verified benchmarks, March 2026 product cadence, ARR trajectory

- Shawn Kanungo · Mistral 3 AI Explained – Open-Source Models Guide 2026 · model family detail

- Aizolo · Mistral AI Models 2026: A Powerful Complete Guide for Builders · pricing and positioning analysis

- Aizolo · Mistral AI Latest Models 2026: The Powerful Breakthroughs (and Hidden Limitations) · licensing analysis

- PricePerToken · Mistral AI API Pricing (Updated 2026) · May 10, 2026 · complete pricing across 42 models

- CostBench · Mistral AI API Pricing 2026 · April 23, 2026 · Artificial Analysis pricing data

- TechCrunch · Open source LLMs hit Europe’s digital sovereignty roadmap · Hajič on Mistral’s absence from OpenEuroLLM

- OpenEuroLLM launch press release · February 3, 2025 · Mistral notably absent from partner list

- LLM Stats · AI Updates Today (May 2026) · real-time model release tracking

- Arthur Mensch · CEO and co-founder Mistral AI · former Google DeepMind

- Guillaume Lample · co-founder Mistral AI · former Meta Platforms

- Timothée Lacroix · co-founder Mistral AI · former Meta Platforms

- Roger Dassen · ASML CFO · Mistral strategic committee member following $1.5-1.9B investment

- ASML · Dutch semiconductor lithography giant · largest Mistral shareholder at 11% as of September 2025

- Mistral Large 3 · December 2, 2025 release · 41B active / 675B total MoE · 3,000 NVIDIA H200 GPUs · Apache 2.0

- Ministral 3 family · 9 models (3 sizes × 3 variants) · 3B/8B/14B · Apache 2.0

- Mistral Small 4 · March 2026 release · $0.15/M input · 5x cost advantage vs GPT-5.4 Mini

- Voxtral TTS · March 2026 release · 9 languages · built on Ministral 3B · 73% cheaper than ElevenLabs

- Leanstral · March 2026 release · formal proof agent

- Forge · March 2026 release · enterprise training platform · ASML and ESA as launch customers

- Spaces CLI · March 2026 release · developer command-line interface

- NVIDIA Nemotron Coalition · March 2026 · Mistral founding partnership role

- Devstral 2 / Devstral Small 2 · December 10, 2025 release · coding specialization

- Le Chat · consumer chatbot · iOS/Android · Pro tier $14.99/month · 1M downloads in first 14 days

- ARR trajectory · ~$20M (January 2025) → $400M (January 2026) · per CEO Arthur Mensch

- Valuation · $13.8B as of late 2025/early 2026

- Cumulative capital raised · €3B+ across approximately 3 years

- March 2026 funding round · $830M raised · new data centers near Paris and Sweden

- NVIDIA partnership · Hopper / H200 / Blackwell GPU training infrastructure · TensorRT-LLM + SGLang efficient inference · co-designed prefill/decode disaggregated serving

- LayerLens/Atlas · independent evaluation framework · Mistral Large 3 benchmarks

- Artificial Analysis · independent throughput benchmarks · Mistral Large 3 ~38 t/s

- Apache 2.0 license · Mistral’s primary licensing posture · structural competitive moat vs US proprietary alternatives

- École Polytechnique · founder team alma mater · French elite engineering school

- Voxtral TTS · the one notable non-Apache-2.0 Mistral release

- AMÁLIA · Essay 01 · the Portuguese national continuation answer

- Minerva · Essay 02 · the Italian national from-scratch answer

- OpenEuroLLM · Essay 03 · the pan-European consortium answer