Reading capability data, deployment reality, and labor market consequences from outside the frontier lab

By Thorsten Meyer — May 2026

Jack Clark’s Import AI #455 has a section titled “The coding singularity – capabilities over time” that does the heavy lifting for his automated AI R&D thesis. The section assembles two data points — SWE-Bench saturation and METR time horizons — and connects them to the deployment behavior he sees inside frontier labs: “the vast majority of people I meet at frontier labs and around Silicon Valley now code entirely through AI systems.” The argument is structurally simple. AI systems have gotten dramatically better at writing real-world code. AI systems have gotten dramatically better at chaining together coding tasks autonomously. The two together produce a recursive loop where AI engineering automation accelerates the production of more capable AI systems, which produce more capable AI engineering, and so on. The “coding singularity” is what you call the inflection point in that loop.

This piece is the read on Clark’s section from outside the frontier lab. Three things to do here: confirm and update the capability numbers (the data has moved meaningfully in the week since publication), check the deployment reality against what’s actually happening across the broader software market (not just at frontier labs), and surface what I take to be the right framing of what “coding singularity” actually means — which is not primarily about coding.

The headline finding: Clark’s data is correct and probably understated. The deployment reality is more bifurcated than “everyone codes through AI” suggests. And the substantive thing happening here is not the coding capability itself — it is the opening of the recursive self-improvement loop that the coding capability makes operational. That is the singularity. The coding part is the visible wedge.

What follows works through each of those threads with current data, the deployment landscape as it exists in May 2026, and the structural read on what this implies for software engineers, software businesses, policy professionals, and investors.

The coding singularity is real, and steeper than Clark presented.

Clark’s data is accurate. The trajectory is plausibly steeper. The deployment is bifurcated. The labor consequence is empirical. The substance is recursive self-improvement.

Clark’s numbers check out. Post-publication data is sharper.

Both benchmark trajectories Clark cites are publicly verifiable. Both have moved meaningfully in the week since Import AI #455 was published. The trajectory is plausibly steeper than the essay presents.

Five-tool consolidated stack. Bifurcated by segment.

Clark: “frontier-lab researchers code entirely through AI systems.” Correct for frontier labs. Partially correct across the broader market — with substantial segment-level variance. The Cambrian explosion of 2024 has consolidated to five production-grade tools.

Stanford data confirms what Clark’s data implies.

Junior software engineering postings down 40-50% since 2024. Age-inverted hiring relative to historical software engineering patterns. The data is unambiguous on the entry-level segment. The longer-term consequences are unresolved.

“Coding singularity” is the right name.

Clark calls it “the coding singularity.” The phrase is correct. The framing implies the significance is about coding. The actual significance is what the coding capability enables. Coding is the wedge. The thing on the other side is the singularity.

SWE-Bench saturating means the broader AI engineering capability has reached saturation. AI R&D is engineering with model training as the target output. The coding singularity is what you see. The recursive self-improvement loop is what you are looking at.

Five audiences. Five different obligations.

The coding singularity has specific implications by stakeholder. The institutional response cycle in most democracies is longer than the cadence the data implies.

The coding singularity is the canary. The mine is what matters. Software engineers and developer-tool investors are paying attention. Alignment researchers and policymakers are paying less attention than the math suggests they should.

The capability data, confirmed and updated

Clark cites two specific data points. Both are public, both check out, and both have moved since the essay was published on May 4.

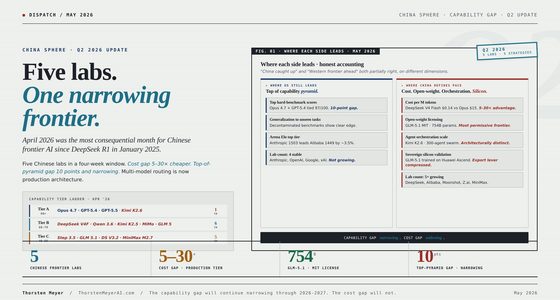

SWE-Bench

The number Clark uses: 93.9% by Claude Mythos Preview, up from ~2% at Claude 2 in late 2023. This is correct. The May 2026 SWE-Bench Verified leaderboard has Mythos Preview at 93.9%, Claude Opus 4.7 at 87.6%, GPT-5.3 Codex at 85%, and Claude Opus 4.5 at 80.9%. The remaining 6.1-point gap on Mythos is plausibly at the noise floor of the benchmark itself (the comparison Clark cites is the ~6% label error rate on the ImageNet validation set).

What’s worth adding to Clark’s framing: SWE-Bench Verified is the easy version. SWE-Bench Pro tests harder problems, and the leaderboard there looks different. Mythos Preview scores 77.8%, versus 53.4% for Opus 4.6 and 57.7% for GPT-5.4. The gap between models widens substantially as task difficulty increases. The “private subset” of SWE-Bench Pro (codebases the model hasn’t seen) drops scores further still: Claude Opus 4.1 fell from 22.7% on the public set to 17.8% on the private one, and GPT-5 fell from 23.1% to 14.9%.

The implication: the 93.9% headline number tells you about a specific class of work (Python-codebase bug fixes from public open-source projects). It is not a measure of “AI can do all software engineering.” A reasonable read is that frontier models handle the routine 80% of cognitive software engineering work at near-human or superhuman levels on familiar codebases, and the difficulty curve gets steeper as you move toward unfamiliar codebases, harder problems, and tasks requiring substantial architectural judgment. Clark’s “vast majority of frontier-lab researchers code through AI” claim is consistent with this: frontier-lab work is mostly the easier class of tasks where the headline number applies. Enterprise software engineering on private codebases of arbitrary complexity is harder, and the gap shows up in the Pro/private benchmarks.

This doesn’t break Clark’s argument. It refines it. The coding singularity is real for the class of work the leading benchmarks measure. The deployment of that capability to the broader software industry depends on how much of real engineering work falls into that class. That is probably the majority, but not all, and the timing of saturation in the harder classes is the empirical question that the next 12-24 months will resolve.
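To make the public-versus-private drop concrete, here is a minimal Python sketch that turns the SWE-Bench Pro figures quoted above into absolute and relative losses. The function name `score_drop` and the dictionary layout are illustrative assumptions, not part of any benchmark tooling; the four percentages are the only data taken from the text.

```python
def score_drop(public_pct: float, private_pct: float) -> tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = public_pct - private_pct
    relative = 100.0 * absolute / public_pct
    return absolute, relative


# Scores as quoted in the text: (public set, private subset).
# The dictionary itself is a hypothetical container for illustration.
PRO_SCORES = {
    "Claude Opus 4.1": (22.7, 17.8),
    "GPT-5": (23.1, 14.9),
}

for model, (public, private) in PRO_SCORES.items():
    points, percent = score_drop(public, private)
    print(f"{model}: -{points:.1f} points ({percent:.0f}% relative drop)")
```

On these figures the relative loss is roughly a fifth for Claude Opus 4.1 and roughly a third for GPT-5, which is one way to quantify how much of a headline score may depend on codebase familiarity.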

METR time horizons

The trajectory Clark cites: 30 seconds (GPT-3.5, 2022) → 4 minutes (GPT-4, 2023) → 40 minutes (o1, 2024) → 6 hours (GPT 5.2 High, 2025) → 12 hours (Opus 4.6, 2026). Cotra’s forecast for end-2026: ~100 hours.

Here’s the update that matters. In January 2026, METR released Time Horizon 1.1 — a new task suite and updated methodology. The post-2023 doubling time recalculated to 130.8 days (4.3 months) rather than the 7-month doubling Clark uses. The trajectory has gotten faster since 2023, not slower. Cotra herself published a post titled “I underestimated AI capabilities (again)” in March 2026 revising her own forecasts upward.

Her current published median for end-2026: 24-hour 50% time horizon, with a 20th percentile of 15 hours and an 80th percentile of “too long for METR to accurately bound in practice but probably around 40 hours in reality.” Her earlier 100-hour figure (the one Clark cites from Import AI #448) was an extrapolation from older measurements. The newer measurements suggest 24 hours by end-2026 is the median expectation, not 100.

Wait — 24 hours is less than 100 hours. So this is the curve slowing, not steepening?

Not quite. The 100-hour figure was the naive extrapolation Cotra cited in late 2025; it assumed the older doubling time. The 24-hour median is calibrated to the corrected 4.3-month doubling time, with the 80th percentile possibly reaching 40 hours. The difference is that the new measurement model is more conservative about what counts as a 50% reliable task completion. The curve itself is steeper, but the new measurement is more honest about uncertainty at the long-task end.

Here’s the empirical evidence the curve is steepening rather than flattening: as of May 8, 2026, METR added Claude Mythos Preview to the leaderboard with a 50% time horizon listed as “likely at least 16 hours,” accompanied by the note “Measurements above 16 hours are unreliable with our current task suite.” METR is hitting the ceiling of its own measurement instrument. Mythos Preview’s 80% time horizon (the more reliable metric for production work) is 3 hours 6 minutes.

The structural read: METR’s task suite is being outgrown by the models faster than the suite can be updated. This is one of the strongest pieces of evidence for the trajectory Clark describes. When the measurement instrument can no longer reliably measure the leading model, the measurement is the rate-limiter, not the capability. METR is “furiously working” on new tasks, according to Cotra. The lag matters because what you can’t measure, you can’t forecast, which is the structural feature of the black hole metaphor that the synthesis piece in my Clark series develops.

Net read on the capability data: Clark’s numbers are correct. The trajectory is plausibly steeper than the 7-month doubling cadence the essay cites, with the corrected post-2023 cadence closer to 4.3 months. The leading model is simultaneously at the noise floor of SWE-Bench Verified and at the measurement ceiling of METR’s task suite. Both signals point to the curve continuing or accelerating, not flattening.
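The difference between the two doubling cadences is easier to feel as an annual growth factor. The sketch below is a constant-rate extrapolation only, written under stated assumptions: the helper names (`days_to_months`, `growth_factor`) and the average-month conversion are mine, and the only inputs taken from the text are the 130.8-day and 7-month doubling times.

```python
DAYS_PER_YEAR = 365.25
DAYS_PER_MONTH = DAYS_PER_YEAR / 12  # average month length (assumption for unit conversion)


def days_to_months(days: float) -> float:
    """Convert a duration in days to average calendar months."""
    return days / DAYS_PER_MONTH


def growth_factor(elapsed_days: float, doubling_days: float) -> float:
    """Multiplicative growth over elapsed_days under steady exponential doubling."""
    return 2.0 ** (elapsed_days / doubling_days)


revised_doubling_days = 130.8             # Time Horizon 1.1 figure quoted in the text
older_doubling_days = 7 * DAYS_PER_MONTH  # the 7-month cadence, converted to days

print(f"{days_to_months(revised_doubling_days):.1f} months per doubling")
for label, doubling in (("7-month", older_doubling_days),
                        ("130.8-day", revised_doubling_days)):
    print(f"{label} doubling: x{growth_factor(DAYS_PER_YEAR, doubling):.1f} per year")
```

Under these assumptions the revised cadence compounds to roughly sevenfold per year, against a little over threefold at the older cadence. This says nothing about measurement ceilings or forecaster judgment; it only shows what the two rates imply if held constant.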

The deployment reality outside the frontier lab

Clark writes: “the vast majority of people I meet at frontier labs and around Silicon Valley now code entirely through AI systems.” This is true at frontier labs. It is partially true across the broader software market in May 2026. The texture of “partially true” is where the interesting structural information lives.

The five-tool consolidated stack

The AI coding tool market was a Cambrian explosion through 2024, with twenty-plus credible options. By April 2026, the field had consolidated to five production-grade tools:

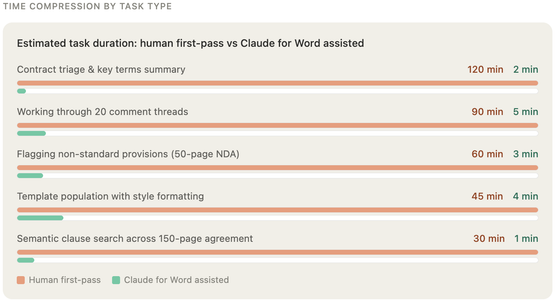

- Claude Code (Anthropic, terminal-native, MCP-deep): Reached $1B run-rate revenue within 6 months of launch, $2.5B annualized run-rate by early 2026. Highest SWE-Bench Verified score (~78.4% in production agent harnesses), strongest reasoning on hard tasks. 18% global developer adoption per JetBrains’ January 2026 survey (up 6x from April 2025), 24% adoption in US/Canada. CSAT 91%, NPS 54.

- Cursor (Anysphere, IDE-native): $29.3B valuation, $1.2B+ ARR by April 2026, used by 50%+ of Fortune 500. 18% global developer adoption (tied with Claude Code for second after Copilot). Composer 2 (March 2026) is the dominant multi-file editing surface. Credit-based billing is the persistent complaint.

- GitHub Copilot (Microsoft/GitHub): Still the most widely adopted (29% global) but growth has stalled. Multi-model platform since February 2026: it now supports Claude and Codex models in addition to GPT. 40% adoption at companies with 5,000+ employees. The default for enterprise organizations already in the Microsoft ecosystem.

- OpenAI Codex (rebranded after the Windsurf acquisition for ~$3B): Strongest cloud-task-runner pattern. GPT-5.5 backbone. Roughly 60% of Cursor’s usage despite being newer in this form.

- Devin (Cognition Labs): Most autonomous — submit task, return PR after minutes-to-hours. Most demanding on review discipline. Cognition acquired the rest of Windsurf for $250M after the OpenAI acquisition split off the IDE.

This is a concentrated market. Five players, all with strong moats (model access, distribution, brand), all profitable or revenue-rich. The “Cambrian explosion” has been replaced by oligopoly faster than most software categories typically consolidate. The economics of the AI coding stack are not commodity economics. They are platform-and-brand economics with high switching costs and strong network effects via plugin ecosystems.

Adoption by segment

The “everyone codes through AI” framing collapses important segment-level variation. The actual picture in May 2026:

Frontier labs (Anthropic, OpenAI, DeepMind, xAI, Meta): Approximately 100% of cognitive software engineering work mediated by AI tools. Internal model access plus aggressive tool deployment. This is where Clark’s observation is unambiguously correct.

AI-native startups and Bay Area tech: ~90%+ adoption. Cursor and Claude Code are the dominant surfaces. The 24% US/Canada Claude Code adoption number is driven heavily by this segment.

Big tech (FAANG-adjacent enterprises): 60-75% adoption, mostly through enterprise Copilot deployments plus Cursor at the team level. Conservative on agentic autonomy due to security and compliance overhead.

Mid-market enterprise ($100M-$5B revenue, traditional software-engineering organizations): 40-55% adoption. Mostly Copilot-level inline assistance. Agentic tools (Claude Code, Devin) less common due to credential-handling concerns, opaque billing, and reviewer-discipline gaps.

Regulated industries (healthcare, finance, defense, public sector): 15-35% adoption. Heavy compliance and audit constraints. Adoption typically Copilot Business + custom internal tools. Agentic deployment generally restricted to non-production environments.

Long-tail enterprise and small-mid IT shops: 10-25% adoption. Mostly inline coding assistance, often via ChatGPT/Claude consumer apps rather than purpose-built tools.

Net read: AI coding tools have crossed the chasm. The 74% global adoption figure for “AI dev tools at work” from the JetBrains January 2026 survey is real. But the depth of adoption is segment-specific. Clark’s “frontier-lab researchers code entirely through AI” is the extreme end of a distribution that has substantial variance. The implication is not that Clark is wrong — it is that the deployment of the coding singularity is staged, with different sectors at different points along the same curve. The frontier-lab pattern Clark observes is the leading edge; the broader market is 12-36 months behind on the deployment curve, with regulated industries 36-60+ months behind.

This bifurcation is the same structural pattern that the machine economy piece in my Clark series describes for the broader economic transition. The capability is uniform; the deployment is bifurcated by friction. Software engineering is just the leading sector.

The labor market consequence is observable, not theoretical

The most consequential thing about the coding singularity is what it is doing to the software engineering labor market. The data here is uneven but the signal is clear.

Stanford Digital Economy Lab (October 2025): Employment for software developers aged 22-25 has declined ~20% from its late-2022 peak. The same dataset shows AI-exposure occupations (where software engineering sits at the top) experiencing -6% employment growth in the 22-25 cohort while seeing +9% in the 35-49 cohort. This is age-inverted hiring relative to historical software engineering patterns, which traditionally favored younger workers.

SignalFire (June 2025): Entry-level hiring at the 15 biggest tech firms fell 25% from 2023 to 2024. Big Tech overall hired 50% fewer fresh graduates over 2022-2024 than in the prior three-year period (Harvard research).

Bureau of Labor Statistics data on developer employment by age and experience: Total developer employment up moderately (~3% annually), but composition has shifted materially toward mid-career and senior workers. Junior postings as a share of all postings dropped from ~22% in 2022 to ~12% in early 2026 across major platforms.

Federal Reserve Labor Market Outcomes Report (2025): Computer science graduates at 6.1% unemployment; computer engineering at 7.5% — both higher than fine arts (no, really), liberal arts, nursing, and elementary education for recent graduates. CS unemployment was below 3% for most of the prior decade.

Salesforce (Marc Benioff, January 2025): “No new engineers in 2025.” Other major employers have announced similar but less aggressive policies. The Resume.org survey of 1,000 US business leaders found 60% likely to lay off in 2026; 40% planning AI replacement.

Reading the data: This is not “AI is killing all software engineering jobs.” Total developer employment is up. The 15% job growth projected by BLS through 2034 remains plausible (and 2034 is far enough out that the projection itself may be re-calibrated when the next major data point arrives). What the data unambiguously shows is that the entry-level segment of the software engineering labor market is being restructured. AI tools handle the routine cognitive work that has historically been the apprenticeship ground for junior engineers. The work is still happening; it is just happening through AI rather than through human apprentices.
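The posting-share figures quoted above (junior postings at ~22% of all postings in 2022, ~12% in early 2026) can be read either as a change in percentage points or as a relative decline. The short sketch below shows both; the helper name `relative_change` is illustrative, and the calculation covers share of postings only, since the text does not give the total posting counts needed to convert it into absolute numbers.

```python
def relative_change(before: float, after: float) -> float:
    """Percent change from `before` to `after`, relative to `before`."""
    return 100.0 * (after - before) / before


junior_share_2022 = 22.0  # approx. % of all postings, as quoted in the text
junior_share_2026 = 12.0  # approx. % of all postings, early 2026

point_change = junior_share_2026 - junior_share_2022
pct_change = relative_change(junior_share_2022, junior_share_2026)

print(f"{point_change:+.0f} points, {pct_change:+.0f}% relative")
```

A ten-point fall from a 22% base is a relative decline of about 45% in junior share, which sits inside the 40-50% range cited elsewhere in this piece, though the two figures may rest on different baselines.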

The longer-term consequences are unresolved. The “missing generation” problem (no juniors trained today = no mid-career engineers in 5 years = no senior engineers in 10 years) is real and being warned about by senior engineering leaders (Addy Osmani’s “slow decay” framing has been the most-cited articulation). Companies that maintain junior hiring against the AI cost-savings logic are betting on the long-term talent pipeline. Companies that don’t are optimizing for short-term cost. The institutional behavior is splitting accordingly.

This connects directly to the labor displacement reality-check piece I published in early 2026. The pattern is empirically visible. The structural mechanism, AI labor at $1-10 per agent-year versus human labor at $50K per worker-year, is the labor substitution math that the machine economy piece develops in detail. The coding singularity is the empirical leading indicator that the labor substitution math is operational, not theoretical.

What “coding singularity” actually means

Here is where I want to push back on Clark’s framing — not on the data, which is accurate, but on the framing choice.

Clark calls this “the coding singularity.” The phrase is correct in the sense that we are at an inflection point in coding capability. But the framing implies the significance is about coding. The actual significance is what the coding capability enables.

Coding is the wedge. The thing on the other side of the wedge is automated AI R&D — which is the actual singularity. Clark himself makes this connection in the section: “AI systems have gotten good enough to automate a major component of AI R&D, speeding up all the humans that work on it.” And: “if you look closely at the work of many AI researchers, a lot of their tasks boil down into things that might take a person a few hours to do – cleaning data, reading data, launching experiments, etc. All of this kind of work now sits inside the time horizon scope of modern systems.”

The implication, spelled out: the same AI capability that produces SWE-Bench saturation is the capability that produces automated AI R&D. Software engineering is the most concrete, well-benchmarked, deployment-validated instance of the broader AI engineering capability. When SWE-Bench saturates, what it tells you is that the broader AI engineering capability is at the saturation point. AI R&D is “just” engineering with model training as the target output.

This is the recursive loop. Claude writes code → that code is used to train the next Claude → the next Claude writes better code → recursive improvement. The METR time horizons curve says this loop is operational at the 12-hour task level now and will be operational at the multi-day task level by end of 2026 on the corrected doubling time. The compounding error piece in my Clark series articulates what happens to alignment under recursive self-improvement: 0.999 compounded over 500 generations (0.999^500) is about 0.606. The math is elementary. The structural implication is not.
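The compounding arithmetic can be checked in a few lines. This is a minimal sketch of the multiplicative model the figure implies, in which each generation retains a fixed fraction of whatever the previous one had; the function names and the "generations until a threshold" helper are my own illustrative additions, not taken from the compounding error piece.

```python
import math


def retained_fraction(per_generation: float, generations: int) -> float:
    """Fraction retained after compounding a fixed per-generation rate."""
    return per_generation ** generations


def generations_until(per_generation: float, threshold: float) -> int:
    """Smallest n with per_generation ** n <= threshold.

    Assumes 0 < per_generation < 1 and 0 < threshold < 1.
    """
    return math.ceil(math.log(threshold) / math.log(per_generation))


print(f"{retained_fraction(0.999, 500):.3f}")  # prints 0.606
print(generations_until(0.999, 0.5))           # prints 693
```

A 0.1% loss per generation looks negligible in isolation, yet under this model it leaves about 61% after 500 generations and crosses the halfway mark at generation 693.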

The coding singularity is what you see. The recursive self-improvement loop is what you are looking at. The naming choice matters because it shapes which institutional response gets organized. If the coding singularity is the news, the response is workforce-development policy and developer tooling regulation. If the recursive self-improvement loop is the news, the response is alignment research priority, coordination mechanism development, and the structural-policy response that the machine economy piece describes. The coding-singularity framing under-weights the alignment and policy response that the underlying recursive loop demands.

This is not a critique of Clark personally — Clark’s full essay develops the recursive self-improvement implication clearly and the 60%/2028 forecast is explicit about what’s at stake. The critique is of the public discourse that reads the “coding singularity” section as primarily a software-engineering story. The software-engineering story is the visible wedge. The recursive self-improvement loop is the actual content.

What this means for everyone

The coding singularity has specific implications by audience:

For software engineers. Career strategy should be calibrated to the empirical labor market data, not to either the techno-optimist “AI will create new jobs” story or the doomer “all software jobs disappear” story. The data says: routine coding work is being automated; entry-level postings are down 40-50%; mid-career and senior workers are gaining hiring share. The skills that retain value are those that combine engineering judgment with architecture, communication, regulatory understanding, and supervision of AI agents. “Code quality” is a depreciating asset; “code review quality” is an appreciating one. Practical move: develop agent orchestration skills now; treat AI tool fluency as table stakes rather than differentiation. The bilingual engineer (direct coding + agent orchestration) outperforms the monolingual engineer in either direction.

For software businesses. Three things change. First, internal engineering productivity is rising materially — 30-50% reported productivity gains in serious AI-tool deployments. Plan capacity accordingly. Second, the cost of building software is dropping toward the cost of compute, which means competitive advantages that depended on engineering capacity are eroding. What replaces them? Distribution, data network effects, domain specialization, regulatory expertise, customer relationships, brand, and operational excellence that AI cannot easily substitute. SaaS moat strategy needs to be examined explicitly. Third, the AI coding tools themselves are concentrating economic value — Cursor at $1.2B ARR and Claude Code at $2.5B run-rate represent value extraction from the productivity gains that historically would have accrued to engineering organizations directly. The middleware layer is the new moat-rich layer in the software stack.

For policy professionals. The labor market data resolves the empirical question of whether AI is affecting cognitive-work employment. It is. The policy response — reskilling programs, transition support, social safety net adjustments, education system updates — needs to operate on the cadence the data implies, which is faster than typical institutional response cycles. The “missing generation” problem is the most concrete near-term consequence to plan for. University CS programs need to update curricula. Apprenticeship and bootcamp models need to evolve. Public sector tech employment may need to maintain junior pipelines that private sector employers are cutting, with corresponding fiscal implications. The compute supply governance and tax base reform challenges that the machine economy piece develops apply here too — the AI coding tool oligopoly is a specific instance of the broader machine-economy structural concentration.

For investors. The capability trajectory is real, the deployment is staged across segments, the labor displacement is empirical, and the value extraction is concentrated. Three specific investment-thesis implications: (a) frontier-lab equity captures the upside of the recursive loop if alignment is solved; valuations are already pricing this but the joint distribution over alignment risk and machine-economy capture matters more than current discount rates assume; (b) AI coding tool platforms (Cursor, Claude Code) are the immediate value-extraction layer; the moat is real but defensibility against new model entrants is the open question; (c) human-labor-heavy software businesses face structural margin pressure as competitors restructure toward AI-native operations. The investment thesis that reads the coding singularity as primarily a productivity story rather than a structural-reorganization story will underperform.

For everyone else. The empirical reality of the coding singularity is one of the strongest available signals about the trajectory the broader AI transition is on. If you have been waiting for unambiguous evidence that the AI transition is operational, the public benchmark data plus the labor market data plus the deployment data plus the tool revenue data is that evidence. The window for understanding the transition and positioning for it is the same 32-month window that the synthesis piece describes for the broader Clark forecast. The institutional response cycles in most democracies are longer than 32 months. The response that gets built (or doesn’t) during the window will determine what the post-transition equilibrium looks like.

The honest assessment

Clark’s “coding singularity” section is the most empirically grounded part of Import AI #455. The data checks out. The deployment reality at frontier labs matches what Clark describes. The trajectory is plausibly steeper than the essay presents based on post-publication updates to METR’s methodology and Cotra’s revised forecasts. The labor market consequence is empirically visible in Stanford and SignalFire data, in Federal Reserve unemployment statistics, in BLS occupational data, and in the explicit announcements from major employers about junior hiring policy.

What I’d add to Clark’s framing: the coding singularity is real but it is not the most important thing happening. The recursive self-improvement loop is. The coding capability is the visible wedge that confirms the underlying capability has reached the threshold where the recursive loop can operate. SWE-Bench saturation, METR time horizons reaching 12-16+ hours, the 4.3-month doubling time, the deployment manifestation in 74% global developer adoption, the labor market response — all of these are surface phenomena. The substantive event is the inflection in the underlying capability that produces all of them simultaneously.

This is why I think the framing matters. If you read “coding singularity” as primarily a coding story, the institutional response is workforce-policy and developer-tool regulation. If you read it as the surface manifestation of automated AI R&D arrival, the response is the alignment research priority, the coordination mechanism development, and the structural-policy response that the machine economy and synthesis pieces develop. The two responses are not mutually exclusive but they require different institutional capacity and operate on different timelines.

The honest reading: both responses are needed, and the coding-singularity framing under-weights the alignment-and-coordination response in ways that the discourse around Clark’s essay has so far inherited. Software engineers, software businesses, and developer-tooling investors are paying attention. Alignment researchers, coordination institutions, and policymakers are paying less attention than the math suggests they should. The coding singularity is the canary. The mine is what matters.

That is the structural read on Clark’s section from outside the frontier lab. The data confirms the framing. The framing under-states the implication. The implication is what the next 32 months — Clark’s window for automated AI R&D arrival — will resolve.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Related Reading

- Jack Clark Says It Out Loud · 60%/2028 statement — Piece 1 of the Clark series

- The Benchmark Saturation Cascade — Piece 2 · the six benchmarks

- The Compounding Error Problem — Piece 3 · 0.999^500 = 0.606

- The Machine Economy — Piece 4 · capital-heavy, human-light

- The Co-Founder’s Black Hole · Synthesis — Piece 5 · the structural read

- The State of AI Replacing Jobs in 2026 — empirical leading indicator

- Post-Labor Economics franchise — the structural framework

- The AI Coding Stack for Executives — practical deployment guide

Sources

- Jack Clark · Import AI 455: Automating AI Research · “The coding singularity – capabilities over time” section · May 4, 2026 · jack-clark.net

- SWE-Bench Verified Leaderboard · llm-stats.com / BenchLM.ai · May 7, 2026

- SWE-Bench Pro benchmark data · Mythos Preview 77.8%, Opus 4.6 53.4%, GPT-5.4 57.7%

- METR · Time Horizon 1.1 · January 2026 methodology revision

- METR · Claude Mythos Preview measurement · “likely at least 16 hours” 50%-time horizon · May 8, 2026 update

- Ajeya Cotra · I underestimated AI capabilities (again) · Planned Obsolescence · March 2026

- Ajeya Cotra · AI predictions for 2026 · January 2026 forecast updates

- METR official time horizons page · metr.org/time-horizons · May 2026

- JetBrains AI Pulse Survey · 10,000+ professional developers · January 2026

- Claude Code revenue: $1B run-rate within 6 months, $2.5B annualized run-rate · Anthropic disclosures

- Cursor: $29.3B valuation, $1.2B ARR · multiple sources · Q1 2026

- Stanford Digital Economy Lab · software developer age 22-25 employment data

- SignalFire · Big Tech entry-level hiring report · 2024-2025

- Harvard research · companies adopting AI cut junior dev hiring 9-10% within six quarters

- Bureau of Labor Statistics · developer occupational employment data 2022-2026

- Federal Reserve · Labor Market Outcomes by College Major 2025 report

- Resume.org · 2026 AI Layoff Survey · 1,000 US business leaders

- Marc Benioff · Salesforce “no new engineers in 2025” announcement

- ImageNet validation set label error rate · arxiv.org/abs/2103.14749 · ~6% baseline reference