Thorsten Meyer | ThorstenMeyerAI.com | February 2026

Executive Summary

Governance has moved from compliance overhead to commercial differentiator. 71% of IT leaders say AI speed conflicts with governance enforcement (OneTrust, 2025). Two-thirds of technology leaders say governance capabilities consistently lag behind AI project speed. Yet the market for AI trust, risk, and security management hit $2.34 billion in 2024 and is growing at 21.6% CAGR toward $7.4 billion by 2030.

The reason: buyers changed. Procurement teams and risk officers now sit deeper in AI buying decisions. 82% of IT leaders say AI risks have accelerated the need to modernize governance. 88% of investors say companies should increase cybersecurity capital allocation (PwC). When two vendors offer similar capability, the one with clearer controls, auditability, and accountability wins — and the other enters a procurement review cycle that can last months.

This is no longer about whether governance matters. It’s about whether your governance artifacts are ready before the sales conversation starts.

| Metric | Value |

|---|---|

| AI TRiSM market size (2024) | $2.34 billion |

| AI TRiSM market (2030 projected) | $7.4 billion |

| AI TRiSM CAGR (2025–2030) | 21.6% |

| IT leaders: AI speed conflicts with governance | 71% (OneTrust) |

| IT leaders: AI risks accelerate governance need | 82% (OneTrust) |

| Tech leaders: governance lags AI project speed | 67%+ |

| Investors: increase cybersecurity allocation | 88% (PwC) |

| AI projects failing prototype-to-production | ~50% |

| Unauthorized AI transactions from internal violations (Gartner, through 2026) | 80%+ |

| Enterprise apps with AI agents by end 2026 | 40% (Gartner) |

| Organizations deeply transforming with AI | 34% (Deloitte) |

| Organizations applying AI superficially | 37% (Deloitte) |

| Revenue aspiration vs. reality gap | 74% aspire, 20% achieve |

| CFOs: poor data trust as top AI ROI barrier | 35% |

| Productivity/efficiency gains from AI | 66% (Deloitte) |

| CPO barrier: siloed working | 57% (Deloitte CPO Survey) |

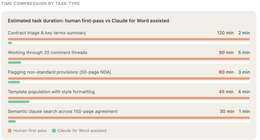

| Procurement cycle reduction with AI-assisted triage | 40% |

| Wealth clients: AI fraud resistance as top selection criterion | 54% |

| Survey base (OneTrust) | 1,250 IT leaders |

| Survey base (Deloitte) | 3,235 leaders, 24 countries |

1. Why Governance Became a Sales Issue

Governance didn’t become a sales concern because CISOs started attending demos. It became a sales concern because three things happened simultaneously.

Procurement Got Smarter

Risk officers and legal teams now have standing review authority on AI vendor purchases. The question shifted — in the words of procurement analysts — from “Can this tool increase efficiency?” to “Can this tool withstand scrutiny if challenged?” That second question requires evidence, not a demo.

The EU AI Act, NIST AI Risk Management Framework, and ISO/IEC 42001 now serve as procurement criteria for vendors and partners. Broad AI governance statutes are shaping vendor contracting practices and downstream compliance expectations, particularly through AI-specific addenda and third-party risk allocation. Enterprises that can evidence compliance from day one move faster through procurement, legal, and risk review cycles.

Governance Maturity Became Visible

The IAPP’s AI Governance Vendor Report (2026) documents how AI governance capabilities have matured into four categories: policy and compliance tools, governance boards, regulatory alignment, and procurement processes. The Hackett Group evaluated approximately 220 procurement technology vendors globally for 2025–2026, scoring on technology capability, solution maturity, innovation, and customer adoption.

When governance maturity is scored, it becomes selectable. And when it’s selectable, it becomes a differentiator.

The Failure Signal Got Loud

Nearly 50% of AI projects fail to progress from prototype to production, often due to inadequate governance and risk management. 42% of companies scrapped most AI initiatives in 2025. Only 20% of organizations achieve revenue growth from AI, against the 74% that aspire to it. The gap isn’t technology; it’s infrastructure. And governance is the infrastructure that separates a pilot that impresses from a deployment that survives.

| Shift | From | To |
|---|---|---|
| Buyer question | “What can this AI do?” | “Can this AI withstand scrutiny?” |
| Procurement authority | IT + business unit | IT + legal + risk + procurement |
| Governance timing | Post-deployment retrofit | Pre-sale requirement |
| Evidence standard | Vendor presentation | Auditable artifacts |
| Competitive differentiator | Model accuracy | Controls + accountability |

2. The Minimum Trust Stack Buyers Expect

When enterprise buyers evaluate AI vendors in 2026, they are looking for five layers of governance infrastructure. Not as aspirational commitments — as deployable evidence.

Layer 1: Identity and Access Controls

Least privilege. Role separation. Clear accountability for who can access what data and invoke which agent actions. This is where 80%+ of unauthorized AI transactions originate (Gartner): not from external attacks, but from internal policy violations — information oversharing, unacceptable use, misguided AI behavior.

The identity layer must answer: Who acted? Why? What data was involved? Without this, audit is impossible and legal exposure is unbounded.
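To make the three questions concrete, here is a minimal sketch of a deny-by-default access check that records who acted, why, and against which permission. The role names, permission strings, and the `check_access` helper are all hypothetical; a real deployment would pull roles from its identity provider rather than a hard-coded map.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical role-to-permission map, for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"read:pricing"},
    "pricing_agent": {"read:pricing", "propose:price_change"},
    "pricing_manager": {"read:pricing", "propose:price_change", "approve:price_change"},
}


@dataclass(frozen=True)
class AccessDecision:
    actor: str        # who acted
    role: str
    permission: str   # what data or action was involved
    reason: str       # why the access was requested
    allowed: bool
    decided_at: str


def check_access(actor, role, permission, reason):
    """Deny by default: allow only if the role explicitly holds the permission."""
    allowed = permission in ROLE_PERMISSIONS.get(role, set())
    decided_at = datetime.now(timezone.utc).isoformat()
    return AccessDecision(actor, role, permission, reason, allowed, decided_at)


# An agent may propose a price change but cannot approve one.
decision = check_access("agent-7", "pricing_agent", "approve:price_change", "quarterly repricing")
print(decision.allowed)
```

Because every decision is returned as a record rather than a bare boolean, denied requests can be logged and reviewed alongside granted ones.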

Layer 2: Policy Enforcement

What agents can and cannot do, expressed as machine-enforceable rules, not PDF policies. The NIST AI RMF’s four core functions (Govern, Map, Measure, Manage) now serve as procurement criteria. AI governance platforms enforce these as runtime constraints: scope boundaries, data access rules, escalation triggers.
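As an illustration of what "machine-enforceable" can mean in practice, the sketch below encodes a scope boundary, a data access rule, and an escalation trigger as data, then evaluates a proposed action against them. The `AgentPolicy` fields, the action and dataset names, and the 10% discount threshold are assumptions chosen for the example, not part of any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    allowed_actions: frozenset    # scope boundary
    allowed_datasets: frozenset   # data access rule
    max_discount_pct: float       # escalation trigger (hypothetical threshold)


def evaluate(policy, action, dataset, discount_pct=0.0):
    """Return 'deny', 'escalate', or 'allow' for a proposed agent action."""
    if action not in policy.allowed_actions:
        return "deny"
    if dataset not in policy.allowed_datasets:
        return "deny"
    if discount_pct > policy.max_discount_pct:
        return "escalate"
    return "allow"


policy = AgentPolicy(
    allowed_actions=frozenset({"quote_price", "apply_discount"}),
    allowed_datasets=frozenset({"price_list"}),
    max_discount_pct=10.0,
)

print(evaluate(policy, "apply_discount", "price_list", discount_pct=20.0))
```

The point of the design is that the rule lives in a structure a reviewer can read and a runtime can check, rather than in a document the agent never sees.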

Layer 3: Audit Logs

Immutable, reviewable, complete. Every AI input and output traceable to actor, timestamp, data source, and authorization. New architectures use cryptographic provenance chains and digital signatures. If a Pricing Agent drops a price by 20%, leadership needs to see the chain of thought that led to that decision. If an agent autonomously commits a contract term, the audit trail must prove authorization.
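One common way to make a log tamper-evident is a hash chain, where each entry commits to the hash of the one before it. The sketch below shows that idea only; it does not implement digital signatures, and the field names and helper functions are hypothetical rather than taken from any specific platform.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, actor, action, data_source, authorized_by, timestamp):
    """Append an entry that commits to the previous entry's hash."""
    body = {
        "actor": actor,
        "action": action,
        "data_source": data_source,
        "authorized_by": authorized_by,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "pricing-agent", "price_drop_20pct", "price_list", "pricing_manager", "2026-02-01T09:00:00Z")
append_entry(audit_log, "contract-agent", "commit_term", "contract_db", "general_counsel", "2026-02-01T09:05:00Z")
print(verify_chain(audit_log))
```

A chain like this shows that the log was not altered after the fact; proving who authorized an action would additionally require signing entries with keys tied to the identity layer.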

Layer 4: Human Oversight Model

Intervention thresholds — when does a human step in? The EU AI Act mandates human oversight for high-risk systems. But even outside regulated contexts, buyers want defined escalation paths: what triggers human review, who is notified, and how quickly.
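A defined escalation path can be expressed as a small routing table. The sketch below assumes three risk tiers, a model confidence score between 0 and 1, and made-up notification targets and review SLAs; every value is illustrative and would be set by the buyer's own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationRule:
    always_review: bool        # True for tiers where a human must always sign off
    min_confidence: float      # below this, route to a human
    notify: str                # who is notified
    review_sla_minutes: int    # how quickly they must respond


# Hypothetical tiers and thresholds.
RULES = {
    "high": EscalationRule(True, 1.0, "risk-officer", 15),
    "medium": EscalationRule(False, 0.90, "team-lead", 60),
    "low": EscalationRule(False, 0.50, "ops-queue", 240),
}


def route(risk_tier, confidence):
    """Decide whether a human reviews the action, who is told, and the SLA."""
    rule = RULES[risk_tier]
    if rule.always_review or confidence < rule.min_confidence:
        return {"human_review": True, "notify": rule.notify, "sla_minutes": rule.review_sla_minutes}
    return {"human_review": False, "notify": None, "sla_minutes": None}


print(route("medium", 0.80))
```

Each field maps to one of the three questions buyers ask: the trigger, the person notified, and the response time.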

Layer 5: Incident Response Protocol

Containment and disclosure. When an AI system fails, generates harmful output, or breaches policy, what happens? The protocol must specify: detection mechanism, containment steps, stakeholder notification, remediation timeline, and post-incident review.
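The five elements listed above lend themselves to a simple completeness check before a protocol is handed to a buyer. This is a minimal sketch; the key names and the sample protocol contents are hypothetical.

```python
# The five elements the protocol must specify, as named in the text above.
REQUIRED_ELEMENTS = (
    "detection_mechanism",
    "containment_steps",
    "stakeholder_notification",
    "remediation_timeline",
    "post_incident_review",
)


def missing_elements(protocol):
    """Return the required elements that are absent or left empty."""
    return [name for name in REQUIRED_ELEMENTS if not protocol.get(name)]


# Illustrative draft with one empty field and one field missing entirely.
draft_protocol = {
    "detection_mechanism": "policy-violation alert from runtime monitor",
    "containment_steps": ["suspend agent credentials", "freeze pending actions"],
    "stakeholder_notification": "",
    "remediation_timeline": "fix deployed within 5 business days",
}

print(missing_elements(draft_protocol))
```

A check like this could run whenever the protocol document changes, so gaps surface before a procurement reviewer finds them.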

| Trust Layer | What Buyers Ask | Evidence Required |
|---|---|---|
| Identity & access | Who can access what? | RBAC matrix, least-privilege attestation, agent identity architecture |
| Policy enforcement | What can agents do? | Machine-enforceable policy rules, scope boundaries, data access controls |
| Audit logs | What happened and why? | Immutable action logs, cryptographic provenance, decision traces |
| Human oversight | When does a human step in? | Escalation thresholds, notification paths, intervention SLAs |
| Incident response | What happens when it fails? | Detection, containment, disclosure protocol, remediation timeline |

3. Governance Artifacts That Accelerate Sales

The governance stack is the architecture. Governance artifacts are the sales collateral. The difference between a 30-day close and a 6-month procurement review often comes down to what you can hand to legal and risk teams on the first call.

The Trust Appendix

A pre-packaged document set designed for procurement and legal reviewers:

| Artifact | What It Contains | Who Uses It |
|---|---|---|
| Control architecture diagram | One-page visual: identity, policy, audit, oversight, incident layers | CISO, procurement lead |
| Risk tiering matrix | Workflows classified by risk level, with corresponding controls | Risk officer, legal |
| Sample audit log | Redacted real example of decision trace + escalation path | Security team, auditor |
| Security and policy runbook | Operational procedures for enforcement, monitoring, response | IT operations, compliance |
| Accountability boundary language | Contractual clauses defining vendor vs. buyer responsibility | General counsel |

Why This Accelerates Sales

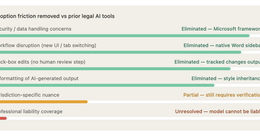

Enterprises that evidence compliance from day one move faster through procurement, legal, and risk review cycles. The alternative — waiting for legal to draft bespoke review questions, waiting for the vendor to scramble evidence, waiting for risk to schedule assessment — adds weeks or months.

The trust appendix doesn’t replace due diligence. It preempts the 80% of standard questions that slow every deal.

The Governance Scorecard

Forward-looking buyers are building governance scorecards into vendor shortlisting. The same way vendors are scored on technical capability, solution maturity, and customer adoption (Hackett Group methodology), governance dimensions are being added:

| Scorecard Dimension | What’s Evaluated |
|---|---|
| Policy maturity | Written, enforceable, audited? |
| Access architecture | Least privilege, role-based, agent-scoped? |
| Audit completeness | Immutable, traceable, review-ready? |
| Incident readiness | Documented, tested, disclosed? |
| Regulatory alignment | EU AI Act, NIST RMF, ISO 42001? |
| Transparency | Explainable decisions, provenance chains? |
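One way a buyer might turn these dimensions into a shortlist number is a weighted average. The sketch below assumes a 0 to 5 rating per dimension and equal weights by default; the scale, the weights, and the sample vendor ratings are all hypothetical and not drawn from the Hackett Group methodology.

```python
# The six scorecard dimensions from the table above.
DIMENSIONS = (
    "policy_maturity",
    "access_architecture",
    "audit_completeness",
    "incident_readiness",
    "regulatory_alignment",
    "transparency",
)


def governance_score(ratings, weights=None):
    """Weighted average of 0-5 ratings; every dimension must be rated."""
    weights = weights or {d: 1.0 for d in DIMENSIONS}
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"unrated dimensions: {missing}")
    total_weight = sum(weights[d] for d in DIMENSIONS)
    return sum(ratings[d] * weights[d] for d in DIMENSIONS) / total_weight


# Illustrative ratings for a fictional vendor.
vendor_a = {
    "policy_maturity": 4,
    "access_architecture": 5,
    "audit_completeness": 3,
    "incident_readiness": 4,
    "regulatory_alignment": 4,
    "transparency": 4,
}

print(round(governance_score(vendor_a), 2))
```

Refusing to score a vendor with an unrated dimension is deliberate: a missing answer should block the shortlist rather than silently count as zero or be averaged away.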

4. What the Data Says About Trust as Commercial Lever

The commercial case is not theoretical.

Deloitte (3,235 leaders, 24 countries): 66% report productivity gains from AI, but only 34% are deeply transforming. 37% apply AI superficially. The governance gap is visible in the revenue data: 74% aspire to revenue growth from AI, only 20% achieve it. The missing infrastructure isn’t compute — it’s controls.

OneTrust (1,250 IT leaders): 71% say AI speed conflicts with governance enforcement. 82% say AI risks have accelerated governance modernization needs. The demand signal is clear. The supply of governance-ready vendors is not.

Gartner: 40% of enterprise apps will integrate AI agents by end 2026 (up from <5%). Through 2026, 80%+ of unauthorized AI transactions will come from internal policy violations. AI TRiSM will reach mainstream adoption within five years.

PwC: 88% of investors agree companies should increase cybersecurity capital allocation. Trust is showing up in capital allocation decisions, not just procurement checklists.

Insurance sector (Roots Automation): Carriers put black-box systems under the microscope. Transparency matters — systems must be explainable, auditable, and aligned with regulatory expectations. This isn’t a tech preference. It’s a selection criterion.

Wealth management: 54% of high-net-worth clients rank “demonstrable AI fraud resistance” and “geographic sovereignty of LLM inference” as top-two selection criteria when choosing a wealth manager.

CFO perspective: 35% of finance chiefs cite lack of trusted data as the top barrier to AI ROI. Trust isn’t a soft metric. It’s a financial constraint.

| Commercial Evidence | Finding |
|---|---|
| Revenue aspiration vs. reality | 74% aspire to AI revenue growth; 20% achieve it (Deloitte) |
| Governance-speed conflict | 71% of IT leaders see conflict (OneTrust) |
| Governance modernization urgency | 82% accelerated by AI risk (OneTrust) |
| Investor cybersecurity priority | 88% say increase allocation (PwC) |
| Data trust as ROI barrier | 35% of CFOs cite as #1 barrier |
| Unauthorized AI transactions (internal) | 80%+ by 2026 (Gartner) |
| HNW client selection criteria | 54% rank AI fraud resistance top-2 |
| AI prototype-to-production failure | ~50% (governance gap) |
| Procurement cycle reduction | 40% with AI-assisted triage + governance |

5. Practical Implications and Actions

Action 1: Put Governance in the Front Half of Enterprise Pitches

Not slide 47 in the appendix. Slides 3–5 in the main deck. Lead with the control architecture diagram. Show the trust stack before the feature demo. Enterprise buyers who see governance evidence early develop confidence faster — and confidence closes deals.

The 71% of IT leaders who see speed-governance conflict will respond to a vendor who has resolved that conflict in their architecture. That’s a sales message, not a compliance checkbox.

Action 2: Train GTM Teams to Explain Controls in Business Language

Sales engineers can explain model architecture. Can they explain the audit trail? The escalation path? The blast radius of a policy violation?

Governance fluency for go-to-market teams means:

- Translating RBAC into “who can see your data”

- Translating policy enforcement into “what the AI cannot do without permission”

- Translating audit logs into “the evidence we provide when something goes wrong”

- Translating incident response into “what happens in the first 60 minutes”

Action 3: Pre-Package a Trust Appendix for Procurement/Legal Review

Build it once. Include it in every enterprise proposal. The five artifacts — control diagram, risk matrix, sample audit log, security runbook, accountability language — address 80% of standard review questions.

The vendor who arrives at the procurement table with a trust appendix doesn’t just save time. They signal maturity. And maturity, in 2026 AI buying, is the differentiator that model accuracy used to be.

Action 4: Include Governance KPIs in Customer Success Reporting

Don’t just report uptime and usage. Report:

- Policy compliance rate (% of agent actions within defined bounds)

- Audit log completeness (% of decisions with full trace)

- Escalation frequency (interventions per 1,000 agent actions)

- Incident response time (mean time to containment)

- Access review cadence (last least-privilege attestation date)

These KPIs tell the customer: we don’t just deploy AI — we govern it. And they create renewal evidence that procurement teams can reference.
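Four of the KPIs listed above can be computed directly from an action log and an incident record. The sketch below assumes each agent action is tagged with three booleans and that containment times are available in minutes; the `AgentAction` fields and the sample data are hypothetical. Access review cadence is a date rather than a rate, so it is left out.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class AgentAction:
    within_policy: bool   # action stayed inside defined bounds
    fully_traced: bool    # decision has a complete audit trace
    escalated: bool       # a human intervened


def governance_kpis(actions, containment_minutes):
    """Compute reporting-period KPIs from tagged agent actions."""
    total = len(actions)
    if total == 0:
        raise ValueError("no agent actions in reporting period")
    return {
        "policy_compliance_rate_pct": 100.0 * sum(a.within_policy for a in actions) / total,
        "audit_log_completeness_pct": 100.0 * sum(a.fully_traced for a in actions) / total,
        "escalations_per_1000_actions": 1000.0 * sum(a.escalated for a in actions) / total,
        "mean_time_to_containment_min": mean(containment_minutes) if containment_minutes else None,
    }


# Illustrative sample: four actions and two incidents.
sample = [
    AgentAction(True, True, False),
    AgentAction(True, True, False),
    AgentAction(True, False, True),
    AgentAction(False, True, True),
]
report = governance_kpis(sample, containment_minutes=[12, 18])
print(report)
```

The same dictionary could feed the customer success dashboard each period, which keeps the numbers procurement sees at renewal consistent with what was reported along the way.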

Action 5: Treat Governance Debt as Pipeline Risk

Every governance gap — missing audit logs, informal escalation paths, undocumented access controls — is a deal risk. Not a compliance risk. A revenue risk.

The AI TRiSM market is growing at 21.6% CAGR because enterprises are spending to close this gap. Vendors who carry governance debt into sales conversations are handing the deal to the competitor who resolved it.

6. What to Watch

Governance scorecards in vendor shortlists. The Hackett Group evaluated approximately 220 procurement technology vendors on maturity and capability. Expect governance dimensions (audit completeness, regulatory alignment, transparency) to become standard scoring criteria alongside functionality and price.

Sales-security team convergence. AI TRiSM initiatives reveal operational silos. The vendors who win will be those where sales, security, compliance, and product operate as a coordinated governance unit — not where sales improvises answers to security questions.

Trust-positioned vendors commanding pricing resilience. In wealth management, 54% of clients rank AI fraud resistance as a top selection criterion. In insurance, transparency is a vendor filter. When trust is a selection criterion, trust-positioned vendors have pricing power. The race to the bottom on model capability doesn’t apply to governance maturity.

Gartner’s mainstream adoption timeline. AI TRiSM will reach mainstream adoption within five years. The vendors who build governance infrastructure now capture the market. The vendors who treat it as a future problem concede it.

The Bottom Line

Governance is no longer the section of the proposal that procurement reads while the buyer’s champions skip it. It’s the section that determines whether procurement approves the deal at all.

71% of IT leaders see AI speed conflicting with governance. 82% say AI risk accelerated governance modernization. 88% of investors want more cybersecurity investment. The market for AI trust, risk, and security management reached $2.34 billion in 2024 and is growing at 21.6% CAGR toward $7.4 billion by 2030.

The vendor who arrives with a trust appendix, a control architecture diagram, and governance KPIs built into the success dashboard isn’t selling harder. They’re selling smarter. Because in 2026 enterprise AI, the deal doesn’t close when the buyer says “impressive.” It closes when procurement says “approved.”

The best AI pitch in the world doesn’t close a deal. The governance evidence attached to it does.

Thorsten Meyer is an AI strategy advisor who has noticed that the fastest-closing enterprise deals in 2026 have one thing in common: the governance slides come before the demo, not after. More at ThorstenMeyerAI.com.

Sources

- OneTrust — AI-Ready Governance Report: 71% Speed-Governance Conflict, 82% Accelerated Need (1,250 IT Leaders, 2025)

- Deloitte — State of AI in the Enterprise (3,235 Leaders, 24 Countries, 2025)

- PwC — Competing on Trust in the Age of AI: 88% Investor Cybersecurity Priority (2025)

- Gartner — AI TRiSM Market Guide (2025): 80%+ Unauthorized Transactions from Internal Violations

- Grand View Research — AI TRiSM Market: $2.34B (2024), $7.4B by 2030, 21.6% CAGR

- Gartner — 40% Enterprise Apps with AI Agents by End 2026

- IAPP — AI Governance Vendor Report (2026)

- Hackett Group — 220 Procurement Tech Vendors Evaluated (2025–2026)

- Deloitte — Global CPO Survey: 57% Siloed Working Barrier (2025)

- NIST — AI Risk Management Framework: Govern, Map, Measure, Manage

- ISO/IEC 42001 — AI Management System Standard

- EU AI Act — High-Risk AI System Requirements (2025–2026)

- Palo Alto Networks — AI TRiSM Guide: Four-Layer Architecture

- Mindgard — Gartner AI TRiSM Market Analysis

- Roots Automation — Insurance AI: Transparency as Selection Criterion

- OriginTrail — Trust Is Capital: 5 AI ROI Trends (2026)

- OneTrust — AI-Ready Governance: New Competitive Advantage

- Intelligent CIO — 2026: Experimental AI to Trusted Agentic Systems

- SC Media — Identity as 2026 Battleground

- Help Net Security — Five Identity Shifts Reshaping Enterprise Security (2026)

- Corporate Compliance Insights — AI Risk 2026: General Counsel Changes

- Art of Procurement — State of AI in Procurement (2026)

- PwC — 2026 AI Business Predictions

- IMD — 2026 AI Trends: Staying Competitive

- TechRadar — Responsible Innovation: What 2026 Should Look Like

© 2026 Thorsten Meyer. All rights reserved. ThorstenMeyerAI.com