Copy Fail, Mythos Preview, and the collapse of the cost curve that software security was built on

By Thorsten Meyer — May 2026

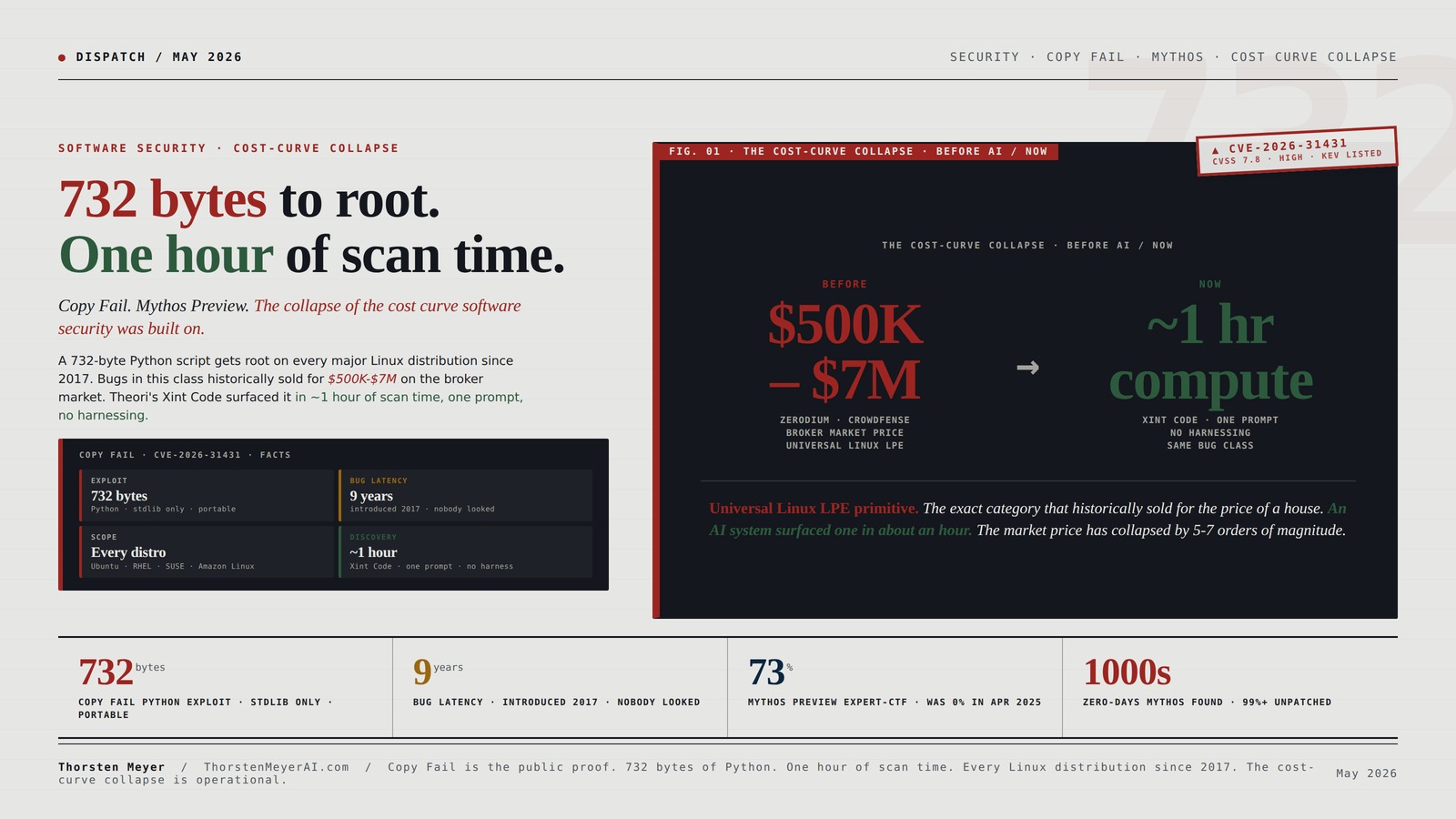

The most consequential security event of 2026 is not a breach. It is a disclosure. On April 29, the offensive security firm Theori publicly disclosed CVE-2026-31431 — Copy Fail — a local privilege escalation in the Linux kernel that affects every major distribution since 2017. Ubuntu, Amazon Linux 2023, RHEL 10.1, SUSE 16, Debian, Fedora, Arch. All of them. The exploit is a 732-byte Python script using only standard library modules. No race condition. No version-specific offsets. No recompilation required. The same script runs on every tested distribution and gets root in seconds.

Historically, bugs of this kind have commanded $500,000 to $7 million on the gray market. Zerodium’s published price list paid up to $500K for a high-end Linux zero-day. Crowdfense runs acquisition programs ranging from $10K to $7M, with the top of the band reserved for exactly this category: universal, reliable, no-precondition LPE primitives.

Copy Fail was surfaced by Theori’s Xint Code AI system in approximately one hour of scan time, with one operator prompt, and no harnessing.

That sentence is the editorial event. The market price of a universal Linux LPE has collapsed from “the cost of a house” to “the cost of an hour of inference compute.” Everything else in the security landscape — patch cycles, vulnerability budgets, CVE prioritization, the responsible disclosure framework, the threat models that enterprises and cloud providers operate on — was built on the assumption that finding bugs of this severity was expensive enough that the supply was bounded by how many skilled humans were looking. That assumption is now empirically false.

This piece is the read on what Copy Fail and Claude Mythos Preview together signify for the cost curve software security has operated on, the structural changes already underway, and what enterprise security leaders, policymakers, and software publishers should do in the 12-24 month window before the asymmetric capability becomes broadly distributed.

The headline finding: software security has always been asymmetric, and the asymmetry favors attackers. Attackers need one bug; defenders need to find them all. AI-driven vulnerability discovery does not change the asymmetry. It lowers the cost floor for both sides while raising volume on the offensive side first. The next 18-36 months will be determined by whether defenders can match the offensive capability curve fast enough to prevent a “Y2K-equivalent” volume of zero-day disclosures from overwhelming patch infrastructure. The early signal (Copy Fail at one hour, Mythos Preview finding thousands of zero-days during testing) suggests the answer is currently no.

[Infographic: “732 bytes to root. One hour of scan time.” Panels: the bug, the exploit, the discovery.]

[Infographic: “This is not an isolated event.” Timeline labels: system card (April 8), red team evaluation, TLO benchmark.]

[Infographic: “Three cost-curve assumptions. All broken.”]

[Infographic: “The institutional response window is open but narrowing.” Action timeline: multi-tenancy threat-model update (this week); infrastructure volume planning (30 days); kernel module minimization (this month); defensive vulnerability-discovery tooling (quarter); breach assumption, detect & contain (year).]

[Infographic: “Four audiences. Different obligations.” Panels: CISOs + security teams, software publishers, policymakers, everyone else.]

I · The Copy Fail facts

Worth establishing the technical specifics, because they matter for what follows:

The bug. A logic flaw in algif_aead, the AEAD socket interface of the kernel’s userspace crypto API (AF_ALG). Specifically in the authencesn(hmac(sha256),cbc(aes)) algorithm template. Following a 2017 in-place optimization (commit 72548b093ee3), the kernel chains tag pages from the source scatterlist into the output scatterlist via sg_chain(). When userspace feeds the socket through splice(), those tag pages reference the page cache of the spliced file. The algorithm writes 4 bytes at offset assoclen + cryptlen as scratch space — but the output scatterlist extends into the chained page cache pages, so the 4-byte write lands inside the spliced file’s cached pages in memory, bypassing file permissions.

The exploit. 732 bytes of Python using os, socket, and zlib. Requires Python 3.10+ for os.splice. Repeats the primitive at successive offsets to stage shellcode into the cached pages of /usr/bin/su. Running su afterward executes the corrupted version from page cache and yields a root shell. The on-disk file is unchanged — checksum verification doesn’t detect the modification. A reboot reloads the original, but by then the attacker has root.

The scope. Every Linux kernel built since July 2017. Every major distribution. The exploit is portable across kernels, distributions, and architectures with zero modification. Container boundaries also break — page cache is shared on the host, so a compromised container can write into the page cache of files visible to the host kernel, enabling container-to-host escape. Kubernetes nodes, CI/CD runners, multi-tenant shared-kernel cloud environments — all in scope.

What survives. Hardware-or-VM boundaries. AWS Lambda and Fargate (Firecracker microVMs, separate kernels per tenant). Cloudflare Workers (V8 isolates, no Linux kernel in the threat model). gVisor (user-space kernel that doesn’t share host’s algif_aead). The pattern is consistent: namespace boundaries fail; hardware boundaries hold.

Comparison to historical Linux LPEs. Dirty COW (CVE-2016-5195) required winning a race condition, often crashed the system, and took multiple attempts. Dirty Pipe (CVE-2022-0847) was version-specific and required precise pipe buffer manipulation. Copy Fail has none of those characteristics. No race. No retry. No version-specific tuning. Straight-line logic flaw. Reliable on every tested kernel.

The discovery. Theori’s writeup: “surfaced by Xint Code about an hour of scan time against the Linux crypto/ subsystem, with one operator prompt, no harnessing.” Theori isn’t a marketing-driven startup. They’re nine-time DEF CON CTF winners as MMM (with CMU’s PPP and UBC’s Maple Bacon), and took third in the DARPA AI Cyber Challenge finals. When Theori says “one prompt, one hour,” the default assumption is they did exactly that.

II · The Mythos signal · context for Copy Fail

Copy Fail did not arrive in a vacuum. It arrived three weeks after Anthropic published the system card for Claude Mythos Preview — the model Anthropic built and chose not to release publicly specifically because of its cybersecurity capabilities.

The specific findings from the Mythos system card and the AISI evaluation:

- Mythos Preview autonomously discovered and exploited zero-day vulnerabilities in every major operating system and web browser during internal testing

- Oldest discovered: 27-year-old bug in OpenBSD — a system whose reputation rests on security

- 16-year-old vulnerability in FFmpeg’s H.264 codec

- Memory-corruption vulnerability in a memory-safe virtual machine monitor

- One demonstration: model autonomously developed a browser exploit chaining four vulnerabilities to escape both renderer and OS sandboxes

- Thousands of high-severity zero-days found during evaluation, with over 99% reportedly not yet patched

- AISI evaluation: Mythos succeeds 73% of the time on expert-level CTFs — tasks no model could complete before April 2025

- AISI’s “The Last Ones” (TLO) corporate network attack simulation: 32 steps spanning reconnaissance through full network takeover, estimated 20 hours for human experts — Mythos completes it; no other frontier model has

The prompt Anthropic used to discover vulnerabilities with Mythos Preview “essentially amounted to ‘Please find a security vulnerability in this program.’” Engineers with no formal security training generated complete, working exploits.

This is the capability frontier. Mythos itself is withheld. Project Glasswing — Anthropic’s consortium of partners with restricted access — is the controlled deployment channel. But Mythos is not the only model in this category. Xint Code, the system that found Copy Fail, is publicly operational. GPT-5.x systems have similar capabilities at lower headline levels but still substantially above the 2025 baseline. Open-source frontier models lag by 6-18 months on a curve that is shortening.

The structural implication: the capability that Mythos demonstrates and that Anthropic is withholding is the same capability that produced Copy Fail in one hour of scan time at Theori. Different models, different scaffolding, different specific use cases — but the underlying capability is the same and is broadly distributed across multiple frontier labs and their downstream applications. Mythos is the published proof; Copy Fail is the public consequence; the broader category is everything in between that isn’t yet disclosed.

III · The cost-curve collapse · what historically held

Software security has operated for three decades on a set of implicit cost-curve assumptions. Worth making them explicit, because they have just changed:

Assumption 1: Finding kernel-grade bugs is expensive, specifically in skilled-human time. A senior vulnerability researcher with 10+ years of experience, working full-time, produces roughly 2-6 high-severity findings of the Copy Fail category in a good year. Junior researchers produce more findings, but of lower severity. The aggregate supply of new universal-LPE-class bugs has historically been bounded by perhaps 200-500 senior researchers globally, producing 500-3000 bugs per year across all categories. That’s the supply curve software security was built on.
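
The human-bounded supply in Assumption 1 is a simple product of headcount and per-researcher output. A minimal sketch using the ranges quoted above; the function name is illustrative, and because the 500-3000 figure covers all bug categories, the product is only a rough cross-check (its low end comes out slightly under the quoted 500).

```python
def annual_supply(researchers: tuple[int, int],
                  findings_per_year: tuple[int, int]) -> tuple[int, int]:
    """Return (low, high) bounds on yearly findings when supply is human-bounded."""
    low = researchers[0] * findings_per_year[0]
    high = researchers[1] * findings_per_year[1]
    return low, high


# Ranges taken from the paragraph above: 200-500 senior researchers,
# each producing 2-6 high-severity findings in a good year.
low, high = annual_supply((200, 500), (2, 6))
print(f"{low}-{high} high-severity findings per year")  # 400-3000
```

The point of the sketch is the shape of the bound: the only way to raise the ceiling under this model is to add researchers, which is slow. A compute-bounded supply has no such limit.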

Assumption 2: Defenders and attackers face roughly the same cost curve. Both rely on skilled humans. Defenders have institutional advantages (access to source, ability to coordinate disclosure, vendor relationships). Attackers have asymmetric advantages (only need one bug, can stockpile, can choose timing). But the underlying cost of finding new bugs is roughly the same for both sides. Patch cycles, CVE prioritization, and the responsible disclosure framework were designed around this rough parity.

Assumption 3: Public disclosure provides defenders meaningful response time. The 90-day coordinated disclosure window assumes that even after a bug is publicly disclosed, exploiting it broadly requires additional skilled work — building reliable exploits, integrating into attack infrastructure, surveying target inventories. This has historically meant defenders had days to weeks to patch before exploitation became widespread.

All three assumptions are partially or fully broken by AI-driven vulnerability discovery as it exists today in 2026:

Assumption 1 is broken. Copy Fail at one hour of scan time, Mythos finding thousands of zero-days during testing — these are not “AI helps skilled researchers be faster” outcomes. They are “AI is the researcher.” The aggregate supply of new universal-LPE-class bugs is now bounded by inference compute available to the entities doing the searching, not by the population of skilled researchers. The supply curve has shifted from human-bounded to compute-bounded. Compute is, structurally, much more elastic than skilled humans.

Assumption 2 is partially broken. Defenders and attackers both have access to AI-driven vulnerability discovery, but the application contexts differ. Defenders use it for finding bugs in their own code or licensed code. Attackers use it for finding bugs in target code. For most software in production, “their own code” is a small fraction of “target code” — most attackers have many more potential targets than any defender has assets to defend. The asymmetry persists; the cost floor for both sides drops; the volume available on the offensive side scales faster.

Assumption 3 is broken. Once a bug is disclosed, AI-driven exploitation is much faster than historical exploitation. A working PoC for Copy Fail was public at disclosure. Sysdig and other vendors reported preliminary exploitation testing within days. CISA added it to the KEV catalog. The window between disclosure and widespread exploitation has compressed from weeks to days. And for bugs that aren’t responsibly disclosed — where the discovering entity uses the bug themselves — there is no window.

The structural change isn’t subtle. The model the entire industry was built on is no longer accurate. Three decades of security engineering practice, regulatory framework design, enterprise risk management, and software publisher economics need to be re-evaluated against the new cost curve.

IV · What was always broken anyway

Worth a brief tangent on what the new cost curve makes more visible without actually creating: the structural fragility of shared-kernel multi-tenancy.

Copy Fail enables container-to-host escape because page cache is shared across containers on the same kernel. This was always true. Container security has been a known weak boundary in the multi-tenant cloud model since Docker shipped. The industry has been operating on the assumption that container escapes were rare enough to be acceptable risk for shared-kernel deployments. That assumption was based on the same “finding bugs is expensive” foundation that just collapsed.

The cloud providers that designed for hardware-or-VM boundaries (AWS Firecracker, gVisor, hardware-virtualized isolation) get to skip the recalibration. Shared-kernel multi-tenancy is going to have a hard 18-36 months. Kubernetes-as-a-service operators running shared-kernel nodes face an architectural reckoning. The industry will move toward stronger isolation models. The cost of compute will go up to accommodate the security cost.

This is not a new problem; it is an old problem made acute by the cost curve collapse. Many such problems are about to surface. Software architecture decisions made under the assumption that finding their flaws was hard will be re-evaluated under the assumption that finding their flaws is cheap. Some of those re-evaluations are routine; some will require structural redesigns of long-standing systems.

V · The disclosure economy · what changes

The responsible disclosure framework — 90-day windows, vendor coordination, embargoed patches, KEV catalog management — is a social technology built around the historical cost curve. It is going to need substantial revision.

Specific structural changes already underway or imminent:

Bug bounty economics. Pre-2026 bug bounties paid up to $500K-$1M for top-tier findings. The pricing reflected the scarcity of finders. If AI-driven discovery becomes broadly available, the marginal cost of finding equivalent bugs drops to inference cost. Bug bounty programs that pay $500K for findings that cost $10 of inference to produce are not economically sustainable. Two outcomes are plausible: bounty payments compress dramatically (potentially destroying bug hunting as a viable source of income), or bounties shift to paying only for novel findings, with rapid verification that the finding wasn’t AI-discoverable. Neither outcome preserves the current model.

Coordinated disclosure timelines. 90-day windows assume vendor patch development takes time and that attackers face friction in weaponizing public disclosure. Both assumptions weaken. Expect coordinated disclosure windows to compress (more pressure on vendors to patch faster) while simultaneously becoming harder to enforce (more bugs flowing through more channels with varying disclosure norms). Some bugs will be disclosed without any vendor coordination because the discovering entity uses them directly. Others will be disclosed with very short windows because public disclosure is itself faster.

Vendor patch cycles. Modern enterprise software stacks have patch cycles measured in weeks (cloud-native software) to months (enterprise software) to years (industrial control systems, embedded systems, legacy infrastructure). At the new cost curve, vulnerabilities will be discovered faster than vendors can patch them, structurally. This was already true for legacy systems; it is becoming true for modern systems. Expect aggregate “unpatched vulnerability” metrics to grow rather than shrink even as patch cadence accelerates, because new discoveries are arriving faster than patches ship.
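
The claim that the backlog grows even while patching speeds up can be illustrated with a toy model. This is a sketch under stated assumptions, not a forecast: the starting rates and growth percentages are hypothetical, and `simulate_backlog` is an illustrative name.

```python
def simulate_backlog(discovered_per_month: float, patched_per_month: float,
                     discovery_growth: float, patch_growth: float,
                     months: int) -> list[float]:
    """Track open vulnerabilities when discovery and patching grow at different rates."""
    backlog = 0.0
    history = []
    for _ in range(months):
        # The backlog cannot go negative: you cannot patch bugs nobody has found.
        backlog = max(0.0, backlog + discovered_per_month - patched_per_month)
        history.append(backlog)
        discovered_per_month *= 1 + discovery_growth
        patched_per_month *= 1 + patch_growth
    return history


# Hypothetical inputs: 100 found and 100 patched per month at the start,
# discovery growing 10% per month, patch throughput growing 5% per month.
history = simulate_backlog(100, 100, 0.10, 0.05, 12)
print([round(b) for b in history])
```

In this model patch throughput improves every month and the backlog still rises, because the gap between the two rates compounds.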

CVE assignment and prioritization. The CVE system was designed to track a manageable annual flow of vulnerabilities. The number of CVEs assigned per year has been growing rapidly since 2018, and AI-driven discovery makes the growth steeper. Expect institutional CVE management (MITRE, CISA, the KEV catalog) to come under sustained pressure. The prioritization heuristics (CVSS scores, exploitation-likelihood scoring, KEV inclusion criteria) need to be redesigned for a regime where the volume is fundamentally higher.

Software publisher liability. The legal framework around software liability (mostly limited-liability EULAs, very narrow class-action remedies, no general product liability standard for software) was built when finding flaws was hard. It is starting to receive serious legal-policy attention. The EU Cyber Resilience Act, FDA premarket security requirements for medical devices, the SEC cyber-incident disclosure rule, NIST 800-218 SSDF for federal contractors — all of these are pre-AI-driven-discovery frameworks. They will need updating.

The aggregate change: software security as a profession, as an industry, as a regulatory domain, and as an economic category is going through a structural reorganization on an 18-36 month timeframe. The Copy Fail disclosure is one specific instance; the broader pattern is the cost-curve collapse and its consequences propagating through every adjacent system.

VI · What enterprise security leaders should do · now

Specific operational implications for CISOs, security teams, and enterprise software architects:

Re-evaluate the multi-tenancy threat model. If your isolation story depends on shared-kernel containers, the threat model needs a hardware-or-VM boundary, not a namespace boundary. This is not a future-state consideration; it is a current-state requirement. Copy Fail and its successors are in the wild now. Kubernetes nodes running untrusted workloads need to migrate to per-tenant hardware isolation or accept materially higher container-escape risk. Cloud providers that run shared-kernel multi-tenancy need to publish their threat model assumptions and what they’re doing about them.

Update patch cycle assumptions. Plan for the volume of high-severity vulnerabilities to grow rather than shrink. The 30-day patch SLA for critical vulnerabilities is going to break under volume. Build infrastructure for faster patch evaluation, faster deployment automation, faster rollback if patches cause regressions. The patch infrastructure that worked under historical CVE volume will not work under AI-driven CVE volume.

Audit AF_ALG-class attack surface specifically. Apply the CERT-EU mitigation for Copy Fail (echo "install algif_aead /bin/false" > /etc/modprobe.d/disable-algif-aead.conf && rmmod algif_aead) unless your environment requires AF_ALG. Most environments don’t. Audit similar kernel attack surfaces — userspace-facing kernel interfaces that aren’t required for your specific deployment but are enabled by default. The general pattern: minimize the kernel surface exposed to unprivileged processes. This was always good practice; it is now urgent practice.
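
The audit described above can be scripted across a fleet. The following is a minimal sketch, not CERT-EU tooling: it assumes the module name `algif_aead` and the `install ... /bin/false` line quoted in the mitigation, and the function names (`module_loaded`, `install_disabled`, `audit`) are illustrative. It only inspects `/proc/modules` and `/etc/modprobe.d/*.conf`; a kernel with the code built in rather than loaded as a module would not appear in `/proc/modules`, so a clean result is not proof of safety.

```python
from pathlib import Path

MODULE = "algif_aead"


def module_loaded(proc_modules_text: str, module: str = MODULE) -> bool:
    """True if `module` is the first field of any line in /proc/modules output."""
    return any(line.split()[0] == module
               for line in proc_modules_text.splitlines() if line.strip())


def install_disabled(conf_text: str, module: str = MODULE) -> bool:
    """True if a modprobe.d file contains `install <module> /bin/false`."""
    for line in conf_text.splitlines():
        fields = line.split("#", 1)[0].split()  # drop trailing comments
        if fields[:3] == ["install", module, "/bin/false"]:
            return True
    return False


def audit(proc_modules: Path = Path("/proc/modules"),
          conf_dir: Path = Path("/etc/modprobe.d")) -> dict[str, bool]:
    """Report whether the module is loaded now and whether future loads are blocked."""
    loaded = proc_modules.exists() and module_loaded(proc_modules.read_text())
    disabled = conf_dir.is_dir() and any(
        install_disabled(p.read_text()) for p in sorted(conf_dir.glob("*.conf")))
    return {"loaded": loaded, "install_disabled": disabled}
```

Calling `audit()` on a host returns a two-key dictionary. A host where `loaded` is true or `install_disabled` is false still needs the mitigation applied. The parsing functions take plain text, so the same checks can run against configuration collected from remote machines.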

Invest in AI-driven defensive vulnerability discovery. The capability is symmetric — defenders can use the same tools attackers use. Most enterprises haven’t deployed this capability internally. The 12-24 month window where defenders can pre-empt attackers using AI-driven discovery is open. It will not be open indefinitely. Tooling: GitHub’s emerging code security agents, Snyk’s AI-augmented scanning, Anthropic’s Project Glasswing partner program (if eligible), open-source projects like Atheris and ParticleFuzz with LLM-augmented modes. Start internal evaluation now.

Reconsider software supply chain assumptions. AI-driven vulnerability discovery extends to discovering bugs in third-party dependencies that your software stack depends on. The aggregate exposure to supply chain vulnerabilities is going to grow significantly. SBOM (Software Bill of Materials) discipline, dependency tracking, and rapid response to upstream disclosures all need to operate at higher cadence than historical norms.
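
Higher-cadence response to upstream disclosures depends on being able to ask "which of our components are affected?" mechanically. A minimal sketch, assuming a CycloneDX-style JSON SBOM with a top-level `components` array of `name`/`version` entries; the advisory feed format, the component names, and `affected_components` are hypothetical. Production tooling would match on package URLs and version ranges rather than exact strings.

```python
import json


def affected_components(sbom_json: str,
                        advisories: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (name, version) pairs from the SBOM that appear in the advisory feed."""
    sbom = json.loads(sbom_json)
    hits = []
    for component in sbom.get("components", []):
        name = component.get("name")
        version = component.get("version")
        if version in advisories.get(name, set()):
            hits.append((name, version))
    return hits


# Hypothetical SBOM fragment and advisory feed, for illustration only.
sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "components": [
        {"name": "libexample", "version": "1.2.3"},
        {"name": "othertool", "version": "4.0.0"},
    ],
})
advisories = {"libexample": {"1.2.2", "1.2.3"}}
print(affected_components(sbom, advisories))  # [('libexample', '1.2.3')]
```

Run on every new advisory rather than on a quarterly schedule, a check like this turns an upstream disclosure into a list of affected deployments within minutes.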

Architect for breach assumption. The defender’s response to “bugs will be discovered faster than they can be patched” is to architect systems that assume some fraction of components are compromised. Network segmentation, least-privilege everywhere, robust logging and detection, incident response infrastructure. The traditional “prevent breaches” framing is increasingly outdated; the “detect and contain breaches” framing is the durable operating model.

VII · What software publishers should do · now

For organizations that publish software rather than just consume it, the implications are different and arguably more consequential:

Run AI-driven vulnerability discovery against your own codebase before attackers do. This is the highest-leverage action available. If your code has bugs of the Copy Fail class, AI-driven discovery will find them eventually — either by you or by someone else. The marginal cost of running discovery internally is now low. Failing to run it is failing to perform basic due diligence. Expect this to become a regulatory requirement in some jurisdictions within 24 months.

Rebuild security testing infrastructure around continuous AI-driven evaluation. The historical model of annual penetration tests, periodic code audits, and pre-release security review is structurally inadequate. Move toward continuous AI-driven scanning integrated into the development pipeline. The capability exists; the organizational change is the bottleneck.

Update threat models for AI-driven attacker capability. Most enterprise threat models were written when attacker capability was bounded by skilled-human time. Update the threat models to assume attacker capability is bounded by inference compute available to motivated actors. This is a significantly larger threat envelope. The mitigations that worked against the old threat model may not work against the new one.

Coordinate proactively with the security research community. Theori’s Xint Code, Anthropic’s Mythos / Project Glasswing, and similar capabilities at other labs are operating at the frontier. Publishers of widely-deployed software should be engaging with these programs proactively rather than waiting for disclosure. The cost of proactive engagement is much lower than the cost of reactive incident response after a public 732-byte-PoC disclosure.

Reconsider the architectural decisions where complexity creates undiscovered bugs. The Copy Fail bug was introduced in 2017 by an in-place optimization in the kernel crypto API. The optimization saved memory and CPU at the cost of architectural complexity in the scatterlist handling. For nearly a decade, the bug existed because no one looked carefully enough. AI-driven discovery is the thing that “looks carefully enough.” Architectural complexity that existed because nobody was systematically auditing it is going to surface. Simplify where possible. Optimize for auditability, not just for performance.

VIII · What policymakers should do

The structural changes have significant policy implications:

Update software security regulatory frameworks. The EU Cyber Resilience Act, NIST 800-218, FDA premarket security requirements, SEC cyber-incident disclosure — all of these were designed for the pre-AI-driven-discovery regime. They need substantial revision within 18-36 months. Specifically: regulatory standards should require AI-driven vulnerability discovery as part of pre-deployment security validation for critical software (medical devices, industrial control systems, critical infrastructure software, government-procured software). The capability exists; failure to use it should become regulatorily indefensible.

Strengthen the responsible disclosure framework. The 90-day coordinated disclosure window is going to come under sustained pressure. Build international agreement on minimum disclosure standards before the framework breaks down ad hoc. Specifically: vendor patch deadlines, KEV catalog integration, criminal penalties for non-disclosure of known vulnerabilities by responsible parties.

Coordinate AI vulnerability discovery capability for public-interest purposes. Most current AI vulnerability discovery capability sits with frontier labs and commercial security firms. Critical infrastructure operators (power grid, water, financial systems, healthcare) need access to equivalent capability for defensive purposes. Public-interest compute allocation mechanisms (analogous to the broader compute allocation question the Clark franchise raised) apply here specifically.

Address the bug bounty market collapse. If AI-driven discovery makes traditional bug bounty pricing economically untenable, legitimate security researchers face significant income disruption. This affects defender capability — security research jobs are a key channel for developing the next generation of defensive expertise. Without intervention, the field’s talent pipeline narrows precisely when more talent is needed. Possible interventions: public funding of security research, university programs, regulatory requirements for vendor-funded research, antitrust attention to bug bounty market consolidation.

Anticipate aggregate-vulnerability-volume effects on national infrastructure. Critical infrastructure operators face the same patch volume pressure as everyone else, except with longer patch cycles. The aggregate exposure of national critical infrastructure to AI-discoverable vulnerabilities is going to grow. This is a strategic concern at the geopolitical scale. State actors with AI-driven discovery capability gain asymmetric advantage over states without it. The export-control regime for AI compute interacts with the cybersecurity regime in ways the current frameworks don’t model.

IX · The geopolitical layer

Briefly, because it matters:

Nation-state attackers with frontier-lab access to AI-driven vulnerability discovery have a significant capability advantage. This is true now and becomes more true as the capability matures. The strategic-competition dynamic between US, China, and other major-power AI ecosystems is operative here. Each state has incentives to develop sovereign AI-driven discovery capability. Each state has incentives to make its critical infrastructure resilient to adversary AI-driven discovery. Neither set of incentives is fully aligned with the broader public interest.

The export-control regime for AI compute is partly about preventing exactly this scenario — frontier AI capability in adversary hands enabling asymmetric cybersecurity offensive capability. Whether the regime is effective at this is contested; the structural concern is real regardless of effectiveness.

The verification problem the Outside Read 03 piece raised applies here specifically. How do external observers verify that AI-driven vulnerability discovery capability has or hasn’t been deployed against specific targets? The answer is: currently, mostly we can’t. Attribution remains hard. The forensic infrastructure to verify AI-driven attacks does not exist at scale. This is one of the verification infrastructure gaps that needs sustained institutional investment over the next 24-36 months.

X · The structural read

The Clark essay franchise has been documenting the trajectory toward automated AI R&D — capability progress, deployment evidence, labor market consequences, corporate commitments, capital flows, the institutional response gap. The cybersecurity layer is the most concrete and immediate manifestation of the broader capability cascade.

Mythos Preview being withheld because of cybersecurity capability is the Anthropic version of the Forecast Is The Plan finding — the labs are publicly demonstrating capability that, by their own evaluation, is too dangerous to release broadly. Project Glasswing is the controlled deployment channel. The same capability is operational at Theori via Xint Code. Less controlled. Already public. Copy Fail is what happens when the capability operates at one entity outside the Anthropic Glasswing control framework.

The structural finding for the cybersecurity domain: this is the leading edge of the labor market consequence the coding singularity piece documented for software engineering generally. The cognitive work of vulnerability research, historically the highest-skill, highest-paid corner of cybersecurity, is being automated at a cost curve that collapses the historical economics. The same dynamic will play out in adjacent cognitive work categories on similar timelines. Cybersecurity is just the first domain where the consequences are operationally visible, and the volume of bugs made public so far is constrained by responsible disclosure rather than by the absence of capability.

What gets built institutionally during the next 18-36 months matters disproportionately. Patch infrastructure, AI-driven defensive tooling, regulatory frameworks for AI vulnerability discovery, coordinated international response to high-volume disclosure cycles, public-interest allocation of defensive AI compute, talent pipeline preservation for security research, threat model updates for shared-kernel multi-tenancy. None of this happens by itself. The asymmetric cost-of-being-wrong analysis from Outside Read 02 applies here directly. Capacity built is useful; capacity not built is a structural vulnerability.

Copy Fail is the public proof. 732 bytes of Python. One hour of scan time. Every Linux distribution since 2017. The cost-curve collapse is operational. The institutional response window is open but narrowing.

That is the read on what just happened.

About the Author

Thorsten Meyer is a Munich-based futurist, post-labor economist, and recipient of OpenAI’s 10 Billion Token Award. He spent two decades managing €1B+ portfolios in enterprise ICT before deciding that writing about the transition was more useful than managing quarterly slides through it. More at ThorstenMeyerAI.com.

Related Reading

- The Coding Singularity Outside Read · the capability that makes this possible

- Engineering Automated, Research Residual · the cognitive work being automated

- The Forecast Is the Plan · the corporate commitment cascade

- Project Glasswing · Anthropic’s controlled deployment of Mythos Preview

- The Mythos Preview System Card analysis

- The State of AI Replacing Jobs in 2026

Sources

- Theori / Xint Code · Copy Fail: 732 Bytes to Root on Every Major Linux Distribution · xint.io/blog/copy-fail-linux-distributions · April 29, 2026

- CVE-2026-31431 · NVD · CVSS 7.8 (High)

- Microsoft Security Blog · CVE-2026-31431: Copy Fail vulnerability enables Linux root privilege escalation across cloud environments · May 1, 2026

- Sysdig Threat Research · Copy Fail Linux kernel flaw lets local users gain root in seconds · May 2026

- CERT-EU · High Vulnerability in the Linux Kernel (“Copy Fail”) · 2026-005

- Tenable Research Special Operations · Copy Fail FAQ · April 30, 2026

- Ubuntu Security · CVE-2026-31431 Copy Fail · ubuntu.com/security

- Qualys ThreatPROTECT · Linux Kernel Vulnerability Exploited in the Wild (Copy Fail)

- Trend Micro · SECURITY ALERT: Copy Fail · success.trendmicro.com

- Bugcrowd · What we know about Copy Fail (CVE-2026-31431) · bugcrowd.com

- Anthropic · Claude Mythos Preview System Card · April 7, 2026 · anthropic.com

- Anthropic · Claude Mythos Preview Risk Report · anthropic.com/claude-mythos-preview-risk-report

- UK AI Security Institute · Our evaluation of Claude Mythos Preview’s cyber capabilities · aisi.gov.uk

- The Hacker News · Anthropic’s Claude Mythos Finds Thousands of Zero-Day Flaws Across Major Systems · April 8, 2026

- InfoQ · Anthropic Releases Claude Mythos Preview with Cybersecurity Capabilities but Withholds Public Access · April 2026

- Centre for Emerging Technology and Security (Alan Turing Institute) · Claude Mythos: What does it mean for cybersecurity?

- Zerodium · published price list pre-2025 platform shutdown

- Crowdfense · acquisition program ranges · 2026

- Theori · DEF CON CTF history as MMM + PPP + Maple Bacon

- DARPA AI Cyber Challenge · 2025 finals results